AI in Workforce Management

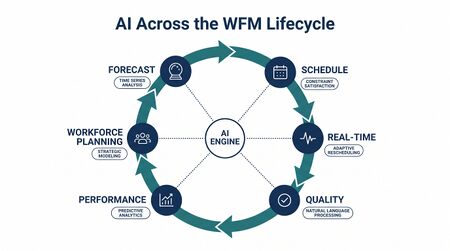

Artificial intelligence in workforce management refers to the application of machine learning, natural language processing, optimization algorithms, and autonomous agents across the workforce management lifecycle — from demand forecasting through scheduling, real-time operations, quality management, and increasingly, the direct handling of customer interactions by AI systems. AI does not replace the WFM discipline; it transforms every stage of it while introducing fundamentally new planning problems that classical methods cannot address.

This page serves as the central reference connecting all AI-related content on the wiki. For foundational concepts in artificial intelligence and machine learning as they apply to WFM, see Artificial Intelligence Fundamentals and Machine Learning Concepts.

AI across the WFM lifecycle

AI touches every phase of the workforce management cycle, but its maturity, reliability, and organizational readiness requirements differ significantly by function. The following table maps AI capabilities to WFM stages, indicating both the current state of adoption and the planning implications for each.

| WFM stage | AI capability | Dominant technique(s) | Maturity level | Planning implication |
|---|---|---|---|---|
| Forecasting | Volume and AHT prediction; anomaly detection; special-event adjustment | Gradient-boosted trees, neural networks, hybrid ARIMA-ML, quantile regression | Production-grade (Level 3–4) | Shifts planning from point estimates to distributional forecasts; requires retraining pipelines and monitoring for model drift |
| Capacity planning | Scenario simulation; attrition prediction; hiring pipeline optimization | Monte Carlo simulation, survival models, multi-objective optimization | Emerging (Level 3) | Extends planning horizon but demands clean historical data on attrition, ramp, and cost; sensitivity to assumption quality is high |
| Scheduling | Automated schedule generation; preference optimization; constraint relaxation | Reinforcement learning, mixed-integer programming, metaheuristics | Production-grade (Level 3–4) | Enables continuous re-optimization but requires explicit fairness constraints; see Algorithmic Fairness and Bias in Workforce Scheduling |
| Real-time operations | Intraday reforecasting; automated schedule adjustments; anomaly alerting | Streaming ML, rule-based triggers with ML thresholds, digital twins | Maturing (Level 3) | Compresses decision cycles from hours to minutes; demands integration between WFM platform and routing/telephony systems |
| Quality management | Automated interaction scoring; sentiment detection; coaching trigger identification | Speech analytics, NLP classifiers, large language models | Maturing (Level 3) | Scales quality monitoring from 2–5% sample to 100% coverage; introduces calibration challenges between AI and human evaluators |
| AI as workforce | Direct interaction handling; task automation; blended human-AI staffing | Conversational AI, RPA, agentic architectures | Emerging–Advanced (Level 3–5) | Creates entirely new planning problems; see Three-Pool Architecture, Cognitive Portfolio Model (N*), AI Containment Rate and Its Workforce Implications |

AI in forecasting

Main articles: Machine Learning for Volume Forecasting, Probabilistic Forecasting

Contact center forecasting has historically relied on time-series decomposition — isolating trend, seasonality, and residual components to project future volumes. Classical methods such as exponential smoothing and ARIMA remain effective for stable, high-volume queues with well-behaved seasonal patterns. AI extends forecasting capability in three directions that classical methods handle poorly: feature richness, distributional output, and adaptive recalibration.

Feature-rich models incorporate external variables — marketing campaign calendars, weather data, social-media sentiment, competitor actions, macroeconomic indicators — that influence contact volume but are invisible to univariate time-series methods. Gradient-boosted tree ensembles (XGBoost, LightGBM) and neural architectures handle high-dimensional feature spaces without requiring the forecaster to specify functional relationships in advance. Research by Makridakis et al. in the M5 forecasting competition demonstrated that ML ensemble methods outperformed classical statistical approaches on intermittent and lumpy demand series, a pattern common in back-office and digital-channel workloads.[1]

Distributional (probabilistic) forecasting replaces the single-number forecast with a full probability distribution over future outcomes. Rather than predicting "1,200 calls at 10:00 AM," a probabilistic model produces a distribution from which prediction intervals can be read, for example "a 90% probability that volume falls between 1,050 and 1,380." This shift is consequential for downstream planning because it allows staffing models to optimize against a risk-adjusted demand curve rather than a point estimate. Quantile regression forests, conformal prediction, and deep probabilistic models (DeepAR, Temporal Fusion Transformer) are the dominant approaches.[2] See Probabilistic Forecasting and Deterministic vs Probabilistic Models for detailed treatment.
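
As a rough illustration of the difference between a point forecast and an interval forecast, the sketch below wraps a point forecast in an interval built from the empirical quantiles of past forecast errors. This is a deliberately simple stand-in for the approaches named above, not one of them. The function name `prediction_interval` and the assumption that historical errors are additive and representative of future errors are illustrative choices, not part of the source.

```python
from statistics import quantiles


def prediction_interval(point_forecast, past_errors, coverage=0.90):
    """Return (low, high) around a point forecast using past forecast errors.

    past_errors: actual minus forecast for comparable historical intervals
    (assumed here to be representative of future errors).
    """
    if not 0 < coverage < 1:
        raise ValueError("coverage must be strictly between 0 and 1")
    # n=100 yields 99 cut points; index i holds percentile i + 1.
    pct = quantiles(past_errors, n=100, method="inclusive")
    tail = (1 - coverage) / 2
    lo_idx = max(round(tail * 100) - 1, 0)
    hi_idx = min(round((1 - tail) * 100) - 1, 98)
    return point_forecast + pct[lo_idx], point_forecast + pct[hi_idx]
```

A staffing model could then be sized against the upper bound, or against any other quantile that matches the operation's risk tolerance, rather than against the point forecast alone.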

Adaptive recalibration addresses model drift — the gradual degradation of forecast accuracy as customer behavior, channel mix, or operational conditions change. Traditional WFM forecasting requires manual intervention to detect and correct drift (adjusting seasonal factors, excluding anomalous periods, rebuilding models). ML-based systems can implement automated drift detection using statistical tests on residual distributions and trigger retraining when accuracy degrades beyond a threshold. This capability is operationally valuable but introduces its own risks: automated retraining without guardrails can amplify errors if the training data itself is corrupted by operational anomalies.
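
The drift check and the retraining guardrail described above could be sketched as follows. The source does not say which statistical test is used; a z-test on the mean residual is assumed here purely for illustration, and the names `drift_detected`, `should_retrain`, and the threshold of 3.0 are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def drift_detected(baseline_residuals, recent_residuals, z_threshold=3.0):
    """Flag drift when the recent mean residual departs from the baseline mean."""
    base_mu = mean(baseline_residuals)
    base_sd = stdev(baseline_residuals)
    recent_mu = mean(recent_residuals)
    if base_sd == 0:
        return recent_mu != base_mu
    # Standard error of a window mean, assuming the baseline spread still holds.
    std_err = base_sd / sqrt(len(recent_residuals))
    return abs(recent_mu - base_mu) / std_err > z_threshold


def should_retrain(baseline_residuals, recent_residuals, window_has_known_anomaly):
    """Guardrail: never retrain automatically on a window flagged as anomalous."""
    if window_has_known_anomaly:
        return False
    return drift_detected(baseline_residuals, recent_residuals)
```

The second function reflects the risk noted above: a window distorted by an outage or similar anomaly may show large residuals, but retraining on it would teach the model the anomaly rather than the new normal.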

The practical constraint on AI forecasting is not algorithmic sophistication — it is data quality. ML models are only as reliable as their input features. Missing data, inconsistent interval definitions, undocumented contact-type reclassifications, and retroactive ACD configuration changes are the primary sources of forecast error in most operations, and no algorithm compensates for systematically corrupted inputs.

AI in scheduling

Main articles: Scheduling Methods, Reinforcement Learning for Schedule Optimization, Probabilistic Scheduling

Schedule optimization is a constrained combinatorial problem: assign N employees to shifts, breaks, and activities across T intervals such that staffing meets demand requirements while satisfying labor rules, contractual obligations, employee preferences, and cost targets. The problem is NP-hard in the general case: no polynomial-time exact algorithm is known, so exact solution times can grow prohibitively as the number of agents and constraints increases.[3]

Classical WFM systems solve scheduling through heuristic search — greedy algorithms, tabu search, simulated annealing, or genetic algorithms that find "good enough" solutions within acceptable computation time. AI advances scheduling in three ways.
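
To make the idea of a "good enough" heuristic concrete, here is a minimal sketch of a greedy shift-covering pass, assuming a toy model in which each shift template covers a fixed set of intervals and each chosen shift adds one agent to those intervals. The function name, the data shapes, and the gain rule are illustrative assumptions; production heuristics also handle breaks, labor rules, and preferences.

```python
def greedy_shift_cover(demand, shift_templates, max_agents):
    """Pick shifts one at a time, each time choosing the template that
    helps the most still-understaffed intervals.

    demand: required agents per interval (list of ints).
    shift_templates: dict mapping template name -> set of interval indexes.
    Returns the chosen template names, one entry per scheduled agent.
    """
    remaining = list(demand)
    chosen = []

    def gain(name):
        # Number of understaffed intervals this template would help.
        return sum(1 for t in shift_templates[name] if remaining[t] > 0)

    for _ in range(max_agents):
        best = max(shift_templates, key=gain)
        if gain(best) == 0:
            break  # every interval is already covered
        chosen.append(best)
        for t in shift_templates[best]:
            remaining[t] -= 1  # negative values mean overstaffing
    return chosen
```

A greedy pass like this is fast but myopic: it never revisits an earlier choice, which is why tabu search, simulated annealing, and similar methods are typically layered on top to escape poor local solutions.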

Reinforcement learning (RL) treats schedule generation as a sequential decision problem. An RL agent learns a scheduling policy by iterating through millions of simulated scheduling scenarios, receiving reward signals based on coverage quality, cost, fairness metrics, and employee satisfaction proxies. Unlike static optimization, RL policies can adapt to changing conditions — a new labor regulation, a shift in employee preference distributions, or a structural change in demand patterns — by continuing to learn from new data. The challenge is that RL requires a high-fidelity simulation environment to train against, and building that environment is itself a significant engineering effort. See Reinforcement Learning for Schedule Optimization for implementation considerations.

Continuous re-optimization replaces the traditional "generate schedule once, then manage exceptions" workflow with a model that continuously adjusts the schedule as conditions change. When an agent calls in sick, demand spikes unexpectedly, or a training session is cancelled, the system re-solves the schedule in near-real-time rather than requiring a planner to manually rearrange shifts. This capability depends on fast solver performance (sub-minute solve times for the affected portion of the schedule) and clear business rules governing which schedule changes are permissible without employee consent.

Fairness-aware optimization explicitly incorporates equity constraints into the objective function. Without explicit fairness constraints, optimization algorithms will concentrate undesirable shifts (overnight, weekend, holiday) on employees with the least seniority or the fewest contractual protections — an outcome that is mathematically optimal but organizationally corrosive. Research on algorithmic fairness in scheduling has demonstrated that fairness constraints can be incorporated with modest cost increases (typically 1–3% above the unconstrained optimum) when properly formulated.[4] See Algorithmic Fairness and Bias in Workforce Scheduling for a full treatment.
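
One simple way to picture fairness entering the objective is as a penalty term added to labor cost. The sketch below assumes a penalty proportional to the gap between the most-burdened and least-burdened agent; the function names, the penalty form, and the weight are hypothetical and are not taken from the cited research.

```python
def fairness_penalty(undesirable_counts, weight=1.0):
    """Penalty that grows with the spread of undesirable shifts across agents.

    undesirable_counts: dict mapping agent id -> number of overnight,
    weekend, or holiday shifts assigned in the planning period.
    """
    counts = list(undesirable_counts.values())
    return weight * (max(counts) - min(counts))


def schedule_objective(labor_cost, undesirable_counts, weight=1.0):
    """Lower is better: raw labor cost plus the fairness penalty."""
    return labor_cost + fairness_penalty(undesirable_counts, weight)
```

With a weight of 1.0, a schedule costing 100 that gives one agent all four undesirable shifts scores 104, while a schedule costing 102 that splits them evenly scores 102, so the optimizer prefers the slightly more expensive but balanced schedule.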

The adoption barrier for AI scheduling is not technology — it is organizational trust. Schedulers who have built expertise over years are understandably resistant to systems that produce schedules they cannot fully explain. Effective implementation requires transparency in how the algorithm makes tradeoffs, clear override mechanisms, and a transition period where AI-generated schedules are reviewed before deployment.

AI in real-time operations

Main article: Workforce Digital Twins and Continuous Planning

Real-time operations — the monitoring and adjustment of staffing during the operating day — is where AI delivers its most immediately visible impact. Traditional real-time management depends on analysts watching dashboards, comparing actual volumes to forecast, and manually triggering responses (activating overtime, releasing agents to off-phone work, adjusting skill routing). AI compresses the observation-decision-action loop from minutes-to-hours to seconds.

Intraday reforecasting uses streaming data to continuously update the remainder-of-day volume and AHT forecast. Rather than relying on a static intraday profile generated the night before, ML models incorporate the actual trajectory of the day — current volume run rate, abandonment patterns, AHT trends, channel-shift signals — to produce an updated forecast for remaining intervals. This capability is particularly valuable on days when reality diverges sharply from forecast: weather events, viral social-media incidents, product outages, and marketing-driven volume spikes.
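
The simplest form of intraday reforecasting scales the remaining intervals by the ratio of actual to forecast volume so far. The sketch below shows that proportional run-rate adjustment as a baseline; it is not the ML approach described above, and the function name and rounding are illustrative assumptions.

```python
def reforecast_remaining(forecast, actuals):
    """Scale not-yet-elapsed intervals by the day's actual-to-forecast ratio.

    forecast: original per-interval forecast for the whole day.
    actuals: observed volumes for the intervals elapsed so far.
    Returns the updated forecast for the remaining intervals only.
    """
    elapsed = len(actuals)
    if elapsed == 0 or elapsed > len(forecast):
        raise ValueError("actuals must cover between 1 and len(forecast) intervals")
    forecast_so_far = sum(forecast[:elapsed])
    if forecast_so_far == 0:
        return list(forecast[elapsed:])  # no basis for a ratio; keep original
    ratio = sum(actuals) / forecast_so_far
    return [round(v * ratio, 1) for v in forecast[elapsed:]]
```

An ML reforecaster would replace the single ratio with a model that also weighs abandonment, AHT trends, and channel-shift signals, but the input and output shapes would look much the same.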

Automated schedule adjustments translate reforecast signals into staffing actions without human intervention for predefined scenarios. When predicted understaffing exceeds a threshold, the system can automatically extend shifts (for willing agents), activate voluntary overtime pools, restrict off-phone activities, or adjust skill-routing weights. When overstaffing is detected, agents can be released to training, coaching, or project work. The key design decision is the boundary between automated and human-approved actions — most mature implementations automate low-risk adjustments (shifting break times by 15 minutes) while requiring human approval for high-impact changes (cancelling training, mandating overtime).
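
The boundary between automated and human-approved actions can be expressed as a small routing rule. In this sketch, the `Action` type, the `high_impact` flag, and the gap threshold are hypothetical; a real implementation would draw them from the operation's own business rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    high_impact: bool  # True if the action needs human approval


def route_actions(predicted_gap, gap_threshold, candidate_actions):
    """Split candidate actions into (auto_execute, needs_approval) name lists.

    predicted_gap: forecast understaffing in agents for the target interval.
    Nothing is triggered unless the gap exceeds the threshold.
    """
    if predicted_gap <= gap_threshold:
        return [], []
    auto = [a.name for a in candidate_actions if not a.high_impact]
    needs_approval = [a.name for a in candidate_actions if a.high_impact]
    return auto, needs_approval
```

Keeping the impact classification in data rather than in code lets the operation move an action across the boundary as trust in the system grows, without redeploying the logic.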

Digital twins represent the operation as a continuously-updated simulation model that can be used to test "what if" scenarios in real time. A workforce digital twin ingests live ACD data, schedule data, and agent-state data to maintain a current-state model of the operation, then projects forward under different intervention scenarios. This capability transforms real-time management from reactive (responding to service-level breaches) to anticipatory (detecting emerging understaffing 45–90 minutes before it materializes and intervening preemptively).

The organizational prerequisite for AI-driven real-time operations is system integration. The AI layer must have read access to ACD/routing data, WFM schedule data, and agent-state feeds in near-real-time, plus write access (or at minimum, recommendation access) to scheduling and routing systems. In many operations, these systems are poorly integrated, making the technical plumbing more challenging than the AI itself.

AI in quality management

Main article: Speech Analytics

Quality management in contact centers has historically operated on sample-based evaluation — supervisors score 5–10 interactions per agent per month against a rubric. AI transforms quality management from a sampling exercise to a census operation: every interaction can be evaluated, scored, and analyzed.

Automated interaction scoring uses natural language processing to evaluate interactions against quality criteria — compliance with required disclosures, empathy signals, first-contact resolution indicators, process adherence. Speech analytics platforms transcribe voice interactions and apply classification models to identify quality-relevant patterns. Large language models have further expanded capability by enabling evaluation against nuanced, context-dependent criteria that rule-based systems cannot capture.

Real-time agent assist provides agents with AI-generated guidance during live interactions — suggesting knowledge-base articles, flagging compliance requirements, detecting customer sentiment shifts, and recommending next-best-actions. This capability blurs the boundary between quality management and real-time operations, creating a feedback loop where quality insights inform immediate coaching rather than waiting for the next monthly review cycle.

Predictive quality models identify interactions at risk of poor outcomes (escalation, repeat contact, customer churn) before they conclude, enabling supervisory intervention during the interaction rather than after. These models combine interaction features (duration, hold time, sentiment trajectory) with customer features (tenure, recent contact history, product complexity) to generate risk scores in real time.

The primary risk in AI-driven quality management is calibration drift between AI evaluators and human evaluators. If the AI scores interactions differently than human quality analysts — even if the AI is technically more consistent — the resulting disconnect erodes trust in the quality program. Rigorous calibration protocols, where AI and human evaluators score the same interaction set and discrepancies are resolved, are essential.
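
A calibration session of this kind needs an agreement measure. One common choice, assumed here for illustration because the source does not name a statistic, is Cohen's kappa, which corrects raw agreement for the agreement expected by chance.

```python
from collections import Counter


def cohens_kappa(ai_labels, human_labels):
    """Chance-corrected agreement between AI and human scores on the same items."""
    if len(ai_labels) != len(human_labels) or not ai_labels:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(ai_labels)
    observed = sum(a == h for a, h in zip(ai_labels, human_labels)) / n
    ai_freq = Counter(ai_labels)
    human_freq = Counter(human_labels)
    # Agreement expected if both raters labeled independently at their own rates.
    expected = sum(ai_freq[c] * human_freq[c] for c in ai_freq) / (n * n)
    if expected == 1:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)
```

Tracking kappa per quality criterion, rather than one overall figure, tends to show where the AI and human evaluators actually disagree and where the rubric itself may be ambiguous.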

AI as workforce

Main articles: Conversational AI, AI Containment Rate and Its Workforce Implications

The most consequential shift is not AI supporting WFM processes but AI becoming part of the workforce. When conversational AI systems handle customer interactions autonomously, or when robotic process automation completes back-office tasks without human involvement, AI transitions from a tool used by planners to a resource that planners must staff, schedule, and manage.

This shift creates planning problems that have no precedent in classical WFM.

Containment and its workforce implications

The AI containment rate, the percentage of interactions fully resolved by AI without human intervention, is the single most consequential metric for workforce planning in an AI-augmented operation. Each percentage point of containment represents a direct reduction in human-handled volume, but the relationship between containment and staffing is non-linear. As AI handles simpler interactions, the residual human workload becomes more complex, driving up average handle time and skill requirements for remaining agents. A 2023 National Bureau of Economic Research working paper found that a generative AI assistant increased customer support agent productivity by 14% on average, with the largest gains among less-experienced workers, but also noted that productivity measurement must account for changes in task composition.[5]

The planning implication is that workforce reductions lag containment gains. An operation achieving 40% AI containment does not reduce headcount by 40% — it reduces headcount by less (often 25–30%) because residual interactions are harder and longer. See AI Containment Rate and Its Workforce Implications for the mathematical treatment.
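
The arithmetic behind that gap can be sketched in a few lines. The sketch assumes that required headcount scales linearly with human workload (volume times handle time) and ignores queueing effects; the function name and the 20% handle-time uplift in the example are illustrative assumptions, not figures from the source.

```python
def headcount_reduction(containment, residual_aht_uplift):
    """Fractional drop in human workload after AI containment.

    containment: share of interactions fully resolved by AI (0 to 1).
    residual_aht_uplift: fractional AHT increase on the remaining,
    harder interactions (0.20 means handle time rises by 20%).
    """
    if not 0 <= containment <= 1:
        raise ValueError("containment must be between 0 and 1")
    residual_workload = (1 - containment) * (1 + residual_aht_uplift)
    return 1 - residual_workload
```

With 40% containment and a 20% handle-time uplift, the remaining workload is 0.6 × 1.2 = 0.72 of the original, so the reduction is about 28% rather than 40%. Uplifts between roughly 17% and 25% produce the 25–30% range cited above.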

The three-pool architecture

The Three-Pool Architecture provides the structural framework for planning a blended human-AI workforce. It decomposes the operation into three pools — Autonomous AI (Pool AA), Collaborative (Pool Collab), and Specialist (Pool Spec) — each requiring a fundamentally different staffing methodology:

- Pool AA is sized by cost modeling (investment, transaction cost, escalation tax, maintenance, rebound), not headcount.

- Pool Collab uses the Cognitive Portfolio Model (N*), where one human monitors N concurrent AI-handled interactions, bounded by cognitive capacity.

- Pool Spec uses simulation-based staffing for the concentrated-complexity residual workload.

This architecture is positioned at Level 4 — Advanced on the WFM Labs Maturity Model™.

Blended staffing models

Between fully autonomous AI and fully human handling lies a spectrum of blended staffing models. These include AI-assisted (AI provides suggestions, human executes), AI-led with human supervision (AI handles the interaction while a human monitors and can intervene), and human-led with AI augmentation (human handles the interaction with AI-powered tools). Each model requires different staffing assumptions, different skill profiles, and different planning methodologies. See Agentic AI Workforce Planning and Workforce Planning with AI Agents for emerging frameworks.

Supervision and escalation

AI systems handling customer interactions require supervision frameworks that define when and how human oversight is applied. Supervision models range from synchronous (human reviews every AI response before delivery) to asynchronous (human reviews a sample of completed AI interactions) to exception-based (human intervenes only when the AI signals uncertainty or policy boundaries). The supervision model directly affects staffing: synchronous supervision requires roughly one human per AI instance, while exception-based supervision may allow one human to oversee 20 or more AI instances, depending on the AI's confidence calibration.
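
The staffing consequence of the supervision ratio is a simple ceiling division. The function and parameter names below are hypothetical, and the sketch ignores shrinkage and occupancy, which a real staffing calculation would add.

```python
from math import ceil


def supervisors_needed(concurrent_ai_sessions, sessions_per_human):
    """Humans required to oversee a given number of concurrent AI sessions."""
    if sessions_per_human <= 0:
        raise ValueError("sessions_per_human must be positive")
    return ceil(concurrent_ai_sessions / sessions_per_human)
```

For 200 concurrent AI sessions, a synchronous model at one session per human needs 200 supervisors, while an exception-based model at 20 sessions per human needs 10.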

The human-AI workforce

Main article: Organizational Change Management for AI Workforce Transitions

The transition to a blended human-AI workforce is not primarily a technology problem; it is an organizational design problem. McKinsey's 2023 analysis of generative AI's economic potential identified customer operations as among the functions most exposed to AI-driven transformation, estimating potential productivity gains worth 30–45% of current function costs.[6] But potential gains and actual workforce transformation are separated by organizational readiness, change management, and the redesign of roles and career paths.

Workforce composition shifts

As AI absorbs routine interactions, the remaining human workforce shifts toward higher-complexity, higher-judgment work. This has several planning implications:

- Skill requirements increase. Agents handling post-AI residual work need deeper product knowledge, stronger problem-solving capability, and greater emotional intelligence. Hiring profiles, training curricula, and speed-to-proficiency expectations must be recalibrated.

- Role differentiation emerges. The traditional flat "agent" role fragments into AI trainers (who improve AI performance through feedback and content curation), AI supervisors (who monitor AI-handled interactions per the CPM), and specialist agents (who handle the concentrated-complexity residual). Each role has a different staffing model, compensation structure, and career path.

- Attrition patterns change. When routine work is automated, the agents most likely to leave are those who preferred predictable, repetitive tasks. Retention strategies must adapt to a workforce doing harder work with higher cognitive load.

Change management

The workforce transition requires deliberate change management. Gartner's 2024 survey of customer service leaders found that the majority planned to integrate AI into their operations within two years, but far fewer had change management plans addressing the workforce impact.[7] The gap between AI deployment plans and workforce transition plans is a reliable predictor of implementation failure.

Effective change management addresses three domains: communication (transparent explanation of how AI will affect roles), capability building (training existing staff for new roles), and career path redesign (ensuring employees see a future in the AI-augmented operation, not just a countdown to obsolescence). See Organizational Change Management for AI Workforce Transitions for a structured approach.

Implementation considerations

Data readiness

AI capability in WFM is bounded by data quality, not algorithmic sophistication. The most common implementation failures trace to data problems rather than model problems:

- Interval consistency. Forecasting models require consistent interval definitions over time. Changes in ACD configuration, queue groupings, or contact-type classifications create discontinuities that corrupt training data.

- Feature availability. ML models that incorporate external features (marketing calendars, weather, digital traffic) require those features to be reliably available in production, not just historically. A model trained on features that become unavailable after deployment is useless.

- Labeling quality. Supervised learning for quality scoring, intent classification, and routing requires labeled training data. The quality of labels — inter-rater reliability among human labelers, consistency of labeling guidelines over time — directly determines model quality.

- Volume thresholds. ML models require sufficient training data to learn stable patterns. Small queues (under 50 contacts per day) or new contact types with limited history are poor candidates for ML forecasting and are better served by classical statistical methods or judgmental approaches.

Organizations should assess data readiness before investing in AI capability. A high-sophistication algorithm applied to low-quality data produces confidently wrong outputs — the worst possible outcome for workforce planning.

Vendor evaluation

The WFM vendor landscape has shifted significantly as AI capabilities have become competitive differentiators. Evaluation criteria specific to AI-enabled WFM include:

- Model transparency. Can the organization understand why the AI made a specific forecast or scheduling decision? Black-box models create operational risk when planners cannot diagnose unexpected outputs.

- Retraining control. Who controls when and how models are retrained? Vendor-managed retraining on shared infrastructure may not account for organization-specific seasonality or operational changes.

- Integration architecture. AI capabilities that require data to leave the organization's environment raise data-governance concerns. On-premises inference, private cloud deployment, and data-residency guarantees are relevant evaluation dimensions.

- Fallback mechanisms. What happens when the AI component fails? Systems that degrade gracefully to rule-based operation are preferable to systems that fail entirely.

Forrester's 2024 analysis of the WFM technology market emphasized that organizations should evaluate AI capabilities not in isolation but as part of the end-to-end WFM workflow, noting that disconnected AI features create more complexity than value.[8]

The AI scaffolding approach

The AI Scaffolding Framework provides a structured approach to AI implementation that avoids the "rip and replace" pattern. Rather than deploying AI as a wholesale replacement for existing WFM processes, scaffolding introduces AI incrementally — first as a parallel system (running alongside existing processes for comparison), then as an assistant (providing recommendations that humans approve), then as an autonomous system (making decisions within defined guardrails). This graduated approach builds organizational trust, generates calibration data, and surfaces integration issues before they affect operations.

Economic framework

Main article: Automation Economics and ROI Decision Frameworks

ROI analysis

The return on investment for AI in WFM is real but frequently overstated by vendor business cases that account for only a subset of costs. A complete ROI analysis must include:

- Direct cost savings. Reduced headcount from AI containment, reduced overtime from better scheduling, reduced shrinkage from real-time optimization. These are measurable and typically constitute the vendor business case.

- Implementation costs. Platform licensing, integration engineering, data preparation, model training, and the opportunity cost of the implementation team's time. These are one-time but substantial — typically 1.5–3× the first-year licensing cost for enterprise WFM AI deployments.

- Ongoing operational costs. Model monitoring, retraining, content maintenance (for conversational AI), escalation handling, and the supervision workforce required for AI-handled interactions. These are recurring and often underestimated. See the Three-Pool Architecture cost model for a rigorous treatment.

- Transition costs. Change management, workforce retraining, severance or redeployment costs, and the temporary productivity loss during the transition period. Deloitte's fifth edition survey of enterprise AI deployments reported that organizations consistently underestimate transition-related costs, which erode projected first-year savings by a significant margin when properly accounted for.[9]

- Opportunity costs. What the organization cannot do while implementing AI in WFM — other technology investments deferred, management attention consumed, operational disruption during transition.

The Automation Economics framework provides structured methods for evaluating these costs against benefits. The critical insight is that AI ROI is portfolio-level, not feature-level: the return from AI forecasting, AI scheduling, and AI-handled interactions must be evaluated as an integrated system, not as independent line items.

Build versus buy

The build-versus-buy decision for AI in WFM is not binary. Most organizations operate on a spectrum:

- Buy (platform AI): Use AI capabilities embedded in the WFM platform (NICE, Verint, Calabrio, Genesys). Lowest implementation cost, fastest time-to-value, least customization. Appropriate for organizations at Level 2–3 on the maturity model.

- Configure (platform AI + custom models): Use platform AI for standard capabilities (forecasting, scheduling) and build custom models for organization-specific needs (specialized quality scoring, proprietary routing logic). Moderate implementation cost, requires data science capability.

- Build (custom AI stack): Build AI capabilities on general-purpose ML infrastructure. Highest cost, longest time-to-value, maximum customization. Appropriate only for very large operations (10,000+ agents) with dedicated data science teams and unique operational characteristics that platform AI cannot address.

The decision should be driven by the question: "Where does AI create competitive advantage versus operational efficiency?" For most organizations, WFM AI is an efficiency play (buy), not a differentiation play (build).

Total cost of AI-handled interactions

A common error in AI economics is comparing the marginal cost of an AI-handled interaction to the fully-loaded cost of a human-handled interaction. This comparison is misleading because it ignores the full cost stack for AI-handled interactions:

- Marginal interaction cost (API/compute cost per interaction)

- Escalation cost (human handling of interactions AI failed to resolve, at higher AHT due to the handoff)

- Maintenance cost (content updates, model retraining, regression testing)

- Rebound cost (induced demand from AI availability — see Service Demand Rebound Model)

- Supervision cost (human oversight of AI-handled interactions)

When all five cost layers are included, the true cost of an AI-handled interaction is typically several multiples of the marginal cost alone. The economics remain favorable compared to fully-human handling for suitable interaction types, but the margin is narrower than vendor business cases suggest. See the Pool AA (Autonomous AI) section of Three-Pool Architecture for the full cost model.
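
The five layers listed above can be summed per interaction to show how far the full cost sits from the marginal cost. The class name `AiCostStack` and all figures in the usage note are invented for illustration; the full cost model referenced above may allocate these layers differently.

```python
from dataclasses import dataclass, astuple


@dataclass(frozen=True)
class AiCostStack:
    """Per-interaction cost layers for AI-handled contacts, in one currency."""
    marginal: float      # API/compute cost
    escalation: float    # human handling of failed AI interactions, amortized
    maintenance: float   # content updates, retraining, regression testing
    rebound: float       # induced demand attributable to AI availability
    supervision: float   # human oversight of AI-handled interactions

    def total(self):
        """Full cost per AI-handled interaction across all five layers."""
        return sum(astuple(self))

    def multiple_of_marginal(self):
        """How many times larger the full cost is than the marginal cost."""
        return self.total() / self.marginal
```

With hypothetical layers of 0.10, 0.15, 0.05, 0.03, and 0.07, the full cost is about 0.40 per interaction, roughly four times the marginal cost alone.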

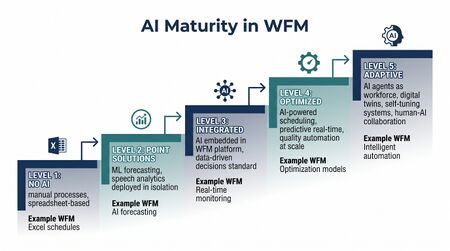

AI maturity in the WFM Labs Maturity Model

The WFM Labs Maturity Model™ positions AI capabilities across five maturity levels. AI is not a separate track — it is woven into the progression of every WFM function:

| Level | AI posture | Characteristics |
|---|---|---|
| Level 1 — Foundational | No AI; manual processes | Spreadsheet-based forecasting, manual scheduling, reactive real-time management. AI is not present. Data infrastructure may be insufficient to support AI adoption. |
| Level 2 — Developing | Rule-based automation | WFM platform automates schedule generation using heuristic algorithms. Forecasting uses built-in statistical methods (exponential smoothing, regression). No machine learning. Automation is deterministic and explainable. |
| Level 3 — Established | ML-augmented planning | Machine learning models supplement or replace classical forecasting. Schedule optimization uses advanced algorithms. Speech analytics enables automated quality scoring. AI is a tool: planners use AI outputs but retain decision authority. Probabilistic Forecasting and Probabilistic Scheduling emerge. |
| Level 4 — Advanced | AI as workforce member | AI handles interactions directly (Conversational AI). The Three-Pool Architecture structures the blended workforce. The Cognitive Portfolio Model (N*) governs collaborative staffing. Workforce planning must account for AI capacity, AI failure modes, and human-AI interaction dynamics. AI Agent Orchestration for WFM coordinates across pools. |
| Level 5 — Pioneering | Autonomous, self-optimizing operations | Digital twins enable continuous planning. AI systems self-tune within guardrails. Human role shifts to governance, exception handling, and strategic direction. The operation functions as an integrated human-AI system with closed-loop optimization across all WFM functions. |

Progression through these levels is not automatic. Each level transition requires specific investments in data infrastructure, organizational capability, and change management. Most contact center operations as of 2025 operate at Level 2 or early Level 3, with pockets of Level 4 capability in large, technology-forward organizations.[10]

Risks and limitations

AI adoption in WFM carries risks that practitioners must manage actively:

- Automation bias. Planners who trust AI outputs without verification are vulnerable to systematic errors that compound over time. AI-generated forecasts and schedules should be monitored against outcomes with the same rigor applied to human-generated plans.

- Data dependency. AI models trained on historical data encode historical patterns, including patterns the organization wants to change (seasonal understaffing, inequitable schedule distribution, biased quality scoring). See Algorithmic Fairness and Bias in Workforce Scheduling.

- Explainability gaps. Complex ML models (deep neural networks, large ensembles) may produce accurate outputs that planners cannot explain to operations leadership. This creates accountability gaps when AI-driven decisions produce poor outcomes.

- Vendor lock-in. AI capabilities embedded in WFM platforms create switching costs beyond standard platform lock-in: trained models, calibration data, and integration architectures are platform-specific. Organizations should evaluate data portability and model export capabilities during vendor selection.

- Workforce disruption. Poorly managed AI transitions damage employee engagement, increase attrition, and erode institutional knowledge. The productivity gains from AI can be offset by the costs of workforce instability. Acemoglu and Restrepo's study of industrial robot adoption in US local labor markets found that greater exposure to automation reduced employment and wages.[11] Displacement is therefore not automatically offset by new task creation, which strengthens the case for deliberate investment in retraining and role redesign.

- Customer preference. Organizations must account for customer attitudes toward AI-handled service interactions. Gartner's 2024 customer experience research highlighted that customer acceptance of AI varies significantly by interaction complexity and urgency, suggesting that containment targets should be calibrated by interaction type rather than applied uniformly.[12]
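The monitoring recommended under automation bias can be sketched in a few lines. The example below is a minimal illustration, not a vendor method: the function name `check_forecast`, the `ForecastCheck` record, and the 10% and 5% thresholds are all assumptions chosen for the example. It compares a forecast with actual volumes and reports both weighted absolute percentage error (WAPE) and signed bias, because a forecast can stay within an accuracy tolerance while still erring consistently in one direction.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ForecastCheck:
    wape: float    # total absolute error as a fraction of actual volume
    bias: float    # signed error as a fraction of actual volume (negative = under-forecast)
    flagged: bool  # True when either illustrative threshold is breached


def check_forecast(
    forecast: Sequence[float],
    actual: Sequence[float],
    wape_limit: float = 0.10,  # hypothetical tolerance, not an industry standard
    bias_limit: float = 0.05,  # hypothetical tolerance, not an industry standard
) -> ForecastCheck:
    """Compare interval forecasts with actuals and flag large or one-sided error."""
    if len(forecast) != len(actual) or not actual:
        raise ValueError("forecast and actual must be non-empty and the same length")
    total_actual = sum(actual)
    if total_actual <= 0:
        raise ValueError("total actual volume must be positive")

    abs_error = sum(abs(f - a) for f, a in zip(forecast, actual))
    signed_error = sum(f - a for f, a in zip(forecast, actual))

    wape = abs_error / total_actual
    bias = signed_error / total_actual
    return ForecastCheck(wape, bias, wape > wape_limit or abs(bias) > bias_limit)


# Every interval is under-forecast by 10 contacts: WAPE (about 8.7%) is inside
# its limit, but the bias (about -8.7%) is not, so the check is flagged.
report = check_forecast([100, 120, 90, 110], [110, 130, 100, 120])
print(report)
```

Tracking bias separately from WAPE is the design choice that matters here: errors that cancel out across intervals are noise, while errors that share a sign are the systematic kind that compounds when planners stop verifying AI output.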

Emerging frontiers

Several developments are reshaping AI in WFM:

- Agentic AI architectures. Autonomous AI agents that can plan, reason, use tools, and execute multi-step workflows represent the next evolution beyond conversational AI. In WFM, agentic AI could manage scheduling autonomously, conduct capacity planning scenarios, or orchestrate real-time adjustments across multiple queues simultaneously. See Agentic AI Workforce Planning and AI Agent Orchestration for WFM.

- Foundation models for WFM. Large language models fine-tuned on operational data could enable natural-language interaction with WFM systems ("Show me understaffed intervals next Tuesday and suggest fixes"), reducing the expertise barrier for WFM platform use.

- Federated learning. Training ML models across multiple contact center operations without sharing raw data could improve model performance for organizations with limited individual training data. This is particularly relevant for smaller operations whose data volumes fall below what effective ML requires.

- Causal ML for WFM. Moving beyond predictive models (what will happen) to causal models (what will happen if we intervene) would enable WFM AI to answer questions like "If we add 5 FTE to the morning shift, what is the causal effect on afternoon abandonment?" rather than simply predicting what abandonment will be. This represents a fundamental shift from forecasting to counterfactual planning.
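The gap between prediction and causal estimation described in the last item can be shown with a toy calculation. The sketch below uses invented daily records (the `days` data, the demand levels, and the abandonment rates are all hypothetical) in which extra staff are mostly added on high-demand days. It uses simple stratified adjustment rather than a full causal ML method, and the adjusted figure is a causal estimate only under the assumption that demand level is the sole confounder.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (demand_level, extra_staff_added, afternoon_abandonment_rate).
# Extra staff are added mostly on high-demand days, which confounds the comparison.
days = (
    [("low", False, 0.04)] * 8 + [("low", True, 0.02)] * 2
    + [("high", False, 0.12)] * 2 + [("high", True, 0.10)] * 8
)


def naive_difference(records):
    """Mean abandonment with extra staff minus mean without, ignoring demand."""
    treated = [rate for _, staffed, rate in records if staffed]
    control = [rate for _, staffed, rate in records if not staffed]
    return mean(treated) - mean(control)


def stratified_effect(records):
    """Average the within-demand-level differences, weighted by stratum size.

    Assumes every demand level contains both staffed and unstaffed days.
    """
    strata = defaultdict(lambda: {True: [], False: []})
    for level, staffed, rate in records:
        strata[level][staffed].append(rate)

    total = len(records)
    effect = 0.0
    for groups in strata.values():
        size = len(groups[True]) + len(groups[False])
        effect += (size / total) * (mean(groups[True]) - mean(groups[False]))
    return effect


print(f"naive difference:  {naive_difference(days):+.3f}")   # positive: staffing looks harmful
print(f"stratified effect: {stratified_effect(days):+.3f}")  # negative: staffing helps
```

In this toy data the naive comparison suggests that adding staff raises abandonment, because staffed days are also the busy days. Comparing like with like reverses the sign. A purely predictive model trained on the same records would reproduce the naive association, which is why counterfactual planning needs explicit assumptions about what drives both the intervention and the outcome.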

See also

- Contact Center Modernization — Program context for applied AI in the contact center

- Generative AI Impact on Contact Center Operations

- Artificial Intelligence Fundamentals

- Machine Learning Concepts

- AI Scaffolding Framework

- Three-Pool Architecture

- Cognitive Portfolio Model (N*)

- Conversational AI

- Speech Analytics

- Robotic Process Automation

- Algorithmic Fairness and Bias in Workforce Scheduling

- AI Containment Rate and Its Workforce Implications

- Automation Economics and ROI Decision Frameworks

- Organizational Change Management for AI Workforce Transitions

- Workforce Digital Twins and Continuous Planning

- Human AI Blended Staffing Models

- Human AI Supervision and Escalation Frameworks

- Deterministic vs Probabilistic Models

References

1. Makridakis, S., Spiliotis, E., & Assimakopoulos, V. (2022). "M5 accuracy competition: Results, findings, and conclusions." International Journal of Forecasting, 38(4), 1346–1364.

2. Salinas, D., Flunkert, V., Gasthaus, J., & Januschowski, T. (2020). "DeepAR: Probabilistic forecasting with autoregressive recurrent networks." International Journal of Forecasting, 36(3), 1181–1191.

3. Ernst, A.T., Jiang, H., Krishnamoorthy, M., & Sier, D. (2004). "Staff scheduling and rostering: A review of applications, methods and models." European Journal of Operational Research, 153(1), 3–27.

4. Bertsimas, D., Farias, V.F., & Trichakis, N. (2011). "The Price of Fairness." Operations Research, 59(1), 17–31.

5. Brynjolfsson, E., Li, D., & Raymond, L. (2023). "Generative AI at Work." NBER Working Paper No. 31161.

6. Chui, M., Hazan, E., Roberts, R., et al. (2023). "The economic potential of generative AI: The next productivity frontier." McKinsey Global Institute report, June 2023.

7. Gartner (2024). "Gartner Survey Finds 64% of Customers Would Prefer That Companies Didn't Use AI in Customer Service." Gartner Newsroom, July 2024.

8. Forrester Research (2024). "The Forrester Wave: Workforce Optimization, Q2 2024." Forrester Research, Inc.

9. Deloitte (2023). "State of AI in the Enterprise, 5th Edition." Deloitte AI Institute.

10. International Customer Management Institute (2024). "Contact Center Technology Trends Report." ICMI Research.

11. Acemoglu, D. & Restrepo, P. (2020). "Robots and Jobs: Evidence from US Labor Markets." Journal of Political Economy, 128(6), 2188–2244.

12. Gartner (2024). "Customer Experience Survey: AI in Service Delivery." Gartner Research.