ARIMA Models

ARIMA Models (AutoRegressive Integrated Moving Average) are a class of forecasting methods that explicitly model the autocorrelation structure of a time series. Where ETS models a series through level, trend, and seasonal components, ARIMA models a series through its own past values and past forecast errors.

For workforce management, ARIMA is the next step beyond ETS when residual diagnostics show that ETS has missed predictable structure in the data — typically autocorrelation patterns ETS cannot represent[1]. ARIMA is more flexible than ETS but requires more careful diagnostic work, more data, and more practitioner judgment to fit well.

This page documents AR, MA, ARIMA, and SARIMA forms with equations, parameter intuition, and the WFM contexts where ARIMA earns its complexity.

The AR, MA, and I components

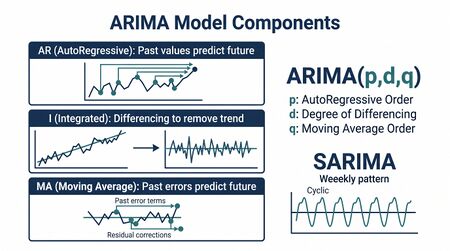

ARIMA decomposes into three components:

- AR(p) — autoregressive: the current value depends on its past values

- MA(q) — moving average: the current value depends on past forecast errors (not to be confused with the simple moving average smoothing technique)

- I(d) — integration: the series has been differenced $d$ times to achieve stationarity

ARIMA(p,d,q) combines all three.

AR(p) — autoregressive

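A pure autoregressive model expresses the current value as a linear combination of past values:

$$y_t = c + \phi_1 y_{t-1} + \phi_2 y_{t-2} + \dots + \phi_p y_{t-p} + \varepsilon_t$$

where $\phi_1, \dots, \phi_p$ are coefficients, $c$ is a constant, and $\varepsilon_t$ is white noise. The order $p$ is how far back the model looks. AR(1) means today depends on yesterday. AR(7) on a daily series means today depends on each of the previous seven days.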

WFM intuition: if call volume today is correlated with volume one week ago (after seasonal adjustment), an AR(7) component captures that. If volume today is correlated only with yesterday, AR(1) suffices.
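An AR forecast is computed recursively, and the recursion fits in a few lines of Python. This is a minimal sketch that assumes the constant $c$ and the coefficients $\phi_i$ are already known. In practice they are estimated from history. The function name `ar_forecast` and the numbers are hypothetical. For forecasts more than one step ahead, each earlier forecast stands in for the value that has not been observed yet, and future errors are set to their expected value of zero.

```python
from typing import Sequence


def ar_forecast(history: Sequence[float], phi: Sequence[float], c: float, steps: int) -> list[float]:
    """Recursive point forecasts from an AR(p) model with known coefficients.

    phi[0] multiplies y_{t-1}, phi[1] multiplies y_{t-2}, and so on.
    Future errors are unknown, so their expected value (zero) is used.
    """
    p = len(phi)
    if len(history) < p:
        raise ValueError("need at least p past observations")
    values = list(history)
    forecasts: list[float] = []
    for _ in range(steps):
        # y_hat = c + phi_1 * y_{t-1} + ... + phi_p * y_{t-p}
        y_hat = c + sum(phi[i] * values[-1 - i] for i in range(p))
        forecasts.append(y_hat)
        values.append(y_hat)  # the forecast stands in for the unobserved value
    return forecasts


# Hypothetical AR(1): c = 20, phi_1 = 0.8, most recent volume = 120
print(ar_forecast([100.0, 120.0], phi=[0.8], c=20.0, steps=3))
```

When $|\phi_1| < 1$, the forecasts decay geometrically toward the long-run mean $c / (1 - \phi_1)$. In this example that mean is $20 / 0.2 = 100$.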

MA(q) — moving average

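A pure moving average model expresses the current value as a linear combination of past forecast errors:

$$y_t = c + \varepsilon_t + \theta_1 \varepsilon_{t-1} + \theta_2 \varepsilon_{t-2} + \dots + \theta_q \varepsilon_{t-q}$$

where $\theta_1, \dots, \theta_q$ are coefficients and $\varepsilon_t$ is white noise.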

This is not the simple "moving average" of statistics. The "moving average" here is over past errors — past surprises — not past values.

WFM intuition: MA components capture the persistence of shocks. If yesterday's volume spike (an unexpected error in yesterday's forecast) tends to persist into today, an MA(1) component models that persistence.
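The following MA(1) sketch in Python shows what "persistence of shocks" means mechanically. It assumes $c$ and $\theta_1$ are known and that the error before the first observation was zero. That start-up assumption is a common simplification; full estimation handles the start-up differently. The function name and figures are hypothetical. Past errors are never observed directly, so the sketch reconstructs them recursively from the data.

```python
from typing import Sequence


def ma1_forecast(history: Sequence[float], c: float, theta: float, steps: int) -> list[float]:
    """Point forecasts from an MA(1) model: y_t = c + eps_t + theta * eps_{t-1}."""
    eps = 0.0  # assumed error before the first observation
    for y in history:
        # Rearranging the model: eps_t = y_t - c - theta * eps_{t-1}
        eps = y - c - theta * eps
    forecasts = []
    for h in range(1, steps + 1):
        # One step ahead, the last shock still contributes theta * eps_T.
        # From two steps ahead, every error in the equation is a future error
        # with expected value zero, so the forecast reverts to c.
        forecasts.append(c + theta * eps if h == 1 else c)
    return forecasts


# Hypothetical: mean volume 100, theta_1 = 0.5, the latest period ran 30 calls over
print(ma1_forecast([100.0, 130.0], c=100.0, theta=0.5, steps=3))  # [115.0, 100.0, 100.0]
```

The behavior differs from an AR model. In an MA(q) model, a shock affects exactly the next $q$ forecasts and then disappears. In an AR model, its influence decays gradually.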

I(d) — differencing for stationarity

ARIMA assumes the input series is stationary — its statistical structure (mean, variance, autocorrelation) does not change over time. Most WFM volume series are not stationary in raw form: there are trends, seasonal cycles, and structural breaks.

Differencing transforms a non-stationary series into a stationary one. First differences:

$$y'_t = y_t - y_{t-1}$$

If first differences are stationary, $d = 1$. Second differences, the difference of the first differences ($y''_t = y'_t - y'_{t-1}$), are sometimes needed for series with strong trend. $d > 2$ is rare in practice.

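The Augmented Dickey-Fuller (ADF) test and the KPSS test diagnose whether differencing is needed. The two are complementary. ADF takes non-stationarity (a unit root) as its null hypothesis, while KPSS takes stationarity as its null. Most automated ARIMA routines run tests like these and select $d$ automatically.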
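Here is a minimal Python sketch of differencing and of reversing it. It assumes plain lists with no missing values, and the helper names are hypothetical. Reversing matters because the model forecasts on the differenced scale, and those forecasts must be added back onto the last observed levels. The examples show why a steady trend needs $d = 1$ while an accelerating trend needs $d = 2$. They also show that a lag-7 difference removes a pattern that repeats exactly every week.

```python
from typing import Sequence


def difference(series: Sequence[float], lag: int = 1) -> list[float]:
    """Return y_t - y_{t-lag}. lag=1 gives first differences; lag=m gives seasonal differences."""
    return [series[t] - series[t - lag] for t in range(lag, len(series))]


def undifference(last_values: Sequence[float], diff_forecasts: Sequence[float], lag: int = 1) -> list[float]:
    """Invert one round of differencing for forecasts.

    last_values must hold at least the final `lag` observations of the original series.
    """
    restored = list(last_values[-lag:])
    for d in diff_forecasts:
        restored.append(restored[-lag] + d)  # y_t = y_{t-lag} + (y_t - y_{t-lag})
    return restored[lag:]


linear = [10, 12, 14, 16, 18]    # steady trend: d = 1 is enough
quadratic = [1, 4, 9, 16, 25]    # accelerating trend: needs d = 2
week = [5, 9, 9, 8, 7, 3, 2]     # hypothetical weekly shape repeated twice below
print(difference(linear))                   # [2, 2, 2, 2]
print(difference(difference(quadratic)))    # [2, 2, 2]
print(difference(week * 2, lag=7))          # [0, 0, 0, 0, 0, 0, 0]
print(undifference(linear, [2, 2]))         # [20, 22]
```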

ARIMA(p,d,q)

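The full non-seasonal ARIMA model combines all three:

$$y'_t = c + \phi_1 y'_{t-1} + \dots + \phi_p y'_{t-p} + \theta_1 \varepsilon_{t-1} + \dots + \theta_q \varepsilon_{t-q} + \varepsilon_t$$

where $y'_t$ is the series after it has been differenced $d$ times.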
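Forecasting from ARIMA combines differencing with the AR recursion: model the differenced series, then integrate the forecasts back to the original scale. This minimal Python sketch covers ARIMA(p,1,0) only, with no MA terms to keep it short, and assumes known coefficients. The function name is hypothetical, and the sketch is not a description of how any particular library implements ARIMA. Setting `phi=[]` and `c=0` reduces it to ARIMA(0,1,0), the random walk, whose forecast is simply the last observed value.

```python
from typing import Sequence


def arima_p10_forecast(history: Sequence[float], phi: Sequence[float], c: float, steps: int) -> list[float]:
    """Point forecasts for ARIMA(p,1,0) with known coefficients.

    1. Difference once: y'_t = y_t - y_{t-1}.
    2. Forecast y' recursively with the AR(p) equation (future errors -> 0).
    3. Integrate: add each forecast difference to the previous level.
    """
    diffs = [history[t] - history[t - 1] for t in range(1, len(history))]
    p = len(phi)
    if len(diffs) < p:
        raise ValueError("need at least p + 1 observations")
    level = history[-1]
    forecasts = []
    for _ in range(steps):
        d_hat = c + sum(phi[i] * diffs[-1 - i] for i in range(p))
        diffs.append(d_hat)  # forecast difference feeds the next step
        level += d_hat       # integrate back to the original scale
        forecasts.append(level)
    return forecasts


# Hypothetical ARIMA(1,1,0) with phi_1 = 0.5 and no constant
print(arima_p10_forecast([100.0, 104.0, 110.0], phi=[0.5], c=0.0, steps=3))  # [113.0, 114.5, 115.25]
# ARIMA(0,1,0): the random walk repeats the last value (the naive forecast)
print(arima_p10_forecast([100.0, 104.0, 110.0], phi=[], c=0.0, steps=2))     # [110.0, 110.0]
```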

SARIMA — seasonal ARIMA

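For seasonal series (almost all WFM volume data), SARIMA adds seasonal AR, seasonal MA, and seasonal differencing components. Notation:

$$\text{ARIMA}(p,d,q)(P,D,Q)_m$$

where:

- $(p,d,q)$ is the non-seasonal part

- $(P,D,Q)$ is the seasonal part: the seasonal AR order, seasonal differencing order, and seasonal MA order, all operating at multiples of lag $m$

- $m$ is the seasonal period (e.g., 7 for weekly seasonality on daily data)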

A common WFM specification is ARIMA(1,1,1)(1,1,1)$_7$ on daily call volume. It uses first differences for trend and seasonal differences at lag 7 for weekly seasonality, with AR(1) and MA(1) terms at both the seasonal and non-seasonal levels.
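The backshift operator $B$ is defined by $B y_t = y_{t-1}$. Written with it, this specification takes the standard multiplicative form below, where $\Phi_1$ and $\Theta_1$ are the seasonal AR and MA coefficients. Expanding only the two differencing factors shows which past observations each modeled value draws on: yesterday ($y_{t-1}$), the same day last week ($y_{t-7}$), and the day before that ($y_{t-8}$).

$$(1 - \phi_1 B)(1 - \Phi_1 B^{7})(1 - B)(1 - B^{7})\,y_t = (1 + \theta_1 B)(1 + \Theta_1 B^{7})\,\varepsilon_t$$

$$(1 - B)(1 - B^{7})\,y_t = (1 - B - B^{7} + B^{8})\,y_t = y_t - y_{t-1} - y_{t-7} + y_{t-8}$$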

Identifying p, d, q from data

Before automated tools, ARIMA orders were chosen by inspecting the autocorrelation function (ACF) and partial autocorrelation function (PACF) of the differenced series:

- Pure AR(p) — ACF decays gradually; PACF cuts off after lag p

- Pure MA(q) — ACF cuts off after lag q; PACF decays gradually

- ARMA — both decay gradually
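The sample ACF and PACF behind these patterns can be computed with the Python standard library alone. This sketch uses the usual sample-autocorrelation estimator and the Durbin-Levinson recursion for the PACF. The function names are hypothetical. In practice a library routine would normally be used instead, for example `acf` and `pacf` in `statsmodels.tsa.stattools`. The simulated AR(1) series at the end is illustrative only.

```python
import random
from typing import Sequence


def acf(x: Sequence[float], max_lag: int) -> list[float]:
    """Sample autocorrelations r_0..r_max_lag (r_0 is always 1). x must not be constant."""
    n = len(x)
    mean = sum(x) / n
    dev = [v - mean for v in x]
    denom = sum(d * d for d in dev)
    return [sum(dev[t] * dev[t - k] for t in range(k, n)) / denom for k in range(max_lag + 1)]


def pacf(x: Sequence[float], max_lag: int) -> list[float]:
    """Partial autocorrelations at lags 1..max_lag via the Durbin-Levinson recursion."""
    r = acf(x, max_lag)
    out: list[float] = []
    prev: list[float] = []  # AR(k-1) coefficients phi_{k-1,1}, ..., phi_{k-1,k-1}
    for k in range(1, max_lag + 1):
        num = r[k] - sum(prev[j] * r[k - 1 - j] for j in range(k - 1))
        den = 1.0 - sum(prev[j] * r[j + 1] for j in range(k - 1))
        phi_kk = num / den  # the lag-k partial autocorrelation
        prev = [prev[j] - phi_kk * prev[k - 2 - j] for j in range(k - 1)] + [phi_kk]
        out.append(phi_kk)
    return out


# Illustrative AR(1) simulation with phi_1 = 0.7, seeded for repeatability
rng = random.Random(42)
y = [0.0]
for _ in range(999):
    y.append(0.7 * y[-1] + rng.gauss(0.0, 1.0))

print("ACF :", [round(v, 2) for v in acf(y, 5)[1:]])
print("PACF:", [round(v, 2) for v in pacf(y, 5)])
```

For an AR(1) process with $\phi_1 = 0.7$, theory predicts ACF values near 0.7, 0.49, 0.34, and so on. It also predicts a PACF near 0.7 at lag 1 and near zero afterwards. A finite sample only approximates these values.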

In modern practice, automated routines (like `auto.arima` in R or `pmdarima` in Python) search the model space and select by AIC, BIC, or cross-validated error. The practitioner verifies the result is reasonable rather than choosing orders by hand.

Practitioner discipline: if the automated routine selects ARIMA(0,1,0) (just differencing, no AR or MA terms), that is a random walk. Without a drift term, its forecast is simply the last observed value. The data is telling you that no AR or MA structure adds value beyond the naive forecast. Trust the result; do not force complexity.
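AIC trades goodness of fit against the number of estimated parameters. For Gaussian errors it can be computed from the residual sum of squares, up to an additive constant that cancels when models are compared. This Python sketch uses hypothetical SSE values, not results from real data. It counts the error variance as a parameter, so ARIMA(0,1,0) has $k = 1$. One caveat from standard practice: AIC values are only comparable between models with the same differencing order, because differencing changes the data the likelihood is computed on. R's `auto.arima` uses the small-sample corrected AICc by default.

```python
import math


def gaussian_aic(sse: float, n: int, k: int) -> float:
    """AIC for Gaussian errors, up to an additive constant: n * ln(SSE / n) + 2k."""
    return n * math.log(sse / n) + 2 * k


def gaussian_aicc(sse: float, n: int, k: int) -> float:
    """Small-sample corrected AIC: adds 2k(k + 1) / (n - k - 1) to the AIC."""
    return gaussian_aic(sse, n, k) + 2 * k * (k + 1) / (n - k - 1)


# Hypothetical candidates fit to the same 100 differenced observations:
# name -> (sum of squared residuals, number of estimated parameters)
candidates = {
    "ARIMA(0,1,0)": (5000.0, 1),  # random walk: only the error variance
    "ARIMA(1,1,1)": (4950.0, 3),  # phi_1, theta_1, and the error variance
}
n = 100
for name, (sse, k) in candidates.items():
    print(f"{name}: AIC={gaussian_aic(sse, n, k):.1f}  AICc={gaussian_aicc(sse, n, k):.1f}")

best = min(candidates, key=lambda name: gaussian_aicc(candidates[name][0], n, candidates[name][1]))
print("selected:", best)  # selected: ARIMA(0,1,0)
```

Here a 1% reduction in squared error does not pay for two extra parameters, so the criterion keeps the random walk. This is the outcome the practitioner-discipline note describes.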

When ARIMA beats ETS in WFM

ARIMA's flexibility makes it the better choice when:

- Residuals from ETS show autocorrelation. If ETS residuals have a clear ACF pattern, ETS has missed structure. ARIMA can capture it.

- The series has irregular seasonality or non-standard cycles. ARIMA's seasonal differencing is more flexible than ETS's seasonal smoothing for non-standard patterns.

- You need an explicit error structure. ARIMA's MA component models error persistence directly; ETS's error structure is implicit.

- You are combining regression with explanatory variables. Regression on business drivers (marketing spend, holidays) combined with ARIMA errors produces a regression-with-ARIMA-errors model, often loosely called ARIMAX. This is a major WFM use case for ARIMA.

ETS often beats ARIMA on shorter, cleaner, strongly seasonal series. ARIMA wins on longer, irregular series where the autocorrelation structure carries forecastable signal ETS misses.

Common WFM pitfalls

- Insufficient data. ARIMA needs roughly two full seasonal cycles to fit reliably. With weekly seasonality ($m = 7$) on daily data, that is at least 14 days of history. With yearly seasonality ($m = 12$) on monthly data, it is at least 24 months. Shorter series should default to ETS or seasonal naive.

- Treating ARIMA as a black box. Automated selection is reliable, but blindly trusting the output without checking residual diagnostics produces overconfident forecasts on poorly specified models.

- Over-differencing. If $d = 2$ is selected, double-check; $d \le 1$ is almost always enough. Over-differencing destroys long-run information.

- Ignoring outliers. Like ETS, ARIMA is sensitive to outliers. Pre-process or use robust ARIMA variants for series with known anomaly contamination.

- Stationarity tests on seasonal data. Run KPSS or ADF on the seasonally-differenced series, not the raw series. Otherwise the test reports stationarity issues that are just seasonality.

Diagnostic checks after fitting

After fitting an ARIMA model, run four checks before trusting the forecast:

- Residual ACF and PACF. The residuals should look like white noise. Patterns mean the model has missed structure.

- Ljung-Box test on residuals. Formal test for residual autocorrelation. A $p$-value above 0.05 means the test finds no significant autocorrelation, which usually indicates clean residuals.

- Residual normality. Histogram or Q-Q plot of residuals. Non-normal residuals are not fatal for point forecasts, but they make prediction intervals that assume normality unreliable.

- Forecast against held-out data. Out-of-sample error on a held-out portion is the only measure that matters for production use.
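The Ljung-Box statistic is simple enough to compute directly. This standard-library Python sketch follows the usual formula $Q = n(n+2)\sum_{k=1}^{h} r_k^2/(n-k)$ and compares $Q$ with a chi-square distribution on $h - (p + q)$ degrees of freedom. It computes the chi-square tail probability with a series expansion of the regularized incomplete gamma function. The function names are hypothetical. In practice, `acorr_ljungbox` in `statsmodels.stats.diagnostic` (Python) or `Box.test(..., type = "Ljung-Box", fitdf = p + q)` (R) does the same job.

```python
import math
from typing import Sequence


def chi2_sf(x: float, dof: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable with `dof` degrees of freedom."""
    if x <= 0:
        return 1.0
    a, z = dof / 2.0, x / 2.0
    if z > a + 40.0 * math.sqrt(a) + 40.0:
        return 0.0  # far beyond the bulk of the distribution; the tail is negligible
    # Lower regularized gamma: P(a, z) = z^a e^-z / Gamma(a+1) * sum_n z^n / ((a+1)...(a+n))
    term, total, n = 1.0, 1.0, 0
    while term > 1e-15 * total:
        n += 1
        term *= z / (a + n)
        total += term
    lower = math.exp(a * math.log(z) - z - math.lgamma(a + 1.0)) * total
    return max(0.0, 1.0 - lower)


def ljung_box(residuals: Sequence[float], h: int, fitted_params: int = 0) -> tuple[float, float]:
    """Ljung-Box Q over lags 1..h and its p-value; fitted_params = p + q of the ARIMA model."""
    dof = h - fitted_params
    if dof <= 0:
        raise ValueError("h must exceed the number of fitted AR and MA parameters")
    n = len(residuals)
    mean = sum(residuals) / n
    dev = [e - mean for e in residuals]
    denom = sum(d * d for d in dev)
    q = 0.0
    for k in range(1, h + 1):
        r_k = sum(dev[t] * dev[t - k] for t in range(k, n)) / denom  # lag-k autocorrelation
        q += r_k * r_k / (n - k)
    q *= n * (n + 2)
    return q, chi2_sf(q, dof)


# Hypothetical residuals that alternate in sign: clearly not white noise
q_stat, p_value = ljung_box([1.0, -1.0] * 20, h=10, fitted_params=2)
print(f"Q = {q_stat:.1f}, p = {p_value:.4f}")
```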

ARIMAX — regression with ARIMA errors

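Pure ARIMA cannot incorporate known business drivers. Most WFM forecasts that respond to marketing spend, product launches, or holiday calendars require the ARIMAX form:

$$y_t = \beta_0 + \beta_1 x_{1,t} + \dots + \beta_k x_{k,t} + \eta_t$$

where $x_{1,t}, \dots, x_{k,t}$ are the explanatory variables (business drivers) and the error $\eta_t$ follows an ARIMA process. The regression captures the response to known drivers; the ARIMA errors capture the autocorrelation that remains.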

This is often the right method for capacity planning forecasts where business inputs (campaign spend, promotional calendar) are known in advance and have measurable historical effect on volume.
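A regression with ARIMA errors is usually estimated jointly by maximum likelihood. Two examples are the `xreg` argument of `Arima` in R's forecast package and the `exog` argument of `SARIMAX` in Python's statsmodels. The standard-library Python sketch below is a deliberately simplified two-step approximation, not that joint estimator. It fits ordinary least squares for a single driver, then fits an AR(1) coefficient to the regression residuals. The function names and the toy campaign data are hypothetical. Forecasting requires the future values of the driver, which is why this form suits drivers that are known in advance.

```python
from typing import Sequence


def fit_regression_ar1_errors(y: Sequence[float], x: Sequence[float]) -> tuple[float, float, float, float]:
    """Two-step fit of y_t = b0 + b1 * x_t + eta_t, with eta_t treated as AR(1).

    x must vary. Returns (b0, b1, phi, last_residual).
    """
    n = len(y)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b1 = sxy / sxx                # step 1: OLS slope on the driver
    b0 = y_bar - b1 * x_bar       # step 1: OLS intercept
    eta = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
    num = sum(eta[t] * eta[t - 1] for t in range(1, n))
    den = sum(eta[t - 1] ** 2 for t in range(1, n))
    phi = num / den if den > 1e-12 else 0.0  # step 2: AR(1) fitted to the residuals
    return b0, b1, phi, eta[-1]


def forecast_regression_ar1_errors(params: tuple[float, float, float, float],
                                   future_x: Sequence[float]) -> list[float]:
    """Forecast = regression on the known future driver + decaying AR(1) error."""
    b0, b1, phi, eta = params
    forecasts = []
    for x_next in future_x:
        eta *= phi  # expected error h steps ahead: phi^h * eta_T
        forecasts.append(b0 + b1 * x_next + eta)
    return forecasts


# Toy, hypothetical data: weekly campaign spend (thousands) and call volume
spend = [1.0, 2.0, 1.5, 3.0, 2.5, 4.0, 3.5, 2.0]
calls = [520.0, 610.0, 575.0, 720.0, 690.0, 830.0, 800.0, 640.0]
params = fit_regression_ar1_errors(calls, spend)
print(forecast_regression_ar1_errors(params, future_x=[3.0, 5.0]))
```

The decaying error term is what separates this from plain regression. A recent run of unexplained volume carries into the next few forecasts, then fades.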

Connection to WFM software

ARIMA is supported in most modern WFM software via "advanced forecasting" or "statistical forecasting" modules. Implementation quality varies dramatically:

- The math is standard and well-validated; if ARIMA results from a vendor disagree with results from R's `forecast` package or Python's `statsforecast`, the vendor's pre-processing, differencing, or order-selection logic is the suspect, not the method

- Most platforms expose the seasonal period but hide order selection behind automated routines. Verify the platform's automated selection on a sample series against an independent tool before trusting it across the production workflow

- For ARIMAX (with explanatory variables), most WFM platforms have weak support; this is where modern capacity planning tools (see Pillar 3 in WFM Ecosystem Architecture) earn their place

Maturity Model Position

In the WFM Labs Maturity Model™, ARIMA sits in the L2-to-L3 transition zone of the forecasting toolkit. It earns its complexity only when an organization has the data, the diagnostic discipline, and the practitioner skill to interpret residuals; without those, ARIMA produces overconfident forecasts on poorly specified models.

- Level 1 — Initial (Emerging Operations) — ARIMA is not in use; forecasting is judgmental or absent. Adopting it here would be premature.

- Level 2 — Foundational (Traditional WFM Excellence) — vendor-default ARIMA fit through "advanced forecasting" toggles, with little diagnostic discipline beyond MAPE; often delivers no real lift over seasonal naive.

- Level 3 — Progressive (Breaking the Monolith) — ARIMA is one of several methods evaluated head-to-head against naive baselines; residual diagnostics, MASE, and out-of-sample validation are routine; ARIMAX captures known business drivers.

- Level 4 — Advanced (The Ecosystem Emerges) — ARIMA participates in forecast combinations and reconciled hierarchies; outputs feed probabilistic capacity planning rather than point estimates.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — ARIMA is one component in continuously-tuned ensembles whose weights and specifications are managed by the analytical platform itself, with practitioner oversight rather than hand-fitting.

ARIMA is best understood as a transitional method — a Level 2 capability when used as a black box, a Level 3+ capability when used with the diagnostic rigor it requires.

References

- Hyndman, R. J., & Athanasopoulos, G. "ARIMA models." Forecasting: Principles and Practice (Python edition). otexts.com/fpppy.

- Box, G. E. P., Jenkins, G. M., Reinsel, G. C., & Ljung, G. M. Time Series Analysis: Forecasting and Control (5th ed.). Wiley, 2015. The canonical Box-Jenkins methodology.

See Also

- Forecasting Methods — overview of all forecasting families

- Naive and Seasonal Naive Forecasting — the baseline ARIMA must beat

- Exponential Smoothing — the alternative family; often simpler and equally accurate on clean seasonal series

- Demand calculation — calculator that consumes a forecast

- Power of One — interval-level sensitivity that depends on forecast accuracy

- WFM Ecosystem Architecture — Pillar 3 (Advanced Capacity Planning) where ARIMAX-class methods earn their complexity

- [1] Hyndman, R. J., & Athanasopoulos, G. (2021). Forecasting: Principles and Practice (3rd ed.). OTexts.