AI Scaffolding Framework

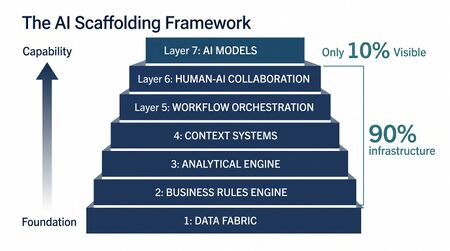

The AI Scaffolding Framework is a seven-layer model for the infrastructure a workforce management (WFM) organization builds to support autonomous decisioning. The central operating claim: roughly 90% of the value lies in the enabling infrastructure beneath the visible 10% of AI models. Capable models on weak scaffolding produce impressive demos and disappointing production results. Capable models on strong scaffolding produce reliable autonomous operations.

This framing aligns with a consistent finding across industries: organizations that invest disproportionately in model sophistication while neglecting data, rules, and workflow infrastructure see diminishing returns on AI spending. McKinsey's research on AI scaling found that only 11% of organizations capture significant financial value from AI, with the primary differentiator being investment in enabling infrastructure rather than model capability.[1] Gartner's widely cited forecast reinforces the point: it predicted that through 2022, 85% of AI projects would deliver erroneous outcomes due to bias in data, algorithms, or the teams responsible for managing them.[2] In scaffolding terms, those failures concentrate in the lower layers, not in model architecture.

This page is the practitioner reference: what each layer is, what teams build at each layer, how to assess maturity, and what failure modes to look for. The framework was introduced in the Contact Center Compass newsletter[3] and developed further in Adaptive: Building Workforce Systems for an (Unpredictable) Future.[4] The iceberg metaphor for AI infrastructure — visible capability resting on massive hidden foundations — was influenced by Daniel Miessler's framing in Unsupervised Learning.[5]

Thinking vs. Optimization

A precondition before applying the framework: AI in operational systems is not "thinking" in the human sense. Thinking — with intuition, context, and judgment — remains in the human domain. What appears autonomous in WFM is more accurately the evolution from deterministic rule execution to probabilistic optimization. The system selects from a structured decision space using probability-weighted criteria; it does not deliberate.

Practitioners get this distinction wrong all the time, especially under vendor pitches. The discipline: when evaluating a capability, ask whether the underlying behavior is rule execution, optimization across a defined decision space, or both. Anything described as "thinking" is being oversold. This distinction maps directly to the concepts explored in Artificial Intelligence Fundamentals: the difference between narrow AI (which optimizes within defined parameters) and the general intelligence implied by marketing language.

The practical consequence is architectural. Systems that optimize within a defined space can be bounded, tested, and audited. Systems marketed as "thinking" often resist those constraints, making them harder to govern and more dangerous in production. The application of AI to WFM succeeds precisely because the decision space—staffing levels, schedule assignments, real-time adjustments—is well-defined and constrainable.

Deterministic vs. Probabilistic

Two algorithmic categories underpin WFM:

- Deterministic — same inputs produce same outputs. Sorting, business-rule execution, compliance checks, schedule optimization solvers, Erlang-C calculation.

- Probabilistic — incorporates randomness or uncertainty. Monte Carlo simulation, forecasting distributions, risk modeling, stochastic capacity planning.

A subtle but operationally important point: an algorithm can be deterministic in execution but probabilistic in meaning. The Erlang-C formula executes deterministically — same inputs, same answer — while modeling a probabilistic system (queueing under random arrivals). This distinction is treated comprehensively in Deterministic vs Probabilistic Models, which provides the mathematical foundations underlying the Scaffolding Framework's analytical layers.
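
The Erlang-C point can be made concrete with a minimal sketch. The function names and the example inputs (300 contacts per hour, 300-second handle time, 20-second answer target) are illustrative assumptions, not values from this page. The code is fully deterministic, yet what it returns is a probability about a random queue.

```python
import math


def erlang_c_wait_probability(agents: int, offered_load: float) -> float:
    """Probability that an arriving contact has to wait (Erlang C).

    offered_load is traffic intensity in erlangs:
    arrival rate multiplied by average handle time.
    """
    if agents <= offered_load:
        return 1.0  # unstable queue: every contact waits
    # Erlang B blocking probability via the standard stable recursion.
    blocking = 1.0
    for n in range(1, agents + 1):
        blocking = offered_load * blocking / (n + offered_load * blocking)
    utilization = offered_load / agents
    # Convert Erlang B to Erlang C.
    return blocking / (1.0 - utilization * (1.0 - blocking))


def service_level(agents: int, arrivals_per_hour: float,
                  aht_seconds: float, target_seconds: float) -> float:
    """Fraction of contacts answered within target_seconds."""
    offered_load = arrivals_per_hour * aht_seconds / 3600.0
    if agents <= offered_load:
        return 0.0
    p_wait = erlang_c_wait_probability(agents, offered_load)
    decay = math.exp(-(agents - offered_load) * target_seconds / aht_seconds)
    return 1.0 - p_wait * decay


# Same inputs always give the same answer (deterministic execution),
# but the answer describes a random system (probabilistic meaning).
first_run = service_level(30, 300, 300, 20)
second_run = service_level(30, 300, 300, 20)
```

Calling `service_level` twice with identical arguments returns identical values, which is the deterministic half of the claim. The returned number is still a statement about likelihood, not a guarantee for any single interval.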

The practitioner skill: knowing when an answer is a single point you can act on directly versus a distribution that describes a range of possible futures. Treating a probabilistic answer as deterministic produces fragile plans; treating a deterministic answer as probabilistic produces analysis paralysis. Machine Learning Concepts provides the technical vocabulary for how modern systems navigate this boundary — training on historical distributions to produce actionable predictions.

In scaffolding terms, this distinction matters because Layers 1 through 4 must preserve signal fidelity regardless of whether the downstream consumer is deterministic or probabilistic. A data fabric that rounds timestamps to the nearest minute destroys the sub-minute variance information that probabilistic models need. A business rules engine that expresses constraints as hard boundaries (deterministic) when the operational reality is a soft preference gradient (probabilistic) will produce automation that is technically compliant and operationally wrong.
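
The timestamp example can be illustrated with a small sketch. The arrival times below are made-up values chosen for illustration. The sketch compares inter-arrival gaps before and after rounding to the nearest minute.

```python
from statistics import pvariance


def inter_arrival_gaps(timestamps):
    """Seconds between consecutive arrivals."""
    return [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]


def round_to_minute(timestamps):
    """Round each timestamp (in seconds) to the nearest whole minute."""
    return [60 * round(t / 60) for t in timestamps]


# Hypothetical arrival times in seconds since the start of an interval.
arrivals_s = [0, 12, 47, 61, 75, 118]

raw_gaps = inter_arrival_gaps(arrivals_s)                        # [12, 35, 14, 14, 43]
rounded_gaps = inter_arrival_gaps(round_to_minute(arrivals_s))   # [0, 60, 0, 0, 60]

raw_variance = pvariance(raw_gaps)
rounded_variance = pvariance(rounded_gaps)
```

After rounding, every gap is either 0 or 60 seconds, so the variance a downstream probabilistic model sees reflects the rounding grid and not the arrival process.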

The Seven Layers

The scaffolding stacks from foundation upward. Each layer below is described in practitioner terms: what teams build, how to tell where you are on maturity, and what failure modes to look for. The layers align with the architectural principles described in WFM Ecosystem Architecture and the maturity progression codified in the WFM Labs Maturity Model™.

Layer 1 — Data Fabric

What practitioners build: Real-time data pipelines that move agent state, queue depth, schedule adherence, customer demand signals, and operational outcomes into systems that downstream layers can query. Source-of-truth designations for each signal. Sub-second latency for in-day decisioning data; sub-minute for capacity-planning data. The architectural patterns for this layer are explored in detail in WFM Data Infrastructure and Integration Architecture.

Implementation patterns: Modern data fabric implementations typically follow one of three architectures. The simplest is a hub-and-spoke model where a central data platform (often a cloud data warehouse or lakehouse) ingests from ACD, WFM, CRM, and HRIS systems on varying cadences. More mature organizations implement event-driven architectures using message brokers (Apache Kafka, Amazon Kinesis, or cloud-native equivalents) that publish state changes as they occur, enabling multiple consumers to subscribe without point-to-point integration. The most advanced pattern is a true data mesh where each domain (scheduling, forecasting, real-time operations) owns and publishes its data products with defined contracts and SLAs.

The technology stack matters less than the design principles: every data element has exactly one source of truth, every consumer reads from the fabric rather than the source system, latency SLAs are defined and monitored per data product, and schema changes are versioned. Organizations that solve the technology problem without solving the governance problem end up with fast pipelines moving unreliable data.
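
As a rough sketch of those design principles, a data product contract can carry its source of truth, schema version, and latency SLA together. The class name, field names, and the one-second SLA below are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class DataProductContract:
    """Hypothetical contract for one data product on the fabric."""
    name: str
    source_of_truth: str      # exactly one owning system per data element
    schema_version: str       # schema changes are versioned
    max_staleness: timedelta  # latency SLA, monitored per data product


def is_fresh(contract: DataProductContract,
             event_time: datetime, now: datetime) -> bool:
    """True when the event is within the contract's latency SLA."""
    return now - event_time <= contract.max_staleness


# Illustrative contract: adherence state for in-day decisioning.
adherence_contract = DataProductContract(
    name="agent_adherence_state",
    source_of_truth="ACD",
    schema_version="1.2.0",
    max_staleness=timedelta(seconds=1),
)

now = datetime(2024, 1, 1, 14, 30, 0, tzinfo=timezone.utc)
recent_event = now - timedelta(milliseconds=400)
stale_event = now - timedelta(seconds=90)

recent_ok = is_fresh(adherence_contract, recent_event, now)   # within SLA
stale_ok = is_fresh(adherence_contract, stale_event, now)     # violates SLA
```

Consumers that check freshness against the contract, instead of trusting whatever arrives, can refuse to act on stale data instead of acting on it silently.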

Maturity tells:

- Level 2 (Foundational) — Data lives inside the WFM platform; reports are generated nightly; intraday answers come from the ACD console

- Level 3 (Progressive) — Real-time APIs expose adherence and queue state; batch loads still drive forecast updates

- Level 4 (Advanced) — Sub-second event streams; multiple consumers (BI, automation, real-time dashboards) reading the same fabric

Common failure modes: Data exists but only inside the WFM platform UI. Latency too high for decisioning. Multiple "sources of truth" disagreeing. No backfill protocol when an upstream system is offline. Schema drift between source systems and consumers causing silent data quality degradation. Timestamp inconsistency across systems (timezone mismatches, clock skew) producing phantom variance.

Cost of getting it wrong: Every layer above Layer 1 inherits its defects. Sculley et al.'s 2015 paper on hidden technical debt in machine learning systems argues that data dependencies cost more than code dependencies, because unstable, undocumented, or inconsistent data flows accumulate debt that is harder to detect than debt in code.[6] In WFM terms: a 5% data quality issue in adherence tracking cascades into forecast error, scheduling waste, and real-time decision failures that compound across every interval of every day.

Most organizational blocking issues live here. Without solving Layer 1, no higher layer matters.

Layer 2 — Business Rules Engine

What practitioners build: Codified, API-accessible representations of compliance constraints, labor law, fairness rules, scheduling policy, override authorities, escalation paths. Rules versioned and reviewable. Rule changes auditable. This layer transforms what the Intelligent Automation literature calls "tribal knowledge" into machine-queryable policy.

Implementation patterns: The evolution from tribal rules to a queryable engine follows a predictable path. Organizations begin by cataloguing rules—extracting constraints from policy manuals, collective bargaining agreements, regulatory requirements, and (critically) the undocumented practices that experienced analysts apply daily. This catalogue typically reveals 3-5x more rules than leadership expected, many of them contradictory.

The technical implementation ranges from simple rule tables in a database (adequate for deterministic if-then logic) to specialized business rules management systems (BRMS) like Drools or commercial equivalents that support complex rule chaining and conflict resolution. The most mature implementations expose rules via API so that any automated system—scheduling engine, real-time automation, RPA bot—can query applicable constraints before acting.

Critical design principle: rules must be separable from the systems that enforce them. When rules are embedded in scheduling software configuration, changing a labor law compliance requirement means reconfiguring the WFM platform. When rules live in an independent engine, the same change propagates to every consuming system simultaneously.
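
A minimal sketch of a separable, versioned rules engine follows. The class names, rule identifiers, and numeric limits (40 and 38 weekly hours, 11 rest hours) are hypothetical placeholders, not legal guidance or any vendor's API. The point is that consumers query the engine before acting and every evaluation leaves an audit record.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    version: int
    applies_to: str                    # action type the rule constrains
    description: str
    complies: Callable[[dict], bool]   # True when the proposal satisfies the rule


class RulesEngine:
    """Holds the latest version of each rule, independent of any consumer."""

    def __init__(self) -> None:
        self._rules: dict[str, Rule] = {}
        self.audit_log: list[dict] = []

    def publish(self, rule: Rule) -> None:
        # Only a higher version replaces the current rule.
        current = self._rules.get(rule.rule_id)
        if current is None or rule.version > current.version:
            self._rules[rule.rule_id] = rule

    def violations(self, action_type: str, proposal: dict) -> list[str]:
        """Return ids of rules the proposal violates; log every evaluation."""
        violated = []
        for rule in self._rules.values():
            if rule.applies_to != action_type:
                continue
            ok = rule.complies(proposal)
            self.audit_log.append(
                {"rule_id": rule.rule_id, "version": rule.version, "complied": ok}
            )
            if not ok:
                violated.append(rule.rule_id)
        return violated


engine = RulesEngine()
engine.publish(Rule("max_weekly_hours", 1, "assign_shift",
                    "Illustrative weekly cap", lambda p: p["weekly_hours"] <= 40))
engine.publish(Rule("min_rest_hours", 1, "assign_shift",
                    "Illustrative rest period", lambda p: p["rest_hours"] >= 11))
# A policy change is published once and applies to every consumer.
engine.publish(Rule("max_weekly_hours", 2, "assign_shift",
                    "Illustrative tightened cap", lambda p: p["weekly_hours"] <= 38))

blocked = engine.violations("assign_shift", {"weekly_hours": 39, "rest_hours": 12})
allowed = engine.violations("assign_shift", {"weekly_hours": 36, "rest_hours": 12})
```

Because the scheduling engine, real-time automation, and any bot all call `violations`, publishing version 2 of a rule changes their behavior at the same moment without reconfiguring each system.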

Maturity tells:

- Level 2 — Rules live in policy documents and the heads of senior analysts

- Level 3 — Subset of rules codified in WFM platform configuration; remainder still tribal

- Level 4 — Full rule set in a versioned engine; automation queries the engine before acting; audit trail per rule invocation

Common failure modes: Rules drift between documented policy and operational practice. New hires take 6+ months to absorb tribal rules. Automation that follows codified rules contradicts the human practice that follows tribal rules. Rule conflicts discovered only in production. No mechanism to test the impact of a proposed rule change before deployment.

Cost of getting it wrong: Labor law violations carry direct financial penalties, but the more common (and often larger) cost is operational: automation that produces technically valid but operationally rejected outputs. When analysts override 40% of automated schedule outputs because the rules engine missed a tribal constraint, the automation investment yields negative ROI—worse than manual, because the override process adds steps.

Second-most-common blocker. Rules trapped in policy documents cannot constrain automation.

Layer 3 — Analytical Engine

What practitioners build: Both deterministic and probabilistic mathematical capabilities. Erlang calculations, scheduling optimization, Monte Carlo simulation, stochastic forecasts, risk modeling. The math layer beneath the model layer. This is where the concepts described in Multi-Objective Optimization in Contact Center and Deterministic vs Probabilistic Models translate into operational capability.

Implementation patterns: The analytical engine typically begins with the WFM platform's built-in capabilities—Erlang-based staffing calculations and time-series forecasting—and extends outward as analytical needs exceed platform capabilities. Common extensions include Monte Carlo simulation for capacity planning (moving beyond single-point forecasts), multi-objective optimization for schedule generation (balancing cost, service level, and employee preferences simultaneously), and machine learning models for pattern detection in forecast residuals.

The architectural pattern that separates Level 3 from Level 4 organizations is composability. In a Level 3 organization, each analytical capability operates independently: the forecasting system produces a forecast, the staffing calculator converts it to requirements, the scheduler builds schedules. In a Level 4 organization, these capabilities compose via APIs—a probabilistic forecast feeds directly into a stochastic staffing model, which feeds a scheduler that optimizes across the uncertainty distribution rather than a single point estimate. This composability depends on Layer 1 (data fabric) carrying distributional information, not just point values.
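
Composability can be sketched by feeding a sampled forecast into a staffing calculation and reading the result as a distribution. Everything specific here is an assumption for illustration: the normal volume model, the 15% coefficient of variation, the 80/20 service target, and the function names.

```python
import math
import random


def erlang_c(agents: int, load: float) -> float:
    """Erlang C wait probability; caller ensures agents > load."""
    blocking = 1.0
    for n in range(1, agents + 1):
        blocking = load * blocking / (n + load * blocking)
    return blocking / (1.0 - (load / agents) * (1.0 - blocking))


def agents_required(volume_per_hour: float, aht_s: float,
                    target_sl: float = 0.80, answer_within_s: float = 20.0) -> int:
    """Smallest headcount meeting the service level target."""
    load = volume_per_hour * aht_s / 3600.0
    agents = math.floor(load) + 1   # first stable headcount
    while True:
        wait = erlang_c(agents, load)
        sl = 1.0 - wait * math.exp(-(agents - load) * answer_within_s / aht_s)
        if sl >= target_sl:
            return agents
        agents += 1


def staffing_distribution(mean_volume: float, cv: float, aht_s: float,
                          draws: int = 2000, seed: int = 7) -> dict:
    """Push sampled volumes through the staffing model; report percentiles."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    results = sorted(
        agents_required(max(1.0, rng.gauss(mean_volume, cv * mean_volume)), aht_s)
        for _ in range(draws)
    )

    def percentile(q: float) -> int:
        return results[min(draws - 1, int(q * draws))]

    return {"p50": percentile(0.50), "p80": percentile(0.80), "p95": percentile(0.95)}


point_estimate = agents_required(300, 300)           # single-point plan
distribution = staffing_distribution(300, 0.15, 300)  # plan as a range
```

A scheduler that receives `distribution` can decide how much of the upper tail to cover with fixed staff and how much with flexible responses. A scheduler that receives only `point_estimate` cannot make that trade-off.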

The Reporting Automation and Self Service Analytics capabilities that organizations build often serve as leading indicators of analytical engine maturity. When analysts can self-serve complex queries, the underlying analytical infrastructure is typically approaching the composability needed for automation.

Maturity tells:

- Level 2 — Excel-augmented Erlang and traditional time-series forecasting

- Level 3 — Specialized capacity-planning tools introduced (e.g., stochastic modeling software); analytical capability lives outside the WFM core

- Level 4 — Probabilistic and deterministic engines composed via APIs; outputs flow back into the data fabric

Common failure modes: Sophisticated analytical capability disconnected from operations (analysts produce great models that nobody uses). Single-point forecasts presented as if they were certainties. Probabilistic outputs without confidence intervals. Analytical capability concentrated in one team member whose departure creates a capability gap.

Cost of getting it wrong: The most expensive failure mode is invisible: single-point planning that systematically understaffs during high-variance periods and overstaffs during low-variance periods. Because the average looks acceptable, the variance cost hides in service level failures and idle time until someone does the variance decomposition that reveals it.
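
A small worked example shows how an acceptable average can hide the variance cost. The four interval values are invented for illustration.

```python
# Hypothetical agent requirements and a flat schedule across four intervals.
required = [12, 18, 9, 21]
scheduled = [15, 15, 15, 15]

# The totals match, so the plan looks balanced on average.
net_gap = sum(scheduled) - sum(required)

# Decompose by interval: shortfalls and surpluses do not cancel in practice.
understaffed = sum(max(0, r - s) for r, s in zip(required, scheduled))
overstaffed = sum(max(0, s - r) for r, s in zip(required, scheduled))

print(f"net gap: {net_gap}, understaffed: {understaffed}, overstaffed: {overstaffed}")
```

The net gap is 0, yet the decomposition shows 9 agent-intervals of shortfall (service level failures) and 9 agent-intervals of surplus (idle time).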

Layer 4 — Context Systems

What practitioners build: Organizational memory of what worked under what conditions. Pattern libraries — "when queue depth rose like this, the response that captured the most value was X." Decision histories. Outcome tracking that closes the loop from action to result. This layer provides the operational intelligence that distinguishes Agentic AI Workforce Planning from simple automation.

Implementation patterns: Context systems are the least standardized layer in the scaffolding, in part because the concept is relatively new in WFM operations. The simplest implementation is a structured decision log: for each significant operational decision (voluntary time off offered, overtime authorized, skill reassignment executed), record the triggering conditions, the action taken, and the measured outcome. Over time, this log becomes a queryable knowledge base.

More sophisticated implementations use Speech Analytics and interaction metadata to enrich the context—understanding not just what staffing decisions were made but what customer experience resulted. Conversational AI interactions provide another rich source of context data: what types of inquiries spiked, what resolution patterns emerged, what containment rates shifted.

The most advanced pattern is a recommendation engine built on this decision history: given current conditions (queue depth, adherence rate, time of day, day of week, recent trend), what response historically produced the best outcome? This is where machine learning earns its value—not in replacing human judgment but in surfacing relevant precedent faster than human memory can.
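
The decision log and precedent lookup can be sketched in a few lines. The record fields, condition labels, and outcome scores are hypothetical. A real implementation would match on richer conditions than a single label.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class DecisionRecord:
    condition: str        # triggering conditions, reduced to a label here
    action: str           # the response that was taken
    outcome_score: float  # measured result; higher is better (assumed metric)


def best_precedent(log, condition, min_samples=3):
    """Return the action with the best average outcome under this condition.

    Actions with fewer than min_samples records are ignored so that one
    lucky result does not become a recommendation.
    """
    scores_by_action = defaultdict(list)
    for record in log:
        if record.condition == condition:
            scores_by_action[record.action].append(record.outcome_score)
    candidates = {
        action: mean(scores)
        for action, scores in scores_by_action.items()
        if len(scores) >= min_samples
    }
    if not candidates:
        return None  # no usable precedent: defer to human judgment
    return max(candidates, key=candidates.get)


decision_log = [
    DecisionRecord("queue_spike_morning", "authorize_overtime", 0.62),
    DecisionRecord("queue_spike_morning", "authorize_overtime", 0.58),
    DecisionRecord("queue_spike_morning", "authorize_overtime", 0.60),
    DecisionRecord("queue_spike_morning", "skill_reassignment", 0.81),
    DecisionRecord("queue_spike_morning", "skill_reassignment", 0.77),
    DecisionRecord("queue_spike_morning", "skill_reassignment", 0.79),
    DecisionRecord("queue_spike_morning", "offer_vto", 0.95),  # single sample
]

recommended = best_precedent(decision_log, "queue_spike_morning")
unknown = best_precedent(decision_log, "system_outage")
```

Returning `None` when no precedent qualifies keeps the system from presenting a guess as institutional memory.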

Maturity tells:

- Level 2 — Lessons learned in heads of senior staff; institutional memory dies when staff leave

- Level 3 — Some patterns documented in playbooks; manually consulted by humans

- Level 4 — Pattern recognition built into automation; the system selects responses based on prior outcomes, not just current state

Common failure modes: Each decision made in isolation. No outcome data fed back to the decision system. Patterns recognized only by individual experts whose knowledge isn't transferred. Decision context captured but never queried—the system has memory but doesn't use it.

Cost of getting it wrong: Every crisis is treated as novel. Organizations without context systems repeat the same mistakes in predictable seasonal patterns—understaffing the same way every holiday season, overreacting to the same type of volume spike, authorizing overtime that historical data shows produces diminishing returns after hour 10.

Layer 5 — Workflow Orchestration

What practitioners build: Decision triggers, action sequences, handoffs, feedback loops. The connective tissue that turns "we know what should happen" into "the right thing happened, in the right order, with the right confirmations." This layer operationalizes the capabilities described in Intelligent Automation and connects them into coherent operational sequences.

Implementation patterns: Workflow orchestration in WFM typically progresses through three phases. The first is simple event-action automation: adherence drops below threshold, alert fires. The second introduces conditional logic and branching: adherence drops, check context (is this agent in training? is queue depth critical?), route to appropriate response. The third adds feedback loops: execute response, measure outcome, adjust response parameters for next occurrence.

The technology landscape for this layer has expanded significantly. Robotic Process Automation handles cross-system execution (logging into the WFM platform, adjusting schedules, sending notifications). Specialized platforms like Intradiem provide pre-built WFM workflow patterns. Integration platforms (iPaaS) connect cloud systems. The emerging pattern is workflow-as-code, where orchestration logic lives in version-controlled repositories rather than vendor-specific configuration, enabling testing, review, and rollback.

The critical design principle is graceful degradation. When a step in an automated workflow fails—API timeout, unexpected data state, downstream system offline—the orchestration must detect the failure, attempt recovery, and escalate to human intervention if recovery fails. Silent failure in workflow orchestration is the single most dangerous failure mode in operational automation because its effects compound: an undetected broken workflow produces cascading downstream failures that are difficult to diagnose.
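
The detect, recover, escalate sequence can be sketched as a wrapper around any workflow step. The function name, retry count, and the two example steps are assumptions for illustration. The escalation callback stands in for whatever paging or ticketing mechanism an organization uses.

```python
import time
from typing import Callable


def run_with_escalation(step: Callable[[], str],
                        escalate: Callable[[str], None],
                        retries: int = 2,
                        backoff_s: float = 0.0) -> dict:
    """Run a step, retry on failure, and escalate if recovery fails."""
    last_error = None
    for attempt in range(1, retries + 2):
        try:
            return {"status": "ok", "result": step(), "attempts": attempt}
        except Exception as exc:  # detect the failure instead of swallowing it
            last_error = exc
            if attempt <= retries:
                time.sleep(backoff_s * 2 ** (attempt - 1))  # exponential backoff
    # Recovery failed: hand off to a human with the reason attached.
    escalate(f"step failed after {retries + 1} attempts: {last_error}")
    return {"status": "escalated", "error": str(last_error), "attempts": retries + 1}


# Hypothetical steps: one recovers on retry, one never recovers.
call_count = {"n": 0}


def flaky_schedule_update() -> str:
    call_count["n"] += 1
    if call_count["n"] < 2:
        raise TimeoutError("WFM API timeout")
    return "schedule updated"


def always_offline() -> str:
    raise ConnectionError("downstream system offline")


escalations = []
recovered = run_with_escalation(flaky_schedule_update, escalations.append)
failed = run_with_escalation(always_offline, escalations.append)
```

The design choice that matters is that every path ends in either a confirmed result or an explicit escalation. No path ends in silence.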

Maturity tells:

- Level 2 — Workflows live in shift-handoff emails and supervisor checklists

- Level 3 — Some automation platforms (e.g., Intelligent Automation) execute defined workflows

- Level 4 — Orchestration spans multiple systems; failures detected and rerouted; humans engaged on exceptions only

Common failure modes: Successful automation in isolated workflows that don't compose. Handoffs between automated and human steps fail silently. No way to reroute when a step in the chain breaks. Workflow logic duplicated across systems with no single source of truth for the intended process.

Cost of getting it wrong: Partially automated workflows are often worse than fully manual ones. In manual operations, humans compensate for process gaps unconsciously. In partially automated operations, each gap between automated steps is a potential point of failure that no one is watching. The MLOps literature documents this pattern extensively: production ML systems fail most often at the integration boundaries between components, not within individual components.[7]

Layer 6 — Human-AI Collaboration Interface

What practitioners build: Surfaces that define how humans and AI share authority. Exception handling. Subject-matter-expert (SME) override mechanisms. Decision support displays that explain why the system proposed an action. Audit trails that let a reviewer reconstruct the system's reasoning. This layer embodies the collaboration models described in Human AI Blended Staffing Models.

Implementation patterns: The design of the human-AI interface is fundamentally an authority design problem. Three models appear in practice:

- Human-in-the-loop — automation proposes, human approves. Common in early implementations and for high-stakes decisions (mass overtime authorization, schedule rebids). Bottleneck risk: if humans must approve every action, the automation runs at human speed.

- Human-on-the-loop — automation acts, human monitors and can intervene. Appropriate for routine decisions with bounded consequences (individual break reassignments, small VTO offers). Requires strong alerting when automation deviates from expected patterns.

- Human-out-of-the-loop — automation acts autonomously within defined parameters. Reserved for well-understood, low-variance decisions where the cost of delayed human review exceeds the cost of occasional errors.

The progression from human-in-the-loop to human-on-the-loop to human-out-of-the-loop should be gradual and evidence-based, gated by demonstrated accuracy and bounded consequence. The Future WFM Operating Standard describes the end state this progression aims toward: a hybrid operating model where the division of labor between human and AI is explicit, dynamic, and auditable.
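
One way to make that gating explicit is a function that maps evidence and consequence to an authority model. The thresholds below (200 reviewed decisions, 90% and 98% acceptance, 1 and 10 agents affected) are hypothetical and would need to be set per organization.

```python
from enum import Enum


class AuthorityMode(Enum):
    HUMAN_IN_THE_LOOP = "automation proposes, human approves"
    HUMAN_ON_THE_LOOP = "automation acts, human monitors"
    HUMAN_OUT_OF_THE_LOOP = "automation acts within defined parameters"


def select_authority_mode(accepted: int, reviewed: int,
                          agents_affected: int,
                          min_reviewed: int = 200) -> AuthorityMode:
    """Pick an authority model from demonstrated accuracy and consequence.

    accepted / reviewed is the share of past proposals humans accepted.
    agents_affected bounds the consequence of a single action.
    """
    if reviewed < min_reviewed:
        # Not enough evidence yet: stay with human approval.
        return AuthorityMode.HUMAN_IN_THE_LOOP
    acceptance = accepted / reviewed
    if acceptance >= 0.98 and agents_affected <= 1:
        return AuthorityMode.HUMAN_OUT_OF_THE_LOOP
    if acceptance >= 0.90 and agents_affected <= 10:
        return AuthorityMode.HUMAN_ON_THE_LOOP
    return AuthorityMode.HUMAN_IN_THE_LOOP


break_move_mode = select_authority_mode(accepted=990, reviewed=1000, agents_affected=1)
mass_overtime_mode = select_authority_mode(accepted=990, reviewed=1000, agents_affected=200)
```

Under these assumed thresholds, an individual break move with a long acceptance record runs autonomously, while mass overtime stays with human approval regardless of accuracy because its consequence is not bounded.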

Explainability is the enabler. ICMI research on contact center technology adoption consistently shows that supervisor trust is the primary determinant of whether automation achieves its intended benefit—and trust correlates directly with the system's ability to explain its reasoning in terms supervisors find credible.[8] A system that says "schedule changed" gets overridden. A system that says "moved Agent Kim's break 15 minutes earlier because queue depth forecast shows a surge at 14:30 and Kim's skill profile matches the expected inquiry type" gets accepted.

Maturity tells:

- Level 2 — Humans make all decisions; automation surfaces alerts only

- Level 3 — Mixed: automation acts on routine decisions, humans handle exceptions; explanations weak

- Level 4 — Confident bidirectional collaboration; automation defers appropriately; humans trust the system's defaults

Common failure modes: Black-box automation that humans don't trust. Explanation quality so poor that overrides happen by default. Authority models that don't match operational reality (e.g., automation acts but humans are blamed). Alert fatigue from too many low-value notifications drowning out genuinely important exceptions.

Cost of getting it wrong: The most expensive failure mode is trust collapse. Once frontline supervisors lose confidence in automated decisions, override rates spike to 60-80%, and the organization is effectively running a manual operation with extra steps. Rebuilding trust after a collapse takes 3-6x longer than building it initially, because every anomaly is now interpreted as evidence of system failure rather than normal operational variance.

Layer 7 — AI Models

What practitioners build: LLMs, predictive models, classification engines. The visible 10% that gets all the marketing attention. This layer encompasses the capabilities described in Artificial Intelligence Fundamentals and Machine Learning Concepts, applied to WFM-specific use cases.

Implementation patterns: AI models in WFM operations fall into several categories, each with distinct infrastructure requirements:

- Predictive models — volume forecasting, AHT prediction, attrition risk scoring. These are the most mature application, with well-understood training pipelines, evaluation metrics, and retraining cadences. Most WFM platforms include some version of these; the scaffolding question is whether the organization can evaluate, tune, and override them.

- Classification models — interaction categorization, root cause classification, skill inference from interaction patterns. Often built on Speech Analytics data and used to enrich Layers 1 and 4.

- Optimization models — scheduling, routing, capacity allocation. These extend Layer 3's analytical engine with ML-driven parameter tuning and constraint relaxation. Multi-Objective Optimization in Contact Center describes the mathematical foundations.

- Generative models — LLM-based capabilities including Conversational AI for customer interactions, agent-assist tools, and automated reporting narrative generation. The newest category, with the least mature operational patterns.

The critical insight for Layer 7 is that model performance in production is bounded by the weakest lower layer. A state-of-the-art forecasting model trained on a data fabric with 5% missing data can underperform a simple exponential smoothing model trained on clean data. This is not only a theoretical claim: it is a recurring finding in the MLOps research literature and a common experience among organizations that have deployed ML in operations.[9]

Maturity tells:

- Level 2 — Pre-trained vendor models used as black boxes; no understanding of model behavior

- Level 3 — Domain-tuned models; performance monitored; retraining cadence

- Level 4 — Multiple models composed; performance gated by Layer 6 trust levels

Common failure modes: Investing in this layer first. Buying impressive models that have no scaffolding to support them. Treating model accuracy as the relevant metric when scaffolding gaps are the actual bottleneck. Model drift undetected because no monitoring infrastructure exists at Layer 1.

Cost of getting it wrong: The cost is not just wasted model investment—it is organizational disillusionment. When an expensive AI initiative fails because the scaffolding was not ready, the organization often concludes that "AI doesn't work for us" rather than "our infrastructure wasn't ready." This misattribution delays the genuine scaffolding work by years.

Interaction Between Layers

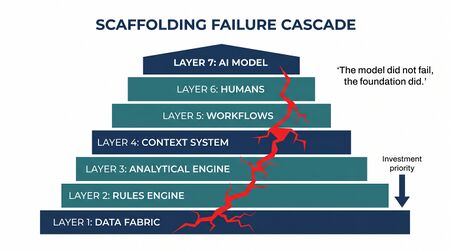

The seven-layer model is not merely a checklist; the layers interact in ways that create both compounding value and cascading failure. Understanding these interactions is essential for prioritizing investment and diagnosing production issues.

Upward Dependency

Each layer depends on every layer below it. This is the framework's primary structural claim, and it has direct investment implications:

- Layer 1 failure breaks everything. If the data fabric delivers stale adherence data, the business rules engine evaluates against wrong state, the analytical engine forecasts from corrupted history, context systems learn from misleading patterns, workflows trigger on false signals, humans receive bad recommendations, and models train on noise.

- Layer 2 failure breaks automation. If the rules engine is incomplete, Layers 5-7 will produce outputs that violate unstated constraints, triggering manual overrides that negate automation value.

- Layer 3 failure limits precision. If the analytical engine provides only point estimates, context systems cannot learn which responses work under which uncertainty conditions, and Layer 7 models optimize against phantom certainty.

The practical implication: organizations should diagnose failures by walking down the stack, not up. When a model underperforms (Layer 7), the first question is not "do we need a better model?" but "what is the data quality at Layer 1?"
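
That diagnostic order can be sketched as a routine that checks layers from the foundation upward and stops at the first failure. The check functions and their pass/fail values are hypothetical stand-ins for real audits.

```python
from typing import Callable, Optional

LAYER_NAMES = [
    "Data Fabric",
    "Business Rules Engine",
    "Analytical Engine",
    "Context Systems",
    "Workflow Orchestration",
    "Human-AI Collaboration Interface",
    "AI Models",
]


def lowest_failing_layer(
    checks: dict[int, Callable[[], bool]]
) -> Optional[tuple[int, str]]:
    """Check Layer 1 first and return the first layer whose check fails.

    Layers without a registered check are skipped. Returns None when
    every registered check passes.
    """
    for number in range(1, len(LAYER_NAMES) + 1):
        check = checks.get(number)
        if check is not None and not check():
            return number, LAYER_NAMES[number - 1]
    return None


# Hypothetical audit results for an underperforming forecasting model.
audit = {
    1: lambda: True,    # data freshness and completeness within SLA
    2: lambda: False,   # rule catalogue incomplete
    3: lambda: True,
    7: lambda: False,   # model accuracy below target (the visible symptom)
}

root_cause_candidate = lowest_failing_layer(audit)
```

Here the visible symptom sits at Layer 7, but the routine points at Layer 2 first, which is where remediation should start before anyone evaluates a replacement model.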

Cross-Layer Feedback Loops

Mature scaffolding creates feedback loops that improve the system over time:

- Layer 7 → Layer 4: Model predictions and their outcomes feed the context system, building the institutional memory of what worked.

- Layer 6 → Layer 2: Human override patterns reveal missing or incorrect business rules. A high override rate on a specific decision type signals a Layer 2 gap.

- Layer 4 → Layer 3: Context data on outcome variance informs analytical model selection—revealing when a deterministic approach is insufficient and probabilistic methods are needed.

- Layer 5 → Layer 1: Workflow execution metadata (latency, failure rates, data freshness at decision time) feeds back into data fabric monitoring and SLA management.

These feedback loops are what distinguish a scaffolding that learns from one that merely executes. Organizations at Level 4 on the WFM Labs Maturity Model™ have all four feedback loops operational; Level 3 organizations typically have zero or one.
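
The Layer 6 to Layer 2 loop lends itself to a simple sketch: compute override rates per decision type and flag the ones that suggest a missing rule. The event format, the 30% threshold, and the 20-event minimum are assumptions for illustration.

```python
from collections import defaultdict


def override_hotspots(events, threshold=0.30, min_events=20):
    """Flag decision types whose human override rate suggests a rules gap.

    events is an iterable of (decision_type, was_overridden) pairs.
    Decision types with fewer than min_events are ignored as too noisy.
    """
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for decision_type, was_overridden in events:
        totals[decision_type] += 1
        overrides[decision_type] += int(was_overridden)
    hotspots = {}
    for decision_type, total in totals.items():
        rate = overrides[decision_type] / total
        if total >= min_events and rate >= threshold:
            hotspots[decision_type] = rate
    return hotspots


# Hypothetical override history.
events = (
    [("break_move", False)] * 45 + [("break_move", True)] * 5
    + [("overtime_offer", True)] * 22 + [("overtime_offer", False)] * 18
    + [("vto_offer", True)] * 3
)

gaps = override_hotspots(events)
```

In this made-up history, overtime offers are overridden 55% of the time and get flagged for a rule review, break moves at 10% do not, and VTO offers have too few events to judge.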

Failure Cascade Example

Consider a concrete failure cascade originating at Layer 1. A telco contact center's ACD system experiences a 90-second data delivery delay due to a network configuration change. The delay is within the ACD vendor's SLA but exceeds the data fabric's freshness requirement for real-time decisioning:

- Layer 1 (Data Fabric): Adherence data arrives 90 seconds stale.

- Layer 2 (Business Rules): Rules engine evaluates adherence thresholds against data that is already outdated, triggering false out-of-adherence flags.

- Layer 5 (Workflow Orchestration): Automated workflows send adherence alerts to supervisors for agents who have already returned to correct state.

- Layer 6 (Human-AI Interface): Supervisors receive a burst of false-positive alerts, learn to ignore adherence alerts, and begin overriding the system.

- Layer 4 (Context Systems): The context system records that adherence alerts are routinely overridden, reducing its confidence in adherence-based triggers.

- Layer 7 (AI Models): Forecasting models that use adherence patterns as features see degraded signal, reducing forecast accuracy.

The root cause was a 90-second latency increase. The observed symptom—reduced forecast accuracy—appeared months later and would never be diagnosed by looking at Layer 7 in isolation. This cascade pattern is consistent with the technical debt dynamics documented in the ML systems literature.[6]

Practical Application: Walking the Framework

To illustrate how an organization applies the framework, consider a mid-size insurance contact center (800 agents, 4 sites) that has purchased an AI-powered scheduling optimization tool and is seeing disappointing results—automated schedules are overridden 55% of the time by planners.

Layer-by-Layer Diagnosis

Layer 1 assessment: Data audit reveals that adherence data from two of four sites arrives via nightly batch (24-hour latency) while the AI tool expects real-time feeds. Historical volume data has a 3% gap due to a migration two years ago that was never backfilled. Verdict: Layer 1 gaps present.

Layer 2 assessment: Rule catalog identifies 47 scheduling constraints. Only 28 are codified in the WFM platform. The remaining 19 exist as "things Sarah knows"—tribal rules accumulated over 12 years. Seven of the 19 directly conflict with automated scheduling outputs, explaining a significant portion of the 55% override rate. Verdict: Layer 2 is the primary blocker.

Layer 3 assessment: The organization uses single-point Erlang-based staffing calculations. Actual volume deviates from forecast by more than 10% in 40% of intervals; in those intervals the staffing requirements are wrong and the schedule is misaligned. No probabilistic capability exists. Verdict: Layer 3 gaps amplify the problem but are not the primary cause.

Layer 4 assessment: No formal decision logging. When planners override schedules, the reason is not recorded. Pattern recognition is entirely in planners' heads. Verdict: Layer 4 nonexistent—the organization cannot learn from its own experience.

Layer 5 assessment: The AI scheduling tool operates in isolation. Its outputs are emailed as PDFs to planners. No automated workflow connects the tool to the WFM platform. Planners manually rekey approved schedules. Verdict: Layer 5 disconnected—even when the AI produces good output, the delivery mechanism adds friction.

Layer 6 assessment: The AI tool provides no explanation for its scheduling decisions. Planners see a proposed schedule but cannot determine why Agent X was assigned to Shift Y. Verdict: Layer 6 absent—planners override because they cannot evaluate.

Layer 7 assessment: The AI scheduling model itself is technically sound: when tested on clean data with complete rules, it produces schedules that outperform manual planning by 8-12% on cost-efficiency metrics. Verdict: Layer 7 is not the constraint.

Prioritized Remediation

Based on this assessment, the organization prioritizes:

- Layer 2 first: Catalog and codify the 19 tribal rules. Estimated effort: 4-6 weeks with experienced planners. Expected impact: override rate drops from 55% to ~25%.

- Layer 1 second: Upgrade the two batch-feed sites to real-time adherence streaming. Backfill the historical data gap. Estimated effort: 8-12 weeks with IT involvement.

- Layer 5 third: Build API integration between the AI scheduling tool and the WFM platform, eliminating the PDF-and-rekey workflow. Estimated effort: 4-8 weeks.

- Layer 6 fourth: Implement explanation features—each scheduling decision annotated with the driving constraints and optimization trade-offs.

This sequence invests zero additional dollars in Layer 7 (the AI model) while dramatically improving the model's effective performance. The model was never the problem; the scaffolding was.

Self-Assessment

To assess where your organization stands, walk the seven layers in order and answer one question per layer:

- Data Fabric: Can your automation read agent state, queue depth, and schedule adherence with sub-second freshness, or does it depend on a stale batch?

- Business Rules: Can a non-engineer query the system to see which compliance constraints apply to a specific scenario, or are the rules in someone's head?

- Analytical Engine: Are your capacity plans expressed as ranges with confidence levels, or as single numbers?

- Context Systems: When variance occurred last Thursday at 2pm, can you point to what response was activated and what outcome resulted?

- Workflow Orchestration: If an automated workflow step fails, what happens — does the system reroute, alert, or silently fail?

- Human-AI Interface: When automation proposes an action, can the supervisor see why before approving?

- AI Models: Are your model performance metrics tied to operational outcomes, or to abstract accuracy scores?

The lowest-numbered layer at which your answer is "no" (or, for the either-or questions, the weaker alternative) is your bottleneck. Investments at higher layers won't pay off until that layer is solid. This assessment maps directly to the maturity levels in the WFM Labs Maturity Model™, where scaffolding completeness is a gating criterion for progression from Level 2 to Level 3 and from Level 3 to Level 4.
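The bottleneck rule can be written down directly. The sketch below assumes each of the seven questions has been reduced to a yes/no answer; the function name `find_bottleneck` and the choice to treat an unanswered layer as "no" are assumptions made for illustration.

```python
LAYERS = [
    "Data Fabric",
    "Business Rules",
    "Analytical Engine",
    "Context Systems",
    "Workflow Orchestration",
    "Human-AI Interface",
    "AI Models",
]


def find_bottleneck(answers):
    """Return (layer_number, layer_name) for the lowest layer answered False.

    Layers missing from `answers` count as False (an assumption of this sketch).
    Returns None when every layer is answered True.
    """
    for number, name in enumerate(LAYERS, start=1):
        if not answers.get(name, False):
            return number, name
    return None


# Hypothetical self-assessment: the first two layers pass, the third does not.
answers = {
    "Data Fabric": True,
    "Business Rules": True,
    "Analytical Engine": False,
    "Context Systems": False,
    "Workflow Orchestration": True,
    "Human-AI Interface": True,
    "AI Models": True,
}
print(find_bottleneck(answers))
```

Here the function reports Layer 3 even though Layer 4 also fails, because the rule always points at the lowest unmet layer.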

Historical Context

The framework reflects two decades of operational AI in WFM, most of which has nothing to do with LLMs:

- Rule-based automation — for over twenty years, Intradiem and similar platforms have executed deterministic if-then logic on real-time operational state. This is "AI" in the practical, value-producing sense, even if it predates the modern model-centric framing. The sophistication was never in the rules themselves but in the scaffolding that made rules actionable: real-time data feeds (Layer 1), codified business constraints (Layer 2), and workflow orchestration (Layer 5) that ensured actions executed reliably across thousands of agents.

- Multi-objective optimization — for over twenty years, Bay Bridge Decision Technologies and similar specialists demonstrated Pareto-efficient solutions across competing objectives in WFM contexts. This is probabilistic optimization applied to scheduling and capacity — a Layer 3 capability that delivered measurable value because it was built on solid Layer 1 and Layer 2 foundations. Organizations that attempted multi-objective optimization without clean data or complete constraints found that the optimizer produced mathematically optimal but operationally useless results.

- Emerging capability — the new frontier is real-time, continuous supply-and-demand allocation that applies multi-objective optimization to operational execution rather than just quarterly planning. This requires scaffolding maturity at all seven layers and represents the operating model described in Future WFM Operating Standard. The emergence of Agentic AI Workforce Planning and Human AI Blended Staffing Models reflects this trajectory — autonomous systems that can plan, execute, and adapt in real-time, but only when the scaffolding supports them.

The pattern: the value-producing AI in WFM was never the model layer. It was always Layers 1-5 plus a domain-specific decision engine. The current wave of LLM-based innovation does not change this pattern — it amplifies it. More powerful models are more sensitive to scaffolding quality, not less, because they can extract more signal from good data and more noise from bad data.

Maturity Model Position

In the WFM Labs Maturity Model™, scaffolding maturity gates progression:

- Level 2 — Foundational (Traditional WFM Excellence) organizations typically have data and rules trapped in monolithic platforms; Layer 1 and Layer 2 deficits prevent meaningful autonomous decisioning regardless of model investment.

- Level 3 — Progressive (Breaking the Monolith) organizations have built API-accessible data and codified business rules, unlocking Layers 3-5. The transition from Level 2 to Level 3 is fundamentally a scaffolding investment, not a model investment.

- Level 4 — Advanced (The Ecosystem Emerges) organizations have all seven layers in production. Autonomous operations are real; humans operate by exception. The WFM Ecosystem Architecture at this level integrates multiple specialized systems, each contributing to one or more scaffolding layers.

The framework explains why "buying AI" rarely delivers on the demo: the model is the smallest piece of what makes autonomous operations work. Organizations that recognize this invest accordingly — 70% of AI-related budget in Layers 1-5, 20% in Layer 6, and 10% in Layer 7 — inverting the allocation that marketing materials would suggest.[10]

References

- [1] The state of AI in 2022—and a half decade in review. McKinsey & Company. December 2022.

- [2] Gartner Identifies Top Strategic Technology Trends for 2023. Gartner. October 2022.

- [3] Lango, Ted. "The Scaffolding Problem: Why Your AI Can't Decision (Yet)." Contact Center Compass, LinkedIn, January 2026.

- [4] Lango, Ted. Adaptive: Building Workforce Systems for an (Unpredictable) Future.

- [5] Miessler, Daniel. Unsupervised Learning (iceberg metaphor for AI infrastructure).

- [6] "Hidden Technical Debt in Machine Learning Systems". Advances in Neural Information Processing Systems 28 (NIPS 2015). 2015.

- [7] "Challenges in Deploying Machine Learning: A Survey of Case Studies". ACM Computing Surveys. 55(6): 1–29. 2022. doi:10.1145/3533378.

- [8] The State of Contact Center Technology. ICMI (International Customer Management Institute). 2023.

- [9] "Software Engineering for Machine Learning: A Case Study". IEEE/ACM 41st International Conference on Software Engineering: Software Engineering in Practice (ICSE-SEIP). 2019.

- [10] Predicts 2024: AI Infrastructure and Platform Engineering. Gartner. November 2023.

See Also

- Artificial Intelligence Fundamentals — Core AI concepts underlying the scaffolding framework

- Machine Learning Concepts — Technical foundations for Layer 7 and Layer 3 capabilities

- Deterministic vs Probabilistic Models — Mathematical distinction central to Layers 3 and 7

- AI in Workforce Management — Application of AI across WFM functions, enabled by scaffolding

- Intelligent Automation — Rule-based and AI-augmented automation operating on the scaffolding

- WFM Ecosystem Architecture — Four-pillar architecture that maps to scaffolding layers

- Multi-Objective Optimization in Contact Center — Probabilistic optimization in Layer 3

- Future WFM Operating Standard — The strategic framework the scaffolding enables

- WFM Labs Maturity Model™ — Maturity progression gated by scaffolding completeness

- Variance Harvesting — Operational capability that depends on scaffolding maturity

- WFM Data Infrastructure and Integration Architecture — Detailed treatment of Layer 1 patterns

- Agentic AI Workforce Planning — Autonomous planning that requires full scaffolding maturity

- Human AI Blended Staffing Models — Layer 6 collaboration patterns in practice

- Conversational AI — Layer 7 generative AI application in contact centers

- Speech Analytics — Data source enriching Layers 1 and 4

- Robotic Process Automation — Layer 5 execution technology

- Reporting Automation and Self Service Analytics — Layer 3 analytical capability indicator