Conversational AI

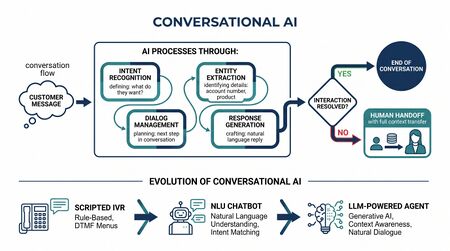

Conversational AI refers to artificial intelligence systems that can engage in natural language dialogue with humans — understanding intent, maintaining context across multiple turns, and generating appropriate responses. In contact center and workforce management contexts, conversational AI powers chatbots, virtual assistants, AI-driven IVR systems, and increasingly, autonomous AI agents that handle customer interactions end-to-end.

Conversational AI represents the technology layer that makes the agentic workforce possible: AI systems that can resolve customer issues without human involvement, fundamentally changing the demand equation that WFM plans against.

Core Technologies

Natural Language Understanding (NLU)

NLU interprets customer intent from unstructured text or speech:

- Intent classification: Determining what the customer wants (billing inquiry, technical issue, account change)

- Entity extraction: Identifying specific details (account numbers, dates, product names, amounts)

- Sentiment detection: Assessing customer emotion (frustrated, satisfied, urgent)

- Context management: Maintaining understanding across multi-turn conversations
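As a rough illustration of the first three tasks, the sketch below uses keyword matching and regular expressions. It is not how production NLU works (real systems use trained models), and the keyword lists, the 8-digit account number format, and the names `analyze` and `NluResult` are all assumptions made for the example. Multi-turn context management is left out.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword lists; a production NLU model would learn these from data.
INTENT_KEYWORDS = {
    "billing_inquiry": ("bill", "charge", "invoice", "refund"),
    "technical_issue": ("error", "not working", "crash", "outage"),
    "account_change": ("update", "change my", "cancel", "upgrade"),
}
NEGATIVE_WORDS = ("frustrated", "angry", "unacceptable", "ridiculous")


@dataclass
class NluResult:
    intent: str
    entities: dict = field(default_factory=dict)
    sentiment: str = "neutral"


def analyze(utterance: str) -> NluResult:
    text = utterance.lower()

    # Intent classification: pick the intent with the most keyword hits.
    scores = {
        intent: sum(keyword in text for keyword in keywords)
        for intent, keywords in INTENT_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    intent = best if scores[best] > 0 else "unknown"

    # Entity extraction: an assumed 8-digit account number and a dollar amount.
    entities = {}
    account = re.search(r"\b\d{8}\b", text)
    if account:
        entities["account_number"] = account.group()
    amount = re.search(r"\$\d+(?:\.\d{2})?", text)
    if amount:
        entities["amount"] = amount.group()

    # Sentiment detection: flag the utterance if any negative word appears.
    sentiment = "negative" if any(w in text for w in NEGATIVE_WORDS) else "neutral"
    return NluResult(intent, entities, sentiment)


result = analyze("I'm frustrated, my bill shows a $49.99 charge on account 12345678")
print(result.intent, result.entities, result.sentiment)
```

The structured result (intent, entities, sentiment) is what downstream routing and response generation would consume.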

Natural Language Generation (NLG)

NLG produces human-readable responses:

- Template-based: Pre-written responses selected by the AI (traditional chatbots)

- Generative: Large language models (LLMs) generating original responses in real time (modern conversational AI)

- Hybrid: LLM generation constrained by business rules, knowledge bases, and guardrails
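The hybrid approach can be sketched as a generated draft that is checked against guardrails, with a fallback to an approved template. This is only one possible arrangement. The blocked phrases, the template text, and the `generate` callable (a stand-in for an LLM call) are hypothetical.

```python
from typing import Callable

# Hypothetical approved templates keyed by intent.
TEMPLATES = {
    "billing_inquiry": "Your current balance is {balance}.",
}
# Hypothetical phrases the business does not allow the AI to use.
BLOCKED_PHRASES = ("guarantee", "legal advice", "we will refund")


def passes_guardrails(text: str) -> bool:
    """Return True if the draft contains none of the blocked phrases."""
    lowered = text.lower()
    return not any(phrase in lowered for phrase in BLOCKED_PHRASES)


def respond(intent: str, slots: dict, generate: Callable[[str, dict], str]) -> str:
    """Prefer the generated draft; fall back to a template, then to a handoff."""
    draft = generate(intent, slots)
    if passes_guardrails(draft):
        return draft
    template = TEMPLATES.get(intent)
    if template is not None:
        return template.format(**slots)
    return "Let me connect you with an agent who can help."


def fake_llm(intent: str, slots: dict) -> str:
    # Stand-in for a real model call; deliberately violates the guardrails.
    return "We will refund you and guarantee it never happens again."


reply = respond("billing_inquiry", {"balance": "$20.00"}, fake_llm)
print(reply)
```

Because the stand-in draft makes an unauthorized commitment, the function returns the template response instead.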

Speech Technologies

For voice-based conversational AI:

- Automatic Speech Recognition (ASR): Converting spoken language to text

- Text-to-Speech (TTS): Converting AI-generated text to natural-sounding speech

- Voice biometrics: Authenticating callers by voiceprint, replacing knowledge-based verification

Large Language Models (LLMs)

The current generation of conversational AI is powered by LLMs (GPT, Claude, Gemini, Llama) that can:

- Understand complex, ambiguous customer requests

- Generate contextually appropriate, natural-sounding responses

- Reason about multi-step processes and make decisions

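- Improve over time when they are fine-tuned, re-prompted, or re-grounded using interaction data (they do not learn automatically from each conversation)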

LLMs have transformed conversational AI from rigid, rule-based chatbots to flexible, capable systems that approach human-level dialogue in many customer service scenarios.

Applications in Contact Centers

Customer-Facing Applications

| Application | Channel | WFM Impact |
|---|---|---|
| AI-powered IVR | Voice | Increases IVR containment; reduces agent call volume |
| Chat/messaging bots | Digital | Handles Tier 1 inquiries; reduces chat agent staffing |
| Email auto-resolution | Email | Drafts or sends responses; reduces email queue backlog |
| Social media response | Social | Monitors and responds to routine social inquiries |
| Proactive outreach | Voice/Digital | AI-initiated communications (appointment reminders, status updates, payment reminders) |

Agent-Facing Applications

| Application | Function | WFM Impact |
|---|---|---|
| Real-time agent assist | Suggests responses, surfaces knowledge during live interactions | Reduces AHT by 10-20% |
| Automated summarization | Generates post-interaction summaries | Reduces after-call work by 30-60% |
| Next-best-action | Recommends actions based on customer context | Improves FCR |
| Auto-disposition | Classifies and codes interactions automatically | Reduces ACW |
| Real-time translation | Translates between languages during interaction | Enables cross-language staffing |

WFM Implications

Volume Deflection

Conversational AI's most direct WFM impact is a reduction in the volume of contacts that require human agents.

Organizations deploying mature conversational AI report 20-40% of contact volume handled without human involvement. This requires WFM to:

- Forecast human-handled volume separately from total volume

- Track AI resolution rate as a forecasting variable (it changes over time as AI improves)

- Plan for the complexity shift — remaining human contacts are harder, longer, and require more skill
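The first two points reduce to simple arithmetic: human-handled volume is total volume multiplied by one minus the AI resolution rate, with the rate forecast as its own time series. The sketch below assumes hypothetical monthly figures, and the function name `human_handled_volume` is illustrative.

```python
def human_handled_volume(total_volume: float, ai_resolution_rate: float) -> float:
    """Contacts left for human agents after AI resolution."""
    if not 0.0 <= ai_resolution_rate <= 1.0:
        raise ValueError("ai_resolution_rate must be between 0 and 1")
    return total_volume * (1.0 - ai_resolution_rate)


# Hypothetical three-month forecast: total contacts grow slightly while the
# AI resolution rate rises as the AI improves.
total_forecast = [100_000, 102_000, 104_000]
ai_rate_forecast = [0.20, 0.25, 0.30]

human_forecast = [
    round(human_handled_volume(volume, rate))
    for volume, rate in zip(total_forecast, ai_rate_forecast)
]
print(human_forecast)  # [80000, 76500, 72800]
```

In this example total volume rises each month while human-handled volume falls, which is why the two series need separate forecasts.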

The Complexity Shift

As AI handles routine contacts, the remaining human workload becomes:

- Higher AHT: Complex issues take longer than simple ones

- Higher skill requirements: Agents need deeper expertise and better judgment

- Higher emotional load: Frustrated customers (who couldn't resolve via AI) and emotionally complex situations

- Lower volume, higher value: Each human interaction matters more

This shift has direct WFM implications: an Erlang C staffing calculation that uses a historical average AHT will understaff when AHT is rising because of the complexity shift. WFM must therefore forecast AHT trends separately from volume trends.
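The understaffing effect can be sketched with a standard Erlang C calculation. The volumes, the two AHT values, and the 80% in 20 seconds service level target are hypothetical, and the function names are illustrative. The model makes the usual Erlang C assumptions (no abandonment, exponential handle times).

```python
import math


def erlang_c(agents: int, offered_load: float) -> float:
    """Probability that a contact has to wait (Erlang C), load in erlangs."""
    if agents <= offered_load:
        return 1.0  # unstable queue: every contact waits
    # Erlang B via the standard recursion, then convert to Erlang C.
    blocking = 1.0
    for n in range(1, agents + 1):
        blocking = offered_load * blocking / (n + offered_load * blocking)
    utilisation = offered_load / agents
    return blocking / (1.0 - utilisation * (1.0 - blocking))


def service_level(agents: int, contacts_per_hour: float,
                  aht_seconds: float, target_seconds: float) -> float:
    """Fraction of contacts answered within target_seconds."""
    load = contacts_per_hour * aht_seconds / 3600.0
    if agents <= load:
        return 0.0
    wait_probability = erlang_c(agents, load)
    return 1.0 - wait_probability * math.exp(
        -(agents - load) * target_seconds / aht_seconds
    )


def agents_required(contacts_per_hour: float, aht_seconds: float,
                    target_seconds: float = 20.0, target_sl: float = 0.80) -> int:
    """Smallest agent count that meets the service level target."""
    load = contacts_per_hour * aht_seconds / 3600.0
    agents = max(1, math.floor(load) + 1)
    while service_level(agents, contacts_per_hour, aht_seconds, target_seconds) < target_sl:
        agents += 1
    return agents


# Hypothetical interval: 300 human-handled contacts per hour.
historical_aht = 360.0  # seconds, average before the complexity shift
current_aht = 450.0     # seconds, after routine contacts moved to AI

planned = agents_required(300, historical_aht)
needed = agents_required(300, current_aht)
achieved = service_level(planned, 300, current_aht, 20.0)
print(f"planned with historical AHT: {planned} agents")
print(f"needed with current AHT:     {needed} agents")
print(f"service level if staffed on historical AHT: {achieved:.0%}")
```

Staffing on the historical AHT leaves fewer agents than the current offered load in erlangs, so the modelled service level collapses rather than degrading slightly.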

Planning for AI Agents

When conversational AI handles contacts autonomously, it becomes part of the workforce being planned:

- AI capacity is elastic: AI agents scale instantly, unlike human agents who require hiring and training

- AI has no shrinkage: No breaks, training, or absence — but has downtime for maintenance and updates

- AI quality is consistent: No variance between agents, but may fail on edge cases

- AI cost structure differs: Per-interaction cost rather than per-hour cost
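The cost structure difference can be made concrete by converting the human per-hour cost into a per-contact cost and blending it with a per-interaction AI price. All figures below are hypothetical, and the use of an occupancy factor alongside shrinkage is an assumption of this sketch, not something the frameworks above prescribe.

```python
def human_cost_per_contact(hourly_cost: float, aht_seconds: float,
                           shrinkage: float, occupancy: float) -> float:
    """Loaded cost of one human-handled contact.

    Only part of each paid hour is spent handling contacts: shrinkage removes
    breaks, training, and absence, and occupancy removes idle time.
    """
    productive_seconds = 3600.0 * (1.0 - shrinkage) * occupancy
    return hourly_cost * aht_seconds / productive_seconds


def blended_cost(total_contacts: float, ai_resolution_rate: float,
                 ai_cost_per_contact: float, human_cost: float) -> float:
    """Total cost when AI resolves a share of contacts and humans take the rest."""
    ai_contacts = total_contacts * ai_resolution_rate
    human_contacts = total_contacts - ai_contacts
    return ai_contacts * ai_cost_per_contact + human_contacts * human_cost


# Hypothetical inputs for illustration only.
human_cost = human_cost_per_contact(
    hourly_cost=30.0, aht_seconds=360.0, shrinkage=0.30, occupancy=0.80
)
total = blended_cost(
    total_contacts=10_000, ai_resolution_rate=0.30,
    ai_cost_per_contact=0.50, human_cost=human_cost,
)
print(f"human cost per contact: {human_cost:.2f}")
print(f"blended cost for 10,000 contacts: {total:.2f}")
```

A fuller model would also account for AI downtime and the higher AHT of the contacts that remain with humans.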

See Agentic AI Workforce Planning, Three-Pool Architecture, and Cognitive Portfolio Model (N*) for frameworks addressing AI agent capacity planning.

Quality and Risk Management

Accuracy and Hallucination

LLM-based conversational AI can generate plausible but incorrect responses ("hallucinations"). In customer service, this creates risks:

- Providing wrong policy information

- Making unauthorized commitments

- Mishandling regulated interactions (financial advice, healthcare, legal)

Mitigation strategies include retrieval-augmented generation (RAG), knowledge-base grounding, response validation layers, and confidence-based escalation to human agents.

Escalation Design

Effective conversational AI requires seamless escalation to human agents when:

- AI confidence falls below threshold

- Customer explicitly requests a human

- Interaction involves regulated or high-risk decisions

- Sentiment detection indicates escalating frustration

The escalation framework determines when and how AI-to-human handoff occurs. WFM must staff for escalation volume as a distinct workload stream.
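The four triggers above can be sketched as a small rule function. The thresholds, the sentiment scale (-1.0 to 1.0), the rule order, and the names `TurnState` and `escalation_reason` are assumptions for illustration. A real escalation framework would tune these and would likely add more signals.

```python
from dataclasses import dataclass, field
from typing import List, Optional

CONFIDENCE_THRESHOLD = 0.7    # hypothetical minimum AI confidence
FRUSTRATION_THRESHOLD = -0.5  # hypothetical sentiment score, -1.0 to 1.0


@dataclass
class TurnState:
    ai_confidence: float
    customer_requested_human: bool
    is_regulated_topic: bool
    sentiment_history: List[float] = field(default_factory=list)


def escalation_reason(state: TurnState) -> Optional[str]:
    """Return the reason to hand off to a human, or None to stay with the AI."""
    if state.customer_requested_human:
        return "customer_request"
    if state.is_regulated_topic:
        return "regulated_or_high_risk"
    if state.ai_confidence < CONFIDENCE_THRESHOLD:
        return "low_confidence"
    # Escalating frustration: sentiment fell since the previous turn and is
    # now at or below the frustration threshold.
    recent = state.sentiment_history[-2:]
    if len(recent) == 2 and recent[1] < recent[0] and recent[1] <= FRUSTRATION_THRESHOLD:
        return "escalating_frustration"
    return None


state = TurnState(
    ai_confidence=0.9,
    customer_requested_human=False,
    is_regulated_topic=False,
    sentiment_history=[-0.2, -0.6],
)
print(escalation_reason(state))  # escalating_frustration
```

Logging the returned reason for each handoff gives WFM the data to forecast escalation volume as its own workload stream.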

Maturity Model Position

Conversational AI maturity correlates with organizational maturity:

- Level 1 (Reactive): No conversational AI. All contacts handled by humans.

- Level 2 (Foundational): Rule-based chatbot handling FAQs. Low containment rate (<15%).

- Level 3 (Integrated): NLU-powered chatbot and speech-enabled IVR. 15-30% AI resolution. AI volume tracked in WFM forecasting.

- Level 4 (Optimized): LLM-powered conversational AI across channels. 30-50% AI resolution. Agent-assist tools deployed. WFM plans for complexity shift.

- Level 5 (Adaptive): Autonomous AI agents as part of the three-pool workforce. Dynamic allocation between AI and human agents. Continuous AI quality optimization.

See Also

- Intelligent Self-Service — Self-service powered by conversational AI

- Digital Messaging — Channels where virtual agents open and triage interactions

- AI-Powered Support — AI that augments human agents (vs. automating the contact)

- Agent Assist — Real-time augmentation counterpart to conversational automation

- Workforce Management — Overview of the WFM discipline

- Contact Center — Operational environment

- Interactive Voice Response — Voice-based conversational AI deployment

- Three-Pool Architecture — AI workforce architecture

- Agentic AI Workforce Planning — Planning for AI agents as workforce members

- Cognitive Portfolio Model (N*) — Staffing math for human-supervised AI

- Human AI Supervision and Escalation Frameworks — AI-to-human handoff design

- Intelligent Automation — Broader automation landscape

- Speech Analytics — AI analysis of voice interactions

- Average Handle Time — Metric impacted by AI agent-assist tools

- Service Level — Improved through AI volume deflection

- Natural Language Processing

- Large Language Models and Generative AI

- Neural Networks and Deep Learning