Speech Analytics

Speech analytics (also called interaction analytics or conversation intelligence) is a technology that applies natural language processing, machine learning, and acoustic analysis to recorded or real-time customer interactions to extract actionable insights. In contact center and workforce management contexts, speech analytics transforms unstructured conversation data into structured intelligence about customer intent, agent performance, process effectiveness, and compliance adherence.

Speech analytics is a core component of workforce optimization (WFO) suites, bridging the gap between quality management (which evaluates individual interactions) and operational analytics (which identifies systemic patterns across thousands of interactions). The global speech analytics market was valued at USD 4.31 billion in 2024 and is projected to reach USD 13.34 billion by 2032, growing at a CAGR of 15.2%.[1]

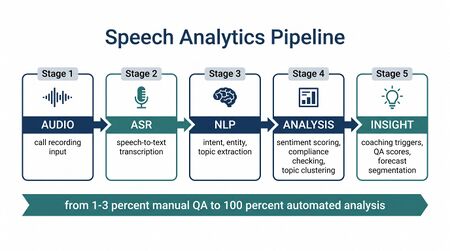

How Speech Analytics Works

Speech analytics systems process voice interactions through a multi-stage pipeline that converts raw audio into structured, queryable data. Understanding each stage is essential for evaluating vendor capabilities and setting realistic accuracy expectations.

Stage 1: Audio Ingestion and Preprocessing

Raw call recordings are ingested from telephony infrastructure (PBX, SIP trunks, or cloud CCaaS platforms). Preprocessing steps include:

- Channel separation: Splitting stereo recordings into distinct agent and customer audio tracks, enabling speaker-specific analysis

- Noise reduction: Filtering background noise, hold music, and IVR tones that degrade transcription accuracy

- Voice activity detection (VAD): Identifying segments containing speech versus silence, which feeds silence detection metrics

- Audio normalization: Standardizing volume levels and sample rates across recording sources
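
The preprocessing steps above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: it assumes audio arrives as interleaved stereo float samples with the agent on the left channel, and the helper names (`split_stereo`, `peak_normalize`, `energy_vad`), frame length, and energy threshold are all hypothetical choices made for the example. Noise reduction is omitted.

```python
import math

def split_stereo(interleaved):
    """Split interleaved stereo samples [L0, R0, L1, R1, ...] into two mono tracks."""
    return interleaved[0::2], interleaved[1::2]

def peak_normalize(samples, target_peak=0.9):
    """Scale samples so the loudest one has magnitude target_peak."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)
    return [s * target_peak / peak for s in samples]

def energy_vad(samples, frame_len=4, threshold=0.1):
    """Return one boolean per frame: True where RMS energy exceeds the threshold."""
    flags = []
    for start in range(0, len(samples), frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(s * s for s in frame) / len(frame))
        flags.append(rms > threshold)
    return flags

# Toy recording (assumed layout): agent on the left channel, customer on the right.
stereo = [0.0, 0.5, 0.0, -0.5, 0.0, 0.5, 0.0, -0.5,
          0.3, 0.0, -0.3, 0.0, 0.3, 0.0, -0.3, 0.0]
agent, customer = split_stereo(stereo)
agent_speech = energy_vad(peak_normalize(agent))
customer_speech = energy_vad(peak_normalize(customer))
print(agent_speech, customer_speech)
```

In this toy input the customer speaks in the first frame and the agent in the second, so the two per-channel speech masks are mirror images. Real systems typically use trained VAD models rather than a fixed energy threshold.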

Stage 2: Automatic Speech Recognition (ASR)

The ASR engine converts spoken audio into text transcripts. Modern ASR systems use deep neural networks — typically transformer-based architectures — that process audio spectrograms and output word sequences with timing metadata.

Key ASR performance considerations:

- Word error rate (WER): State-of-the-art systems achieve below 5% WER on clean benchmark audio, but real-world contact center conditions (background noise, accents, overlapping speech) typically produce higher error rates of 10% to 25%[2]

- Speaker diarization: Identifying who spoke when — critical for attributing statements to agent versus customer

- Domain adaptation: Models fine-tuned on contact center audio (with industry-specific vocabulary) outperform general-purpose ASR

- Real-time vs. batch: Real-time ASR enables live coaching but may sacrifice accuracy compared to post-call batch processing
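
Word error rate is conventionally computed as the word-level edit distance between a reference transcript and the ASR hypothesis, divided by the number of reference words. The sketch below assumes simple whitespace tokenization and lowercasing; real evaluations usually apply more careful text normalization first. The function name and sample sentences are illustrative.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)

reference = "this call may be recorded for quality purposes"
hypothesis = "this call may be reported for quality"
wer = word_error_rate(reference, hypothesis)
print(wer)  # one substitution plus one deletion over 8 reference words
```

Here a single substituted word ("reported" for "recorded") and one dropped word yield a WER of 0.25, and the substituted word happens to be the one a recording-disclosure check would look for.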

Stage 3: Natural Language Processing and Understanding

Once transcribed, text passes through multiple NLP layers:

- Intent classification: Determining the purpose of the interaction (billing inquiry, technical support, cancellation request)

- Entity extraction: Identifying specific data points — product names, account numbers, dates, dollar amounts

- Topic modeling: Clustering interactions by subject matter to build contact reason taxonomies

- Sentiment analysis: Scoring emotional polarity (positive/negative/neutral) at the utterance, turn, and interaction level

- Discourse analysis: Mapping conversation flow — opening, problem identification, resolution attempt, closing
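
As a rough illustration of intent classification and entity extraction, the sketch below uses keyword overlap and regular expressions. Production systems generally use trained models instead; the keyword lists, intent labels, and patterns here are hypothetical stand-ins chosen to match the examples in the list above.

```python
import re

# Hypothetical keyword lists; a real deployment would use a trained classifier.
INTENT_KEYWORDS = {
    "billing_inquiry": {"bill", "charge", "invoice", "refund"},
    "technical_support": {"error", "outage", "reset", "broken"},
    "cancellation_request": {"cancel", "terminate", "close"},
}

AMOUNT_RE = re.compile(r"\$\d+(?:\.\d{2})?")       # dollar amounts such as $49.99
DATE_RE = re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b")  # dates such as 03/14/2025

def classify_intent(text):
    """Pick the intent whose keyword list overlaps most with the utterance."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    scores = {intent: len(tokens & words) for intent, words in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

def extract_entities(text):
    """Pull dollar amounts and dates out of the raw text."""
    return {"amounts": AMOUNT_RE.findall(text), "dates": DATE_RE.findall(text)}

utterance = "I was hit with a charge of $49.99 on 03/14/2025 and I want a refund"
intent = classify_intent(utterance)
entities = extract_entities(utterance)
print(intent, entities)
```

Keyword rules like these are easy to audit but brittle, which is one reason later sections describe a shift toward learned models.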

Stage 4: Acoustic Analysis

Parallel to text-based NLP, acoustic analysis extracts signals directly from the audio waveform:

- Emotion detection: Identifying frustration, anger, satisfaction, or confusion from vocal characteristics (pitch, pace, volume)

- Silence detection: Measuring dead air that may indicate agent uncertainty, system delays, or hold time

- Overtalk detection: Identifying when agent and customer speak simultaneously, often correlating with frustration

- Speech rate analysis: Measuring words per minute for both parties — rapid customer speech may signal agitation

- Stress indicators: Detecting vocal tension patterns associated with escalation risk
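
Several of these acoustic measures reduce to arithmetic on timed speech segments once diarization has been done. The sketch below assumes a hypothetical `Segment` record (speaker, start, end, word count) and a 2-second minimum gap for dead air; both are illustrative choices. Emotion and stress detection require trained acoustic models and are not shown.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    speaker: str   # "agent" or "customer" (assumed labels)
    start: float   # seconds from call start
    end: float
    words: int

def total_overlap(agent_segs, customer_segs):
    """Seconds during which both parties are speaking at once."""
    overlap = 0.0
    for a in agent_segs:
        for c in customer_segs:
            overlap += max(0.0, min(a.end, c.end) - max(a.start, c.start))
    return overlap

def silence_gaps(segments, min_gap=2.0):
    """Intervals of at least min_gap seconds in which nobody speaks."""
    gaps = []
    covered_until = 0.0
    for seg in sorted(segments, key=lambda s: s.start):
        if seg.start - covered_until >= min_gap:
            gaps.append((covered_until, seg.start))
        covered_until = max(covered_until, seg.end)
    return gaps

def words_per_minute(segs):
    """Speech rate over talk time only, excluding silence."""
    talk_time = sum(s.end - s.start for s in segs)
    return 60.0 * sum(s.words for s in segs) / talk_time if talk_time else 0.0

segments = [
    Segment("agent", 0.0, 4.0, 12),
    Segment("customer", 3.0, 9.0, 24),
    Segment("agent", 14.0, 20.0, 15),
]
agent_segs = [s for s in segments if s.speaker == "agent"]
customer_segs = [s for s in segments if s.speaker == "customer"]
overtalk = total_overlap(agent_segs, customer_segs)
gaps = silence_gaps(segments)
customer_wpm = words_per_minute(customer_segs)
print(overtalk, gaps, customer_wpm)
```

The overlap calculation assumes that segments from the same speaker do not overlap each other, which holds for channel-separated recordings.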

Stage 5: Scoring and Insight Generation

The final stage applies business rules and AI models to generate actionable outputs:

- Automated quality scores: Evaluating each interaction against defined criteria (greeting compliance, empathy language, resolution effectiveness)

- Compliance flags: Identifying interactions missing required disclosures or containing prohibited language

- Coaching triggers: Surfacing specific agent behaviors that warrant supervisor attention

- Trend dashboards: Aggregating interaction-level data into operational intelligence views

- Generative summaries: Using large language models (LLMs) to produce after-call summaries, reducing after-call work (ACW)
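
A rule-based version of automated quality scoring and coaching triggers might look like the following. The scorecard criteria, weights, and phrases are hypothetical; real scorecards are defined by each organization, and modern platforms often replace phrase matching with model-based evaluation.

```python
# Hypothetical scorecard: criterion -> (weight, phrases that satisfy it).
CRITERIA = {
    "greeting": (0.2, ["thank you for calling"]),
    "empathy": (0.3, ["i understand", "i'm sorry"]),
    "resolution_check": (0.5, ["anything else", "does that resolve"]),
}

def score_interaction(agent_transcript, criteria=CRITERIA):
    """Return a 0-100 score and a per-criterion pass/fail map."""
    text = agent_transcript.lower()
    met = {
        name: any(phrase in text for phrase in phrases)
        for name, (_, phrases) in criteria.items()
    }
    score = 100 * sum(weight for name, (weight, _) in criteria.items() if met[name])
    return round(score, 1), met

transcript = ("Thank you for calling Acme. I understand the frustration. "
              "Your router has been reset.")
score, met = score_interaction(transcript)
coaching_triggers = [name for name, ok in met.items() if not ok]
print(score, coaching_triggers)
```

In this example the agent greets and shows empathy but never confirms resolution, so the interaction scores 50 and the missing criterion becomes a coaching trigger.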

Core Capabilities

Post-Interaction Analytics

Analysis of recorded interactions after they conclude:

- Topic and intent detection: Automatically categorizing interactions by subject (billing, technical issue, cancellation)

- Sentiment analysis: Measuring customer emotion throughout the interaction — detecting frustration, satisfaction, anger, or confusion

- Keyword and phrase spotting: Identifying specific words or phrases (competitor mentions, compliance language, escalation triggers)

- Silence and overtalk detection: Measuring dead air (agent uncertainty) and interruption patterns

- Agent behavior analysis: Evaluating script adherence, empathy language, greeting compliance

- Call driver analysis: Identifying why customers are calling — the "contact reason" taxonomy that feeds customer access strategy

Real-Time Analytics

Analysis during live interactions:

- Live sentiment monitoring: Alerting supervisors when customer emotion turns negative

- Compliance detection: Flagging interactions where required disclosures are missed (financial services, healthcare)

- Agent guidance: Providing real-time coaching prompts based on conversation context — next-best-action suggestions, knowledge base article surfacing, and objection-handling scripts

- Escalation prediction: Identifying interactions likely to require supervisor intervention based on acoustic and linguistic signals

Real-time analytics requires sub-second processing latency, which constrains model complexity. Most implementations use lighter models for real-time triggers while reserving deeper analysis for post-interaction processing.
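
One way to picture a "lighter model" for real-time triggers is a lexicon score averaged over a short sliding window of utterances. The sketch below is an assumption-laden toy: the word lists, window size, and alert threshold are hypothetical, and a real system would score streaming ASR output with a trained model.

```python
from collections import deque

# Hypothetical sentiment lexicons, kept tiny for illustration.
NEGATIVE = {"angry", "frustrated", "ridiculous", "unacceptable", "cancel"}
POSITIVE = {"thanks", "great", "perfect", "helpful"}

def utterance_score(text):
    """Positive word count minus negative word count."""
    words = text.lower().split()
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)

class SentimentMonitor:
    """Raise an alert when the rolling mean score drops to the threshold or lower."""

    def __init__(self, window=3, threshold=-1.0):
        self.scores = deque(maxlen=window)
        self.threshold = threshold

    def update(self, text):
        self.scores.append(utterance_score(text))
        rolling = sum(self.scores) / len(self.scores)
        return rolling <= self.threshold

monitor = SentimentMonitor()
stream = [
    "thanks for picking up",
    "this is ridiculous",
    "i am frustrated and angry",
    "this is unacceptable i want to cancel",
]
alerts = [monitor.update(utterance) for utterance in stream]
print(alerts)
```

Each update is constant-time, which is the property that matters under a sub-second latency budget; the trade-off is that a lexicon misses context that deeper post-call models can capture.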

Use Cases

Automated Quality Scoring

Traditional quality management samples 1-3% of interactions for manual evaluation — typically 2-4 calls per agent per month.[3] Speech analytics enables 100% interaction scoring, fundamentally changing quality from a sampling exercise to a census:

- Every interaction scored against consistent evaluation criteria, eliminating evaluator subjectivity and recency bias

- Coaching opportunities identified automatically based on behavioral patterns across all interactions, not isolated samples

- Compliance violations detected across the entire interaction volume, critical for regulated industries

- Performance management data enriched with statistically significant quality signals rather than anecdotal samples
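
The statistical difference between sampling and full coverage can be illustrated with the margin of error of an agent's mean quality score. This is a simplified sketch: the 15-point standard deviation and the call counts are assumed values, not figures from the sources cited here, and the normal approximation is rough for very small samples.

```python
import math

def margin_of_error(std_dev, n, z=1.96):
    """Approximate 95% margin of error for a mean estimated from n scored calls."""
    return z * std_dev / math.sqrt(n)

# Assumed values: scores vary by about 15 points from call to call.
sampled = margin_of_error(15.0, 4)     # 4 manually evaluated calls per month
census = margin_of_error(15.0, 400)    # 400 automatically scored calls per month
print(round(sampled, 2), round(census, 2))
```

Under these assumptions, four evaluated calls pin down an agent's average only to within roughly 15 points, while 400 scored calls narrow that to about 1.5 points.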

Compliance Monitoring

For regulated industries (financial services, healthcare, insurance, collections), speech analytics provides automated compliance verification:

- Disclosure monitoring: Verifying agents deliver required legal disclosures (Mini-Miranda in collections, HIPAA acknowledgments in healthcare)

- Prohibited language detection: Flagging language that creates regulatory risk — unauthorized promises, discriminatory statements, misleading claims

- Consent verification: Confirming call recording consent was obtained where legally required

- Audit trail generation: Creating searchable compliance records across 100% of interactions
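
Disclosure monitoring and prohibited-language detection can be approximated with pattern matching over timed agent turns. The patterns, the 30-second deadline, and the flag format below are hypothetical; actual disclosure wording and timing requirements depend on jurisdiction and should come from compliance counsel, not from this sketch.

```python
import re

# Hypothetical patterns for required disclosures and risky language.
REQUIRED_DISCLOSURES = {
    "recording_notice": re.compile(r"\bcall (?:may be|is being) recorded\b"),
    "mini_miranda": re.compile(r"\battempt to collect a debt\b"),
}
PROHIBITED_LANGUAGE = {
    "guarantee": re.compile(r"\bguaranteed?\b"),
}

def compliance_flags(agent_turns, deadline_s=30.0):
    """agent_turns is a list of (start_seconds, text) tuples for the agent channel."""
    flags = []
    for name, pattern in REQUIRED_DISCLOSURES.items():
        on_time = any(
            start <= deadline_s and pattern.search(text.lower())
            for start, text in agent_turns
        )
        if not on_time:
            flags.append(f"missing:{name}")
    for start, text in agent_turns:
        for name, pattern in PROHIBITED_LANGUAGE.items():
            if pattern.search(text.lower()):
                flags.append(f"prohibited:{name}@{start:.0f}s")
    return flags

turns = [
    (2.0, "Hi, this call may be recorded for quality."),
    (45.0, "This is an attempt to collect a debt."),
    (80.0, "I guarantee the fee will be waived."),
]
flags = compliance_flags(turns)
print(flags)
```

The Mini-Miranda statement is present in this call but arrives after the assumed deadline, so it is still flagged; timing-aware checks of this kind are difficult to perform consistently by manual sampling.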

The cost-avoidance ROI for compliance monitoring alone can justify the investment, as a single regulatory violation can result in penalties far exceeding the annual platform cost.[4]

Agent Coaching and Development

Speech analytics transforms coaching from subjective supervisor impression to data-driven development:

- Skill gap identification: Pinpointing specific behavioral deficiencies (e.g., poor empathy language, inconsistent closing techniques) across an agent's full interaction volume

- Targeted coaching queues: Automatically routing representative interaction examples to supervisors for coaching conversations

- Progress tracking: Measuring behavioral change over time as agents implement coaching feedback

- Peer benchmarking: Identifying top-performer behaviors that can be modeled and taught to lower-performing agents

Vendor-reported implementations cite a 23% reduction in AHT and a 97% improvement in compliance adherence from targeted, analytics-driven coaching programs.[5]

Topic Clustering and Contact Reason Analysis

Speech analytics offers a direct, conversation-based method for understanding why customers contact the organization:

- Automated taxonomy building: AI-driven topic clustering identifies contact reasons without requiring predefined categories, surfacing patterns humans might miss

- Emerging issue detection: New contact reasons appearing in the data signal product issues, policy confusion, or market changes — often days before formal reporting channels surface them

- Competitive intelligence: Customer mentions of competitor products, pricing, or features aggregated across thousands of interactions

- Deflection opportunity identification: Contacts that could be resolved through self-service, conversational AI, or proactive communication — directly reducing agent volume
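
Emerging issue detection can be sketched as a comparison of each topic's share of contacts in the current period against a baseline period. The sketch assumes interactions have already been labeled with a topic; the lift threshold, minimum count, and add-one smoothing are illustrative choices rather than an established standard.

```python
from collections import Counter

def emerging_topics(baseline_labels, current_labels, min_lift=2.0, min_count=3):
    """Return topics whose share of contacts grew by at least min_lift times."""
    base = Counter(baseline_labels)
    curr = Counter(current_labels)
    n_base, n_curr = len(baseline_labels), len(current_labels)
    emerging = {}
    for topic, count in curr.items():
        if count < min_count:
            continue  # ignore topics too rare to be meaningful
        curr_share = count / n_curr
        # Add-one smoothing so topics absent from the baseline do not divide by zero.
        base_share = (base[topic] + 1) / (n_base + 1)
        lift = curr_share / base_share
        if lift >= min_lift:
            emerging[topic] = round(lift, 2)
    return emerging

baseline = ["billing"] * 60 + ["tech"] * 39 + ["app_login"] * 1
current = ["billing"] * 50 + ["tech"] * 30 + ["app_login"] * 20
emerging = emerging_topics(baseline, current)
print(emerging)
```

Only the login topic is surfaced here, because its share jumps from about 1% to 20% of contacts while the other topics shrink slightly.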

WFM Integration

Forecast Segmentation by Contact Reason

Speech analytics enriches forecasting by enabling volume segmentation:

- Contact-type forecasting: Forecasting by contact reason (billing + tech support + cancellation) rather than total volume improves accuracy because different contact types follow different patterns

- Routing optimization: Understanding the real-time contact mix enables dynamic skill-group design and routing adjustments

- Seasonal pattern detection: Certain contact reasons exhibit different seasonality — product-related contacts spike post-launch while billing contacts follow billing cycle patterns
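
The value of segmenting by contact reason can be seen in a toy example where two contact types follow opposite weekday patterns. The volumes and the weekday-mean forecast below are hypothetical and deliberately simple; production forecasting would use proper time-series methods.

```python
from collections import defaultdict

def weekday_means(history):
    """history is a list of (weekday_index, volume); returns mean volume per weekday."""
    totals, counts = defaultdict(float), defaultdict(int)
    for weekday, volume in history:
        totals[weekday] += volume
        counts[weekday] += 1
    return {day: totals[day] / counts[day] for day in sorted(totals)}

# Hypothetical two-week history for two weekdays (0 = Monday, 1 = Tuesday).
history_by_reason = {
    "billing": [(0, 120), (1, 80), (0, 130), (1, 90)],
    "tech_support": [(0, 60), (1, 100), (0, 70), (1, 110)],
}
segment_forecasts = {
    reason: weekday_means(history) for reason, history in history_by_reason.items()
}
total_forecast = {
    day: sum(forecast[day] for forecast in segment_forecasts.values())
    for day in (0, 1)
}
print(segment_forecasts, total_forecast)
```

Total volume looks flat at 190 contacts on both days, yet billing falls and technical support rises from Monday to Tuesday. A total-volume forecast would hide a shift that matters for skill-based staffing.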

AHT Driver Analysis

Speech analytics reveals why AHT varies, not just that it varies:

- Process bottleneck identification: Long holds during specific interaction types may indicate system or knowledge gaps

- Complexity scoring: Interactions involving multiple topics or emotional escalation predictably drive higher AHT

- Agent skill correlation: Connecting AHT variation to specific agent behaviors (e.g., agents who skip needs assessment spend longer in resolution)

According to one vendor estimate, reducing AHT by just 30 seconds in a 50-agent center can eliminate the need for 3-4 new hires annually, representing $150,000-$200,000 in savings.[6]
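
The staffing figure above can be sanity-checked with a first-order calculation, assuming workload scales linearly with AHT. The baseline AHT values of 6 and 8 minutes are assumptions introduced for this illustration; the cited source does not state a baseline. The calculation also ignores Erlang effects, occupancy targets, and shrinkage.

```python
def fte_equivalent_saved(agents, baseline_aht_s, reduction_s):
    """Approximate FTEs freed if workload is proportional to AHT."""
    return agents * reduction_s / baseline_aht_s

# Assumed baselines: 8-minute and 6-minute AHT in a 50-agent center.
low = fte_equivalent_saved(50, 480, 30)
high = fte_equivalent_saved(50, 360, 30)
print(round(low, 2), round(high, 2))
```

Under these assumptions a 30-second reduction frees roughly 3 to 4 full-time equivalents, in line with the quoted range. The quoted savings imply a cost of about $50,000 per avoided hire.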

Quality-Staffing Correlation

Connecting quality metrics to workforce metrics reveals operational dynamics:

- Understaffing impact on quality: When occupancy exceeds thresholds, quality scores decline — speech analytics quantifies this relationship

- Schedule adherence and quality: Agents consistently working outside their scheduled shifts show measurable quality degradation

- New hire ramp curves: Analytics data reveals precisely how long new agents take to reach quality benchmarks, improving workforce planning assumptions
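
A first step in quantifying the occupancy-quality relationship is a simple correlation between interval occupancy and average automated quality score. The data points below are invented for illustration, and correlation alone does not establish that understaffing causes the decline.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical weekly averages: occupancy rate and mean quality score.
occupancy = [0.70, 0.75, 0.80, 0.85, 0.90, 0.95]
quality = [88, 87, 86, 82, 76, 70]
r = pearson_r(occupancy, quality)
print(round(r, 2))
```

A strongly negative coefficient, as in this made-up series, would prompt a closer look at where the decline accelerates, which is the threshold the list above refers to.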

Vendor Landscape

The speech analytics market includes established WFO suite vendors and AI-native specialists:

| Vendor | Category | Key Differentiator |
|---|---|---|
| NICE (CXone/Nexidia) | WFO Suite | Deep integration with NICE CXone CCaaS platform; legacy Nexidia phonetic indexing plus modern NLU; broad channel coverage across voice, chat, email, and social |
| Verint | WFO Suite | Strong compliance monitoring focus; popular in regulated industries (financial services, healthcare); decades of workforce optimization expertise |
| CallMiner (Eureka) | Pure-Play Analytics | Dedicated conversation intelligence platform; Coach and RealTime modules for agent development; extensive API/connector ecosystem |
| Observe.AI | AI-Native | Purpose-built for contact centers; Auto QA scoring 100% of interactions; real-time agent assist; modern ML-first architecture |
| Calabrio | WFO Suite | Quality management integration; workforce engagement focus; mid-market positioning |
| Cresta | AI-Native | Real-time agent guidance emphasis; generative AI coaching; strong in sales-oriented contact centers |
| Level AI | AI-Native | Generative AI-powered QA and coaching; custom AI model training on organization-specific evaluation criteria |

Organizations should evaluate vendors based on their primary use case: those prioritizing compliance should evaluate Verint, quality-focused operations benefit from Observe.AI or Calabrio, and organizations needing real-time guidance should consider Cresta or CallMiner RealTime.[7]

Implementation Considerations

Integration Architecture

Speech analytics requires integration with multiple systems:

- Telephony/recording: Access to call recordings (API-based retrieval from recording platforms or real-time audio streams via SIPREC/SPAN)

- CRM: Customer context enrichment — associating interactions with customer records, account history, and case data

- Quality management: Feeding automated scores into existing QA workflows and calibration processes

- WFM: Passing contact reason data and AHT drivers into forecasting and scheduling systems

- BI/reporting: Exporting analytics data to enterprise reporting platforms

Data Requirements

Successful implementation depends on data quality:

- Audio quality: Poor recording quality directly degrades ASR accuracy; stereo recordings with channel separation significantly outperform mono

- Metadata enrichment: Interaction metadata (queue, skill group, disposition code) enables meaningful segmentation of analytics results

- Historical volume: Initial model training and calibration typically requires 3-6 months of historical interaction data

- Labeling effort: Supervised models require human-labeled training examples for custom intent categories and quality criteria

Change Management

Agent and supervisor adoption is a critical success factor:

- Agent perception: Agents may view 100% monitoring as surveillance rather than development support — transparent communication about how analytics data will (and will not) be used is essential

- Supervisor enablement: Supervisors need training to interpret analytics outputs and translate them into effective coaching conversations

- Calibration alignment: Automated quality scores must be calibrated against human evaluator standards to maintain credibility

- Insight-to-action workflows: Without clear processes for acting on analytics findings, insights accumulate unused — organizations should define escalation paths and response protocols before deployment[8]
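
Calibration between automated and human scoring is often summarized with an agreement statistic such as Cohen's kappa, which corrects raw agreement for chance. The sketch below assumes pass/fail judgments on the same ten interactions; the labels are invented for illustration.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters on the same items."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    return (observed - expected) / (1 - expected)

# Hypothetical pass/fail judgments on the same ten interactions.
human = ["pass", "pass", "pass", "fail", "fail", "pass", "fail", "pass", "pass", "fail"]
auto = ["pass", "pass", "fail", "fail", "fail", "pass", "pass", "pass", "pass", "fail"]
kappa = cohens_kappa(human, auto)
print(round(kappa, 3))
```

Raw agreement here is 80%, but kappa is about 0.58 once chance agreement is removed. The function is undefined when both raters assign a single identical label to every item, since expected agreement is then 1.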

ROI Framework

Speech analytics ROI spans multiple value categories:

Direct Cost Reduction

- QA labor savings: Automating quality scoring across 100% of interactions can reduce manual evaluation effort by an estimated 50-80%, freeing QA analysts for coaching and calibration

- AHT reduction: Identifying and coaching specific AHT-driving behaviors yields measurable handle time improvements

- Compliance cost avoidance: Automated monitoring reduces regulatory risk exposure across all interactions

Revenue Impact

- Churn reduction: Detecting at-risk customers through sentiment and topic analysis enables proactive retention

- Upsell identification: Recognizing purchase intent signals in conversation data and routing to sales-enabled agents

- FCR improvement: Root cause analysis of repeat contacts drives resolution rate improvements

Strategic Value

- Voice of customer: Aggregating customer feedback across 100% of interactions provides richer customer intelligence than survey-based VoC programs

- Product feedback: Customer complaints and feature requests surfaced through analytics feed product development prioritization

- Competitive positioning: Systematic tracking of competitor mentions and customer switching triggers

Organizations implementing speech analytics report an average 10% improvement in customer satisfaction scores, and those that prioritize voice-focused analytics reportedly see 62% higher overall ROI from their contact center technology investments.[9]

Limitations and Challenges

Accuracy Constraints

- ASR error propagation: Transcription errors cascade through downstream NLP — a misrecognized word can flip sentiment polarity or miss a compliance phrase. Real-world WER in contact centers (10-25%) significantly exceeds benchmark figures[2]

- Accent and dialect variation: ASR models trained primarily on standard dialects underperform on regional accents, non-native speakers, and code-switching — creating potential equity concerns in quality scoring

- Acoustic environment: Background noise, poor-quality headsets, and telephony compression degrade accuracy, particularly in work-from-home environments
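
The effect of ASR error propagation on phrase-level checks can be approximated with a simple probability model. The sketch assumes each word is misrecognized independently with probability equal to the WER, which is a simplification: real errors cluster, and WER also counts insertions. The 8-word phrase length is an arbitrary example.

```python
def phrase_survival_probability(wer, phrase_len):
    """Chance that every word of a phrase is transcribed correctly,
    assuming independent per-word errors at rate wer."""
    return (1.0 - wer) ** phrase_len

# An 8-word disclosure at benchmark-level and contact-center-level WER.
survival = {wer: phrase_survival_probability(wer, 8) for wer in (0.05, 0.10, 0.25)}
for wer, p in survival.items():
    print(f"WER {wer:.0%}: exact phrase survives {p:.0%} of the time")
```

Under this model an exact-match rule for an 8-word phrase would fire on roughly two thirds of compliant calls at 5% WER, and on only about one in ten at 25% WER. This is one reason phrase detection usually relies on fuzzy or semantic matching rather than exact strings.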

Analytical Limitations

- Context blindness: Current systems struggle with sarcasm, cultural nuance, and context-dependent meaning — a customer saying "great, just great" sarcastically may score as positive sentiment

- Static rule fragility: Basic implementations relying on keyword lists and static rules produce high false-positive rates and require constant maintenance as language evolves

- Insight overload: Systems that generate dashboards and scores without built-in action workflows create "analysis paralysis" — insights accumulate without driving operational change

Privacy and Ethics

- Regulatory compliance: Implementations must comply with data privacy regulations (GDPR, CCPA) including consent requirements, data retention policies, and right-to-deletion requests

- Surveillance perception: 100% monitoring raises workforce ethics questions — organizations must balance oversight with agent trust and autonomy

- Demographic bias: ASR and sentiment models may perform unevenly across demographic groups, requiring regular bias audits to ensure equitable quality scoring
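
A basic bias audit compares transcription error rates across speaker groups on a human-verified sample. The sketch below assumes per-call error and word counts are already available; the group labels and numbers are placeholders, and how groups are defined and consented is a policy question outside the scope of the code.

```python
from collections import defaultdict

def wer_by_group(records):
    """records is an iterable of (group, word_errors, reference_words)."""
    errors, words = defaultdict(int), defaultdict(int)
    for group, word_errors, reference_words in records:
        errors[group] += word_errors
        words[group] += reference_words
    return {group: errors[group] / words[group] for group in words}

# Hypothetical audit sample of human-verified transcripts.
records = [
    ("group_a", 12, 200), ("group_a", 8, 200),
    ("group_b", 30, 200), ("group_b", 34, 200),
]
rates = wer_by_group(records)
disparity = max(rates.values()) / min(rates.values())
print(rates, round(disparity, 2))
```

A disparity ratio well above 1, as in this invented sample, suggests that automated quality scores for the higher-error group rest on less reliable transcripts and should be reviewed before being used in performance decisions.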

Technology Evolution

Speech analytics has undergone three distinct generations:

First Generation: Phonetic Indexing (2000s)

Early systems such as Nexidia (later acquired by NICE) used phonetic indexing: audio was converted into phoneme sequences, which were then searched for phonetic patterns matching target keywords. This approach avoided full transcription but was limited to keyword spotting and prone to high false-positive rates. Rule creation was entirely manual, requiring analysts to define every phrase of interest.

Second Generation: LVCSR and Statistical NLP (2010s)

Large vocabulary continuous speech recognition (LVCSR) enabled full transcription, unlocking text-based NLP techniques. Statistical models (n-grams, support vector machines, conditional random fields) powered topic classification and sentiment scoring. This generation dramatically expanded analytical capability but still required substantial feature engineering and domain-specific model tuning.

Third Generation: Deep Learning and LLMs (2020s)

Current systems leverage transformer-based ASR (e.g., Whisper and Conformer architectures), pre-trained language models for semantic understanding, and generative AI for summarization and insight extraction. This generation reduces manual configuration, can handle many languages with a single multilingual model rather than one model per language, and enables conversational understanding that can approach human-level comprehension in structured interaction contexts.

Connection to the AI Scaffolding Framework

Speech analytics represents a high-value AI scaffolding opportunity in contact centers:

- Augmentation layer: Real-time analytics augments agent capability without replacing human judgment — supervisors retain decision authority while AI surfaces relevant information

- Progressive automation: Organizations can begin with post-interaction analytics (lower risk, easier validation) before advancing to real-time guidance (higher complexity, greater impact)

- Human-in-the-loop: Automated quality scores should be calibrated against human evaluators, maintaining the human oversight that effective AI scaffolding requires

- Continuous learning: Analytics models improve as they process more interactions, but require ongoing human validation to prevent drift

Maturity Model Position

- Level 2 (Foundational): No speech analytics. Quality relies on manual sampling.

- Level 3 (Integrated): Post-interaction analytics deployed. Contact reason taxonomy built from analytics data. WFM uses contact-type segmentation in forecasting.

- Level 4 (Optimized): Real-time analytics with live coaching. 100% quality scoring. Automated compliance monitoring. Analytics-driven forecast segmentation.

- Level 5 (Adaptive): AI-powered continuous analysis across all channels. Predictive insights feed WFM planning. Self-optimizing quality and coaching systems.

See Also

- Interaction Analytics — The cross-channel discipline speech analytics is part of

- AI-Powered Support — Real-time and post-interaction AI capabilities built on interaction analytics

- Natural Language Processing — Core NLP technology powering text analysis

- Neural Networks and Deep Learning — Underlying model architectures for ASR and NLU

- Quality Management — Quality evaluation that analytics scales to 100%

- Conversational AI — Overlapping AI technology stack and deflection target

- Sentiment Analysis in Customer Service — Emotional analysis component of speech analytics

- AI in Workforce Management — Broader AI applications in WFM

- AI Scaffolding Framework — Human-AI collaboration model for analytics deployment

- Average Handle Time — Key metric improved through analytics-driven coaching

- First Contact Resolution — Resolution metric enhanced by root cause analysis

- Workforce Optimization — WFO suite that includes analytics

- Performance Management — Performance data enriched by analytics

- Customer Experience Management — CX insights from analytics

- Forecasting Methods — Forecast segmentation using contact reasons

- Coaching and Agent Development — Coaching driven by analytics findings

- Contact Center — Operational environment

References

- [1] Speech Analytics Market Size, Trends | Growth Overview [2032]. Fortune Business Insights. 2026-05-13.

- [2] Accuracy Benchmarking. Speechmatics. 2026-05-13.

- [3] Speech Analytics for Call Center Quality Monitoring [2025]. QEval Pro. 2026-05-13.

- [4] ROI of Speech Analytics for Contact Centers. Etech Global Services. 2026-05-13.

- [5] Contact Center Speech Analytics: How it Works and How to Use It. Calabrio. 2026-05-13.

- [6] 10 Pro Ways To Use Speech Analytics in Contact Centers. Sprinklr. 2026-05-13.

- [7] Best AI Speech Analytics Platforms Compared: NICE vs Verint vs CallMiner. Insight7. 2026-05-13.

- [8] 3 Challenges of Call Centers Implementing Speech Analytics. Picovoice. 2026-05-13.

- [9] AI Contact Center Success: Measuring Speech Analytics ROI. Xima Software. 2026-05-13.