Sentiment Analysis in Customer Service

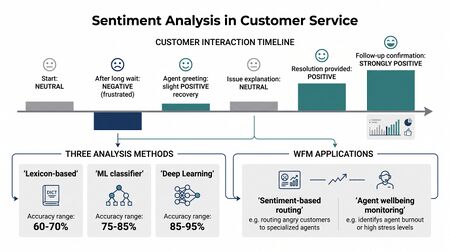

Sentiment analysis (also opinion mining or emotion detection) in customer service is the application of natural language processing and machine learning to automatically assess the emotional tone, attitude, and satisfaction level expressed by customers during interactions. In contact center operations, sentiment analysis operates across voice (speech analytics), text (chat, email, social media), and survey responses to provide real-time and historical insight into customer experience.

From a workforce management perspective, sentiment analysis feeds multiple planning functions: it enriches quality management with scalable emotion detection, provides early warning signals for real-time operations, informs customer experience strategy, and increasingly influences routing decisions that match customer emotional state to agent capability. The integration of sentiment signals across these functions is explored further in Sentiment Analysis and CX Signal Integration.

History and Evolution

Sentiment analysis traces its origins to computational linguistics research in the early 2000s. Pang, Lee, and Vaithyanathan (2002) published foundational work demonstrating that machine learning classifiers could determine the polarity of movie reviews with accuracy exceeding simple human-crafted rules.[1] This work established the supervised learning paradigm that dominated the field for over a decade.

The application to customer service lagged academic research by several years. Early commercial deployments in contact centers (2008–2012) focused on post-interaction survey analysis and social media monitoring. The shift to real-time analysis began around 2015 as cloud computing reduced the latency and cost of running inference models on live interaction streams.

Liu (2012) provided an influential taxonomy of sentiment analysis, distinguishing document-level, sentence-level, and aspect-level analysis. The framework remains relevant in contact center applications, where aspect-level analysis (e.g., sentiment about wait time vs. sentiment about agent helpfulness within the same interaction) provides more actionable insight than aggregate polarity.[2]

The shift to transformer models, popularized for language understanding by Devlin et al.'s BERT (2019), markedly improved sentiment analysis accuracy by enabling models to capture context, negation, and implicit sentiment in ways that prior architectures could not.[3] Contact center vendors rapidly adopted transformer-based models, and by 2023 most commercial speech analytics platforms incorporated some form of large language model for sentiment classification.

How Sentiment Analysis Works

Lexicon-Based Methods

The simplest approach matches words in customer text against curated sentiment dictionaries. Systems like VADER (Valence Aware Dictionary and sEntiment Reasoner) assign polarity scores to individual words and compute aggregate sentiment for each utterance.[4]

Strengths:

- No training data required — works immediately on any domain

- Transparent and interpretable — every score traces back to specific words

- Low computational cost — suitable for extremely high-volume, low-latency applications

Weaknesses:

- Cannot handle sarcasm, irony, or implied sentiment ("Oh great, another transfer")

- Misses domain-specific language — "escalate" is neutral in general English but signals negative experience in customer service

- Ignores word order and context — "not bad" should be mildly positive but word-level scoring treats "not" and "bad" separately

- Limited accuracy — typically 65–75% on contact center text, well below modern ML approaches

Despite limitations, lexicon methods remain useful as a baseline, as a feature input to hybrid systems, and in environments where model training data is unavailable.
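The word-order and sarcasm weaknesses listed above are easy to reproduce. The following is a minimal sketch of lexicon scoring, not VADER itself: the tiny `LEXICON`, the `NEGATORS` set, and the -0.5 negation factor are illustrative assumptions.

```python
import re

# Hypothetical mini-lexicon for illustration only; real dictionaries
# hold thousands of scored entries.
LEXICON = {"great": 2.0, "helpful": 1.5, "bad": -1.5, "terrible": -2.5}
NEGATORS = {"not", "never", "no"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def naive_score(text: str) -> float:
    """Sum word polarities with no regard for order or context."""
    return sum(LEXICON.get(word, 0.0) for word in tokenize(text))


def negation_aware_score(text: str) -> float:
    """Flip and dampen the polarity of a word that directly follows a negator."""
    words = tokenize(text)
    total = 0.0
    for i, word in enumerate(words):
        polarity = LEXICON.get(word, 0.0)
        if i > 0 and words[i - 1] in NEGATORS:
            polarity *= -0.5  # assumed damping factor
        total += polarity
    return total


print(naive_score("not bad"))                     # -1.5
print(negation_aware_score("not bad"))            # 0.75
print(naive_score("Oh great, another transfer"))  # 2.0
```

Even with the negation rule, the sarcastic transfer complaint still scores as positive, which is the gap that context-aware models are meant to close.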

Machine Learning Classifiers

Supervised machine learning approaches train models on labeled datasets of customer interactions annotated with sentiment categories. Common algorithms include:

- Naive Bayes: Fast, works well with small training sets, strong baseline

- Support Vector Machines (SVMs): Higher accuracy on well-structured feature spaces, effective with TF-IDF features

- Random Forests: Ensemble method effective for multi-class sentiment (beyond binary positive/negative)

- Logistic regression: Interpretable, regularizable, performs competitively with more complex methods

These classifiers typically achieve 78–85% accuracy on customer service text when trained on domain-specific data. Performance degrades significantly when models trained on one domain (e.g., retail) are applied to another (e.g., healthcare), making transfer learning and domain adaptation critical concerns.
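To make the supervised approach concrete, here is a minimal sketch of a multinomial Naive Bayes classifier with Laplace smoothing, written with the standard library only. The class name `NaiveBayesSentiment` and the six training utterances are invented for illustration; a production system would train on thousands of labeled interactions and would normally use an established library.

```python
import math
from collections import Counter, defaultdict


class NaiveBayesSentiment:
    """Multinomial Naive Bayes over whitespace tokens (illustrative only)."""

    def __init__(self, alpha: float = 1.0):
        self.alpha = alpha                       # Laplace smoothing constant
        self.word_counts = defaultdict(Counter)  # label -> token counts
        self.doc_counts = Counter()              # label -> number of documents
        self.vocab = set()

    def fit(self, texts, labels):
        for text, label in zip(texts, labels):
            tokens = text.lower().split()
            self.doc_counts[label] += 1
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict(self, text):
        tokens = [t for t in text.lower().split() if t in self.vocab]
        total_docs = sum(self.doc_counts.values())
        best_label, best_logp = None, -math.inf
        for label, n_docs in self.doc_counts.items():
            logp = math.log(n_docs / total_docs)  # class prior
            denom = sum(self.word_counts[label].values()) + self.alpha * len(self.vocab)
            for tok in tokens:
                logp += math.log((self.word_counts[label][tok] + self.alpha) / denom)
            if logp > best_logp:
                best_label, best_logp = label, logp
        return best_label


# Made-up training data, far too small for real use.
texts = [
    "thank you that was quick and helpful",
    "great service problem solved",
    "the agent was friendly and helpful",
    "i have been waiting forever this is terrible",
    "still not fixed i am frustrated",
    "terrible service i want to cancel",
]
labels = ["positive"] * 3 + ["negative"] * 3

model = NaiveBayesSentiment().fit(texts, labels)
print(model.predict("the agent was helpful"))
print(model.predict("this is terrible i am frustrated"))
```

Because the model only knows the vocabulary it was trained on, words from another industry are silently ignored at prediction time, which is one simple way to see why cross-domain performance degrades.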

Deep Learning and Transformer Models

Deep learning architectures represent the current state of the art for sentiment analysis in contact centers:

- Recurrent Neural Networks (RNNs/LSTMs): Process text sequentially, capturing word-order dependencies. Effective for sentiment trajectory analysis — tracking how sentiment evolves across a conversation turn by turn.

- Convolutional Neural Networks (CNNs): Extract local patterns (n-gram features) efficiently. Often used for short-text sentiment (chat messages, social media posts).

- Transformer models (BERT, RoBERTa, DeBERTa): Bidirectional attention mechanisms capture long-range dependencies and contextual meaning. Fine-tuned transformers typically achieve 88–93% accuracy on customer service sentiment classification.

- Large Language Models (GPT-4, Claude, Gemini): Zero-shot and few-shot sentiment analysis without domain-specific training data. Capable of nuanced emotion detection, sarcasm identification, and aspect-level sentiment in a single pass. Trade-off is higher latency and cost per inference.

Zhang, Zhao, and LeCun (2015) demonstrated that character-level deep learning models could classify sentiment without any feature engineering, a result that accelerated commercial adoption of neural approaches.[5]

Voice-Based Sentiment

Analysis of spoken interactions through speech analytics adds paralinguistic dimensions unavailable in text:

- Acoustic features: Pitch (fundamental frequency), volume (amplitude), speaking rate, silence patterns, and voice quality (jitter, shimmer) — these signals are independent of word content and can detect emotional arousal even when words are neutral

- Prosodic analysis: Stress patterns, intonation contours, and rhythm changes that signal frustration, confusion, or satisfaction

- Linguistic analysis: Automatic speech recognition (ASR) produces transcripts that undergo text-based sentiment analysis

- Multimodal fusion: Combined acoustic + linguistic models outperform either modality alone. Late fusion (separate models with combined scores) and early fusion (joint feature representation) are both common architectures

Voice sentiment accuracy is generally 5–10 percentage points lower than text sentiment due to ASR errors, background noise, accent variation, and the inherent ambiguity of paralinguistic cues.
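Late fusion, as described above, can be sketched as a weighted average of the two modality scores. The function below is an assumption-laden illustration: the name `late_fusion`, the [-1, 1] score scale, and the idea of scaling the text weight by ASR confidence (capped at a hypothetical 0.7) are not taken from any particular vendor.

```python
def late_fusion(
    text_score: float,
    acoustic_score: float,
    asr_confidence: float,
    max_text_weight: float = 0.7,  # assumed cap on how much the transcript counts
) -> float:
    """Combine text and acoustic sentiment scores (each in [-1, 1]).

    The transcript-based score is trusted less when ASR confidence is low,
    so the acoustic score takes over as transcription quality drops.
    """
    if not 0.0 <= asr_confidence <= 1.0:
        raise ValueError("asr_confidence must be between 0 and 1")
    text_weight = max_text_weight * asr_confidence
    return text_weight * text_score + (1.0 - text_weight) * acoustic_score


# Neutral-sounding words, agitated voice: the fused score leans negative,
# and more so when the transcript is unreliable.
print(late_fusion(text_score=0.2, acoustic_score=-0.8, asr_confidence=1.0))
print(late_fusion(text_score=0.2, acoustic_score=-0.8, asr_confidence=0.5))
```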

Sentiment Dimensions

Modern sentiment analysis goes beyond simple positive/negative classification:

| Dimension | What It Measures | WFM Application |
|---|---|---|
| Polarity | Positive, negative, neutral | Basic quality signal |
| Emotion | Anger, frustration, confusion, satisfaction, gratitude | Agent matching; escalation triggers |
| Intensity | Mild dissatisfaction vs. extreme anger | Priority routing; supervisor alerts |
| Trajectory | How sentiment changes during the interaction | Agent effectiveness assessment |
| Effort | Perceived difficulty of resolution | Customer effort score proxy |
| Aspect | Sentiment toward specific elements (wait time, agent knowledge, resolution) | Targeted process improvement |
| Intent | Churn risk, purchase intent, advocacy likelihood | Proactive retention; upsell routing |
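Of the dimensions in the table, trajectory is the one most directly computable from per-turn polarity scores. One simple way to summarize it, shown here as a sketch rather than a standard definition, is the least-squares slope of sentiment over turn index. The function name `trajectory_slope` and the [-1, 1] score scale are assumptions.

```python
def trajectory_slope(turn_scores: list[float]) -> float:
    """Least-squares slope of sentiment score against turn index.

    A positive slope means sentiment improved over the conversation;
    a negative slope means it deteriorated.
    """
    n = len(turn_scores)
    if n < 2:
        return 0.0  # a single turn has no trajectory
    mean_x = (n - 1) / 2
    mean_y = sum(turn_scores) / n
    covariance = sum((i - mean_x) * (s - mean_y) for i, s in enumerate(turn_scores))
    variance = sum((i - mean_x) ** 2 for i in range(n))
    return covariance / variance


# A customer who starts angry and ends mildly positive.
print(trajectory_slope([-0.8, -0.4, 0.0, 0.4]))
```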

Real-Time vs. Post-Interaction Analysis

Contact centers deploy sentiment analysis in two fundamentally different modes, each with distinct technical requirements and operational applications.

Real-Time Analysis

Real-time sentiment operates during the live interaction, typically with latency requirements under 2 seconds:

- Intra-call routing: Sentiment detected during IVR navigation or the first 30 seconds of agent interaction can trigger re-routing to specialized agents

- Agent desktop alerts: Supervisors and agents receive live sentiment indicators, enabling mid-conversation course correction

- Dynamic scripting: Agent-assist tools adjust recommended responses based on detected customer emotion

- Escalation triggers: Sustained negative sentiment or sharp sentiment drops automatically flag interactions for supervisor intervention

Real-time analysis demands lightweight models (distilled transformers, streaming acoustic models) and edge or near-edge deployment to meet latency requirements. Accuracy is typically 3–5 percentage points lower than post-interaction analysis because the model sees only partial context.
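The escalation triggers described above (sustained negative sentiment, or a sharp drop between turns) can be sketched as a small streaming monitor. The class name `EscalationMonitor`, the window size, and both thresholds are hypothetical values chosen for illustration; real deployments tune them per queue.

```python
from collections import deque
from typing import Optional


class EscalationMonitor:
    """Flags a live interaction from a stream of per-turn sentiment scores."""

    def __init__(self, window: int = 3, sustained_threshold: float = -0.5,
                 drop_threshold: float = 0.6):
        self.scores = deque(maxlen=window)            # most recent turn scores
        self.sustained_threshold = sustained_threshold
        self.drop_threshold = drop_threshold

    def update(self, score: float) -> Optional[str]:
        """Record a new score and return a trigger name, or None."""
        previous = self.scores[-1] if self.scores else None
        self.scores.append(score)
        # Sharp drop: sentiment fell by at least drop_threshold in one turn.
        if previous is not None and previous - score >= self.drop_threshold:
            return "sharp_drop"
        # Sustained negative: every score in a full window is at or below threshold.
        if (len(self.scores) == self.scores.maxlen
                and all(s <= self.sustained_threshold for s in self.scores)):
            return "sustained_negative"
        return None


monitor = EscalationMonitor()
for turn_score in [0.2, -0.6, -0.6, -0.7]:
    print(turn_score, monitor.update(turn_score))
```

Keeping only a short window of scores means the check runs in constant time per turn, which fits the latency budget discussed above.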

Post-Interaction Analysis

Post-interaction analysis processes complete interactions, usually in batch:

- 100% interaction scoring: Every call, chat, and email receives sentiment scores — replacing the 1–3% sampling rate of manual quality evaluation

- Trend analysis: Sentiment aggregated across days, weeks, and months reveals systemic patterns

- Root cause identification: Aspect-level sentiment pinpoints specific drivers of negative experience

- Compliance review: Interactions flagged by sentiment models undergo targeted compliance and quality review

Post-interaction analysis can use larger, more accurate models since latency is not a constraint. Full conversation context improves accuracy, particularly for trajectory and aspect-level analysis.
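Root cause identification from aspect-level scores can be as simple as grouping and ranking. The sketch below assumes an upstream model has already produced `(aspect, score)` pairs; the function name `rank_aspects` and the sample aspect labels are illustrative.

```python
from collections import defaultdict
from statistics import mean


def rank_aspects(records: list[tuple[str, float]]) -> list[tuple[str, float]]:
    """Return (aspect, mean sentiment) pairs sorted from most negative to most positive."""
    by_aspect = defaultdict(list)
    for aspect, score in records:
        by_aspect[aspect].append(score)
    averages = [(aspect, mean(scores)) for aspect, scores in by_aspect.items()]
    return sorted(averages, key=lambda pair: pair[1])


# Made-up aspect-level scores from a batch of scored interactions.
records = [
    ("wait_time", -0.8),
    ("wait_time", -0.6),
    ("agent_knowledge", 0.5),
    ("resolution", -0.1),
    ("resolution", 0.3),
]
for aspect, avg in rank_aspects(records):
    print(f"{aspect}: {avg:+.2f}")
```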

Applications in Contact Centers

Sentiment-Informed Routing

Sentiment detected during IVR or early in the interaction can influence routing decisions:

- Emotion-to-skill matching: Angry customers routed to experienced, high-empathy agents trained in de-escalation

- Frustration-aware callbacks: Frustrated callers offered callback to avoid extended hold (which would worsen sentiment)

- Sentiment-triggered escalation: Supervisor alerts for live intervention when sentiment drops below configurable thresholds

- Churn-risk priority: Customers detected as high churn risk based on sentiment patterns receive priority queue assignment

- Agent energy matching: Routing avoids assigning consecutive high-negativity contacts to the same agent, protecting against emotional exhaustion

The effectiveness of sentiment-informed routing depends on classification accuracy. At 85% accuracy, roughly 1 in 7 customers will be misclassified — an angry customer routed to a standard queue, or a satisfied customer unnecessarily escalated. Organizations must design routing logic with appropriate confidence thresholds and fallback paths.
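The arithmetic and the fallback idea above can be sketched together. In this illustration the queue names, the `min_confidence` default of 0.8, and the function names are all assumptions; the point is only that low-confidence predictions fall back to the standard path rather than triggering special handling.

```python
def expected_misclassified(contacts: int, accuracy: float) -> int:
    """Expected number of misclassified contacts at a given accuracy."""
    return round(contacts * (1.0 - accuracy))


def route(label: str, confidence: float, min_confidence: float = 0.8) -> str:
    """Pick a queue from a predicted label, falling back when confidence is low."""
    if confidence < min_confidence:
        return "standard_queue"  # fallback path: do not act on a weak signal
    if label == "angry":
        return "de_escalation_queue"
    if label == "churn_risk":
        return "retention_queue"
    return "standard_queue"


print(expected_misclassified(1000, 0.85))  # 150
print(route("angry", confidence=0.92))     # de_escalation_queue
print(route("angry", confidence=0.55))     # standard_queue
```

Raising `min_confidence` reduces unnecessary escalations at the cost of missing some genuinely angry customers, so the threshold is a business decision as much as a technical one.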

Quality Management at Scale

Traditional quality management evaluates 1–3% of interactions manually. Sentiment analysis enables:

- Universal scoring: 100% of interactions scored for customer sentiment, eliminating sampling bias

- Targeted review: Automatic identification of worst-experience interactions for human evaluation, dramatically improving the yield of QA time

- Multi-dimensional quality: Sentiment trends analyzed by agent, team, queue, time period, and interaction type

- Coaching prioritization: Coaching priorities driven by sentiment patterns rather than random sampling

- Calibration support: Sentiment models provide a consistent baseline against which human evaluators can calibrate their own scoring

Medhat, Hassan, and Korashy (2014) provided a comprehensive survey of sentiment analysis techniques that informed many early quality management integrations, cataloguing the strengths and limitations of different approaches for operational deployment.[6]
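Targeted review, as listed above, amounts to selecting the lowest-scoring interactions for human evaluation. A minimal sketch follows, assuming each interaction already has a single sentiment score keyed by a hypothetical contact ID.

```python
import heapq


def select_for_review(scores: dict[str, float], k: int) -> list[str]:
    """Return the IDs of the k lowest-sentiment interactions, worst first."""
    return heapq.nsmallest(k, scores, key=scores.get)


# Made-up scores for four contacts.
scores = {"c1": 0.4, "c2": -0.9, "c3": -0.2, "c4": -0.7}
print(select_for_review(scores, k=2))  # ['c2', 'c4']
```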

Voice of the Customer (VoC)

Aggregated sentiment across thousands of interactions reveals systemic patterns invisible in individual evaluations:

- Process failure detection: A policy change causing widespread frustration appears as a sentiment shift days before formal complaint volumes rise

- Product issue early warning: Emerging product defects surface in sentiment data before they appear in structured feedback channels

- Competitive intelligence: Customers mentioning competitor offers correlate with specific sentiment patterns, informing retention strategy

- Geographic and demographic patterns: Sentiment variation across regions, customer segments, and contact reasons informs localized experience improvement

- Seasonal and event-driven patterns: Sentiment baselines shift during billing cycles, outage events, and promotional periods — distinguishing normal variation from actionable signals
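Distinguishing normal variation from an actionable shift, as the last item notes, requires comparing today's aggregate against a comparable baseline. One simple approach, sketched here under assumptions, is a z-score test on daily mean sentiment. The function name, the threshold of 2.0, and the sample values are illustrative, and the baseline should come from comparable days (for example, the same point in the billing cycle).

```python
from statistics import mean, stdev


def is_negative_shift(baseline: list[float], today: float,
                      z_threshold: float = 2.0) -> bool:
    """True if today's mean sentiment is unusually low relative to the baseline days."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation; needs at least 2 values
    if sigma == 0:
        return today < mu
    z = (today - mu) / sigma
    return z <= -z_threshold


# Made-up daily mean sentiment for the previous seven comparable days.
baseline = [0.10, 0.12, 0.08, 0.11, 0.09, 0.10, 0.10]
print(is_negative_shift(baseline, today=0.05))
print(is_negative_shift(baseline, today=0.09))
```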

Agent Performance and Well-Being

Sentiment analysis provides objective measurement of agent emotional effectiveness and enables monitoring of agent well-being:

- Sentiment improvement rate: How consistently an agent turns negative sentiment positive during interactions, a metric that may track customer retention more closely than traditional quality scores

- De-escalation success: Rate of angry contacts resolved without supervisor escalation

- Emotional labor monitoring: Agents consistently handling high-negative-sentiment contacts face elevated burnout risk. Sentiment-aware scheduling can distribute emotional load more equitably across teams

- Agent sentiment tracking: Analyzing agent-side sentiment (tone, word choice, energy) can detect early signs of disengagement or burnout before performance metrics decline

- Recovery time: Measuring the gap between a difficult interaction and the agent's return to baseline sentiment performance indicates resilience and recovery capacity

The relationship between emotional labor and agent attrition is well-documented. Sentiment-based workload management — limiting consecutive negative interactions, scheduling recovery breaks after difficult contacts — represents an emerging application of AI in workforce management.
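Two of the ideas above lend themselves to short calculations: the sentiment improvement rate, and a check for consecutive negative contacts that could prompt a recovery break. Both functions below are sketches; the names, the -0.5 threshold, and the limit of three consecutive contacts are assumptions, not established standards.

```python
from typing import Optional


def sentiment_improvement_rate(interactions: list[tuple[float, float]]) -> Optional[float]:
    """Share of contacts that started negative and ended non-negative.

    Each interaction is a (start_score, end_score) pair in [-1, 1].
    Returns None when the agent had no negative-start contacts.
    """
    negative_starts = [(start, end) for start, end in interactions if start < 0]
    if not negative_starts:
        return None
    improved = sum(1 for _, end in negative_starts if end >= 0)
    return improved / len(negative_starts)


def needs_recovery_break(recent_scores: list[float], max_consecutive: int = 3,
                         threshold: float = -0.5) -> bool:
    """True if the agent's most recent contacts form a run of highly negative ones."""
    streak = 0
    for score in reversed(recent_scores):
        if score > threshold:
            break
        streak += 1
    return streak >= max_consecutive


print(sentiment_improvement_rate([(-0.6, 0.3), (-0.4, -0.2), (0.5, 0.6), (-0.8, 0.1)]))
print(needs_recovery_break([0.2, -0.6, -0.7, -0.9]))
```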

Integration with Speech Analytics

Sentiment analysis is a core component of modern speech analytics platforms, but the integration extends beyond simple feature inclusion:

- Emotion-topic correlation: Linking detected sentiment to specific topics discussed reveals which subjects drive negative experience

- Silence and sentiment: Extended silence patterns correlate with customer confusion or frustration — acoustic analysis enriches text-based sentiment with timing signals

- Agent-customer sentiment divergence: When agent sentiment remains positive but customer sentiment deteriorates, it may indicate scripted or inauthentic agent responses

- Cross-channel consistency: Comparing voice sentiment with subsequent survey responses validates model accuracy and identifies systematic biases

- Predictive escalation models: Combining real-time sentiment trajectory with historical patterns enables prediction of escalation before it occurs

Customer Effort and Sentiment Correlation

Customer effort — the perceived difficulty of getting an issue resolved — correlates strongly with sentiment but is not identical to it. Dixon, Toman, and DeLisi (2013) demonstrated that reducing customer effort is a stronger driver of loyalty than exceeding expectations, a finding that has shaped how contact centers interpret sentiment data.[7]

Key relationships between effort and sentiment:

- High effort + positive resolution = mixed sentiment: Customers whose issue was eventually resolved but who had to work hard for it tend to express lower loyalty than customers whose interaction was easy, even when the outcome was less favorable

- Effort signals in language: Phrases like "I've already called three times," "let me explain again," and "can you transfer me to someone who can help" are high-effort indicators that sentiment models can detect

- Channel-switching as effort proxy: Customers who move from self-service to chat to phone exhibit escalating effort — sentiment typically degrades with each channel switch

- Effort-adjusted sentiment: Some organizations normalize sentiment scores by interaction complexity, recognizing that complex issues inherently generate more friction
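The effort phrases quoted above can be detected with simple pattern matching before any model is involved. This is a minimal sketch: the three regular expressions in `EFFORT_PATTERNS` are hand-written examples based on those phrases, not a validated effort lexicon.

```python
import re

# Hypothetical patterns derived from the example phrases; a real list
# would be much longer and tuned on actual transcripts.
EFFORT_PATTERNS = [
    re.compile(r"\balready called\b"),
    re.compile(r"\bexplain(ed)? (this |it )?again\b"),
    re.compile(r"\bsomeone who can help\b"),
]


def effort_signals(utterance: str) -> int:
    """Count how many distinct high-effort patterns appear in an utterance."""
    text = utterance.lower()
    return sum(1 for pattern in EFFORT_PATTERNS if pattern.search(text))


print(effort_signals("I've already called three times and now I have to explain again"))
print(effort_signals("Thanks, that was easy"))
```

A count like this can serve as one feature alongside model-based sentiment rather than as a replacement for it.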

Accuracy Challenges

Sarcasm and Irony

Sarcasm remains the most difficult challenge for sentiment analysis systems. Statements like "Oh wonderful, I've only been on hold for 45 minutes" carry negative sentiment expressed through positive language. Even state-of-the-art LLMs achieve only 70–80% accuracy on sarcasm detection in customer service contexts, compared to 90%+ on straightforward sentiment.

Context Dependency

The same phrase carries different sentiment depending on context:

- "This is unbelievable" — positive (product delight) or negative (service failure)

- "I can't believe you did that" — gratitude or outrage

- "That's fine" — genuine acceptance or resigned frustration

Multi-turn context is critical: "fine" after three transfers carries very different sentiment than "fine" after a quick resolution. Models that analyze individual utterances in isolation miss these contextual signals.

Cultural and Linguistic Variation

Sentiment expression varies significantly across cultures:

- Directness: Some cultures express dissatisfaction indirectly, making explicit sentiment cues sparse

- Politeness norms: Highly polite language may mask strong negative sentiment in cultures with formal communication norms

- Emotional display rules: Vocal expression of emotion varies across cultures — pitch and volume norms differ, affecting acoustic sentiment models

- Code-switching: Multilingual customers switching between languages within an interaction challenge models trained on monolingual data

- Dialect variation: Regional expressions, slang, and colloquialisms may not appear in training data

Organizations operating global contact centers must either maintain culture-specific models or validate that their models perform equitably across the populations they serve.

Domain Specificity

Models trained on general-purpose data (product reviews, social media) perform poorly on contact center interactions because:

- Contact center language is more constrained and procedural

- Industry-specific terminology carries domain-specific sentiment

- The conversational nature of interactions differs from review text

- Agent-customer dynamics introduce power asymmetries absent in reviews

Fine-tuning on domain-specific data typically improves accuracy by 5–12 percentage points.

Vendor Landscape

The sentiment analysis vendor landscape spans several categories:

| Category | Examples | Characteristics |
|---|---|---|
| Speech analytics platforms | NICE Nexidia, Verint, CallMiner, Observe.AI | Full voice + text pipeline; deep contact center integration; sentiment as one component of broader analytics |
| Conversational AI / CCaaS | Genesys, Five9, NICE CXone, Talkdesk | Sentiment embedded in routing and agent-assist; real-time focus |
| Specialized NLP | AWS Comprehend, Google Cloud Natural Language, Azure Text Analytics | Cloud API sentiment services; require integration work; general-purpose models |
| Quality management | Scorebuddy, EvaluAgent, MaestroQA | Sentiment integrated with QA workflows; coaching-oriented |
| CX analytics | Medallia, Qualtrics, InMoment | Survey and feedback sentiment; journey-level analysis; VoC focus |

Key vendor evaluation criteria for contact center sentiment:

- Domain-specific accuracy: Performance on contact center data, not benchmark datasets

- Language coverage: Support for all languages the organization serves

- Real-time capability: Latency and throughput for live interaction analysis

- Aspect-level granularity: Ability to decompose sentiment by topic, not just overall polarity

- Integration depth: Native connectors to ACD, WFM, QM, and CRM systems

- Customization: Ability to fine-tune models on organization-specific data and terminology

Ethical Considerations

Customer Privacy and Consent

Emotional profiling of customers raises significant ethical and regulatory questions:

- Consent: Customers may not realize their emotional state is being analyzed and classified. Transparency about sentiment analysis in privacy disclosures is increasingly expected.

- Data retention: Sentiment labels attached to customer records create emotional profiles over time. Retention policies must address whether and how long emotional data persists.

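- Regulatory compliance: GDPR does not name emotional data as a special category, but emotion inferences tied to an identifiable person are personal data, and they may fall under special-category rules when they draw on biometric data or reveal health information. The EU AI Act specifically addresses emotion recognition systems, including restrictions on their use in the workplace and transparency obligations toward the people they are applied to.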

Algorithmic Bias

Sentiment models can exhibit systematic bias:

- Demographic bias: Models may score sentiment differently based on dialect, accent, or communication style correlated with race, ethnicity, or socioeconomic status

- Gender bias: Research has documented that identical statements are scored differently based on perceived speaker gender

- Age bias: Communication style differences across age groups may produce systematic sentiment scoring disparities

Organizations must audit sentiment models for disparate impact across protected classes and customer segments.
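A basic audit of the kind described above compares model accuracy across groups on a human-labeled sample. The sketch below assumes records of the form `(group, predicted, actual)`; the group names and sample data are invented, and a real audit would also examine error direction and statistical significance.

```python
from collections import defaultdict


def accuracy_by_group(records: list[tuple[str, str, str]]) -> dict[str, float]:
    """Compute classification accuracy separately for each group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def max_accuracy_gap(records: list[tuple[str, str, str]]) -> float:
    """Largest accuracy difference between any two groups."""
    accuracies = accuracy_by_group(records)
    return max(accuracies.values()) - min(accuracies.values())


# Made-up audit sample: (group, model prediction, human label).
audit_sample = [
    ("dialect_a", "negative", "negative"),
    ("dialect_a", "positive", "positive"),
    ("dialect_a", "neutral", "neutral"),
    ("dialect_a", "negative", "negative"),
    ("dialect_b", "negative", "neutral"),
    ("dialect_b", "positive", "positive"),
    ("dialect_b", "negative", "positive"),
    ("dialect_b", "neutral", "neutral"),
]
print(accuracy_by_group(audit_sample))
print(max_accuracy_gap(audit_sample))
```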

Agent Surveillance Concerns

Using sentiment analysis to monitor agent emotional state raises distinct ethical issues:

- Emotional surveillance: Continuous monitoring of agent sentiment can feel invasive and erode trust

- Performance pressure: If sentiment scores directly affect compensation or employment, agents may engage in emotional suppression rather than authentic interaction

- Union and labor considerations: Organized labor may challenge sentiment-based performance management as overreach

- Wellbeing vs. control: The same technology that detects burnout risk can be used for productivity surveillance — organizational intent and governance matter

Best practice: use agent sentiment data for supportive purposes (workload management, proactive wellness) rather than punitive performance management.

Accuracy and Accountability

When sentiment scores influence routing, quality evaluation, or agent performance ratings, accuracy errors have real consequences:

- A customer misclassified as satisfied may not receive appropriate follow-up

- An agent whose interactions are systematically mis-scored faces unfair evaluation

- Routing decisions based on incorrect sentiment may worsen customer experience

Organizations should maintain human-in-the-loop oversight for consequential decisions and publish accuracy metrics for their sentiment systems.

Future Directions

Several trends are shaping the next generation of sentiment analysis in customer service:

- Multimodal fusion: Combining text, voice, and video (for video-enabled contact centers) sentiment into unified models

- Real-time LLM inference: As large language model latency and cost fall, LLM-based sentiment is likely to displace purpose-built models in a growing share of real-time applications

- Predictive sentiment: Models that predict how sentiment will evolve based on early interaction signals, enabling preemptive intervention

- Causal sentiment analysis: Moving beyond correlation to understand what specific actions, words, and behaviors cause sentiment changes — informing prescriptive agent guidance

- Federated learning: Training sentiment models across organizations without sharing raw customer data, addressing privacy concerns while improving model quality

- Emotion-aware generative AI: Generative AI systems that modulate their own tone and approach based on detected customer sentiment in real time

See Also

- AI-Powered Support — Capability set that consumes real-time sentiment

- Agent Assist — Surfaces sentiment cues to live agents

- Natural Language Processing — Foundation technology for text-based sentiment analysis

- Speech Analytics — Voice interaction analysis platform

- Quality Management — Quality evaluation enhanced by sentiment

- Customer Experience Management — CX strategy informed by sentiment data

- AI in Workforce Management — Broader AI applications in WFM including sentiment

- Neural Networks and Deep Learning — Technical foundation for modern sentiment models

- Large Language Models and Generative AI — Current state-of-the-art for nuanced sentiment understanding

- Agent Experience and Wellbeing — Agent-side sentiment monitoring and burnout prevention

- Sentiment Analysis and CX Signal Integration — Cross-functional integration of sentiment signals

- Skill-Based Routing — Routing influenced by detected sentiment

- Real-Time Operations — Real-time sentiment alerts

- Coaching and Agent Development — Coaching driven by sentiment patterns

- Conversational AI — AI systems that detect and respond to sentiment

References

- [1] Pang, B., Lee, L., & Vaithyanathan, S. (2002). "Thumbs up? Sentiment classification using machine learning techniques." Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP), pp. 79–86.

- [2] Liu, B. (2012). Sentiment Analysis and Opinion Mining. Morgan & Claypool Publishers. ISBN 978-1-60845-884-4.

- [3] Devlin, J., Chang, M.-W., Lee, K., & Toutanova, K. (2019). "BERT: Pre-training of deep bidirectional transformers for language understanding." Proceedings of NAACL-HLT 2019, pp. 4171–4186.

- [4] Hutto, C. J., & Gilbert, E. (2014). "VADER: A parsimonious rule-based model for sentiment analysis of social media text." Proceedings of the Eighth International AAAI Conference on Weblogs and Social Media (ICWSM-14), pp. 216–225.

- [5] Zhang, X., Zhao, J., & LeCun, Y. (2015). "Character-level convolutional networks for text classification." Advances in Neural Information Processing Systems (NeurIPS) 28, pp. 649–657.

- [6] Medhat, W., Hassan, A., & Korashy, H. (2014). "Sentiment analysis algorithms and applications: A survey." Ain Shams Engineering Journal, 5(4), pp. 1093–1113.

- [7] Dixon, M., Toman, N., & DeLisi, R. (2013). The Effortless Experience: Conquering the New Battleground for Customer Loyalty. Portfolio/Penguin. ISBN 978-1-59184-581-9.