Natural Language Processing

Natural Language Processing (NLP) is a subfield of artificial intelligence concerned with enabling computers to understand, interpret, and generate human language. In modern contact centers, NLP is the foundational technology behind Speech Analytics, Conversational AI, IVR systems, chatbots, automated quality scoring, and real-time agent assistance. For workforce management practitioners, NLP has become inseparable from daily operations — it powers the tools that transcribe calls, detect customer sentiment, classify interaction topics, and increasingly automate the analytical work that once required manual review of a tiny fraction of customer interactions.

NLP sits at the intersection of linguistics, computer science, and machine learning. While the theoretical underpinnings span decades of academic research, the practical explosion of NLP in contact centers began in the 2010s with advances in deep learning and has accelerated dramatically with the emergence of large language models. WFM professionals do not need to build NLP systems, but understanding what these systems can and cannot do — their capabilities, their failure modes, and their impact on staffing models — is increasingly essential to effective workforce planning and operational decision-making.

Core NLP Tasks

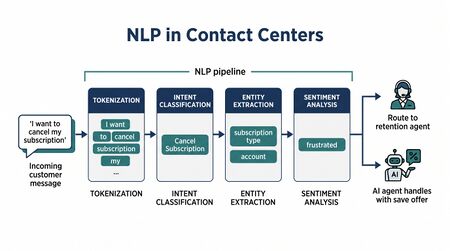

Every NLP application in a contact center relies on a pipeline of lower-level language processing tasks. These tasks break raw text into structured, computable representations that downstream applications can act on. Understanding these building blocks clarifies why NLP tools sometimes fail and where their limitations originate.

Tokenization

Tokenization is the process of splitting a stream of text into discrete units called tokens — typically words, subwords, or characters. The sentence "I'd like to cancel my subscription" might be tokenized into ["I", "'d", "like", "to", "cancel", "my", "subscription"]. While this seems trivial in English, tokenization becomes significantly more complex in languages without clear word boundaries (such as Mandarin or Japanese), in text that contains abbreviations, contractions, or domain-specific jargon, and in transcribed speech where punctuation is absent or unreliable.[1]

In contact center applications, tokenization quality directly affects everything downstream. Poorly tokenized transcripts — common when automatic speech recognition systems produce run-on text without punctuation — degrade the accuracy of intent classification, entity extraction, and sentiment analysis.
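The word-level split described above can be sketched with a single regular expression. This is a minimal illustration, not how production tokenizers work (those typically use trained subword vocabularies); the pattern and the function name `tokenize` are assumptions made for this example.

```python
import re

# Assumed pattern: a contraction suffix such as 'd or 'll, a run of word
# characters, or a single punctuation mark. Whitespace is discarded.
TOKEN_PATTERN = re.compile(r"'\w+|\w+|[^\w\s]")

def tokenize(text: str) -> list[str]:
    """Split text into word, contraction-suffix, and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)

print(tokenize("I'd like to cancel my subscription"))
# ['I', "'d", 'like', 'to', 'cancel', 'my', 'subscription']
```

A rule like this has no notion of sentence boundaries, so it does nothing to repair the run-on, unpunctuated transcripts that ASR systems often produce.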

Part-of-Speech Tagging

Part-of-speech (POS) tagging assigns grammatical labels (noun, verb, adjective, etc.) to each token. POS tagging helps NLP systems disambiguate words that have multiple meanings depending on context. The word "book" functions differently in "I want to book a flight" (verb) versus "I received the book" (noun). In contact center NLP, POS tagging contributes to more accurate parsing of customer requests, particularly when those requests are syntactically complex or ambiguous.

Named Entity Recognition

Named entity recognition (NER) identifies and classifies proper nouns and specific data within text — person names, dates, account numbers, addresses, product names, monetary amounts, and other structured information. When a customer says "I moved to 425 Oak Street in Denver last Tuesday," NER extracts the address and date as discrete, labeled entities that downstream systems can use to route, classify, or act on the interaction.

NER is particularly important in contact centers for automating data capture that agents would otherwise enter manually, and for compliance applications where personally identifiable information (PII) must be detected and redacted.
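The redaction use case can be illustrated with a rule-based stand-in. The sketch below uses regular expressions rather than a trained NER model, and the three patterns (`CARD`, `EMAIL`, `PHONE`) are hypothetical examples that cover only a narrow slice of real PII formats.

```python
import re

# Hypothetical patterns for illustration only. CARD is applied first so that
# long digit runs are not partially consumed by the PHONE pattern.
PII_PATTERNS = {
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}

def redact(text: str) -> str:
    """Replace each matched span with a bracketed entity label."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

print(redact("My email is pat@example.com and my number is 303-555-0142."))
# My email is [EMAIL] and my number is [PHONE].
```

Pattern matching of this kind misses entities that have no fixed shape, such as names and street addresses, which is where statistical NER models are needed.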

Syntactic Parsing

Syntactic parsing (dependency parsing is one common form) analyzes the grammatical structure of sentences, identifying relationships between words: which noun a verb acts upon, which adjective modifies which noun, and so on. While most contact center practitioners will never interact with parse trees directly, parsing underpins the ability of NLP systems to handle complex, multi-clause customer statements like "I want to change the shipping address on the order I placed yesterday but keep the billing address the same."[1]


Intent Classification and Entity Extraction

Intent classification and entity extraction are the two NLP capabilities most directly responsible for how Conversational AI systems and modern IVR platforms understand what customers want. Together, they form the core intelligence layer of any chatbot, virtual agent, or voice assistant deployed in a contact center.

How Intent Classification Works

Intent classification is a text classification task: given a customer utterance, the system assigns it to one of a predefined set of intents — categories that represent what the customer is trying to accomplish. A system might define intents such as cancel_account, change_address, check_order_status, billing_inquiry, and technical_support.

The utterance "I want to change my address" would be classified under change_address. The utterance "I need to cancel my account" maps to cancel_account. Modern intent classifiers use machine learning models trained on labeled examples — hundreds or thousands of sample utterances per intent — to learn patterns that generalize beyond exact wording. A well-trained classifier recognizes that "cancel my subscription," "I want to stop my service," and "please close my account" all express the same intent despite different surface language.

The accuracy of intent classification has direct containment rate implications. Every misclassified intent is a failed self-service attempt that escalates to a live agent, increasing staffing demand and eroding the ROI of conversational AI investments.
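The idea of learning from labeled examples and generalizing beyond exact wording can be shown with a toy word-frequency scorer. This is a deliberately simple stand-in for the machine learning models described above; the training utterances, the stopword list, and the `unknown` fallback label are all assumptions made for the example.

```python
from collections import Counter, defaultdict

# Hypothetical labeled utterances; production classifiers train on hundreds
# or thousands of examples per intent.
TRAINING_EXAMPLES = [
    ("I need to cancel my account", "cancel_account"),
    ("please close my account", "cancel_account"),
    ("I want to stop my service", "cancel_account"),
    ("I want to change my address", "change_address"),
    ("I moved and need to update my address", "change_address"),
    ("where is my order", "check_order_status"),
    ("has my order shipped yet", "check_order_status"),
]
STOPWORDS = {"i", "my", "to", "the", "a", "and", "is", "please", "want", "need"}

def content_words(text):
    """Lowercase, split on whitespace, and drop common function words."""
    return [w for w in text.lower().split() if w not in STOPWORDS]

def train(examples):
    """Count how often each content word appears under each intent."""
    counts = defaultdict(Counter)
    for text, intent in examples:
        counts[intent].update(content_words(text))
    return counts

def classify(utterance, counts):
    """Pick the intent whose vocabulary best covers the utterance."""
    words = content_words(utterance)
    scores = {
        intent: sum(c[w] for w in words) / sum(c.values())
        for intent, c in counts.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

MODEL = train(TRAINING_EXAMPLES)
print(classify("cancel my subscription", MODEL))  # cancel_account
print(classify("what is the weather", MODEL))     # unknown
```

In a self-service flow, an `unknown` result or a wrong label is the kind of outcome that ends in escalation to a live agent.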

Entity Extraction in Practice

Entity extraction works alongside intent classification to capture the specific details within a customer's statement. If the intent is change_address, entity extraction identifies what the new address is. If the intent is check_order_status, entity extraction pulls out the order number.

Modern systems combine intent classification and entity extraction in a single model pass, producing structured output such as:

Intent: change_address

Entities: {new_address: "742 Evergreen Terrace", city: "Springfield", state: "IL"}

This structured output enables automated fulfillment — the system can update the address in the CRM without human intervention, provided confidence thresholds are met.
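The confidence gate mentioned above can be sketched as a small routing function. The `NluResult` type, the 0.85 threshold, and the required-entity map are hypothetical values chosen for illustration; real platforms expose their own schemas and tuning controls.

```python
from dataclasses import dataclass, field

@dataclass
class NluResult:
    """Structured output of one intent-plus-entities model pass (assumed shape)."""
    intent: str
    confidence: float
    entities: dict = field(default_factory=dict)

CONFIDENCE_THRESHOLD = 0.85  # assumed value; tuned per deployment in practice
REQUIRED_ENTITIES = {"change_address": {"new_address", "city", "state"}}

def route(result: NluResult) -> str:
    """Automate only when the intent is supported, confident, and complete."""
    required = REQUIRED_ENTITIES.get(result.intent)
    if required is None:
        return "escalate_to_agent"  # no automated fulfillment defined
    missing = required - set(result.entities)
    if result.confidence >= CONFIDENCE_THRESHOLD and not missing:
        return "automate"
    return "escalate_to_agent"

result = NluResult(
    intent="change_address",
    confidence=0.93,
    entities={"new_address": "742 Evergreen Terrace", "city": "Springfield", "state": "IL"},
)
print(route(result))  # automate
```

Raising the threshold trades containment for accuracy: fewer interactions are automated, but fewer incorrect updates reach the CRM.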

WFM Implications

For workforce management, intent classification data aggregated across interactions becomes a powerful forecasting input. Topic distributions shift over time — a product recall spikes return_request intents, a billing system outage floods billing_inquiry — and NLP-derived intent data captures these shifts in near real-time, enabling more responsive staffing adjustments than traditional reason-code systems that depend on agent-entered disposition codes.

Speech-to-Text and Transcription

Automatic speech recognition (ASR) is the NLP technology that converts spoken language into written text. In a contact center context, ASR is the critical first step that makes voice interactions accessible to all downstream NLP applications — you cannot analyze sentiment, classify intents, or summarize calls without first converting audio to text.[2]

How ASR Works

Modern ASR systems use deep neural networks (typically encoder-decoder architectures) trained on thousands of hours of transcribed audio. The system takes an audio waveform as input, extracts acoustic features, and generates a sequence of text tokens as output. State-of-the-art models like OpenAI's Whisper and Google's Universal Speech Model can achieve word error rates (WER) below 5% on clean, standard-accent English — approaching human transcription accuracy.
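Word error rate is conventionally computed as the word-level edit distance between a reference transcript and the ASR output, divided by the number of reference words. The sketch below is a minimal implementation under that definition; it normalizes only by lowercasing, whereas evaluation toolkits usually also normalize punctuation and numerals.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)

print(word_error_rate(
    "i do not want to cancel my account",
    "i do want to cancel my account",
))  # 0.125
```

The example drops a single word out of eight, a WER of 12.5%, yet the dropped word is the negation, so the transcript now states the opposite of what the customer said.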

Accuracy Challenges in Contact Centers

Contact center audio presents particular challenges that degrade ASR accuracy well beyond benchmark performance:

- Background noise and cross-talk: Agents in open-floor call centers, customers calling from noisy environments, or multiple speakers talking simultaneously all reduce transcription accuracy.

- Accents and dialects: ASR systems trained predominantly on standard American or British English perform measurably worse on regional dialects, non-native speakers, and code-switching between languages — a significant concern for global contact centers.[3]

- Domain-specific vocabulary: Product names, technical terms, and company-specific jargon are frequently absent from general-purpose ASR training data, leading to systematic transcription errors.

- Telephony audio quality: Traditional phone lines carry narrowband audio sampled at 8 kHz, half the 16 kHz sample rate typical of speech recognition training data, which further reduces accuracy.

Real-Time vs. Batch Transcription

Contact centers use ASR in two modes. Real-time (streaming) transcription processes audio as the conversation happens, enabling live agent assist tools and real-time supervisor alerts. Batch transcription processes recorded calls after the fact, typically for Speech Analytics and Quality Management applications. Batch processing generally achieves higher accuracy because the system can use the full audio context, apply multiple decoding passes, and is not constrained by latency requirements.

WFM Impact

ASR accuracy has a direct workforce impact. When Speech Analytics platforms process transcribed calls for topic analysis, forecast segmentation, or compliance monitoring, transcription errors propagate through the entire analytical pipeline. A 10% word error rate does not mean that only 10% of analyses are affected: errors in key terms (product names, action verbs, negations) can flip the meaning of entire utterances, making sentiment analysis and intent classification unreliable in precisely the cases that matter most.

Sentiment Analysis

Sentiment analysis uses NLP to determine the emotional tone or attitude expressed in text. In contact centers, it is applied to transcribed calls, chat messages, emails, and social media interactions to gauge customer satisfaction, detect escalation risk, and measure agent performance.

How It Works

Sentiment analysis models typically classify text along a polarity scale — positive, negative, or neutral — and may additionally detect specific emotions (frustration, satisfaction, confusion, anger). Modern approaches use transformer-based neural networks fine-tuned on customer service conversations, replacing earlier rule-based systems that relied on keyword dictionaries and linguistic heuristics.[1]

More sophisticated systems analyze sentiment at the utterance level rather than the interaction level, tracking how sentiment changes over the course of a conversation. This enables detection of patterns like negative-to-positive recovery (indicating successful issue resolution) or positive-to-negative degradation (indicating a worsening customer experience).
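Trajectory detection of this kind can be sketched by comparing average sentiment at the start and end of a conversation. The sketch assumes an upstream model has already produced one polarity score per utterance in the range -1 to 1; the window size, the margin, and the label names are hypothetical choices.

```python
def classify_trajectory(scores, window=3, margin=0.1):
    """Label a conversation from per-utterance polarity scores in [-1, 1].

    Compares the mean of the first `window` utterances with the mean of the
    last `window` utterances; `margin` defines a neutral band around zero.
    """
    if len(scores) < 2 * window:
        return "too_short"
    start = sum(scores[:window]) / window
    end = sum(scores[-window:]) / window
    if start <= -margin and end >= margin:
        return "negative_to_positive_recovery"
    if start >= margin and end <= -margin:
        return "positive_to_negative_degradation"
    return "stable_or_mixed"

print(classify_trajectory([-0.6, -0.4, -0.5, -0.1, 0.2, 0.5, 0.6]))
# negative_to_positive_recovery
```

A single interaction-level average of the same scores would land near zero and hide the recovery entirely, which is the argument for utterance-level tracking.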

What Sentiment Analysis Actually Measures

It is important for WFM practitioners to understand what sentiment analysis measures and what it does not. Sentiment models detect expressed sentiment — the emotional tone present in the language used. They do not directly measure customer satisfaction, loyalty, or intent to churn. A customer may express frustration during a call but still remain loyal; another may use polite, neutral language while fully intending to switch providers.

The gap between expressed sentiment and actual customer state is a persistent source of error in sentiment-driven analytics. Systems that treat sentiment scores as direct proxies for customer satisfaction without additional validation risk generating misleading workforce and operational insights.

Connection to CX Signal Integration

Advanced contact center operations integrate sentiment data with other customer experience signals — survey responses, behavioral data, and interaction metadata — to build more reliable composite indicators. The Sentiment Analysis and CX Signal Integration approach treats sentiment as one input among many rather than a standalone metric, reducing the impact of individual measurement errors on workforce planning decisions.

Reliability Concerns

Sentiment analysis accuracy varies significantly by channel and context. Performance is generally strongest on written channels (chat, email) where customers express themselves explicitly, and weakest on transcribed voice interactions where ASR errors, sarcasm, and tonal nuance degrade accuracy. Cross-cultural and cross-linguistic sentiment detection remains a significant challenge — expressions of dissatisfaction vary dramatically across cultures, and models trained on English-language data perform poorly on other languages without dedicated training data.[4]

Text Summarization and Agent Assist

Text summarization applies NLP to automatically generate concise summaries of customer interactions — a capability with significant workforce management implications through its impact on average handle time (AHT) and after-call work (ACW).

Auto-Summarization

After a customer interaction ends, agents typically spend 30 to 90 seconds writing notes summarizing the conversation, the issue, and the resolution. NLP-powered auto-summarization generates these notes automatically from the interaction transcript, either as a draft for agent review or as a fully automated disposition. Modern summarization systems based on large language models produce summaries that are often indistinguishable from human-written notes in quality.

The WFM impact is substantial. If auto-summarization reduces ACW by 30 to 60 seconds per interaction across an operation handling 50,000 interactions per day, the aggregate time savings translate directly into reduced staffing requirements or increased capacity for handling additional volume — a measurable input to workforce planning models.
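The arithmetic behind that claim can be worked through directly. The interaction volume and the 30 to 60 second range come from the paragraph above; the 6.5 productive hours per agent per day is an assumption introduced only to express the result in rough full-time-equivalent terms.

```python
def daily_hours_saved(interactions_per_day, seconds_saved_per_interaction):
    """Raw workload hours removed per day."""
    return interactions_per_day * seconds_saved_per_interaction / 3600

def fte_equivalent(hours_saved, productive_hours_per_agent_day):
    """Rough headcount equivalent of the saved workload."""
    return hours_saved / productive_hours_per_agent_day

PRODUCTIVE_HOURS = 6.5  # assumption: paid hours net of shrinkage

for seconds in (30, 60):
    hours = daily_hours_saved(50_000, seconds)
    fte = fte_equivalent(hours, PRODUCTIVE_HOURS)
    print(f"{seconds}s saved per interaction: {hours:.1f} hours/day, about {fte:.0f} FTE")
# 30s saved per interaction: 416.7 hours/day, about 64 FTE
# 60s saved per interaction: 833.3 hours/day, about 128 FTE
```

This is a linear workload conversion. Actual staffing impact depends on occupancy targets, interval-level arrival patterns, and the queueing model in use, so the FTE figures should be read as an order-of-magnitude estimate.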

Real-Time Agent Assist

Real-time agent assist tools use NLP to analyze ongoing conversations and provide agents with contextual suggestions: relevant knowledge base articles, scripted responses, compliance reminders, next-best-action recommendations, and upsell prompts. These systems combine real-time ASR with intent classification, entity extraction, and knowledge retrieval to surface the right information at the right moment.

From a WFM perspective, effective agent assist tools reduce handle time by decreasing the time agents spend searching for information, reduce transfers by enabling agents to resolve issues outside their primary skill set, and improve first-contact resolution rates. These effects compound across the operation, influencing shrinkage assumptions, skill group configurations, and training requirements.

Limitations

Auto-summarization and agent assist tools depend on accurate transcription and correct interpretation of conversational context. When ASR errors corrupt the transcript or the NLP model misidentifies the topic or resolution, the generated summary or suggestion can be misleading or incorrect. Organizations deploying these tools must establish quality monitoring processes to measure summarization accuracy and agent assist relevance — adding a new dimension to Quality Management programs.

NLP in Quality Management

NLP has fundamentally changed the economics and coverage of Quality Management in contact centers. Traditional QA programs rely on supervisors manually evaluating 1–3% of interactions — a statistically inadequate sample that misses the vast majority of compliance violations, coaching opportunities, and customer experience failures. NLP-powered automated QA enables analysis of 100% of interactions.[5]

Automated QA Scoring

Automated QA systems use NLP to evaluate interactions against predefined criteria: Did the agent use the required greeting? Did the agent verify the customer's identity? Did the agent offer a resolution? Were prohibited phrases used? Was proper disclosure language included?

These evaluations combine multiple NLP techniques — keyword and phrase detection, intent classification, sentiment analysis, speaker diarization (distinguishing agent from customer), and increasingly, LLM-based evaluation that can assess nuanced criteria like empathy, active listening, and problem-solving approach.
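The simplest of these techniques, keyword and phrase detection, can be sketched as a set of pass/fail checks over the agent's side of the conversation. The criteria names and patterns below are hypothetical, and the sketch assumes speaker diarization has already separated agent turns from customer turns.

```python
import re

# Hypothetical scorecard: criterion -> (pattern, whether the phrase must appear).
QA_CRITERIA = {
    "greeting": (r"\bthank you for calling\b", True),
    "identity_verification": (r"\b(verify|confirm) your (identity|date of birth|account)\b", True),
    "prohibited_guarantee": (r"\bguaranteed? returns?\b", False),
}

def score_interaction(agent_turns):
    """Return criterion -> True (pass) or False (fail) for one interaction."""
    text = " ".join(agent_turns).lower()
    results = {}
    for name, (pattern, must_appear) in QA_CRITERIA.items():
        found = re.search(pattern, text) is not None
        results[name] = found == must_appear
    return results

turns = [
    "Thank you for calling Acme, how can I help?",
    "Before we continue, can I verify your identity?",
    "Your address has been updated.",
]
print(score_interaction(turns))
# {'greeting': True, 'identity_verification': True, 'prohibited_guarantee': True}
```

Phrase matching handles scripted requirements well but cannot judge criteria such as empathy or active listening, which is the gap LLM-based evaluation is meant to fill.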

Compliance Monitoring

Regulated industries (financial services, healthcare, insurance) require specific disclosures, consent confirmations, and prohibited-topic avoidance during customer interactions. NLP-based compliance monitoring scans every interaction for required and prohibited language, flagging violations for review. This application delivers particular value because compliance failures carry regulatory risk: the cost of missing a violation among the 97% or more of calls that go unreviewed under manual sampling can far exceed the cost of the monitoring technology.

Calibration Challenges

Automated QA scores must be calibrated against human evaluation to ensure consistency and accuracy. NLP systems may weight criteria differently than human evaluators, miss contextual nuances, or flag false positives that waste reviewer time. Effective programs use a calibration loop where human evaluators review a sample of automated scores, discrepancies are analyzed, and the automated model is adjusted. This calibration process itself requires WFM planning — evaluator time must be scheduled and forecasted like any other workload.
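One way to quantify the discrepancy step of a calibration loop is an agreement statistic between human and automated scores. The sketch below computes raw agreement and Cohen's kappa, which discounts agreement expected by chance; using kappa here is an illustrative choice, not a method the programs described above are known to use, and the sample labels are invented.

```python
from collections import Counter

def agreement_rate(human, automated):
    """Fraction of interactions where both evaluations gave the same label."""
    return sum(h == a for h, a in zip(human, automated)) / len(human)

def cohens_kappa(human, automated):
    """Chance-corrected agreement between two sets of labels."""
    if len(human) != len(automated) or not human:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(human)
    observed = agreement_rate(human, automated)
    h_counts, a_counts = Counter(human), Counter(automated)
    # Probability that both raters pick the same label independently.
    expected = sum(h_counts[label] * a_counts[label] for label in h_counts) / (n * n)
    if expected == 1:
        return 1.0
    return (observed - expected) / (1 - expected)

# Invented calibration sample of ten interactions.
human = ["pass", "pass", "fail", "pass", "fail", "pass", "pass", "fail", "pass", "pass"]
automated = ["pass", "pass", "fail", "fail", "fail", "pass", "pass", "pass", "pass", "pass"]
print(agreement_rate(human, automated))          # 0.8
print(round(cohens_kappa(human, automated), 2))  # 0.52
```

The gap between 80% raw agreement and a kappa near 0.52 shows why raw agreement can overstate calibration quality when most interactions pass.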

Impact on WFM

NLP-powered QA changes WFM in several ways. First, it reduces the headcount required for manual QA evaluation while increasing coverage — a staffing model change. Second, the data it produces (topic distributions, compliance rates, agent performance trends) feeds back into forecasting and scheduling models. Third, the coaching insights it generates affect training schedules, skill development plans, and ultimately agent proficiency curves that influence long-term capacity planning.

NLP for WFM Analytics

Beyond its role in specific contact center applications, NLP provides analytical capabilities that directly serve workforce management functions: forecasting, capacity planning, and operational decision-making.

Topic Clustering for Forecast Segmentation

NLP topic modeling algorithms (such as Latent Dirichlet Allocation or transformer-based clustering) can automatically group customer interactions into thematic clusters without predefined categories. This unsupervised approach discovers contact drivers that traditional disposition codes miss — either because agents categorize inconsistently or because new topics emerge faster than disposition code lists are updated.

Topic clusters, tracked over time, provide granular volume forecasting inputs. Rather than forecasting total call volume as a single number, WFM teams can forecast volume by topic cluster, enabling more accurate staffing by skill group and more responsive adjustment when specific topic volumes shift. The AI in Workforce Management paradigm increasingly relies on this type of NLP-derived signal for demand decomposition.

Reason-Code Automation

Manual reason-code entry by agents is notoriously unreliable. Agents under time pressure select codes hastily, use catch-all categories, or apply codes inconsistently. NLP-based reason-code automation analyzes the interaction transcript and assigns reason codes automatically, improving both accuracy and consistency. This directly improves the quality of data available for WFM analysis — better reason codes mean better understanding of demand drivers, which means better forecasts.

Emerging Issue Detection

NLP systems monitoring interaction streams in near real-time can detect emerging issues before they appear in traditional metrics. A sudden spike in interactions containing references to a specific product defect, a website error message, or a billing discrepancy shows up in NLP topic analysis minutes or hours before it manifests as a volume spike in ACD statistics. This early-warning capability enables proactive staffing adjustments — calling in additional agents, activating overflow queues, or deploying targeted IVR messages — that mitigate the impact of unexpected demand surges.
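A simple version of this early-warning logic compares the current interval's count for each topic against its recent baseline. The sketch below uses a z-score test; the threshold of 3, the minimum history length, and the standard deviation floor are assumptions, and the counts are invented.

```python
from statistics import mean, stdev

def detect_spikes(counts_by_topic, z_threshold=3.0, min_history=5):
    """Return topics whose latest interval count is far above baseline.

    counts_by_topic maps a topic to per-interval counts, oldest first;
    the last entry is the current interval.
    """
    alerts = []
    for topic, counts in counts_by_topic.items():
        if len(counts) <= min_history:
            continue  # not enough baseline to judge
        *history, current = counts
        baseline = mean(history)
        spread = max(stdev(history), 1.0)  # floor avoids alerts on flat, tiny baselines
        if (current - baseline) / spread >= z_threshold:
            alerts.append(topic)
    return alerts

counts = {
    "billing_inquiry": [12, 15, 11, 14, 13, 12, 41],
    "change_address": [8, 9, 7, 10, 8, 9, 10],
}
print(detect_spikes(counts))  # ['billing_inquiry']
```

Intervals with strong time-of-day or day-of-week seasonality would need a seasonally matched baseline rather than the trailing window used here.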

The AI Scaffolding Framework provides a structured approach to integrating these NLP-derived analytical capabilities into existing WFM processes, ensuring that the outputs of NLP systems connect to actionable workforce decisions rather than generating insights that go unactioned.

Limitations and Challenges

Despite significant advances, NLP technologies deployed in contact centers face persistent limitations that WFM practitioners should understand when interpreting NLP-derived data and making workforce decisions based on NLP outputs.

Sarcasm and Irony

NLP systems consistently struggle with sarcasm, irony, and indirect speech. A customer who says "Oh, great, another hour on hold — what wonderful service" is expressing strong negative sentiment, but keyword-level and even many neural sentiment models may classify "great" and "wonderful" as positive indicators. While research continues on sarcasm detection, it remains one of the hardest problems in NLP, and real-world accuracy on sarcastic utterances is substantially lower than on straightforward expressions.[1]
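The keyword-level failure is easy to reproduce. The sketch below is a toy lexicon scorer with invented word lists, not a model any vendor is known to ship; it counts positive and negative words and ignores everything else.

```python
import re

# Invented word lists for illustration.
POSITIVE = {"great", "wonderful", "thanks", "helpful"}
NEGATIVE = {"terrible", "awful", "useless", "angry"}

def lexicon_sentiment(text):
    """Classify by counting lexicon hits, with no notion of context or tone."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(lexicon_sentiment("Oh, great, another hour on hold, what wonderful service"))
# positive
```

The scorer finds two positive words and no negative ones, so it labels a clearly frustrated customer as positive.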

Context and Conversational History

Many NLP models analyze utterances in isolation or with limited context. A customer statement like "that's not what I was told" requires understanding of the prior conversation — and potentially prior interactions — to interpret correctly. While modern architectures handle longer contexts than earlier models, maintaining coherent understanding across multi-turn, multi-channel customer journeys remains a challenge.

Multilingual and Dialectal Support

NLP capability varies enormously across languages. English-language NLP is most mature, with robust models for virtually every task. Other major languages (Spanish, Mandarin, French, German) have good but not equivalent coverage. Many languages spoken by significant customer populations — particularly lower-resource languages in South and Southeast Asia, Africa, and indigenous communities — have minimal NLP support. Contact centers serving multilingual populations cannot assume uniform NLP performance across their language mix.[3]

Domain-Specific Vocabulary

General-purpose NLP models do not know industry-specific terminology out of the box. Healthcare contact centers use clinical vocabulary; financial services operations reference specific product names and regulatory terms; technology companies have product and feature names that do not appear in general training data. Without domain adaptation — fine-tuning models on industry-specific data — NLP accuracy on domain-specific content degrades significantly.

Bias in Training Data

NLP models inherit and can amplify biases present in their training data. ASR systems trained predominantly on standard-accent speakers perform worse on minority dialects. Sentiment models trained on formal text may misinterpret informal or culturally specific communication styles. These biases can produce systematically unequal outcomes — lower transcription accuracy for certain customer populations, biased sentiment scoring, or intent misclassification that disproportionately affects specific demographic groups. Organizations deploying NLP must audit model performance across their customer demographics and address identified disparities.[3]
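A basic audit of this kind compares model accuracy across customer segments on a labeled evaluation sample. The sketch below assumes each record carries a segment label and a flag for whether the model output was correct; the group names and counts are placeholders, not real measurements.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, is_correct) pairs from a labeled sample."""
    totals, correct = defaultdict(int), defaultdict(int)
    for group, is_correct in records:
        totals[group] += 1
        correct[group] += int(is_correct)
    return {group: correct[group] / totals[group] for group in totals}

def largest_gap(accuracy):
    """Difference between the best-served and worst-served group."""
    return max(accuracy.values()) - min(accuracy.values())

# Placeholder evaluation sample: 10 interactions per group.
records = (
    [("group_a", True)] * 9 + [("group_a", False)] * 1
    + [("group_b", True)] * 7 + [("group_b", False)] * 3
)
accuracy = accuracy_by_group(records)
print(accuracy)                         # {'group_a': 0.9, 'group_b': 0.7}
print(round(largest_gap(accuracy), 2))  # 0.2
```

The same structure applies to transcription accuracy, sentiment scoring, or intent classification; what counts as an acceptable gap is a policy decision rather than a technical one.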

Adversarial and Edge Cases

NLP systems can be brittle in the face of unusual inputs: misspellings, code-switching between languages within a single utterance, customers who are incoherent due to distress or intoxication, or deliberately adversarial input from bad actors attempting to exploit automated systems. These edge cases, while individually rare, occur at scale in high-volume contact centers and represent a category of failure that WFM teams should account for in their assumptions about automation effectiveness and containment rates.

The Evolution to Large Language Models

The history of NLP in contact centers can be roughly divided into three eras: rule-based systems (1990s–2000s), statistical and early neural models (2010s), and the transformer/LLM era (2020s–present).

From Rules to Statistical Models

Early contact center NLP relied on hand-crafted rules and keyword dictionaries. These systems were brittle, expensive to maintain, and could only handle patterns explicitly programmed by human developers. Statistical and early neural models, particularly support vector machines and recurrent neural networks, improved generalization but still required task-specific training data and architecture for each application.

The Transformer Revolution

The introduction of the transformer architecture in 2017, and the subsequent development of pre-trained language models (BERT, GPT, and their descendants), fundamentally changed NLP.[6] Instead of training separate models for each NLP task, a single large model pre-trained on vast text corpora could be fine-tuned for specific applications with relatively little task-specific data. This dramatically reduced the cost and effort of deploying NLP in contact centers and enabled capabilities — like high-quality text summarization and nuanced conversational understanding — that were previously impractical.

Implications for Contact Center NLP

Large language models have collapsed the distinction between many previously separate NLP tasks. A single LLM can perform intent classification, entity extraction, sentiment analysis, summarization, and free-form question answering without task-specific fine-tuning, using only natural language instructions (prompting). This architectural simplification is reshaping how vendors build contact center analytics platforms and how organizations think about their NLP technology stack.

However, LLMs also introduce new challenges: higher computational costs, latency concerns for real-time applications, hallucination risks (generating plausible but factually incorrect output), and the need for robust evaluation frameworks to ensure output quality. The AI Scaffolding Framework addresses how organizations can integrate LLM capabilities into their operations while managing these risks.
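Prompt-based multi-task analysis, together with a basic guard against malformed or invented output, can be sketched without reference to any particular vendor. The sketch below only builds the instruction text and validates a returned string; no model is called, and the prompt wording, the JSON schema, and the function names are all assumptions.

```python
import json

ALLOWED_INTENTS = {
    "cancel_account", "change_address", "check_order_status",
    "billing_inquiry", "technical_support",
}
ALLOWED_SENTIMENTS = {"positive", "neutral", "negative"}

def build_prompt(transcript: str) -> str:
    """Ask for intent, sentiment, and a summary in one instruction."""
    return (
        "Analyze the customer service transcript below.\n"
        f"Return JSON with keys intent (one of {sorted(ALLOWED_INTENTS)}), "
        f"sentiment (one of {sorted(ALLOWED_SENTIMENTS)}), "
        "and summary (one sentence).\n\n"
        f"Transcript:\n{transcript}"
    )

def parse_response(raw: str):
    """Return the validated result, or None if the output is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    intent, sentiment = data.get("intent"), data.get("sentiment")
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        return None  # rejects intents the model made up
    if not isinstance(sentiment, str) or sentiment not in ALLOWED_SENTIMENTS:
        return None
    if not isinstance(data.get("summary"), str):
        return None
    return data

reply = '{"intent": "change_address", "sentiment": "neutral", "summary": "Customer updated their address."}'
print(parse_response(reply)["intent"])  # change_address
print(parse_response('{"intent": "refund_everything", "sentiment": "neutral", "summary": "x"}'))  # None
```

Schema checks like this catch structural failures only. A summary that is well-formed but factually wrong passes validation, which is why sampled human review remains part of the evaluation framework.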

For a comprehensive treatment of large language models and their contact center applications, see Large Language Models and Generative AI.

See Also

- Artificial Intelligence Fundamentals

- Machine Learning Concepts

- Neural Networks and Deep Learning

- Large Language Models and Generative AI

- AI in Workforce Management

- AI Scaffolding Framework

- Speech Analytics

- Conversational AI

- Sentiment Analysis in Customer Service

- Sentiment Analysis and CX Signal Integration

- Quality Management

- Knowledge Management

- Interactive Voice Response

- Automatic Call Distributor

- AI Containment Rate and Its Workforce Implications

References

[1] Speech and Language Processing. Stanford University. 2024.

[2] "Deep Neural Networks for Acoustic Modeling in Speech Recognition". IEEE Signal Processing Magazine. 29(6): 82–97. 2012. doi:10.1109/MSP.2012.2205597.

[3] "Racial disparities in automated speech recognition". Proceedings of the National Academy of Sciences. 117(14): 7684–7689. 2020. doi:10.1073/pnas.1915768117.

[4] Doherty, Brian. Market Guide for Contact Center Speech Analytics. Gartner. 2023.

[5] Jacobs, Max. The Forrester Wave: Conversation Intelligence For Customer Service, Q3 2023. Forrester Research. 2023.

[6] "Attention Is All You Need". Advances in Neural Information Processing Systems. 2017.