Machine Learning Concepts

Machine learning (ML) is a branch of artificial intelligence in which computer systems learn patterns from data and improve their performance on tasks without being explicitly programmed for each scenario.[1] Unlike traditional software—where a developer writes rules such as "if average handle time exceeds 400 seconds, flag for coaching"—a machine learning system ingests historical data, discovers its own rules, and applies those rules to new situations. In workforce management (WFM), this distinction matters because contact center operations generate enormous volumes of structured data (call records, schedule adherence logs, quality scores) that are well suited to ML techniques.

WFM practitioners encounter machine learning daily, whether or not they recognize it. Modern forecasting engines use ML to predict contact volumes across channels. Scheduling optimizers apply ML-derived demand curves. Quality management platforms use ML to score interactions automatically. Understanding the core concepts behind these systems enables workforce planners, capacity analysts, and real-time analysts to evaluate vendor claims, communicate effectively with data science teams, and make better decisions about when ML adds value—and when simpler methods suffice.[2]

This article introduces the foundational concepts of machine learning as they apply to workforce management operations. It is not a computer science textbook; it is a working reference for WFM professionals who need to understand what is happening inside their AI-enhanced platforms and tools.

How Machine Learning Works

Machine learning follows a cyclical process that practitioners can think of as a learning loop:

- Data collection — The system ingests historical data. In WFM, this typically includes call volumes, handle times, schedule adherence, agent attributes, customer satisfaction scores, and external variables such as marketing calendars or weather data.

- Feature engineering — Raw data is transformed into meaningful inputs (called features) that the model can use. A timestamp might become "day of week," "hour of day," "days since last holiday," and "pay-cycle week (yes/no)."

- Model training — An algorithm processes the features and learns relationships between inputs and outcomes. During training, the model adjusts its internal parameters to minimize prediction errors on historical data.

- Prediction — The trained model is applied to new, unseen data to generate outputs: a forecast for next Monday's call volume, a probability that an agent will attrite within 90 days, or a recommended shift assignment.

- Evaluation — Predictions are compared against actual outcomes using metrics such as mean absolute error (MAE) for forecasts or accuracy for classification tasks. This step determines whether the model is useful.

- Iteration — Based on evaluation results, the team refines features, adjusts the algorithm, gathers more data, or retrains the model. ML systems are rarely "set and forget."

This loop is continuous. As new data arrives—new weeks of call volumes, updated agent rosters, changed business conditions—models are retrained to maintain accuracy. A volume forecasting model trained on pre-pandemic data, for example, would need retraining as channel mix and contact patterns shifted permanently.
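The loop can be sketched in a few lines of Python. This is a minimal illustration under simplifying assumptions, not a production pipeline: the "model" is just the average historical volume for each weekday, and the functions `train`, `predict`, and `mae` and the sample volumes are hypothetical.

```python
from statistics import mean

def train(history):
    """Model training: learn one parameter per weekday (its mean volume)."""
    by_day = {}
    for day, volume in history:
        by_day.setdefault(day, []).append(volume)
    return {day: mean(volumes) for day, volumes in by_day.items()}

def predict(model, days):
    """Prediction: look up the learned parameter for each requested weekday."""
    return [model[day] for day in days]

def mae(actuals, forecasts):
    """Evaluation: mean absolute error between actuals and forecasts."""
    return mean(abs(a - f) for a, f in zip(actuals, forecasts))

# Data collection plus a one-feature "feature engineering" step: (weekday, calls).
# These volumes are made-up illustration values.
history = [("Mon", 1200), ("Tue", 950), ("Mon", 1180), ("Tue", 970)]

model = train(history)
forecast = predict(model, ["Mon", "Tue"])                 # [1190, 960]
new_week = [("Mon", 1260), ("Tue", 1000)]
error = mae([calls for _, calls in new_week], forecast)   # 55 calls per day

# Iteration: fold the new week into the history and retrain.
model = train(history + new_week)
```

Real forecasting engines use far richer features and algorithms, but the train, predict, evaluate, retrain cycle has the same shape.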

Supervised Learning

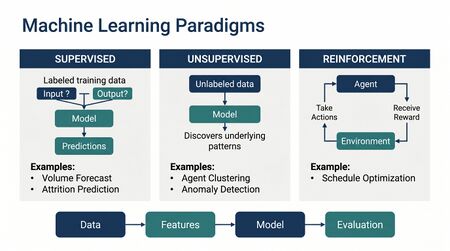

Supervised learning is the most widely used category of machine learning in workforce management applications. In supervised learning, the model is trained on labeled data—historical examples where both the inputs and the correct output are known. The model learns a mapping from inputs to outputs and can then predict outputs for new inputs.[3]

Supervised learning divides into two primary task types:

Classification

Classification predicts which category an observation belongs to. The output is a discrete label rather than a continuous number.

WFM examples:

- Agent attrition prediction — Given an agent's tenure, schedule satisfaction score, commute distance, recent adherence trends, and performance metrics, will this agent leave within the next 90 days? (Labels: will attrite / will stay)

- Call reason classification — Based on speech-to-text transcription of the first 30 seconds, which queue or skill group should handle this contact? (Labels: billing / technical support / account changes / etc.)

- Quality pass/fail — Given interaction features (silence percentage, hold time, sentiment scores), does this interaction meet quality standards? (Labels: pass / fail)

Regression

Regression predicts a continuous numerical value. This is the task type behind most WFM forecasting.

WFM examples:

- Volume forecasting — Given day of week, seasonality patterns, marketing events, and trend, how many calls will arrive next Tuesday between 10:00 and 10:30? (Output: a number, e.g., 347 calls)

- Average handle time prediction — Given call type, agent experience level, and time of day, what AHT should be expected? (Output: a duration, e.g., 312 seconds)

- Shrinkage estimation — Given historical patterns, scheduled training, known PTO, and day of week, what effective shrinkage rate should be applied? (Output: a percentage, e.g., 31.4%)

Main article: Machine Learning for Volume Forecasting

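Many ARIMA, exponential smoothing, and regression-based forecasting methods used in WFM can be viewed as supervised learning, because they fit parameters to historical observations whose outcomes are known and then predict future values, even when practitioners do not think of them in ML terms.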
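To see why an autoregressive forecaster fits the supervised mold, a volume series can be reshaped into labeled rows: the previous few values become the inputs and the next value becomes the known output. The sketch below assumes a plain list of daily volumes; `to_supervised` and the numbers are illustrative, not part of any WFM platform.

```python
def to_supervised(series, n_lags):
    """Turn a volume series into (features, target) rows using lagged values."""
    rows = []
    for t in range(n_lags, len(series)):
        features = series[t - n_lags:t]   # the n_lags observations before t
        target = series[t]                # the "label" the model learns to predict
        rows.append((features, target))
    return rows

daily_calls = [100, 120, 130, 125, 140]   # hypothetical daily volumes
rows = to_supervised(daily_calls, n_lags=2)
# rows → [([100, 120], 130), ([120, 130], 125), ([130, 125], 140)]
```

Any regression algorithm can then be trained on these rows; the autoregressive (AR) component of an ARIMA model likewise expresses the next value as a linear combination of lagged values like these.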

Unsupervised Learning

Unsupervised learning works with unlabeled data—the model receives inputs but no correct answers. Instead, it discovers structure, patterns, or groupings within the data on its own.[3]

Clustering

Clustering algorithms group similar observations together. The model identifies natural segments without being told what segments to find.

WFM examples:

- Agent performance clustering — Rather than imposing arbitrary performance tiers (top 20%, middle 60%, bottom 20%), clustering can discover natural groupings based on multiple metrics simultaneously: AHT, first call resolution, quality score, adherence, and customer satisfaction. The resulting clusters often reveal performance profiles that simple percentile ranking misses—for example, a group of agents with high AHT but exceptional quality and FCR who should not be coached the same way as agents with high AHT and low quality.

- Demand pattern discovery — Clustering daily volume curves can reveal that a center has three or four distinct "day shapes" (e.g., Monday shape, mid-week shape, Friday shape, end-of-month shape) rather than the seven individual patterns a planner might manually maintain.

- Customer segmentation for routing — Grouping customers by interaction history, product mix, and value to inform skills-based routing decisions.
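k-means is one widely used clustering algorithm (the article does not prescribe a specific one). The minimal sketch below groups agents on two metrics that are assumed to be already standardized (z-scores), so that neither metric dominates the distance calculation; the agent values, the starting centroids, and the choice of three clusters are hypothetical.

```python
import math

def kmeans(points, centroids, iterations=10):
    """Plain k-means: alternate nearest-centroid assignment and centroid update."""
    clusters = []
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its members
        # (an empty cluster keeps its previous centroid).
        centroids = [
            tuple(sum(values) / len(members) for values in zip(*members)) if members else c
            for members, c in zip(clusters, centroids)
        ]
    return centroids, clusters

# Hypothetical agents as (AHT z-score, quality z-score).
agents = [(-1.2, 0.5), (-1.0, 0.6),    # fast, good quality
          (1.1, 1.2), (1.3, 1.0),      # slow, excellent quality
          (1.2, -1.3), (1.0, -1.1)]    # slow, poor quality
centroids, clusters = kmeans(agents, centroids=[agents[0], agents[2], agents[4]])
```

The slow-but-excellent and slow-but-poor agents land in separate clusters, even though a single AHT ranking would lump them together. Library implementations such as scikit-learn's `KMeans` add smarter initialization and convergence checks.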

Anomaly Detection

Anomaly detection identifies observations that deviate significantly from expected patterns. In real-time operations, this capability is particularly valuable.

WFM examples:

- Real-time volume spike detection — Identifying when incoming volume deviates from forecast beyond normal variance, triggering real-time adjustments before service level deteriorates.

- Adherence anomalies — Detecting unusual patterns in schedule adherence that may indicate system issues (e.g., a telephony outage causing mass aux states) rather than individual agent behavior.

- AHT drift detection — Flagging when handle times for a specific call type begin shifting, which may indicate a product issue, a system change, or a training gap.
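A simple statistical form of real-time spike detection compares each interval's forecast error with the spread of past errors and flags errors that sit several standard deviations away. This is a sketch of one basic technique (a z-score test), not how any particular platform detects anomalies; the threshold of 3 and the sample numbers are assumptions.

```python
from statistics import mean, stdev

def flag_anomalies(actuals, forecasts, past_errors, threshold=3.0):
    """Flag intervals whose forecast error is unusually large relative to past errors."""
    mu, sigma = mean(past_errors), stdev(past_errors)
    flags = []
    for actual, forecast in zip(actuals, forecasts):
        z = ((actual - forecast) - mu) / sigma   # distance from typical error, in std devs
        flags.append(abs(z) > threshold)
    return flags

# Hypothetical actual-minus-forecast errors from recent "normal" intervals.
past_errors = [-10, 5, 8, -4, 2, -6, 3, 2]
flags = flag_anomalies(actuals=[410, 520], forecasts=[400, 400], past_errors=past_errors)
# flags → [False, True]: +10 calls is normal noise, +120 calls is a spike
```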

Dimensionality Reduction

Dimensionality reduction compresses many variables into fewer variables while preserving the most important information. WFM data often involves dozens of metrics per agent or per interval, many of them correlated. Dimensionality reduction techniques, most commonly principal component analysis (PCA), help identify which combinations of variables carry the most information and can simplify analysis and visualization.
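Principal component analysis is one of the most widely used dimensionality reduction techniques. The sketch below finds only the first principal component, using power iteration (repeatedly multiplying a vector by the covariance matrix), which is enough to show the idea; a real project would more likely call a library routine such as scikit-learn's `PCA`. The three per-agent metrics and their values are hypothetical, and metrics on very different scales would normally be standardized first.

```python
import math

def first_principal_component(rows, iterations=100):
    """Return the top principal direction and each row's score along it."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix (d x d).
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Power iteration converges to the eigenvector with the largest eigenvalue,
    # assuming the starting vector is not orthogonal to it.
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    scores = [sum(r[j] * v[j] for j in range(d)) for r in centered]
    return v, scores

# Hypothetical per-agent metrics: talk time, hold time, adherence (arbitrary units).
# Talk and hold move together; adherence does not vary at all.
agents = [[1.0, 2.0, 0.5], [2.0, 4.0, 0.5], [3.0, 6.0, 0.5], [4.0, 8.0, 0.5]]
direction, scores = first_principal_component(agents)
```

Here one combined talk-plus-hold direction carries all of the variation, so three metrics collapse to a single score per agent.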

Reinforcement Learning

Reinforcement learning (RL) differs from both supervised and unsupervised learning. In RL, an agent (a software agent, not a contact center agent) interacts with an environment, takes actions, receives rewards or penalties, and learns a strategy (called a policy) that maximizes cumulative reward over time.[3]

Main article: Reinforcement Learning for Schedule Optimization

WFM examples:

- Schedule optimization — An RL agent generates candidate schedules, receives a reward signal based on service level achievement, cost, and employee preference satisfaction, and iteratively learns which scheduling decisions produce the best trade-offs. Unlike a linear programming formulation, which requires a linear objective and linear constraints, RL can in principle accommodate complex, non-linear objectives and constraints and adapt as conditions change.

- Real-time routing decisions — An RL system learns routing policies that balance immediate service level with longer-term objectives like agent skill development or equitable workload distribution.

- Intraday reforecasting — An RL agent learns when and how aggressively to adjust intraday forecasts based on early-interval arrival patterns, balancing the cost of unnecessary staffing changes against the cost of missed service levels.

Reinforcement learning is computationally expensive and requires careful reward function design. In WFM, it is most commonly found in advanced scheduling and routing engines rather than in day-to-day analyst tools.
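The reward-driven learning idea can be seen in its simplest form, a multi-armed bandit, which is reinforcement learning without changing state. In the sketch below an epsilon-greedy learner repeatedly chooses one of three hypothetical intraday actions (add 0, 2, or 4 agents), observes a noisy reward from a made-up cost model, and gradually favors the action with the best average reward. Real scheduling and routing engines use far richer state, actions, and reward functions; everything here, including `simulated_reward` and its cost figures, is an assumption for illustration.

```python
import random

def simulated_reward(action, rng):
    """Hypothetical environment: staffing cost plus a penalty for understaffing."""
    added = [0, 2, 4][action]
    shortfall = max(0, 3 - added)             # assume 3 extra agents are truly needed
    cost = 3 * added + 10 * shortfall         # missed service level costs more than staff
    return -cost + rng.gauss(0, 1)            # noisy reward (higher is better)

def epsilon_greedy(reward_fn, n_actions, steps=2000, epsilon=0.1, seed=42):
    """Learn each action's average reward while balancing exploration and exploitation."""
    rng = random.Random(seed)
    counts = [0] * n_actions
    values = [0.0] * n_actions                # running mean reward per action
    for step in range(steps):
        if step < n_actions:
            action = step                     # try every action once first
        elif rng.random() < epsilon:
            action = rng.randrange(n_actions) # explore
        else:
            action = max(range(n_actions), key=lambda a: values[a])  # exploit
        reward = reward_fn(action, rng)
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]
    return values, counts

values, counts = epsilon_greedy(simulated_reward, n_actions=3)
# Should be 2 (add 4 agents): expected cost 12, versus 16 and 30 for the others.
best_action = max(range(3), key=lambda a: values[a])
```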

Key Concepts for Practitioners

Features and Feature Engineering

A feature is any measurable input variable provided to a machine learning model. Feature engineering—the process of selecting, transforming, and creating features from raw data—is often the most impactful step in building an effective ML model. In many WFM applications, the quality of features matters more than the choice of algorithm.[4]

Common WFM features for volume forecasting:

- Day of week, hour of day, week of year

- Historical volume (same interval last week, same interval last year)

- Holiday indicators and days relative to holidays

- Marketing campaign calendars and promotional events

- Billing cycle indicators

- Weather data (for industries where weather drives contact volume)

- Product launch or outage indicators

- Trend and seasonality components

A workforce analyst who understands which features drive model performance can provide critical domain expertise to data science teams. The analyst who knows that a billing system migration caused a temporary volume spike can prevent that spike from corrupting training data—a contribution no algorithm can make on its own.
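As a concrete illustration of the calendar features listed above, the sketch below turns one raw interval timestamp into several model inputs. The holiday and payday calendars are hypothetical inputs a planner would supply, and treating a "pay week" as the ISO calendar week containing a payday is an assumption; organizations define these indicators in different ways.

```python
from datetime import date, datetime

def timestamp_features(ts, holidays, pay_days):
    """Derive calendar features for one interval start time."""
    past_holidays = [h for h in holidays if h <= ts.date()]
    iso_week = ts.isocalendar()[:2]                     # (ISO year, ISO week number)
    return {
        "day_of_week": ts.weekday(),                    # 0 = Monday ... 6 = Sunday
        "hour_of_day": ts.hour,
        "week_of_year": ts.isocalendar()[1],
        "days_since_holiday": (ts.date() - max(past_holidays)).days if past_holidays else None,
        "is_pay_week": any(p.isocalendar()[:2] == iso_week for p in pay_days),
    }

features = timestamp_features(
    datetime(2024, 1, 2, 10, 30),                       # Tuesday 2 January 2024, 10:30
    holidays=[date(2023, 12, 25), date(2024, 1, 1)],
    pay_days=[date(2024, 1, 5)],
)
```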

Training, Validation, and Test Data

Machine learning models are evaluated using a disciplined data-splitting approach:

- Training data — The data the model learns from. Typically 60–80% of available data.

- Validation data — A held-out set used to tune the model during development. The model does not learn from this data, but the analyst uses its performance on this set to make decisions about model configuration.

- Test data — A final held-out set used only once to estimate how the model will perform on truly unseen data. This set is never used during model development.

For time series data in WFM, the split must respect temporal order. A volume forecasting model should be trained on earlier periods and tested on later periods—never the reverse. Using future data to predict the past inflates accuracy estimates and produces models that fail when deployed.
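A chronological split can be as simple as sorting by date and slicing, so that validation and test periods always come after the training period. In the sketch below the hold-out sizes are given as counts of periods (for example, the last three days) rather than percentages; `chronological_split` and the sample data are illustrative.

```python
from datetime import date, timedelta

def chronological_split(rows, n_valid, n_test):
    """Split (date, value) rows into train/validation/test blocks in time order, never shuffling."""
    rows = sorted(rows, key=lambda row: row[0])
    train_end = len(rows) - n_valid - n_test
    valid_end = train_end + n_valid
    return rows[:train_end], rows[train_end:valid_end], rows[valid_end:]

# Twenty hypothetical daily volumes.
start = date(2024, 1, 1)
daily = [(start + timedelta(days=i), 1000 + 5 * i) for i in range(20)]
train_rows, valid_rows, test_rows = chronological_split(daily, n_valid=3, n_test=3)
```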

Main article: Deterministic vs Probabilistic Models

Overfitting and Underfitting

Overfitting occurs when a model learns the training data too precisely, including its noise and anomalies, and performs poorly on new data. Underfitting occurs when a model is too simple to capture genuine patterns in the data.

WFM example of overfitting: A volume forecasting model is trained on three years of historical data. It learns that the Tuesday after Thanksgiving always produces exactly 2,847 calls at the main site. When a new Thanksgiving arrives and the company has added a digital channel and a new site, the model rigidly predicts 2,847 calls for the original site—it memorized a specific historical value rather than learning the underlying pattern of post-holiday demand surges.

WFM example of underfitting: A model uses only day-of-week as a feature to predict volume. It captures the basic weekly pattern but cannot account for monthly billing cycles, seasonal trends, or marketing events. Its forecasts are consistently mediocre because it lacks sufficient complexity to represent the true demand drivers.

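The balance between overfitting and underfitting is one of the central challenges in applied machine learning. Validation data and cross-validation techniques help practitioners detect and correct both problems; for time series, cross-validation should use a rolling forecast origin, so that every fold trains on earlier data and tests on later data.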
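For time series, cross-validation is usually done with a rolling (expanding-window) origin: fit on everything up to a cut-off, forecast the next period, move the cut-off forward, and repeat. The sketch below uses this to compare two deliberately simple, hypothetical forecasters on a steadily rising series. The all-history mean underfits because it cannot follow the trend, and that shows up as a larger cross-validated error.

```python
from statistics import mean

def rolling_origin_mae(series, forecast_fn, min_train, horizon=1):
    """Expanding-window evaluation: always train on the past, test on what comes next."""
    errors = []
    for origin in range(min_train, len(series) - horizon + 1):
        train = series[:origin]
        actual = series[origin:origin + horizon]
        forecast = forecast_fn(train, horizon)
        errors.extend(abs(a - f) for a, f in zip(actual, forecast))
    return mean(errors)

def last_value(train, horizon):
    """Naive forecaster: repeat the most recent observation."""
    return [train[-1]] * horizon

def historical_mean(train, horizon):
    """Overly simple forecaster: repeat the mean of all history."""
    return [mean(train)] * horizon

weekly_volume = [100, 110, 120, 130, 140, 150]   # hypothetical, steadily rising
naive_mae = rolling_origin_mae(weekly_volume, last_value, min_train=3)       # 10
mean_mae = rolling_origin_mae(weekly_volume, historical_mean, min_train=3)   # 25
```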

Bias and Fairness

Main article: Algorithmic Fairness and Bias in Workforce Scheduling

Machine learning models can encode and amplify biases present in historical data. In workforce management, this risk is particularly acute in systems that affect employee outcomes:

- Schedule preference allocation — If historical data shows that senior agents (who are disproportionately of one demographic) received preferred shifts, a model trained on this data may perpetuate that pattern, systematically disadvantaging newer or underrepresented groups.

- Performance scoring — Models trained on historical quality evaluations may inherit the biases of human evaluators, penalizing certain communication styles or accents.

- Attrition prediction — If used to allocate retention resources, biased attrition models could result in unequal investment in employee retention across demographic groups.

Research on algorithmic fairness in hiring and workforce decisions has documented these risks extensively.[5] WFM teams implementing or evaluating ML-driven tools should ask vendors specific questions about bias testing, protected class analysis, and fairness metrics.
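One basic check WFM teams can run themselves is to compare how often each group receives a favorable outcome, such as a preferred shift. The sketch below computes per-group selection rates and the ratio of the lowest rate to the highest; the "four-fifths rule" from US employment-selection guidance treats a ratio below 0.8 as a signal of possible adverse impact. This is a screening heuristic, not a complete fairness analysis, and the group labels and counts are hypothetical.

```python
def selection_rates(outcomes):
    """outcomes: iterable of (group, received_favorable_outcome) pairs."""
    totals, favorable = {}, {}
    for group, received in outcomes:
        totals[group] = totals.get(group, 0) + 1
        favorable[group] = favorable.get(group, 0) + int(received)
    return {group: favorable[group] / totals[group] for group in totals}

def adverse_impact_ratio(outcomes):
    """Lowest group selection rate divided by the highest (assumes some rate > 0)."""
    rates = selection_rates(outcomes)
    return min(rates.values()) / max(rates.values())

# Hypothetical preferred-shift allocations for two groups of ten agents each.
outcomes = ([("A", True)] * 8 + [("A", False)] * 2 +
            [("B", True)] * 5 + [("B", False)] * 5)
rates = selection_rates(outcomes)          # {"A": 0.8, "B": 0.5}
ratio = adverse_impact_ratio(outcomes)     # 0.625, below the 0.8 threshold
```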

Explainability

Explainability (also called interpretability) refers to the degree to which humans can understand why a model made a specific prediction or decision. In workforce management, explainability matters for several reasons:

- Operational trust — A real-time analyst is unlikely to act on an intraday reforecast if the system cannot explain why it expects a volume surge. "The model predicts 40% above forecast for the next two hours" is less actionable than "the model detects an arrival pattern consistent with an unplanned outage event, similar to the CRM outage of March 15."

- Employee impact — When ML influences scheduling, performance evaluation, or coaching recommendations, affected employees and their managers deserve understandable explanations for decisions that affect their work lives.

- Regulatory compliance — In jurisdictions with predictive scheduling laws or AI transparency requirements, organizations may be legally obligated to explain automated workforce decisions.

Simpler models (linear regression, decision trees) are inherently more interpretable than complex models (deep neural networks, large ensembles). The trade-off between accuracy and explainability is a recurring design decision in WFM AI systems. Techniques such as SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-Agnostic Explanations) can provide post-hoc explanations for complex models, but add implementation overhead.
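For a linear model, an explanation can be read directly off the model: each feature contributes its weight times how far the input sits from a reference (baseline) input, and those contributions add up exactly to the difference between the prediction and the baseline prediction. Under a feature-independence assumption, this is what SHAP reports for a linear model when the baseline is the feature averages. The AHT model, its weights, and the feature names below are hypothetical.

```python
def explain_linear(intercept, weights, x, baseline_x):
    """Split a linear prediction into per-feature contributions versus a baseline input."""
    prediction = intercept + sum(w * x[name] for name, w in weights.items())
    baseline = intercept + sum(w * baseline_x[name] for name, w in weights.items())
    contributions = {name: w * (x[name] - baseline_x[name]) for name, w in weights.items()}
    return prediction, baseline, contributions

# Hypothetical AHT model (seconds): 240 + 90*complex_call - 2*tenure_months + 15*after_hours
weights = {"complex_call": 90, "tenure_months": -2, "after_hours": 15}
average_contact = {"complex_call": 0.3, "tenure_months": 18, "after_hours": 0.2}
this_contact = {"complex_call": 1, "tenure_months": 6, "after_hours": 0}

prediction, baseline, contributions = explain_linear(240, weights, this_contact, average_contact)
# prediction 318 s vs. baseline 234 s: complex call +63 s, newer agent +24 s, daytime -3 s
```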

ML Model Types Commonly Used in WFM

Decision Trees and Random Forests

A decision tree makes predictions by learning a series of if-then rules from data, structured as a branching tree. For example, a tree predicting whether a call will be long might split first on call type, then on time of day, then on agent tenure. Random forests combine many individual decision trees, each trained on a slightly different sample of data, and aggregate their predictions. Random forests are much less prone to overfitting than a single deep tree and handle mixed data types (numerical and categorical) well. In WFM, they are commonly used for agent attrition modeling, call classification, and as components in ensemble forecasting systems.
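Each split in a decision tree is an if-then rule chosen to separate the outcomes as cleanly as possible. The sketch below finds the single best split (a one-level tree, often called a decision stump) by counting misclassifications; full tree and random forest implementations, such as those in scikit-learn, recurse on each side, typically use impurity measures like Gini, and random forests average many such trees. The feature names, labels, and data are hypothetical.

```python
def best_split(rows, labels, feature_names):
    """Find the single 'if feature <= threshold' rule with the fewest training errors."""
    best = None
    for feature in feature_names:
        for threshold in sorted({row[feature] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[feature] <= threshold]
            right = [y for row, y in zip(rows, labels) if row[feature] > threshold]
            # Each side predicts its majority label; minority labels count as errors.
            errors = (min(left.count(True), left.count(False)) +
                      min(right.count(True), right.count(False)))
            if best is None or errors < best["errors"]:
                best = {
                    "feature": feature,
                    "threshold": threshold,
                    "left_label": left.count(True) > left.count(False),
                    "right_label": right.count(True) > right.count(False),
                    "errors": errors,
                }
    return best

# Hypothetical calls: technical or not, agent tenure in months, and whether the call was long.
calls = [{"is_technical": 0, "tenure_months": 24}, {"is_technical": 0, "tenure_months": 3},
         {"is_technical": 1, "tenure_months": 30}, {"is_technical": 1, "tenure_months": 2}]
is_long = [False, False, True, True]
rule = best_split(calls, is_long, ["is_technical", "tenure_months"])
# rule: if is_technical <= 0 predict "not long", else predict "long" (0 training errors)
```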

Gradient Boosting (XGBoost, LightGBM, CatBoost)

Gradient boosting builds an ensemble of decision trees sequentially, with each new tree correcting errors made by the previous trees. Implementations such as XGBoost, LightGBM, and CatBoost are widely used in applied forecasting. In the M5 forecasting competition, a major international demand forecasting benchmark using Walmart retail data, gradient boosted trees (particularly LightGBM) were the most common approach among the top-ranked teams, including the winner, and the leading entries clearly outperformed the traditional statistical benchmarks.[6] WFM vendors increasingly use gradient boosting for multi-step volume forecasting, AHT prediction, and demand decomposition.
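The sequential error-correcting idea fits in a short sketch for squared-error regression with one input: start from the average, then repeatedly fit a one-split tree to the current residuals and add a shrunken version of it to the model. This is a teaching sketch only; XGBoost, LightGBM, and CatBoost add deeper trees, regularization, many features, and much faster split finding. The interval volumes and the settings `n_trees=50` and `learning_rate=0.3` are hypothetical.

```python
def fit_stump(x, residuals):
    """Fit a one-split regression tree: the threshold minimizing squared error."""
    best = None
    for t in sorted(set(x))[:-1]:              # needs at least two distinct x values
        left = [r for xi, r in zip(x, residuals) if xi <= t]
        right = [r for xi, r in zip(x, residuals) if xi > t]
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left) +
               sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, t, left_mean, right_mean)
    _, t, left_mean, right_mean = best
    return lambda xi: left_mean if xi <= t else right_mean

def gradient_boost(x, y, n_trees=50, learning_rate=0.3):
    """Squared-error gradient boosting: each new stump is fit to the remaining residuals."""
    base = sum(y) / len(y)
    trees = []
    pred = [base] * len(y)
    for _ in range(n_trees):
        # Residuals are the negative gradient of squared-error loss (up to a constant).
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        tree = fit_stump(x, residuals)
        trees.append(tree)
        pred = [pi + learning_rate * tree(xi) for pi, xi in zip(pred, x)]
    return lambda xi: base + sum(learning_rate * tree(xi) for tree in trees)

# Hypothetical calls per interval by hour of day, with a late-morning peak.
hours = [8, 9, 10, 11, 12, 13, 14, 15]
calls = [50, 60, 120, 130, 125, 70, 65, 60]
model = gradient_boost(hours, calls)
```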

Neural Networks and Deep Learning

Neural networks are composed of layers of interconnected nodes (neurons) that learn complex non-linear relationships. Deep learning refers to neural networks with many layers. While powerful, they require large amounts of training data and are computationally expensive. In WFM, neural networks appear in speech analytics (transcription and topic detection), sentiment analysis, conversational AI platforms, and increasingly in multivariate time series forecasting, where they can capture complex cross-channel and cross-site dependencies.

Time Series Models

Traditional time series models such as ARIMA and exponential smoothing remain foundational in WFM forecasting. While some practitioners debate whether these qualify as "machine learning," the distinction is increasingly academic—modern forecasting frameworks combine statistical time series decomposition with ML techniques. Probabilistic forecasting methods extend point forecasts with prediction intervals, enabling WFM teams to plan for a range of scenarios rather than a single expected value.[4]
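Simple exponential smoothing, the most basic member of the exponential smoothing family, shows how little machinery a classic time series model needs: the forecast is a running level that moves a fraction `alpha` of the way toward each new observation. Initializing the level with the first observation is one common simplification (Hyndman and Athanasopoulos also discuss estimating the initial level from the data), and the values below are hypothetical.

```python
def simple_exponential_smoothing(series, alpha):
    """Return the one-step-ahead forecast: an exponentially weighted level of the series."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = series[0]                       # simple initialization: first observation
    for value in series[1:]:
        level = alpha * value + (1 - alpha) * level
    return level

next_interval = simple_exponential_smoothing([100, 120, 90], alpha=0.5)   # 100.0
```

A larger `alpha` reacts faster to recent changes; a smaller one smooths more heavily. Probabilistic extensions add prediction intervals around this point forecast.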

Main article: Forecasting Methods

Evaluating ML Models

Main article: Model Evaluation and Validation

Understanding model evaluation metrics enables WFM practitioners to assess whether a forecasting engine or classification system is performing adequately. Different task types require different metrics.

Regression Metrics (Forecasting)

- Mean Absolute Error (MAE) — The average of the absolute differences between predictions and actual values. If a model forecasts 500 calls and 520 arrive, the error for that interval is 20. MAE is intuitive and expressed in the same units as the forecast.

- Root Mean Squared Error (RMSE) — Similar to MAE but penalizes large errors more heavily. RMSE is useful when large forecast misses (e.g., underforecasting a volume spike by 200 calls) are far more costly than small misses.

- Mean Absolute Percentage Error (MAPE) — Expresses error as a percentage of actual values. Useful for comparing forecast accuracy across sites or channels with different volume levels. However, MAPE is undefined when actual values are zero and can be misleading for low-volume intervals.

- Weighted Mean Absolute Percentage Error (WMAPE) — Addresses MAPE's limitations by weighting errors by volume, giving more importance to high-volume intervals where forecast accuracy matters most for staffing.
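All four metrics can be computed directly from paired actuals and forecasts. In the hypothetical three-interval example below, one low-volume interval (10 actual calls, 20 forecast) dominates MAPE, while WMAPE, which divides total absolute error by total volume, stays small. The function name is illustrative.

```python
import math

def forecast_metrics(actual, forecast):
    """MAE, RMSE, MAPE (%) and WMAPE (%) for paired actual and forecast values."""
    errors = [a - f for a, f in zip(actual, forecast)]
    n = len(errors)
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    # MAPE divides by each actual, so it raises ZeroDivisionError if any actual is 0.
    mape = 100 * sum(abs(e) / a for e, a in zip(errors, actual)) / n
    wmape = 100 * sum(abs(e) for e in errors) / sum(actual)
    return {"MAE": mae, "RMSE": rmse, "MAPE": mape, "WMAPE": wmape}

metrics = forecast_metrics(actual=[520, 10, 300], forecast=[500, 20, 290])
# MAE ≈ 13.3 calls, RMSE ≈ 14.1 calls, MAPE ≈ 35.7%, WMAPE ≈ 4.8%
```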

Classification Metrics

- Accuracy — The percentage of correct predictions. Misleading when classes are imbalanced—an attrition model that always predicts "will stay" achieves 85% accuracy if only 15% of agents attrite, but it is completely useless for its intended purpose.

- Precision — Of all predictions of a given class, how many were correct? High precision in attrition prediction means that when the model flags an agent as at-risk, that agent usually does attrite.

- Recall — Of all actual instances of a given class, how many did the model catch? High recall means the model identifies most agents who will actually attrite, even if it also flags some who will not.

- F1 Score — The harmonic mean of precision and recall, useful when both false positives and false negatives carry meaningful costs.
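The attrition example can be reproduced with a small metrics function. The hypothetical data below has 100 agents, 15 of whom attrite. A model that always predicts "will stay" scores 85% accuracy but zero precision, recall, and F1; a second hypothetical model that flags 20 agents, 12 of whom do leave, has only slightly higher accuracy (89%) but far more useful precision (60%) and recall (80%).

```python
def classification_metrics(actual, predicted, positive=True):
    """Accuracy, precision, recall and F1 for one positive class (e.g. 'will attrite')."""
    pairs = list(zip(actual, predicted))
    tp = sum(a == positive and p == positive for a, p in pairs)
    fp = sum(a != positive and p == positive for a, p in pairs)
    fn = sum(a == positive and p != positive for a, p in pairs)
    accuracy = sum(a == p for a, p in pairs) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0   # no positive predictions -> 0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

actual = [True] * 15 + [False] * 85                 # True = agent attrited
always_stay = [False] * 100
flagging_model = [True] * 12 + [False] * 3 + [True] * 8 + [False] * 77

baseline_scores = classification_metrics(actual, always_stay)
# accuracy 0.85, precision 0.0, recall 0.0, f1 0.0
model_scores = classification_metrics(actual, flagging_model)
# accuracy 0.89, precision 0.6, recall 0.8, f1 ≈ 0.686
```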

WFM teams should establish baseline performance using simple methods (e.g., "same day last week" for forecasting, or always predicting the most common class for classification) and measure whether ML models improve meaningfully over those baselines. A sophisticated model that barely outperforms a naive approach may not justify its complexity and maintenance cost.
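A baseline comparison takes only a few lines. The sketch below builds the "same day last week" (seasonal naive) forecast from two weeks of hypothetical daily volumes, scores it and a hypothetical candidate model's forecast with MAE, and reports the model's percentage improvement over the baseline.

```python
def seasonal_naive(history, season_length=7):
    """'Same day last week': repeat the most recent full season as the forecast."""
    return history[-season_length:]

def mae(actual, forecast):
    """Mean absolute error between paired actual and forecast values."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)

history = [1200, 950, 980, 1010, 1100, 600, 400,     # week 1 (hypothetical)
           1250, 970, 990, 1000, 1120, 610, 420]     # week 2
actual_next_week = [1300, 1000, 1000, 1020, 1150, 620, 410]
model_forecast = [1280, 990, 1005, 1015, 1140, 615, 415]   # hypothetical ML model output

baseline_mae = mae(actual_next_week, seasonal_naive(history))      # ≈ 22.9 calls
model_mae = mae(actual_next_week, model_forecast)                  # ≈ 8.6 calls
improvement_pct = 100 * (baseline_mae - model_mae) / baseline_mae  # ≈ 62.5%
```

In practice the comparison should span many weeks rather than one, since a single week can flatter either method.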

ML in WFM Platforms

Modern WFM platforms incorporate machine learning across multiple functional areas. Understanding where ML operates within these platforms helps practitioners set appropriate expectations and ask informed questions during vendor evaluations.

Forecasting engines — Most enterprise WFM platforms now offer ML-based forecasting alongside or replacing traditional statistical methods. These engines typically combine multiple model types (ensemble approaches) and automatically select the best-performing model for each queue or work type. Some platforms support external feature injection, allowing planners to incorporate marketing calendars, known events, or weather data.

Auto-scheduling and optimization — ML-driven scheduling engines use learned demand patterns, employee preferences, skill proficiency models, and constraint sets to generate schedules that balance service level targets, labor costs, and employee satisfaction. Some platforms apply reinforcement learning to iteratively improve schedule quality.

Quality and interaction scoring — Platforms use ML to automatically evaluate customer interactions, assigning quality scores, detecting compliance issues, and identifying coaching opportunities. These systems rely on natural language processing, sentiment analysis, and classification models trained on human-evaluated interactions.

Demand prediction and capacity planning — Long-range ML models project volume trends across months or years, incorporating macroeconomic indicators, business growth projections, and seasonal patterns. These feed into strategic capacity planning and hiring models.

Real-time decisioning — Some platforms apply ML in real-time operations: dynamic routing, intraday reforecasting, and automated schedule adjustments. These systems must balance prediction accuracy with computational speed, as decisions must be made in seconds or minutes.

Main article: AI Scaffolding Framework

WFM teams evaluating ML capabilities in platforms should ask: What data does the model require? How often is it retrained? What accuracy improvements does it deliver over simpler methods? Can it explain its predictions? What happens when the model encounters conditions it was not trained on?

Getting Started

For WFM teams beginning to work with machine learning—whether evaluating vendor capabilities, partnering with internal data science teams, or building analytical skills—the following practical steps provide a foundation:

Start with your data. ML is only as good as the data it learns from. Before pursuing any ML initiative, audit your data: Is historical volume data clean and complete? Are agent attributes current? Are quality scores consistently applied? Data quality issues that seem minor to a planner (a few missing intervals, inconsistent call type coding) can significantly degrade model performance.
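Part of a data audit can be automated. The sketch below lists expected half-hour intervals that have no record, the kind of gap that is easy to miss by eye but can distort training data. The 30-minute interval length and the sample timestamps are assumptions; a fuller audit would also check for duplicates, zero-volume intervals, and inconsistent call-type coding.

```python
from datetime import datetime, timedelta

def missing_intervals(timestamps, start, end, step=timedelta(minutes=30)):
    """Return expected interval start times in [start, end) with no matching record."""
    present = set(timestamps)
    missing = []
    t = start
    while t < end:
        if t not in present:
            missing.append(t)
        t += step
    return missing

records = [datetime(2024, 3, 4, 8, 0), datetime(2024, 3, 4, 8, 30), datetime(2024, 3, 4, 9, 30)]
gaps = missing_intervals(records, start=datetime(2024, 3, 4, 8, 0), end=datetime(2024, 3, 4, 10, 0))
# gaps → [datetime(2024, 3, 4, 9, 0)]
```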

Define the problem precisely. "Use AI to improve forecasting" is not a problem statement. "Reduce WMAPE for our top-10 queues from 12% to 8% at the daily level" is. Clear problem definitions enable meaningful model evaluation and prevent scope creep.

Establish baselines. Before evaluating any ML solution, document the performance of current methods. If existing exponential smoothing forecasts achieve 10% MAPE, a proposed ML model must demonstrably and consistently outperform that baseline to justify adoption.

Pilot on one use case. Select a single, well-defined use case with clean data, a clear success metric, and manageable scope. Volume forecasting for a single high-volume queue is a common starting point. Avoid attempting to apply ML across all queues, channels, and planning horizons simultaneously.

Partner with data science. WFM practitioners bring irreplaceable domain expertise: knowledge of business cycles, understanding of operational constraints, awareness of data quality issues, and intuition about what drives demand. Data scientists bring algorithmic expertise and engineering skills. The most effective ML implementations in WFM result from close collaboration between these disciplines, not from either working in isolation.

Plan for ongoing maintenance. ML models degrade over time as business conditions, customer behavior, and operational processes change. Any ML deployment requires a plan for monitoring performance, triggering retraining, and managing the transition when models need to be updated or replaced.

Main article: AI in Workforce Management

See Also

- Artificial Intelligence Fundamentals

- AI in Workforce Management

- Machine Learning for Volume Forecasting

- Reinforcement Learning for Schedule Optimization

- Probabilistic Forecasting

- Deterministic vs Probabilistic Models

- ARIMA Models

- Exponential Smoothing

- Forecasting Methods

- Scheduling Methods

- AI Scaffolding Framework

- Speech Analytics

- Conversational AI

- Sentiment Analysis in Customer Service

- Algorithmic Fairness and Bias in Workforce Scheduling

- Model Evaluation and Validation

References

- [1] Mitchell, Tom M. Machine Learning. McGraw-Hill, 1997. ISBN 978-0070428072.

- [2] McKinsey & Company. "AI-driven operations forecasting in data-light environments." 2024. https://www.mckinsey.com/capabilities/operations/our-insights/ai-driven-operations-forecasting-in-data-light-environments

- [3] Géron, Aurélien. Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 3rd ed. O'Reilly Media, 2022. ISBN 978-1098125974.

- [4] Hyndman, Rob J., and George Athanasopoulos. Forecasting: Principles and Practice, 3rd ed. OTexts, 2021. ISBN 978-0987507136. Available at https://otexts.com/fpp3/

- [5] Raghavan, Manish, et al. "Mitigating Bias in Algorithmic Hiring: Evaluating Claims and Practices." Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (FAT*), 2020, pp. 469–481.

- [6] Makridakis, Spyros, et al. "M5 accuracy competition: Results, findings, and conclusions." International Journal of Forecasting, Vol. 38, No. 4, 2022, pp. 1346–1364.