Reinforcement Learning for Schedule Optimization

Reinforcement learning for schedule optimization is an application of reinforcement learning (RL) — a branch of machine learning in which an agent learns to make decisions by interacting with an environment and receiving reward signals — to the problem of generating and adjusting workforce schedules that maximize defined operational objectives. Traditional Schedule Optimization approaches rely on mixed-integer linear programming (MILP) or heuristic search; RL-based approaches treat scheduling as a sequential decision problem, learning shift assignment policies through simulated or historical experience. Sutton and Barto's foundational text defines reinforcement learning as the computational approach to goal-directed learning from interaction, in which an agent learns a policy mapping states to actions through trial-and-error with delayed reward.[1] Lykourentzou et al. (2021) demonstrated that deep RL approaches can achieve near-optimal schedule assignments in complex multi-skill environments that defeat classical optimization methods due to combinatorial explosion.[2] This article examines the RL formulation, current capabilities, limitations, and maturity implications of RL-based schedule optimization.

Reinforcement Learning Foundations

Core Framework

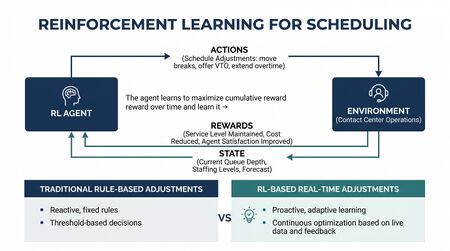

A reinforcement learning system consists of four components:

- Agent — the decision-making entity (in scheduling, the scheduling algorithm)

- Environment — the system the agent interacts with (the contact center, including demand patterns, agent constraints, and operational rules)

- State — the current condition of the environment observable by the agent (current schedule, unassigned shifts, agent availability, forecast demand)

- Reward signal — a scalar feedback value the agent receives after each action (e.g., improvement in Service Level coverage, reduction in Occupancy variance, schedule cost)

The agent's goal is to learn a policy π(a|s), a mapping from states to actions (here, individual scheduling decisions such as assigning a shift to an agent), that maximizes expected cumulative reward over time. In scheduling contexts, the reward function encodes the objectives the schedule is intended to achieve.
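The components above can be sketched as a small interaction loop. This is a minimal illustration under stated assumptions, not a production design: the `ToySchedulingEnv` class, the three shift templates in `SHIFTS`, and the reward (reduction in understaffed interval-slots) are all hypothetical, and a random policy stands in for a learned one.

```python
import random
from dataclasses import dataclass

# Hypothetical shift templates: each covers a set of interval indices.
SHIFTS = {"early": (0, 1), "mid": (1, 2), "late": (2, 3)}


def understaffing(gaps):
    """Total understaffed interval-slots (overstaffing is ignored here)."""
    return sum(max(g, 0) for g in gaps)


@dataclass(frozen=True)
class State:
    # Remaining gap per interval: forecast requirement minus staff scheduled.
    gaps: tuple


class ToySchedulingEnv:
    """Environment: holds the demand requirement and applies assignments."""

    def __init__(self, required):
        self.required = tuple(required)
        self.state = State(self.required)

    def reset(self):
        self.state = State(self.required)
        return self.state

    def step(self, action):
        before = understaffing(self.state.gaps)
        gaps = list(self.state.gaps)
        for i in SHIFTS[action]:
            gaps[i] -= 1  # one more agent covers interval i
        self.state = State(tuple(gaps))
        after = understaffing(gaps)
        reward = before - after  # scalar reward: understaffing removed
        return self.state, reward, after == 0


def random_policy(state, rng):
    """Placeholder policy: ignores the state and picks any shift template."""
    return rng.choice(sorted(SHIFTS))


def run_episode(env, policy, max_steps=20, seed=0):
    rng = random.Random(seed)
    state = env.reset()
    total_reward = 0
    for _ in range(max_steps):
        action = policy(state, rng)
        state, reward, done = env.step(action)
        total_reward += reward
        if done:
            break
    return total_reward, state
```

In this sketch the cumulative reward of an episode equals the understaffing removed between the initial and final state, which is the quantity a learned policy would try to maximize in as few assignments as possible.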

Scheduling as a Sequential Decision Problem

Traditional Schedule Optimization treats scheduling as a one-shot optimization: given demand requirements and agent constraints, find the assignment that minimizes cost (or maximizes coverage) subject to all constraints. This formulation works well when the problem is static and fully specified.

RL formulates scheduling as a sequential decision problem: at each step, the scheduler assigns one shift or makes one scheduling decision, observing the consequences before making the next decision. This formulation accommodates:

- Dynamic demand that evolves during the scheduling horizon

- Uncertainty in agent availability (unplanned absences, voluntary time off requests)

- Non-stationary constraint sets (rule changes, new agent hires, skill acquisitions)

- Multi-objective tradeoffs (service level vs. cost vs. agent preference satisfaction)
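To illustrate the sequential formulation, the sketch below makes one assignment per step and re-reads the state before each decision, so an absence that appears mid-horizon is absorbed without rebuilding the whole schedule. It is a toy under stated assumptions: the shift templates, the `absences` mapping, and the hand-written greedy rule (a stand-in for a learned policy) are hypothetical.

```python
# Hypothetical shift templates: each covers a set of interval indices.
SHIFTS = {"early": (0, 1), "mid": (1, 2), "late": (2, 3)}


def understaffing(gaps):
    return sum(max(g, 0) for g in gaps)


def apply_shift(gaps, shift, delta=-1):
    """Add (delta=-1) or remove (delta=+1) one agent on a shift template."""
    covered = SHIFTS[shift]
    return tuple(g + delta if i in covered else g for i, g in enumerate(gaps))


def greedy_policy(gaps):
    """Stand-in for a learned policy: pick the shift removing most understaffing."""
    def gain(shift):
        return understaffing(gaps) - understaffing(apply_shift(gaps, shift))
    return max(sorted(SHIFTS), key=gain)  # ties resolved by name order


def schedule(required, absences=None, horizon=6):
    """Run `horizon` decision steps; `absences` maps step -> shift lost at that step."""
    absences = absences or {}
    gaps = tuple(required)
    log = []
    for step in range(horizon):
        if step in absences:
            # Coverage from one agent on this shift disappears.
            gaps = apply_shift(gaps, absences[step], delta=+1)
        if understaffing(gaps) == 0:
            continue  # nothing to fix at this step
        action = greedy_policy(gaps)  # decision uses the updated state
        gaps = apply_shift(gaps, action)
        log.append(action)
    return log, gaps


print(schedule((1, 1, 1, 1)))
print(schedule((1, 1, 1, 1), absences={4: "late"}))
```

The second call adds one extra assignment at step 4 in response to the absence, while the assignments already made at steps 0 and 1 are left untouched.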

Reward Function Design

The reward function is the most critical design decision in RL-based scheduling. A poorly designed reward function produces policies that maximize the metric while violating unstated objectives — a form of "reward hacking." Common reward components in WFM scheduling include:

- Service Level coverage achieved versus target

- Occupancy within target range (penalizing both under- and over-occupancy)

- Schedule cost (total hours, overtime, premium shift costs)

- Agent preference satisfaction (adherence to preference bids)

- Constraint violations (labor law compliance, minimum rest periods)

Lykourentzou et al. (2021) used a composite reward combining service level achievement, fairness (measured as variance in shift quality across agents), and constraint satisfaction, demonstrating that the composite reward produced more balanced schedules than single-objective formulations.[3]
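A composite reward built from the components listed above might look like the following sketch. The weights, the 80% service level target, and the 75–85% occupancy band are illustrative assumptions only; they are not taken from Lykourentzou et al. or from any particular WFM product, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ScheduleOutcome:
    service_level: float    # fraction of contacts answered within threshold, 0..1
    occupancy: float        # fraction of logged-in time spent handling work, 0..1
    cost: float             # total schedule cost in currency units
    preferences_met: float  # fraction of agent preference bids honored, 0..1
    violations: int         # count of rule violations (e.g. minimum rest)


@dataclass
class RewardWeights:
    # Hypothetical weights; in practice these need tuning per operation.
    service_level: float = 10.0
    occupancy: float = 5.0
    cost: float = 0.001
    preference: float = 2.0
    violation: float = 50.0


def composite_reward(outcome, weights=None, sl_target=0.80, occ_range=(0.75, 0.85)):
    """Scalar reward: penalties for misses and cost, a bonus for preferences."""
    w = weights or RewardWeights()
    # Only shortfall against the service level target is penalized.
    sl_shortfall = max(0.0, sl_target - outcome.service_level)
    # Occupancy is penalized on either side of the target band.
    low, high = occ_range
    occ_deviation = max(0.0, low - outcome.occupancy, outcome.occupancy - high)
    return (
        -w.service_level * sl_shortfall
        - w.occupancy * occ_deviation
        - w.cost * outcome.cost
        + w.preference * outcome.preferences_met
        - w.violation * outcome.violations
    )
```

Because every component is collapsed into one scalar, the relative weights decide which objective the policy will sacrifice first; a violation weight that is small relative to the service level weight, for example, invites the reward hacking described above.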

Deep RL Architectures for Scheduling

Policy Gradient Methods

Policy gradient methods (REINFORCE, Proximal Policy Optimization) directly optimize a parameterized policy by gradient ascent on expected reward. In scheduling, the policy is typically parameterized as a neural network that maps schedule state representations to shift assignment probabilities. Policy gradient methods handle continuous action spaces (e.g., shift start times on a continuous scale) and are well-suited to large state spaces.
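As a rough illustration of the gradient-ascent step, the sketch below applies a REINFORCE-style update to a softmax policy over three shift templates. It is deliberately simplified and rests on several assumptions: the policy is a table of preferences rather than a neural network, there is a single fixed state, the per-template rewards are made-up numbers, and a running-average baseline is added to reduce variance.

```python
import math
import random


def softmax(prefs):
    m = max(prefs)  # subtract the max for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    z = sum(exps)
    return [e / z for e in exps]


def reinforce_step(prefs, action, advantage, lr):
    """One gradient-ascent step on log pi(action), scaled by the advantage.

    For a softmax policy, d/d prefs[k] of log pi(action) is
    1[k == action] - pi(k).
    """
    probs = softmax(prefs)
    return [
        p + lr * advantage * ((1.0 if k == action else 0.0) - probs[k])
        for k, p in enumerate(prefs)
    ]


def train(rewards, steps=3000, lr=0.1, seed=0):
    """Learn shift-assignment probabilities from sampled rewards."""
    rng = random.Random(seed)
    prefs = [0.0] * len(rewards)
    baseline, n = 0.0, 0
    for _ in range(steps):
        probs = softmax(prefs)
        action = rng.choices(range(len(prefs)), weights=probs)[0]
        reward = rewards[action]
        prefs = reinforce_step(prefs, action, reward - baseline, lr)
        n += 1
        baseline += (reward - baseline) / n  # running mean of observed rewards
    return softmax(prefs)


# Hypothetical coverage scores for the templates "early", "mid", "late".
probabilities = train([0.2, 1.0, 0.5])
```

In a real scheduler the preference table would be replaced by a network that takes the schedule state as input, but the update direction has the same form.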

Actor-Critic Methods

Actor-critic architectures maintain two networks: an actor (the policy) and a critic (an estimate of expected future reward given the current state). The critic reduces variance in policy gradient estimates, accelerating training. Proximal Policy Optimization (PPO), a widely used actor-critic variant, has been applied in workforce scheduling research due to its stability in high-dimensional scheduling environments.
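Two small calculations sit at the center of this setup and can be sketched directly: the critic's one-step advantage estimate and PPO's clipped surrogate term. The function names and default values below (a discount of 0.99 and a clip range of 0.2) are illustrative assumptions; a full implementation would compute these over batches of trajectories inside a deep learning framework.

```python
def td_advantage(reward, value_s, value_next, gamma=0.99, done=False):
    """One-step advantage: how much better the outcome was than the critic expected.

    value_s and value_next are the critic's estimates for the current and
    next state; the next-state value is dropped when the episode has ended.
    """
    target = reward + (0.0 if done else gamma * value_next)
    return target - value_s


def ppo_clipped_term(new_prob, old_prob, advantage, clip_eps=0.2):
    """PPO clipped surrogate for a single (state, action) sample.

    The probability ratio is clipped to [1 - clip_eps, 1 + clip_eps] and the
    smaller of the clipped and unclipped terms is kept, which removes the
    incentive to move the policy far from the one that collected the data.
    """
    ratio = new_prob / old_prob
    clipped_ratio = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    return min(ratio * advantage, clipped_ratio * advantage)


# A shift assignment that turned out better than the critic predicted:
advantage = td_advantage(reward=1.0, value_s=0.5, value_next=0.0, done=True)
# The actor doubled its probability, but the objective only credits a 20% increase.
objective = ppo_clipped_term(new_prob=0.6, old_prob=0.3, advantage=advantage)
```

The clipping is one reason PPO is often described as stable: a single batch of scheduling episodes can only shift assignment probabilities by a bounded amount.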

Attention-Based Architectures

Transformer-based attention mechanisms have been applied to scheduling RL to capture dependencies between shift assignments — for example, the interaction between one agent's schedule and another's in multi-skill environments. Attention mechanisms enable the policy network to focus on the most relevant parts of the current scheduling state when making each assignment decision.
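The core operation can be sketched as single-query scaled dot-product attention over per-agent feature vectors. This is an assumption-laden toy: the two-dimensional "skill" features are invented, keys and values are the raw features rather than learned projections, and a real architecture would use multiple heads and layers.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    z = sum(exps)
    return [e / z for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query.

    Returns the attention weights over the items and the weighted
    combination of their value vectors (the context vector).
    """
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys
    ]
    weights = softmax(scores)
    dim = len(values[0])
    context = [
        sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)
    ]
    return weights, context


# Hypothetical features: [has_billing_skill, has_tech_skill] per agent.
agents = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
# Query representing an open billing shift to be filled.
weights, context = attention([1.0, 0.0], keys=agents, values=agents)
```

Here the two billing-skilled agents receive equal, larger weights than the tech-only agent, which is the sense in which the policy "focuses" on the parts of the state relevant to the current assignment.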

Comparison with Classical Methods

| Dimension | MILP / Classical | Reinforcement Learning |
|---|---|---|
| Optimality guarantee | Yes (with sufficient solver time) | No (learned policy is near-optimal, not provably optimal) |
| Scalability | Limited (combinatorial explosion beyond ~500 agents) | Better (policy generalizes; compute scales with network size, not problem size) |
| Adaptability to dynamic demand | Poor (requires re-solve) | Good (policy responds to updated state without re-solve) |
| Constraint handling | Explicit, provably enforced | Requires reward engineering; constraint violations possible |
| Training data requirement | None (algorithmic) | High (requires simulation environment or historical data) |
| Interpretability | High (LP dual variables explain trade-offs) | Low (neural network policy not easily explained) |
| Multi-objective flexibility | Requires weighted objective (pre-specified) | Reward function can be adjusted dynamically |

Current State and Limitations

RL-based schedule optimization is an active research area, but as of 2025 it remains predominantly experimental rather than an established part of production WFM deployments. Key limitations include:

Training Instability

RL training can be sensitive to hyperparameter choices, reward function design, and environment simulation fidelity. Policies that perform well in simulation may degrade when deployed against real demand patterns or agent constraints not represented in the training environment.

Constraint Guarantee Gap

Classical MILP can provably guarantee constraint satisfaction (labor law compliance, minimum rest periods) because constraints are encoded algebraically and every feasible solution satisfies them. RL policies trained with penalty terms for constraint violations may still produce occasional violations, particularly when the penalty weights are too small relative to the other reward components. For regulatory-sensitive scheduling constraints, this gap is a significant deployment barrier.
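A small arithmetic sketch shows how an insufficient penalty weight leaves a violating assignment as the reward-maximizing choice. The candidate shifts, their coverage gains, and the penalty weights are made-up numbers, and the selection rule is a simple stand-in for what a trained policy would tend to converge toward under the same reward.

```python
def penalized_reward(coverage_gain, rest_violations, penalty_weight):
    """Soft-constraint reward: a violation lowers the reward but is not forbidden."""
    return coverage_gain - penalty_weight * rest_violations


def preferred_action(candidates, penalty_weight):
    """Return the candidate with the highest penalized reward."""
    return max(
        candidates,
        key=lambda name: penalized_reward(*candidates[name], penalty_weight),
    )


# Hypothetical candidates: name -> (coverage gain, minimum-rest violations).
candidates = {
    "compliant_shift": (1.0, 0),
    "short_rest_shift": (3.0, 1),
}

# Penalty too small: 3.0 - 1.5 = 1.5 beats 1.0, so the violation is chosen.
print(preferred_action(candidates, penalty_weight=1.5))
# Penalty large enough: 3.0 - 5.0 = -2.0 loses to 1.0.
print(preferred_action(candidates, penalty_weight=5.0))
```

In this toy the break-even penalty is 2.0, but it depends on the size of the coverage gain; a weight that suffices on ordinary days can be outweighed on a day with an unusually large staffing gap, which is why penalty-based training offers no guarantee of the kind an algebraic constraint does.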

Simulation Fidelity Requirement

Training requires a simulation environment that accurately models demand variability, agent behavior (absence, preference adherence), and operational dynamics. Building a high-fidelity simulation of a contact center environment is itself a substantial engineering effort.

Interpretability

Workforce managers and labor relations stakeholders typically require explanations for scheduling decisions — particularly when schedules allocate less preferred shifts. Neural network policies do not provide natural explanations for their decisions, creating adoption friction in unionized or preference-bidding environments.

Practical Applications and Emerging Use Cases

Despite current limitations, RL is being applied in several WFM-adjacent scheduling domains:

- Intraday reoptimization — RL agents that adjust schedules in response to real-time demand deviations, triggering Real-Time Schedule Adjustment actions faster than human intraday managers

- Multi-Skill Scheduling optimization — RL approaches to the multi-skill assignment problem have shown advantages over MILP at large scale, where classical solvers time out

- Agent preference learning — RL systems that learn agent preferences over shift attributes from historical bidding and satisfaction data, embedding learned preferences in scheduling objectives

- Capacity portfolio optimization — RL for optimizing the mix of full-time, part-time, and contract labor in Capacity Planning Methods, treating staffing mix as a sequential decision problem

Maturity Model Considerations

RL-based schedule optimization is firmly a Level 5 capability. It requires:

- High-fidelity historical data for simulation environment construction (L3 prerequisite)

- Robust schedule optimization infrastructure to serve as a baseline (L4 prerequisite)

- Machine learning operations (MLOps) capabilities to train, validate, and monitor RL policies in production

- Organizational tolerance for explainability gaps in scheduling decisions

Organizations at L1–L4 are better served by advancing classical Schedule Optimization and Schedule Generation capabilities before investing in RL-based approaches.

| Maturity Level | RL Scheduling Posture |
|---|---|
| L1–L2 | Not applicable; manual or basic rule-based scheduling |
| L3 | Classical optimization deployed; awareness of RL research emerging |
| L4 | Advanced MILP or heuristic optimization; simulation infrastructure potentially in place |
| L5 | RL-based intraday reoptimization possible; experimental multi-skill RL scheduling; continuous policy improvement cycle |

Related Concepts

- Schedule Optimization

- Schedule Generation

- Multi-Skill Scheduling

- Real-Time Schedule Adjustment

- Simulation Software

- Forecasting Methods

- Service Level

- Occupancy

- Interior Optimum

- WFM Labs Maturity Model

- AI Scaffolding Framework

References

- [1] Sutton, R. S., & Barto, A. G. (2018). Reinforcement Learning: An Introduction (2nd ed.). MIT Press.

- [2] Lykourentzou, I., Antoniou, G., Naudet, Y., & Lepouras, G. (2021). Workforce Management via Deep Reinforcement Learning. Proceedings of the AAAI Conference on Artificial Intelligence.

- [3] Lykourentzou, I., Antoniou, G., Naudet, Y., & Lepouras, G. (2021). Workforce Management via Deep Reinforcement Learning. Proceedings of the AAAI Conference on Artificial Intelligence.