AI Agent Capacity Planning

AI agent capacity planning is the discipline of determining how many AI agent instances, how much infrastructure, and what concurrency configuration an operation requires to meet service demand at target quality levels. Where traditional capacity planning uses Erlang C and Erlang-A to translate offered load into human headcount, AI capacity planning translates offered load into throughput requirements measured in tokens per second, concurrent sessions, and queue depth. The distinction matters: human capacity is discrete (one agent handles one interaction at a time in most voice environments), while AI capacity is continuous and elastic — a single model endpoint can serve dozens to hundreds of concurrent sessions, but degrades nonlinearly under load. This article provides the operational mathematics, scaling models, and infrastructure sizing methods required to plan AI agent capacity within blended human-AI operations.

For the broader planning context, see Agentic AI Workforce Planning. For cost implications of capacity decisions, see AI Cost Modeling for Workforce Operations. For governance over capacity changes, see AI Workforce Governance Frameworks.

Throughput Modeling

AI agent throughput is measured differently than human agent throughput. Human agents process one interaction at a time (in voice) or 2–4 simultaneously (in chat), with throughput expressed as contacts per hour. AI agents process interactions as token streams, with throughput governed by model inference speed, context window utilization, and tool-call latency.

Core Throughput Metrics

| Metric | Definition | Typical Range |

|---|---|---|

| Tokens per second (TPS) | Output token generation rate per model instance | 30–150 TPS (cloud API); 10–80 TPS (self-hosted) |

| Time to first token (TTFT) | Latency from request submission to first output token | 200ms–2s (cloud); 100ms–5s (self-hosted, load-dependent) |

| Concurrent sessions | Simultaneous interactions being processed | 1–500+ per endpoint (model and infrastructure dependent) |

| Tokens per interaction | Total input + output tokens consumed per customer interaction | 800–8,000 (simple FAQ); 5,000–50,000 (complex resolution) |

| Tool-call overhead | Additional latency from external API calls (CRM, knowledge base) | 200ms–3s per tool call; 1–8 tool calls per interaction |

The fundamental throughput equation for an AI agent endpoint:

effective_interactions_per_minute = ((TPS × 60) / avg_output_tokens_per_interaction) × concurrency_factor

Where concurrency_factor accounts for the fact that token generation is not the only bottleneck — prefill computation, tool calls, and memory retrieval consume cycles that reduce effective throughput below the theoretical maximum.
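As a minimal sketch, the throughput equation above can be written as a small Python function. The function name and the example inputs (100 TPS, 800 output tokens per interaction, a concurrency factor of 0.6) are illustrative assumptions, not measurements from any particular endpoint.

```python
def effective_interactions_per_minute(
    tps: float,
    avg_output_tokens_per_interaction: float,
    concurrency_factor: float,
) -> float:
    """Apply the throughput equation: (TPS * 60) / avg output tokens * concurrency factor."""
    if avg_output_tokens_per_interaction <= 0:
        raise ValueError("avg_output_tokens_per_interaction must be positive")
    # Theoretical ceiling if token generation were the only bottleneck.
    theoretical_max = (tps * 60.0) / avg_output_tokens_per_interaction
    # Discount for prefill, tool calls, and memory retrieval.
    return theoretical_max * concurrency_factor


# Illustrative (assumed) inputs, not vendor figures.
example_rate = effective_interactions_per_minute(100.0, 800.0, 0.6)
print(f"{example_rate:.2f} interactions per minute")
```

In practice the concurrency factor would be calibrated from observed throughput rather than chosen up front.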

Prefill vs Generation Bottleneck

Modern transformer models have two distinct computation phases: prefill (processing all input tokens in parallel to build the KV cache) and generation (producing output tokens autoregressively, one at a time). Capacity planning must account for both:

- Prefill-bound workloads occur when interactions have long input contexts (lengthy conversation histories, large knowledge retrieval chunks). Prefill time scales roughly linearly with input length and consumes GPU memory proportional to context size.

- Generation-bound workloads occur when interactions require long outputs (detailed explanations, multi-step troubleshooting guides). Generation time scales linearly with output length but can be parallelized across batched requests.

Most contact center AI workloads are mixed: moderate input contexts (1,000–4,000 tokens including system prompt, conversation history, and retrieved knowledge) with moderate output lengths (200–1,500 tokens). The practical implication is that capacity planning cannot use a single TPS number — it must model the full request lifecycle including prefill latency, generation time, and tool-call overhead.
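One way to model the full request lifecycle described above is to sum its phases. The sketch below is a simplification under stated assumptions: prefill and generation are each treated as a constant tokens-per-second rate, tool calls run sequentially, and every name and rate (`prefill_tps`, `generation_tps`, `fixed_overhead_s`, `tool_call_latency_s`) is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RequestProfile:
    input_tokens: int    # system prompt + history + retrieved knowledge
    output_tokens: int   # generated response tokens
    tool_calls: int      # external API calls (CRM, knowledge base)


def request_latency_seconds(
    profile: RequestProfile,
    prefill_tps: float,          # assumed input-processing rate
    generation_tps: float,       # assumed output-generation rate
    fixed_overhead_s: float,     # assumed network and scheduling overhead
    tool_call_latency_s: float,  # assumed average latency per tool call
) -> dict:
    """Break one request into prefill, generation, and tool-call time."""
    prefill = profile.input_tokens / prefill_tps
    generation = profile.output_tokens / generation_tps
    tools = profile.tool_calls * tool_call_latency_s
    total = fixed_overhead_s + prefill + generation + tools
    return {"prefill": prefill, "generation": generation, "tools": tools, "total": total}


# Illustrative chat-like profile with assumed rates.
chat = RequestProfile(input_tokens=2_500, output_tokens=800, tool_calls=3)
breakdown = request_latency_seconds(chat, 5_000.0, 80.0, 0.2, 0.5)
print(breakdown)
```

Comparing the prefill and generation entries of the breakdown indicates which phase dominates a given workload.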

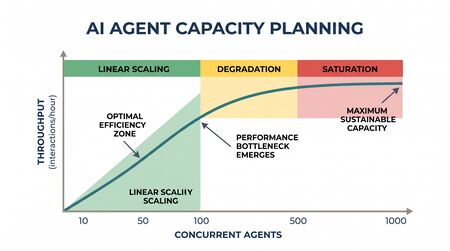

Scaling Curves

AI agent capacity does not scale linearly with infrastructure investment. Understanding the shape of the scaling curve is essential for avoiding both under-provisioning (degraded service) and over-provisioning (wasted spend).

Linear Scaling

Linear scaling occurs when each additional unit of compute produces a proportional increase in throughput. This holds approximately when:

- Requests are independent (no shared state between sessions)

- Infrastructure is horizontally scalable (adding GPU instances or API rate limit increases)

- Load is below the saturation point of any shared resource (network, database, rate limiter)

In practice, cloud-hosted LLM APIs approximate linear scaling within contracted rate limits. If an API endpoint handles 100 concurrent sessions and the operation needs 200, doubling the endpoint allocation (or using two endpoints behind a load balancer) roughly doubles capacity.

Sublinear Scaling

Sublinear scaling occurs when throughput increases less than proportionally with added compute. Common causes in AI agent operations:

- Shared backend contention: All AI agent instances query the same CRM, knowledge base, or order management system. As AI concurrency increases, backend response times degrade, increasing tool-call overhead and reducing effective throughput.

- Context window pressure: Longer conversations consume more memory per session, reducing the number of concurrent sessions a single GPU can support. A model serving 100 short-context sessions might only support 40 long-context sessions on the same hardware.

- Batching efficiency loss: GPU inference is most efficient when batching similar-length requests. High variance in request lengths reduces batching efficiency and effective throughput.

Step-Function Scaling

Step-function scaling occurs when capacity can only increase in discrete jumps — adding a new GPU node, provisioning a new model replica, or upgrading to a higher API tier. Between steps, the operation runs at fixed capacity regardless of demand. This is the dominant scaling pattern for self-hosted models: a single 8×H100 node might support 200 concurrent sessions, but there is no way to get 250 — the operation must provision a second node (jumping to 400 theoretical capacity) or accept the constraint.

Cloud API services smooth out step functions by abstracting infrastructure, but rate limits and tier structures reintroduce step-like behavior at the organizational level.
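The step-function pattern can be illustrated with a short sketch that rounds required capacity up to whole nodes. The 200-sessions-per-node figure is the illustrative value from the text above; the function name and return fields are assumptions.

```python
def provision_nodes(required_sessions: int, sessions_per_node: int) -> dict:
    """Round required concurrency up to whole nodes and report the idle headroom."""
    if sessions_per_node <= 0:
        raise ValueError("sessions_per_node must be positive")
    # Integer ceiling division avoids floating-point rounding.
    nodes = -(-required_sessions // sessions_per_node)
    capacity = nodes * sessions_per_node
    return {
        "nodes": nodes,
        "capacity": capacity,
        "idle_sessions": capacity - required_sessions,
        "utilization": required_sessions / capacity if capacity else 0.0,
    }


# Needing 250 sessions at 200 per node forces a jump to two nodes.
plan = provision_nodes(250, 200)
print(plan)
```

The idle-session count makes the cost of each step visible: capacity bought but not yet needed.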

The Capacity Equation

The core capacity equation for AI agents parallels the human staffing equation but substitutes throughput for handle time:

AI_agents_needed = (volume × (1 - human_share)) / throughput_per_agent

Where:

- volume = total offered interactions per planning period

- human_share = fraction of interactions routed to human agents (1 minus containment rate)

- throughput_per_agent = interactions per agent instance per planning period at target quality
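The capacity equation and its inputs can be sketched as follows. Rounding up to a whole instance is an added assumption, and the throughput of 480 interactions per instance per day is a hypothetical value chosen only to make the example concrete.

```python
import math


def ai_agents_needed(volume: float, human_share: float, throughput_per_agent: float) -> int:
    """Steady-state AI instances: (volume * (1 - human_share)) / throughput_per_agent."""
    if not 0.0 <= human_share <= 1.0:
        raise ValueError("human_share must be between 0 and 1")
    if throughput_per_agent <= 0:
        raise ValueError("throughput_per_agent must be positive")
    ai_volume = volume * (1.0 - human_share)
    # Round up: a fractional instance cannot be provisioned (assumption).
    return math.ceil(ai_volume / throughput_per_agent)


# 10,000 interactions/day, 30% routed to humans, assumed 480 interactions/instance/day.
instances = ai_agents_needed(10_000, 0.30, 480)
print(instances)
```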

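This equation yields the steady-state requirement. Real operations must add capacity buffers on top of it for burst demand and for the latency cost of running at high utilization; the next two subsections cover each in turn.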

Burst Capacity

Contact centers experience predictable intraday volume peaks (Monday morning, post-holiday, billing cycle dates) and unpredictable spikes (product outages, viral social media events). AI capacity planning must account for burst demand.

For cloud-hosted AI agents, burst capacity is managed through:

- Auto-scaling policies: Pre-configured scaling rules that add model instances when queue depth or latency exceeds thresholds. Typical scale-up latency: 30–120 seconds for additional API capacity, 5–15 minutes for new self-hosted instances.

- Burst rate limits: Many cloud providers offer burst capacity above contracted steady-state limits, typically at premium pricing. Planning must determine the maximum burst multiplier needed (commonly 2–3× steady state).

- Queue buffering: Unlike human agents where queue time directly impacts Service Level, AI agents can absorb brief queuing with minimal customer impact if the wait is seconds rather than minutes. A 5-second queue wait for an AI agent may be imperceptible, whereas a 5-second additional wait for a human agent compounds an already-long hold time.

For self-hosted deployments, burst capacity requires pre-provisioned headroom — GPU instances running below capacity during normal periods to absorb spikes. The cost of idle capacity must be weighed against the cost of degraded service during peaks.

The Latency-Throughput Tradeoff

Increasing throughput (more concurrent sessions per instance) increases latency (each session gets a smaller share of compute). This tradeoff is governed by:

effective_latency = base_latency × (1 + (utilization / (1 - utilization))^α)

Where α typically ranges from 0.5 to 1.5 depending on the inference engine's batching efficiency. Evaluated over that range, the formula gives roughly 2.5–4.6× base latency at 70% utilization and 4–28× at 90%. For customer-facing AI agents, acceptable generation latency is typically under 500ms time-to-first-token and under 100ms per subsequent token (perceived as natural typing speed in chat). This latency constraint bounds the maximum utilization, just as Occupancy targets of 85–90% bound human agent utilization to prevent burnout.

Practical utilization targets for AI agent infrastructure:

- Chat/messaging: 70–80% utilization (latency somewhat tolerant)

- Real-time voice: 50–60% utilization (strict latency requirements for natural conversation)

- Async processing (email, back-office): 85–95% utilization (latency tolerant)
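To make the tradeoff concrete, the sketch below evaluates the latency formula above and inverts it to find the highest utilization that stays within a latency budget. The function names are assumptions, and the budget is expressed as a multiple of base latency.

```python
def latency_multiplier(utilization: float, alpha: float) -> float:
    """effective_latency / base_latency = 1 + (u / (1 - u)) ** alpha."""
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    return 1.0 + (utilization / (1.0 - utilization)) ** alpha


def max_utilization_for_budget(max_multiplier: float, alpha: float) -> float:
    """Invert the formula: highest utilization whose multiplier stays within budget."""
    if max_multiplier < 1.0:
        raise ValueError("max_multiplier must be at least 1")
    ratio = (max_multiplier - 1.0) ** (1.0 / alpha)  # ratio = u / (1 - u)
    return ratio / (1.0 + ratio)


# Tabulate the multiplier across the alpha range given in the text.
for u in (0.5, 0.7, 0.9):
    row = ", ".join(f"alpha={a}: {latency_multiplier(u, a):.2f}x" for a in (0.5, 1.0, 1.5))
    print(f"utilization {u:.0%} -> {row}")

# Highest utilization that keeps latency within 3x base when alpha = 1.
print(f"{max_utilization_for_budget(3.0, 1.0):.1%}")
```

Running the inversion per channel is one way to check the utilization targets listed above against a measured α.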

Infrastructure Sizing

Infrastructure sizing translates throughput requirements into specific resource allocations.

Cloud API Sizing

For organizations consuming AI agents via cloud APIs (OpenAI, Anthropic, Google, AWS Bedrock):

| Resource | Sizing Parameter | Planning Method |

|---|---|---|

| API rate limits | Requests per minute (RPM), tokens per minute (TPM) | TPM = peak-hour volume × tokens_per_interaction / 60 × burst_multiplier |

| Tier selection | Standard vs batch vs provisioned throughput | Cost optimization: batch for async, provisioned for sustained high volume |

| Redundancy | Multi-provider failover | Primary + fallback provider for >99.9% uptime targets |

| Region selection | Latency to end users and backend systems | Co-locate with primary data center; <100ms round-trip |
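The rate-limit row of the table can be turned into a quick sizing check. This sketch assumes peak volume is expressed per hour, so dividing by 60 yields tokens per minute. The inputs (840 peak-hour interactions, 3,740 average tokens per interaction, 1.5× burst) are derived from the worked example later in this article, and the tier sizes are hypothetical.

```python
def required_tpm(
    peak_hour_interactions: float,
    tokens_per_interaction: float,
    burst_multiplier: float,
) -> float:
    """Tokens-per-minute limit needed: peak-hour volume * tokens / 60 * burst."""
    return peak_hour_interactions * tokens_per_interaction / 60.0 * burst_multiplier


def smallest_sufficient_tier(required: float, tiers_tpm: list) -> int:
    """Return the smallest tier that covers the requirement (tier list is hypothetical)."""
    for tier in sorted(tiers_tpm):
        if tier >= required:
            return tier
    raise ValueError("no tier covers the required TPM")


needed = required_tpm(840, 3_740, 1.5)
tier = smallest_sufficient_tier(needed, [50_000, 100_000, 250_000])
print(f"required {needed:,.0f} TPM, tier {tier:,} TPM")
```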

Self-Hosted Sizing

For organizations running models on their own infrastructure:

| Resource | Sizing Rule | Notes |

|---|---|---|

| GPU memory | Model parameters × 2 bytes (FP16) + KV cache per session | 70B model ≈ 140GB; plus ~2GB per concurrent long-context session |

| GPU compute | Concurrent sessions × tokens_per_second_per_session | Scale horizontally with tensor parallelism (within node) or pipeline parallelism (across nodes) |

| CPU/RAM | Model loading, preprocessing, tool-call orchestration | 2–4× GPU memory in system RAM; 8–16 CPU cores per GPU |

| Network | Inter-node communication for distributed inference | 400Gbps+ InfiniBand for multi-node; 10Gbps minimum for single-node |

| Storage | Model weights, logs, conversation state | 500GB–2TB SSD per node; fast read for model loading |
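The GPU memory rule in the table lends itself to a back-of-envelope calculation. The sketch below assumes FP16 weights (2 bytes per parameter), a flat KV-cache cost per session, and a fixed reserve for activations and runtime overhead. The 64GB reserve and the helper names are assumptions; the 8 × 80GB figure matches an 8×H100 node.

```python
import math


def weights_gb(params_billions: float, bytes_per_param: float = 2.0) -> float:
    """Model weight memory in GB: billions of parameters * bytes per parameter."""
    return params_billions * bytes_per_param


def max_concurrent_sessions(
    total_gpu_memory_gb: float,
    params_billions: float,
    kv_cache_gb_per_session: float,
    reserved_gb: float = 0.0,  # assumed headroom for activations and runtime
) -> int:
    """Sessions that fit in memory left over after weights and reserve."""
    free_gb = total_gpu_memory_gb - reserved_gb - weights_gb(params_billions)
    if free_gb <= 0:
        return 0
    return math.floor(free_gb / kv_cache_gb_per_session)


# 8 GPUs * 80GB, 70B model in FP16, ~2GB KV cache per long-context session.
sessions = max_concurrent_sessions(8 * 80, 70, 2.0, reserved_gb=64)
print(weights_gb(70), sessions)
```

This is a memory-only bound; compute and latency limits may cap concurrency below it.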

Erlang vs Throughput Engineering: A Comparison

| Dimension | Human Capacity Planning (Erlang) | AI Capacity Planning (Throughput Engineering) |

|---|---|---|

| Unit of capacity | Agent (1 person) | Endpoint (variable concurrency) |

| Concurrency | 1 (voice), 2–4 (chat) | 10–500+ per instance |

| Handle time distribution | Log-normal, 3–15 min | Bimodal: fast resolution (10–30s) or complex (2–10 min) |

| Scaling granularity | 1 FTE at a time | Continuous (cloud API) or step-function (self-hosted) |

| Scale-up latency | Weeks to months (hire, train) | Seconds to minutes (auto-scale) |

| Scale-down latency | Months (notice periods, severance) | Seconds (reduce instances) |

| Quality degradation under load | Gradual (fatigue, rushing) | Threshold (works fine until saturation, then rapid degradation) |

| Failure mode | Absenteeism, attrition, errors | Outage, rate limiting, hallucination spike |

| Primary constraint | Labor market, budget | Compute, rate limits, backend throughput |

The most important distinction: human agents degrade gradually under overload (longer handle times, lower quality, higher after-call work), while AI agents exhibit cliff behavior — they perform normally up to a utilization threshold, then degrade rapidly as requests queue, context windows are exceeded, or rate limits are hit. This cliff behavior demands more aggressive capacity buffers than traditional Erlang models recommend.

Worked Example: Sizing an AI Agent Fleet

Scenario: A mid-size contact center receives 10,000 daily interactions across chat and email. The target AI containment rate is 70%. The operation uses a cloud-hosted LLM API.

Step 1: Determine AI-handled volume

AI_volume = 10,000 × 0.70 = 7,000 interactions/day

Step 2: Characterize interaction profiles

| Channel | Share of AI Volume | Avg Input Tokens | Avg Output Tokens | Avg Tool Calls | Avg Duration |

|---|---|---|---|---|---|

| Chat | 60% (4,200) | 2,500 | 800 | 3 | 45 seconds |

| Email | 40% (2,800) | 3,200 | 1,200 | 2 | 8 seconds (async) |

Step 3: Calculate token throughput requirement

- Chat: 4,200 × (2,500 + 800) = 13.86M tokens/day = 160 tokens/second average

- Email: 2,800 × (3,200 + 1,200) = 12.32M tokens/day = 143 tokens/second average

- Total: ~303 tokens/second average

Step 4: Apply peak multiplier

- Peak hour handles approximately 12% of daily volume (a common planning assumption for contact center intraday distributions; substitute the operation's own arrival pattern where available)

- Peak throughput: 303 × (0.12 × 24) = 303 × 2.88 = 873 tokens/second during peak

- With 1.5× burst buffer: 1,310 tokens/second peak capacity required
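Steps 3 and 4 can be reproduced with a few lines of Python. The function names are illustrative; the inputs are the figures from the scenario and the interaction profile table.

```python
SECONDS_PER_DAY = 86_400


def average_tokens_per_second(daily_interactions: int, input_tokens: int, output_tokens: int) -> float:
    """Daily token volume spread evenly over 24 hours."""
    return daily_interactions * (input_tokens + output_tokens) / SECONDS_PER_DAY


def peak_tokens_per_second(avg_tps: float, peak_hour_share: float, burst_buffer: float) -> float:
    """Scale the daily average to the peak hour, then add the burst buffer."""
    # peak_hour_share * 24 is the peak hour's rate as a multiple of the average hour.
    return avg_tps * (peak_hour_share * 24) * burst_buffer


chat_tps = average_tokens_per_second(4_200, 2_500, 800)
email_tps = average_tokens_per_second(2_800, 3_200, 1_200)
total_tps = chat_tps + email_tps
peak_tps = peak_tokens_per_second(total_tps, 0.12, 1.5)
print(f"chat {chat_tps:.0f}, email {email_tps:.0f}, total {total_tps:.0f}, peak {peak_tps:.0f}")
```

Without intermediate rounding the peak figure comes out just under the 1,310 tokens/second shown in Step 4; the difference is rounding only.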

Step 5: Determine concurrent session requirement

- Peak hour chat volume: 4,200 × 0.12 = 504 chats in peak hour

- Average chat duration 45 seconds → concurrent sessions at any moment: 504 × (45/3600) = ~6.3 concurrent chat sessions

- Email is async — batch processing, no concurrency constraint

- With burst buffer: target 15–20 concurrent chat sessions capacity
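The concurrency figure in Step 5 is an application of Little's law (average number in system = arrival rate × average time in system). A minimal sketch, with an illustrative function name:

```python
def average_concurrency(arrivals_per_hour: float, avg_duration_seconds: float) -> float:
    """Little's law: arrival rate (per second) * average session duration (seconds)."""
    return arrivals_per_hour / 3600.0 * avg_duration_seconds


peak_hour_chats = 4_200 * 0.12  # 12% of daily chat volume lands in the peak hour
concurrent_chats = average_concurrency(peak_hour_chats, 45)
print(f"{concurrent_chats:.1f} concurrent chat sessions on average")
```

This is an average over the peak hour. The 15–20 session target in Step 5 corresponds to roughly 2.4 to 3.2 times that average, leaving room for arrivals that cluster within the hour.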

Step 6: Size the API contract

- Required: 1,310 tokens/second peak = ~78,600 tokens/minute

- Typical cloud API tier: 100,000 TPM tier covers peak with 27% headroom

- Backup provider at 50,000 TPM for failover

Step 7: Estimate infrastructure cost

- At $3.00/1M input tokens and $15.00/1M output tokens (GPT-4 class):

- Daily input cost: (4,200 × 2,500 + 2,800 × 3,200) / 1M × $3.00 = $58.38

- Daily output cost: (4,200 × 800 + 2,800 × 1,200) / 1M × $15.00 = $100.80

- Daily total: ~$159.18 → Monthly: ~$4,775

- See AI Cost Modeling for Workforce Operations for full cost analysis including oversight and error remediation.
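The Step 7 arithmetic can be checked with the sketch below. The per-token prices are the illustrative figures from the text, the 30-day month is an assumption, and the tuple layout is a convenience chosen for this example.

```python
def daily_token_cost(
    profiles: list,                   # (daily_interactions, input_tokens, output_tokens)
    input_price_per_million: float,
    output_price_per_million: float,
) -> dict:
    """Daily input, output, and total API cost for a set of channel profiles."""
    input_tokens = sum(n * tokens_in for n, tokens_in, _ in profiles)
    output_tokens = sum(n * tokens_out for n, _, tokens_out in profiles)
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return {"input": input_cost, "output": output_cost, "total": input_cost + output_cost}


channels = [
    (4_200, 2_500, 800),    # chat
    (2_800, 3_200, 1_200),  # email
]
cost = daily_token_cost(channels, 3.00, 15.00)
monthly = cost["total"] * 30  # assumes a 30-day month
print(f"daily ${cost['total']:.2f}, monthly ${monthly:,.2f}")
```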

Step 8: Define monitoring thresholds

| Metric | Green | Yellow | Red (Auto-scale or Alert) |

|---|---|---|---|

| Concurrent sessions | <15 | 15–18 | >18 |

| Time to first token | <500ms | 500ms–1.5s | >1.5s |

| Error rate | <1% | 1–3% | >3% |

| Queue depth | 0 | 1–3 | >3 |
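The threshold table can be encoded directly so that a monitoring job classifies each metric and reports the worst status. This is a sketch only: the metric keys, the `Status` enum, and the rule that the overall status equals the worst individual status are assumptions, and boundary values (for example exactly 18 sessions) are treated as yellow.

```python
from enum import IntEnum


class Status(IntEnum):
    GREEN = 0
    YELLOW = 1
    RED = 2


# (yellow_from, red_above) per metric, taken from the table above.
THRESHOLDS = {
    "concurrent_sessions": (15, 18),
    "ttft_seconds": (0.5, 1.5),
    "error_rate": (0.01, 0.03),
    "queue_depth": (1, 3),
}


def classify(metric: str, value: float) -> Status:
    """Map one metric reading to green, yellow, or red."""
    yellow_from, red_above = THRESHOLDS[metric]
    if value > red_above:
        return Status.RED
    if value >= yellow_from:
        return Status.YELLOW
    return Status.GREEN


def overall_status(snapshot: dict) -> Status:
    """Worst status across all metrics in a snapshot (assumed aggregation rule)."""
    return max(classify(metric, value) for metric, value in snapshot.items())


snapshot = {"concurrent_sessions": 12, "ttft_seconds": 0.9, "error_rate": 0.004, "queue_depth": 0}
print(overall_status(snapshot).name)
```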

WFM Applications

AI agent capacity planning integrates directly into the WFM lifecycle:

- Forecasting: AI containment rates must be forecasted alongside volume. A 5-point swing in containment (65% to 70%) moves 500 interactions per day between the human and AI pools for every 10,000 daily interactions. Forecasting Methods must incorporate containment trend analysis.

- Scheduling: Human schedules must account for AI capacity — if AI infrastructure has a planned maintenance window, human staffing must cover the gap. This creates a new dependency in Schedule Optimization.

- Real-time management: Real-Time Operations must monitor AI capacity metrics (latency, error rate, queue depth) alongside human metrics (adherence, occupancy). When AI capacity degrades, real-time analysts must activate contingency plans — either scaling AI infrastructure or redirecting volume to human agents.

- Long-range planning: Long-Run Workforce Sizing must model AI capacity growth alongside headcount planning. Model transitions (e.g., migrating from one model generation to the next) create capacity planning events analogous to technology migrations. See Digital Worker Lifecycle Management for version transition planning.

Maturity Model Position

Within the WFM Labs Maturity Model:

- Level 2 (Developing): AI capacity managed reactively — infrastructure sized based on vendor defaults with manual scaling during incidents.

- Level 3 (Advanced): Proactive capacity planning with throughput modeling, defined burst capacity, and latency targets. AI metrics integrated into WFM dashboards.

- Level 4 (Strategic): Predictive capacity management — AI throughput requirements forecasted alongside volume, auto-scaling policies tuned to demand patterns, joint human-AI capacity optimization.

- Level 5 (Transformative): Autonomous capacity orchestration across model types, providers, and deployment modes. Self-healing infrastructure with automatic failover, model substitution, and cost-optimized routing.

See Also

- AI Scaffolding Framework

- Agentic AI Workforce Planning

- Human AI Blended Staffing Models

- Three-Pool Architecture

- Cognitive Portfolio Model (N*)

- Workforce Cost Modeling

- AI Agent Orchestration for WFM

- AI Cost Modeling for Workforce Operations

- Digital Worker Lifecycle Management

- Capacity Planning Methods