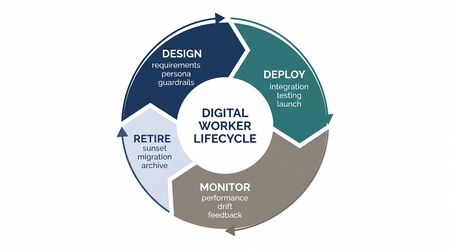

Digital Worker Lifecycle Management

Digital worker lifecycle management governs how AI agents are versioned, deployed, monitored, maintained, and retired across their operational lifespan. Unlike human agents — who are hired, trained, perform, and eventually leave — AI agents follow software lifecycles: they are built, tested, deployed, monitored, patched, and deprecated. This distinction has profound implications for workforce management. Human attrition is unpredictable and emotionally complex; AI agent retirement is planned and reversible. Human onboarding takes weeks; AI agent deployment takes minutes. Human performance is coached over months; AI agent performance is tuned in hours. But the software lifecycle introduces its own complexities: version management across concurrent deployments, regression risks from updates, dependency chains with external systems, and the operational challenge of managing transitions between model generations.

For governance of lifecycle decisions, see AI Workforce Governance Frameworks. For quality monitoring throughout the lifecycle, see AI Agent Quality Assurance. For capacity implications of lifecycle events, see AI Agent Capacity Planning.

Lifecycle Phases

| Phase | Human Agent Equivalent | Duration | Key Activities |
|---|---|---|---|
| Design | Job design, role definition | 1–4 weeks | Define scope, select model, design prompts, configure tools, set guardrails |
| Development | Recruiting, selection | 2–8 weeks | Build prompts, integrate tools, develop evaluation suites, create test cases |
| Testing | Training, nesting | 1–4 weeks | Automated evaluation, human evaluation, adversarial testing, compliance review |
| Deployment | Go-live, probation | Days (typically 4–7 from canary start to full production) | Canary release → A/B test → graduated rollout → full production |
| Operation | Productive employment | Weeks to months | Monitoring, quality assurance, continuous optimization, incident response |
| Maintenance | Ongoing development, coaching | Continuous | Prompt updates, knowledge base refresh, guardrail adjustments, model patches |
| Transition | Role change, team transfer | Days to weeks | Model generation migration, scope expansion/contraction, architecture changes |
| Retirement | Separation, offboarding | Days to weeks | Graceful deprecation, traffic migration, knowledge preservation, decommissioning |

Versioning

AI agents are composite systems with multiple independently versioned components. Managing these versions — and understanding which combination is running in production — is essential for debugging, rollback, and compliance.

Version Components

| Component | What Changes | Versioning Approach | Change Frequency |
|---|---|---|---|
| System prompt | Instructions, personality, guardrails, output format | Semantic versioning (major.minor.patch) | Weekly to monthly |
| Model version | Underlying LLM (GPT-4o-2025-05-01, Claude Sonnet 4, etc.) | Provider version string | Monthly to quarterly (provider-initiated) |
| Tool definitions | Available tools, API schemas, parameter descriptions | Hash-based versioning tied to API contracts | Monthly |
| Knowledge base | Retrieval corpus, FAQ content, policy documents | Timestamp + content hash | Daily to weekly |
| Guardrail configuration | Confidence thresholds, content filters, action limits | Semantic versioning | Monthly |
| Orchestration logic | Routing rules, escalation criteria, fallback chains | Semantic versioning | Quarterly |

Composite Version Identifier

A production AI agent's full version is a composite:

agent_version = {prompt: v2.3.1, model: claude-sonnet-4-20250514, tools: v1.8, kb: 2026-05-14T06:00Z, guardrails: v1.2.0, orchestration: v3.1}

Every interaction log must record the composite version identifier. When a quality issue is detected, the composite version enables precise root cause analysis — was it the new prompt, the model update, or the knowledge base refresh?
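A minimal Python sketch of the composite identifier, assuming the six components listed above are stored as plain strings. The class name `CompositeVersion` and the 12-character SHA-256 fingerprint are illustrative choices, not a standard. `diff` lists the components that changed between two versions, which is the starting point for the root cause question above.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class CompositeVersion:
    """Hypothetical record of every independently versioned component of one agent."""
    prompt: str
    model: str
    tools: str
    kb: str
    guardrails: str
    orchestration: str

    def fingerprint(self) -> str:
        """Short, stable hash of all components, small enough to tag every interaction log."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

    def diff(self, other: "CompositeVersion") -> dict:
        """Components whose values differ: the candidate root causes of a quality change."""
        mine, theirs = asdict(self), asdict(other)
        return {name: (mine[name], theirs[name]) for name in mine if mine[name] != theirs[name]}


# Usage: a prompt patch and a knowledge base refresh shipped between two releases.
before = CompositeVersion("v2.3.0", "claude-sonnet-4-20250514", "v1.8",
                          "2026-05-13T06:00Z", "v1.2.0", "v3.1")
after = CompositeVersion("v2.3.1", "claude-sonnet-4-20250514", "v1.8",
                         "2026-05-14T06:00Z", "v1.2.0", "v3.1")
print(after.diff(before))
# {'prompt': ('v2.3.1', 'v2.3.0'), 'kb': ('2026-05-14T06:00Z', '2026-05-13T06:00Z')}
```

Logging the fingerprint alongside the full identifier keeps per-interaction logs compact while still allowing an exact lookup of the component set.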

Version Compatibility Matrix

Not all component versions are compatible. A prompt written for one model may underperform on another. Tools designed for a specific orchestration version may fail on newer versions. Maintain a compatibility matrix:

| Prompt Version | Compatible Models | Compatible Tool Versions | Notes |
|---|---|---|---|
| v2.3.x | Claude Sonnet 4, GPT-4o-2025-05+ | v1.7–v1.9 | Current production |
| v2.2.x | Claude 3.5 Sonnet, GPT-4o-2024-11+ | v1.5–v1.8 | Deprecated, rollback target |
| v3.0.0-beta | Claude Sonnet 4, GPT-4.1 | v2.0-beta | Testing only |
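The matrix can be enforced as a pre-deployment check. This sketch assumes prompt rows are keyed by major.minor series, tool ranges are inclusive, and model entries are matched as literal labels following the table (open-ended entries such as `GPT-4o-2025-05+` would need real range logic in practice). `MATRIX`, `parse`, and `is_compatible` are hypothetical names.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


def parse(version: str) -> Tuple[int, ...]:
    """'v1.8' -> (1, 8); pre-release suffixes such as '-beta' are ignored."""
    core = version.lstrip("v").split("-")[0]
    return tuple(int(part) for part in core.split("."))


@dataclass(frozen=True)
class MatrixRow:
    prompt_series: str       # "v2.3" matches any v2.3.x prompt
    models: FrozenSet[str]   # model labels, matched literally (simplification)
    tools_min: str           # inclusive lower bound
    tools_max: str           # inclusive upper bound
    status: str


MATRIX = [
    MatrixRow("v2.3", frozenset({"Claude Sonnet 4", "GPT-4o-2025-05+"}), "v1.7", "v1.9", "production"),
    MatrixRow("v2.2", frozenset({"Claude 3.5 Sonnet", "GPT-4o-2024-11+"}), "v1.5", "v1.8", "rollback target"),
    MatrixRow("v3.0", frozenset({"Claude Sonnet 4", "GPT-4.1"}), "v2.0", "v2.0", "testing only"),
]


def is_compatible(prompt: str, model: str, tools: str) -> bool:
    for row in MATRIX:
        if parse(prompt)[:2] == parse(row.prompt_series):
            tools_major_minor = parse(tools)[:2]
            return (model in row.models
                    and parse(row.tools_min) <= tools_major_minor <= parse(row.tools_max))
    # Unknown prompt series: block deployment until the matrix is updated.
    return False
```

Failing closed on an unknown prompt series forces the matrix to be updated before a new combination can reach production.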

Deployment

AI agent deployment follows software deployment best practices, adapted for the operational reality that deployment failures directly affect live customer interactions.

Canary Release

Process:

- Deploy the new version to a single endpoint handling 1–5% of traffic

- Monitor for 24–72 hours against canary-specific dashboards

- Compare canary metrics against the production baseline:

  - Quality score: must be within 0.2 points of baseline

  - Error rate: must not exceed baseline by more than 0.5 percentage points

  - Customer satisfaction: must not decline by more than 2%

  - Latency: must not increase by more than 20%

- If all metrics pass: proceed to graduated rollout

- If any metric fails: roll back the canary and investigate the root cause

Canary kill criteria (automatic rollback):

- Error rate >5% (regardless of baseline)

- Any compliance violation not present in baseline

- Customer complaint rate >2× baseline rate

- Latency >3× baseline (indicating infrastructure issue)
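The promotion gates and kill criteria above reduce to a single decision function. This is a sketch under stated assumptions: rates are fractions, the 0.5% error-rate allowance is read as 0.5 percentage points, the 2% CSAT allowance as a relative decline, the quality tolerance as one-sided (a canary scoring above baseline is not penalized), and `new_compliance_violations` counts violations absent from the baseline. `Metrics` and `evaluate_canary` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    quality: float                      # automated quality score (points)
    error_rate: float                   # fraction, e.g. 0.012 = 1.2%
    csat: float                         # customer satisfaction score
    latency_ms: float                   # e.g. p95 end-to-end latency
    complaint_rate: float               # complaints per interaction
    new_compliance_violations: int = 0  # violations not seen in baseline


def evaluate_canary(canary: Metrics, baseline: Metrics) -> str:
    """Return 'kill' (automatic rollback), 'rollback' (a gate failed) or 'proceed'."""
    # Kill criteria are checked first and override the comparison gates.
    if (canary.error_rate > 0.05
            or canary.new_compliance_violations > 0
            or canary.complaint_rate > 2 * baseline.complaint_rate
            or canary.latency_ms > 3 * baseline.latency_ms):
        return "kill"
    gates = [
        baseline.quality - canary.quality <= 0.2,          # within 0.2 points
        canary.error_rate - baseline.error_rate <= 0.005,  # at most +0.5 percentage points
        canary.csat >= 0.98 * baseline.csat,               # at most a 2% decline
        canary.latency_ms <= 1.2 * baseline.latency_ms,    # at most a 20% increase
    ]
    return "proceed" if all(gates) else "rollback"
```

Separating "kill" from "rollback" mirrors the text: both end the canary, but only the kill criteria should trigger without a human decision.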

Graduated Rollout

After canary validation:

- 5% → 10% (24 hours, metrics check)

- 10% → 25% (24 hours, metrics check)

- 25% → 50% (24 hours, metrics check)

- 50% → 100% (maintain old version as rollback target for 7 days)

Total deployment timeline: 4–7 days from canary start to full production. This may feel slow by software standards but is fast by workforce standards: hiring and training a human agent takes 4–12 weeks.
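A small scheduling sketch reproduces the timeline arithmetic. It assumes the canary is validated after `canary_hours`, each traffic level is held for 24 hours before the next metrics check, and the old version is retained for 7 days after reaching 100%; the function name and defaults are illustrative. With a 24-hour canary, full production arrives 96 hours (4 days) after canary start; a 72-hour canary pushes it to 6 days.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def rollout_plan(canary_start: datetime, canary_hours: int = 48,
                 hold_hours: int = 24, retain_days: int = 7
                 ) -> Tuple[List[Tuple[datetime, int]], datetime]:
    """Return ([(time, traffic_pct), ...], rollback_target_retained_until)."""
    steps = []
    t = canary_start + timedelta(hours=canary_hours)  # canary has passed its gates
    for pct in (10, 25, 50, 100):
        steps.append((t, pct))
        t += timedelta(hours=hold_hours)  # hold, then metrics check before the next step
    full_production = steps[-1][0]
    return steps, full_production + timedelta(days=retain_days)


steps, retain_until = rollout_plan(datetime(2026, 5, 1, 9, 0), canary_hours=24)
for when, pct in steps:
    print(when.isoformat(), f"{pct}%")
print("keep rollback target until", retain_until.isoformat())
```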

Rollback Procedures

Rollback must be fast and reliable. Target: rollback to previous version within 5 minutes of decision.

Rollback prerequisites:

- Previous version maintained in a ready-to-deploy state for a minimum of 7 days after full deployment

- Routing infrastructure supports instant traffic switching between versions

- All external integrations (tools, knowledge base) compatible with previous version

- Rollback authority delegated to on-call operations (no committee approval needed for emergency rollback)

Post-rollback actions:

- Confirm rollback success (traffic on previous version, metrics recovering)

- Capture diagnostic data from failed version

- Root cause analysis within 48 hours

- Governance committee review before redeploying the fixed version

Monitoring

Operational monitoring covers the metrics that determine whether an AI agent is performing its workforce role effectively.

Real-Time Dashboards

| Metric Category | Specific Metrics | Refresh Rate | Alert Threshold |
|---|---|---|---|
| Performance | Latency (TTFT, total), throughput (interactions/min), queue depth | 10 seconds | Latency >2× baseline; queue depth >5 |
| Quality | Automated quality score, deterministic check pass rate, escalation rate | 1 minute | Quality <3.5; check fail rate >2% |
| Cost | Token cost/interaction, total hourly spend, cost trend | 5 minutes | Cost >2× baseline; hourly spend >budget |
| Containment | Containment rate, escalation rate by reason, self-serve completion | 5 minutes | Containment drop >5 points from target |
| Customer | CSAT (rolling), re-contact rate, complaint rate | 15 minutes | CSAT <4.0; re-contact >15% |
| Infrastructure | API error rate, rate limit utilization, provider status | 10 seconds | Error rate >1%; rate limit >80% |
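Threshold alerts are simple to express as data. In this sketch all rates are fractions (so "containment drop >5 points" becomes 0.05), `reference` carries the baselines, hourly budget, and containment target, and the rule names are hypothetical. A production system would also evaluate each rule at its own refresh rate, which this sketch ignores.

```python
from typing import Callable, Dict, List, Tuple

Rule = Tuple[str, Callable[[Dict[str, float], Dict[str, float]], bool]]

# (alert name, condition on current metrics m and reference values r)
RULES: List[Rule] = [
    ("latency",         lambda m, r: m["latency_ms"] > 2 * r["latency_ms"]),
    ("queue_depth",     lambda m, r: m["queue_depth"] > 5),
    ("quality",         lambda m, r: m["quality"] < 3.5),
    ("check_fail_rate", lambda m, r: m["check_fail_rate"] > 0.02),
    ("cost",            lambda m, r: m["cost_per_interaction"] > 2 * r["cost_per_interaction"]),
    ("hourly_spend",    lambda m, r: m["hourly_spend"] > r["hourly_budget"]),
    ("containment",     lambda m, r: r["containment_target"] - m["containment"] > 0.05),
    ("csat",            lambda m, r: m["csat"] < 4.0),
    ("recontact",       lambda m, r: m["recontact_rate"] > 0.15),
    ("api_error_rate",  lambda m, r: m["api_error_rate"] > 0.01),
    ("rate_limit",      lambda m, r: m["rate_limit_utilization"] > 0.80),
]


def fired_alerts(metrics: Dict[str, float], reference: Dict[str, float]) -> List[str]:
    """Names of every rule whose threshold is crossed, in table order."""
    return [name for name, rule in RULES if rule(metrics, reference)]
```

Keeping thresholds in a table-shaped list makes it straightforward to review them against the dashboard specification above.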

Anomaly Detection

Beyond threshold-based alerts, deploy statistical anomaly detection:

- Isolation forests on multi-dimensional metric vectors (detect unusual metric combinations that individual thresholds miss)

- CUSUM charts for detecting gradual shifts in quality or cost metrics

- Seasonal decomposition to separate expected daily/weekly patterns from genuine anomalies
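Of the three techniques, CUSUM is the easiest to sketch without libraries. The version below is a standard two-sided tabular CUSUM: `target` would be the baseline mean, while the slack `k` (often about half the shift worth detecting) and the decision threshold `h` are tuning assumptions to set per metric, in the metric's own units. It does not cover isolation forests or seasonal decomposition.

```python
from typing import List, Sequence, Tuple


def cusum(values: Sequence[float], target: float, k: float, h: float) -> List[Tuple[int, str]]:
    """Two-sided tabular CUSUM; returns (index, 'up' | 'down') for each alarm."""
    s_hi = s_lo = 0.0
    alarms = []
    for i, x in enumerate(values):
        s_hi = max(0.0, s_hi + (x - target - k))  # accumulates evidence of an upward shift
        s_lo = max(0.0, s_lo + (target - k - x))  # accumulates evidence of a downward shift
        if s_hi > h or s_lo > h:
            alarms.append((i, "up" if s_hi > h else "down"))
            s_hi = s_lo = 0.0  # restart so later shifts are detected independently
    return alarms


# Daily quality scores drifting down: none of these would trip the
# "quality <3.5" dashboard threshold, but the cumulative sum flags the drift.
daily_quality = [4.2, 4.21, 4.19, 4.2, 4.1, 4.05, 4.05, 4.0, 4.0]
print(cusum(daily_quality, target=4.2, k=0.05, h=0.3))  # [(7, 'down')]
```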

Log Management

Every AI agent interaction generates logs that serve multiple purposes:

- Operational: Real-time debugging, incident investigation

- Quality: Input to automated evaluation, human sampling, trend analysis

- Compliance: Audit trail for regulatory requirements, customer data requests

- Financial: Token-level cost accounting, budget tracking

- Improvement: Training data for evaluation models, prompt optimization insights

Retention requirements: operational logs 30 days; quality and compliance logs per regulatory requirement (typically 3–7 years); financial logs per accounting standards.

SLA Management

AI agents require formal SLAs analogous to human agent performance expectations, but structured around infrastructure and software metrics rather than behavioral metrics.

AI Agent SLA Framework

| SLA Dimension | Target | Measurement | Consequence of Breach |
|---|---|---|---|
| Availability | 99.9% uptime during operating hours | (total_minutes - downtime_minutes) / total_minutes | Failover to backup provider or human agents |
| Response time | <2 seconds time-to-first-token for 95th percentile | Latency percentile tracking | Auto-scale or route to faster model tier |
| Quality | Composite score ≥4.0, no dimension <3.0 | Automated + human-calibrated scoring | Escalate to governance; potential rollback |
| Containment | Within ±5 points of target rate | Daily containment calculation | Prompt investigation, capacity plan adjustment |
| Accuracy | <2% factual error rate | Knowledge base verification + human audit | Knowledge base update, guardrail tightening |
| Compliance | Zero regulatory violations | Deterministic compliance checking | Immediate investigation; potential circuit breaker |
| Cost | Within ±10% of budgeted cost per interaction | Token-level cost tracking | Model tier review, prompt optimization |
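The SLA table can be checked mechanically at period end. This sketch assumes rates are fractions, "response time" uses a nearest-rank 95th percentile of time-to-first-token samples, and the cost band is ±10% of budget; the input dictionary keys are hypothetical. As a worked number, 12 operating hours a day over a 30-day month is 21,600 minutes, so 99.9% availability allows at most 21.6 minutes of downtime.

```python
import math
from typing import Dict, Sequence


def availability(total_minutes: float, downtime_minutes: float) -> float:
    return (total_minutes - downtime_minutes) / total_minutes


def p95(samples: Sequence[float]) -> float:
    """Nearest-rank 95th percentile (integer arithmetic avoids float rounding)."""
    ordered = sorted(samples)
    rank = math.ceil(95 * len(ordered) / 100)
    return ordered[rank - 1]


def sla_report(p: Dict) -> Dict[str, bool]:
    """True if the SLA dimension was met for the period, False if breached."""
    return {
        "availability": availability(p["total_minutes"], p["downtime_minutes"]) >= 0.999,
        "response_time": p95(p["ttft_seconds"]) < 2.0,
        "quality": p["quality"] >= 4.0 and min(p["dimension_scores"]) >= 3.0,
        "containment": abs(p["containment"] - p["containment_target"]) <= 0.05,
        "accuracy": p["factual_error_rate"] < 0.02,
        "compliance": p["compliance_violations"] == 0,
        "cost": abs(p["cost_per_interaction"] / p["budgeted_cost"] - 1) <= 0.10,
    }
```

The resulting dictionary maps directly onto the "actual vs target" line of the monthly report described next.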

SLA Reporting

Monthly SLA report to governance committee:

- Actual vs target for each SLA dimension

- Breach count, duration, and root cause for each breach

- Trend analysis (improving, stable, degrading)

- Remediation actions taken and their effectiveness

- Forward-looking risk assessment (upcoming model changes, volume forecasts, infrastructure changes)

Incident Management

AI agent incidents differ from human agent performance issues in speed, scale, and reversibility.

Incident Classification

| Severity | Definition | Example | Response Time | Resolution Target |
|---|---|---|---|---|
| P1 — Critical | AI agents unable to serve customers; widespread customer impact | Platform outage, model returning errors, compliance violation affecting all interactions | 5 minutes | 1 hour |
| P2 — Major | Significant quality degradation affecting >10% of interactions | Quality score drop >1 point, containment rate drop >15 points, cost spike >3× | 15 minutes | 4 hours |
| P3 — Moderate | Noticeable degradation affecting 1–10% of interactions | Elevated error rate, minor quality drift, specific interaction type failing | 1 hour | 24 hours |
| P4 — Minor | Minor issue with minimal customer impact | Slightly elevated latency, cosmetic formatting issue, non-critical tool failure | 4 hours | 72 hours |
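A first-pass severity classifier helps on-call staff triage consistently. This sketch encodes only the quantitative triggers in the table (affected share, quality drop, containment drop, cost multiple) plus two P1 flags; judging "widespread customer impact" still needs a human. The `Signal` fields are assumptions for illustration, and times are expressed in minutes.

```python
from dataclasses import dataclass

# Severity -> (response time, resolution target), both in minutes.
TARGETS = {"P1": (5, 60), "P2": (15, 240), "P3": (60, 1440), "P4": (240, 4320)}


@dataclass
class Signal:
    serving: bool                           # can AI agents serve customers at all?
    affected_fraction: float                # share of interactions affected, 0 to 1
    quality_drop: float = 0.0               # points below baseline
    containment_drop: float = 0.0           # percentage points below target
    cost_multiple: float = 1.0              # current cost / baseline cost
    compliance_violation_all: bool = False  # violation affecting all interactions


def classify(sig: Signal) -> str:
    if not sig.serving or sig.compliance_violation_all:
        return "P1"
    if (sig.affected_fraction > 0.10 or sig.quality_drop > 1.0
            or sig.containment_drop > 15 or sig.cost_multiple > 3.0):
        return "P2"
    if sig.affected_fraction >= 0.01:
        return "P3"
    return "P4"
```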

Incident Response Process

- Detection: Automated monitoring alert or human report

- Triage: On-call operations classifies severity, activates response

- Containment: For P1/P2, activate the circuit breaker (redirect to humans or backup AI), roll back to the previous version, or apply a targeted fix

- Investigation: Root cause analysis using interaction logs, version history, infrastructure telemetry

- Resolution: Fix deployed through normal deployment pipeline (canary → rollout) or emergency hotfix for P1

- Post-incident review: Within 48 hours for P1/P2; within 1 week for P3. Document: timeline, root cause, impact, remediation, prevention measures

- Governance notification: P1/P2 incidents reported to oversight committee at next meeting (or emergency session for P1)

Common AI Agent Failure Modes

| Failure Mode | Symptoms | Root Cause | Mitigation |
|---|---|---|---|
| Provider outage | 100% error rate, timeout errors | Cloud API infrastructure failure | Multi-provider failover, human agent backup capacity |
| Model regression | Quality score drop after provider model update | Provider-side model change (often unannounced patches) | Pin model versions where possible; monitor quality after any version change |
| Prompt injection | AI agent behaves contrary to instructions for specific interactions | Adversarial customer input bypassing guardrails | Input sanitization, behavioral monitoring, guardrail hardening |
| Knowledge staleness | Increased inaccuracy on recent policy/product changes | Knowledge base not updated after business change | Automated knowledge refresh pipeline, change management integration |
| Backend degradation | Increased latency, incomplete responses | CRM/knowledge base/tool API degradation | Health checks on all dependencies, graceful degradation handling |
| Token budget exhaustion | Responses truncated or degraded late in month | Rate limit or budget cap reached | Budget monitoring with early warning, automatic tier switching |

Capacity Planning for Model Transitions

Model transitions, such as migrating from one model generation to the next (e.g., GPT-4 → GPT-4.1, Claude 3.5 Sonnet → Claude Sonnet 4), are major lifecycle events requiring dedicated capacity planning.

Transition Planning Checklist

- Evaluation: Test new model against existing evaluation suite. Compare quality scores, latency, cost, and edge case handling. Minimum 1,000 evaluated interactions.

- Prompt migration: Prompts often need adjustment for new models (different instruction-following characteristics, different output patterns). Budget 1–2 weeks for prompt optimization.

- Compatibility testing: Verify all tool integrations, guardrails, and orchestration logic work with the new model. Regression test suite.

- Capacity assessment: New model may have different throughput characteristics. Re-run capacity planning calculations from AI Agent Capacity Planning.

- Cost modeling: New model has different pricing. Re-run cost modeling from AI Cost Modeling for Workforce Operations.

- Deployment: Standard canary → graduated rollout. Consider an extended canary period (72–168 hours) for model generation changes, versus the standard 24–72 hours for prompt-only changes.

- Parallel operation: Run old and new models in parallel for 1–2 weeks after full deployment, keeping the old model ready for immediate rollback.

- Retirement: Decommission old model only after new model has operated at full production for 2+ weeks with stable metrics.
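The checklist works as an ordered set of gates, where the first unmet gate is the current blocker. The thresholds below come from the checklist (1,000 evaluated interactions, a 72-hour minimum canary for generation changes, 14 days of stable full production before decommissioning); the field names and the reduction of each step to a pass/fail flag are simplifying assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TransitionState:
    evaluated_interactions: int
    prompt_migrated: bool
    regression_suite_passed: bool
    capacity_replanned: bool
    cost_remodeled: bool
    canary_hours: float
    days_at_full_production: float
    metrics_stable: bool


def next_blocker(s: TransitionState) -> Optional[str]:
    """First unmet checklist gate, or None when the old model may be decommissioned."""
    gates = [
        ("evaluation", s.evaluated_interactions >= 1_000),
        ("prompt migration", s.prompt_migrated),
        ("compatibility testing", s.regression_suite_passed),
        ("capacity assessment", s.capacity_replanned),
        ("cost modeling", s.cost_remodeled),
        ("deployment", s.canary_hours >= 72),
        ("parallel operation", s.days_at_full_production >= 14 and s.metrics_stable),
    ]
    for name, passed in gates:
        if not passed:
            return name
    return None
```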

Transition Risk: The Capability Gap

New model generations often have different strengths and weaknesses than their predecessors. A model that excels at reasoning may be worse at concise formatting. A model with better safety alignment may be more likely to refuse legitimate requests. The evaluation suite must cover not just overall quality but specific capability dimensions relevant to the operation.

Retirement

AI agent retirement follows a managed deprecation process.

Retirement Triggers

- End of support: Model provider deprecates the model version

- Supersession: New model delivers better quality at equal or lower cost

- Strategic change: Business decision to change AI agent scope, provider, or architecture

- Compliance: Regulatory change requiring capabilities the current agent lacks

Retirement Process

- Announcement: Internal stakeholders notified 30+ days before retirement

- Migration planning: Successor agent tested and validated. Capacity and cost impact assessed.

- Gradual transition: Traffic migrated from retiring agent to successor via graduated rollout (inverse of deployment)

- Knowledge preservation: Document what worked (effective prompts, successful guardrails, useful tools) for successor agent development

- Final shutdown: Remove retiring agent from routing. Maintain logs per retention policy.

- Post-retirement review: Confirm successor agent metrics match or exceed retired agent. Close lifecycle record.

Version Archaeology

Maintain a version history for every AI agent that has operated in production:

- Deployment date and retirement date

- Composite version identifier

- Performance summary (average quality score, containment rate, cost per interaction)

- Notable incidents

- Reason for retirement

This historical record enables trend analysis across agent generations and informs design decisions for future agents.
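A version history entry maps naturally onto a record type. In this sketch, `VersionRecord` holds the fields listed above and `generation_trend` returns the change in one metric between successive versions ordered by deployment date, which is the cross-generation trend analysis the history is meant to support. Both names, and the sample data, are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class VersionRecord:
    composite_version: str          # full identifier or a fingerprint of it
    deployed: date
    retired: Optional[date]         # None while still in production
    avg_quality: float
    containment_rate: float
    cost_per_interaction: float
    incidents: List[str] = field(default_factory=list)
    retirement_reason: str = ""


def generation_trend(history: List[VersionRecord], metric: str) -> List[float]:
    """Change in `metric` from each version to the next, in deployment order."""
    ordered = sorted(history, key=lambda r: r.deployed)
    values = [getattr(r, metric) for r in ordered]
    return [round(b - a, 4) for a, b in zip(values, values[1:])]


history = [
    VersionRecord("a1b2c3", date(2025, 9, 1), date(2026, 1, 15), 4.2, 0.64, 0.41),
    VersionRecord("0f9e8d", date(2025, 3, 1), date(2025, 9, 1), 4.0, 0.58, 0.47),
    VersionRecord("77aa11", date(2026, 1, 15), None, 4.1, 0.67, 0.38),
]
print(generation_trend(history, "avg_quality"))  # [0.2, -0.1]
```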

WFM Applications

Digital worker lifecycle management integrates into WFM operations at every phase:

- Forecasting: Model transitions affect containment rates and AHT distributions. Forecasting Methods must incorporate planned lifecycle events as forecast adjustments — similar to how new product launches or marketing campaigns adjust demand forecasts.

- Scheduling: Deployment and rollback events require human oversight staffing. Canary releases need dedicated QA analyst coverage. These requirements enter the scheduling demand through Schedule Optimization.

- Real-time management: Real-Time Operations must have visibility into AI agent lifecycle status — which version is in production, whether a deployment is in progress, whether a rollback has been triggered. This information determines available capacity and contingency procedures.

- Capacity planning: Lifecycle events (model transitions, retirement, new deployments) are capacity planning events. The capacity plan must account for transition periods where both old and new agents operate simultaneously, temporarily doubling infrastructure requirements.

- Long-range planning: Long-Run Workforce Sizing must incorporate AI agent lifecycle roadmaps — planned model transitions, scope expansions, provider migrations. These events create capacity and cost step changes that affect multi-year workforce plans.

Maturity Model Position

Within the WFM Labs Maturity Model:

- Level 2 (Developing): AI agents deployed with minimal lifecycle management. No version tracking. Updates applied directly to production. Rollback is manual and untested. Incidents handled reactively.

- Level 3 (Advanced): Formal versioning and deployment pipeline. Canary releases standard. Monitoring dashboards operational. Incident classification and response procedures defined. SLAs documented.

- Level 4 (Strategic): Full lifecycle management with automated deployment pipeline, comprehensive monitoring, proactive capacity planning for transitions, and SLA management integrated into governance. Version archaeology maintained. Model transition playbooks tested.

- Level 5 (Transformative): Autonomous lifecycle management — AI systems that monitor their own performance, trigger upgrades when better models become available, manage their own canary releases (with human approval gates), and maintain version history. Self-healing with automatic rollback on quality degradation. Lifecycle decisions informed by predictive models of quality, cost, and capacity impact.

See Also

- AI Scaffolding Framework

- Agentic AI Workforce Planning

- Human AI Blended Staffing Models

- Three-Pool Architecture

- Cognitive Portfolio Model (N*)

- Workforce Cost Modeling

- AI Agent Orchestration for WFM

- AI Workforce Governance Frameworks

- AI Agent Quality Assurance

- AI Cost Modeling for Workforce Operations

- AI Agent Capacity Planning