AI Agent Quality Assurance

AI agent quality assurance (QA) is the systematic process of measuring, monitoring, and improving the output quality of AI agents that handle customer interactions. Traditional contact center QA evaluates a small sample of human agent interactions (typically 5–10 per agent per month) using manual scoring rubrics. AI agent QA inverts this model: because every AI interaction is logged and parseable, automated evaluation can score 100% of interactions, while human review focuses on calibration, edge cases, and nuance that automated scoring misses. This shift from sample-based to census-based quality monitoring — supplemented by targeted human audit — is one of the most significant operational changes in blended human-AI workforce environments.

For the governance framework that QA operates within, see AI Workforce Governance Frameworks. For cost implications of quality decisions, see AI Cost Modeling for Workforce Operations. For lifecycle context, see Digital Worker Lifecycle Management.

The QA Paradigm Shift

| Dimension | Traditional QA (Human Agents) | AI Agent QA |
|---|---|---|
| Coverage | 0.1–2% of interactions sampled | 100% automated scoring + targeted human sampling |
| Scoring method | Manual rubric, single evaluator | Multi-layer: deterministic checks + LLM-as-judge + human audit |
| Turnaround | Days to weeks | Real-time (automated) + hours (human sampling) |
| Consistency | High inter-rater variability (κ = 0.4–0.7 typical) | High automated consistency; human audit adds calibration |
| Cost | $2–$8 per evaluated interaction | $0.01–$0.05 per automated evaluation + $5–$15 per human audit |
| Feedback loop | Monthly coaching sessions | Real-time prompt adjustment (minutes to hours) |
| Failure detection | Lag of days to weeks | Seconds (automated) to hours (human-detected nuance issues) |

The paradigm shift is not merely technological — it changes the QA team's role. In a human agent operation, QA analysts spend 80% of their time scoring interactions and 20% analyzing trends. In an AI agent operation, automated scoring handles the volume work, and QA analysts spend 80% of their time on calibration, root cause analysis, prompt improvement recommendations, and edge case investigation.

Automated Evaluation Layers

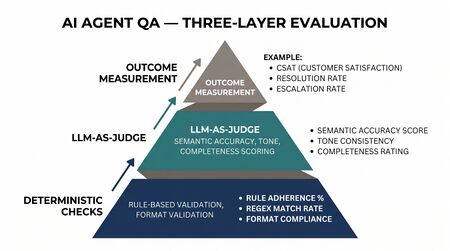

Automated evaluation operates in three layers, each catching different types of quality failures.

Layer 1: Deterministic Checks

Deterministic checks are rule-based evaluations that produce binary pass/fail results with zero ambiguity. They are fast, cheap, and should run on 100% of interactions.

| Check Type | What It Evaluates | Implementation |
|---|---|---|
| Format compliance | Response structure matches required format (greeting, body, closing) | Regex or template matching |
| Required disclosures | Mandatory statements included (AI disclosure, legal disclaimers) | String matching with synonym expansion |
| Prohibited content | No profanity, no competitor mentions, no unauthorized promises | Blocklist matching + semantic similarity for paraphrased violations |
| Data handling | No PII echoed unnecessarily, no cross-customer data leakage | Pattern matching for SSN, credit card, account numbers in output |
| Action authorization | Actions taken (refunds, account changes) within approved authority limits | Rule engine checking action parameters against policy limits |
| Response length | Output within acceptable range (not truncated, not excessively verbose) | Token count bounds check |
| Tool usage | Required tools called (knowledge base lookup, account verification) | Tool call log analysis |

Deterministic checks catch approximately 60–70% of serious quality failures. They are necessary but insufficient — an interaction can pass all deterministic checks while still being unhelpful, tonally inappropriate, or factually wrong in ways that escape pattern matching.
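A few of the checks in the table above can be sketched in a handful of lines. The following is a minimal illustration, not a production rule set: the regular expressions, the blocklist terms, the word-count bounds (used here as a stand-in for a token count), and the function and class names are all assumptions made for the example.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns and limits, chosen for illustration only.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){13,16}\b")
AI_DISCLOSURE = re.compile(r"\b(AI|virtual|automated) (assistant|agent)\b", re.IGNORECASE)
BLOCKLIST = {"guarantee", "competitorco"}  # placeholder prohibited terms
MIN_WORDS, MAX_WORDS = 5, 300  # word count stands in for a token count


@dataclass
class CheckResult:
    name: str
    passed: bool


def run_deterministic_checks(response: str, tools_called: set[str],
                             required_tools: set[str]) -> list[CheckResult]:
    """Run binary pass/fail checks on one agent response."""
    lowered = response.lower()
    leaked_pii = bool(SSN_PATTERN.search(response) or CARD_PATTERN.search(response))
    return [
        CheckResult("required_disclosure", bool(AI_DISCLOSURE.search(response))),
        CheckResult("prohibited_content", not any(term in lowered for term in BLOCKLIST)),
        CheckResult("data_handling", not leaked_pii),
        CheckResult("response_length", MIN_WORDS <= len(response.split()) <= MAX_WORDS),
        # Every required tool must appear in the tool call log.
        CheckResult("tool_usage", required_tools <= tools_called),
    ]
```

Each check returns an unambiguous pass or fail, which is what makes this layer cheap enough to run on every interaction. Simple substring and regex matching will miss paraphrased violations, which is the gap the semantic-similarity and LLM-as-judge approaches are meant to cover.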

Layer 2: LLM-as-Judge Scoring

LLM-as-judge uses a separate language model to evaluate the quality of the primary AI agent's output. This approach catches nuanced quality issues that deterministic checks miss — tone inappropriateness, logical inconsistencies, incomplete resolutions, and subtle factual errors.

Implementation pattern:

A smaller, cheaper model (economy tier) evaluates the primary agent's interaction against defined quality dimensions. The judge model receives:

- The customer message(s)

- The AI agent's response(s)

- The quality rubric with scoring criteria

- Reference material (correct answers, policy documents) when available

Scoring output: Structured scores on each quality dimension (1–5 scale) with brief justification.

Cost: At economy model pricing, evaluating a single interaction costs $0.002–$0.01 — enabling 100% coverage at a fraction of human evaluation cost. For 200,000 interactions per month, full LLM-as-judge coverage costs approximately $400–$2,000.

Calibration requirement: LLM-as-judge scores must be calibrated against human evaluator scores. Initial calibration requires 200–500 interactions scored by both humans and the judge model. Ongoing calibration requires 50–100 interactions per month to detect judge model drift. Target: judge-human agreement within 0.5 points on a 5-point scale, with Cohen's kappa > 0.7.
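The two calibration targets can be computed from a parallel-scored sample with the standard library alone. The sketch below assumes integer 1–5 scores from both raters, interprets "within 0.5 points" as the mean absolute gap between paired scores, and uses unweighted Cohen's kappa; a weighted kappa is a common alternative for ordinal scales and may be more appropriate in practice. Function names are illustrative.

```python
from collections import Counter


def cohens_kappa(rater_a: list[int], rater_b: list[int]) -> float:
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("score lists must be non-empty and the same length")
    n = len(rater_a)
    # Observed agreement: share of items where both raters gave the same score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement by chance, from each rater's marginal frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)


def mean_absolute_gap(human: list[float], judge: list[float]) -> float:
    """Average absolute difference between paired human and judge scores."""
    return sum(abs(h - j) for h, j in zip(human, judge)) / len(human)


human = [5, 4, 4, 3, 2, 5, 1, 3]
judge = [5, 4, 3, 3, 2, 5, 1, 4]
print(round(cohens_kappa(human, judge), 2))  # 0.68
print(mean_absolute_gap(human, judge))       # 0.25
```

In this toy sample the mean gap (0.25) meets the 0.5-point target while kappa (0.68) falls just short of 0.7, which illustrates why both measures are tracked.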

Layer 3: Customer Outcome Correlation

The ultimate quality measure is customer outcome — did the interaction resolve the customer's issue? Outcome correlation connects quality scores to business metrics:

- Resolution rate: Did the customer's issue get resolved without re-contact? (Measured via re-contact rate within 24–72 hours)

- Customer satisfaction: Post-interaction survey scores (CSAT, CES)

- Escalation outcome: For escalated interactions, did the human agent confirm the AI's work was adequate up to the escalation point?

- Revenue impact: For sales or retention interactions, conversion rate comparison

Outcome correlation validates whether the automated scoring actually predicts quality that customers experience. A scoring system that gives high marks to interactions that customers rate poorly is worse than useless — it creates false confidence.

Quality Dimensions

AI agent quality is multidimensional. A single "quality score" obscures the specific dimensions that need attention.

| Dimension | Definition | Measurement Method | Weight (Typical) |
|---|---|---|---|
| Accuracy | Factual correctness of information provided | Knowledge base verification, deterministic fact-checking | 25% |
| Completeness | All aspects of the customer's question/issue addressed | Checklist extraction from customer message vs response coverage | 20% |
| Resolution effectiveness | Customer's underlying need met, not just question answered | Re-contact rate, customer satisfaction correlation | 20% |
| Tone and empathy | Appropriate emotional register for the situation | LLM-as-judge tone scoring, sentiment analysis | 10% |
| Compliance | Adherence to regulatory and policy requirements | Deterministic rule checks | 15% |
| Efficiency | Resolution achieved without unnecessary back-and-forth | Turn count, token efficiency, time to resolution | 10% |

Weights should be calibrated to the operation's priorities. A financial services operation might weight compliance at 30% and tone at 5%; a luxury retail operation might weight tone at 25% and efficiency at 5%.

Composite Quality Score

QS_composite = Σ(dimension_score_i × weight_i) / Σ(weight_i)

Target: QS_composite ≥ 4.0 on a 5-point scale for production AI agents. Interactions scoring below 3.0 on any high-weight dimension (accuracy, completeness, resolution effectiveness, compliance) should trigger automatic escalation review, even when the composite score meets the target.
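The composite formula and the per-dimension escalation rule can be expressed as follows. This is a sketch using the typical weights from the table above; the dictionary keys are shortened, hypothetical names for the six dimensions.

```python
# Typical weights from the quality dimensions table (keys are illustrative).
WEIGHTS = {
    "accuracy": 0.25, "completeness": 0.20, "resolution": 0.20,
    "tone": 0.10, "compliance": 0.15, "efficiency": 0.10,
}
HIGH_WEIGHT = {"accuracy", "completeness", "resolution", "compliance"}


def composite_score(scores: dict[str, float]) -> float:
    """Weighted mean of the dimension scores that are present."""
    total_weight = sum(WEIGHTS[d] for d in scores)
    return sum(scores[d] * WEIGHTS[d] for d in scores) / total_weight


def needs_escalation_review(scores: dict[str, float], floor: float = 3.0) -> bool:
    """True if any high-weight dimension scores below the floor."""
    return any(scores[d] < floor for d in HIGH_WEIGHT if d in scores)


scores = {"accuracy": 2.5, "completeness": 5, "resolution": 5,
          "tone": 5, "compliance": 5, "efficiency": 5}
print(round(composite_score(scores), 3))   # 4.375
print(needs_escalation_review(scores))     # True
```

The example shows why the per-dimension floor matters: an interaction with a serious accuracy problem can still produce a composite of 4.375, above the 4.0 target, so the composite alone would not flag it.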

Human Sampling

Human sampling provides ground truth that calibrates automated scoring and catches quality issues invisible to automated evaluation.

Sample Size Determination

The required sample size depends on what the sampling needs to detect:

For baseline quality measurement (estimating the true quality score):

n = (Z² × σ²) / e²

With 95% confidence (Z = 1.96), an estimated standard deviation of 0.8 on a 5-point scale, and a ±0.1 margin of error:

n = (3.84 × 0.64) / 0.01 = 246 samples

For defect detection (detecting a defect rate of p):

n = (Z² × p × (1 − p)) / e²

For detecting a 2% defect rate with a ±1% margin at 95% confidence:

n = (3.84 × 0.02 × 0.98) / 0.0001 = 753 samples

For change detection (detecting a quality change between periods):

n = 2 × (Z_α + Z_β)² × σ² / δ²

To detect a 0.3-point shift with 95% confidence and 80% power (Z_α = 1.96, Z_β = 0.84, σ = 0.8):

n = 2 × (1.96 + 0.84)² × 0.64 / 0.09 ≈ 112 samples per period

Practical recommendation: 500–1,000 human-evaluated interactions per month covers baseline measurement, defect detection, and change detection for most operations. This is 0.25–0.5% of a 200,000 interaction volume — far below traditional human QA effort while providing stronger statistical power.
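The three sample size formulas in this section can be checked with a short script. The sketch below uses `statistics.NormalDist` (Python 3.8+) to obtain unrounded z-values, so results can differ by a sample or two from hand calculations that round Z to 1.96 and 0.84. Function names are illustrative.

```python
import math
from statistics import NormalDist


def z_two_sided(confidence: float) -> float:
    """Critical z-value for a two-sided interval, e.g. 0.95 -> about 1.96."""
    return NormalDist().inv_cdf(0.5 + confidence / 2)


def n_for_mean(sigma: float, margin: float, confidence: float = 0.95) -> int:
    """Baseline measurement: n = Z^2 * sigma^2 / e^2."""
    z = z_two_sided(confidence)
    return math.ceil(z**2 * sigma**2 / margin**2)


def n_for_proportion(p: float, margin: float, confidence: float = 0.95) -> int:
    """Defect detection: n = Z^2 * p * (1 - p) / e^2."""
    z = z_two_sided(confidence)
    return math.ceil(z**2 * p * (1 - p) / margin**2)


def n_for_change(sigma: float, delta: float,
                 confidence: float = 0.95, power: float = 0.80) -> int:
    """Change detection: n = 2 * (Z_alpha + Z_beta)^2 * sigma^2 / delta^2."""
    z_alpha = z_two_sided(confidence)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta)**2 * sigma**2 / delta**2)


print(n_for_mean(0.8, 0.1))         # 246
print(n_for_proportion(0.02, 0.01)) # 753
print(n_for_change(0.8, 0.3))       # 112
```

Defect detection is the binding constraint in these examples, which is why the practical recommendation lands in the 500–1,000 range rather than at the smaller baseline or change-detection figures.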

Sampling Strategy

Optimal sampling allocates human review effort where it has the highest value:

- 50% random sample: Unbiased baseline quality measurement

- 25% risk-stratified: Over-sample from flagged interactions (automated score < threshold), new prompt versions, complex interaction types

- 15% edge case investigation: Interactions near decision boundaries — cases where the AI agent almost escalated, barely passed compliance checks, or showed uncertainty

- 10% adversarial testing: Deliberate attempts to break the AI agent through prompt injection, manipulation, edge case inputs, and ambiguous requests
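The 50/25/15/10 split above can be turned into per-bucket review counts for a given monthly budget. This is a minimal sketch: the bucket names and helper functions are hypothetical, and it assumes that interaction IDs for each sampled bucket have already been selected by upstream filters (flagged scores, near-boundary cases, and so on).

```python
import random
from typing import Optional

# Shares from the sampling strategy, as whole percentages to avoid float rounding.
SHARES_PERCENT = {"random": 50, "risk_stratified": 25, "edge_case": 15, "adversarial": 10}


def allocate_review_budget(total: int) -> dict[str, int]:
    """Split a monthly human-review budget across the four buckets."""
    counts = {name: total * pct // 100 for name, pct in SHARES_PERCENT.items()}
    # Give any rounding remainder to the unbiased random bucket.
    counts["random"] += total - sum(counts.values())
    return counts


def draw_sample(candidate_ids: list[str], k: int, seed: Optional[int] = None) -> list[str]:
    """Draw up to k IDs without replacement from one bucket's candidates."""
    rng = random.Random(seed)
    return rng.sample(candidate_ids, min(k, len(candidate_ids)))


print(allocate_review_budget(750))
# {'random': 376, 'risk_stratified': 187, 'edge_case': 112, 'adversarial': 75}
```

The adversarial bucket differs from the other three in that its interactions are generated by testers rather than drawn from production logs, so its count represents a testing quota rather than a sample size.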

Drift Detection

AI agent quality can degrade over time even without explicit changes to the model or prompts. Drift occurs when:

- Data drift: Customer inquiries evolve (new products, new policies, seasonal changes) and the AI agent's knowledge becomes stale

- Model drift: Cloud API providers update models without explicit notification (safety patches, fine-tuning adjustments)

- Interaction drift: Customers learn to interact with AI agents differently over time (shorter inputs, testing behaviors)

- Backend drift: External systems the AI agent depends on change (updated CRM fields, new knowledge base structure)

Statistical Process Control for AI Quality

Apply SPC principles from manufacturing quality to AI agent monitoring:

Control chart setup:

- Metric: daily composite quality score (averaged across all evaluated interactions)

- Center line: established baseline quality score (from initial calibration period)

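- Control limits: ±3σ from baseline, where σ is the standard deviation of the daily score over the calibration period (covers approximately 99.7% of natural variation when daily scores are roughly normal)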

- Warning limits: ±2σ from baseline

Detection rules (Western Electric rules adapted):

- Single point outside 3σ → immediate investigation

- Two of three consecutive points outside 2σ (same side) → investigation within 24 hours

- Four of five consecutive points outside 1σ (same side) → trend investigation within 48 hours

- Eight consecutive points on same side of center line → systematic shift, prompt/model review required

Implementation: Calculate daily quality score from automated evaluation. Plot on control chart. Automate alerting on rule violations. Review weekly for trends not caught by individual rules.
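The four detection rules can be automated with a single pass over the daily scores. The sketch below is one possible implementation, assuming the center line and σ have already been estimated from the calibration period; the function name and rule labels are illustrative.

```python
def western_electric_violations(daily_scores: list[float], center: float,
                                sigma: float) -> list[tuple[int, str]]:
    """Return (day_index, rule_label) for every rule that fires on each day."""
    z = [(score - center) / sigma for score in daily_scores]
    # (window length, points required, sigma limit, label) for the run rules.
    run_rules = (
        (3, 2, 2, "2 of 3 beyond 2 sigma"),
        (5, 4, 1, "4 of 5 beyond 1 sigma"),
        (8, 8, 0, "8 in a row on one side"),
    )
    hits = []
    for i in range(len(z)):
        if abs(z[i]) > 3:
            hits.append((i, "1 point beyond 3 sigma"))
        for window, needed, limit, label in run_rules:
            if i + 1 < window:
                continue  # not enough history yet for this rule
            recent = z[i + 1 - window : i + 1]
            # Count each side separately so mixed-side points do not combine.
            above = sum(v > limit for v in recent)
            below = sum(v < -limit for v in recent)
            if max(above, below) >= needed:
                hits.append((i, label))
    return hits


scores = [4.2, 4.2, 4.2, 4.2, 4.2, 4.0]
print(western_electric_violations(scores, center=4.2, sigma=0.05))
# [(5, '1 point beyond 3 sigma')]
```

A sustained shift will cause the run rules to fire on consecutive days, so an alerting layer built on this would typically deduplicate or suppress repeat alerts while an investigation is open.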

Drift Response Protocol

| Drift Severity | Indicator | Response | Timeline |
|---|---|---|---|
| Minor | Warning limit breach, quickly returns | Log and monitor | Next regular review |
| Moderate | Sustained shift (four-of-five or eight-consecutive rule triggered) | Root cause analysis, prompt adjustment | 48 hours |
| Major | Control limit breach, persistent | Escalate to governance committee, consider rollback | 24 hours |
| Critical | Quality score collapse (>1 point drop) | Circuit breaker: redirect to human agents | Immediate |

A/B Testing AI Agent Variants

A/B testing applies the same experimental rigor used in product optimization to AI agent operations. Test one variable at a time: prompt version, model tier, guardrail configuration, or tool setup.

Experimental Design

Sample size for A/B test:

n_per_variant = (Z_α + Z_β)² × 2σ² / δ²

To detect a 0.2-point quality score difference (on 5-point scale) with 80% power and 95% confidence:

n = (1.96 + 0.84)² × 2 × 0.64 / 0.04 = 251 per variant

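At 7,000 AI interactions per day (from the capacity planning example), each variant in a 50/50 split receives roughly 146 interactions per hour, so an A/B test reaches the required 251 interactions per variant in under two hours, assuming traffic is spread evenly across the day. This is radically faster than human agent A/B tests, which require weeks or months to accumulate a sufficient sample.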

Metrics to compare:

- Composite quality score (primary)

- Resolution rate (secondary)

- Customer satisfaction (secondary)

- Cost per interaction (secondary)

- Escalation rate (guardrail)

Guardrail metrics: If the test variant's compliance violation rate exceeds the control's by any statistically significant amount, terminate the test immediately regardless of other metrics.
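Once both variants have reached the planned sample size, the primary metric comparison can be sketched as a two-sample test on composite quality scores. The example below uses a large-sample z approximation with unequal variances (reasonable at several hundred interactions per variant); it is one possible test rather than a prescribed method, and the function name is illustrative.

```python
import math
from statistics import NormalDist, mean, variance


def compare_variants(control: list[float], treatment: list[float],
                     alpha: float = 0.05) -> dict:
    """Two-sided large-sample z-test on the difference in mean quality score."""
    diff = mean(treatment) - mean(control)
    # Standard error of the difference, allowing unequal variances.
    se = math.sqrt(variance(control) / len(control)
                   + variance(treatment) / len(treatment))
    z = diff / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {"difference": diff, "z": z, "p_value": p_value,
            "significant": p_value < alpha}


control = [4.0, 4.1, 3.9, 4.0] * 75     # 300 synthetic scores
treatment = [4.3, 4.4, 4.2, 4.3] * 75   # 300 synthetic scores
result = compare_variants(control, treatment)
print(round(result["difference"], 2), result["significant"])  # 0.3 True
```

Checking the result repeatedly while data accumulates inflates the false positive rate, so the comparison is best run once at the planned sample size, with the guardrail metrics monitored separately throughout.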

Canary Testing

Before full A/B testing, deploy new variants as canary releases handling 1–5% of traffic. Monitor for catastrophic failures (error spikes, compliance violations, customer complaints) before expanding to full A/B test volumes. Canary period: 24–72 hours minimum.
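One common way to hold a canary at a fixed share of traffic is deterministic hash-based bucketing, so that a given customer consistently sees the same variant. The sketch below assumes routing by a customer identifier; the salt value and function name are hypothetical, and other routing keys (session, conversation) may suit some operations better.

```python
import hashlib


def assign_variant(customer_id: str, canary_percent: float = 5.0,
                   salt: str = "canary-example") -> str:
    """Deterministically route a customer to 'canary' or 'control'."""
    digest = hashlib.sha256(f"{salt}:{customer_id}".encode("utf-8")).hexdigest()
    # Map the hash to one of 10,000 buckets for 0.01% routing granularity.
    bucket = int(digest[:8], 16) % 10_000
    return "canary" if bucket < canary_percent * 100 else "control"


assignments = [assign_variant(f"customer-{i}") for i in range(20_000)]
share = assignments.count("canary") / len(assignments)
print(f"canary share: {share:.1%}")
```

Changing the salt for each new canary reshuffles which customers land in the canary group, which avoids exposing the same small population to every experimental variant.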

Calibration Between Human and AI Quality Scores

In blended operations, quality scores must be comparable across human and AI agents. A customer should receive equivalent quality regardless of which agent type handles their interaction.

The Calibration Problem

Human agent quality is scored by human evaluators using rubrics. AI agent quality is scored by automated systems. These scoring systems have different biases:

- Human evaluators may score AI agents more harshly (novelty bias, distrust of AI)

- Human evaluators may score AI agents more leniently (impressed by fluent output)

- Automated scoring may miss nuance that human evaluators catch

- Automated scoring may penalize formatting differences that don't affect quality

Calibration Method

- Parallel scoring: 200+ interactions scored by both human evaluators and automated system

- Bias detection: Compare mean scores, variance, and distribution shape between scoring methods

- Equating: Apply linear equating (or equipercentile equating for non-normal distributions) to map automated scores onto the human scoring scale

- Ongoing calibration: 50 parallel-scored interactions per month to detect calibration drift

- Cross-agent comparison: Once calibrated, compare human agent and AI agent quality on the same scale. This enables unified quality reporting and identifies which agent type performs better on which interaction types.
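The equating step can be illustrated with linear equating, which maps an automated score onto the human scale by matching the mean and standard deviation of the two score distributions. This is a minimal sketch using a parallel-scored sample; the function names are illustrative, and equipercentile equating would need a different approach.

```python
from statistics import mean, stdev
from typing import Callable


def fit_linear_equating(auto_scores: list[float],
                        human_scores: list[float]) -> Callable[[float], float]:
    """Return a function mapping automated scores onto the human scale.

    Linear equating: y = mean_h + (sd_h / sd_a) * (x - mean_a)
    """
    mean_a, mean_h = mean(auto_scores), mean(human_scores)
    slope = stdev(human_scores) / stdev(auto_scores)

    def to_human_scale(x: float) -> float:
        return mean_h + slope * (x - mean_a)

    return to_human_scale


# Toy parallel-scored sample: the automated scorer runs one point high.
equate = fit_linear_equating([3.0, 4.0, 5.0], [2.0, 3.0, 4.0])
print(equate(4.5))  # 3.5
```

Equated scores can fall slightly outside the 1–5 range at the extremes, so a reporting layer may want to clamp them before display.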

Practical Target

After calibration:

- Mean score difference between human evaluators and automated scoring: < 0.2 points (5-point scale)

- Rank-order correlation (Spearman): > 0.80

- Cohen's kappa for pass/fail classification: > 0.75
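The first two targets can be checked on a parallel-scored sample as follows. The sketch implements Spearman correlation directly (Pearson correlation on average ranks, with ties sharing a rank) to stay within the standard library; function names are illustrative, and the pass/fail kappa target is left out here since it requires a separate pass/fail threshold.

```python
from statistics import mean


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks, with tied values sharing the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # average rank for the tied group
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x: list[float], y: list[float]) -> float:
    return pearson(average_ranks(x), average_ranks(y))


def meets_calibration_targets(human: list[float], auto: list[float]) -> dict:
    """Check mean score difference < 0.2 and Spearman correlation > 0.80."""
    return {
        "mean_gap_ok": abs(mean(human) - mean(auto)) < 0.2,
        "spearman_ok": spearman(human, auto) > 0.80,
    }
```

A scoring system can pass the mean-difference target while failing the rank-order target (for example, if it compresses all scores toward the middle), so the two checks are reported separately.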

WFM Applications

AI agent QA integrates into WFM operations through several mechanisms:

- Quality-adjusted capacity: AI Agent Capacity Planning should use quality-adjusted throughput — interactions that pass quality thresholds — not raw interaction counts. An AI agent that handles 1,000 interactions but only 900 meet quality standards has an effective capacity of 900.

- Quality-triggered staffing adjustments: When AI quality degrades (drift detected), WFM must increase human staffing to handle redirected volume. Quality monitoring signals feed directly into Real-Time Operations staffing decisions.

- Quality-cost optimization: AI Cost Modeling for Workforce Operations shows that error remediation dominates AI costs. QA identifies which quality dimensions drive the most remediation cost, enabling targeted improvement with the highest ROI.

- Scheduling QA analyst coverage: Human sampling requires QA analysts. These analysts need schedules aligned with AI agent operating hours and volume patterns — a scheduling requirement that itself falls within WFM's scope.

- Continuous improvement cycle: QA findings feed prompt engineering improvements, which feed quality improvements, which feed capacity and cost improvements. This cycle — analogous to the DMAIC cycle in Six Sigma — is the primary mechanism for AI agent performance improvement over time.

Maturity Model Position

Within the WFM Labs Maturity Model:

- Level 2 (Developing): Basic automated checks (format compliance, prohibited content). Occasional manual review. No systematic quality measurement. Quality issues detected through customer complaints.

- Level 3 (Advanced): Three-layer automated evaluation (deterministic + LLM-as-judge + outcome correlation). Statistically powered human sampling. Quality dimensions defined and weighted. Drift detection via control charts.

- Level 4 (Strategic): Calibrated quality scoring across human and AI agents. A/B testing infrastructure for continuous optimization. Quality metrics integrated into capacity and cost models. Predictive quality models anticipating drift before threshold breach.

- Level 5 (Transformative): Autonomous quality management — AI systems that detect quality issues, diagnose root causes, and implement prompt fixes with human approval. Self-calibrating scoring systems. Quality-optimized model routing that automatically selects the model tier most likely to achieve quality targets for each interaction type.

See Also

- AI Scaffolding Framework

- Agentic AI Workforce Planning

- Human AI Blended Staffing Models

- Three-Pool Architecture

- Cognitive Portfolio Model (N*)

- Workforce Cost Modeling

- AI Agent Orchestration for WFM

- AI Workforce Governance Frameworks

- AI Cost Modeling for Workforce Operations

- Digital Worker Lifecycle Management

- Quality Management