AI Workforce Governance Frameworks

AI workforce governance frameworks define who oversees AI workers, how decisions made by AI agents are audited and controlled, and what organizational structures ensure accountability when autonomous systems operate alongside human staff. In traditional workforce management, governance is largely implicit — human agents are managed through supervisors, quality teams, HR policies, and labor law. AI agents require explicit governance because they lack the social accountability mechanisms that constrain human behavior: they do not fear termination, respond to coaching, or internalize organizational values. Every control must be engineered, monitored, and enforced through technical and organizational mechanisms. This article provides the governance architecture required to operate AI agents as responsible workforce members at scale.

For the technical framework governing AI agent integration, see AI Scaffolding Framework. For quality assurance specifics, see AI Agent Quality Assurance. For ethical considerations, see Ethical AI in Workforce Decisions.

The Governance Problem

Human workers operate within overlapping governance systems: employment law, company policy, management oversight, peer accountability, professional ethics, and personal judgment. These systems are redundant by design: if one fails, others constrain behavior. An agent who bypasses a process is caught by QA; a lapse that QA misses surfaces as a customer complaint; a complaint that goes unaddressed is caught by regulatory audit.

AI agents have none of these natural governance layers. An AI agent that generates a harmful response does not self-correct through professional guilt, does not get warned by a colleague, and does not fear disciplinary action. Without engineered governance, the only detection mechanism is customer harm — which is the governance equivalent of detecting fire by waiting for the building to burn.

Effective AI workforce governance must recreate the governance redundancy that human workforces inherit naturally. This requires layered controls across organizational structure, policy, technical enforcement, and continuous monitoring.

Governance Structure

AI Oversight Committee

An AI oversight committee (or AI governance board) provides executive-level accountability for AI workforce operations. Unlike traditional technology governance (focused on IT risk and project approval), AI workforce governance addresses operational decisions that directly affect customers, employees, and compliance.

Composition:

| Role | Responsibility | Why This Role |
|---|---|---|
| VP/Director of Operations | Operational performance accountability | AI agents are workforce; operations owns performance |
| WFM Leadership | Capacity and staffing impact assessment | AI deployment changes staffing equations |
| Quality Management | Quality standards and monitoring | AI outputs must meet same quality standards as human agents |
| Compliance/Legal | Regulatory and legal risk | AI decisions create legal liability |
| IT/Engineering | Technical risk and capability assessment | Infrastructure, security, data governance |
| Customer Experience | Customer impact assessment | AI interactions affect CX metrics and brand |
| HR/People Operations | Workforce impact and change management | AI deployment affects human workforce |

Meeting cadence: Monthly for steady-state operations; weekly during deployment or significant model changes; emergency session for incidents affecting >1% of customer interactions.

Decision authority:

- Approve new AI agent deployment to production queues

- Set and modify containment rate targets

- Authorize model changes (new versions, new providers)

- Define escalation thresholds and human oversight levels

- Review incident reports and approve remediation plans

Model Risk Management

Borrowed from financial services (where model risk management is a regulatory expectation under the Federal Reserve's SR 11-7 supervisory guidance), model risk management for AI workforce operations governs the lifecycle of models used in customer-facing roles.

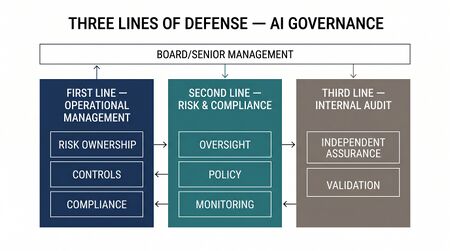

Three lines of defense:

- First line — Operations: Day-to-day model operation, monitoring, and frontline issue detection. Operations teams monitor performance dashboards, flag anomalies, and execute escalation protocols.

- Second line — Risk and Compliance: Independent review of model performance, bias testing, regulatory compliance validation. Reviews first-line monitoring and conducts periodic deep assessments.

- Third line — Internal Audit: Periodic independent evaluation of the entire governance framework, including first and second line effectiveness. Reports to board/executive leadership.

Operational Controls

Operational controls constrain AI agent behavior in real time:

- Guardrails: Hard-coded constraints that prevent specific actions regardless of model output — cannot promise refunds above $X, cannot access restricted data, cannot provide medical/legal/financial advice. Implemented at the orchestration layer, not within the model itself.

- Rate controls: Maximum interactions per time period, maximum token expenditure per interaction, maximum tool calls per session. Prevent runaway costs and limit blast radius of model misbehavior.

- Content filters: Pre- and post-processing filters that detect and block harmful, off-topic, or non-compliant content before it reaches customers.

- Confidence thresholds: When the model's confidence (measured via calibrated probability, internal entropy, or classification score) falls below a defined threshold, the interaction is automatically escalated to a human agent.

- Circuit breakers: Automatic shutdown triggers when aggregate metrics (error rate, customer satisfaction, escalation rate) exceed defined thresholds. Modeled on software circuit breaker patterns — the AI agent pool is taken offline and volume redirected to human agents until the issue is resolved.
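Two of the controls above, confidence thresholds and circuit breakers, can be sketched together as a routing decision at the orchestration layer. This is a minimal illustration, not a reference implementation: the class name `CircuitBreaker`, the function `route`, the rolling-window approach, and every default value (10% error rate, 200-interaction window, 0.75 confidence floor) are assumptions made for the example.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class CircuitBreaker:
    """Trips when the rolling error rate of recent interactions exceeds a limit."""
    max_error_rate: float = 0.10   # assumed trip threshold
    window: int = 200              # assumed number of recent interactions tracked
    min_samples: int = 50          # do not trip on a handful of interactions
    is_open: bool = False
    _outcomes: deque = field(default_factory=deque, repr=False)

    def record(self, is_error: bool) -> None:
        """Record one interaction outcome and trip the breaker if needed."""
        self._outcomes.append(is_error)
        if len(self._outcomes) > self.window:
            self._outcomes.popleft()
        if len(self._outcomes) >= self.min_samples:
            rate = sum(self._outcomes) / len(self._outcomes)
            if rate > self.max_error_rate:
                self.is_open = True  # stays open until explicitly reset

    def reset(self) -> None:
        """Close the breaker again, e.g. after the issue is resolved."""
        self._outcomes.clear()
        self.is_open = False


def route(confidence: float, breaker: CircuitBreaker, min_confidence: float = 0.75) -> str:
    """Return 'human' or 'ai' for the next interaction."""
    if breaker.is_open:
        return "human"   # AI pool offline: all volume goes to human agents
    if confidence < min_confidence:
        return "human"   # low confidence: escalate this interaction
    return "ai"
```

In this sketch the breaker never closes on its own; `reset()` has to be called deliberately, which keeps the decision to bring the AI pool back online with whoever governance authorizes to make it.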

Accountability Chains

When an AI agent makes a harmful decision — gives incorrect information, makes an unauthorized promise, exposes sensitive data — who is responsible?

The Accountability Framework

| Level | Accountable Party | Responsible For | Escalation Path |
|---|---|---|---|
| Interaction level | AI agent (technical) + supervising human (organizational) | Individual interaction quality and compliance | Flagged to QA team |
| Queue/skill level | Operations manager | Aggregate performance of AI agents in their queues | Flagged to oversight committee |
| Model level | AI/ML engineering team | Model selection, prompt design, guardrail configuration | Flagged to model risk management |
| Platform level | IT/Infrastructure | Availability, latency, security, data integrity | Flagged to IT incident management |
| Strategic level | AI oversight committee | Deployment decisions, risk acceptance, policy | Flagged to executive leadership |
| Organizational level | Executive leadership | Overall accountability for AI workforce decisions | Flagged to board/regulators |

The critical principle: AI agents cannot be accountable. Every AI agent decision must trace to a human who approved the system's capability to make that decision. The prompt engineer who wrote the instructions, the manager who approved the deployment, the committee that set the guardrails — these humans form the accountability chain. "The AI did it" is not an acceptable root cause; "the governance framework failed to prevent the AI from doing it" is the correct framing.

Decision Documentation

Every significant AI governance decision must be documented:

- What was decided (deploy model X to queue Y with guardrails Z)

- Who decided (committee vote, manager approval)

- What evidence informed the decision (test results, pilot metrics, risk assessment)

- What monitoring will validate the decision (KPIs, review dates)

- What rollback plan exists if the decision produces poor outcomes

This documentation forms the audit trail that regulators, legal teams, and internal auditors require.
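One way to make the five documentation elements hard to skip is to capture them in a record that refuses to be created with a blank field. The sketch below is illustrative only; the class name `GovernanceDecision`, its field names, and the JSON serialization are assumptions, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class GovernanceDecision:
    """One audit-trail entry; every field is required and must be non-empty."""
    decision: str                 # what was decided
    decided_by: str               # who decided
    evidence: tuple[str, ...]     # what evidence informed the decision
    monitoring: tuple[str, ...]   # what monitoring will validate it
    rollback_plan: str            # what happens if outcomes are poor
    decided_on: date

    def __post_init__(self) -> None:
        # Reject records that leave any documentation element blank.
        for name, value in asdict(self).items():
            if not value:
                raise ValueError(f"missing governance field: {name}")

    def to_json(self) -> str:
        """Serialize the record for storage in an audit log."""
        record = asdict(self)
        record["decided_on"] = self.decided_on.isoformat()
        return json.dumps(record, indent=2)
```

Freezing the dataclass means a recorded decision cannot be edited in place; a change of course is logged as a new decision, which suits an audit trail.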

Audit Framework

Automated Monitoring (Continuous)

Automated monitoring provides 100% coverage of AI agent interactions at low cost:

| Monitor Type | What It Checks | Alert Threshold |
|---|---|---|
| Compliance rules | Prohibited phrases, required disclosures, data handling violations | Any violation → immediate flag |
| Sentiment shift | Customer sentiment degradation during AI interaction | >2 standard deviations from baseline → flag for review |
| Hallucination detection | Factual claims checked against knowledge base; fabricated references | Any unverifiable claim → flag |
| Guardrail breach attempts | Model outputs that were blocked by guardrails | >5% of interactions triggering guardrails → engineering review |
| Cost anomalies | Per-interaction token usage significantly exceeding baseline | >3× average tokens → flag for prompt investigation |
| Resolution verification | Post-interaction check: did the customer's issue actually get resolved? | Re-contact rate >15% for AI-handled interactions → quality review |
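Three of the thresholds in the table are simple enough to express directly. The following is a rough sketch under stated assumptions: the function names are hypothetical, sentiment is assumed to be a numeric score where lower means worse, and the baseline is assumed to be a list of historical scores.

```python
from statistics import mean, stdev


def cost_anomaly(tokens: int, baseline_avg_tokens: float, multiple: float = 3.0) -> bool:
    """Flag an interaction whose token usage exceeds 3x the baseline average."""
    return tokens > multiple * baseline_avg_tokens


def sentiment_degraded(score: float, baseline_scores: list[float], z_limit: float = 2.0) -> bool:
    """Flag a score more than z_limit standard deviations below the baseline mean."""
    mu = mean(baseline_scores)
    sigma = stdev(baseline_scores)  # sample standard deviation; needs >= 2 scores
    if sigma == 0:
        return score < mu
    return (mu - score) / sigma > z_limit


def recontact_review_needed(recontacts: int, ai_handled: int, limit: float = 0.15) -> bool:
    """Flag a quality review when the re-contact rate for AI-handled work exceeds 15%."""
    return ai_handled > 0 and recontacts / ai_handled > limit
```

The sentiment check is one-sided on purpose: the table describes degradation, so an unusually positive interaction is not flagged.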

Human Sampling (Periodic)

Automated monitoring catches rule violations but misses nuance — tone inappropriateness, technically correct but unhelpful responses, missed empathy opportunities. Human sampling fills this gap.

Sampling rate determination:

The minimum sample size n needed to estimate a defect rate p within margin of error e at confidence level c, where Z is the standard normal critical value for c (1.96 for 95%):

n = (Z² × p × (1-p)) / e²

For a 3% defect rate with 95% confidence and ±1% margin:

n = (1.96² × 0.03 × 0.97) / 0.01² = 1,118 samples/month

For a queue handling 200,000 AI interactions per month, this is a 0.56% sample rate. Human QA typically samples per agent (5–10 calls per agent per month); here a single statistically sized sample is drawn across the entire AI output volume.
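The calculation above can be reproduced with the Python standard library. The function name `sample_size` is an assumption for this sketch; it derives Z from the confidence level instead of hard-coding 1.96 and rounds up, since a fractional sample is not possible.

```python
import math
from statistics import NormalDist


def sample_size(p: float, confidence: float, margin: float) -> int:
    """Minimum n to estimate defect rate p within +/- margin at the given confidence."""
    # Two-sided critical value, e.g. about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return math.ceil(z**2 * p * (1 - p) / margin**2)


n = sample_size(p=0.03, confidence=0.95, margin=0.01)
print(n)                              # 1118
print(f"{n / 200_000:.2%}")           # 0.56%
```

Because p(1-p) peaks at p = 0.5, using a higher assumed defect rate gives a more conservative (larger) sample when the true rate is uncertain.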

Sampling strategy:

- Random sampling: Baseline quality measurement, unbiased

- Stratified sampling: Over-sample from categories with known higher risk (complex interactions, new prompt versions, after model updates)

- Triggered sampling: 100% review of interactions flagged by automated monitoring

- Adversarial sampling: Deliberate testing with edge cases, ambiguous inputs, and manipulation attempts
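The random, stratified, and triggered strategies can be combined in a single selection pass, sketched below. The interaction fields (`category`, `flagged`), the `rates` mapping, and the function name are hypothetical. Adversarial sampling is left out because it involves authoring test cases, not selecting from production traffic.

```python
import random
from typing import Optional


def select_for_review(
    interactions: list[dict],
    rates: dict[str, float],
    seed: Optional[int] = None,
) -> list[dict]:
    """Pick interactions for human review.

    - Triggered: anything flagged by automated monitoring is always reviewed.
    - Stratified: each category is sampled at its own rate from `rates`.
    - Random: categories without an entry fall back to rates["default"].
    """
    rng = random.Random(seed)
    selected = []
    for item in interactions:
        if item["flagged"]:
            selected.append(item)
            continue
        rate = rates.get(item["category"], rates["default"])
        if rng.random() < rate:
            selected.append(item)
    return selected
```

For example, `rates = {"default": 0.005, "new_prompt_version": 0.05}` would over-sample interactions handled by a newly released prompt while keeping a low baseline rate elsewhere; both numbers are placeholders, not recommendations.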

Escalation Triggers

Governance escalation is triggered by defined threshold breaches:

| Metric | Warning | Escalate to Oversight Committee | Emergency (Circuit Breaker) |
|---|---|---|---|
| Customer satisfaction (CSAT) | >5% decline week-over-week | >10% decline | >20% decline |
| Error rate | >2% (from baseline) | >5% | >10% |
| Compliance violation rate | Any violation | >0.1% of interactions | >0.5% of interactions |
| Customer complaint rate | >1.5× baseline | >2× baseline | >3× baseline |
| Containment rate drop | >5 points | >10 points | >15 points |
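The table above maps naturally onto a lookup of three thresholds per metric. The sketch below encodes it as data; the metric keys and the function name are hypothetical, and each value is assumed to be pre-computed in the unit the table uses (percent decline, percent of interactions, multiple of baseline, or points). The error-rate row is read here as a percentage above baseline, which is one interpretation of the table's wording.

```python
# (warning, escalate, emergency) thresholds, copied from the table above.
THRESHOLDS = {
    "csat_decline_pct": (5, 10, 20),
    "error_rate_pct": (2, 5, 10),
    "compliance_violation_pct": (0, 0.1, 0.5),   # any violation is a warning
    "complaint_ratio_to_baseline": (1.5, 2, 3),
    "containment_drop_points": (5, 10, 15),
}


def escalation_level(metric: str, value: float) -> str:
    """Return 'ok', 'warning', 'escalate', or 'emergency' for a metric value."""
    warning, escalate, emergency = THRESHOLDS[metric]
    if value > emergency:
        return "emergency"   # circuit breaker
    if value > escalate:
        return "escalate"    # oversight committee
    if value > warning:
        return "warning"
    return "ok"
```

Keeping the thresholds in one data structure means a committee decision to change them is a single reviewed edit, not a change scattered through alerting code.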

Policy Governance

Acceptable Use Policy

Defines what AI agents are permitted and prohibited from doing:

Permitted:

- Handle defined contact types within trained scope

- Access customer data necessary for resolution (with minimum-necessary principle)

- Make decisions within pre-approved authority (refunds up to $X, account changes within defined scope)

- Escalate to human agents when confidence is low or request exceeds authority

Prohibited:

- Provide medical, legal, or financial advice

- Make promises or commitments not authorized in the prompt configuration

- Access data beyond the minimum necessary for the current interaction

- Represent itself as human (customer disclosure requirements)

- Process interactions in categories not approved by the oversight committee
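Several of these rules can be enforced as a pre-action check at the orchestration layer, outside the model. The sketch below is an assumption-laden illustration: the `Policy` fields, topic labels, and `authorize` function are hypothetical, and the refund limit is a parameter because the policy above leaves it as $X.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Acceptable-use limits for one AI agent deployment (illustrative fields)."""
    approved_contact_types: frozenset[str]
    max_refund: float   # the "$X" limit; set by the organization
    prohibited_topics: frozenset[str] = frozenset({"medical", "legal", "financial_advice"})


def authorize(
    policy: Policy, contact_type: str, topic: str, refund_amount: float = 0.0
) -> tuple[bool, str]:
    """Check a proposed action against the policy; return (allowed, reason)."""
    if contact_type not in policy.approved_contact_types:
        return False, "contact type not approved by oversight committee"
    if topic in policy.prohibited_topics:
        return False, "prohibited advice category"
    if refund_amount > policy.max_refund:
        return False, "refund exceeds pre-approved authority"
    return True, "within policy"
```

A denied action would normally hand the interaction to a human agent, in line with the permitted behavior of escalating when a request exceeds authority.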

Customer Disclosure

Transparency requirements vary by jurisdiction and organizational policy. Best practice:

- Clear disclosure at interaction start: "You're chatting with an AI assistant"

- Easy opt-out: customer can request human agent at any point

- Disclosure in interaction records: metadata clearly marks AI-handled interactions

- No deceptive persona: AI agent names/personas should not imply human identity

Data Handling

AI agents interact with customer data at scale, creating data governance obligations:

- Data minimization: AI prompts should request only data necessary for resolution. Conversation context should be pruned to remove unnecessary PII before model inference.

- Retention: Interaction logs containing customer data must follow retention policies. Cloud API providers may retain request data for model training — contracts must address this.

- Cross-border: If AI inference occurs in a different jurisdiction than the customer (e.g., US-based API processing EU customer data), data transfer regulations apply.

- Right to explanation: Under regulations like GDPR, customers may have the right to understand how an AI decision was made. This requires maintaining decision audit trails and explainability mechanisms.
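The data minimization point, pruning unnecessary PII before inference, can be illustrated with a small redaction pass. This is a deliberately simplistic sketch: the two regular expressions (email addresses and 13 to 16 digit card-like numbers) are assumptions chosen for the example and will miss many real-world PII formats, so production systems would more likely rely on a dedicated PII detection service.

```python
import re

# Simplified patterns for illustration only; not exhaustive PII detection.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")   # 13-16 digits, optional separators


def redact(text: str) -> str:
    """Replace emails and card-like numbers with placeholders before inference."""
    text = EMAIL.sub("[EMAIL]", text)
    text = CARD.sub("[CARD]", text)
    return text


print(redact("Card 4111 1111 1111 1111, email jane.doe@example.com"))
# Card [CARD], email [EMAIL]
```

Redacting at the orchestration layer also limits what reaches a cloud API provider's request logs, which bears on the retention point above.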

Regulatory Landscape

EU AI Act

The EU AI Act (effective 2025–2026[1]) classifies AI systems by risk level. Contact center AI agents likely fall into limited risk or high risk depending on the decisions they make:

- Limited risk: AI systems that interact with humans must disclose their AI nature (transparency obligation). Applies to virtually all customer-facing AI agents.

- High risk: AI systems that make decisions affecting individuals' access to services may qualify as high-risk[2], requiring conformity assessments, human oversight, and documentation. AI agents that make credit decisions, insurance determinations, or employment-related decisions are explicitly high-risk.

Workforce governance implications: organizations must document their AI agent systems, implement human oversight mechanisms, ensure transparency, and maintain records sufficient for regulatory audit.

US State and Federal Landscape

The US regulatory environment is fragmented:

- Colorado AI Act (effective 2026): Requires disclosure when AI makes "consequential decisions" and mandates impact assessments for high-risk AI systems.

- New York City Local Law 144: Requires bias audits for automated employment decision tools — relevant for AI used in workforce-facing decisions (scheduling, performance evaluation).

- CFPB guidance: Consumer Financial Protection Bureau has indicated that AI-generated responses to consumers must comply with the same disclosure and accuracy requirements as human responses.

- FTC enforcement: The Federal Trade Commission has brought enforcement actions against deceptive AI practices, including AI systems that impersonate humans.

Practical Compliance Strategy

Rather than building jurisdiction-specific governance, design for the most restrictive applicable regulation:

- Disclose AI nature in all customer interactions (satisfies EU AI Act and emerging US disclosure laws)

- Maintain decision audit trails for all AI-handled interactions (satisfies right-to-explanation requirements)

- Conduct periodic bias audits across protected categories (satisfies NYC LL144 and emerging bias legislation)

- Implement human oversight with authority to override AI decisions (satisfies EU AI Act human oversight requirement)

- Document the AI system's purpose, capabilities, and limitations (satisfies conformity assessment requirements)

Risk Taxonomy

| Risk Category | Description | Likelihood | Impact | Primary Mitigation |
|---|---|---|---|---|
| Hallucination | AI generates plausible but factually incorrect information | High | Medium-High | Knowledge base grounding, fact-checking layer, retrieval-augmented generation |
| Bias | AI produces systematically different outcomes across protected groups | Medium | High | Regular bias audits, diverse test sets, fairness metrics monitoring |
| Data leakage | AI inadvertently exposes sensitive data from training data or other interactions | Low | Critical | Data isolation, PII scrubbing, context window management |
| Customer harm | AI provides advice that leads to customer financial/physical/emotional harm | Low-Medium | Critical | Guardrails, restricted action scope, mandatory disclaimers |
| Manipulation | Adversarial users trick AI into bypassing controls | Medium | Medium-High | Prompt injection defenses, input sanitization, behavioral monitoring |
| Availability | AI platform outage disrupts service delivery | Low-Medium | High | Multi-provider redundancy, human failover capacity, circuit breakers |
| Regulatory violation | AI actions violate applicable law or regulation | Low | Critical | Compliance rules engine, legal review of prompts, audit trails |
| Cost overrun | Token consumption exceeds budget due to verbose outputs or prompt attacks | Medium | Medium | Per-interaction cost caps, token budgets, anomaly detection |
| Model drift | AI performance degrades over time without visible model change | Medium | Medium | Continuous monitoring, statistical process control on quality metrics |
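The model drift mitigation, statistical process control on quality metrics, can be illustrated with a p-chart, a standard control chart for defect proportions. The choice of a p-chart with 3-sigma limits is an assumption of this sketch, as are the function names; the table does not prescribe a specific SPC method.

```python
import math


def p_chart_limits(
    baseline_rate: float, sample_size: int, sigmas: float = 3.0
) -> tuple[float, float]:
    """Lower and upper control limits for a defect proportion (p-chart)."""
    se = math.sqrt(baseline_rate * (1 - baseline_rate) / sample_size)
    lower = max(0.0, baseline_rate - sigmas * se)
    upper = min(1.0, baseline_rate + sigmas * se)
    return lower, upper


def out_of_control(defects: int, sample_size: int, baseline_rate: float) -> bool:
    """True when the period's defect rate falls outside the control limits."""
    lower, upper = p_chart_limits(baseline_rate, sample_size)
    rate = defects / sample_size
    return rate < lower or rate > upper
```

A point below the lower limit is also reported, since an unexplained improvement can indicate a change in the model or in the measurement that deserves the same scrutiny as a decline.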

Governance Maturity Model

| Level | Name | Characteristics |
|---|---|---|
| L1 | Ad Hoc | AI agents deployed without formal governance. No oversight committee. Quality monitored reactively (customer complaints). No audit trail. Accountability unclear. Common in pilot phases and shadow AI deployments. |
| L2 | Defined | Basic governance structure established. AI oversight committee meets quarterly. Acceptable use policy documented. Human sampling initiated (<500 samples/month). Compliance rules partially automated. Accountability chain documented but not tested. |
| L3 | Managed | Active governance with regular cadence. Oversight committee meets monthly. Automated monitoring covers compliance and sentiment. Human sampling statistically powered. Incident response procedures documented and tested. Regulatory compliance tracked. Risk taxonomy maintained. |
| L4 | Measured | Quantitative governance with KPIs. Governance effectiveness measured (time-to-detect, time-to-remediate, false positive rates). Bias audits conducted quarterly. Cross-functional accountability tested through tabletop exercises. Regulatory horizon scanning active. Governance costs tracked and optimized. |
| L5 | Autonomous with Guardrails | Self-governing AI operations within defined boundaries. Automated governance adjusts guardrails based on real-time performance. Circuit breakers activate and deactivate without human intervention. Human oversight focused on governance framework evolution rather than individual decisions. Continuous compliance verification. Governance itself is audited by independent AI systems. |

WFM Applications

AI workforce governance integrates directly into WFM operations:

- Scheduling governance: When AI agents handle a contact type, governance determines whether that deployment is approved, what hours it can operate (some jurisdictions may restrict AI availability), and what human oversight staffing is required during AI operating hours.

- Real-time management: Real-Time Operations teams need authority and procedures to disable AI agents during incidents. Governance defines who can pull the circuit breaker and under what conditions.

- Capacity planning integration: Governance decisions (e.g., "AI cannot handle financial transactions until Q3 audit is complete") directly constrain AI Agent Capacity Planning — the capacity model must reflect governance-imposed limitations.

- Change management: Model updates, prompt changes, and guardrail modifications are governance events. The WFM team must be notified because these changes can alter containment rates, AHT distributions, and escalation patterns — all of which affect Schedule Optimization and staffing.

- Workforce transition: Governance determines the pace at which contact types are transitioned from human to AI handling, protecting against both operational risk and workforce disruption. See Agentic AI Workforce Planning for workforce transition planning frameworks.

Maturity Model Position

Within the WFM Labs Maturity Model:

- Level 2 (Developing): Basic AI policies exist but governance is reactive. AI deployed with minimal oversight structure.

- Level 3 (Advanced): Formal governance framework with oversight committee, defined policies, and automated monitoring. Audit trails maintained.

- Level 4 (Strategic): Measured governance with effectiveness KPIs, regular bias audits, and cross-regulatory compliance. Governance integrated into WFM planning processes.

- Level 5 (Transformative): Autonomous governance within engineered boundaries. Self-adjusting controls, continuous compliance verification, and governance-as-code integrated into the AI agent platform.

See Also

- AI Scaffolding Framework

- Agentic AI Workforce Planning

- Human AI Blended Staffing Models

- Three-Pool Architecture

- Cognitive Portfolio Model (N*)

- Workforce Cost Modeling

- AI Agent Orchestration for WFM

- Ethical AI in Workforce Decisions

- Human AI Supervision and Escalation Frameworks

- AI Agent Quality Assurance

- Digital Worker Lifecycle Management