Ethical AI in Workforce Decisions

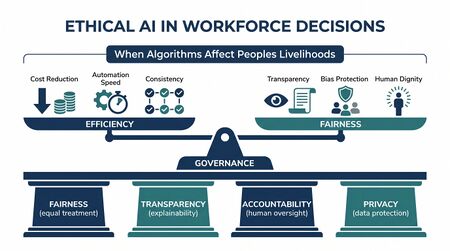

Ethical AI in workforce decisions addresses the fairness, transparency, and accountability requirements that arise when algorithms influence or determine outcomes affecting workers' schedules, performance evaluations, career trajectories, and livelihoods. As AI-powered tools become embedded across workforce management (WFM) operations, from schedule generation to quality scoring to attrition prediction, organizations face growing ethical obligations to ensure these systems do not discriminate, deceive, or deprive workers of agency.

This page provides a comprehensive framework for WFM leaders deploying AI systems that affect people. For the technical scheduling fairness perspective, see Algorithmic Fairness and Bias in Workforce Scheduling. For the human oversight architecture, see Human AI Supervision and Escalation Frameworks. For model validation methodology, see Model Evaluation and Validation.

Why Ethics Matter in WFM AI

WFM AI systems are not abstract. They make decisions that directly affect people's incomes, work-life balance, career progression, and job security:

- Scheduling algorithms determine when people work — including overnight shifts, weekends, and holidays that affect family time, health, and social participation

- Performance scoring models influence who receives coaching, promotion opportunities, or performance improvement plans

- Attrition risk models flag employees as flight risks, potentially triggering preemptive management actions that become self-fulfilling prophecies

- Quality evaluation AI determines whether a customer interaction is scored as satisfactory, directly affecting compensation in many contact centers

- Workforce planning models inform headcount decisions — including reductions in force

The asymmetry is stark: organizations deploy these systems to optimize business outcomes (cost, service level, efficiency), but the humans subject to algorithmic decisions bear the consequences of errors, biases, and opacity. A scheduling algorithm that consistently assigns single mothers to undesirable shifts is not merely an optimization failure; it is a civil rights issue.

The stakes are compounded by scale. A biased human manager might affect dozens of employees. A biased algorithm deployed enterprise-wide affects thousands simultaneously, with the additional problem that algorithmic discrimination operates invisibly unless actively audited.

The Fairness Challenge

Fairness in WFM AI encompasses multiple dimensions, each presenting distinct challenges:

Scheduling Fairness

Scheduling algorithms optimize against constraints (service level targets, labor laws, skills coverage) and objectives (minimize cost, maximize preference satisfaction). Without explicit fairness constraints, optimization naturally exploits patterns in the data:

- Shift desirability disparities: If historical data shows certain demographic groups accepting undesirable shifts more often (due to power dynamics, not genuine preference), the algorithm learns to assign those groups disproportionately to those shifts

- Preference learning bias: Algorithms that learn from stated preferences may encode existing inequities — workers who feel less empowered to state preferences get systematically disadvantaged

- Overtime and premium pay distribution: Optimization that minimizes labor cost may systematically exclude higher-paid (often more senior, often demographically skewed) workers from overtime opportunities

See Algorithmic Fairness and Bias in Workforce Scheduling for technical fairness definitions (demographic parity, equal opportunity, individual fairness) and their application to scheduling.
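Without an explicit fairness term, a cost-minimizing scheduler has no reason to spread undesirable shifts evenly. The sketch below shows one such constraint, rotation equity for night shifts, as a simple greedy pass. The function name and data shapes are hypothetical, and a production scheduler would more likely encode the same idea as a hard constraint or penalty inside its optimization model.

```python
from collections import Counter


def assign_night_shifts(night_shifts, qualified, history=None):
    """Assign each night shift to the qualified agent with the fewest nights so far.

    Hypothetical data shapes: `night_shifts` is a list of shift IDs, `qualified`
    maps each shift ID to the agent IDs qualified to work it, and `history`
    holds prior night-shift counts per agent (the rotation-equity state).
    """
    counts = Counter(history or {})
    assignment = {}
    for shift in night_shifts:
        candidates = qualified[shift]
        if not candidates:
            raise ValueError(f"no qualified agent for {shift}")
        # Fewest prior nights wins; ties are broken by agent ID for reproducibility.
        chosen = min(candidates, key=lambda agent: (counts[agent], agent))
        assignment[shift] = chosen
        counts[chosen] += 1
    return assignment, dict(counts)
```

Because the balancing state persists across runs through `history`, an agent who worked extra nights last period is deprioritized this period rather than starting from zero.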

Performance Model Bias

Performance scoring models trained on historical evaluations inherit the biases of past human evaluators:

- Rater bias propagation: If historical quality scores show systematic differences by agent demographics (gender, accent, name), ML models trained on those scores reproduce and amplify those patterns

- Proxy discrimination: Models may use features that correlate with protected characteristics, such as schedule adherence patterns that correlate with caregiving responsibilities or handle time patterns that correlate with language background

- Feedback loops: Agents who score poorly receive less coaching investment, perform worse, and then receive worse scores, a cycle that entrenches the initial algorithmic assessment

Attrition Model Profiling

Attrition prediction models present particular ethical risks because they attempt to predict individual behavior from group characteristics:

- Models may effectively profile workers by demographic similarity to past leavers

- "Flight risk" labels can trigger differential treatment (reduced investment, preemptive replacement hiring) that creates the very outcome the model predicted

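- The base rate problem: because leaving in any given period is relatively rare, even a fairly accurate model can flag more employees who would have stayed than employees who would have left, resulting in widespread unnecessary intervention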
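The arithmetic behind the base rate problem is Bayes' rule: the share of flagged employees who actually leave (the model's precision) depends on how rare leaving is, not only on how accurate the model is. The sketch below uses illustrative numbers (10% attrition, 80% sensitivity, 80% specificity), not figures from any real attrition model.

```python
def flag_precision(base_rate, sensitivity, specificity):
    """Share of flagged employees who actually leave (positive predictive value).

    base_rate:   fraction of the workforce that leaves in the period
    sensitivity: fraction of leavers the model flags
    specificity: fraction of stayers the model correctly leaves unflagged
    """
    true_flags = base_rate * sensitivity                # leavers who are flagged
    false_flags = (1 - base_rate) * (1 - specificity)   # stayers who are flagged anyway
    return true_flags / (true_flags + false_flags)


# Illustrative assumptions only: 10% attrition, 80% sensitivity and specificity.
precision = flag_precision(0.10, 0.80, 0.80)
```

With those assumed inputs, precision is 0.08 / 0.26, about 31%: roughly 69% of flagged employees would have stayed, so any intervention triggered by the flag mostly reaches people who were never going to leave.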

Transparency and Explainability

A fundamental ethical test for any WFM AI system: can you explain to an agent why the algorithm made that decision? If a supervisor cannot articulate why an agent received a particular schedule, performance score, or coaching recommendation, the system fails a basic accountability standard.

The Explainability Spectrum

| Level | Description | Example | Sufficient For |
|---|---|---|---|
| Global | General description of factors the model considers | "The scheduling algorithm weighs skills, preferences, seniority, and fairness balance" | Public communication |
| Cohort | Why groups receive certain patterns | "Night shifts are distributed across all qualified agents on a rotating basis" | Team-level transparency |
| Individual | Why this person received this outcome | "You were assigned Tuesday evening because your skills match demand, you listed Tuesday as available, and rotation equity required a balancing assignment" | Individual agent explanation |
| Counterfactual | What would need to change for a different outcome | "If you add the billing skill certification, you become eligible for the preferred Monday morning slots" | Actionable agent feedback |

Meaningful transparency requires explanations at the individual level at minimum. Many deployed WFM AI systems operate at the global level at best, a gap that AI scaffolding approaches must address.
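For a transparent rule-based decision, individual and counterfactual explanations can be generated directly from the rules that were evaluated. The sketch below assumes hypothetical eligibility rules for a preferred slot, modeled on the Monday-morning example in the table. Because the rules form a simple conjunction, the list of unmet requirements is an exact counterfactual; complex learned models need a separate counterfactual search, and its output is only an approximation.

```python
# Hypothetical eligibility rules: each preferred slot lists (requirement, check) pairs,
# and an agent is eligible only if every check passes.
SLOT_RULES = {
    "mon_am_preferred": [
        ("add the billing skill certification", lambda a: "billing" in a["skills"]),
        ("list Monday morning as available", lambda a: "mon_am" in a["availability"]),
        ("reach 6 months of tenure", lambda a: a["tenure_months"] >= 6),
    ],
}


def explain_slot(agent: dict, slot: str) -> str:
    """Individual-level explanation, plus a counterfactual when the agent is ineligible."""
    unmet = [req for req, check in SLOT_RULES[slot] if not check(agent)]
    if not unmet:
        return f"Eligible for {slot}: every requirement is met."
    # For a conjunction of rules, the unmet requirements are exactly what would
    # need to change for the agent to receive a different outcome.
    return f"Not yet eligible for {slot}. You would become eligible if you: " + "; ".join(unmet) + "."
```

An agent with Monday availability and a year of tenure but no billing skill would receive the counterfactual "add the billing skill certification", matching the Counterfactual row of the table.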

Technical Approaches

- Interpretable models: Using inherently explainable algorithms (decision trees, linear models, rule-based systems) for high-stakes workforce decisions, accepting some accuracy trade-off for transparency

- Post-hoc explanations: SHAP values, LIME, or attention analysis applied to complex models to generate after-the-fact explanations

- Decision logging: Recording not just outcomes but the reasoning chain — inputs, feature weights, alternative options considered, and why the selected option won

- Explanation interfaces: Building agent- and supervisor-facing interfaces that translate model outputs into natural language explanations
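Decision logging is easier to audit when every record carries the same reasoning fields. The sketch below is one hypothetical record layout: inputs, per-feature contributions (for example from an interpretable linear model or post-hoc SHAP values), the scores of all options considered, and the selected option. The class and field names are assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical log entry capturing the reasoning chain, not just the outcome."""
    decision_id: str
    agent_id: str
    model_version: str
    inputs: dict          # raw inputs as seen by the model
    contributions: dict   # feature -> signed contribution to the selected option's score
    alternatives: dict    # option -> score, for every option considered
    selected: str
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def top_factors(self, n=3):
        """Features with the largest absolute contribution, for explanation text."""
        return sorted(self.contributions, key=lambda f: abs(self.contributions[f]), reverse=True)[:n]

    def margin(self):
        """How far the selected option scored above the best alternative (why it won)."""
        others = [s for opt, s in self.alternatives.items() if opt != self.selected]
        return self.alternatives[self.selected] - max(others) if others else None

    def to_json(self):
        return json.dumps(asdict(self), sort_keys=True)
```

Storing the margin alongside the contributions lets a reviewer see whether a decision was clear-cut or a near tie, which matters when a worker contests it.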

Regulatory Landscape

The regulatory environment for workforce AI is rapidly evolving, with significant implications for WFM operations.

EU AI Act

The European Union's AI Act, which entered into force in August 2024, classifies AI systems by risk level. Workforce AI falls squarely in the high-risk category:

- Article 6 & Annex III: AI systems used in "employment, workers management and access to self-employment" are classified as high-risk

- Requirements for high-risk systems: Risk management system, data governance, technical documentation, transparency to users, human oversight capability, accuracy/robustness/cybersecurity standards

- Conformity assessment: Must be completed by the provider before the system is placed on the market or put into service

- Fundamental rights impact assessment: Required before first use for certain deployers of high-risk AI, notably public bodies and private entities providing public services

For WFM specifically, performance evaluation, promotion/termination recommendation, and workforce monitoring systems fall within the high-risk category, and scheduling optimization is likely to qualify as well where it allocates work based on individual behavior or personal characteristics. Organizations operating in the EU or managing EU-based workers must comply by August 2026 for most provisions.

NYC Local Law 144

New York City's Local Law 144 (enforced since July 2023) specifically regulates automated employment decision tools (AEDTs):

- Requires a bias audit by an independent auditor no more than one year before each use of the tool

- Mandates public posting of audit results (summary of disparate impact findings by race/ethnicity and sex)

- Requires notice to candidates and employees subject to AEDT decisions

- Covers any tool that "substantially assists or replaces discretionary decision making"

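While the law's legal scope is limited to hiring and promotion decisions, its principles (independent bias audits, published results, advance notice) are a useful benchmark for any AI tool making employment-affecting decisions, including WFM systems that influence scheduling, performance evaluation, and workforce reduction decisions.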

Emerging US State Regulations

Multiple US states are advancing AI workforce legislation:

- Illinois: The AI Video Interview Act (2020) requires consent and disclosure for AI-analyzed video interviews

- Colorado: The AI Act (SB 24-205, effective 2026) requires deployers of high-risk AI to implement risk management programs, conduct impact assessments, and provide consumer notification

- California, Texas, Connecticut: Active legislative proposals addressing various aspects of workplace AI

EEOC Guidance

The US Equal Employment Opportunity Commission has issued guidance clarifying that existing anti-discrimination laws apply to AI-driven employment decisions:

- Employers remain liable for disparate impact caused by vendor-supplied AI tools

- "I didn't know the algorithm was biased" is not a defense

- Title VII, ADA, and ADEA protections apply regardless of whether decisions are made by humans or algorithms

Bias in WFM Data

Historical data reflects historical discrimination. Training AI systems on biased data perpetuates and scales that bias, a fundamental challenge documented extensively in the algorithmic fairness literature.

Sources of Bias in WFM Data

| Data Source | Bias Mechanism | WFM Impact |
|---|---|---|
| Historical schedules | Reflect past power dynamics and discriminatory assignment patterns | Algorithm learns to replicate unfair shift distribution |
| Performance evaluations | Encode rater biases (halo effect, affinity bias, cultural bias in communication style assessment) | Model amplifies systematic scoring differences across demographic groups |
| Attendance records | Disproportionately penalize workers with caregiving responsibilities, chronic health conditions, or unreliable transportation | Adherence models punish structural disadvantage |
| Tenure/attrition data | Historical attrition patterns reflect past workplace culture, discrimination, and lack of advancement opportunity | Attrition models learn demographic patterns rather than causal factors |
| Customer satisfaction scores | Customer ratings reflect customer biases (documented racial and gender bias in customer surveys) | Agent evaluation systems amplify customer prejudice |

The Measurement Problem

Bias in WFM data is not always visible in standard summary statistics. Techniques required to surface it include:

- Disaggregated analysis: Breaking metrics down by demographic group to reveal disparities hidden in aggregate numbers

- Intersectional analysis: Examining combinations of protected characteristics (e.g., Black women, older workers with disabilities) where bias compounds

- Temporal analysis: Tracking whether algorithmic decisions cause disparities to widen over time through feedback effects

- Counterfactual testing: Systematically varying protected attributes in model inputs to test whether outcomes change
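Disaggregated and intersectional analysis reduce to the same operation: computing an outcome rate per group, where a group is defined by one attribute or by a combination of attributes. The sketch below assumes records are plain dictionaries with hypothetical field names.

```python
from collections import defaultdict


def rates_by_group(records, outcome, *attributes):
    """Outcome rate for each combination of the given attributes.

    `records` is a list of dicts and `outcome` names a boolean field (for example,
    whether the agent received a weekend shift). Passing one attribute gives a
    disaggregated view; passing several gives an intersectional view.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for r in records:
        key = tuple(r[a] for a in attributes)
        totals[key] += 1
        hits[key] += bool(r[outcome])
    return {key: hits[key] / totals[key] for key in totals}
```

In a toy dataset where each sex and each of two hypothetical groups receives weekend shifts at 50%, the intersectional view can still show one combination at 100% and another at 0%, which is exactly the kind of disparity that single-attribute breakdowns hide.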

Algorithmic Auditing for WFM

Proactive algorithmic auditing is the primary mechanism for ensuring WFM AI systems operate fairly. The following framework provides a practical approach for WFM teams.

WFM Algorithmic Audit Framework

Phase 1: Scope and Risk Assessment

- Inventory all AI/ML systems making or influencing workforce decisions

- Classify each system by impact severity (scheduling preference vs. termination recommendation)

- Identify protected characteristics relevant to each system's jurisdiction

- Determine audit frequency based on risk level (quarterly for high-risk, annually for lower-risk)

Phase 2: Data Audit

- Examine training data for representation gaps and historical bias patterns

- Test for proxy variables (features that correlate with protected characteristics)

- Validate data labels — especially human-generated labels like quality scores

- Document data lineage and any preprocessing that may introduce or remove bias
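A first-pass proxy test asks, one feature at a time, how well the feature separates a protected group from everyone else. The sketch below uses a rank-based AUC for that, which is a swapped-in technique rather than one the framework prescribes; the 0.2 distance-from-0.5 cutoff and the feature names are hypothetical. It only checks single features, so a fuller audit would also test whether a model can predict the protected attribute from all features together.

```python
def proxy_auc(feature_values, in_group):
    """Probability that a random group member has a higher feature value than a
    random non-member (ties count half). 0.5 means the feature carries no
    information about group membership; values far from 0.5 suggest a proxy."""
    members = [v for v, g in zip(feature_values, in_group) if g]
    others = [v for v, g in zip(feature_values, in_group) if not g]
    if not members or not others:
        raise ValueError("need at least one member and one non-member")
    wins = 0.0
    for m in members:
        for o in others:
            wins += 1.0 if m > o else 0.5 if m == o else 0.0
    return wins / (len(members) * len(others))


def flag_proxies(features, in_group, threshold=0.2):
    """Return features whose AUC differs from 0.5 by more than `threshold` (assumed cutoff)."""
    flagged = {}
    for name, values in features.items():
        auc = proxy_auc(values, in_group)
        if abs(auc - 0.5) > threshold:
            flagged[name] = round(auc, 3)
    return flagged
```

A schedule adherence feature such as late logins that perfectly separates caregivers from non-caregivers would score an AUC of 1.0 and be flagged, while a feature distributed identically across both groups stays at 0.5.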

Phase 3: Model Audit

- Run disparate impact analysis: for each protected group, compare selection/assignment rates (the 4/5ths rule is a starting heuristic, not a safe harbor)

- Test model performance across demographic subgroups (equal accuracy, equal false positive/negative rates)

- Conduct counterfactual fairness testing: would the decision change if only the protected attribute differed?

- Evaluate explanation quality: are generated explanations faithful to actual model reasoning?
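The first two model-audit checks can be sketched as plain group comparisons. The impact ratio divides each group's rate of a favorable outcome (for example, receiving overtime or a preferred shift) by the highest group's rate, and a ratio below 0.8 fails the four-fifths heuristic; for adverse outcomes such as performance improvement plan triggers, the comparison has to be inverted. Function names and inputs are hypothetical.

```python
def impact_ratios(selected, groups):
    """Each group's favorable-outcome rate divided by the highest group's rate."""
    rates = {}
    for g in set(groups):
        picks = [s for s, grp in zip(selected, groups) if grp == g]
        rates[g] = sum(picks) / len(picks)
    top = max(rates.values())
    return {g: (r / top if top else 1.0) for g, r in rates.items()}


def false_positive_rates(predicted, actual, groups):
    """Per group, the share of actual negatives that the model still flagged."""
    out = {}
    for g in set(groups):
        negatives = [p for p, a, grp in zip(predicted, actual, groups) if grp == g and not a]
        out[g] = sum(negatives) / len(negatives) if negatives else None
    return out
```

Passing the four-fifths check does not imply equal error rates, which is why both functions belong in the same audit: a model can select groups at similar rates while wrongly flagging one group far more often.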

Phase 4: Outcome Audit

- Monitor real-world outcomes by demographic group over time

- Track whether algorithmic recommendations are overridden differently for different groups

- Measure downstream effects: do scheduling patterns correlate with attrition by group? Do performance scores predict promotion equitably?

- Survey affected workers on perceived fairness and transparency
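Tracking overrides by group needs only the paired recommendation and final decision that the oversight log should already hold. The sketch assumes a hypothetical `(group, recommendation, final_decision)` tuple layout. A large gap between groups is a prompt for investigation rather than proof of bias: it may mean reviewers are correcting a biased model for one group, or introducing their own bias.

```python
def override_rates(reviews):
    """Share of algorithmic recommendations overridden by a human, per group.

    `reviews` is a list of (group, recommendation, final_decision) tuples taken
    from the decision log; any mismatch between the two counts as an override.
    """
    totals, overrides = {}, {}
    for group, recommended, final in reviews:
        totals[group] = totals.get(group, 0) + 1
        overrides[group] = overrides.get(group, 0) + (recommended != final)
    return {g: overrides[g] / totals[g] for g in totals}
```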

Phase 5: Remediation and Reporting

- Document findings with specific disparities quantified

- Implement corrections (retraining, constraint modification, feature removal, model replacement)

- Publish summary results appropriate to stakeholder audience

- Schedule follow-up audit to verify remediation effectiveness

Human Oversight Requirements

Human oversight is not merely a regulatory checkbox — it is a fundamental design requirement for ethical AI deployment. The key question is: when must a human review an algorithmic decision?

Decision Impact Framework

| Impact Level | Examples | Oversight Requirement |
|---|---|---|
| Low | Routine schedule optimization within established parameters, break timing suggestions | Automated execution with periodic statistical review |
| Medium | Schedule changes affecting work-life balance, quality score aggregation, coaching recommendations | Human review of exceptions and edge cases; statistical monitoring of aggregate fairness |
| High | Performance improvement plan triggers, overtime denial patterns, shift bid adjudication | Mandatory human review before action; algorithm serves as recommendation only |
| Critical | Termination risk flagging, workforce reduction targeting, promotion/demotion decisions | Algorithm prohibited from making final decision; human decision-maker with full context required |
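Encoding the impact table as an explicit routing function makes the oversight rule testable and auditable instead of a matter of convention. The sketch below uses hypothetical names, and how a medium-impact decision is classified as an exception or edge case is left to the deployment.

```python
from enum import Enum


class Impact(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    CRITICAL = "critical"


def oversight_path(impact: Impact, is_exception: bool = False) -> str:
    """Return the oversight path for one decision, following the impact table."""
    if impact is Impact.LOW:
        return "auto_execute"   # covered by periodic statistical review
    if impact is Impact.MEDIUM:
        # Routine cases run automatically; exceptions and edge cases go to a person.
        return "human_review" if is_exception else "auto_execute"
    if impact is Impact.HIGH:
        return "human_review"   # recommendation only; nothing executes before review
    return "human_decision"     # CRITICAL: the algorithm may not make the decision


def may_auto_execute(impact: Impact, is_exception: bool = False) -> bool:
    return oversight_path(impact, is_exception) == "auto_execute"
```

Placing this check in front of any action that changes a worker's schedule, score, or status means a misclassified or newly added decision type fails closed at the level its impact was assigned.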

Effective Human Oversight

Simply requiring human review is insufficient without the conditions for meaningful oversight:

- Information access: Reviewers must see the algorithm's reasoning, not just its conclusion; otherwise the information asymmetry between system and reviewer reduces review to a formality

- Genuine authority: Overriding the algorithm must be culturally acceptable and practically supported — not just theoretically possible

- Time and capacity: Reviewers must have adequate time to evaluate decisions, not rubber-stamp algorithmic outputs under time pressure

- Training: Human reviewers need training in algorithmic bias, fairness concepts, and the specific system's limitations

- Accountability: Both the algorithmic recommendation and the human review decision must be logged and auditable

Employee Rights and AI

Workers affected by AI-driven WFM decisions have emerging rights that organizations must respect, both as legal obligations and ethical commitments.

Right to Explanation

Workers should understand why algorithmic decisions affect them. This includes:

- What data inputs the system used (and confirming their accuracy)

- What factors most influenced the specific decision

- How the worker can affect future outcomes (actionable feedback)

- What alternatives the system considered

Right to Contest

Meaningful contestation requires:

- A clear process for challenging algorithmic decisions

- Access to a human decision-maker empowered to override the system

- No retaliation for raising algorithmic fairness concerns

- Timely resolution — particularly for scheduling decisions where delay renders the remedy moot

Data Privacy

WFM AI systems consume extensive worker data, raising privacy concerns:

- Data minimization: Collecting only data necessary for the specific WFM function

- Purpose limitation: Data collected for scheduling should not be repurposed for surveillance or performance evaluation without consent

- Retention limits: Historical data used for training should be retained only as long as necessary

- Monitoring boundaries: AI-powered productivity monitoring (keystroke logging, screen capture, location tracking) must be proportionate and disclosed
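Data minimization and purpose limitation can be enforced in code as an allowlist checked wherever a WFM function reads worker data. The purposes and field names below are hypothetical; a real deployment would keep the allowlist in governed configuration and log each access.

```python
# Hypothetical allowlist: which worker data fields each approved purpose may read.
ALLOWED_FIELDS = {
    "scheduling": {"agent_id", "skills", "availability", "contracted_hours"},
    "quality_scoring": {"agent_id", "interaction_id", "qa_score"},
}


def fields_for_purpose(record: dict, purpose: str) -> dict:
    """Return only the fields the stated purpose is approved to use."""
    if purpose not in ALLOWED_FIELDS:
        # Purpose limitation: unapproved uses are refused rather than silently allowed.
        raise PermissionError(f"no approved data scope for purpose '{purpose}'")
    allowed = ALLOWED_FIELDS[purpose]
    return {k: v for k, v in record.items() if k in allowed}
```

With this design, monitoring data such as a keystroke count never reaches the scheduler, and repurposing scheduling data for performance evaluation fails until someone explicitly approves that scope.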

Agent experience research consistently shows that perceived surveillance and algorithmic control correlate with increased stress, reduced job satisfaction, and higher attrition, creating a counterproductive cycle in which the tools meant to optimize workforce outcomes actively degrade them.

Responsible Deployment Framework

The following checklist provides a practical starting point for WFM teams deploying AI systems that affect workers.

Pre-Deployment

- ☐ Impact assessment complete: Documented which workers are affected, how, and what could go wrong

- ☐ Bias audit conducted: Independent audit of training data and model outputs for disparate impact

- ☐ Explainability tested: Individual-level explanations generated and validated for accuracy and comprehensibility

- ☐ Human oversight designed: Clear escalation paths and override mechanisms for each decision type

- ☐ Regulatory compliance verified: System meets requirements for all jurisdictions where affected workers are located

- ☐ Employee notification prepared: Clear communication about what the system does, what data it uses, and how to contest decisions

- ☐ Rollback plan documented: Process for reverting to non-AI decision-making if problems emerge

Deployment

- ☐ Phased rollout: Pilot with monitoring before full deployment

- ☐ Baseline metrics captured: Pre-deployment fairness metrics documented for comparison

- ☐ Feedback channels active: Workers and supervisors can report concerns about algorithmic decisions

- ☐ Monitoring dashboards live: Real-time tracking of decision distributions across demographic groups

Post-Deployment

- ☐ Regular bias audits scheduled: Quarterly for high-impact systems, annually minimum

- ☐ Outcome tracking active: Monitoring downstream effects (attrition, satisfaction, grievances) by group

- ☐ Model drift detection: Alerting when model behavior shifts from validated baseline

- ☐ Periodic stakeholder review: Regular review with affected workers, supervisors, HR, legal, and compliance

- ☐ Continuous improvement: Documented process for incorporating audit findings and worker feedback into system updates
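One common way to implement the model drift alert in the checklist is the population stability index (PSI), which compares the binned distribution of a model input or output against the validated baseline. This is a swapped-in technique, since the framework does not prescribe a drift metric, and the 0.1 and 0.25 thresholds in the comment are widely used rules of thumb rather than a standard.

```python
import math


def population_stability_index(baseline, current, eps=1e-6):
    """PSI between two binned distributions given as lists of proportions.

    Rough convention (assumption, not a standard): below 0.1 is stable,
    0.1 to 0.25 is moderate drift, above 0.25 is significant drift.
    """
    if len(baseline) != len(current):
        raise ValueError("distributions must use the same bins")
    psi = 0.0
    for b, c in zip(baseline, current):
        b, c = max(b, eps), max(c, eps)   # avoid log(0) for empty bins
        psi += (c - b) * math.log(c / b)
    return psi
```

Running the index separately on each demographic group's decision distribution, not only on the aggregate, catches drift that affects one group but washes out overall. For example, a shift from 50/30/20 to 30/30/40 across three outcome bins gives a PSI of about 0.24, in the moderate band.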

This framework aligns with the AI Scaffolding approach to progressive AI deployment, where human oversight starts high and decreases only as system reliability and fairness are demonstrated over time.

Organizational Accountability

Ethical AI in WFM requires clear accountability structures:

- Executive sponsorship: A named executive responsible for AI ethics in workforce decisions

- Cross-functional governance: WFM, HR, Legal, Compliance, IT, and worker representatives involved in AI oversight

- Vendor accountability: Contracts requiring vendors to provide bias audit support, explainability features, and transparency documentation

- Incident response: Defined process for responding to discovered bias, including affected worker notification and remediation

Change management for ethical AI deployment must address not only the technical system but also the cultural shift required — from trusting algorithmic outputs by default to maintaining healthy skepticism and active oversight.

See Also

- Algorithmic Fairness and Bias in Workforce Scheduling

- AI in Workforce Management

- Quality Management

- Performance Management

- Schedule Generation

- AI Scaffolding Framework

- Human AI Supervision and Escalation Frameworks

- Model Evaluation and Validation

- Agent Experience and Wellbeing

- Organizational Change Management for AI Workforce Transitions

- Attrition and Retention

- Workforce Digital Twins and Continuous Planning