Model Evaluation and Validation

Model Evaluation and Validation is the systematic process of assessing whether a machine learning or statistical model performs well enough to support real-world decisions. In workforce management, where models drive staffing levels, agent scheduling, attrition planning, and customer routing, evaluation is not an academic exercise: it determines whether a model creates value or destroys it.

A model that appears accurate on paper may be useless in practice. A volume forecast with 5% MAPE sounds impressive until it consistently underpredicts Monday mornings by 20%, causing chronic understaffing during the week's busiest period. Conversely, a model that looks imperfect by standard metrics may be the best tool available for the decision at hand. Model evaluation bridges the gap between statistical performance and operational impact, and every WFM team deploying AI-driven tools needs a working understanding of how it is done.

The Evaluation Mindset

The single most important principle in model evaluation is this: models are tools, and tools are evaluated by how well they support the decisions they inform.

A forecast model should not be judged solely on whether its mean absolute percentage error is low. It should be judged on whether the staffing plans built from its forecasts lead to acceptable service levels, reasonable occupancy, and manageable shrinkage. A classification model that predicts agent attrition should not be judged solely on overall accuracy. It should be judged on whether acting on its predictions — intervening with at-risk agents, adjusting hiring pipelines — produces better outcomes than not having the model at all.

This decision-centric framing changes how practitioners approach evaluation:

- Choose metrics that align with downstream consequences. If underpredicting volume is three times more costly than overpredicting (because understaffing degrades service far more than overstaffing wastes labor), then a symmetric error metric like MAE may not capture what matters. Bias-aware or asymmetric loss metrics become essential.

- Compare against realistic baselines. A model is not good or bad in isolation. It is better or worse than the alternative. That alternative might be a simpler model, a manual forecast, or the current production system. Always evaluate relative to a baseline.

- Evaluate on data the model has never seen. The most common evaluation mistake is testing a model on the same data used to build it. This produces artificially optimistic results that collapse when the model encounters real-world conditions.

- Evaluate continuously, not once. A model that performed well six months ago may have degraded as patterns shifted. Evaluation is an ongoing practice, not a one-time gate.
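The first point in the list above can be made concrete with a small sketch. It scores two forecasts with an asymmetric cost in which each under-forecast call costs three times as much as an over-forecast call. The function name `asymmetric_cost`, the 3:1 weighting, and the sample volumes are illustrative assumptions, not values from a production system.

```python
def mae(actuals, forecasts):
    """Plain mean absolute error, shown for comparison."""
    return sum(abs(f - a) for a, f in zip(actuals, forecasts)) / len(actuals)


def asymmetric_cost(actuals, forecasts, under_weight=3.0, over_weight=1.0):
    """Average cost per interval, weighting under-forecasts more heavily.

    The weights are assumptions; in practice they would come from the
    relative cost of understaffing versus overstaffing.
    """
    total = 0.0
    for actual, forecast in zip(actuals, forecasts):
        error = forecast - actual
        if error < 0:
            total += under_weight * -error   # under-forecast
        else:
            total += over_weight * error     # over-forecast
    return total / len(actuals)


actuals = [100, 120, 90, 110]
low_model = [90, 110, 80, 100]     # always 10 calls under
high_model = [110, 130, 100, 120]  # always 10 calls over

print("MAE:", mae(actuals, low_model), mae(actuals, high_model))
print("Asymmetric cost:", asymmetric_cost(actuals, low_model),
      asymmetric_cost(actuals, high_model))
```

Both forecasts have the same MAE, but the asymmetric cost separates them, which is the kind of distinction a symmetric metric cannot make.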

The AI Scaffolding Framework provides a broader structure for how AI capabilities should be deployed and governed in WFM environments. Model evaluation is one critical component of that governance.

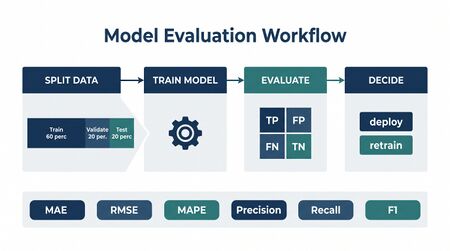

Regression Metrics

Regression metrics apply when a model produces continuous numerical predictions — most commonly in forecasting applications such as contact volume prediction, average handle time estimation, or demand modeling.

Main article: Forecast Accuracy Metrics

Main article: MAPE WAPE and Forecast Bias

Mean Absolute Error (MAE)

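MAE is the average of the absolute differences between predicted and actual values:

$$\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \lvert y_i - \hat{y}_i \rvert$$

Here $y_i$ is the actual value, $\hat{y}_i$ is the predicted value, and $n$ is the number of observations.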

MAE is expressed in the same units as the target variable (e.g., calls, seconds, agents), making it directly interpretable. A MAE of 15 on a call volume forecast means the model is off by 15 calls on average. MAE treats all errors equally regardless of direction — overpredictions and underpredictions of the same magnitude contribute identically.

When to use: MAE is appropriate when errors of all sizes matter roughly equally and when the metric needs to be interpretable in business terms. It is less sensitive to outliers than RMSE.

Mean Absolute Percentage Error (MAPE)

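MAPE expresses error as a percentage of actual values:

$$\mathrm{MAPE} = \frac{100\%}{n} \sum_{i=1}^{n} \left\lvert \frac{y_i - \hat{y}_i}{y_i} \right\rvert$$

Here $y_i$ is the actual value and $\hat{y}_i$ is the predicted value for observation $i$.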

MAPE is the most commonly reported forecast accuracy metric in WFM because it normalizes across different scales: a 5% MAPE is comparable whether the interval averages 200 calls or 2,000 calls. However, MAPE has well-documented problems: it is undefined when actual values are zero, it penalizes overprediction more heavily than underprediction (for a non-negative forecast, an underprediction can never exceed 100% error, while an overprediction is unbounded), and it can produce misleadingly high values for low-volume intervals, where the denominator is small.[1]

When to use: MAPE works well for reporting high-level forecast quality to stakeholders who understand percentages. Avoid it for low-volume intervals or when zero values are common.

Weighted Absolute Percentage Error (WAPE)

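WAPE (sometimes called weighted MAPE) addresses MAPE's distortion on low-volume intervals by weighting errors by volume:

$$\mathrm{WAPE} = \frac{\sum_{i=1}^{n} \lvert y_i - \hat{y}_i \rvert}{\sum_{i=1}^{n} \lvert y_i \rvert} \times 100\%$$

Here $y_i$ is the actual value and $\hat{y}_i$ is the predicted value for interval $i$.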

WAPE gives more influence to high-volume intervals, which typically have greater operational impact. A 50% miss on a 10-call interval matters less than a 10% miss on a 500-call interval, and WAPE reflects this.

When to use: WAPE is generally preferred over MAPE for WFM forecast evaluation, particularly when the forecast spans intervals with widely varying volumes.
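A minimal sketch of MAE, MAPE, and WAPE follows, applied to the two-interval example mentioned above (a 50% miss on a 10-call interval and a 10% miss on a 500-call interval). The function names and the choice to raise an error on zero actuals are assumptions made for illustration.

```python
def mae(actuals, forecasts):
    """Mean absolute error, in the units of the target."""
    return sum(abs(a - f) for a, f in zip(actuals, forecasts)) / len(actuals)


def mape(actuals, forecasts):
    """Mean of per-interval percentage errors."""
    if any(a == 0 for a in actuals):
        raise ValueError("MAPE is undefined when an actual value is zero")
    ratios = [abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)]
    return 100.0 * sum(ratios) / len(ratios)


def wape(actuals, forecasts):
    """Total absolute error as a percentage of total actual volume."""
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return 100.0 * total_error / sum(abs(a) for a in actuals)


actuals = [10, 500]      # one low-volume and one high-volume interval
forecasts = [15, 450]    # 50% miss on the first, 10% miss on the second

print("MAE :", mae(actuals, forecasts))
print("MAPE:", round(mape(actuals, forecasts), 2))
print("WAPE:", round(wape(actuals, forecasts), 2))
```

MAPE averages the two percentage errors equally, so the small interval dominates. WAPE stays close to the error on the high-volume interval.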

Root Mean Squared Error (RMSE)

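RMSE squares errors before averaging, then takes the square root:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2}$$

Here $y_i$ is the actual value and $\hat{y}_i$ is the predicted value for observation $i$.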

The squaring means large errors are penalized disproportionately. A single interval where the forecast is off by 100 calls contributes far more to RMSE than ten intervals where the forecast is off by 10 calls each — even though the total absolute error is the same.

When to use: RMSE is appropriate when large errors are particularly costly. In WFM, a forecast miss of 200 calls in a single interval is often more damaging than twenty intervals each off by 10, because the concentrated miss may cause a service level breach. RMSE captures this disproportionate cost of large misses, although like MAE it treats overprediction and underprediction identically.
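The effect of squaring can be checked with a short sketch. It compares a ten-interval window containing one 100-call miss with a window of ten 10-call misses. The error lists are made-up values for illustration.

```python
import math


def rmse(errors):
    """Root mean squared error from a list of signed errors."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def mae_from_errors(errors):
    """Mean absolute error from a list of signed errors."""
    return sum(abs(e) for e in errors) / len(errors)


concentrated = [100] + [0] * 9   # one interval off by 100 calls
spread = [10] * 10               # ten intervals off by 10 calls each

print("MAE :", mae_from_errors(concentrated), mae_from_errors(spread))
print("RMSE:", round(rmse(concentrated), 2), round(rmse(spread), 2))
```

Both windows have the same MAE, while the RMSE of the concentrated miss is roughly three times larger.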

Forecast Bias

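Bias measures the systematic tendency of a model to overpredict or underpredict:

$$\mathrm{Bias} = \frac{1}{n} \sum_{i=1}^{n} (\hat{y}_i - y_i)$$

Here $\hat{y}_i$ is the predicted value and $y_i$ is the actual value, so errors are signed as forecast minus actual.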

A positive bias means the model tends to overpredict; negative means it underpredicts. In WFM, forecast bias directly translates to chronic over- or understaffing. A forecast with low MAPE but consistent negative bias will systematically understaff, eroding service levels even though the "accuracy" number looks good.

Bias should always be evaluated alongside accuracy metrics. See MAPE WAPE and Forecast Bias for detailed treatment of bias decomposition and correction strategies.
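As a small illustration, the sketch below computes bias as the mean of forecast minus actual, matching the sign convention above (positive means overprediction). The two forecasts are hypothetical and were chosen so that both have the same MAE.

```python
def forecast_bias(actuals, forecasts):
    """Mean signed error (forecast minus actual)."""
    return sum(f - a for a, f in zip(actuals, forecasts)) / len(actuals)


def mae(actuals, forecasts):
    """Mean absolute error."""
    return sum(abs(f - a) for a, f in zip(actuals, forecasts)) / len(actuals)


actuals = [200, 220, 180, 210]
always_low = [190, 209, 171, 200]   # every interval under-forecast
mixed = [210, 209, 189, 200]        # misses in both directions

for name, forecasts in [("always_low", always_low), ("mixed", mixed)]:
    print(name, "MAE:", mae(actuals, forecasts),
          "bias:", forecast_bias(actuals, forecasts))
```

The two forecasts are indistinguishable by MAE, but only the first one would lead to chronic understaffing.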

Classification Metrics

Classification metrics apply when a model assigns inputs to discrete categories — attrition risk (stay/leave), call intent (billing/support/sales), quality scores (pass/fail), or sentiment (positive/negative/neutral).

Accuracy

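Accuracy is the proportion of correct predictions:

$$\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

Here $TP$, $TN$, $FP$, and $FN$ are the counts of true positives, true negatives, false positives, and false negatives.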

Accuracy is intuitive but dangerously misleading when classes are imbalanced. If 95% of agents do not attrite in a given quarter, a model that predicts "no attrition" for everyone achieves 95% accuracy while being completely useless — it identifies zero at-risk agents.

When to use: Only when classes are roughly balanced and all types of errors are equally costly. Rarely appropriate as a primary metric in WFM.
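The imbalance problem described above is easy to reproduce. This sketch builds a hypothetical population of 100 agents in which 5 leave, then scores a "model" that predicts no attrition for everyone.

```python
actual = [1] * 5 + [0] * 95   # 1 = agent left, 0 = agent stayed
predicted = [0] * 100         # always predict "no attrition"

accuracy = sum(a == p for a, p in zip(actual, predicted)) / len(actual)
leavers_identified = sum(1 for a, p in zip(actual, predicted)
                         if a == 1 and p == 1)

print("accuracy:", accuracy)
print("at-risk agents identified:", leavers_identified)
```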

Precision and Recall

Precision and recall address the shortcomings of accuracy by focusing on specific classes:

- Precision (positive predictive value): Of all predictions for a given class, what fraction was correct? If the model flags 100 agents as attrition risks and 40 actually leave, precision is 40%.

- Recall (sensitivity, true positive rate): Of all actual instances of a class, what fraction did the model identify? If 60 agents actually leave and the model flagged 40 of them, recall is 67%.

The precision-recall tradeoff is central to WFM decision-making. Consider an attrition prediction model:

- High precision, low recall: The model flags few agents, but those it flags are very likely to leave. You can target interventions confidently but will miss many at-risk agents.

- High recall, low precision: The model catches most at-risk agents but also flags many who would have stayed. Interventions reach the right people but also waste resources on false alarms.

Which tradeoff is appropriate depends on the cost structure. If retention interventions are cheap (a conversation with a manager), high recall is preferable — cast a wide net. If interventions are expensive (retention bonuses, schedule accommodations), high precision matters more — spend resources only where they will have impact.
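The tradeoff can be seen by moving the decision threshold on a scored list of agents. The sketch below is illustrative only: the labels, scores, and the two thresholds are invented, and `precision_recall` is a hypothetical helper name.

```python
def precision_recall(labels, scores, threshold):
    """Precision and recall for the positive class at a given threshold."""
    tp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 1)
    fp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 0)
    fn = sum(1 for y, s in zip(labels, scores) if s < threshold and y == 1)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# 1 = agent left, 0 = agent stayed; scores are predicted attrition risk.
labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.55, 0.3, 0.6, 0.4, 0.35, 0.2, 0.1, 0.05]

strict = precision_recall(labels, scores, threshold=0.7)
loose = precision_recall(labels, scores, threshold=0.25)
print("threshold 0.70 -> precision, recall:", strict)
print("threshold 0.25 -> precision, recall:", loose)
```

The strict threshold flags only agents who actually leave but misses half of them. The loose threshold catches every leaver at the cost of several false alarms.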

F1 Score

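F1 is the harmonic mean of precision and recall:

$$F_1 = 2 \cdot \frac{\mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$$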

F1 balances precision and recall into a single number. It is useful for comparing models but obscures the underlying tradeoff. When the business cost of false positives differs from the cost of false negatives — which is nearly always the case in WFM — reporting precision and recall separately is more informative than F1 alone.

Confusion Matrices

A confusion matrix provides the complete picture of a classifier's performance by tabulating predictions against actual outcomes:

| | Predicted: Stay | Predicted: Leave |
|---|---|---|
| Actual: Stay | True Negative (TN): 850 | False Positive (FP): 50 |
| Actual: Leave | False Negative (FN): 20 | True Positive (TP): 80 |

From this matrix, all other classification metrics can be derived:

- Precision = TP / (TP + FP) = 80 / 130 = 61.5%

- Recall = TP / (TP + FN) = 80 / 100 = 80.0%

- Accuracy = (TP + TN) / Total = 930 / 1000 = 93.0%

The confusion matrix reveals what summary metrics hide. In this example, accuracy looks excellent at 93%, but 20 agents who left were not identified — potentially 20 avoidable departures. Whether that matters depends on the cost of attrition versus the cost of intervention.
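The derivations above can be reproduced directly from the four cell counts. The sketch also computes F1 from the same counts, which the worked list does not show. The variable names are arbitrary.

```python
# Cell counts from the example confusion matrix.
tn, fp, fn, tp = 850, 50, 20, 80

precision = tp / (tp + fp)
recall = tp / (tp + fn)
accuracy = (tp + tn) / (tp + tn + fp + fn)
f1 = 2 * precision * recall / (precision + recall)

print(f"precision={precision:.3f} recall={recall:.3f} "
      f"accuracy={accuracy:.3f} f1={f1:.3f}")
```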

For multi-class problems — such as call intent classification with dozens of categories — confusion matrices become large but remain the most complete evaluation tool. Heatmap visualizations help identify which categories the model confuses most frequently.

ROC Curves and AUC

When a classifier outputs probability scores rather than hard categories, the Receiver Operating Characteristic (ROC) curve plots true positive rate against false positive rate across all possible classification thresholds. The Area Under the Curve (AUC) summarizes this into a single number between 0 and 1, where 0.5 corresponds to random guessing and 1.0 to perfect classification; values below 0.5 indicate a model that ranks worse than chance.

AUC is useful for comparing models independent of threshold choice but can be misleading when class imbalance is severe — a common situation in WFM problems like fraud detection or rare-event prediction.[2] In such cases, the precision-recall curve provides a more informative view.
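One way to build intuition for AUC is its ranking interpretation: it equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as half. The sketch below computes it by brute-force pairwise comparison, which is fine for small samples but not how a library would implement it. The labels and scores are invented.

```python
def auc(labels, scores):
    """AUC via pairwise comparison of positive and negative scores."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.55, 0.3, 0.6, 0.4, 0.35, 0.2, 0.1, 0.05]

print("AUC:", round(auc(labels, scores), 3))
print("AUC with uninformative constant scores:", auc(labels, [0.5] * 10))
```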

Train/Validation/Test Split

A model's performance on the data it was trained on is not a meaningful measure of its quality. A sufficiently complex model can memorize training data perfectly — achieving zero error on known examples while failing catastrophically on new data. This phenomenon is called overfitting, and guarding against it is the primary purpose of data splitting.[3]

The standard approach divides available data into three partitions:

- Training set (typically 60-70%): Used to fit the model — the model learns patterns from this data.

- Validation set (typically 15-20%): Used during development to tune model parameters and compare candidate models. The model never trains on this data, so validation performance approximates real-world performance.

- Test set (typically 15-20%): Held out entirely until final evaluation. The test set provides an unbiased estimate of how the model will perform in production. It should be used only once — repeated testing on the same test set introduces optimistic bias.

In WFM, a practical analogy: training data is the calls you staffed and observed. Validation data is next week's calls that you forecast for but haven't seen yet. Test data is next month — a completely unseen period that reveals whether your model generalizes.

The cardinal rule: information from the test set must never influence model development. If you peek at test results and adjust your model, the test set becomes a second validation set, and you no longer have an unbiased estimate of production performance.

For time-series applications common in WFM — contact volume forecasting, handle time prediction, arrival pattern modeling — random splitting violates temporal ordering. A model should never be trained on future data and tested on past data. Instead, splits must respect chronology: train on January-June, validate on July-August, test on September-October. This is called a temporal split or walk-forward split.
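A chronological split like the January-June / July-August / September-October example can be sketched as follows. The function name `temporal_split`, the year, and the monthly volumes are placeholders for illustration.

```python
from datetime import date


def temporal_split(records, val_start, test_start):
    """Split (day, value) records chronologically at two cut-off dates."""
    ordered = sorted(records, key=lambda r: r[0])
    train = [r for r in ordered if r[0] < val_start]
    val = [r for r in ordered if val_start <= r[0] < test_start]
    test = [r for r in ordered if r[0] >= test_start]
    return train, val, test


# Hypothetical monthly volumes for January through October.
records = [(date(2024, month, 1), 1000 + 10 * month) for month in range(1, 11)]

train, val, test = temporal_split(records, date(2024, 7, 1), date(2024, 9, 1))
print(len(train), len(val), len(test))
```

Because the cut-offs are dates rather than random indices, every validation record is later than every training record, and every test record is later than both.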

Cross-Validation

When data is limited — common with newer contact centers, seasonal products, or emerging channels — a single train/validation split may produce unreliable estimates. Cross-validation addresses this by systematically rotating which data serves as training and which serves as validation.

K-Fold Cross-Validation

In k-fold cross-validation, data is divided into k equally sized partitions (folds). The model is trained k times, each time using k-1 folds for training and the remaining fold for validation. The final performance estimate is the average across all k validation results.

Common choices are k = 5 or k = 10. Higher values of k produce less biased estimates but require more computation.
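The fold rotation can be sketched without any library. The example below uses contiguous folds and a deliberately trivial "model" (the training mean) so that the mechanics stay visible. Both function names are hypothetical, and for non-temporal data a real implementation would normally shuffle the rows before assigning folds.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, val_idx) pairs for k contiguous folds."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        val_idx = list(range(start, start + size))
        train_idx = [i for i in range(n_samples)
                     if i < start or i >= start + size]
        yield train_idx, val_idx
        start += size


def cross_validate_mean_model(values, k):
    """Average validation MAE of a model that predicts the training mean."""
    fold_scores = []
    for train_idx, val_idx in k_fold_indices(len(values), k):
        prediction = sum(values[i] for i in train_idx) / len(train_idx)
        fold_mae = sum(abs(values[i] - prediction) for i in val_idx) / len(val_idx)
        fold_scores.append(fold_mae)
    return sum(fold_scores) / len(fold_scores)


handle_times = [310, 295, 305, 320, 300, 290, 315, 308, 298, 302]
print("5-fold MAE:", round(cross_validate_mean_model(handle_times, k=5), 2))
```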

Time-Series Cross-Validation

Standard k-fold cross-validation cannot be applied to time-series data because random assignment to folds breaks temporal order — a model might train on December data and validate on October data, learning patterns it shouldn't yet have access to.

Time-series cross-validation (also called rolling-origin or expanding-window cross-validation) preserves temporal ordering:[4]

- Train on months 1-3, validate on month 4

- Train on months 1-4, validate on month 5

- Train on months 1-5, validate on month 6

- Continue expanding the training window

This approach mirrors how WFM forecasting actually works — models are built on historical data and used to predict the next unseen period. Each fold adds one more period of training data.

A variant called sliding window cross-validation uses a fixed-size training window that moves forward, discarding the oldest data at each step. This is appropriate when older data is less relevant — for example, when contact patterns have shifted due to product changes or channel migration.
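Both schemes can be expressed as functions that return the period numbers used for training and validation in each fold. This is a minimal sketch: the function names and the one-period validation horizon are assumptions.

```python
def expanding_window_splits(n_periods, initial_train, horizon=1):
    """Training window grows by `horizon` periods each fold (1-based periods)."""
    splits = []
    train_end = initial_train
    while train_end + horizon <= n_periods:
        train = list(range(1, train_end + 1))
        validate = list(range(train_end + 1, train_end + horizon + 1))
        splits.append((train, validate))
        train_end += horizon
    return splits


def sliding_window_splits(n_periods, window, horizon=1):
    """Fixed-size training window that drops the oldest period each fold."""
    splits = []
    train_end = window
    while train_end + horizon <= n_periods:
        train = list(range(train_end - window + 1, train_end + 1))
        validate = list(range(train_end + 1, train_end + horizon + 1))
        splits.append((train, validate))
        train_end += horizon
    return splits


for train, validate in expanding_window_splits(n_periods=6, initial_train=3):
    print("expanding: train", train, "validate", validate)
for train, validate in sliding_window_splits(n_periods=6, window=3):
    print("sliding:   train", train, "validate", validate)
```

With six months of data and three initial training months, the expanding version reproduces the month 1-3, 1-4, 1-5 sequence listed above.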

For deterministic and probabilistic models alike, the choice of cross-validation strategy materially affects evaluation quality. Using standard k-fold on time-series data routinely produces accuracy estimates that are 10-30% more optimistic than actual production performance.

A/B Testing and Champion-Challenger

Offline evaluation — however carefully conducted — cannot fully predict how a model will perform in production. Customer behavior, operational processes, and data pipelines all introduce variability that historical test sets cannot capture. A/B testing and champion-challenger frameworks evaluate models under real operating conditions.

Champion-Challenger Design

In WFM platform contexts, the champion-challenger pattern is the standard approach:

- Champion: The current production model (or manual process). All decisions are currently made using this approach.

- Challenger: A candidate replacement model. The hypothesis is that it will produce better decisions.

The simplest implementation runs both models in parallel on the same inputs and compares outputs without acting on the challenger's predictions — a shadow deployment. This reveals whether the challenger produces different predictions and whether those differences, had they been acted upon, would have improved outcomes.

A more rigorous implementation actually splits traffic or decision-making between champion and challenger. In WFM, this might mean:

- Using the champion forecast for some queues and the challenger forecast for others

- Applying the champion attrition model to half of a site's agents and the challenger to the other half

- Routing a percentage of scheduling decisions through the challenger algorithm

The critical requirement is that the split is randomized (or at least controlled for confounders) so that differences in outcomes can be attributed to the model rather than to differences in the populations being served.
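A randomized split of units such as queues or agents can be sketched as below. The function name, the fixed seed, and the queue names are illustrative assumptions. A real design might also stratify by site or queue size to control for confounders, which this sketch does not do.

```python
import random


def randomize_assignment(units, seed=42):
    """Randomly split units between champion and challenger groups."""
    shuffled = list(units)
    random.Random(seed).shuffle(shuffled)   # seeded for reproducibility
    half = len(shuffled) // 2
    return {
        "champion": sorted(shuffled[:half]),
        "challenger": sorted(shuffled[half:]),
    }


queues = ["billing", "sales", "support", "retention", "tech", "chat"]
groups = randomize_assignment(queues)
print(groups)
```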

Statistical Significance

A/B tests must run long enough and on large enough samples to produce statistically significant results. In WFM, this often means weeks or months — volume patterns are cyclical, and a model that looks better during a quiet week may fail during peak periods. Premature conclusions from A/B tests are a common source of poor model selection decisions.
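One possible way to check significance when both models are scored on the same days is a paired sign-flip permutation test on the daily error differences. This is a sketch of one technique among several (a paired t-test would be another), not a prescribed method. The function name, the seed, and the error values are all hypothetical.

```python
import random


def paired_permutation_p_value(champion_errors, challenger_errors,
                               n_permutations=10000, seed=0):
    """Two-sided p-value for the mean paired difference via random sign flips."""
    diffs = [c - d for c, d in zip(champion_errors, challenger_errors)]
    observed = abs(sum(diffs) / len(diffs))
    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_permutations):
        flipped = [d if rng.random() < 0.5 else -d for d in diffs]
        if abs(sum(flipped) / len(flipped)) >= observed:
            extreme += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (extreme + 1) / (n_permutations + 1)


# Hypothetical daily absolute errors; the challenger is 3 calls better each day.
champion = [20 + (day % 5) for day in range(28)]
challenger = [error - 3 for error in champion]

p_four_weeks = paired_permutation_p_value(champion, challenger)
p_three_days = paired_permutation_p_value(champion[:3], challenger[:3])
print("28 days:", p_four_weeks, " 3 days:", p_three_days)
```

The same per-day improvement is convincing over four weeks but not over three days, which is the practical meaning of running the test long enough.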

Practical Considerations

A/B testing in WFM has constraints that differ from web experimentation:

- Spillover effects: Scheduling decisions are interdependent. Changing how one group of agents is scheduled affects available coverage for other groups.

- Ethical considerations: If a challenger model systematically understaffs, service degrades for real customers. Guardrails and early stopping rules are essential.

- Measurement lag: The outcome of a staffing decision made today may not be measurable until weeks later (e.g., attrition driven by chronic overwork).

Evaluating Probabilistic Models

Traditional accuracy metrics evaluate point predictions — single-number forecasts. Probabilistic models output distributions, prediction intervals, or probability scores, requiring different evaluation approaches.

Calibration

A probabilistic model is well-calibrated if its predicted probabilities match observed frequencies. If the model assigns 70% probability to an event, that event should occur roughly 70% of the time across all instances where 70% was predicted.

Calibration is assessed using reliability diagrams (or calibration plots), which bin predictions by predicted probability and compare against observed frequency within each bin. A perfectly calibrated model produces a diagonal line.

In WFM, calibration matters for decisions that depend on probability thresholds. An attrition model that says "this agent has 80% chance of leaving" should be reliable — if 80% predictions only materialize 50% of the time, interventions will be misallocated.
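The numbers behind a reliability diagram can be produced by binning predictions and comparing the mean predicted probability with the observed frequency in each bin. The sketch below uses equal-width bins and invented data in which the 80% predictions come true only half the time. `calibration_table` is a hypothetical helper name.

```python
def calibration_table(probabilities, outcomes, n_bins=5):
    """Return (mean predicted, observed frequency, count) per non-empty bin."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probabilities, outcomes):
        index = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[index].append((p, y))
    table = []
    for members in bins:
        if members:
            mean_predicted = sum(p for p, _ in members) / len(members)
            observed = sum(y for _, y in members) / len(members)
            table.append((mean_predicted, observed, len(members)))
    return table


# Ten agents scored 0.2 (two left) and ten scored 0.8 (five left).
probabilities = [0.2] * 10 + [0.8] * 10
outcomes = [1, 1] + [0] * 8 + [1] * 5 + [0] * 5

for mean_predicted, observed, count in calibration_table(probabilities, outcomes):
    print(f"predicted {mean_predicted:.2f}  observed {observed:.2f}  n={count}")
```

The low-risk bin sits on the diagonal, while the high-risk bin falls well below it, showing overconfident predictions.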

Prediction Interval Coverage

For forecasting models that produce prediction intervals (e.g., "volume will be between 180 and 220 calls with 90% confidence"), the key evaluation metric is coverage — the percentage of actual values that fall within the stated interval. A 90% prediction interval should contain the actual value approximately 90% of the time.

Undercoverage (actual values fall outside intervals more often than expected) means the model is overconfident. Overcoverage means the model is underconfident and the intervals are too wide to be useful. Both are problems, but undercoverage is typically more dangerous in WFM because it means staffing plans based on the intervals provide less cushion than expected.[5]
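Empirical coverage is simply the share of actual values that land inside their intervals. In this sketch the interval bounds reuse the 180 to 220 example above, and the actual volumes are invented so that three of ten fall outside.

```python
def interval_coverage(actuals, lowers, uppers):
    """Fraction of actual values inside [lower, upper]."""
    inside = sum(1 for a, lo, hi in zip(actuals, lowers, uppers) if lo <= a <= hi)
    return inside / len(actuals)


actuals = [195, 205, 230, 188, 210, 175, 199, 221, 202, 190]
lowers = [180] * 10
uppers = [220] * 10

nominal = 0.90
empirical = interval_coverage(actuals, lowers, uppers)
print("nominal:", nominal, "empirical:", empirical)
if empirical < nominal:
    print("undercoverage: intervals are narrower than the stated confidence implies")
```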

Brier Score

The Brier score evaluates probability predictions for binary outcomes:

$$\mathrm{BS} = \frac{1}{n} \sum_{i=1}^{n} (p_i - o_i)^2$$

where $p_i$ is the predicted probability and $o_i$ is the actual outcome (0 or 1) for instance $i$. Brier scores range from 0 (perfect) to 1 (worst). The score can be decomposed into calibration, resolution, and uncertainty components, making it useful for diagnosing why a probability model is performing poorly.
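Computed directly, the Brier score is the mean squared difference between each predicted probability and the 0/1 outcome. A minimal sketch with made-up predictions:

```python
def brier_score(probabilities, outcomes):
    """Mean squared difference between predicted probability and outcome."""
    squared = [(p - o) ** 2 for p, o in zip(probabilities, outcomes)]
    return sum(squared) / len(squared)


outcomes = [1, 0, 1, 0]
print("confident and right:", brier_score([1.0, 0.0, 1.0, 0.0], outcomes))
print("always 0.5         :", brier_score([0.5, 0.5, 0.5, 0.5], outcomes))
print("confident and wrong:", brier_score([0.0, 1.0, 0.0, 1.0], outcomes))
```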

Sharpness

Sharpness measures how narrow (concentrated) a model's predicted distributions are. A model that predicts "volume will be between 0 and 10,000 calls" for every interval will never miss, because actual values always fall in that range, but it is useless because the intervals are too wide to inform decisions. Sharpness ensures that good coverage is not achieved trivially. The goal is "calibrated sharpness": prediction intervals that are as narrow as possible while maintaining correct coverage.
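A simple proxy for sharpness is the mean interval width, read alongside coverage. The sketch below compares the trivially wide interval from the example above with a narrower one on the same invented actuals. The helper names are hypothetical.

```python
def interval_coverage(actuals, lowers, uppers):
    """Fraction of actual values inside [lower, upper]."""
    inside = sum(1 for a, lo, hi in zip(actuals, lowers, uppers) if lo <= a <= hi)
    return inside / len(actuals)


def mean_interval_width(lowers, uppers):
    """Average width of the prediction intervals (smaller is sharper)."""
    return sum(hi - lo for lo, hi in zip(lowers, uppers)) / len(lowers)


actuals = [195, 205, 210, 190]
wide = ([0] * 4, [10000] * 4)      # "between 0 and 10,000 calls"
narrow = ([180] * 4, [220] * 4)    # "between 180 and 220 calls"

for name, (lowers, uppers) in [("wide", wide), ("narrow", narrow)]:
    print(name,
          "coverage:", interval_coverage(actuals, lowers, uppers),
          "mean width:", mean_interval_width(lowers, uppers))
```

Both sets of intervals contain every actual value here, so only the width distinguishes the useful forecast from the useless one.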

Evaluating AI Agent Performance

AI agents deployed in contact centers — chatbots, virtual assistants, automated resolution systems — require evaluation frameworks that differ fundamentally from statistical models. An AI agent is not producing a number or a classification; it is conducting a conversation and attempting to resolve a customer need.

Main article: AI Containment Rate and Its Workforce Implications

Core Agent Metrics

- Containment rate: The percentage of interactions fully resolved by the AI agent without human escalation. This is the primary efficiency metric but must be balanced against quality — an agent that refuses to escalate difficult cases may report high containment while delivering poor resolution.

- Resolution accuracy: The percentage of contained interactions where the customer's issue was actually resolved. Measured through follow-up contact analysis (did the customer call back about the same issue?) and disposition auditing.

- Customer satisfaction (CSAT): Post-interaction survey scores for AI-handled contacts versus human-handled contacts. Significant CSAT gaps indicate the AI agent is delivering inferior experiences even when it "resolves" the issue.

- Escalation appropriateness: Of the interactions the AI agent escalated, what percentage genuinely required human handling? Over-escalation wastes agent capacity; under-escalation frustrates customers.
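Three of the metrics above can be computed from labeled interaction records, as in the sketch below. The record fields (`escalated`, `resolved`, `needed_human`) are hypothetical; in practice they would come from follow-up contact analysis and disposition audits. CSAT is omitted because it depends on survey data.

```python
def agent_metrics(interactions):
    """Containment, resolution accuracy, and escalation appropriateness."""
    contained = [i for i in interactions if not i["escalated"]]
    escalated = [i for i in interactions if i["escalated"]]
    return {
        "containment_rate": len(contained) / len(interactions),
        "resolution_accuracy": (
            sum(i["resolved"] for i in contained) / len(contained)
            if contained else 0.0
        ),
        "escalation_appropriateness": (
            sum(i["needed_human"] for i in escalated) / len(escalated)
            if escalated else 0.0
        ),
    }


# Ten hypothetical interactions with audited labels.
interactions = (
    [{"escalated": False, "resolved": True, "needed_human": False}] * 5
    + [{"escalated": False, "resolved": False, "needed_human": True}] * 2
    + [{"escalated": True, "resolved": False, "needed_human": True}] * 2
    + [{"escalated": True, "resolved": False, "needed_human": False}] * 1
)

metrics = agent_metrics(interactions)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

In this sample the containment rate looks healthy, but two of the seven contained interactions were not actually resolved, which is why containment should be read together with resolution accuracy.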

Evaluation Challenges

AI agent evaluation introduces challenges that traditional model evaluation does not face:

- No single ground truth: A forecast can be scored against one observed actual value. A conversational AI agent may resolve an issue through multiple valid paths, making "correct" behavior harder to define.

- Interaction quality is subjective: Two agents might resolve the same issue — one with a terse, transactional exchange and another with an empathetic, thorough conversation. Both succeed on resolution accuracy but differ on experience quality.

- Long-tail failures: AI agents may handle 95% of cases well but fail catastrophically on edge cases. Evaluation must sample across the full distribution of interaction types, not just aggregate metrics.

Fairness and bias considerations extend to AI agents as well — evaluation should verify that agent performance does not systematically vary across customer demographics, languages, or issue types.

Common Evaluation Mistakes

Even experienced WFM teams make evaluation errors that lead to overconfidence in model quality or selection of inferior models. The most prevalent mistakes are:

Evaluating on Training Data

Testing a model on the same data it was trained on produces artificially inflated performance. A complex model can memorize training examples, reporting near-perfect accuracy that evaporates on new data. This is the most fundamental evaluation error and remains surprisingly common when teams lack formal data science practices.

Ignoring Class Imbalance

In WFM, many classification problems are heavily imbalanced — attrition affects 5-15% of agents per quarter, fraud occurs in a fraction of a percent of transactions, and service-level breaches happen on a minority of intervals. Reporting overall accuracy on imbalanced problems is meaningless. A model that predicts the majority class for every input achieves high accuracy while providing zero value.

Always use class-specific metrics (precision, recall, F1 per class) or metrics designed for imbalanced settings (AUC, average precision) when evaluating imbalanced classification problems.

Optimizing the Wrong Metric

A model tuned to minimize MAPE may produce forecasts with significant bias, because MAPE does not penalize systematic directional error. A model tuned to maximize F1 may sacrifice precision for recall (or vice versa) in ways that do not align with operational cost structures. Always verify that the optimization objective aligns with the business decision the model supports.

Comparing Models on Different Test Sets

If Model A was evaluated on Q3 data and Model B on Q4 data, their performance numbers are not comparable. Q4 may include holiday patterns, volume spikes, or behavioral changes that make it inherently harder (or easier) to predict. Valid model comparison requires evaluation on identical test sets under identical conditions.

Ignoring Temporal Dynamics

A model evaluated on a random holdout sample of historical data may appear to perform well because it has access to information from adjacent time periods. When deployed on truly future data, performance degrades. Time-series problems require temporal evaluation strategies — always test on data that is chronologically after the training period.

Survivorship Bias in Agent Models

Attrition prediction models are sometimes evaluated only on agents who completed a full observation period, excluding those who left early in the window. This biases evaluation because the excluded agents were often the easiest to predict. The model appears better than it would perform on the full population.

Building an Evaluation Practice

Model evaluation should not be an event that happens once during deployment. It should be an ongoing operational practice integrated into WFM operations.

Evaluation Cadence

Establish a regular schedule for model performance review:

- Daily: Automated metric tracking for production models. Dashboard visibility into key performance indicators.

- Weekly: Review of model performance against baseline. Flag intervals or segments where performance has degraded.

- Monthly: Comprehensive evaluation including bias analysis, segment-level performance, and comparison against updated baselines.

- Quarterly: Full model audit including re-evaluation on fresh test data, assessment of whether the model still aligns with current business objectives, and consideration of retraining or replacement.

Automated Monitoring

Production models should be monitored continuously with automated alerting:

- Performance degradation alerts: Trigger when rolling accuracy metrics fall below a threshold (e.g., MAPE exceeds 15% over a 7-day window).

- Data drift detection: Monitor input data distributions for shifts that may invalidate model assumptions. If call volume patterns, handle time distributions, or customer demographics change significantly, the model may need retraining even if current accuracy appears acceptable.[6]

- Prediction distribution monitoring: Track whether the model's output distribution has shifted. A forecast model that suddenly predicts much higher or lower volumes than historical norms may be reacting to a data pipeline issue rather than a genuine pattern change.
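The first alert type can be sketched as a trailing-window check. The function below flags each day on which the MAPE over the previous seven days exceeds 15%, matching the example threshold above. The function name and the sample data are assumptions, and the sketch assumes no actual value is zero.

```python
def rolling_mape_alerts(actuals, forecasts, window=7, threshold=15.0):
    """Return day indices where the trailing-window MAPE exceeds threshold."""
    ape = [100.0 * abs(a - f) / a for a, f in zip(actuals, forecasts)]
    alerts = []
    for end in range(window, len(ape) + 1):
        rolling = sum(ape[end - window:end]) / window
        if rolling > threshold:
            alerts.append(end - 1)   # index of the last day in the window
    return alerts


# Hypothetical daily volumes: forecasts are 5% off for a week, then 30% off.
actuals = [100] * 14
forecasts = [95] * 7 + [70] * 7

alerts = rolling_mape_alerts(actuals, forecasts)
print("alert days (0-based):", alerts)
```

Because the metric is averaged over a window, the alert fires a few days after the degradation begins rather than on the first bad day. A shorter window reacts faster but is noisier.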

Retraining Triggers

Define explicit conditions under which a model should be retrained:

- Sustained performance below threshold for a defined period

- Significant data drift detected in input features

- Major operational change (new product launch, channel migration, organizational restructure)

- Scheduled periodic retraining (e.g., quarterly for forecast models, monthly for classification models in fast-changing environments)

Retraining is not free — it requires compute resources, testing, and validation. But the cost of operating a degraded model typically exceeds the cost of retraining, particularly for high-impact decisions like staffing and scheduling.

Documentation and Governance

Every production model should have documented evaluation records:

- Training and test data descriptions (date ranges, sources, preprocessing)

- Evaluation metrics at deployment time

- Ongoing performance tracking with timestamps

- Records of retraining events and their impact on performance

- Known limitations and failure modes

This documentation supports quality management practices and ensures institutional knowledge survives personnel changes. The AI Scaffolding Framework provides a governance structure within which model evaluation practices can be formalized.

See Also

- Artificial Intelligence Fundamentals

- Machine Learning Concepts

- Forecast Accuracy Metrics

- MAPE WAPE and Forecast Bias

- Forecasting Methods

- Machine Learning for Volume Forecasting

- Probabilistic Forecasting

- Deterministic vs Probabilistic Models

- AI in Workforce Management

- AI Scaffolding Framework

- Algorithmic Fairness and Bias in Workforce Scheduling

- AI Containment Rate and Its Workforce Implications

- Quality Management

- Sentiment Analysis in Customer Service

- Speech Analytics

References

- [1] Hyndman, R. J.; Koehler, A. B. "Another look at measures of forecast accuracy". International Journal of Forecasting. 22(4): 679–688. 2006. doi:10.1016/j.ijforecast.2006.03.001.

- [2] Davis, J.; Goadrich, M. "The Relationship Between Precision-Recall and ROC Curves". Proceedings of the 23rd International Conference on Machine Learning. 2006.

- [3] Hastie, T.; Tibshirani, R.; Friedman, J. The Elements of Statistical Learning: Data Mining, Inference, and Prediction. Springer. 2009. ISBN 978-0-387-84857-7.

- [4] Hyndman, R. J.; Athanasopoulos, G. Forecasting: Principles and Practice. OTexts. 2018. ISBN 978-0-9875071-1-2.

- [5] Gneiting, T.; Balabdaoui, F.; Raftery, A. E. "Probabilistic forecasts, calibration and sharpness". Journal of the Royal Statistical Society: Series B. 69(2): 243–268. 2007. doi:10.1111/j.1467-9868.2007.00587.x.

- [6] Lu, J.; et al. "Learning under Concept Drift: A Review". IEEE Transactions on Knowledge and Data Engineering. 31(12): 2346–2363. 2019. doi:10.1109/TKDE.2018.2876857.