Forecast Accuracy Metrics

Forecast Accuracy Metrics are the measurements practitioners use to evaluate forecast quality. The choice of metric matters as much as the choice of method — different metrics reward different behaviors, and the wrong metric for the wrong context produces forecasts optimized for the wrong outcome.

This page documents the metrics WFM practitioners encounter, their behavior under different data shapes, and which to use when. The headline rule: do not optimize MAPE on intermittent demand.

Why metrics matter

A forecast cannot be evaluated against itself. Accuracy is measured against actuals on held-out data — either historical periods reserved for testing or a rolling production comparison. The metric is the function that turns the difference between forecast and actual into a single comparable number.

Different metrics produce different rankings. A method that wins on MAPE may lose on RMSE, and two methods that tie on RMSE may differ dramatically on MASE. The metric is a value choice: it encodes which kinds of error matter more.

In WFM contexts, the choice has operational consequences. A forecast that minimizes MAPE may systematically under-predict skill-level intermittent volume because percentage errors blow up near zero. A forecast that minimizes RMSE may produce smoother but less responsive forecasts that miss legitimate spikes. The practitioner needs to know what each metric rewards.

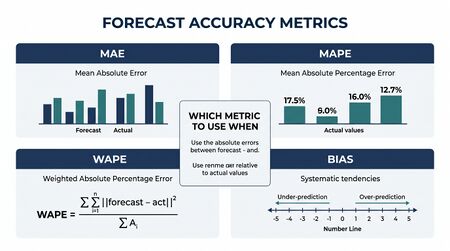

The core metrics

Throughout, let $y_t$ be the actual at time $t$ and $\hat{y}_t$ the forecast for time $t$, with errors $e_t = y_t - \hat{y}_t$.

Mean Absolute Error (MAE)

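$$\mathrm{MAE} = \frac{1}{n}\sum_{t=1}^{n} \left| y_t - \hat{y}_t \right|$$

In the units of the data. Easy to interpret: "on average, the forecast is off by N calls per interval." Less sensitive to occasional large misses than RMSE, because every unit of error carries the same weight regardless of the size of the miss.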

When to use: general-purpose accuracy on a single series. Reporting accuracy in business-friendly units.

Limitations: scale-dependent, so it cannot be compared across series with different scales (the MAE on a queue with volumes near 1000 and the MAE on a queue with volumes near 10 are not comparable).

Root Mean Squared Error (RMSE)

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{t=1}^{n} \left( y_t - \hat{y}_t \right)^2}$$

Also in the units of the data. Penalizes larger errors more than smaller ones (because of the squaring). A method with rare large errors will rank worse on RMSE than on MAE.

When to use: when large errors are operationally costly and you want the metric to reflect that. Common in capacity planning where a forecast 50% over the actual is much more expensive than a forecast 5% over.

Limitations: same scale issue as MAE; no cross-series comparison.
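To make the difference between MAE and RMSE concrete, here is a minimal sketch in plain Python. The function names and the interval volumes are hypothetical and chosen only for illustration. One forecast is always off by a little, the other is usually perfect but misses badly once.

```python
import math

def mae(actuals, forecasts):
    """Mean absolute error, in the units of the data."""
    return sum(abs(y - f) for y, f in zip(actuals, forecasts)) / len(actuals)

def rmse(actuals, forecasts):
    """Root mean squared error; squaring weights large misses more heavily."""
    squared = [(y - f) ** 2 for y, f in zip(actuals, forecasts)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical calls per interval (illustrative data, not from a real queue).
actuals = [100, 100, 100, 100]
steady = [110, 90, 110, 90]    # off by 10 in every interval
spiky = [100, 100, 100, 130]   # exact three times, off by 30 once

mae_steady, rmse_steady = mae(actuals, steady), rmse(actuals, steady)
mae_spiky, rmse_spiky = mae(actuals, spiky), rmse(actuals, spiky)

print(f"steady: MAE={mae_steady:.1f} RMSE={rmse_steady:.1f}")
print(f"spiky:  MAE={mae_spiky:.1f} RMSE={rmse_spiky:.1f}")
```

On this data the spiky forecast has the lower MAE (7.5 against 10) but the higher RMSE (15 against 10), so the ranking depends on the metric.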

Mean Absolute Percentage Error (MAPE)

$$\mathrm{MAPE} = \frac{100}{n}\sum_{t=1}^{n} \left| \frac{y_t - \hat{y}_t}{y_t} \right|$$

Scale-free; expressed as a percentage. The default metric most people reach for first because the units are intuitive ("our forecast is 8% off on average").

When to use: communicating accuracy to non-technical audiences; comparing methods across series with different scales (cautiously).

Critical limitation in WFM: MAPE is undefined when $y_t = 0$ and explodes when $y_t$ is small. Any series with intermittent demand (skill-level data with frequent zero intervals; low-volume specialty queues) makes MAPE unstable or unusable.

A second, subtler issue: MAPE is asymmetric. With non-negative forecasts, an under-prediction can cost at most 100% (a forecast of zero), while an over-prediction has no upper bound. An optimizer that minimizes MAPE is therefore biased toward under-prediction.
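Both MAPE problems can be seen with a few numbers. The sketch below is illustrative only: the `mape` helper is a hypothetical name, and raising an error on zero actuals is one possible convention, not something any particular platform is known to do.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, in percent."""
    if any(y == 0 for y in actuals):
        # Assumption: fail loudly rather than silently drop zero intervals.
        raise ValueError("MAPE is undefined when an actual is zero")
    ratios = [abs(y - f) / abs(y) for y, f in zip(actuals, forecasts)]
    return 100 * sum(ratios) / len(ratios)

# The same absolute miss (2 calls) on a busy and on a quiet interval.
busy_interval = mape([200], [198])   # about 1%
quiet_interval = mape([2], [4])      # about 100%

# With non-negative forecasts, under-prediction cannot exceed 100%,
# while over-prediction is unbounded.
worst_under = mape([10], [0])        # about 100%
large_over = mape([10], [40])        # about 300%

# A single zero actual makes the metric undefined.
try:
    mape([0, 5], [1, 5])
    zero_is_defined = True
except ValueError:
    zero_is_defined = False
```

The quiet interval dominates any average it is part of, which is why low-volume queues distort MAPE-based rankings.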

Symmetric Mean Absolute Percentage Error (sMAPE)

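$$\mathrm{sMAPE} = \frac{100}{n}\sum_{t=1}^{n} \frac{\left| y_t - \hat{y}_t \right|}{\left( |y_t| + |\hat{y}_t| \right) / 2}$$

A variant that reduces the asymmetry of MAPE by dividing by the average of the actual and the forecast. Bounded between 0% and 200%. Still unstable when both $y_t$ and $\hat{y}_t$ are near zero.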

When to use: as a less-misleading alternative to MAPE on series that have small but non-zero values. Better than MAPE; still not robust on truly intermittent series.
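A short sketch of sMAPE, assuming the common definition that divides by the average of the absolute actual and absolute forecast (the version consistent with a 0 to 200 range). The `smape` name and the treatment of a 0-versus-0 interval as zero error are assumptions made for this example.

```python
def smape(actuals, forecasts):
    """Symmetric MAPE in percent, bounded between 0 and 200."""
    total = 0.0
    for y, f in zip(actuals, forecasts):
        denom = (abs(y) + abs(f)) / 2
        # Assumption: an interval where actual and forecast are both zero
        # counts as zero error instead of a division by zero.
        total += 0.0 if denom == 0 else abs(y - f) / denom
    return 100 * total / len(actuals)

# Swapping actual and forecast gives the same score.
over = smape([100], [150])
under = smape([150], [100])

# Near zero the metric saturates: any non-zero forecast against a zero
# actual scores the maximum, however small the miss.
tiny_miss = smape([0], [0.01])
big_miss = smape([0], [50])
```

Here `over` and `under` are equal, while `tiny_miss` and `big_miss` both hit the 200 ceiling, which is the instability on intermittent series.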

Mean Absolute Scaled Error (MASE)

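$$\mathrm{MASE} = \frac{\mathrm{MAE}}{\frac{1}{T-m}\sum_{j=m+1}^{T} \left| y_j - y_{j-m} \right|}$$

The Hyndman-recommended metric. The numerator is the forecast MAE on the evaluation periods. The denominator is the in-sample MAE of the seasonal naive forecast on the training data (observations $1, \dots, T$); $m$ is the seasonal period.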

A MASE of 1.0 means the forecast performs exactly as well as in-sample seasonal naive. MASE less than 1.0 means the forecast beats naive. MASE greater than 1.0 means the forecast is worse than naive — which usually means the method is broken.

When to use: the default for cross-series comparison. The natural metric for benchmarking against the seasonal naive baseline at scale. Robust on intermittent demand because the denominator is non-zero as long as the training series has any variation at the seasonal lag.

Why Hyndman recommends it: MASE is scale-free, comparable across series, defined for intermittent data, and grounded in the benchmark imperative (every method must beat naive).
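As a sketch of how MASE can be computed from raw data, the function below scales the forecast MAE by the in-sample seasonal naive MAE of the training series. The function name, argument order, and the small intermittent series are hypothetical.

```python
def mase(train, actuals, forecasts, m):
    """Mean absolute scaled error against in-sample seasonal naive.

    train     -- training observations used to build the scale
    actuals   -- held-out actuals
    forecasts -- forecasts for the held-out periods
    m         -- seasonal period (m=1 gives the plain naive benchmark)
    """
    forecast_mae = sum(abs(y - f) for y, f in zip(actuals, forecasts)) / len(actuals)
    naive_errors = [abs(train[t] - train[t - m]) for t in range(m, len(train))]
    scale = sum(naive_errors) / len(naive_errors)
    if scale == 0:
        raise ValueError("training series has no variation at the seasonal lag")
    return forecast_mae / scale

# Hypothetical intermittent series with zeros and a period of 2.
train = [0, 3, 0, 5, 0, 4, 0, 6]
actuals = [0, 5]
forecasts = [1, 5]

score = mase(train, actuals, forecasts, m=2)
print(f"MASE = {score:.2f}")
```

The zeros in the series cause no trouble here: the scale is 5/6 and the forecast MAE is 0.5, giving a MASE of 0.6, whereas MAPE would be undefined on the same data.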

Mean Error / Forecast Bias

$$\mathrm{ME} = \frac{1}{n}\sum_{t=1}^{n} \left( y_t - \hat{y}_t \right)$$

Not an accuracy metric in the usual sense: ME measures the bias of the forecast. Positive ME means the forecast systematically under-predicts (actuals exceed forecasts on average); negative ME means systematic over-prediction.

When to use: diagnostic alongside any accuracy metric. A forecast can have low MAE and large bias, indicating that errors are small individually but skewed in one direction. Bias has different operational consequences than variance: an unbiased forecast missing by 8% in either direction is different from a biased forecast that systematically misses by 8% on the low side.
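The difference between bias and error size can be shown with two forecasts that share the same MAE. This is a minimal sketch with made-up volumes; the error sign follows the convention used on this page (actual minus forecast).

```python
def mean_error(actuals, forecasts):
    """Mean error (bias): positive means the forecast under-predicts."""
    return sum(y - f for y, f in zip(actuals, forecasts)) / len(actuals)

def mae(actuals, forecasts):
    """Mean absolute error, in the units of the data."""
    return sum(abs(y - f) for y, f in zip(actuals, forecasts)) / len(actuals)

# Hypothetical calls per interval.
actuals = [100, 120, 90, 110]
unbiased = [108, 112, 98, 102]   # misses by 8, alternating high and low
biased = [92, 112, 82, 102]      # misses by 8, always low

me_unbiased, mae_unbiased = mean_error(actuals, unbiased), mae(actuals, unbiased)
me_biased, mae_biased = mean_error(actuals, biased), mae(actuals, biased)

print(f"unbiased: ME={me_unbiased:+.1f} MAE={mae_unbiased:.1f}")
print(f"biased:   ME={me_biased:+.1f} MAE={mae_biased:.1f}")
```

Both forecasts have an MAE of 8, but only the second has an ME of +8, which in staffing terms means every interval is under-forecast.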

Choosing a metric

A practical decision matrix:

| Use case | Recommended metric | Reasoning |
|---|---|---|
| General-purpose, single series, well-behaved data | MAE or RMSE | Easy to interpret in units of the data |
| Capacity planning, large errors costly | RMSE | Penalizes the operationally expensive errors |
| Communicating accuracy to non-technical audiences | MAPE (with caveats) | Percentage is intuitive |
| Series with intermittent or zero values | MASE | MAPE is unusable on these series |
| Cross-series comparison (multiple skills, multiple sites) | MASE | Scale-free; comparable |
| Detecting systematic over- or under-prediction | ME (alongside MAE/RMSE) | Bias is separate from variance |
| Method benchmarking against seasonal naive | MASE | Built-in benchmark interpretation |

Time series cross-validation

Computing accuracy on a single train-test split is not enough. The held-out period may be unrepresentative — a holiday, a launch, an outage. Time series cross-validation (also called "rolling origin" or "expanding window") repeats the train-test split across multiple origins:

- Train on data through period $T$; forecast $h$ periods ahead; record errors

- Train on data through period $T+1$; forecast $h$ periods ahead; record errors

- Continue until the data runs out

- Aggregate errors across all origins for the final accuracy metric

This produces a more reliable estimate of forecast accuracy than a single split, particularly when the time series has changing characteristics.

For long horizons, vary $h$ as well: a method that wins at a short horizon may lose at a longer one. Report accuracy by horizon, not just an aggregate number.
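The rolling-origin procedure, with accuracy reported by horizon, can be sketched as follows. This is an illustration under stated assumptions: an expanding window that advances one period at a time, a seasonal naive forecaster standing in for whatever method is being evaluated, and a hypothetical series with a period of 2 and an upward trend. All names are invented for the example.

```python
def seasonal_naive(history, h, m):
    """Forecast h steps ahead by repeating the last m observed values."""
    last_season = history[-m:]
    return [last_season[i % m] for i in range(h)]

def rolling_origin_mae(series, first_origin, h, m, forecaster=seasonal_naive):
    """Return MAE per horizon step, aggregated over all origins."""
    abs_errors = [[] for _ in range(h)]
    # Each origin trains on series[:origin] and tests on the next h points.
    for origin in range(first_origin, len(series) - h + 1):
        train = series[:origin]
        test = series[origin:origin + h]
        forecast = forecaster(train, h, m)
        for step in range(h):
            abs_errors[step].append(abs(test[step] - forecast[step]))
    return [sum(errs) / len(errs) for errs in abs_errors]

# Hypothetical series: period of 2 with a steady upward trend.
series = [10, 20, 12, 22, 14, 24, 16, 26]

mae_by_horizon = rolling_origin_mae(series, first_origin=4, h=3, m=2)
for step, value in enumerate(mae_by_horizon, start=1):
    print(f"h={step}: MAE={value:.1f}")
```

On this series the seasonal naive error is 2 at the first two steps and 4 at the third, because the third step reuses a value from two seasons back. An aggregate number would hide that growth.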

Common WFM pitfalls

- Optimizing MAPE on skill-level data with frequent zero intervals. MAPE explodes; the optimizer chases the explosions. Use MASE or WAPE (a weighted variant) instead.

- Single-split evaluation. A method that "wins" on one held-out month may have been lucky. Use rolling-origin cross-validation.

- Reporting only one metric. Bias (ME) and variance (MAE, RMSE) are separate phenomena. Report both. A low-MAE high-bias forecast and a low-MAE low-bias forecast have different operational implications.

- Comparing methods across different held-out windows. Method A on Q3 and Method B on Q4 are not comparable. Same data, same metric, same horizon.

- Comparing across different series without scaling. MAPE comparisons across skills with very different volumes hide which skill has the worst forecast quality. Use MASE.
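For the first pitfall, a brief sketch of WAPE on a series with zero intervals. It assumes the common definition of WAPE (sum of absolute errors divided by sum of actuals, in percent); the helper name and the data are hypothetical.

```python
def wape(actuals, forecasts):
    """Weighted absolute percentage error: total error over total volume."""
    total_actual = sum(abs(y) for y in actuals)
    if total_actual == 0:
        raise ValueError("WAPE is undefined when total actual volume is zero")
    total_error = sum(abs(y - f) for y, f in zip(actuals, forecasts))
    return 100 * total_error / total_actual

# Hypothetical skill-level intervals with frequent zeros.
actuals = [0, 1, 0, 40, 2, 0]
forecasts = [1, 2, 1, 38, 1, 0]

score = wape(actuals, forecasts)
print(f"WAPE = {score:.1f}%")
```

MAPE cannot be computed on these intervals because three actuals are zero. WAPE stays defined as long as the total volume is non-zero, and the 40-call interval carries most of the weight.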

Forecast accuracy in WFM software

Most WFM platforms report MAPE by default — sometimes MAPE is the only available metric. This is a usability decision (MAPE is easy to explain) but a methodological liability for skill-level reporting.

When evaluating WFM platform forecasts:

- If only MAPE is reported, request raw forecast and actual data to compute MASE independently

- If the platform's MAPE looks great but the forecast still feels off, the platform may be biased — request ME (or compute it from the raw data)

- Beware platforms that report MAPE without specifying which periods are included; intervals with small denominators are often filtered out, which biases the reported number

Maturity Model Position

In the WFM Labs Maturity Model™, how an organization measures forecast accuracy is itself a maturity indicator. The metric an organization optimizes reveals whether it understands the data shapes it actually has.

- Level 1 — Initial (Emerging Operations) — accuracy is rarely measured formally; "the forecast looked close" is the assessment.

- Level 2 — Foundational (Traditional WFM Excellence) — MAPE is the headline (often only) metric, reported by the WFM platform; intermittent skill-level data produces unstable or hidden numbers; bias is not separated from variance.

- Level 3 — Progressive (Breaking the Monolith) — MASE is adopted for cross-series comparison; bias (ME) is reported alongside variance (MAE/RMSE); metric choice is matched to data shape; rolling-origin cross-validation replaces single-split evaluation.

- Level 4 — Advanced (The Ecosystem Emerges) — accuracy is reported by horizon and by forecast distribution (interval coverage, pinball loss for quantile forecasts), not just point error; method selection is driven by horizon-specific accuracy.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — accuracy metrics drive automated method selection and combination weights inside the analytical platform; the practitioner sets the loss function, not the method.

Choice of metric is a legacy-to-progressive marker: optimizing MAPE on intermittent demand is a Level 2 tell, while MASE plus bias plus horizon-specific reporting is a Level 3+ tell.

References

- Hyndman, R. J., & Athanasopoulos, G. "Evaluating forecast accuracy." Forecasting: Principles and Practice (Python edition). otexts.com/fpppy.

- Hyndman, R. J., & Koehler, A. B. "Another look at measures of forecast accuracy." International Journal of Forecasting 22(4), 2006. The paper introducing MASE.

See Also

- Forecasting Methods — overview; the place where method choice happens, evaluated against these metrics

- Naive and Seasonal Naive Forecasting — the benchmark MASE is built around

- Exponential Smoothing — methods evaluated against these metrics

- ARIMA Models — methods evaluated against these metrics

- Demand calculation — calculator that consumes a forecast; forecast quality is measured here

- Variance Harvesting — operational practice that depends on knowing forecast accuracy at multiple horizons

- MAPE WAPE and Forecast Bias

Interactive tools

- MAPE vs WAPE — mapewape.wfmlabs.com. Interactive deep-dive into the structural difference between MAPE (treats every interval equally) and WAPE (weights by volume). Walks the math through worked examples, demonstrates how the two metrics diverge on intermittent-demand series, and connects to the minimal-interval-variance floor. Seven tabbed sections covering fundamentals through advanced math. The teaching tool for the metric-choice discussion above.

- Forecast Accuracy Analyzer — sd.wfmlabs.com. Upload interval-level forecast and actual call volume; the analyzer overlays the series with configurable 1σ and 2σ bands and reports the Weighted Minimal Interval Variance (WMIV) floor. Use it to distinguish forecast errors that need investigation from normal statistical variation. Four sample datasets included for practice on different queue sizes.