Variance Harvesting

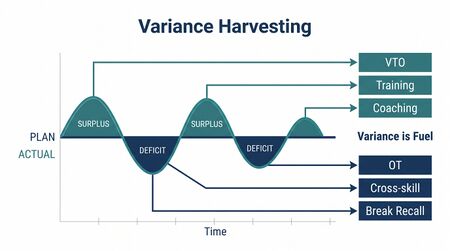

Variance Harvesting is the operational principle that inverts traditional WFM thinking by treating in-day variance as fuel rather than a problem. Where traditional WFM aims to minimize deviations from plan, variance harvesting captures deviations as opportunities for activities that would otherwise compete with operational delivery — coaching, training, voluntary time off, micro-learning, protected breaks.

The principle is the central operating concept of Level 3 — Progressive (Breaking the Monolith) in the WFM Labs Maturity Model™ and was articulated in "The WFM Maturity Model Revisited" (Lango, January 2026).

The Inversion

Traditional WFM treats variance as an enemy. Every deviation from plan is friction to be reduced; every spike or dip is a problem to manage. The operating logic is straightforward: minimize variance and the plan executes as designed. This orientation has deep roots: Six Sigma methodology defines its core purpose as reducing process variation to achieve consistency and quality,[1] and most WFM tooling inherits that assumption uncritically.

Variance harvesting reframes the same operational reality. Variance is a signal that something useful can happen now that the plan did not anticipate:

- A queue dip is an opportunity to deliver coaching to agents who happen to be free at that moment.

- A sustained surge is a signal to activate flexible workforce capacity and to pre-empt agent burnout via protective intervention.

- A volume lull during forecast uncertainty is the right time to deliver micro-learning rather than the worst time to keep agents idle.

The same deviation, reframed, becomes the occasion for valuable work that traditional WFM has no good place to schedule.

This reframing has a direct parallel in organizational theory. Nohria and Gulati (1996) demonstrated an inverted U-shaped relationship between organizational slack and innovation: too little slack starves experimentation because variance outcomes cannot be absorbed, while too much slack erodes discipline in resource allocation.[2] Variance harvesting operationalizes this insight at the intraday level — the "slack" created by a volume dip is neither waste nor accident, but a resource window with a short shelf life.

Deming's Variation Framework

W. Edwards Deming distinguished two types of variation in any system: common cause variation (inherent to the system, stable and predictable within limits) and special cause variation (attributable to specific assignable factors outside the system).[3] Traditional WFM conflates the two — it treats all intraday variance as a problem to suppress, whether the source is systemic randomness in call arrivals or a one-time event like a system outage.

Variance harvesting respects the distinction. Common cause variation in contact volume (the natural randomness of when customers call) produces predictable surplus and deficit windows that recur daily. These are not problems to fix — they are structural features of the system that can be harvested. Special cause variation (an unexpected marketing campaign, a billing system failure) requires different treatment: containment first, then root cause analysis. Harvesting applies to the former; incident management applies to the latter.

Deming warned that the most costly management mistake is treating common cause variation as if it were special cause — what he called "tampering."[3] In contact center terms, tampering looks like frantic real-time adjustments to staffing every time interval volume deviates from forecast by any amount. Variance harvesting replaces tampering with a structured response library that activates on predictable patterns.
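
One way to avoid tampering is to check a deviation against limits derived from the operation's own history before reacting to it. The sketch below is a simple three-sigma check, not a method prescribed by Deming or by the source. It assumes a list of past percentage forecast errors for the same interval is available; the function names, the sample data, and the three-sigma threshold are all illustrative assumptions.

```python
from statistics import mean, stdev

def control_limits(history, sigmas=3.0):
    """Return (lower, upper) limits around the historical mean error."""
    center = mean(history)
    spread = stdev(history)  # sample standard deviation of past errors
    return center - sigmas * spread, center + sigmas * spread

def classify_deviation(error_pct, history, sigmas=3.0):
    """Label a deviation as harvestable noise or a candidate incident."""
    lower, upper = control_limits(history, sigmas)
    if lower <= error_pct <= upper:
        return "common cause"
    return "possible special cause"

# Hypothetical forecast errors (%) for the same interval on ten prior days.
past_errors_pct = [-8.0, 5.5, -12.0, 3.0, 9.5, -4.0, 11.0, -6.5, 1.5, -10.0]

print(classify_deviation(-12.9, past_errors_pct))  # inside the limits
print(classify_deviation(45.0, past_errors_pct))   # far outside the limits
```

A deviation inside the limits is a candidate for the response library; one outside them is routed to incident handling instead.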

The Probabilistic Foundation

Contact center arrival patterns are stochastic processes. The foundational operations research literature models call arrivals as time-varying Poisson processes, where the arrival rate itself fluctuates both within the day and between days.[4] This means variance is not a planning failure; it is a mathematical certainty. A single-point forecast of interval volume will almost never match the realized count exactly, so the question is how the operation responds to the inevitable deviation.

Green, Kolesar, and Whitt (2007) demonstrated that setting staffing requirements in time-varying demand environments requires adapting stationary models to capture time-dependent performance.[5] Their work showed that naive period-by-period staffing based on point forecasts systematically misstaffs when demand changes rapidly — precisely the intervals where variance harvesting finds its greatest opportunities.

Ibrahim et al. (2016) surveyed call center arrival forecasting methods and found that arrival rates exhibit both interday and intraday correlations that point-forecast methods fail to capture.[6] When the forecast itself is a probability distribution rather than a single number, the concept of "deviation from plan" dissolves — replaced by the question of where actual conditions fall within the predicted range, and what response that position triggers. This is the intellectual foundation for Probabilistic Planning in WFM and Deterministic vs Probabilistic Models, and it is why variance harvesting requires probabilistic thinking to operate at scale.
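
To illustrate asking where actual conditions fall within the predicted range, the sketch below locates an actual volume inside a forecast distribution and maps that position to a response band. It assumes the forecast is available as a set of sampled scenarios. The sample values, the band names, and the P10/P25/P75/P90 cut points are hypothetical choices for illustration.

```python
from bisect import bisect_right

def percentile_position(actual, forecast_samples):
    """Fraction of forecast scenarios at or below the actual value (0.0 to 1.0)."""
    ordered = sorted(forecast_samples)
    return bisect_right(ordered, actual) / len(ordered)

def response_band(position):
    """Map a position in the forecast range to an illustrative response band."""
    if position < 0.10:
        return "deep surplus"
    if position < 0.25:
        return "surplus"
    if position <= 0.75:
        return "within plan"
    if position <= 0.90:
        return "deficit"
    return "deep deficit"

# Hypothetical sampled scenarios for one interval, centered near 620 contacts.
samples = [560, 575, 585, 590, 598, 604, 609, 613, 617, 620,
           623, 627, 631, 636, 642, 648, 655, 663, 674, 690]

for actual in (540, 620, 700):
    position = percentile_position(actual, samples)
    print(actual, position, response_band(position))
```

Under this framing there is no single "plan" to deviate from: each band simply selects a different set of pre-staged responses.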

Practical Applications

Common harvesting moves, ordered by sophistication:

- Micro-learning during natural volume lulls — short structured learning modules pushed to agents during forecast-confident dips

- Protected breaks during call volume overruns — automation surfaces overdue breaks during sustained load, preventing burnout from stacking

- Proactive VTO offerings — when forecast probability favors overstaffing, voluntary time-off is offered before the situation requires reactive scrambling

- Training during operational gaps — pre-staged content delivered as conditions allow, replacing the legacy practice of canceling training when volume rises

- Coaching capture: the system surfaces agents and coaching topics to supervisors based on current load conditions, rather than following a static cadence

The common pattern: the system has a library of activities that would happen anyway if conditions allowed, and it activates them when conditions allow rather than scheduling them rigidly in advance.

Worked Example: Harvesting a Morning Dip

Consider a 500-seat contact center running a standard 08:00–17:00 operation. The real-time forecast gives a probabilistic range for the 09:00–09:30 window; the table compares its median (P50) with the actuals:

| Metric | Forecast (P50) | Actual | Variance |
|---|---|---|---|
| Offered contacts | 620 | 540 | −80 (−12.9%) |
| Required staff | 285 | 248 | −37 |
| Available staff | 290 | 290 | 0 |
| Surplus agents | 5 | 42 | +37 |

Under traditional WFM, this is a neutral-to-negative event. Occupancy drops, cost-per-contact rises, and the real-time analyst may scramble to offer ad hoc VTO. Under variance harvesting, the 42-agent surplus triggers the response library:

- Automated triage — the system checks the response library priority stack. Coaching has highest priority (three supervisors have pending sessions), followed by a compliance micro-learning module due this week.

- Coaching capture — 12 agents with pending coaching topics are pulled into supervisor sessions. The Daily ROC Routine logs the capture.

- Micro-learning push — 18 agents receive a 12-minute compliance module. Completion is tracked against the weekly target.

- Protected break acceleration — 8 agents who missed morning breaks during an earlier surge are cycled through breaks now.

- Reserve held — 4 agents remain in queue as buffer against volume recovery.

Total harvested: 38 of 42 surplus agent-intervals captured for productive activity. Variance Capture Efficiency: 90.5% for this window. The 09:30 interval sees volume recover to forecast, and the system returns to standard staffing. No reactive scrambling occurred. The Real-Time Schedule Adjustment was automated, not manual.

Without harvesting, those 42 agents would have sat in queue at low occupancy for 30 minutes, leaving 21 agent-hours of capacity unused (42 agents × 0.5 hours). With harvesting, the operation delivered coaching, training, and wellness outcomes while maintaining Service Level stability.
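
The arithmetic of this example can be reproduced with a small priority-stack allocator. This is a minimal sketch, not the behavior of any particular WFM product: the `Activity` class, the `allocate_surplus` function, and the fixed reserve parameter are hypothetical, while the numbers come from the example above.

```python
from dataclasses import dataclass

@dataclass
class Activity:
    name: str
    demand: int  # agents who could take this activity right now

def allocate_surplus(surplus, reserve, stack):
    """Fill activities in priority order, holding back a queue reserve."""
    remaining = max(surplus - reserve, 0)
    plan = {}
    for activity in stack:
        take = min(activity.demand, remaining)
        if take:
            plan[activity.name] = take
        remaining -= take
    return plan

# Priority stack from the worked example, highest priority first.
stack = [
    Activity("coaching", 12),
    Activity("micro-learning", 18),
    Activity("protected break", 8),
]

surplus, reserve = 42, 4
plan = allocate_surplus(surplus, reserve, stack)
harvested = sum(plan.values())
vce = harvested / surplus
idle_agent_hours = surplus * 0.5  # 30-minute interval

print(plan)
print(f"harvested {harvested} of {surplus}, VCE {vce:.1%}")
print(f"idle capacity without harvesting: {idle_agent_hours} agent-hours")
```

Because the reserve is subtracted before any activity is filled, a larger reserve lowers VCE for the window, which makes the trade-off between capture and buffer explicit.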

New Metrics

Variance harvesting requires metrics that don't show up in traditional WFM dashboards. Three are foundational:

- Service Level Stability (SLS) — measures how consistently service level holds across the day, not just the daily aggregate. Stability is the goal, not narrow optimization to a target average that masks intraday volatility. Aksin, Armony, and Mehrotra (2007) identified service level consistency as a key operational challenge distinct from aggregate performance.[7]

- Automation Acceptance Rate (AAR) — measures the fraction of automation-suggested actions that are accepted (by agents, supervisors, or the system itself). Low AAR signals weak suggestions, fatigue, or trust deficits.

- Variance Capture Efficiency (VCE) — measures the fraction of available variance moments that produce a captured outcome (coaching delivered, learning completed, break protected). The opposite of "variance leaked through without doing anything useful."

These metrics make harvesting visible. Without them, variance harvesting devolves into anecdote — "we did some coaching during the dip yesterday."
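
The three metrics can be computed from simple interval-level counts. The sketch below shows one possible operationalization, offered as an assumption rather than a canonical definition: SLS as the share of intervals that meet the service level target, and AAR and VCE as plain ratios. Function names and sample figures are hypothetical.

```python
def service_level_stability(interval_service_levels, target=0.80):
    """Share of intraday intervals whose service level met the target."""
    met = sum(1 for sl in interval_service_levels if sl >= target)
    return met / len(interval_service_levels)

def safe_ratio(numerator, denominator):
    """Ratio that reports 0.0 when nothing was available to count."""
    return numerator / denominator if denominator else 0.0

def automation_acceptance_rate(accepted, suggested):
    """Fraction of automation-suggested actions that were accepted."""
    return safe_ratio(accepted, suggested)

def variance_capture_efficiency(captured, available):
    """Fraction of available variance moments with a captured outcome."""
    return safe_ratio(captured, available)

# Hypothetical day: 16 intraday windows, 12 of which held the 80% target.
day = [0.85] * 12 + [0.70] * 4

print(service_level_stability(day))          # 0.75
print(automation_acceptance_rate(45, 60))    # 0.75
print(variance_capture_efficiency(9, 40))    # 0.225
print(automation_acceptance_rate(0, 0))      # 0.0 before any automation exists
```

The unit behind "available" in VCE (variance windows, agent-intervals, or minutes) is a design decision each operation has to fix before the numbers are comparable over time.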

Metric Interaction

The three metrics interact in predictable ways during implementation:

- Early phase (Months 1–3): SLS may be unchanged, AAR starts low (40–60% is typical), VCE rises from single digits to 15–25% as the response library activates.

- Stabilization (Months 3–6): AAR rises as automation suggestions improve based on acceptance data. VCE reaches 30–50%. SLS begins improving as harvested activities reduce the need for disruptive schedule changes.

- Mature operation (Months 6+): AAR stabilizes above 75%, VCE reaches 50–70%, and SLS shows measurable improvement because the system absorbs variance productively rather than fighting it.

Required Capabilities

Variance harvesting depends on:

- Forecasted variance windows visible in real time, not after-the-fact

- Automation that can act on those windows faster than human analysts can compose responses (see Intelligent Automation)

- A library of activities pre-staged to fire on appropriate conditions

- Authority models that let the automation activate without waiting for human approval on routine actions

- Measurement infrastructure that makes the new metrics queryable

This depends in turn on most of the AI Scaffolding Framework — Layers 1, 2, 3, and 5 in particular.

Connection to Resource Optimization Center (ROC)

The Resource Optimization Center (ROC) is the organizational unit that operates variance harvesting in production. The ROC's role shifts from reactive exception processing to proactive operational coordination:

- Document playbooks for managing different variance signatures

- Track intervention data systematically

- Build evidence bases for automation investments (which harvesting moves produced measurable outcomes)

- Treat the change largely as a mindset shift from existing real-time analyst work: same role, different orientation

The Daily ROC Routine codifies this shift into repeatable practice: pre-shift variance forecasting, real-time response activation, and post-shift harvest analysis. The ROC does not eliminate the real-time analyst role — it elevates it from reactive firefighting to systematic resource optimization.

"Precision Theater"

The phrase precision theater captures what variance harvesting replaces: the elaborate apparatus of deterministic forecasting, scheduling, and adherence policing that produces single-point answers about a probabilistic reality, then enforces those answers as if they were correct.

Precision theater can be sophisticated and well-executed. It can also be entirely confident about a forecast that happens to be wrong. Variance harvesting accepts the forecast as a probability range and builds operational responses that work across the range rather than insisting on the central estimate.

The operations research literature supports this framing. Green, Kolesar, and Whitt showed that "the stationary independent period-by-period (SIPP) approach" — the standard WFM method of treating each interval independently with a point forecast — "can be very inaccurate" when demand changes rapidly between intervals.[5] The more demand varies (i.e., the more interesting the harvesting opportunities), the worse the deterministic approach performs. This is not a minor technical limitation — it is a structural mismatch between the tool and the reality it models.

Maturity Model Position

In the WFM Labs Maturity Model™:

- Level 2 — Foundational organizations may use elements of variance harvesting opportunistically but lack the metrics and automation to make it systematic.

- Level 3 — Progressive (Breaking the Monolith) organizations make variance harvesting central — the metrics are visible, automation activates the harvesting moves, the ROC operates the practice.

- Level 4 — Advanced (The Ecosystem Emerges) organizations have variance harvesting as default in-day operating mode; precision theater has been displaced.

Implementation Sequence

Adopting variance harvesting is a sequenced transition, not a switch to flip. The transition from precision-theater operations to harvesting-as-default takes most organizations 6–18 months. The order below is what works in practice.

Phase 1 — Establish the metrics

Before any automation, expose the three metrics that will measure progress:

- Service Level Stability (SLS) — capture intraday SL variance, not just daily aggregate. The dashboard view changes from "we hit 80/20" to "we held 80/20 for 12 of 16 intraday windows."

- Automation Acceptance Rate (AAR): zero for automated suggestions at this stage, because no automation exists yet. Track a manual baseline instead: how often do supervisors and agents accept manually suggested actions?

- Variance Capture Efficiency (VCE) — measure the fraction of variance windows that produced a captured outcome under current manual processes. Most orgs find baseline VCE is shockingly low — single digits.

Without these visible, harvesting can't be measured and won't be funded.

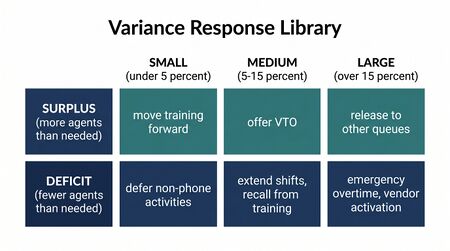

Phase 2 — Build the response library

Create a structured catalog of activities that would happen anyway if conditions allowed. Each activity needs:

- Trigger conditions (what variance signature activates it)

- Pre-staged content (the coaching module, the learning unit, the VTO offer)

- Authority model (who approves the activation, or is it auto-approved)

- Measurement (how is the captured outcome tracked)

Start with 5–7 activities, not 50. Common starter set: micro-learning during dips, VTO during sustained overstaffing, coaching capture, protected breaks during overruns, off-phone training delivery.

| Activity | Trigger Signature | Duration | Authority | Measurement |
|---|---|---|---|---|
| Micro-learning push | Volume < P25 for 3+ min | 8–15 min | Auto-approved | Module completion rate |
| Coaching capture | Surplus ≥ 5 agents + supervisor available | 15–20 min | Supervisor confirms | Coaching session logged |
| VTO offer | Volume < P10 for 10+ min, forecast confidence high | Remainder of shift | Agent accepts, ROC confirms | Hours released, cost avoided |
| Protected break | Agent overdue break + queue stable | 15 min | Auto-approved | Break compliance rate |
| Off-phone training | Surplus ≥ 10 agents + training module available | 20–45 min | ROC approves | Training completion, cert progress |
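
A response library of this kind can be represented as data, with each entry carrying its trigger as a predicate over current conditions. The sketch below encodes three of the rows above. The `Conditions` and `LibraryEntry` types, their field names, and the `eligible` helper are hypothetical, and treating "P25" as a 0.25 position in the forecast range is an assumption.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Conditions:
    volume_percentile: float        # position of actual volume in the forecast range, 0..1
    minutes_sustained: int          # how long that position has held
    surplus_agents: int
    supervisor_available: bool = False
    forecast_confidence_high: bool = False

@dataclass(frozen=True)
class LibraryEntry:
    activity: str
    authority: str
    trigger: Callable[[Conditions], bool]

LIBRARY = [
    LibraryEntry("Micro-learning push", "auto-approved",
                 lambda c: c.volume_percentile < 0.25 and c.minutes_sustained >= 3),
    LibraryEntry("Coaching capture", "supervisor confirms",
                 lambda c: c.surplus_agents >= 5 and c.supervisor_available),
    LibraryEntry("VTO offer", "agent accepts, ROC confirms",
                 lambda c: (c.volume_percentile < 0.10
                            and c.minutes_sustained >= 10
                            and c.forecast_confidence_high)),
]

def eligible(conditions):
    """Names of activities whose trigger signature matches current conditions."""
    return [entry.activity for entry in LIBRARY if entry.trigger(conditions)]

mild_dip = Conditions(0.20, 4, 6, supervisor_available=True)
deep_dip = Conditions(0.05, 12, 3, forecast_confidence_high=True)

print(eligible(mild_dip))  # ['Micro-learning push', 'Coaching capture']
print(eligible(deep_dip))  # ['Micro-learning push', 'VTO offer']
```

Keeping triggers as data rather than scattered conditionals makes the quarterly review of trigger conditions a matter of editing entries, not rewriting logic.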

Phase 3 — Connect automation to the library

Wire the Intelligent Automation platform to the response library. The first integrations are deterministic: simple if-then rules ("if queue depth stays more than 15% below forecast for 3 minutes, surface coaching to N agents"). Probabilistic logic comes later.
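
A deterministic rule of this shape reduces to a sustained-threshold check. The sketch below assumes one reading per minute and reads the rule as "15% below forecast in relative terms"; both are assumptions, as are the function name and the sample values.

```python
def should_fire(readings, forecast, threshold=0.15, sustain=3):
    """True when the last `sustain` per-minute readings all sit below the limit.

    readings: observed values, one per minute, most recent last.
    forecast: forecast value for the same measure.
    """
    if len(readings) < sustain:
        return False  # not enough history to call the dip sustained
    limit = forecast * (1 - threshold)
    return all(value < limit for value in readings[-sustain:])

forecast = 100
print(should_fire([90, 84, 83, 82], forecast))  # True: last three readings are under 85
print(should_fire([84, 83, 90], forecast))      # False: latest reading recovered
print(should_fire([80, 80], forecast))          # False: only two minutes observed
```

Requiring the condition to hold for several consecutive readings is what keeps the rule from firing on a single noisy minute, which would be a small-scale form of tampering.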

Track AAR closely during this phase. Low AAR signals weak triggers, fatigue, or trust deficits — diagnose before rolling out further.

Phase 4 — ROC operates harvesting in production

The Resource Optimization Center (ROC) takes ownership of harvesting as a primary operational mode rather than an experiment. The ROC's playbooks are written around variance signatures and matching responses. Real-time analyst roles shift from reactive variance management to designing harvesting moves and reviewing automation outcomes.

This is the inflection point where harvesting becomes "how operations runs," not "a project we're piloting."

Pilot path

If the four-phase sequence feels too heavy for the current organization, run a single-signature pilot:

- Pick one variance signature with high frequency and clear capture opportunity. Commonly: forecast-confident dip lasting 10+ minutes.

- Build a single response (e.g., push one specific micro-learning module).

- Wire the trigger and the response.

- Track AAR and VCE on this single signature for 4–6 weeks.

- Use the data to fund the broader rollout.

The pilot's purpose is data — to demonstrate that variance moments produce measurable outcomes when captured. Without that evidence, the broader investment doesn't get funded.

Anti-Patterns

Common failure modes when organizations attempt variance harvesting without sufficient foundation:

- Harvesting without metrics — teams report anecdotal wins ("we coached 5 agents during a dip") but cannot demonstrate systematic improvement. Without VCE and AAR tracking, the practice never scales beyond enthusiastic individuals.

- Over-harvesting — aggressive response triggers that pull agents from queues too early, creating the service level problems harvesting is meant to prevent. The buffer reserve in the worked example above is not optional.

- Rigid response libraries — a library built once and never updated. Trigger conditions, activity durations, and authority models need quarterly review as the operation's variance profile changes.

- Confusing special cause with common cause — attempting to "harvest" a system outage or a marketing-driven volume spike. These are incident response scenarios, not harvesting opportunities. The Real-Time Operations team must distinguish between the two in real time.

References

- [1] "Six Sigma Definition - What is Lean Six Sigma?" American Society for Quality (ASQ). 2026-05-13.

- [2] Nohria, N. and Gulati, R. (1996). "Is Slack Good or Bad for Innovation?" Academy of Management Journal, 39(5), 1245–1264.

- [3] "Knowledge of Variation." The W. Edwards Deming Institute. 2026-05-13.

- [4] Gans, N., Koole, G. and Mandelbaum, A. (2003). "Telephone Call Centers: Tutorial, Review, and Research Prospects." Manufacturing & Service Operations Management, 5(2), 79–141.

- [5] Green, L.V., Kolesar, P.J. and Whitt, W. (2007). "Coping with Time-Varying Demand When Setting Staffing Requirements for a Service System." Production and Operations Management, 16(1), 13–39.

- [6] Ibrahim, R., Ye, H., L'Ecuyer, P. and Shen, H. (2016). "Modeling and Forecasting Call Center Arrivals: A Literature Survey and a Case Study." International Journal of Forecasting, 32(3), 865–874.

- [7] Aksin, Z., Armony, M. and Mehrotra, V. (2007). "The Modern Call Center: A Multi-Disciplinary Perspective on Operations Management Research." Production and Operations Management, 16(6), 665–688.

- Lango, Ted. "The WFM Maturity Model Revisited." Contact Center Compass, LinkedIn, January 2026.

- Lango, Ted. Adaptive: Building Workforce Systems for an (Unpredictable) Future.

See Also

- WFM Labs Maturity Model™ — The maturity context where variance harvesting locates an organization

- Resource Optimization Center (ROC) — The operational unit that runs harvesting in production

- Real-Time Operations — The operational discipline that executes harvesting in real time

- Daily ROC Routine — The daily cadence that codifies harvesting practice

- Real-Time Schedule Adjustment — The mechanism for activating harvesting moves

- Service Level — The primary constraint harvesting must respect

- Occupancy — The metric that reveals surplus and deficit windows

- Probabilistic Planning in WFM — The forecasting philosophy underlying harvesting

- Deterministic vs Probabilistic Models — Why single-point forecasts create the conditions precision theater depends on

- Intelligent Automation — The automation capability that enables real-time harvesting

- AI Scaffolding Framework — The infrastructure layers harvesting depends on

- WFM Ecosystem Architecture — The ecosystem context for harvesting practice

- Future WFM Operating Standard — The broader operating model

- Value-Based Planning Model — Level 4 framework that depends on Level 3 variance instrumentation

- Service Demand Rebound Model — Three rebound components (R_d, R_i, R_s) are measured via variance harvesting infrastructure

Interactive tools

- WFM Variance Analysis — occupancy-variance-analysis.wfmlabs.com. Demonstrates the planned-vs-actual occupancy decomposition that makes variance harvesting visible. Most WFM teams calculate staffing variance using actual occupancy, which masks understaffing (occupancy rises to compensate) and overstaffing (occupancy drops). The tool decomposes interval variance into volume, AHT, and staffing components using planned occupancy — the methodology variance harvesting depends on for distinguishing genuine signal from compensating-variable noise.