Value-Based Planning Model

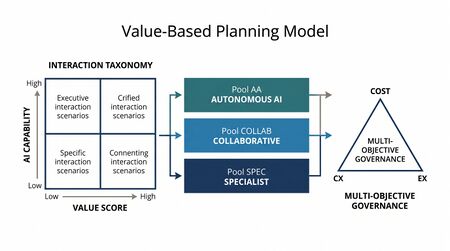

The Value-Based Planning Model (VPM) is a workforce planning framework for contact centers operating with agentic AI as part of the workforce. It replaces the century-old Erlang-lineage question — "how many agents to handle the volume that arrives?" — with a bottom-up structure: classify interactions by value and AI capability, route each to one of three workforce pools, staff each pool with a methodology fitted to its work, and govern the system across cost, customer experience, and employee experience as a coupled multi-objective optimization.

VPM is the canonical WFM Labs Maturity Model™ Level 4 — Advanced (The Ecosystem Emerges) framework. It assumes Level 3 instrumentation (probabilistic forecasting, variance harvesting, simulation-grade capacity planning) as a prerequisite and reaches into Level 5 — Pioneering (Enterprise-Wide Intelligence) through closed-loop governance and the Cognitive Portfolio Model (N*).

The framework is documented in full in Lango (2026), Value-Based Models for Customer Operations.[1]

The Erlang Inversion

A century of contact-center planning has worked top-down. Forecast volume. Apply Erlang-C (or Erlang-A, or simulation). Solve for headcount. Every contact is treated as an interchangeable unit of work. The arrival rate is the planning input.

VPM inverts this. The planning input is no longer volume; it is the interaction taxonomy — the catalogue of work types the operation actually handles, each classified by value, AI capability, handle time, skill requirements, and emergence probability. Each type flows to one of three pools. Headcount is solved per pool, then summed.

The inversion changes what practitioners build. Top-down asks: how big should the operation be? Bottom-up asks: what work is the operation doing, who (or what) should be doing it, and what does each pool need to run? The first question has an answer in volume. The second has an answer in structure.

This shift echoes a broader movement in operations research away from aggregate capacity toward differentiated service design. Rust and Huang (2012) demonstrated that firms maximizing lifetime customer value outperform those optimizing for cost efficiency alone, precisely because undifferentiated service destroys value at both extremes — over-serving low-value interactions and under-serving high-value ones.[2]

How Value Classification Works

The core intellectual move in VPM is to stop treating all interactions as equal. The principle is obvious in the abstract but systematically violated by every Erlang-based staffing model, which treats arrivals as a homogeneous Poisson stream.

What makes an interaction high-value

An interaction is high-value when mishandling it produces outsized negative consequences or handling it well produces outsized positive consequences. Four sub-dimensions compose the Value Score (each scored 0–10):

- CLV Impact — Does this interaction touch a high-lifetime-value customer, or does its resolution materially change a customer's future spend trajectory? A billing dispute from a customer with $50K annual spend scores differently from the same dispute type at $500.

- Revenue Opportunity — Does the interaction contain a cross-sell, upsell, save, or renewal moment? Retention calls during a cancellation window carry revenue opportunity that password resets do not.

- Churn Risk — What is the probability that poor handling triggers defection? Interactions that occur during "moments of truth" — first contact after onboarding, escalation after repeated failures, high-emotion complaint — carry elevated churn risk. Reichheld and Sasser (1990) showed a 5% improvement in retention can increase profits 25–85% depending on industry, making churn-adjacent interactions disproportionately valuable.[3]

- Customer Effort Score (CES) — How much effort must the customer expend to achieve resolution? Dixon, Toman, and DeLisi (2013) found that reducing customer effort is a stronger predictor of loyalty than delight, and that 96% of customers who go through a high-effort interaction become more disloyal.[4] Interactions where AI resolution is effortless (status checks, tracking) score low on CES concern; interactions where AI creates friction (complex disputes, emotional complaints) score high.

Each sub-dimension is scored twice: once for human handling, once for AI handling. The value differential — the gap between human and AI scores — is the signal that drives routing. A high absolute value score with zero differential (AI handles it just as well) routes to Pool AA. A high absolute value score with a large differential routes to Pool Spec.
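
The differential can be sketched in a few lines. This is a minimal illustration, not the scoring rubric itself: the `SubScores` class, the equal weighting of the four sub-dimensions, and the example scores are all assumptions, since this page does not state how the composite is weighted.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class SubScores:
    """The four Value Score sub-dimensions, each on a 0-10 scale."""
    clv_impact: float
    revenue_opportunity: float
    churn_risk: float
    customer_effort: float

    def composite(self) -> float:
        # Assumption: equal weights. The actual weighting is not given here.
        return mean([self.clv_impact, self.revenue_opportunity,
                     self.churn_risk, self.customer_effort])


def value_differential(human: SubScores, ai: SubScores) -> float:
    """Gap between the human-handled and AI-handled composite scores."""
    return human.composite() - ai.composite()


# Hypothetical scores for a retention call, scored once per handling mode.
human = SubScores(9.0, 8.0, 9.0, 8.0)
ai = SubScores(5.0, 3.0, 4.0, 4.0)
print(value_differential(human, ai))  # 4.5
```

A differential near zero suggests AI handling loses little value; a large positive differential is the signal that points toward Pool Spec.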

What makes an interaction low-value

Low-value interactions share three properties:

- Commoditized resolution — the answer is deterministic or near-deterministic (order status, balance inquiry, address change)

- Low consequence of error — if the AI gets it wrong, the cost of recovery is low (re-contact, not churn)

- High repeatability — the interaction follows a pattern with minimal variation, making AI capability scores reliably high

These interactions are the natural population for Pool AA. They are also the interactions that traditional Erlang models handle well — which is why VPM does not replace Erlang for organizations that have no AI workforce. It replaces Erlang only when the workforce becomes heterogeneous.

The scoring process

Scoring is not a one-time exercise. The interaction taxonomy is a living document that requires:

- Initial calibration from QA samples (minimum 200 interactions per type for statistical stability), CRM lifetime-value data, and contact-driver analysis

- Quarterly refresh as AI capabilities change — today's "AI cannot handle" is next quarter's "AI handles at 85%"

- Post-deployment recalibration using the Service Demand Rebound Model to capture emergent inquiry types that did not exist before AI deployment

The Routing Decision Framework

Once every interaction type has a Value Score and AI Capability score, routing follows a structured decision framework.

The primary routing heuristic

The default routing heuristic uses two dimensions to partition the space:

- Pool AA (Autonomous AI) — AI Capability > 80% AND Value Score ≤ 4

- Pool Spec (Specialist) — AI Capability < 30% OR Value Score ≥ 8

- Pool Collab (Collaborative) — everything else

This creates a two-dimensional decision space. The collaborative pool is the residual — it absorbs everything that is neither clearly autonomous nor clearly specialist. In practice, Pool Collab is often the largest pool by interaction volume in early deployments, shrinking as AI capabilities mature and more interaction types cross the 80% threshold.
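
The default heuristic translates directly into a small function. The sketch below assumes AI Capability is expressed as a fraction between 0 and 1; the function name `route` is illustrative, and the sample inputs are taken from the worked example later on this page.

```python
def route(ai_capability: float, value_score: float) -> str:
    """Default VPM routing heuristic.

    ai_capability: fraction in [0, 1]; value_score: composite on a 0-10 scale.
    """
    if ai_capability > 0.80 and value_score <= 4:
        return "Pool AA"
    if ai_capability < 0.30 or value_score >= 8:
        return "Pool Spec"
    return "Pool Collab"  # the residual pool


examples = {
    "Policy status inquiry": (0.95, 1.5),
    "First notice of loss (FNOL)": (0.55, 6.5),
    "Complex claims dispute": (0.15, 9.0),
}
for name, (capability, value) in examples.items():
    print(f"{name}: {route(capability, value)}")
```

Note that a type with very high AI Capability but a Value Score of 8 or more still lands in Pool Spec, because it fails the Pool AA value condition and meets the Pool Spec one.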

Why the thresholds are planning decisions, not laws

The 80/30/4/8 thresholds are motivated by Cobham's c-μ scheduling rule (prioritize work by the ratio of cost-of-delay to service rate) but lack a closed-form optimality guarantee.[5] Organizations calibrate them to local conditions:

- Risk-averse organizations (healthcare, financial services) raise the AI Capability threshold for Pool AA and lower the Value Score threshold for Pool Spec, shrinking the autonomous pool and expanding specialist coverage

- Cost-pressured organizations (high-volume retail, travel) lower the AI Capability threshold for Pool Spec and raise the Value Score ceiling for Pool AA, shrinking specialist coverage and expanding autonomous coverage

- Regulatory-constrained organizations may impose hard overrides: certain interaction types route to Pool Spec regardless of scores (e.g., complaints that trigger regulatory reporting obligations)
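
One way to make the thresholds explicit planning parameters is to carry them in a small configuration object alongside a set of hard overrides. The sketch below is illustrative: the `Thresholds` class, the `route_with_policy` function, the "stricter" values, and the "Regulatory complaint" type are hypothetical names and numbers, not part of the framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    aa_min_capability: float = 0.80    # Pool AA needs capability above this
    aa_max_value: float = 4.0          # ...and a Value Score at or below this
    spec_max_capability: float = 0.30  # Pool Spec if capability is below this
    spec_min_value: float = 8.0        # ...or Value Score is at or above this


def route_with_policy(type_name, ai_capability, value_score,
                      thresholds=Thresholds(), forced_spec=frozenset()):
    t = thresholds
    if type_name in forced_spec:  # hard override, scores are ignored
        return "Pool Spec"
    # Pool Spec is checked first so that, if custom thresholds ever overlap,
    # the more conservative pool wins (a design choice of this sketch).
    if ai_capability < t.spec_max_capability or value_score >= t.spec_min_value:
        return "Pool Spec"
    if ai_capability > t.aa_min_capability and value_score <= t.aa_max_value:
        return "Pool AA"
    return "Pool Collab"


# Hypothetical stricter profile: higher bar for autonomy, lower bar for specialists.
stricter = Thresholds(aa_min_capability=0.93, spec_min_value=6.0)

print(route_with_policy("Claims status check", 0.92, 2.0))            # Pool AA
print(route_with_policy("Claims status check", 0.92, 2.0, stricter))  # Pool Collab
print(route_with_policy("Regulatory complaint", 0.90, 3.0,
                        forced_spec=frozenset({"Regulatory complaint"})))  # Pool Spec
```

Keeping thresholds in data rather than in code makes each recalibration an auditable configuration change.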

Routing as portfolio optimization

The routing decision is structurally a portfolio allocation problem. Each interaction type is an "asset" with a cost profile, a quality profile, and a risk profile that depends on which pool handles it. The optimal routing minimizes expected cost subject to CX and EX constraints — or equivalently, maximizes expected CX subject to cost and EX constraints. This is the same multi-objective optimization that governs the system at the macro level, applied at the routing level.
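
For a handful of interaction types, the allocation can be illustrated by brute force. The sketch below enumerates every pool assignment and keeps the cheapest one whose volume-weighted CX meets a floor. It is a toy under stated assumptions: all costs, CX scores, and the CX floor are hypothetical, the EX constraint is omitted, and a real deployment would likely need a proper solver rather than enumeration.

```python
from itertools import product

POOLS = ("AA", "Collab", "Spec")

# Hypothetical per-interaction cost ($) and expected CX (0-10) by pool.
TYPES = {
    "status_inquiry": {"volume": 480,
                       "cost": {"AA": 0.5, "Collab": 3.0, "Spec": 9.0},
                       "cx": {"AA": 8.0, "Collab": 8.2, "Spec": 8.3}},
    "fnol":           {"volume": 310,
                       "cost": {"AA": 1.0, "Collab": 6.0, "Spec": 14.0},
                       "cx": {"AA": 4.0, "Collab": 7.5, "Spec": 8.5}},
    "claims_dispute": {"volume": 180,
                       "cost": {"AA": 2.0, "Collab": 12.0, "Spec": 25.0},
                       "cx": {"AA": 2.0, "Collab": 5.5, "Spec": 8.5}},
}


def best_assignment(types, cx_floor):
    """Cheapest assignment whose volume-weighted CX is at least cx_floor."""
    names = list(types)
    total_volume = sum(t["volume"] for t in types.values())
    best = None
    for pools in product(POOLS, repeat=len(names)):
        pairs = list(zip(names, pools))
        cost = sum(types[n]["volume"] * types[n]["cost"][p] for n, p in pairs)
        cx = sum(types[n]["volume"] * types[n]["cx"][p] for n, p in pairs) / total_volume
        if cx >= cx_floor and (best is None or cost < best[0]):
            best = (cost, dict(pairs))
    return best  # None when no assignment satisfies the floor


print(best_assignment(TYPES, cx_floor=7.5))
```

With these made-up numbers the cheapest feasible assignment sends status inquiries to Pool AA, FNOL to Pool Collab, and claims disputes to Pool Spec, which mirrors the pattern the heuristic produces.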

The Four Building Blocks

1. Interaction Taxonomy

Every interaction type is scored on five dimensions: Value Score (composite, 0–10), AI Capability (%), Handle Time, Skill Requirements, and Emergence Probability. The fifth dimension is structurally absent from traditional taxonomies and is the diagnostic for demand rebound — it forces planners to budget for inquiry types that do not exist pre-deployment.

The Value Score is itself a composite of four sub-dimensions: CLV Impact, Customer Effort Score (CES), Revenue Opportunity, and Churn Risk. Each is scored 0–10 for both human and AI handling; the differential drives the routing recommendation. The composite methodology, scoring rubrics, measurement protocols, and source literature are documented on the Value Routing Model page.

Data sources for the taxonomy: contact-center QA samples, IVR/digital logs, web analytics, email/social/chat archives, CRM lifetime-value data, and (for Emergence Probability) post-deployment contact-driver analysis.

2. Three-Pool Architecture

Each interaction type routes to exactly one of three pools:

- Pool AA — Autonomous AI. High AI Capability, low Value Score. Cost-modeled across five layers (initial investment, transaction, escalation, maintenance, rebound) — not the marginal-cost-only basis vendors typically present.

- Pool Collab — Collaborative. Mid-range AI Capability and Value Score. Staffed via the Cognitive Portfolio Model (N*) — humans monitoring N concurrent AI-handled interactions, with N solved as a fixed point.

- Pool Spec — Specialist. Low AI Capability, high Value Score. Staffed via simulation, not closed-form Erlang, because the workload that concentrates here is too heterogeneous for closed-form approximations.

The full architecture, escalation cascades, and a worked example are on the Three-Pool Architecture page.

3. Pool-specific staffing methodologies

The three pools run on three different staffing models because the work is structurally different:

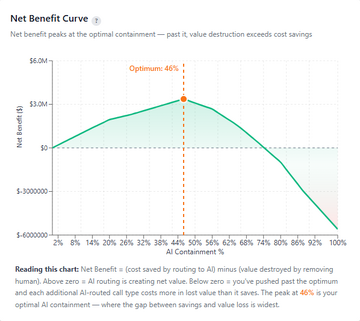

- Pool AA is a cost model, not a queuing model — but its full cost expression includes the escalation tax (cascade-adjusted expected cost) and the demand-rebound penalty.

- Pool Collab uses the Cognitive Portfolio fixed-point equation. The key insight: human agents in Pool Collab are not handling interactions sequentially — they are monitoring N concurrent AI sessions. The optimal N depends on AI reliability, intervention complexity, cognitive load ceiling, and the cost of missed interventions. This is structurally different from any Erlang model.

- Pool Spec uses discrete-event or Monte Carlo simulation over heterogeneous arrival and service distributions. The work that concentrates here is the longest, most variable, most skill-dependent in the operation.

A consequence: there is no single Erlang-C calculator for VPM. The "calculator" is a pipeline.
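
As one stage of that pipeline, the Pool Spec step can be sketched as a small queue simulation. The version below is deliberately simplified and is an assumption-laden illustration: a single skill group, stationary Poisson arrivals, lognormal handle times, first-come-first-served, and no abandonment. The function name and every parameter value are hypothetical.

```python
import heapq
import math
import random


def simulate_service_level(servers, arrivals_per_min, mean_aht_min, sigma,
                           threshold_min=20 / 60, n_calls=20_000, seed=7):
    """Fraction of calls answered within threshold_min (default 20 seconds)."""
    rng = random.Random(seed)
    # Choose mu so that the lognormal mean equals mean_aht_min.
    mu = math.log(mean_aht_min) - sigma ** 2 / 2
    free_at = [0.0] * servers  # min-heap of times at which each agent frees up
    clock = 0.0
    answered_in_time = 0
    for _ in range(n_calls):
        clock += rng.expovariate(arrivals_per_min)  # next Poisson arrival
        earliest_free = heapq.heappop(free_at)
        start = max(clock, earliest_free)           # wait if all agents are busy
        if start - clock <= threshold_min:
            answered_in_time += 1
        heapq.heappush(free_at, start + rng.lognormvariate(mu, sigma))
    return answered_in_time / n_calls


# Hypothetical load: 2 calls/min at a 20-minute mean handle time (40 Erlangs).
for agents in (42, 46, 50):
    sl = simulate_service_level(agents, 2.0, 20.0, sigma=0.8)
    print(f"{agents} agents -> {sl:.1%} answered within 20 s")
```

A production model would add time-varying arrivals, multiple interaction types with their own service distributions, abandonment, and shrinkage, which is why the pool is simulated rather than solved in closed form.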

4. Multi-objective governance layer

The governance layer replaces the single service-level metric with three coupled dimensions — Cost, Customer Experience (CX), Employee Experience (EX) — framed as a Pareto frontier optimization. Outputs are distributional, not point estimates: resolution rates as Beta, effort scores as Gamma, value-per-interaction as shifted lognormal, cost as a simulated distribution.
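
To show what distributional outputs look like in practice, the sketch below samples the three named distribution families with Python's standard library and reports P50 and P90. The shape parameters and the 5.0 shift are placeholders chosen for illustration, not calibrated values from the white paper.

```python
import random
from statistics import quantiles


def p50_p90(samples):
    cuts = quantiles(samples, n=10)  # nine cut points: P10, P20, ..., P90
    return cuts[4], cuts[8]


rng = random.Random(42)
n = 10_000

# Hypothetical parameters for each governance output.
resolution_rate = [rng.betavariate(80, 20) for _ in range(n)]          # Beta
effort_score = [rng.gammavariate(2.0, 1.5) for _ in range(n)]          # Gamma (shape, scale)
value_per_interaction = [5.0 + rng.lognormvariate(2.0, 0.6)            # shifted lognormal
                         for _ in range(n)]

for label, samples in [("resolution rate", resolution_rate),
                       ("effort score", effort_score),
                       ("value per interaction", value_per_interaction)]:
    p50, p90 = p50_p90(samples)
    print(f"{label}: P50={p50:.2f}, P90={p90:.2f}")
```

Reporting a P50/P90 pair instead of a single number is what lets the governance layer compare scenarios by their spread as well as their center.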

Drift signals trigger recalibration: if CX falls, re-solve N* and re-route borderline types; if cost rises, recalibrate the containment rate; if EX deteriorates, audit Pool Collab cognitive load. The loop is the Level 5 reach.

Integration with Adjacent Frameworks

VPM does not operate in isolation. It connects to several frameworks within the AI-augmented WFM ecosystem:

Three-Pool Architecture

The Three-Pool Architecture is VPM's structural backbone. VPM defines why interactions route where they do (value classification); the Three-Pool Architecture defines how each pool operates (staffing math, escalation cascades, capacity boundaries). The two are complementary halves of a single system.

Cognitive Portfolio Model (N*)

The Cognitive Portfolio Model (N*) is Pool Collab's staffing equation. It solves for the number of concurrent AI sessions a human agent can effectively monitor — a question that has no analog in traditional WFM. VPM feeds N* its inputs (the interaction types routed to Pool Collab, their AI reliability scores, their intervention complexity) and receives back a staffing requirement that accounts for cognitive load.
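
This page does not reproduce the N* equation, so the sketch below uses a stand-in model purely to show what "solving N as a fixed point" means. The assumption, which is not the white paper's formulation, is that each monitored session consumes `base_load * (1 + switch_penalty * (N - 1))` of an agent's attention, so the per-session load itself depends on N, and total attention is capped at `rho_max`. All parameter values are hypothetical.

```python
def solve_n_star(rho_max, base_load, switch_penalty,
                 n0=1.0, damping=0.5, tol=1e-9, max_iter=1000):
    """Damped fixed-point iteration on N = g(N) for the stand-in model."""

    def g(n):
        # Sessions that fit under the attention ceiling, given that each
        # session costs more attention as concurrency n rises.
        return rho_max / (base_load * (1.0 + switch_penalty * (n - 1.0)))

    n = n0
    for _ in range(max_iter):
        n_next = (1.0 - damping) * n + damping * g(n)
        if abs(n_next - n) < tol:
            return n_next
        n = n_next
    raise RuntimeError("fixed-point iteration did not converge")


# Hypothetical inputs: 85% attention ceiling, 15% base load per session,
# 10% extra load per additional concurrent session.
n_star = solve_n_star(rho_max=0.85, base_load=0.15, switch_penalty=0.10)
print(round(n_star, 2))
```

The damping term keeps the iteration from oscillating, since raising N increases per-session load, which in turn lowers the N that fits under the ceiling.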

AI Scaffolding Framework

The AI Scaffolding Framework governs how AI capabilities are deployed and matured within each pool. Pool AA interactions may begin with rule-based automation and graduate to agentic AI. Pool Collab interactions may begin with AI-assisted handling and graduate to autonomous handling as AI Capability scores cross the 80% threshold. The scaffolding framework sequences these transitions; VPM absorbs the routing consequences.

Workforce Planning with AI Agents

Workforce Planning with AI Agents addresses the operational mechanics of treating AI as a workforce pool — scheduling, capacity management, performance measurement. VPM provides the strategic layer (which work goes where); AI agent workforce planning provides the tactical layer (how many AI instances, at what concurrency, with what failover).

Workforce Cost Modeling

Workforce Cost Modeling supplies the cost-per-producing-FTE calculations that feed VPM's cost objective. Without accurate fully-loaded cost figures — including overhead allocation, shrinkage, attrition replacement cost, and training investment — the multi-objective optimization cannot produce meaningful Pareto frontiers.

Worked Example: Value-Based Decomposition of a Contact Center

Consider a mid-size insurance contact center handling 2.4M annual interactions across 14 identified interaction types. The traditional planning approach: forecast total volume by interval, apply Erlang-C with blended AHT, solve for headcount at 80/20 service level. Result: ~620 FTEs.

Step 1: Build the interaction taxonomy

The table lists six of the 14 interaction types; the pool totals in Step 2 include the types not shown.

| Interaction Type | Annual Volume | Value Score (0–10) | AI Capability | Avg. AHT | Emergence Probability | Pool Assignment |
|---|---|---|---|---|---|---|
| Policy status inquiry | 480K | 1.5 | 95% | 2.1 min | 0% | Pool AA |
| Claims status check | 360K | 2.0 | 92% | 2.8 min | 0% | Pool AA |
| First notice of loss (FNOL) | 310K | 6.5 | 55% | 14.2 min | 5% | Pool Collab |
| Coverage change request | 290K | 4.5 | 60% | 8.5 min | 3% | Pool Collab |
| Complex claims dispute | 180K | 9.0 | 15% | 28.5 min | 8% | Pool Spec |
| Cancellation / retention | 120K | 8.5 | 20% | 18.0 min | 12% | Pool Spec |

Step 2: Apply pool-specific staffing

Pool AA (840K interactions, ~35% of volume): Cost model. Five-layer cost per interaction: $0.12 transaction + $4.80 escalation cost × 8% escalation rate + maintenance amortization. No FTE headcount — this is infrastructure, not labor. But the rebound estimate adds ~6% net new volume (50K interactions) that must be budgeted across pools.
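
The Pool AA arithmetic above can be checked with a few lines. The sketch uses only the figures quoted in the paragraph; the maintenance amortization and initial investment layers are left at zero because no amounts are given, so the result is a partial cost, not the full five-layer figure.

```python
def expected_cost_per_interaction(transaction, escalation_cost,
                                  escalation_rate, maintenance=0.0):
    """Transaction cost plus probability-weighted escalation cost.

    maintenance defaults to 0.0 only because the source gives no figure.
    """
    return transaction + escalation_cost * escalation_rate + maintenance


pool_aa_volume = 840_000
per_interaction = expected_cost_per_interaction(0.12, 4.80, 0.08)
annual_cost = per_interaction * pool_aa_volume
rebound_volume = round(pool_aa_volume * 0.06)

print(f"expected cost per interaction: ${per_interaction:.3f}")  # $0.504
print(f"annual cost before maintenance: ${annual_cost:,.0f}")    # $423,360
print(f"rebound volume to budget: {rebound_volume:,}")           # 50,400
```

The escalation term more than quadruples the $0.12 transaction cost, which is the gap the escalation tax is meant to expose.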

Pool Collab (720K interactions, ~30% of volume): N* solved at 4.2 concurrent sessions per human agent (given 55–60% AI capability, moderate intervention rate). Effective throughput per agent-hour is ~2.8x traditional sequential handling. Staffing requirement: ~185 FTEs (vs. ~290 under traditional single-pool Erlang).

Pool Spec (840K interactions, ~35% of volume): Simulation over heterogeneous service-time distributions (lognormal with high variance). These are the longest, most complex interactions. Staffing requirement: ~310 FTEs at 80/20 service level (vs. ~330 under blended Erlang, because removing the short interactions from the pool changes the service-time distribution).

Step 3: Compare to traditional model

| Metric | Traditional (single pool) | VPM (three pools) |
|---|---|---|
| Total human FTEs | 620 | 495 |
| AI infrastructure cost | External (deflection layer) | Internalized (Pool AA + Pool Collab assist) |
| Service level approach | Single 80/20 target | Differentiated: Pool AA = resolution rate, Pool Collab = intervention SL, Pool Spec = 80/20 |
| Cost optimization | Minimize FTEs | Minimize total cost across Pareto frontier |
| CX risk | Uniform — high-value interactions get same service as low-value | Concentrated — Pool Spec gets disproportionate investment |

The 125-FTE reduction (~20%) is real but misleading if taken as the headline. The actual value of VPM is structural: high-value interactions now receive specialist handling instead of competing for attention with password resets in a blended queue. The CX improvement on high-value interactions is the primary benefit; the cost reduction is a secondary effect.
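
The headline numbers follow directly from the per-pool results in Step 2. A short check, using only figures stated in this worked example:

```python
traditional_ftes = 620
vpm_ftes = {"Pool AA": 0, "Pool Collab": 185, "Pool Spec": 310}
pool_volume_k = {"Pool AA": 840, "Pool Collab": 720, "Pool Spec": 840}

vpm_total = sum(vpm_ftes.values())
reduction = traditional_ftes - vpm_total
reduction_pct = 100 * reduction / traditional_ftes

total_volume_k = sum(pool_volume_k.values())
volume_share = {pool: 100 * v / total_volume_k for pool, v in pool_volume_k.items()}

print(vpm_total, reduction, round(reduction_pct, 1))  # 495 125 20.2
print(volume_share)  # 35% / 30% / 35% of 2.4M interactions
```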

Metrics and Measurement

VPM requires a measurement framework that goes beyond traditional service level and AHT.

Pool-level metrics

| Pool | Primary Metric | Secondary Metrics | Governance Signal |
|---|---|---|---|
| Pool AA | Containment rate (with quality gate) | Escalation rate, rebound volume, cost per resolution | Containment drift > 3pp triggers recalibration |
| Pool Collab | Intervention success rate | N* utilization, cognitive load index, intervention latency | N* drift > 0.5 sessions triggers re-solve |
| Pool Spec | Value-weighted resolution rate | CSAT on high-value interactions, first-contact resolution, effort score | Value-weighted CSAT drop > 5pp triggers routing audit |
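
The three governance signals in the table can be expressed as a simple check against a baseline. The sketch below is a minimal illustration: the dictionary keys, the choice to measure drift against a fixed baseline, and the sample readings are assumptions; only the three thresholds come from the table.

```python
def governance_triggers(baseline: dict, current: dict) -> list:
    """Return the recalibration actions triggered by the latest readings."""
    triggers = []
    # Containment drift in either direction, in percentage points.
    if abs(current["containment_pct"] - baseline["containment_pct"]) > 3.0:
        triggers.append("Pool AA: recalibrate containment")
    # N* drift in either direction, in concurrent sessions.
    if abs(current["n_star"] - baseline["n_star"]) > 0.5:
        triggers.append("Pool Collab: re-solve N*")
    # Only a drop in value-weighted CSAT triggers the audit.
    if baseline["vw_csat_pct"] - current["vw_csat_pct"] > 5.0:
        triggers.append("Pool Spec: routing audit")
    return triggers


# Hypothetical readings.
baseline = {"containment_pct": 78.0, "n_star": 4.2, "vw_csat_pct": 82.0}
current = {"containment_pct": 74.2, "n_star": 4.0, "vw_csat_pct": 79.0}
print(governance_triggers(baseline, current))  # ['Pool AA: recalibrate containment']
```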

System-level metrics

The governance layer tracks three coupled dimensions:

- Cost efficiency — total cost per value-weighted resolution (not cost per contact, which treats all interactions as equal)

- Customer experience — value-weighted CSAT, where high-value interactions carry 3–5x the weight of low-value interactions in the composite score

- Employee experience — cognitive load index for Pool Collab agents, skill utilization rate for Pool Spec agents, and overall attrition/engagement scores

These three dimensions form the Pareto frontier. Movement along the frontier is a strategic choice; movement away from it is a governance failure.
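
Value-weighted CSAT is a weighted mean in which high-value interactions count more. In the sketch below, the cutoff of 8 (borrowed from the default Pool Spec threshold) and the weight of 4 (inside the 3–5x range stated above) are illustrative assumptions, as are the sample records.

```python
def value_weighted_csat(records, high_value_cutoff=8.0, high_weight=4.0):
    """records: iterable of (value_score, csat) pairs on a consistent CSAT scale."""
    weighted_sum = 0.0
    weight_total = 0.0
    for value_score, csat in records:
        weight = high_weight if value_score >= high_value_cutoff else 1.0
        weighted_sum += weight * csat
        weight_total += weight
    if weight_total == 0:
        raise ValueError("no records supplied")
    return weighted_sum / weight_total


# Hypothetical sample: two low-value contacts went well, one high-value did not.
records = [(1.5, 90.0), (2.0, 88.0), (9.0, 60.0)]
unweighted = sum(csat for _, csat in records) / len(records)
print(round(unweighted, 1), round(value_weighted_csat(records), 1))  # 79.3 69.7
```

The same sample looks healthy on a plain average and noticeably worse once the high-value failure carries its weight, which is the behavior the composite is designed to surface.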

Leading vs. lagging indicators

Traditional WFM operates almost entirely on lagging indicators (service level achieved, AHT realized, cost incurred). VPM introduces leading indicators:

- AI Capability drift — if a model's capability score on a given interaction type drops (e.g., after a product change that invalidates training data), that type may need re-routing before CX impact materializes

- Taxonomy coverage gap — percentage of interactions that do not match any catalogued type, signaling either incomplete taxonomy or emergent demand

- Routing override rate — frequency of manual routing overrides, signaling that the heuristic thresholds may need recalibration
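
Two of these leading indicators are simple ratios over interaction logs. The sketch below assumes each interaction has already been labelled with a type and that routing logs record both the heuristic's pool and the final pool; the function names and sample data are hypothetical.

```python
def taxonomy_coverage_gap(observed_labels, catalogue):
    """Share of interactions whose label is not a catalogued type."""
    if not observed_labels:
        return 0.0
    unmatched = sum(1 for label in observed_labels if label not in catalogue)
    return unmatched / len(observed_labels)


def routing_override_rate(decisions):
    """decisions: list of (heuristic_pool, final_pool) pairs."""
    if not decisions:
        return 0.0
    overridden = sum(1 for heuristic_pool, final_pool in decisions
                     if heuristic_pool != final_pool)
    return overridden / len(decisions)


catalogue = {"policy_status", "claims_status"}
labels = ["policy_status", "claims_status", "unrecognized", "policy_status"]
decisions = [("Pool AA", "Pool AA"), ("Pool Collab", "Pool Spec"),
             ("Pool Spec", "Pool Spec"), ("Pool AA", "Pool Collab")]

print(taxonomy_coverage_gap(labels, catalogue))  # 0.25
print(routing_override_rate(decisions))          # 0.5
```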

Implementation Roadmap

Phase 1: Taxonomy Construction (Weeks 1–6)

- Audit QA samples (minimum 200 per interaction type) to identify and catalogue interaction types

- Score each type on the five dimensions using cross-functional teams (WFM, QA, CX, Finance)

- Validate CLV Impact scores against CRM data; validate AI Capability scores against pilot data or vendor benchmarks

- Output: interaction taxonomy with pool assignments

Phase 2: Pool AA Pilot (Weeks 4–12, overlaps Phase 1)

- Deploy AI on highest-confidence Pool AA interaction types (AI Capability > 90%, Value Score ≤ 2)

- Instrument five-layer cost model; measure actual escalation rates and rebound volume

- Calibrate the interior cost optimum — find the containment rate that minimizes total cost, not the one that maximizes containment

- Output: validated Pool AA cost model, calibrated escalation tax

Phase 3: Pool Collab Design (Weeks 8–16)

- Design the human-AI collaborative workflow for Pool Collab interaction types

- Estimate N* parameters from analogous domains or small-scale pilots

- Build the monitoring interface — agents need real-time visibility into AI session states

- Output: Pool Collab operating model, initial N* estimate

Phase 4: Full Three-Pool Operation (Weeks 14–24)

- Migrate all interaction types to assigned pools

- Stand up differentiated service-level targets per pool

- Implement routing logic in the ACD/orchestration layer

- Output: operational three-pool architecture

Phase 5: Governance Layer (Weeks 20–30)

- Define drift thresholds for each governance signal

- Implement automated monitoring dashboards

- Establish recalibration cadences (weekly for Pool AA containment, monthly for N*, quarterly for taxonomy refresh)

- Output: closed-loop governance system

Prerequisites checklist

The implementation roadmap assumes the organization has achieved Level 3 maturity. Specific prerequisites:

- Probabilistic Forecasting in production (not just point estimates)

- Variance Harvesting infrastructure (the ability to decompose forecast error by source)

- Simulation-grade capacity planning (discrete-event or Monte Carlo, not just Erlang calculators)

- CRM data integrated with contact center data (for CLV Impact scoring)

- AI deployment in at least pilot stage (for AI Capability scoring from real data, not vendor claims)

What the Framework Replaces

| Dimension | Traditional | Value-Based Planning |
|---|---|---|
| Planning input | Volume forecast | Interaction taxonomy |
| Staffing math | Erlang-C / Erlang-A / multi-skill simulation | Three pool-specific models, summed |
| Output form | Point estimate ("287 FTEs") | Distributional ("P50 / P90 staffing across CX scenarios") |
| Optimization target | Service level (single metric) | Cost / CX / EX (Pareto frontier) |
| AI's role | Deflection layer above the operation | Third workforce pool inside the operation |
| Cost modeling | Marginal AI cost vs. fully-loaded human cost | Five-layer AI cost (incl. escalation tax + rebound) |
| Demand model | Volume + AHT | Volume + AHT + emergent inquiry types + rebound elasticity |
| Value differentiation | None (all interactions treated equally) | Explicit (4-dimension Value Score drives routing) |
| Employee experience | Implicit (managed through scheduling) | Explicit (third optimization dimension, cognitive load tracked) |

Maturity Prerequisites and Position

VPM is a Level 4 framework. The architecture cannot be operated at Level 1 or Level 2 — there is no interaction taxonomy, no per-type cost modeling, no probabilistic forecasting. Level 3 organizations are the natural population for adoption: they have broken the staffing monolith, instrumented variance, and adopted probabilistic outputs, but they still typically run a single pool against a single service-level metric.

The Level 4 transition is structural, not incremental. Before: one pool, one metric, one staffing methodology. After: three pools, three coupled metrics, three staffing methodologies, and a governance layer that recalibrates the system when outcomes drift.

Level-by-level readiness

- Level 1 — Initial (Emerging Operations) — VPM is unreachable. No interaction taxonomy, no per-type costing, no probabilistic outputs, no instrumentation for variance.

- Level 2 — Foundational (Traditional WFM Excellence) — VPM is unreachable. Operation runs on point-estimate forecasts and a single Erlang-C-based staffing model against a single service-level metric. The framework's premise (interaction taxonomy as planning input) is invisible from inside this paradigm.

- Level 3 — Progressive (Breaking the Monolith) — VPM is the natural next destination. Level 3 organizations have already adopted Probabilistic Forecasting and Variance Harvesting; the missing pieces are the interaction taxonomy, three-pool architecture, and pool-specific staffing models.

- Level 4 — Advanced (The Ecosystem Emerges) — VPM is the canonical operating model. All four building blocks are in place. Outputs are distributional. AI is treated as a workforce pool, not a deflection layer.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — VPM is extended via closed-loop governance: drift signals trigger model recalibration without human intervention, and the Cognitive Portfolio Model (N*) is empirically calibrated against in-house data rather than estimated.

Specific Level 5 reaches present in the framework but awaiting empirical calibration:

- The Cognitive Portfolio Model (N*) — Pool Collab's staffing equation — is novel. No Level 1–3 analog exists.

- Closed-loop governance — using drift signals across cost, CX, and EX to trigger model recalibration — is the Level 5 signature.

Practitioner Playbook

For a Level 3 organization moving toward Level 4 adoption:

1. Start with the taxonomy, not the math. Catalogue interaction types from QA samples and contact-driver analysis. Score each on the five dimensions. This is the work; the rest follows from it.

2. Use the Value Routing Model to score Value. The composite (CLV / CES / Revenue / Churn) is more defensible than a single 0–10 expert estimate, and the differential between human and AI scores per dimension is what actually drives routing.

3. Apply the routing heuristic before doing any math. Sort interaction types into the three pools. Discover what the Pool AA volume actually is — it is rarely the vendor-promised number.

4. Model Pool AA's full cost. Apply the escalation tax formula. Apply the demand-rebound discount to projected savings. Find the interior cost optimum — it is rarely 100% containment.

5. Solve N* for Pool Collab. Use the Cognitive Portfolio fixed-point equation with calibrated estimates for the five parameters. Run sensitivity over ρ_max and intervention rate.

6. Simulate Pool Spec. Use existing simulation tooling; the work that concentrates here is the longest, most heterogeneous, most variance-rich portion of the operation.

7. Stand up the governance layer. Define drift signals for cost, CX, and EX. Schedule recalibration cadences. Treat the three metrics as coupled, not independent.

The first three steps are accessible to any Level 3 organization. Steps 4–7 require simulation-grade infrastructure.

Limitations and Research Agenda

The white paper enumerates the following limitations:

- Cognitive Portfolio parameters await empirical calibration in contact-center contexts. Cross-domain analogs (air traffic control, ICU monitoring) inform the parameter ranges; direct contact-center calibration is open research. Wickens' multiple resource theory provides the cognitive architecture but does not supply contact-center-specific parameter values.[6]

- Three-pool implementation evidence is thin. The architecture is mathematically coherent and operationally implementable, but no published large-scale implementation exists yet.

- Routing heuristic is not optimal. The default thresholds are motivated by Cobham's c-μ scheduling rule but lack a closed-form optimality guarantee.

- Complexity Premium needs better empirical anchoring. The 5–8% AHT increase per 10pp containment is reasonable as a planning estimate but is not yet empirically pinned for contact-center work.

- Demand rebound elasticities are short-run. Long-run elasticities (which transportation and energy economics consistently find to be larger) are not yet measured for service operations. Hymel, Small, and Van Dender (2010) found long-run rebound effects 2–3x larger than short-run in transportation — a pattern that may hold for service demand.[7]

- Value Score subjectivity. While the four-dimension composite is more rigorous than a single expert estimate, CLV Impact and Churn Risk scores still depend on the quality of CRM data integration. Organizations with poor CRM-to-contact-center data linkage will produce noisy Value Scores.

See Also

- Service Demand Rebound Model — why deflection systematically misses its savings

- Three-Pool Architecture — Pool AA, Pool Collab, Pool Spec

- Cognitive Portfolio Model (N*) — Pool Collab's staffing equation

- The Escalation Tax — the gap between marginal AI cost and expected AI cost

- Interior Optimum (containment rate) — the U-curve in total cost over containment rate

- Value Routing Model — composite Value Score methodology

- WFM Labs Maturity Model™ — the five-level framework VPM anchors at Level 4

- WFM Ecosystem Architecture — the four-pillar architecture VPM operates inside

- Probabilistic Forecasting — Level 3 prerequisite

- Variance Harvesting — Level 3 prerequisite

- Multi-Objective Optimization in Contact Center — the optimization basis for the governance layer

- Discrete-Event vs. Monte Carlo Simulation Models — Pool Spec's staffing basis

- Workforce Cost Modeling — the cost-per-producing-FTE frame that feeds the VPM cost objective

- AI in Workforce Management — the broader ecosystem context

- AI Scaffolding Framework — how AI capabilities mature within each pool

- Workforce Planning with AI Agents — tactical AI workforce management

- Capacity Planning Methods — the capacity planning methods VPM draws from

- Service Level — the metric VPM replaces with a multi-objective frontier

Interactive Tools

- Service Model Simulator — servicemodel.wfmlabs.com. The interactive companion that lets practitioners sweep volume, handle time, agent cost, BPO rates, and AI capability across in-house, outsourced, and AI-automated service models. It produces the side-by-side cost / quality / scalability comparison that grounds VPM pool-mix decisions before the full Three-Pool Architecture is applied.

References

1. Lango, T. (2026). Value-Based Models for Customer Operations — From Traditional Queuing to Bottom-Up Value Planning. WFM Labs white paper. 207 sources, 600+ evidence claims, 94 calibrated parameters.

2. Rust, R. T. & Huang, M.-H. (2012). "Optimizing Service Productivity." Journal of Marketing, 76(2), 47–66.

3. Reichheld, F. F. & Sasser, W. E. (1990). "Zero Defections: Quality Comes to Services." Harvard Business Review, 68(5), 105–111.

4. Dixon, M., Toman, N., & DeLisi, R. (2013). The Effortless Experience. Portfolio/Penguin.

5. Cobham, A. (1954). "Priority Assignment in Waiting Line Problems." Journal of the Operations Research Society of America, 2(1), 70–76.

6. Wickens, C. D. (2008). "Multiple Resources and Mental Workload." Human Factors, 50(3), 449–455.

7. Hymel, K. M., Small, K. A., & Van Dender, K. (2010). "Induced Demand and Rebound Effects in Road Transport." Transportation Research Part B, 44(10), 1220–1241.