Probabilistic Forecasting

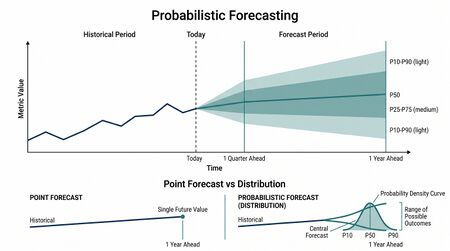

Probabilistic Forecasting produces forecasts as distributions rather than single numbers. Where a point forecast says "we expect 412 calls next Tuesday," a probabilistic forecast says "next Tuesday's call volume will be between 380 and 460 with 80% confidence; here's the full distribution." The shift from point forecasts to probabilistic forecasts is the operational expression of the Risk-Aware Planning principle — capacity decisions made on ranges, not on point estimates.

This page documents how probabilistic forecasts are produced, how they are evaluated, and how WFM organizations operationalize them in practice.

Why probabilistic forecasts matter

A point forecast is incomplete. The future is uncertain: the same forecast process, applied to many comparable series, produces errors of varying magnitude. The point forecast is only the central estimate of the distribution of plausible futures; the distribution itself is what matters for decision-making.

Three operational consequences:

- Capacity planning conversations change. "We need 412 FTE" produces a budget argument. "We need 380-460 FTE with 80% confidence; here are the risk drivers" produces a risk-management conversation.

- Service-level reliability becomes computable. Given the demand distribution, you can compute the probability of meeting service level under any given staffing plan. This reverses the usual question: instead of "what's our average service level under this plan," it asks "how likely is this plan to hit the target."

- Variance harvesting becomes principled. Variance Harvesting depends on knowing not just expected variance but the distribution — when variance falls within the expected range, automation activates harvesting moves; when it falls outside, exception escalation kicks in.

The skill: producing probabilistic forecasts that are honest about uncertainty without being so wide they're useless.
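To make the "service-level reliability becomes computable" point concrete, here is a minimal sketch. It assumes demand is available as simulated samples and that a staffing plan can be summarized as a maximum volume it can absorb while still meeting service level; the function name, the lognormal demand, and the capacity figures are all hypothetical.

```python
import random

def probability_plan_holds(demand_samples, plan_capacity):
    """Share of simulated demand outcomes that the plan can absorb."""
    covered = sum(1 for d in demand_samples if d <= plan_capacity)
    return covered / len(demand_samples)

rng = random.Random(42)
# Hypothetical right-skewed demand distribution with a median near 412 calls.
demand = [rng.lognormvariate(6.02, 0.08) for _ in range(20_000)]

# Compare three hypothetical plans by the probability that each one holds.
for capacity in (412, 440, 460):
    print(capacity, round(probability_plan_holds(demand, capacity), 3))
```

A real implementation would replace the capacity threshold with an Erlang or simulation step that maps each demand sample to an achieved service level.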

Three forms of probabilistic output

Prediction intervals

A range with associated confidence: "80% PI of [380, 460]" means the actual is expected to fall in [380, 460] with 80% probability. PIs are summarized by their boundaries.

Quantile forecasts

The distribution at named percentiles: P10, P50, P80, P90, P95. The P50 is the median (which coincides with the mean when the distribution is symmetric); P80 is the value the actual is expected to exceed only 20% of the time. Capacity planning to P80 means staffing for the 80th-percentile demand.

Full predictive distributions

The full probability density over possible future values. Mathematically the most complete; practically used as the input to PI / quantile computation.

In WFM practice, quantile forecasts are the most useful form: they map directly onto risk-tolerance decisions. P80 vs P95 vs P99 capacity plans are different conversations with different cost-vs-reliability tradeoffs.
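As an illustration of how the three forms relate, the sketch below reads named quantiles off a set of samples standing in for a full predictive distribution. The sample generator and its parameters are hypothetical; only the standard library is used.

```python
import random
import statistics

def quantile_forecast(samples, levels=(10, 50, 80, 90, 95)):
    """Read named percentiles off simulated or historical outcomes."""
    cuts = statistics.quantiles(samples, n=100)  # 99 cut points: P1 .. P99
    return {f"P{p}": cuts[p - 1] for p in levels}

rng = random.Random(1)
# Hypothetical predictive samples for one day's call volume (right-skewed).
samples = [rng.lognormvariate(6.0, 0.1) for _ in range(10_000)]

plan = quantile_forecast(samples)
for name, value in plan.items():
    print(name, round(value))
```

The P10 and P90 values from the same call also give an 80% prediction interval, which is why quantiles are a convenient common currency.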

Producing probabilistic forecasts from existing methods

Most forecasting methods have an associated error model. The methods on the linked pages — ETS, ARIMA, regression — produce both a point forecast and an estimate of forecast variance.

Gaussian assumption (default)

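If the forecast errors are assumed Normally distributed, the h-step-ahead prediction interval is:

$$\hat{y}_{t+h} \pm z_{\alpha/2}\,\hat{\sigma}_h$$

where $\hat{y}_{t+h}$ is the point forecast, $\hat{\sigma}_h$ is the estimated standard deviation of the h-step forecast error, and $z_{\alpha/2}$ is the standard normal quantile (1.96 for 95% PI; 1.28 for 80% PI).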

This works when the assumption holds — typically true for well-behaved smooth series with the trend and seasonal structure modeled. For an ETS or ARIMA model with reasonable residuals, the built-in PI from the model fitting is a Gaussian PI of this form.
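A minimal sketch of the Gaussian interval: point forecast plus or minus a standard normal quantile times the forecast standard deviation. The forecast value and standard deviation below are hypothetical inputs; in practice both come from the fitted model.

```python
from statistics import NormalDist

def gaussian_prediction_interval(point_forecast, forecast_sd, coverage=0.80):
    """Symmetric PI under a Normal error assumption."""
    z = NormalDist().inv_cdf(0.5 + coverage / 2)  # about 1.28 for 80%, 1.96 for 95%
    margin = z * forecast_sd
    return point_forecast - margin, point_forecast + margin

# Hypothetical inputs: point forecast of 412 calls, forecast sd of 30 calls.
for coverage in (0.80, 0.95):
    lower, upper = gaussian_prediction_interval(412, 30, coverage)
    print(f"{coverage:.0%} PI: [{lower:.0f}, {upper:.0f}]")
```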

Bootstrap prediction intervals

When the Gaussian assumption fails — non-normal residuals, heavy tails, skewed errors — bootstrap PIs are more reliable.

The procedure: resample the in-sample residuals many times, simulate forward forecasts using each resampled residual sequence, and read off the quantiles of the simulated forecast paths.

In WFM, residuals from contact-volume models are often skewed (volume can spike up much further than it can dip down). Bootstrap PIs preserve that asymmetry where Gaussian PIs do not.
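The sketch below is a deliberately simplified, one-step-ahead version of the bootstrap procedure: it adds resampled in-sample residuals to a single point forecast and reads off the quantiles. A multi-step version would feed each resampled residual sequence through the model. The residual values and the function name are hypothetical.

```python
import random
import statistics

def bootstrap_prediction_interval(point_forecast, residuals, coverage=0.80,
                                  n_draws=10_000, seed=0):
    """One-step bootstrap PI: resample residuals, then read off quantiles."""
    rng = random.Random(seed)
    simulated = [point_forecast + rng.choice(residuals) for _ in range(n_draws)]
    cuts = statistics.quantiles(simulated, n=1000)  # 999 cut points
    tail = (1 - coverage) / 2
    # Indexing assumes coverage is between roughly 0.002 and 0.998.
    lower = cuts[round(tail * 1000) - 1]
    upper = cuts[round((1 - tail) * 1000) - 1]
    return lower, upper

# Hypothetical right-skewed residuals (actual minus forecast) from a volume model.
residuals = [-30, -22, -18, -12, -8, -5, -2, 0, 4, 9, 15, 28, 47, 80]
lower, upper = bootstrap_prediction_interval(400, residuals)
print(f"80% bootstrap PI: [{lower:.0f}, {upper:.0f}]")
```

Because the residuals are skewed upward, the resulting interval extends further above the point forecast than below it, which a symmetric Gaussian interval cannot do.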

Conformal prediction

A modern approach (active research area; growing software support) that produces PIs with finite-sample coverage guarantees regardless of the underlying error distribution, provided the calibration errors and the future errors are exchangeable.

The idea: hold out a calibration set; compute residuals on the calibration set; the empirical residual quantiles define the PI margins for new forecasts.

For WFM contexts where the error distribution is unknown or changes over time, conformal prediction provides honest PIs without distributional assumptions. Implementation is straightforward in modern Python forecasting stacks (statsforecast, mapie).
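A plain-Python sketch of the split-conformal idea, using absolute residuals on a calibration set as the conformity score. This is not the statsforecast or mapie API; the function names and the calibration data are hypothetical, and the coverage guarantee assumes the calibration errors and the future errors are exchangeable, which drifting time series can violate.

```python
import math

def conformal_margin(calibration_actuals, calibration_forecasts, coverage=0.80):
    """Margin from the finite-sample-corrected quantile of absolute residuals."""
    scores = sorted(abs(a - f)
                    for a, f in zip(calibration_actuals, calibration_forecasts))
    n = len(scores)
    rank = math.ceil((n + 1) * coverage)  # the (n + 1) term is the finite-sample correction
    if rank > n:
        return math.inf  # too few calibration points for this coverage level
    return scores[rank - 1]

def conformal_interval(point_forecast, margin):
    return point_forecast - margin, point_forecast + margin

# Hypothetical held-out calibration data: actuals and the forecasts made for them.
actuals = [402, 395, 431, 388, 420, 455, 410, 399, 441]
forecasts = [400, 405, 415, 395, 410, 425, 412, 408, 420]
margin = conformal_margin(actuals, forecasts, coverage=0.80)
print(conformal_interval(412, margin))
```

With only nine calibration points a 95% interval is not attainable, and the function returns an infinite margin rather than an under-covering one.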

Evaluating probabilistic forecasts

Standard accuracy metrics evaluate point forecasts. Probabilistic forecasts need different metrics.

Pinball loss (quantile loss)

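For a quantile forecast $\hat{q}_\tau$ at level $\tau$ and a realized actual $y$:

$$L_\tau(y, \hat{q}_\tau) = \begin{cases} \tau\,(y - \hat{q}_\tau) & \text{if } y \ge \hat{q}_\tau \\ (1 - \tau)\,(\hat{q}_\tau - y) & \text{if } y < \hat{q}_\tau \end{cases}$$

Pinball loss is asymmetric. For a P80 forecast ($\tau = 0.8$), an actual that lands above the forecast costs $0.8 \times |y - \hat{q}_\tau|$, while an actual that lands below it costs $0.2 \times |y - \hat{q}_\tau|$. The asymmetry matches the asymmetry of the quantile being forecast.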

Average pinball loss across many forecast periods is the right metric for evaluating quantile forecast quality.
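A minimal sketch of pinball loss using the standard definition: an actual above the quantile forecast is charged tau per unit, and an actual below it is charged (1 - tau) per unit. The example values are hypothetical.

```python
def pinball_loss(actual, quantile_forecast, tau):
    """Pinball (quantile) loss for one period at quantile level tau."""
    diff = actual - quantile_forecast
    return tau * diff if diff >= 0 else (tau - 1) * diff

def mean_pinball_loss(actuals, quantile_forecasts, tau):
    """Average pinball loss across forecast periods."""
    losses = [pinball_loss(a, q, tau) for a, q in zip(actuals, quantile_forecasts)]
    return sum(losses) / len(losses)

# Hypothetical P80 forecasts and realized volumes for four periods.
p80_forecasts = [440, 450, 430, 460]
actuals = [450, 430, 430, 480]
print(mean_pinball_loss(actuals, p80_forecasts, tau=0.8))
```

For a P80 forecast, an actual 10 units above the forecast costs 8, while an actual 10 units below costs 2.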

Continuous Ranked Probability Score (CRPS)

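For full distributional forecasts, CRPS measures the integrated squared error between the forecast CDF $F$ and the empirical step function at the realized actual $y$:

$$\mathrm{CRPS}(F, y) = \int_{-\infty}^{\infty} \left( F(x) - \mathbf{1}\{x \ge y\} \right)^2 \, dx$$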

Lower is better. For a point forecast, CRPS reduces to the absolute error, so its average across periods is the MAE; in that sense it generalizes the standard accuracy metric.
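When the forecast distribution is available as samples, CRPS can be estimated with the well-known identity CRPS = E|X - y| - 0.5 E|X - X'|, where X and X' are independent draws from the forecast. The sketch below applies that identity to the empirical distribution of the samples; the function name and example values are hypothetical, and the double loop is quadratic in the number of samples.

```python
def crps_from_samples(samples, actual):
    """Sample-based CRPS: accuracy term minus half the expected spread."""
    m = len(samples)
    accuracy = sum(abs(x - actual) for x in samples) / m
    spread = sum(abs(a - b) for a in samples for b in samples) / (2 * m * m)
    return accuracy - spread

# A single-sample "distribution" is a point forecast: CRPS equals absolute error.
print(crps_from_samples([412.0], 430.0))

# A hypothetical small ensemble around the same center.
print(crps_from_samples([390.0, 405.0, 412.0, 425.0, 450.0], 430.0))
```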

Coverage and reliability

A 90% PI should contain the actual 90% of the time. If your "90% PI" contains the actual only 70% of the time, the PI is mis-calibrated — usually too narrow. Coverage diagnostics on held-out data are the basic reliability check.

A reliable probabilistic forecast can still be wide (wide PIs that always cover); a narrow forecast that often misses is over-confident. The trade-off is captured by metrics like CRPS that combine reliability and sharpness.
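A coverage diagnostic is simple to compute on held-out data. The sketch below reports empirical coverage alongside mean interval width, so that reliability and sharpness are read together; the data are hypothetical.

```python
def empirical_coverage(actuals, lowers, uppers):
    """Share of held-out actuals that fell inside their prediction interval."""
    hits = sum(1 for a, lo, hi in zip(actuals, lowers, uppers) if lo <= a <= hi)
    return hits / len(actuals)

def mean_interval_width(lowers, uppers):
    """Average PI width: a simple sharpness measure."""
    return sum(hi - lo for lo, hi in zip(lowers, uppers)) / len(lowers)

# Hypothetical held-out actuals and the nominal 80% PIs issued for them.
actuals = [410, 455, 390, 470, 405, 430, 380, 445, 500, 415]
lowers = [380, 385, 375, 390, 380, 385, 370, 390, 395, 380]
uppers = [460, 465, 450, 465, 455, 460, 445, 470, 475, 460]

print("coverage:", empirical_coverage(actuals, lowers, uppers))
print("mean width:", mean_interval_width(lowers, uppers))
```

If coverage on held-out data sits well below the nominal level, the intervals are too narrow, whatever the model reports in-sample.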

Common WFM pitfalls

- Treating the model's default PI as accurate. WFM software platforms ship with PIs based on Gaussian assumptions and in-sample fit. On out-of-sample data with structural changes, those PIs are often badly mis-calibrated. Run coverage diagnostics on held-out data before trusting them.

- Using point forecasts for capacity decisions when uncertainty is large. If the 80% PI on a skill is [200, 600], staffing to the point forecast of 400 produces a fragile plan that breaks under modest variance. Plan to P80 (roughly 530 in this example if the errors are symmetric and Gaussian) and accept the over-staffing in expected value to gain the reliability.

- Confusing PI for confidence interval. A confidence interval on a model parameter expresses uncertainty about the estimate; a prediction interval expresses uncertainty about the future realization. They are not the same, and PIs are typically wider than CIs.

- Ignoring asymmetry. Volume distributions are usually right-skewed; Gaussian PIs treat the tails symmetrically and underestimate the upper tail. For capacity planning, the upper tail is what matters most.
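For the [200, 600] example in the list above, the sketch below backs out a P80 from the 80% PI under the assumption that the interval is symmetric and Gaussian. That assumption is for illustration only; for right-skewed volume the true P80 would differ, which is the point of the asymmetry pitfall. The function name is hypothetical.

```python
from statistics import NormalDist

def gaussian_quantile_from_interval(lower, upper, coverage, target_level):
    """Recover a quantile from a central PI, assuming symmetric Gaussian errors."""
    center = (lower + upper) / 2
    z_edge = NormalDist().inv_cdf(0.5 + coverage / 2)  # z at the PI's upper edge
    sd = (upper - center) / z_edge
    return NormalDist(center, sd).inv_cdf(target_level)

p80 = gaussian_quantile_from_interval(200, 600, coverage=0.80, target_level=0.80)
print(round(p80))  # lands near 531 under the Gaussian assumption
```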

Connection to capacity planning

Probabilistic forecasting is the layer between standard forecasting and the Pillar 3 — Advanced Capacity Planning discipline. Single-point estimates feeding into Erlang or simulation produce single-point capacity plans; probabilistic forecasts feeding the same engines produce capacity plans with explicit risk profiles.

The downstream conversation: "what risks are we comfortable resourcing for, and what risks do we accept?" — exactly the conversation risk-aware planning frames as the upgrade over precision-theater capacity planning.

Connection to variance harvesting

Variance Harvesting depends on probabilistic forecasts to define the "expected variance window." When intraday variance falls within the 80% PI, the system runs harvesting moves (coaching, training, VTO) automatically. When variance falls outside the PI — an unforecasted event — automation surfaces an exception for human handling.

Without a probabilistic forecast, "expected variance" is a vague concept. With one, it is computable, calibrated, and operationalized.
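The routing rule described in this section can be sketched as a simple classifier. The labels, the function name, and the interval bounds are hypothetical; a production system would add hysteresis and time-of-day context.

```python
def classify_interval(actual, pi_lower, pi_upper):
    """Route an intraday observation based on the forecast's 80% PI."""
    if pi_lower <= actual <= pi_upper:
        return "harvest"    # inside the expected variance window: automate
    return "exception"      # outside the window: escalate to a human

# Hypothetical 80% PI for one interval: [38, 52] contacts.
for observed in (41, 52, 67, 30):
    print(observed, classify_interval(observed, 38, 52))
```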

Distributional outputs in the Value-Based Planning Model

The Value-Based Planning Model consumes distributional outputs as the primary feed into its multi-objective governance layer. Three distribution families anchor the framework, each chosen to match the structural shape of the underlying quantity rather than for analytical convenience.[1]

Beta-distributed resolution rates. Pool AA (Autonomous AI) containment and Pool Collab first-contact resolution are bounded on [0, 1] and exhibit asymmetric uncertainty — high-confidence containment estimates near the upper bound have less variance than estimates near the middle. The Beta distribution natively encodes this. Modeling resolution as Beta(α, β) rather than Gaussian preserves the bounded-asymmetric shape and prevents the governance layer from sampling impossible values (containment > 1 or < 0) during scenario sweeps.

Gamma-distributed effort scores. Customer effort and agent cognitive load are non-negative, right-skewed quantities with a long upper tail. Gamma is the natural family. The shape parameter encodes the tail thickness — heavy-tail effort regimes (where occasional contacts are far harder than the median) are exactly the cases where Gaussian effort assumptions produce dangerously narrow planning intervals.

Shifted-lognormal value-per-interaction. Value per interaction has a floor (no contact is worth less than its handling cost) and an unbounded right tail (a single retention save or upsell can be worth thousands). The shifted lognormal — lognormal shifted by the floor — is the closed-form match. Aggregating value across the Three-Pool Architecture requires this distributional shape; treating value as Gaussian or as a point estimate hides the long-tail interactions that disproportionately drive Pool Spec ROI.

The governance layer simulates Cost / CX / EX outcomes by sampling jointly from these distributions. The Pareto frontier described in Multi-Objective Optimization in Contact Center is computed against the distributional outputs, not against point estimates, which is what allows the framework to report expected outcomes alongside risk-adjusted operating points.
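A minimal sketch of drawing scenarios from the three distribution families named above. All parameter values are hypothetical, and the three quantities are drawn independently for simplicity; a genuinely joint simulation would also need to model the dependence between them, which the source does not specify.

```python
import random

def sample_scenario(rng, value_floor=4.0):
    """One scenario draw: (containment rate, effort score, value per interaction)."""
    containment = rng.betavariate(18, 6)                # bounded on [0, 1]
    effort = rng.gammavariate(2.0, 1.5)                 # shape 2.0, scale 1.5; right-skewed
    value = value_floor + rng.lognormvariate(1.0, 0.9)  # floor plus lognormal tail
    return containment, effort, value

rng = random.Random(7)
scenarios = [sample_scenario(rng) for _ in range(5_000)]

# Sanity check the structural properties each family is meant to guarantee.
print(min(c for c, _, _ in scenarios), max(c for c, _, _ in scenarios))
print(min(e for _, e, _ in scenarios))
print(min(v for _, _, v in scenarios))
```

No draw can produce containment outside [0, 1], negative effort, or value below the floor, which is the property a Gaussian substitute would not guarantee.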

N* as a sensitivity band

The Cognitive Portfolio Model (N*) reports its core staffing parameter — the cognitive concurrency limit — as a sensitivity band, not a point estimate. This is the same probabilistic discipline applied to the human-AI portfolio staffing problem. N* is the fixed-point solution of an equation in five parameters (intervention rate, intervention duration, switching cost, monitoring overhead, sustainable utilization), and three of those parameters await empirical calibration in contact-center contexts. Reporting N* as "12 to 28, with the working point at 18 conditional on intervention rate < 0.4" is the honest output; reporting "N* = 18" is precision theater.

The same techniques on this page — bootstrap intervals, conformal coverage, sensitivity sweeps — apply directly to N*. The distinction is that the underlying parameters are estimated with high uncertainty, so the band is wide. That width is the point. Pool Collab staffing recommendations should be expressed as ranges with documented sensitivity drivers, not as authoritative point estimates.

Probabilistic measurement of rebound elasticities

The Service Demand Rebound Model decomposes post-deflection demand recovery into three components — direct (15-35%), indirect (10-20%), and systemic (5-15%) rebound — each parameterized by an elasticity coefficient. These elasticities cannot be calibrated honestly from point estimates. The whole purpose of the SDRM is to quantify a behavioral response that is itself stochastic, time-varying, and confounded with concurrent business changes.

Calibrating SDRM rebound elasticities requires probabilistic measurement throughout: forecast the counterfactual no-deflection volume as a distribution, measure the deflected-state volume as a distribution, and infer the rebound elasticity as the distributional difference. The framework's empirical ranges (R_d 15-35%, R_i 10-20%, R_s 5-15%) are themselves probabilistic — they are quantile bands of the elasticity, not point estimates. Treating them as point estimates collapses the structure and reproduces the single-number planning failure the framework was built to replace.

Maturity Model Position

Probabilistic forecasting is the discipline that gates the upper WFM Labs Maturity Model™ levels. Level 2 — Foundational (Traditional WFM Excellence) operates on point forecasts with informal variance buffers. Level 3 — Progressive (Breaking the Monolith) introduces formal prediction intervals and the variance-harvesting loop that depends on them. Level 4 — Advanced (The Ecosystem Emerges) requires distributional outputs throughout the planning stack, since the Value-Based Planning Model cannot be operated on point inputs. Level 5 — Pioneering (Enterprise-Wide Intelligence) extends probabilistic forecasting to the enterprise data fabric — customer-behavior prediction, channel propensity, value-per-contact — with continuous calibration against live data. The mathematical content does not change across levels; the breadth of inputs and the rigor of calibration do.

References

- Hyndman, R. J., & Athanasopoulos, G. "Prediction intervals." Forecasting: Principles and Practice (Python edition). otexts.com/fpppy.

- Gneiting, T., & Katzfuss, M. "Probabilistic forecasting." Annual Review of Statistics and Its Application 1, 2014.

- Romano, Y., Patterson, E., & Candès, E. J. "Conformalized quantile regression." Advances in Neural Information Processing Systems 32, 2019.

- [1] Lango, T. (2026). Value-Based Models for Customer Operations.

See Also

- Forecasting Methods — overview; probabilistic forecasting layers on top of any method

- Exponential Smoothing — built-in Gaussian PIs from ETS state space models

- ARIMA Models — built-in Gaussian PIs from ARIMA state space models

- Regression for Forecasting — PIs from regression with appropriate error model

- Forecast Accuracy Metrics — point-forecast metrics; this page extends to probabilistic

- Variance Harvesting — the operational practice that depends on probabilistic forecasts

- WFM Ecosystem Architecture — Pillar 3 (Advanced Capacity Planning) and Risk-Aware Planning

- Intermittent Demand Forecasting — where probabilistic forecasting is most important and most challenging

- Hierarchical Forecasting — probabilistic forecasts can also be reconciled across hierarchies

- Value-Based Planning Model — distributional outputs feed the Level 4 multi-objective governance layer

- Service Demand Rebound Model — rebound elasticities require probabilistic measurement

- Cognitive Portfolio Model (N*) — N* is reported as a sensitivity band, not a point estimate

- Probabilistic Planning in WFM

- Statsmodels and Time Series Analysis

- Prophet and Automated Forecasting

- Staffing to Percentile vs Mean Forecast

Interactive tools

- Minimal Interval Variance (MIV) calculator — minimal-variance.wfmlabs.com. Computes the Poisson-derived statistical floor of forecast variance for any interval given weekly volume and operating hours. Adds a binomial-derived MIV for abandon rate. If your accuracy target is tighter than the MIV floor, you are chasing statistical noise that no method can eliminate. The natural starting point for setting forecast accuracy targets and for sizing prediction intervals against the irreducible component.
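The calculator's exact formulas are not given on this page, so the following is only a sketch of the general idea of a Poisson-derived floor, not a reproduction of the tool. It assumes arrivals are Poisson (variance equals the mean), that volume is spread evenly across intervals, and that abandons are binomial; the function names and parameters are hypothetical.

```python
import math

def poisson_interval_floor(weekly_volume, operating_hours_per_week, interval_minutes=30):
    """Irreducible per-interval noise if arrivals are Poisson and evenly spread."""
    intervals = operating_hours_per_week * 60 / interval_minutes
    mean_per_interval = weekly_volume / intervals  # flat arrival pattern (assumption)
    sd = math.sqrt(mean_per_interval)              # Poisson: variance equals the mean
    return {"mean": mean_per_interval, "sd": sd, "cv": sd / mean_per_interval}

def binomial_abandon_floor(abandon_rate, offered_contacts):
    """Standard deviation of an observed abandon rate under binomial sampling."""
    return math.sqrt(abandon_rate * (1 - abandon_rate) / offered_contacts)

# Hypothetical queue: 4,000 contacts per week over 50 operating hours.
floor = poisson_interval_floor(4000, 50, interval_minutes=30)
print(floor)
print(binomial_abandon_floor(0.05, floor["mean"]))
```

Under these assumptions, a half-hour interval averaging 40 contacts carries a coefficient of variation of about 16% from arrival randomness alone.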