Regression for Forecasting

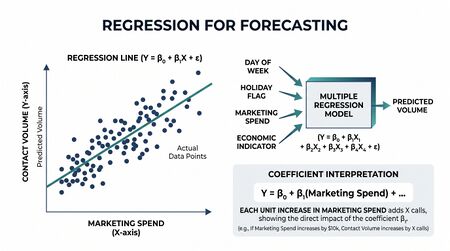

Regression for Forecasting is the family of methods that produces forecasts as a function of one or more explanatory variables. Where naive, ETS, and ARIMA are univariate methods (the forecast depends only on the series's own past values), regression methods incorporate external drivers — marketing spend, holiday calendars, promotional events, weather, economic indicators — that affect the series.

For workforce management, regression is the canonical method when contact volume responds to known business inputs that can be planned in advance. The campaign team knows the marketing spend three months out; the WFM forecast should incorporate that input rather than try to reconstruct it from volume history alone.

When to reach for regression

Regression earns its complexity when:

- Known business drivers exist. Marketing spend, promotional events, product launches, billing cycles, holiday calendars. If the driver can be enumerated and quantified, regression can use it.

- The driver-response relationship is stable. Last year's $1M ad spend produced predictable volume lift; this year's $1M ad spend will produce a similar lift. If the response curve is unstable or unknown, regression's coefficients are not reliable.

- Univariate methods miss the structural changes. If your ETS or ARIMA forecast misses every product launch by a wide margin, the launch is the missing variable.

- Capacity planning needs scenario modeling. "What if marketing spend is 1.5×?" is exactly the question regression answers — a univariate forecast cannot.

Regression earns its place alongside univariate methods, not instead of them. The regression captures the response to known drivers; an ARIMA error structure (see ARIMAX below) captures the autocorrelation regression alone misses.

Linear regression for time series

The basic form: the value at time $t$ is a linear combination of explanatory variables plus error.

$$y_t = \beta_0 + \beta_1 x_{1,t} + \beta_2 x_{2,t} + \dots + \beta_k x_{k,t} + \varepsilon_t$$

where $x_{1,t}, \dots, x_{k,t}$ are the values of the explanatory variables at time $t$, the $\beta_i$ are coefficients estimated from historical data, and $\varepsilon_t$ is error.

In WFM:

- $y_t$ = call volume in interval $t$

- $x_{1,t}$ = marketing spend that week

- $x_{2,t}$ = binary indicator for promotional event

- $x_{3,t}$ = day-of-year holiday flag

The regression estimates how much each driver contributes to volume. The forecast: plug in next period's planned values of $x_{1,t}, \dots, x_{k,t}$ and read off the predicted $y_t$.
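The fit-then-plug-in workflow can be sketched with ordinary least squares. This is a minimal illustration on synthetic data, not a production method: the variable names, the spend units, and the "true" coefficients used to generate the data are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 104  # two years of weekly observations (synthetic)

# Hypothetical drivers.
spend = rng.uniform(50, 150, n)                  # marketing spend, $ thousands
promo = rng.integers(0, 2, n)                    # 1 if a promotion ran that week
holiday = (rng.random(n) < 0.08).astype(float)   # 1 in holiday weeks

# Synthetic relationship, used only to generate example data.
volume = 5000 + 12.0 * spend + 800 * promo - 1500 * holiday + rng.normal(0, 100, n)

# Design matrix with a leading column of ones for the intercept.
X = np.column_stack([np.ones(n), spend, promo, holiday])
beta, *_ = np.linalg.lstsq(X, volume, rcond=None)

# Forecast: plug in next week's planned driver values
# (intercept, spend = 120, promotion running, no holiday).
x_next = np.array([1.0, 120.0, 1.0, 0.0])
forecast = float(x_next @ beta)
```

The estimated `beta` holds the intercept followed by one coefficient per driver, so each entry can be read as the volume contribution of a one-unit change in that driver.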

Common explanatory variables in WFM

Calendar variables

- Day of week (Monday=1, ..., Sunday=7) — usually represented as 6 dummy variables

- Month or quarter — for monthly/quarterly forecasts with seasonal effects

- Holiday flag — binary indicator for known holidays

- Day-after-holiday — often as important as the holiday itself in contact center data

- Trading days — number of business days in the period

- Distance to month-end / quarter-end — for billing-driven contact patterns
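Most of the calendar variables above can be derived from the date alone. The sketch below uses only the Python standard library; the `HOLIDAYS` set and the feature names are hypothetical and would be replaced by the operation's own holiday calendar and naming.

```python
from datetime import date, timedelta
import calendar

# Hypothetical holiday calendar for the example.
HOLIDAYS = {date(2024, 7, 4), date(2024, 12, 25)}

def calendar_features(d: date) -> dict:
    """Return calendar regressors for a single day."""
    features = {}
    # Six day-of-week dummies; Monday (weekday() == 0) is the reference level.
    for i, name in enumerate(["tue", "wed", "thu", "fri", "sat", "sun"], start=1):
        features[f"dow_{name}"] = int(d.weekday() == i)
    features["holiday"] = int(d in HOLIDAYS)
    # Flag the day immediately after a holiday.
    features["day_after_holiday"] = int((d - timedelta(days=1)) in HOLIDAYS)
    # Distance to month-end, in days (0 on the last day of the month).
    last_day = calendar.monthrange(d.year, d.month)[1]
    features["days_to_month_end"] = last_day - d.day
    return features

row = calendar_features(date(2024, 7, 5))
```

Because every feature is a function of the date, the same function produces the regressors for future periods, which is what makes calendar variables safe to use at forecast time.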

Business drivers

- Marketing spend — total or by channel

- Active customer count — for support volume modeling

- New customer signups — leading indicator for support volume in subsequent periods

- Product launch flag — binary indicator for known launches

- Billing cycle position — bills sent / due dates

- Promotion / campaign flags — known events expected to drive volume

Lagged variables

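A driver's effect may be delayed. Marketing spend in week $t$ may lift volume in weeks $t$ through $t+K$, for some maximum lag $K$:

$$y_t = \beta_0 + \gamma_0 x_t + \gamma_1 x_{t-1} + \dots + \gamma_K x_{t-K} + \varepsilon_t$$

Lagged regression captures distributed-lag effects: the response curve over time (the $\gamma_j$), not just the immediate response.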
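A distributed-lag regression needs one column per lag of the driver. The following is a rough sketch with synthetic data; the helper name `lagged_design`, the maximum lag of 3, and the lag weights used to generate the data are assumptions for illustration only.

```python
import numpy as np

def lagged_design(x: np.ndarray, max_lag: int) -> np.ndarray:
    """Columns are x_t, x_{t-1}, ..., x_{t-max_lag}.

    The first max_lag observations are dropped because their lags are unknown.
    """
    n = len(x)
    return np.column_stack([x[max_lag - j : n - j] for j in range(max_lag + 1)])

rng = np.random.default_rng(1)
n, max_lag = 156, 3
spend = rng.uniform(50, 150, n)
true_lag_weights = np.array([4.0, 6.0, 3.0, 1.0])  # synthetic response curve

L = lagged_design(spend, max_lag)                  # shape (n - max_lag, max_lag + 1)
volume = 5000 + L @ true_lag_weights + rng.normal(0, 50, n - max_lag)

# Fit intercept plus one coefficient per lag.
X = np.column_stack([np.ones(len(L)), L])
beta, *_ = np.linalg.lstsq(X, volume, rcond=None)
lag_weights = beta[1:]  # estimated response at lags 0..max_lag
```

Plotting `lag_weights` against the lag index gives the estimated response curve; the choice of maximum lag is a modeling decision that is best checked out of sample.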

ARIMAX — regression with ARIMA errors

Pure linear regression assumes the errors are uncorrelated. In time series, they almost never are: residuals after fitting a regression typically show autocorrelation (the unmodeled part of the series correlates with itself over time).

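ARIMAX handles this by fitting a regression and modeling the residuals as an ARIMA process simultaneously:

$$y_t = \beta_0 + \beta_1 x_{1,t} + \dots + \beta_k x_{k,t} + \eta_t$$

where $\eta_t$ follows ARIMA(p,d,q):

$$\phi(B)(1 - B)^d \eta_t = \theta(B)\varepsilon_t$$

Here $B$ is the backshift operator, $\phi(B)$ and $\theta(B)$ are the autoregressive and moving-average polynomials of orders $p$ and $q$, and $\varepsilon_t$ is white noise.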

This is the standard approach for WFM forecasts that combine known drivers with autocorrelation. Modern statistical software fits these models in one step: R's `forecast` package (`auto.arima` with the `xreg` argument) can also select the residual ARIMA orders (p,d,q) automatically, while Python's `statsmodels` (`SARIMAX` with the `exog` argument) fits the model for the orders you specify.

See ARIMA Models for the underlying ARIMA mechanics. ARIMAX is essentially "regression + ARIMA on the residuals" with simultaneous parameter estimation.

Dummy variables for events

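Most WFM regression uses dummy variables (binary 0/1 indicators) for events:

$$d_t = \begin{cases} 1 & \text{if the event occurs in period } t \\ 0 & \text{otherwise} \end{cases}$$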

Common WFM dummy structures:

- One dummy per major holiday — Thanksgiving, Christmas, New Year's, Independence Day, etc., learned individually because their effects differ

- Day-of-week dummies — typically 6 dummies for Tue-Sun with Mon as the reference

- Promotion-specific dummies — one per major campaign

A subtle point: don't pool dummies that have heterogeneous effects. A single "holiday" dummy averages Christmas (massive volume drop) with Memorial Day (modest drop), producing a coefficient that understates the Christmas drop (forecast too high) and overstates the Memorial Day drop (forecast too low).
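The pooling effect is easy to reproduce on synthetic data. In the sketch below, the holiday positions and the drop sizes (6,000 and 1,500 contacts) are invented for illustration; the point is only the comparison between one pooled dummy and two separate ones.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 3 * 365  # three years of daily data (synthetic)

# Hypothetical day indices for each holiday in the three years.
christmas = np.zeros(n)
christmas[[358, 723, 1088]] = 1.0
memorial = np.zeros(n)
memorial[[146, 511, 876]] = 1.0

# Synthetic volume: large Christmas drop, modest Memorial Day drop.
volume = 10000 - 6000 * christmas - 1500 * memorial + rng.normal(0, 200, n)

def fit(X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Ordinary least squares coefficients."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

ones = np.ones(n)
pooled = fit(np.column_stack([ones, christmas + memorial]), volume)
separate = fit(np.column_stack([ones, christmas, memorial]), volume)

pooled_effect = pooled[1]                           # one shared holiday coefficient
christmas_effect, memorial_effect = separate[1], separate[2]
```

The pooled coefficient lands roughly midway between the two true effects, so it fits neither holiday well, while the separate dummies recover each drop individually.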

Common WFM pitfalls

- Multicollinearity. Explanatory variables that are correlated with each other (marketing spend and active customer count, both rising over time) produce unstable coefficients. Drop one or use regularization.

- Look-ahead bias. Including a variable whose value is unknown at forecast time. If you forecast next month's volume, you cannot include next month's actual marketing spend; you can only include planned or budgeted spend.

- Treating dummies as continuous. A holiday dummy that equals 1 occasionally and 0 the rest of the time is not a continuous variable. Don't transform it (e.g., logging it) without thinking carefully.

- Ignoring residual autocorrelation. Plain linear regression on time series typically leaves strongly autocorrelated residuals, which makes the coefficient standard errors and prediction intervals unreliable. ARIMAX or generalized least squares with autocorrelated errors fixes this.

- Overfitting with too many dummies. One dummy per holiday + one per promotion + one per day of week + ... can produce a model with more parameters than reliable signal. Use cross-validation to verify the additions actually improve out-of-sample performance.
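One common way to screen for the multicollinearity pitfall is the variance inflation factor (VIF), which is not named in the text above and is offered here as one possible check. The sketch computes it with NumPy on synthetic drivers; the driver names and the trend parameters are assumptions for the example.

```python
import numpy as np

def vif(X: np.ndarray) -> np.ndarray:
    """Variance inflation factor per column of X (X excludes the intercept).

    Each column is regressed on the others; VIF = 1 / (1 - R^2).
    """
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        y = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(others, y, rcond=None)
        resid = y - others @ beta
        r2 = 1.0 - resid.var() / y.var()
        out[j] = 1.0 / (1.0 - r2)
    return out

rng = np.random.default_rng(4)
n = 104
t = np.arange(n)
spend = 50 + 0.5 * t + rng.normal(0, 3, n)        # rises over time
customers = 1000 + 10 * t + rng.normal(0, 50, n)  # also rises over time
promo = rng.integers(0, 2, n).astype(float)       # unrelated to the trend

vifs = vif(np.column_stack([spend, customers, promo]))
```

Here the two trending drivers get high VIFs while the promotion flag stays near 1. A frequently quoted rule of thumb treats values above roughly 5 to 10 as a warning sign, though the threshold is a convention rather than a hard rule.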

Diagnostic checks

After fitting a regression model:

- Residual ACF and PACF. If the residuals show autocorrelation, switch to ARIMAX or include lag terms.

- Coefficient significance. Coefficients with p-values well above 0.05 are not reliably distinguishable from zero; consider dropping them, especially if the variable was added speculatively.

- Coefficient signs. Marketing spend coefficient should be positive (more spend → more volume); a negative or unexpected sign signals multicollinearity, look-ahead bias, or model misspecification.

- Out-of-sample accuracy. Hold out a recent period; verify the regression's accuracy on that period using Forecast Accuracy Metrics. If it doesn't beat the simpler ETS/ARIMA on out-of-sample data, the regression's complexity is not justified.
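The residual autocorrelation check can be sketched without a plotting library. The example below computes the sample ACF and flags lags outside the usual approximate 95% bounds of $\pm 1.96/\sqrt{n}$; the two residual series are synthetic stand-ins for the residuals of a fitted regression.

```python
import numpy as np

def acf(resid: np.ndarray, max_lag: int) -> np.ndarray:
    """Sample autocorrelation of residuals at lags 1..max_lag."""
    r = resid - resid.mean()
    denom = np.dot(r, r)
    return np.array([np.dot(r[k:], r[:-k]) / denom for k in range(1, max_lag + 1)])

rng = np.random.default_rng(5)
n = 300
white = rng.normal(0, 1, n)   # what well-behaved residuals look like

# Synthetic autocorrelated residuals: AR(1) with coefficient 0.6.
ar1 = np.zeros(n)
for t in range(1, n):
    ar1[t] = 0.6 * ar1[t - 1] + white[t]

bound = 1.96 / np.sqrt(n)     # approximate 95% bounds for white noise
rho = acf(ar1, 10)
flagged_lags = [k + 1 for k, value in enumerate(rho) if abs(value) > bound]
```

A non-empty `flagged_lags` at short lags, as the AR(1) series produces here, is the signal to move to ARIMAX or add lag terms.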

Connection to capacity planning

Regression for forecasting is one of the practitioner-level capabilities that distinguishes Pillar 3 (Advanced Capacity Planning) from traditional WFM software. Most WFM platforms support univariate forecasting well; few support meaningful regression with ARIMA errors. When the capacity plan must respond to known business drivers, regression-capable tooling is the differentiator.

The output of a regression-based forecast is also more useful for scenario planning: "what FTE do we need under the high marketing spend scenario versus the low?" can be answered directly by varying the input values and re-deriving the forecast.
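Scenario planning then amounts to evaluating the fitted equation at different planned inputs. The sketch below uses hypothetical coefficients and spend levels (none of them come from a real fit) and stops at forecast volume; converting volume to FTE is a separate step.

```python
# Hypothetical fitted coefficients: intercept, spend ($ thousands), promotion flag.
coef = {"intercept": 5000.0, "spend": 12.0, "promo": 800.0}

def predict_volume(spend: float, promo: int) -> float:
    """Evaluate the fitted linear equation for one period."""
    return coef["intercept"] + coef["spend"] * spend + coef["promo"] * promo

# Hypothetical spend scenarios; "high" is 1.5x the base plan.
scenarios = {"low": 80.0, "base": 100.0, "high": 150.0}
forecasts = {name: predict_volume(s, promo=0) for name, s in scenarios.items()}

# Volume difference the capacity plan must absorb between scenarios.
lift_high_vs_low = forecasts["high"] - forecasts["low"]
```

Each scenario forecast can be passed to the downstream demand calculation unchanged, which keeps the scenario logic in the inputs rather than in manual overrides.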

Maturity Model Position

In the WFM Labs Maturity Model™, regression-based forecasting (and especially ARIMAX) is a Level 3+ capability — most enterprise WFM platforms support univariate methods well but offer weak support for regression with explanatory variables. Adopting regression-capable forecasting is one of the moves that distinguishes Pillar 3 (Advanced Capacity Planning) tooling from the WFM core.

- Level 1 — Initial (Emerging Operations) — known business drivers are not modeled; their effects are absorbed into judgmental adjustments.

- Level 2 — Foundational (Traditional WFM Excellence) — calendar dummies (day-of-week, holiday) may be in use inside the WFM platform, but marketing spend, billing cycles, and product launches are handled by manual override rather than structured regression.

- Level 3 — Progressive (Breaking the Monolith) — regression with calendar variables and business drivers is the production method when drivers exist; ARIMAX captures residual autocorrelation; coefficients are inspected for sign, significance, and multicollinearity.

- Level 4 — Advanced (The Ecosystem Emerges) — regression-based forecasts feed scenario planning ("FTE under high vs low marketing spend") that would be impossible with univariate methods; outputs feed distributional capacity plans.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — regression specifications are continuously refit by the analytical platform as driver-response relationships shift; new drivers are tested automatically; the practitioner role is hypothesis design rather than model fitting.

Regression for forecasting is a clear next-gen practice for any WFM team whose volume responds to plannable business inputs — its absence at Level 2 leaves significant accuracy on the table.

References

- Hyndman, R. J., & Athanasopoulos, G. "Time series regression models." Forecasting: Principles and Practice (Python edition). otexts.com/fpppy.

See Also

- Forecasting Methods — overview of all forecasting families

- ARIMA Models — the ARIMA component of ARIMAX

- Exponential Smoothing — alternative when no useful explanatory variables exist

- Forecast Accuracy Metrics — how to evaluate whether the regression earns its complexity

- Demand calculation — calculator that consumes the regression's forecast output

- WFM Ecosystem Architecture — Pillar 3 (Advanced Capacity Planning)