Reporting and Analytics Framework

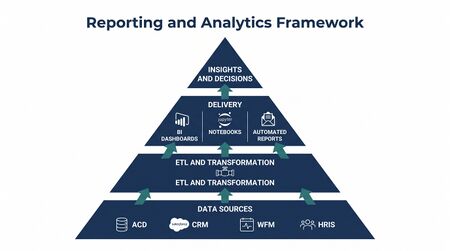

A Reporting and Analytics Framework in workforce management (WFM) is a structured architecture of data sources, transformation processes, delivery mechanisms, and governance policies that converts raw operational data into actionable intelligence for contact center and workforce planning decisions. Unlike a simple collection of dashboards, a reporting framework defines how data flows from source systems to decision-makers, at what cadence, and at what level of aggregation, for audiences ranging from intraday operations staff to enterprise executives. The discipline spans traditional graphical business intelligence (BI) tools, programmatic data pipelines, and self-service analytics platforms. As organizations mature in their use of workforce data, the emphasis shifts from reactive "pull reports", in which analysts generate files on request, toward proactive "push insights", in which automated systems surface anomalies and forecasts before stakeholders ask.[1] A coherent framework is foundational to reliable Performance Management, Forecast Accuracy Metrics tracking, and cost transparency as described in Workforce Cost Modeling.

Core Purpose and Scope

WFM reporting exists to answer a specific class of operational and strategic questions: Is staffing adequate today? Did the forecast hold last week? What is driving unit labor cost this quarter? Are adherence levels consistent across sites? These questions differ in time horizon, granularity, and audience, which is why a single report or dashboard cannot serve all purposes. A framework approach acknowledges this diversity and assigns each question to the appropriate reporting layer.

The scope of WFM reporting overlaps with, but is distinct from, broader contact center analytics. Quality management metrics (see Quality Management) and customer experience indicators (see Customer Experience Management) feed into the same data infrastructure but are typically governed by different teams. A mature framework defines clear ownership at the data layer, preventing the common failure mode in which the WFM platform, the BI tool, and the CRM all report different handle time figures with no mechanism to adjudicate the discrepancy.

The operational intelligence produced by a reporting framework supports three decision classes:

- Intraday decisions — real-time staffing adjustments, break management, overflow routing

- Tactical decisions — schedule optimization for the next one to four weeks, shrinkage budgeting, training scheduling

- Strategic decisions — headcount planning, site expansion or consolidation, technology investment, vendor contract evaluation

Each class has different latency requirements, accuracy tolerances, and audience profiles, all of which inform framework design.

Data Architecture: Sources and Flows

WFM analytics draws from several distinct source systems, each with its own data model, refresh cadence, and reliability characteristics.

Source Systems

- Automatic Call Distributor (ACD) / Contact Routing Platform: The primary source for volume, handle time, service level, and abandonment data. Modern cloud contact center platforms (e.g., Amazon Connect, Genesys Cloud, Five9) expose this data via real-time APIs and historical reporting APIs in addition to traditional bulk exports. ACD data is typically the ground truth for Service Level and Average Handle Time calculations.

- WFM Platform: Systems such as NICE WFM, Verint WFM, and Calabrio provide forecasts, schedules, real-time adherence data, and workforce cost projections. WFM platforms often have embedded reporting modules adequate for operational use but limited in cross-system join capability.

- Customer Relationship Management (CRM): Contains case, ticket, and customer interaction data that contextualizes contact volume, which is useful for First Contact Resolution analysis and for linking workforce outcomes to customer experience.

- Human Resources Information System (HRIS): Provides headcount, employment classification, leave balances, and compensation data required for cost modeling and compliance reporting.

- Quality and Workforce Engagement Management (WEM) Platforms: Contribute quality scores, coaching records, and agent development data that intersect with performance and adherence reporting.

ETL Patterns

Extract, Transform, Load (ETL) — or increasingly Extract, Load, Transform (ELT) — pipelines move data from source systems into an analytics layer. In contact center environments, several patterns are common:

- Scheduled batch exports: The WFM platform or ACD generates flat files (CSV, Excel) on a nightly or hourly schedule, which are ingested into a data warehouse. Simple to implement; introduces latency; fragile when source formats change.

- Database-to-database replication: Direct queries against operational databases using tools such as Fivetran, Airbyte, or custom SQL scripts. Lower latency; requires database access credentials and schema knowledge.

- API-driven ingestion: REST or GraphQL API calls to cloud platforms, storing JSON responses and transforming downstream. Supports near-real-time pipelines; complexity scales with the number of source APIs.

- Streaming ingestion: For intraday dashboards, event-stream architectures (Apache Kafka, AWS Kinesis) deliver sub-minute latency but require significantly more engineering investment.

Data transformation — normalization of time zones, reconciliation of interval boundaries, deduplication, and enrichment — is where most data quality failures originate. A framework must specify transformation rules explicitly rather than relying on individual analyst judgment each time a report is produced.
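The transformation rules named above can be written down once as code rather than reapplied by hand. The following is a minimal sketch using only the Python standard library. The field names (`contact_id`, `answered_at`), the 15-minute interval, and the "keep the first occurrence" deduplication rule are illustrative assumptions, not the conventions of any particular ACD export.

```python
from datetime import datetime, timezone

INTERVAL_MINUTES = 15  # assumed reporting interval; must divide 60 evenly


def to_utc(ts: str) -> datetime:
    """Parse an ISO 8601 timestamp that carries an explicit offset and convert it to UTC."""
    parsed = datetime.fromisoformat(ts)
    if parsed.tzinfo is None:
        raise ValueError(f"timestamp has no UTC offset: {ts!r}")
    return parsed.astimezone(timezone.utc)


def interval_start(ts: datetime, minutes: int = INTERVAL_MINUTES) -> datetime:
    """Floor a timestamp to the start of its reporting interval."""
    floored_minute = ts.minute - ts.minute % minutes
    return ts.replace(minute=floored_minute, second=0, microsecond=0)


def normalize(records: list[dict]) -> list[dict]:
    """Convert to UTC, assign interval boundaries, and drop repeated contact IDs."""
    seen: set[str] = set()
    cleaned = []
    for rec in records:
        if rec["contact_id"] in seen:
            continue  # assumed rule: keep the first occurrence only
        seen.add(rec["contact_id"])
        answered_utc = to_utc(rec["answered_at"])
        cleaned.append({
            "contact_id": rec["contact_id"],
            "answered_utc": answered_utc,
            "interval_start": interval_start(answered_utc),
        })
    return cleaned


# Hypothetical export rows: two sites in different time zones plus one duplicate row.
raw = [
    {"contact_id": "c-1", "answered_at": "2024-03-04T09:07:30-05:00"},
    {"contact_id": "c-1", "answered_at": "2024-03-04T09:07:30-05:00"},
    {"contact_id": "c-2", "answered_at": "2024-03-04T14:29:59+00:00"},
]
rows = normalize(raw)
```

Rejecting timestamps that carry no offset, instead of guessing a time zone, is a deliberate choice in this sketch: a silent guess is the kind of individual judgment the framework is meant to remove.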

Data Quality Considerations

Contact center data is structurally noisy. Phantom contacts, misrouted calls logged to the wrong queues, system outages that create artificial zero-volume intervals, and clock skew between ACD and WFM timestamps are common. A production reporting framework requires data validation rules at the ingestion layer from the outset, not as an afterthought, along with clear documentation of known data quality exceptions so that report consumers understand how much confidence to place in published figures.[2]
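One way to apply validation at ingestion is to split incoming interval rows into accepted and quarantined sets, with the reason recorded on each quarantined row. The sketch below assumes a hypothetical row shape (`offered`, `abandoned`, `handle_seconds`, `queue_open`). The three rules are examples only, and a real rule set would be agreed with the data owners.

```python
def validate_interval(row: dict) -> list[str]:
    """Return the issues found in one interval row; an empty list means it passed."""
    issues = []
    if row["offered"] < 0 or row["abandoned"] < 0 or row["handle_seconds"] < 0:
        issues.append("negative count or duration")
    if row["abandoned"] > row["offered"]:
        issues.append("abandoned exceeds offered")
    if row["queue_open"] and row["offered"] == 0:
        issues.append("zero volume while queue was open (possible outage)")
    return issues


def partition(rows: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split rows into (accepted, quarantined); quarantined rows carry their issues."""
    accepted, quarantined = [], []
    for row in rows:
        issues = validate_interval(row)
        if issues:
            quarantined.append({**row, "issues": issues})
        else:
            accepted.append(row)
    return accepted, quarantined


# Hypothetical interval rows for a single queue.
sample = [
    {"interval": "09:00", "offered": 42, "abandoned": 3, "handle_seconds": 11800, "queue_open": True},
    {"interval": "09:15", "offered": 0, "abandoned": 0, "handle_seconds": 0, "queue_open": True},
    {"interval": "09:30", "offered": 5, "abandoned": 9, "handle_seconds": 900, "queue_open": True},
]
accepted, quarantined = partition(sample)
```

Quarantining rows, rather than dropping them, keeps the exceptions visible, which supports the documentation of known data quality issues. A zero-volume interval on a low-traffic queue can be legitimate, so that rule flags a row for review and does not prove an outage.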

Traditional Reporting Stack

The traditional reporting stack for WFM organizations combines platform-native reports with one or more BI layers.

Platform-Native Reports

WFM platforms ship with pre-built report templates covering the most common operational views: schedule adherence, intraday staffing versus forecast, schedule efficiency, and planned versus actual headcount. These reports are adequate for day-to-day operational use and require minimal technical overhead. Their limitations include restricted join capability (they can only access data within the WFM platform), limited visualization flexibility, and export formats (usually CSV or Excel) that require manual effort to incorporate into executive-level communications.

Business Intelligence Tools

BI platforms such as Microsoft Power BI, Tableau, Looker (now part of Google Cloud), and Qlik Sense sit atop the data warehouse or connect directly to source systems to provide interactive visualization, cross-system joins, and scheduled report distribution. For mid-to-large WFM teams, a dedicated BI layer is the standard approach to delivering consistent reporting across audiences.

Power BI is prevalent in Microsoft-ecosystem organizations because its licensing is bundled with some Microsoft 365 plans and it integrates natively with Azure data services. Tableau has historically dominated in organizations with dedicated analytics teams due to its flexibility and calculation depth. Looker, positioned as a semantic-layer BI tool, enforces metric definitions in a centralized data model (LookML), addressing the "metric drift" problem where different teams calculate the same KPI differently.

Regardless of platform, BI-layer reporting shares a common constraint: it reflects the data model of the underlying warehouse. When source data is incomplete or inconsistently structured, no BI tool compensates automatically.

Embedded Reporting in the WFM Stack

Some contact center technology vendors provide embedded analytics tightly integrated with their operational tools — for example, real-time dashboards within WFM scheduling interfaces, or supervisor views within the ACD routing console. These are appropriate for role-specific operational use but are rarely the right tool for cross-functional or executive reporting.

Modern and Programmatic Reporting Stack

The increasing availability of cloud APIs, open-source data tooling, and computational notebook environments has expanded the WFM analytics toolkit beyond GUI-based BI tools.

Python and pandas for Ad-Hoc Analysis

Python, with the pandas library for tabular data manipulation, has become a standard tool for ad-hoc WFM analysis. Analysts use Python to perform calculations that would require complex BI formulas or manual spreadsheet work: Erlang C staffing model runs, Monte Carlo forecast uncertainty simulation, cohort analysis of agent attrition, and multi-variable regression on schedule adherence drivers. Python integrates directly with REST APIs, enabling direct data pulls from ACD and WFM platforms without intermediary exports.
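As an illustration of the first calculation listed, the sketch below implements the textbook Erlang C model in plain Python. It builds the waiting probability from the Erlang B recursion, which avoids large factorials. The function names and example inputs are illustrative, and the model's usual simplifying assumptions apply (Poisson arrivals, exponential handle times, no abandonment).

```python
import math


def erlang_c_wait_probability(traffic: float, agents: int) -> float:
    """Probability that a contact has to queue, for offered load `traffic` in erlangs."""
    if agents <= traffic:
        return 1.0  # unstable queue: every contact waits
    blocking = 1.0  # Erlang B with zero servers
    for n in range(1, agents + 1):
        blocking = traffic * blocking / (n + traffic * blocking)
    return agents * blocking / (agents - traffic * (1.0 - blocking))


def service_level(traffic: float, agents: int, aht_seconds: float, threshold_seconds: float) -> float:
    """Fraction of contacts answered within the threshold under Erlang C."""
    if agents <= traffic:
        return 0.0
    p_wait = erlang_c_wait_probability(traffic, agents)
    return 1.0 - p_wait * math.exp(-(agents - traffic) * threshold_seconds / aht_seconds)


def agents_required(contacts: float, interval_seconds: float, aht_seconds: float,
                    threshold_seconds: float, target: float) -> int:
    """Smallest agent count whose modeled service level meets the target."""
    if not 0.0 < target < 1.0:
        raise ValueError("target must be strictly between 0 and 1")
    traffic = contacts * aht_seconds / interval_seconds  # offered load in erlangs
    agents = math.floor(traffic) + 1
    while service_level(traffic, agents, aht_seconds, threshold_seconds) < target:
        agents += 1
    return agents


# Example inputs: 100 contacts in a 30-minute interval, 180 s AHT, 80% answered in 20 s.
needed = agents_required(contacts=100, interval_seconds=1800, aht_seconds=180,
                         threshold_seconds=20, target=0.80)
```

The result is a count of agents on the phones. Shrinkage and occupancy caps would be applied on top of it and are outside this sketch.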

The tradeoff is reproducibility and accessibility. A Python script run locally by one analyst produces results others cannot easily inspect or verify. Jupyter notebooks (discussed below) address the reproducibility concern; documentation and version control address the accessibility concern.

Jupyter Notebooks for Reproducible Analysis

Jupyter notebooks combine code, prose documentation, and rendered output (tables, charts) in a single document that can be re-executed against new data. In WFM analytics, notebooks serve two distinct use cases:

- Exploratory analysis: An analyst investigates a specific question — why did service level degrade in week 34? — building up analysis cells interactively. The notebook captures the full analytical narrative alongside the code.

- Scheduled production reports: Tools such as Papermill (parameterized notebook execution) and nbconvert (notebook-to-HTML or PDF rendering) allow notebooks to function as scheduled report generators, producing formatted reports on a cron schedule without manual intervention.
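A scheduled production run of this kind might be sketched as follows. The notebook path, output folder, and parameter names are hypothetical. The sketch uses Papermill's `execute_notebook` function and the `jupyter nbconvert --to html` command, so Papermill and nbconvert must be installed, and the template notebook needs a cell tagged `parameters` for the injected values to take effect.

```python
import subprocess
from datetime import date
from pathlib import Path

TEMPLATE = Path("reports/weekly_service_level.ipynb")  # hypothetical template notebook
OUTPUT_DIR = Path("reports/out")                       # hypothetical output folder


def build_run(report_date: date) -> dict:
    """Describe one parameterized run: input notebook, dated output, parameters."""
    iso_year, iso_week, _ = report_date.isocalendar()
    output = OUTPUT_DIR / f"weekly_service_level_{iso_year}-W{iso_week:02d}.ipynb"
    return {
        "input_path": str(TEMPLATE),
        "output_path": str(output),
        "parameters": {"iso_year": iso_year, "iso_week": iso_week},
    }


def run_report(run: dict) -> None:
    """Execute the notebook with Papermill, then render the result to HTML."""
    import papermill as pm  # third-party; imported here so planning a run needs no install

    OUTPUT_DIR.mkdir(parents=True, exist_ok=True)
    pm.execute_notebook(run["input_path"], run["output_path"], parameters=run["parameters"])
    subprocess.run(["jupyter", "nbconvert", "--to", "html", run["output_path"]], check=True)


# Plan the run for the week containing 19 August 2024; a scheduler would then call run_report(run).
run = build_run(date(2024, 8, 19))
```

Writing each run to a dated output file leaves the executed notebooks behind as a record of what each report showed and which parameters produced it.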

Notebooks stored in version control (Git) provide an audit trail for analytical decisions — a significant advantage over Excel-based ad-hoc analysis where formula changes leave no history.

SQL-Based Data Pipelines and dbt

SQL remains the primary language for data transformation in modern analytics stacks. dbt (data build tool) structures SQL transformations as a directed acyclic graph (DAG) of models with built-in testing, documentation, and lineage visualization. For WFM teams that have moved data into a cloud warehouse (Snowflake, BigQuery, Redshift) or an embedded analytical engine such as DuckDB, dbt provides a disciplined approach to defining and versioning the metrics that feed BI dashboards and scheduled reports.

A dbt model for, say, daily Adherence and Conformance by team encodes the calculation logic once, tests it against known edge cases, and ensures every downstream consumer — whether a Power BI dashboard, a Jupyter notebook, or a Python script — reads from the same computed table rather than implementing the calculation independently.
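The "define once, read everywhere" idea can be shown without dbt. In the sketch below an in-memory SQLite view stands in for a dbt model, and a small Python function stands in for any downstream consumer. The table name, column names, and the adherence definition (minutes in adherence divided by scheduled minutes) are assumptions for the example, not a definition taken from dbt or from any WFM platform.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE agent_day (
    work_date TEXT,
    team TEXT,
    agent_id TEXT,
    scheduled_minutes REAL,
    in_adherence_minutes REAL
);

INSERT INTO agent_day VALUES
    ('2024-03-04', 'billing', 'a1', 480, 456),
    ('2024-03-04', 'billing', 'a2', 480, 432),
    ('2024-03-04', 'support', 'a3', 450, 450);

-- The metric is defined once, here. Consumers query the view, not the raw table.
CREATE VIEW daily_team_adherence AS
SELECT work_date,
       team,
       SUM(in_adherence_minutes) / SUM(scheduled_minutes) AS adherence
FROM agent_day
WHERE scheduled_minutes > 0
GROUP BY work_date, team;
""")


def team_adherence(connection: sqlite3.Connection, work_date: str) -> dict:
    """A downstream consumer: reads the shared view instead of recomputing the metric."""
    rows = connection.execute(
        "SELECT team, adherence FROM daily_team_adherence WHERE work_date = ? ORDER BY team",
        (work_date,),
    ).fetchall()
    return dict(rows)


result = team_adherence(conn, "2024-03-04")
```

In a dbt project the body of the view would live in a model file with tests attached. The point of the sketch is only that dashboards, notebooks, and scripts all read the same computed relation.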

API-Driven Report Generation

Cloud WFM and ACD platforms expose programmatic APIs that allow reports to be generated, fetched, and distributed without human interaction with the platform's UI. An organization running NICE WFM can script nightly pulls of schedule adherence data via the NICE API, join them with ACD data from the Genesys Cloud API, and push the result to a Slack channel or email distribution list — all without opening a browser. This pattern reduces analyst manual effort and eliminates the latency introduced by waiting for a human to run and distribute a report.
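The join-and-distribute half of such a script might look like the sketch below. The vendor API calls are left out on purpose, because endpoints and authentication differ by platform. The two input lists stand in for already-fetched responses, and their field names are hypothetical. The delivery step uses a Slack incoming webhook, which accepts a JSON body with a `text` field.

```python
import json
import urllib.request


def join_by_agent(adherence_rows: list[dict], acd_rows: list[dict]) -> list[dict]:
    """Inner-join two lists of dicts on agent_id."""
    acd_by_agent = {row["agent_id"]: row for row in acd_rows}
    joined = []
    for row in adherence_rows:
        match = acd_by_agent.get(row["agent_id"])
        if match is not None:
            joined.append({**row, **match})
    return joined


def format_message(rows: list[dict], report_date: str) -> str:
    """Render the joined rows as a plain-text summary."""
    lines = [f"Adherence and handle time for {report_date}"]
    for row in sorted(rows, key=lambda r: r["agent_id"]):
        lines.append(
            f"{row['agent_id']}: adherence {row['adherence']:.1%}, AHT {row['aht_seconds']:.0f}s"
        )
    return "\n".join(lines)


def post_to_slack(webhook_url: str, text: str) -> None:
    """Send a message to a Slack incoming webhook."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps({"text": text}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        response.read()


# Stand-ins for data already pulled from the WFM and ACD APIs (hypothetical shapes).
wfm_adherence = [{"agent_id": "a1", "adherence": 0.90}, {"agent_id": "a2", "adherence": 0.80}]
acd_handle_time = [{"agent_id": "a1", "aht_seconds": 312.4}]

message = format_message(join_by_agent(wfm_adherence, acd_handle_time), "2024-03-04")
# A scheduled job would finish with: post_to_slack(webhook_url, message)
```

The inner join silently drops agents that appear in only one system. A production version would probably report those mismatches, since they often point to identity-mapping problems between platforms.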

Report Types by Cadence and Audience

A complete reporting framework serves multiple time horizons simultaneously.

Real-Time Dashboards

Wallboard displays and supervisor desktop views provide interval-level (typically 15- or 30-minute) data on current staffing levels, queue depth, service level, and agent states. Data latency is typically under two minutes from ACD. The audience is intraday operations staff: team leads, WFM real-time analysts, and operations supervisors making immediate staffing decisions. See WFM KPI Hierarchy and Reporting Cadence for guidance on which metrics belong at this layer.

Daily Scorecards

Produced after the prior day's data is finalized (typically early morning), daily scorecards summarize key performance indicators for team leads and site managers: service level attainment versus target, occupancy, shrinkage, adherence, and handle time versus forecast. Format is typically tabular — a structured view by team or queue — rather than narrative.
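A scorecard row of this kind reduces to a few ratios compared against targets. The sketch below uses one common definition of each metric: service level as contacts answered within threshold over contacts offered, and occupancy as handle time over handle time plus available time. Definitions vary between operations, so the formulas, field names, and targets here are assumptions.

```python
from typing import Optional


def service_level(answered_within_threshold: int, offered: int) -> Optional[float]:
    """Share of offered contacts answered within the threshold (one common variant)."""
    return answered_within_threshold / offered if offered else None


def occupancy(handle_seconds: float, available_seconds: float) -> Optional[float]:
    """Share of logged-in, work-ready time spent handling contacts."""
    total = handle_seconds + available_seconds
    return handle_seconds / total if total else None


def scorecard_row(team: str, actuals: dict, targets: dict) -> dict:
    """Build one tabular row: each KPI plus its variance to target."""
    row = {"team": team}
    for kpi, actual in actuals.items():
        row[kpi] = actual
        row[f"{kpi}_vs_target"] = None if actual is None else round(actual - targets[kpi], 4)
    return row


# Hypothetical finalized totals for one team on the prior day.
targets = {"service_level": 0.80, "occupancy": 0.80}
actuals = {
    "service_level": service_level(answered_within_threshold=332, offered=400),
    "occupancy": occupancy(handle_seconds=90_000, available_seconds=30_000),
}
row = scorecard_row("billing", actuals, targets)
```

Returning `None` for an undefined ratio, rather than zero, keeps a closed queue from showing up on the scorecard as a missed target.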

Weekly Summaries

Aggregated to the week level, weekly summaries support trend analysis across the forecast cycle. They are appropriate for WFM analysts, operations managers, and site directors. Weekly cadence aligns with the primary scheduling cycle in most contact center operations.

Monthly Business Reviews

Monthly packages consolidate operational performance (service level trends, cost per contact, headcount versus plan) with quality and customer experience data for director and VP-level audiences. These reports require more narrative context than daily or weekly scorecards — explaining variance, identifying root cause, and connecting workforce metrics to business outcomes.

Quarterly Strategic Reviews

Long-horizon views of workforce productivity, cost trends, attrition, and capacity planning support C-suite and board-level conversations. At this cadence, individual KPIs matter less than trajectory, comparative benchmarks, and forward-looking projections. Executive audiences rarely need — and are typically harmed by — the operational metric detail appropriate for team leads.

Ad-Hoc Deep Dives

Request-driven analyses that investigate specific questions outside the normal reporting cadence. Examples: root cause analysis of a service level failure during a peak period, impact assessment of a new routing change on handle time, or financial modeling of a proposed schedule change. Ad-hoc work is where programmatic tools (Python, Jupyter, SQL) most clearly outperform GUI BI tools in speed and analytical depth.

The Shift from "Pull Reports" to "Push Insights"

In early-stage WFM organizations, reporting is largely reactive: a manager emails the analytics team requesting a specific report, an analyst pulls data and produces an Excel file, and the file is distributed hours or days after the question arose. This "pull" model is labor-intensive, slow, and depends on the analyst correctly interpreting the manager's underlying question.

Mature organizations shift toward "push insights" delivery: automated systems monitor key metrics continuously, surface anomalies when thresholds are breached, and deliver pre-built context to decision-makers without waiting for a human request. Examples include automated Slack alerts when intraday service level falls below target, weekly narrative summaries generated from structured data by templated report programs, and proactive exception reports identifying agents at risk for adherence failure before the end of the week.
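The first example, a threshold alert on intraday service level, can be sketched as a small stateful monitor. Requiring two consecutive breached intervals before alerting, and staying quiet until the metric recovers, are design assumptions meant to limit alert noise; the class name and message wording are illustrative.

```python
from collections import deque
from typing import Optional


class ServiceLevelMonitor:
    """Raise one alert when service level stays below target for N consecutive intervals."""

    def __init__(self, target: float, consecutive: int = 2) -> None:
        self.target = target
        self.recent = deque(maxlen=consecutive)  # True for each recent breached interval
        self.alerting = False

    def observe(self, interval_label: str, service_level: float) -> Optional[str]:
        """Record one interval; return an alert message when a breach starts, else None."""
        self.recent.append(service_level < self.target)
        breached = len(self.recent) == self.recent.maxlen and all(self.recent)
        if breached and not self.alerting:
            self.alerting = True
            return (
                f"Service level {service_level:.0%} at {interval_label}, below target "
                f"{self.target:.0%} for {self.recent.maxlen} consecutive intervals"
            )
        if not breached:
            self.alerting = False  # recovered, so a later breach can alert again
        return None


# Hypothetical intraday readings at 15-minute intervals.
readings = [("09:00", 0.84), ("09:15", 0.78), ("09:30", 0.74), ("09:45", 0.71), ("10:00", 0.86)]
monitor = ServiceLevelMonitor(target=0.80)
alerts = [msg for label, sl in readings if (msg := monitor.observe(label, sl)) is not None]
```

With these readings the monitor emits a single alert at 09:30 and none at 09:45, because the breach is already known. The returned message is what a delivery step, such as a chat webhook, would send.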

This shift requires both technical investment (pipeline reliability, automation tooling) and organizational change (stakeholders must trust automated outputs enough to act on them without manual validation).[3]

Maturity Model Considerations

At Level 2 (Developing), reporting typically consists of platform-native reports and manually maintained Excel scorecards. Data quality issues are common and often unacknowledged. Reports are produced on demand rather than on a reliable schedule. The primary bottleneck is analyst bandwidth.

At Level 3 (Defined), a BI layer (Power BI, Tableau, or equivalent) provides standardized dashboards for operational audiences. ETL pipelines exist but may be fragile or poorly documented. Report cadences are established and generally reliable. Ad-hoc analysis still relies heavily on Excel.

At Level 4 (Advanced), the reporting framework includes a data warehouse with documented transformation logic, scheduled automated reports, and programmatic tools for ad-hoc analysis. Data quality monitoring is automated. The team uses Python or SQL for analyses beyond BI tool capability. The "pull to push" transition is underway for key operational metrics.

At Level 5 (Optimizing), the framework incorporates self-service capabilities for non-analyst stakeholders, automated anomaly detection, and forecasting pipelines integrated with reporting outputs. The analytics team functions as an internal data product team, maintaining governed metric definitions consumed by multiple downstream systems. See WFM Labs Maturity Model for the full maturity framework.

Related Concepts

- WFM KPI Hierarchy and Reporting Cadence

- Reporting Automation and Self-Service Analytics

- Forecast Accuracy Metrics

- Adherence and Conformance

- Service Level

- Average Handle Time

- Occupancy

- Shrinkage

- Performance Management

- Workforce Cost Modeling

- WFM Labs Maturity Model

- Technology

References

- [1] Bodin, L., et al. "Workforce Analytics Framework for Contact Centers." ICMI Global Contact Center Conference Proceedings, 2019.

- [2] Few, S. Show Me the Numbers: Designing Tables and Graphs to Enlighten. 2nd ed. Analytics Press, 2012.

- [3] Bodin, L., et al. "Workforce Analytics Framework for Contact Centers." ICMI Global Contact Center Conference Proceedings, 2019.

See Also

- Enterprise Data Platform — Enterprise data foundation (data lake / warehouse / Snowflake)

- Business Intelligence and Reporting — Enterprise BI and reporting layer

- Interaction Analytics — Content analytics across interaction channels

- Workforce Management — Overview of the WFM discipline

- Service Level — Primary accessibility metric reported in WFM dashboards

- Occupancy — Efficiency metric tracked in agent reporting

- Average Handle Time — Key operational metric in performance reports

- Shrinkage — Planning metric reported across WFM teams

- Forecast Accuracy Metrics — Measuring forecast quality

- WFM KPI Hierarchy and Reporting Cadence — Which metrics at which organizational level