Pandas and Data Manipulation for WFM

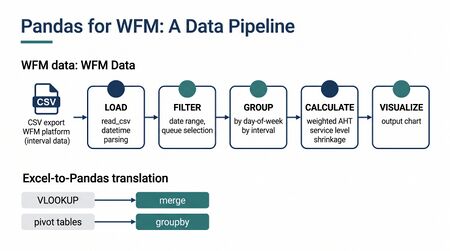

Pandas is an open-source Python library for data manipulation and analysis that has become the foundational tool for workforce management analytics built outside commercial platforms.[1] Named after "panel data" (a term from econometrics for multi-dimensional structured datasets), pandas provides two primary data structures, DataFrames and Series, that allow WFM practitioners to load, clean, transform, and analyze interval-level operational data using concise, readable code. For analysts accustomed to manipulating contact center data in Excel, pandas offers the same conceptual operations (filtering, pivoting, lookups, conditional logic) but with the ability to handle millions of rows, automate repetitive transformations, and integrate directly with forecasting models and visualization libraries.

The library is particularly well-suited to WFM work because contact center data is inherently tabular and time-indexed. Call records, interval-level metrics, agent schedules, adherence logs, and forecast outputs all arrive as rows-and-columns data with timestamps. Pandas was designed precisely for this kind of structured, time-series-oriented analysis.[2] Every operation a workforce analyst performs regularly—calculating average handle time by skill group, comparing forecast versus actual volumes, identifying shrinkage patterns by day of week—maps directly to pandas operations.

This article introduces pandas from the perspective of WFM practitioners who are building analytical capabilities in Python, whether through Jupyter notebooks, automated reporting scripts, or custom forecasting pipelines.

What Is Pandas

Pandas provides two core data structures that serve as the foundation for all data manipulation work.

DataFrames

A DataFrame is a two-dimensional labeled data structure—essentially a table with rows and columns, similar to a spreadsheet worksheet or a SQL table. Each column can hold a different data type (numbers, strings, dates, booleans), and both rows and columns have labels (called an index and column names respectively).[1]

For a WFM analyst, a DataFrame is the natural representation of interval-level data:

import pandas as pd

# A typical WFM interval DataFrame might look like:

# Interval Offered Handled AHT SL% Abandoned

# 2024-03-11 08:00 142 138 285 0.82 4

# 2024-03-11 08:15 156 149 291 0.79 7

# 2024-03-11 08:30 163 157 278 0.84 6

# ...

Each row represents a single interval (typically 15 or 30 minutes). Each column holds a metric. The index—the leftmost label—is a datetime that identifies when the interval occurred. This structure mirrors exactly how WFM platforms export data and how analysts think about interval performance.

DataFrames support operations on entire columns at once (vectorized operations), which means calculating a new metric across thousands of intervals requires a single line of code rather than a loop through each row.

Series

A Series is a one-dimensional labeled array—a single column of data with an index. When you select one column from a DataFrame, you get a Series. When you calculate an aggregate (mean AHT across all intervals), you get a scalar value from a Series.

# Selecting a single column returns a Series

aht_series = df['AHT']

# Series support direct arithmetic

# Calculate total handle minutes per interval

df['HandleMinutes'] = df['Handled'] * df['AHT'] / 60

The relationship between DataFrames and Series is analogous to the relationship between an Excel worksheet and a single column: the DataFrame is the container, and each column is a Series that you can manipulate independently or in combination with other columns.
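As a minimal sketch of the two structures, the following builds a three-interval DataFrame from the sample values shown earlier and confirms that selecting one column yields a Series sharing the DataFrame's index. The variable names are illustrative only.

```python
import pandas as pd

# Illustrative values taken from the sample interval table above
df = pd.DataFrame(
    {
        "Offered": [142, 156, 163],
        "Handled": [138, 149, 157],
        "AHT": [285, 291, 278],  # seconds
    },
    index=pd.to_datetime(
        ["2024-03-11 08:00", "2024-03-11 08:15", "2024-03-11 08:30"]
    ),
)
df.index.name = "Interval"

aht_series = df["AHT"]        # one column -> Series
mean_aht = aht_series.mean()  # aggregate of a Series -> scalar

# Vectorized arithmetic on whole columns: handle minutes per interval
df["HandleMinutes"] = df["Handled"] * df["AHT"] / 60

print(type(aht_series).__name__)
print(df)
```

Because the Series keeps the datetime index, any value in it can still be traced back to its interval.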

Loading WFM Data

WFM data arrives from multiple sources, each with its own format. Pandas provides dedicated functions for the most common formats encountered in contact center operations.

CSV Exports

Most WFM and ACD platforms offer CSV exports. This is the most common starting point for pandas-based analysis.[2]

import pandas as pd

# Basic CSV load

df = pd.read_csv('interval_data.csv')

# With datetime parsing — critical for WFM interval data

df = pd.read_csv('interval_data.csv', parse_dates=['Interval'],

index_col='Interval')

# Specifying data types to control memory usage

df = pd.read_csv('interval_data.csv',

dtype={'SkillGroup': 'category', 'Offered': 'int32'},

parse_dates=['Interval'])

The parse_dates parameter is particularly important for WFM data. Without it, pandas treats datetime columns as plain text strings, which prevents time-based operations like resampling, date filtering, and time zone conversion.
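The effect of parse_dates can be seen in a small sketch. Here an in-memory string stands in for an export file, and its contents are hypothetical.

```python
import io
import pandas as pd

# Stand-in for an interval export file (hypothetical contents)
csv_text = """Interval,Offered,Handled
2024-03-11 08:00,142,138
2024-03-11 08:15,156,149
"""

as_text = pd.read_csv(io.StringIO(csv_text))
as_dates = pd.read_csv(io.StringIO(csv_text), parse_dates=["Interval"])

print(as_text["Interval"].dtype)   # plain strings
print(as_dates["Interval"].dtype)  # datetime64

# Time-based accessors only work on the parsed column
print(as_dates["Interval"].dt.hour.tolist())
```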

Excel Files

Many WFM teams exchange data via Excel workbooks, often with multiple sheets representing different skill groups, time periods, or metric categories.

# Read a specific sheet

df = pd.read_excel('weekly_report.xlsx', sheet_name='Interval Detail')

# Read all sheets into a dictionary of DataFrames

all_sheets = pd.read_excel('weekly_report.xlsx', sheet_name=None)

inbound = all_sheets['Inbound']

outbound = all_sheets['Outbound']

Database Connections

Larger WFM operations store historical data in SQL databases. Pandas integrates with any database that has a Python connector through the read_sql function.

import pandas as pd

from sqlalchemy import create_engine

engine = create_engine('postgresql://user:pass@host/wfm_database')

# Query interval data directly into a DataFrame

df = pd.read_sql("""

SELECT interval_start, offered, handled, avg_handle_time, service_level

FROM interval_metrics

WHERE skill_group = 'CustomerService'

AND interval_start >= '2024-01-01'

""", engine, parse_dates=['interval_start'])

This approach is preferable to CSV exports for ongoing analytical work because it eliminates the manual export step, ensures data freshness, and allows the database to handle initial filtering before data reaches Python.[1]

Working with Interval-Level Data

Contact center data is fundamentally time series data organized into fixed intervals. Pandas has extensive built-in support for exactly this type of data through its DatetimeIndex and time series functionality.

Datetime Indexing

Setting a datetime column as the DataFrame's index unlocks time-aware operations that are essential for WFM analysis.

# Convert string column to datetime and set as index

df['Interval'] = pd.to_datetime(df['Interval'])

df = df.set_index('Interval')

# Now time-based slicing works naturally

march_data = df.loc['2024-03'] # All of March (row selection by partial date string requires .loc)

first_week = df.loc['2024-03-04':'2024-03-08'] # Monday to Friday

morning_peak = df.between_time('08:00', '12:00') # Morning hours only

This indexing behavior is one of pandas' most powerful features for WFM work. Selecting a full month of interval data, which might span 2,880 rows (30 days × 96 quarter-hour intervals), requires only a single string expression rather than manual filtering.

Interval Resampling

WFM platforms export data at various granularities. Some ACD systems report in 15-minute intervals; others use 30-minute intervals. Forecasting may require daily or weekly aggregation. Pandas' resample method converts between these levels.[2]

# Convert 15-minute intervals to 30-minute intervals

# Volumes are summed; a plain mean of AHT or service level is only an approximation

df_30min = df.resample('30min').agg({

'Offered': 'sum',

'Handled': 'sum',

'Abandoned': 'sum',

'AHT': 'mean',

'ServiceLevel': 'mean'

})

# Aggregate to daily totals

daily = df.resample('D').agg({

'Offered': 'sum',

'Handled': 'sum',

'AHT': 'mean'

})

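# Aggregate volumes to weekly totals (weeks starting Monday)

# Note: 'W-MON' alone produces weeks that END on Monday

weekly = df[['Offered', 'Handled', 'Abandoned']].resample(

    'W-MON', closed='left', label='left'

).sum()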

The choice of aggregation function matters and reflects WFM domain knowledge. Contact volumes are additive—you sum them when rolling up from intervals to days. Handle time is an average—you take the mean (or, more precisely, a weighted average using handle counts). Service level percentages cannot simply be averaged across intervals; they must be recalculated from the underlying contacts offered and contacts answered within threshold. Pandas allows this level of control through the agg method.
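One way to apply these rules when rolling 15-minute data up to 30 minutes is sketched below. It assumes the export carries an AnsweredInThreshold count per interval. HandleSeconds is a hypothetical helper column introduced only for this example, and the data values are invented.

```python
import pandas as pd

idx = pd.date_range("2024-03-11 08:00", periods=4, freq="15min")
df = pd.DataFrame(
    {
        "Offered": [100, 20, 150, 50],
        "Handled": [95, 20, 140, 50],
        "AHT": [300.0, 200.0, 280.0, 320.0],       # seconds
        "AnsweredInThreshold": [70, 20, 120, 30],  # assumed to be exported
    },
    index=idx,
)

# Carry total handle seconds so AHT can be re-derived after summing
df["HandleSeconds"] = df["Handled"] * df["AHT"]

additive = ["Offered", "Handled", "AnsweredInThreshold", "HandleSeconds"]
sums = df[additive].resample("30min").sum()

rolled = sums[["Offered", "Handled"]].copy()
rolled["AHT"] = sums["HandleSeconds"] / sums["Handled"]                 # weighted AHT
rolled["ServiceLevel"] = sums["AnsweredInThreshold"] / sums["Offered"]  # recomputed
print(rolled)
```

In the first half hour the recomputed service level is 90 / 120 = 0.75, whereas a plain mean of the two interval rates (0.70 and 1.00) would report 0.85. Intervals with zero handled or offered contacts yield NaN and need separate treatment.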

Time Zone Handling

Multi-site contact center operations frequently encounter time zone complexity. An offshore site in Manila reporting in PHT needs to be aligned with a domestic site reporting in US Eastern Time for consolidated analysis.[1]

# Localize naive timestamps to a specific time zone

df.index = df.index.tz_localize('America/New_York')

# Convert to a different time zone for consolidated reporting

df_utc = df.tz_convert('UTC')

df_manila = df.tz_convert('Asia/Manila')

# Combine data from multiple sites after converting to common time zone

domestic = domestic_df.tz_convert('UTC')

offshore = offshore_df.tz_convert('UTC')

combined = pd.concat([domestic, offshore])

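This is a common source of errors in spreadsheet-based WFM analysis, where time zone conversions are performed manually and daylight saving transitions are frequently mishandled. Pandas uses the IANA time zone database, so conversions between time-zone-aware timestamps account for DST automatically. Localizing naive local timestamps is the exception: tz_localize raises an error on wall-clock times that are repeated or skipped at a DST transition unless its ambiguous and nonexistent parameters specify how to resolve them.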
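Localizing naive timestamps around a DST transition needs some care, because a wall-clock time can occur twice (fall back) or not at all (spring forward). The sketch below shows one possible way to resolve both cases with tz_localize. The timestamps are invented, and the right resolution policy depends on how the source system records the transition.

```python
import pandas as pd

# Naive local timestamps spanning the US fall-back night (2024-11-03);
# the 01:00 hour occurs twice on the wall clock.
naive = pd.DatetimeIndex(
    ["2024-11-03 00:30", "2024-11-03 01:30", "2024-11-03 01:30", "2024-11-03 02:30"]
)

# ambiguous="infer" uses the order of the timestamps to decide which
# 01:30 is daylight time and which is standard time.
local = naive.tz_localize("America/New_York", ambiguous="infer")
utc = local.tz_convert("UTC")
print(utc)

# Spring forward: 02:30 on 2024-03-10 does not exist in New York.
gap = pd.DatetimeIndex(["2024-03-10 02:30"])
shifted = gap.tz_localize("America/New_York", nonexistent="shift_forward")
print(shifted)
```

After conversion to UTC the two 01:30 rows become distinct, consecutive hours, which keeps the interval sequence unique for later resampling or merging.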

Common WFM Data Operations

The daily analytical work of workforce management—slicing data by time periods, grouping by patterns, calculating rolling trends, building interval profiles—maps directly to pandas operations.

Filtering by Date Range

# Last 8 weeks of data (common lookback for WFM forecasting)

import datetime

cutoff = datetime.datetime.now() - datetime.timedelta(weeks=8)

recent = df[df.index >= cutoff]

# Exclude holidays (assuming a list of holiday dates)

holidays = pd.to_datetime(['2024-01-01', '2024-01-15', '2024-02-19'])

workdays = df[~df.index.normalize().isin(holidays)]

# Business days only

business = df[df.index.dayofweek < 5]

Grouping by Day of Week

Day-of-week patterns are fundamental to WFM forecasting. Monday contact volumes behave differently from Wednesday volumes, and these patterns must be identified and maintained.[3]

# Average offered contacts by day of week

dow_pattern = df.groupby(df.index.dayofweek)['Offered'].mean()

# Result: 0=Monday through 6=Sunday with average volumes

# Day-of-week AND time-of-day pattern (the classic interval profile)

interval_profile = df.groupby(

[df.index.dayofweek, df.index.time]

)['Offered'].mean()

# Percentage distribution within each day

daily_totals = df.groupby(df.index.date)['Offered'].transform('sum')

df['IntraDayPct'] = df['Offered'] / daily_totals

Rolling Averages

Rolling averages smooth out day-to-day variability and reveal trends—critical for identifying whether contact volumes or handle times are drifting.[1]

# 4-week rolling average of daily volume (common in WFM trend analysis)

daily_volume = df['Offered'].resample('D').sum()

rolling_4wk = daily_volume.rolling(window=28).mean()

# Rolling AHT trend (weighted by handle count)

daily_aht = (

df['Handled'] * df['AHT']

).resample('D').sum() / df['Handled'].resample('D').sum()

rolling_aht = daily_aht.rolling(window=28).mean()

Pivot Tables for Interval Patterns

Pivot tables are among the most-used tools in WFM spreadsheet work. Pandas provides an equivalent that handles much larger datasets.

# Classic WFM pivot: intervals as rows, days of week as columns

df['DayOfWeek'] = df.index.day_name()

df['TimeOfDay'] = df.index.time

pivot = df.pivot_table(

values='Offered',

index='TimeOfDay',

columns='DayOfWeek',

aggfunc='mean'

)

# Reorder columns Monday through Sunday

pivot = pivot[['Monday','Tuesday','Wednesday','Thursday','Friday',

'Saturday','Sunday']]

This produces the standard WFM interval-by-day-of-week grid that workforce planners use to validate forecasts and build staffing requirement templates.

Calculating WFM Metrics

Pandas enables WFM-specific metric calculations that go beyond simple averages. Each calculation below reflects how the metric is actually defined in WFM operations, not just a naive mathematical summary.

Average Handle Time Computation

AHT must be calculated as a weighted average when aggregating across intervals or agents, because each interval contributes a different number of handled contacts.[3]

# WRONG: Simple mean of AHT column (treats all intervals equally)

wrong_aht = df['AHT'].mean()

# CORRECT: Weighted by handled contacts

correct_aht = (df['AHT'] * df['Handled']).sum() / df['Handled'].sum()

# Weighted AHT by skill group

def weighted_aht(group):

    return (group['AHT'] * group['Handled']).sum() / group['Handled'].sum()

aht_by_skill = df.groupby('SkillGroup').apply(weighted_aht)

This distinction matters. Naive averaging of AHT across intervals produces misleading results because low-volume intervals (early morning, late night) receive the same weight as peak intervals. A simple mean can misrepresent true operational AHT by 5–15% depending on volume distribution.

Service Level by Interval

Service level is the percentage of contacts answered within a defined threshold (commonly 20 or 30 seconds). Like AHT, it cannot be averaged across intervals without weighting.[3]

# Per-interval service level (already calculated)

# But aggregating to daily requires re-weighting

daily_sl = (

df['AnsweredInThreshold'].resample('D').sum() /

df['Offered'].resample('D').sum()

)

# Service level by day of week

dow_sl = df.groupby(df.index.dayofweek).apply(

lambda g: g['AnsweredInThreshold'].sum() / g['Offered'].sum()

)

Shrinkage Calculation

Shrinkage measures the difference between scheduled staff and staff actually available to handle contacts. Pandas makes it straightforward to calculate shrinkage from schedule and adherence data.

# Shrinkage = 1 - (Productive Hours / Scheduled Hours)

df['Shrinkage'] = 1 - (df['ProductiveHours'] / df['ScheduledHours'])

# Shrinkage breakdown by category

shrinkage_categories = df.groupby('ShrinkageCategory')[

'Hours'

].sum() / df['ScheduledHours'].sum()

# Trend: weekly shrinkage rate

weekly_shrinkage = 1 - (

df['ProductiveHours'].resample('W').sum() /

df['ScheduledHours'].resample('W').sum()

)

Forecast vs. Actual Comparison

Comparing forecast to actual performance is a core WFM discipline. Pandas enables systematic accuracy measurement across any dimension.[4]

# Merge forecast and actual DataFrames on interval timestamp

comparison = forecast_df.merge(actual_df, on='Interval', suffixes=('_fcst', '_actual'))

# Absolute Percentage Error per interval

comparison['APE'] = abs(

comparison['Volume_fcst'] - comparison['Volume_actual']

) / comparison['Volume_actual']

# MAPE (Mean Absolute Percentage Error)

mape = comparison['APE'].mean()

# WAPE (Weighted Absolute Percentage Error) — preferred for WFM

wape = (

abs(comparison['Volume_fcst'] - comparison['Volume_actual']).sum() /

comparison['Volume_actual'].sum()

)

# Forecast Bias (systematic over/under-forecasting)

bias = (

(comparison['Volume_fcst'] - comparison['Volume_actual']).sum() /

comparison['Volume_actual'].sum()

)

WAPE is generally preferred over MAPE in WFM because it weights errors by volume—a 10% miss on a 500-contact interval matters more than a 10% miss on a 20-contact interval. Pandas makes both calculations trivial.
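A small worked example makes the difference concrete and shows one way to handle zero-volume intervals, where per-interval percentage error is undefined. The data values are invented, and masking zero actuals is an assumption of this sketch rather than a universal convention.

```python
import numpy as np
import pandas as pd

comparison = pd.DataFrame(
    {
        "Volume_fcst": [550, 22, 5],
        "Volume_actual": [500, 20, 0],  # last interval had no contacts
    }
)

abs_err = (comparison["Volume_fcst"] - comparison["Volume_actual"]).abs()

# APE is undefined when actual volume is zero; mask those rows
ape = abs_err / comparison["Volume_actual"].replace(0, np.nan)
mape = ape.mean()  # NaN rows are skipped by default

# WAPE divides total absolute error by total actual volume
wape = abs_err.sum() / comparison["Volume_actual"].sum()
print(round(mape, 4), round(wape, 4))
```

Here the masked MAPE ignores the five-contact miss in the empty interval entirely, while WAPE still counts it in the numerator (57 / 520).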

Merging and Joining WFM Datasets

WFM analysis frequently requires combining data from multiple systems. Forecast data lives in the WFM platform, actual volumes come from the ACD, agent attributes sit in HR systems, quality scores arrive from QM platforms. Pandas provides SQL-style join operations to combine these sources.[1]

Combining Forecast with Actuals

# Merge on interval timestamp

combined = pd.merge(

forecast_df, actual_df,

on='Interval',

how='outer', # Keep all intervals even if one source has gaps

suffixes=('_forecast', '_actual')

)

The how parameter controls join behavior: 'inner' keeps only intervals present in both datasets, 'outer' keeps all intervals from both, 'left' keeps all intervals from the first dataset. For forecast-versus-actual analysis, 'outer' is typically appropriate because it surfaces intervals where one source has data gaps—information that is analytically important.
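To make those gaps easy to find, merge accepts an indicator argument that records which source each row came from. The following sketch uses two small invented DataFrames in which each source is missing one interval.

```python
import pandas as pd

forecast_df = pd.DataFrame(
    {
        "Interval": pd.to_datetime(
            ["2024-03-11 08:00", "2024-03-11 08:15", "2024-03-11 08:30"]
        ),
        "Volume": [140, 150, 160],
    }
)
actual_df = pd.DataFrame(
    {
        "Interval": pd.to_datetime(
            ["2024-03-11 08:00", "2024-03-11 08:30", "2024-03-11 08:45"]
        ),
        "Volume": [142, 163, 171],
    }
)

combined = pd.merge(
    forecast_df, actual_df,
    on="Interval", how="outer",
    suffixes=("_forecast", "_actual"),
    indicator=True,  # adds a _merge column: left_only / right_only / both
)

# Intervals present in only one source
gaps = combined[combined["_merge"] != "both"]
print(gaps[["Interval", "_merge"]])
```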

Schedule with Adherence

# Agent-level join: schedule expectations matched with actual adherence

agent_analysis = pd.merge(

schedule_df, adherence_df,

on=['AgentID', 'Interval'],

how='left'

)

# Flag intervals where the actual state matched the scheduled state (adherence)

agent_analysis['InAdherence'] = (

agent_analysis['ActualState'] == agent_analysis['ScheduledState']

)

Multi-Source Analysis

# Build a comprehensive interval-level dataset from multiple sources

interval_data = (

volume_df

.merge(aht_df, on='Interval', how='left')

.merge(staffing_df, on='Interval', how='left')

.merge(forecast_df, on='Interval', how='left')

)

This chained merge pattern is common in WFM analytics. It constructs a single denormalized DataFrame where each row is an interval containing volume, handle time, staffing, and forecast data side by side—enabling multi-dimensional analysis that would require multiple VLOOKUP operations and careful alignment in Excel.

Handling Missing Data and Anomalies

WFM data is rarely complete. Holidays produce zero-volume intervals that skew averages. System outages create gaps. Daylight saving transitions cause duplicate or missing intervals. Pandas provides explicit tools for managing these realities.

Identifying Missing Data

# Check for missing values across all columns

df.isnull().sum()

# Identify gaps in the interval sequence

expected_index = pd.date_range(

start=df.index.min(), end=df.index.max(), freq='15min'

)

missing_intervals = expected_index.difference(df.index)
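Once the gaps are known, a common follow-up is to reindex the DataFrame against the expected index so that every interval has a row and missing ones appear as NaN. A minimal sketch with invented data:

```python
import pandas as pd

idx = pd.to_datetime(
    ["2024-03-11 08:00", "2024-03-11 08:15", "2024-03-11 08:45"]  # 08:30 is absent
)
df = pd.DataFrame({"Offered": [142, 156, 170]}, index=idx)

expected_index = pd.date_range(df.index.min(), df.index.max(), freq="15min")
missing_intervals = expected_index.difference(df.index)

# Reindex so every expected interval has a row; gaps become NaN
df_full = df.reindex(expected_index)
print(missing_intervals)
print(df_full)
```

Making gaps explicit as NaN rows keeps later steps such as resampling, rolling windows, and fill logic from silently treating a missing interval as if it never existed.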

Holiday and Anomaly Handling

Holidays are the most common source of data anomalies in WFM. Including holiday intervals in historical averages distorts forecasts and metrics.[4]

# Flag holidays

holidays = pd.to_datetime([

'2024-01-01', '2024-05-27', '2024-07-04',

'2024-09-02', '2024-11-28', '2024-12-25'

])

df['IsHoliday'] = df.index.normalize().isin(holidays)

# Exclude holidays from baseline calculations

baseline = df[~df['IsHoliday']]

normal_pattern = baseline.groupby(baseline.index.time)['Offered'].mean()

# Flag statistical outliers using IQR method

Q1 = df['Offered'].quantile(0.25)

Q3 = df['Offered'].quantile(0.75)

IQR = Q3 - Q1

df['IsOutlier'] = (df['Offered'] < Q1 - 1.5*IQR) | (df['Offered'] > Q3 + 1.5*IQR)

Filling Data Gaps

When intervals are missing, analysts must decide how to handle them. The choice depends on why data is missing and what the analysis requires.

# Forward-fill: use last known value (appropriate for slow-changing metrics)

df['Staffed'] = df['Staffed'].ffill()

# Interpolation: estimate values between known points

df['Offered'] = df['Offered'].interpolate(method='time')

# Fill with typical pattern: replace missing with same-day-of-week/time average

typical = df.groupby([df.index.dayofweek, df.index.time])['Offered'].transform('mean')

df['Offered'] = df['Offered'].fillna(typical)

The third approach—filling with the typical pattern for that day-of-week and time-of-day—is the most WFM-appropriate for volume data because it preserves the intraday and weekly seasonality that are central to time series patterns in contact center operations.

Performance Considerations

Contact center data at interval level accumulates rapidly. A single skill group generating 96 intervals per day produces 35,040 rows per year. A center with 30 skill groups across 3 years generates over 3 million rows. When agent-level data is included—adherence, state changes, interaction records—row counts can reach tens of millions. Pandas can handle this scale, but certain practices become important.[5]

Memory Management

# Use appropriate data types to reduce memory

df['SkillGroup'] = df['SkillGroup'].astype('category') # Huge savings for repeated strings

df['Offered'] = df['Offered'].astype('int32') # int32 vs int64 halves memory

df['AHT'] = df['AHT'].astype('float32') # float32 vs float64 halves memory

# Check memory usage

print(df.memory_usage(deep=True).sum() / 1024**2, 'MB')

# Read only needed columns from large files

df = pd.read_csv('huge_export.csv', usecols=['Interval', 'Offered', 'AHT'])

Processing Large Datasets

# Process in chunks for files that exceed available memory

chunks = pd.read_csv('massive_file.csv', chunksize=100000)

daily_totals = []

for chunk in chunks:

    chunk['Interval'] = pd.to_datetime(chunk['Interval'])

    daily = chunk.groupby(chunk['Interval'].dt.date)['Offered'].sum()

    daily_totals.append(daily)

result = pd.concat(daily_totals).groupby(level=0).sum()

Vectorized Operations

Pandas is fastest when operating on entire columns at once rather than iterating row by row. This distinction becomes significant at WFM data scales.

# SLOW: Row-by-row iteration

# (1800 = seconds in a 30-minute interval; use 900 for 15-minute data)

for idx, row in df.iterrows():

    df.at[idx, 'Occupancy'] = row['Handled'] * row['AHT'] / (row['Staffed'] * 1800)

# FAST: Vectorized operation (100x+ faster on large DataFrames)

df['Occupancy'] = df['Handled'] * df['AHT'] / (df['Staffed'] * 1800)

For WFM practitioners coming from Excel, this is a significant conceptual shift. In Excel, formulas are written per-cell and copied down. In pandas, operations are written once and applied to the entire column simultaneously. The performance difference is negligible for small datasets but becomes orders of magnitude for multi-year interval data.

From Excel to Pandas

Most WFM analysts begin their careers in Excel and develop deep fluency with its tools. The transition to pandas is smoother when framed as translation rather than replacement. Every common Excel operation has a pandas equivalent, often more concise and always more scalable.[1]

VLOOKUP → merge

Excel's VLOOKUP finds a value in one table and retrieves a corresponding value from another. In pandas, this is a merge (join) operation.

# Excel: =VLOOKUP(A2, AgentTable, 3, FALSE) to get agent's team

# Pandas:

df = df.merge(agent_table[['AgentID', 'Team']], on='AgentID', how='left')

The pandas merge is more powerful than VLOOKUP in several respects: it can match on multiple columns simultaneously, it returns every matching row rather than silently returning only the first match (the validate parameter can raise an error when a lookup key is unexpectedly duplicated), and it can retrieve columns on either side of the key (VLOOKUP only looks to the right).
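Duplicate lookup keys deserve attention in practice, because a merge returns one output row per match. The sketch below uses a hypothetical agent table in which one AgentID appears twice, and shows how the validate argument of merge can turn that situation into an error.

```python
import pandas as pd

df = pd.DataFrame({"AgentID": [101, 102], "Handled": [40, 35]})

# Hypothetical lookup table in which agent 101 appears twice
agent_table = pd.DataFrame(
    {"AgentID": [101, 101, 102], "Team": ["Sales", "Support", "Sales"]}
)

# Without validation, the duplicate key produces two rows for agent 101
merged = df.merge(agent_table, on="AgentID", how="left")
print(len(df), len(merged))

# validate="many_to_one" raises instead of multiplying rows
try:
    df.merge(agent_table, on="AgentID", how="left", validate="many_to_one")
    duplicate_detected = False
except pd.errors.MergeError:
    duplicate_detected = True
print(duplicate_detected)
```

Checking row counts before and after a lookup-style merge is a cheap safeguard against double-counted volumes.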

Pivot Tables → groupby and pivot_table

# Excel: Pivot table with DayOfWeek as rows, SkillGroup as columns, sum of Offered

# Pandas:

pivot = df.pivot_table(

values='Offered', index='DayOfWeek', columns='SkillGroup', aggfunc='sum'

)

IF Statements → apply, np.where, and Boolean Indexing

# Excel: =IF(E2>0.80, "Met", "Missed")

# Pandas (multiple equivalent approaches):

# Using np.where (fastest)

import numpy as np

df['SL_Status'] = np.where(df['ServiceLevel'] > 0.80, 'Met', 'Missed')

# Using apply for complex logic

def classify_interval(row):

    if row['ServiceLevel'] >= 0.80 and row['Offered'] >= 50:

        return 'Met (Significant)'

    elif row['ServiceLevel'] >= 0.80:

        return 'Met (Low Volume)'

    else:

        return 'Missed'

df['SL_Category'] = df.apply(classify_interval, axis=1)
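Row-wise apply runs a Python function once per row, which can be slow on multi-year interval data. As one possible vectorized alternative, NumPy's np.select expresses the same three-way classification. The sample values here are invented.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame(
    {"ServiceLevel": [0.85, 0.90, 0.72], "Offered": [120, 30, 200]}
)

met = df["ServiceLevel"] >= 0.80
significant = df["Offered"] >= 50

# Conditions are checked in order; the first match wins
df["SL_Category"] = np.select(
    [met & significant, met],
    ["Met (Significant)", "Met (Low Volume)"],
    default="Missed",
)
print(df)
```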

SUMIFS / COUNTIFS → groupby with conditions

# Excel: =SUMIFS(Offered, DayOfWeek, "Monday", SkillGroup, "Sales")

# Pandas:

monday_sales = df[(df['DayOfWeek'] == 'Monday') & (df['SkillGroup'] == 'Sales')]['Offered'].sum()

# Or for all combinations at once:

summary = df.groupby(['DayOfWeek', 'SkillGroup'])['Offered'].sum()

Manual Copy-Paste Reporting → Automated Pipelines

Perhaps the most significant advantage of pandas over Excel for WFM work is not any single function—it is the ability to encode an entire analytical workflow as a reusable script. A weekly reporting process that takes two hours of manual Excel manipulation (download exports, paste into template, update formulas, verify, format) can be reduced to a Python script that executes in seconds and produces identical output every time.[3]

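# Entire weekly report pipeline (assumes pandas as pd and numpy as np are imported)

def weekly_report(start_date, end_date):

    # Load data

    actuals = pd.read_csv('actuals_export.csv', parse_dates=['Interval'])

    forecast = pd.read_csv('forecast_export.csv', parse_dates=['Interval'])

    # Filter date range

    actuals = actuals[(actuals['Interval'] >= start_date) &

                      (actuals['Interval'] <= end_date)]

    # Calculate daily metrics, keyed by a 'Date' column

    daily = actuals.groupby(actuals['Interval'].dt.date.rename('Date')).agg(

        Offered=('Offered', 'sum'),

        Handled=('Handled', 'sum'),

        AHT=('AHT', lambda x: np.average(x, weights=actuals.loc[x.index, 'Handled']))

    ).reset_index()

    # Roll the interval forecast up to daily totals

    # (assumes the forecast export has a 'Volume' column)

    daily_fcst = (

        forecast.groupby(forecast['Interval'].dt.date.rename('Date'))['Volume']

        .sum().rename('Forecast').reset_index()

    )

    # Merge forecast comparison on the shared 'Date' column

    comparison = daily.merge(daily_fcst, on='Date')

    # Export

    comparison.to_excel('weekly_report_output.xlsx', index=False)

    return comparison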

Integration with the WFM Analytics Ecosystem

Pandas does not work in isolation. It is the data manipulation layer within a broader Python ecosystem that WFM teams use for end-to-end analytics.

- Visualization: Matplotlib and Plotly accept pandas DataFrames directly. A single method call (df.plot()) generates charts from interval data without manual data extraction.

- Statistical Forecasting: Libraries such as statsmodels (for decomposition, ARIMA) and Prophet (for automated forecasting) accept pandas DataFrames as input and return pandas DataFrames as output.

- Jupyter Notebooks: DataFrames render as formatted HTML tables in Jupyter, making notebooks natural environments for exploratory WFM analysis.

- Scikit-learn: Machine learning models for forecasting or classification ingest pandas DataFrames after minimal transformation.

This interoperability means that learning pandas is not an isolated investment—it is the gateway to the entire Python data science ecosystem.[6]

See Also

- Python for Workforce Management

- Jupyter Notebooks for WFM Analysis

- Forecasting Methods

- Interval Level Staffing Requirements

- Time Series Decomposition

- Forecast Accuracy Metrics

- MAPE WAPE and Forecast Bias

- Reporting and Analytics Framework

- Data Visualization for WFM

- Average Handle Time

- Service Level

- Shrinkage

References

[1] McKinney, Wes. Python for Data Analysis: Data Wrangling with pandas, NumPy, and Jupyter, 3rd ed. O'Reilly Media, 2022. ISBN 978-1098104030.

[2] pandas development team. "pandas: powerful Python data analysis toolkit." pandas documentation, 2024. https://pandas.pydata.org/docs/

[3] Cleveland, Brad. Contact Center Management on Fast Forward, 4th ed. ICMI Press, 2019. ISBN 978-0985461133.

[4] Hyndman, Rob J. and George Athanasopoulos. Forecasting: Principles and Practice, 3rd ed. OTexts, 2021. https://otexts.com/fpp3/

[5] McKinney, Wes. "Apache Arrow and the '10 Things I Hate About pandas'." Wes McKinney blog, 2017. https://wesmckinney.com/blog/apache-arrow-pandas-internals/

[6] VanderPlas, Jake. Python Data Science Handbook: Essential Tools for Working with Data, 2nd ed. O'Reilly Media, 2023. ISBN 978-1098121228.