Jupyter Notebooks for WFM Analysis

Jupyter Notebooks for WFM Analysis are interactive computing documents that combine executable code, rich text narrative, data visualizations, and mathematical equations in a single shareable file. For workforce management (WFM) practitioners, Jupyter notebooks represent a fundamental shift in how analytical work is conducted: instead of performing analysis in one tool, building charts in another, and writing up conclusions in a third, the entire analytical workflow—from raw data ingestion through statistical computation to stakeholder-ready visualization—lives in a single, reproducible document.

The significance for WFM operations is practical, not theoretical. Contact center analytics involves repetitive workflows that change slightly each cycle: weekly forecast accuracy reviews, monthly capacity planning scenarios, quarterly staffing model recalibrations. When these analyses exist as notebooks rather than spreadsheet files or slide decks, they become executable documentation—a colleague can rerun last month's forecast accuracy review against this month's data without reverse-engineering the analyst's process. Project Jupyter, the open-source organization behind the notebook ecosystem, reports over 10 million public Jupyter notebooks on GitHub as of 2023, with data science and operational analytics among the dominant use cases.[1]

This page covers notebook architecture, WFM-specific workflows, collaboration patterns, and the practical considerations that determine whether notebooks accelerate or complicate a WFM team's analytical capability.

What Is a Jupyter Notebook

A Jupyter notebook is a document containing live code, equations, visualizations, and narrative text, created and run in an open-source web application such as Jupyter Notebook or JupyterLab. The name "Jupyter" derives from the three core programming languages the project originally supported (Julia, Python, and R), though Python dominates WFM usage. The notebook format (.ipynb) is a JSON file that stores both the code and its output, so a rendered notebook preserves results even when the underlying kernel is not running.
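To make the file format concrete, the sketch below builds a stripped-down notebook by hand and reads its cached output back with nothing but a JSON parser. It is a simplified illustration of the nbformat version 4 layout, not a full schema: files saved by Jupyter also carry kernel and language metadata, and newer minor versions add per-cell ids. The cell contents are made up.

```python
import json

# Hand-built minimal notebook in nbformat 4.4 layout (cell ids arrive in 4.5).
notebook = {
    "nbformat": 4,
    "nbformat_minor": 4,
    "metadata": {},
    "cells": [
        {
            "cell_type": "markdown",
            "metadata": {},
            "source": ["## Forecast accuracy, week 19\n"],
        },
        {
            "cell_type": "code",
            "metadata": {},
            "execution_count": 1,
            "source": ["wape = 0.082\n", "round(wape * 100, 1)"],
            # The saved output travels with the code inside the same file.
            "outputs": [
                {
                    "output_type": "execute_result",
                    "execution_count": 1,
                    "metadata": {},
                    "data": {"text/plain": ["8.2"]},
                }
            ],
        },
    ],
}

text = json.dumps(notebook, indent=1)  # what would be written to a .ipynb file

# Reading results back needs only a JSON parser, not a running kernel.
loaded = json.loads(text)
for cell in loaded["cells"]:
    for out in cell.get("outputs", []):
        print("cached output:", "".join(out["data"]["text/plain"]))
```

This is why nbviewer and static exports can display results from a notebook whose kernel is long gone.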

Cells

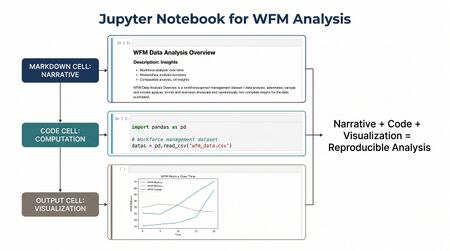

The fundamental unit of a Jupyter notebook is the cell. Notebooks contain two primary cell types:

- Code cells — Contain executable code (typically Python). When a code cell runs, its output—whether a number, table, chart, or error message—displays directly below the cell. Code cells execute in the order the user runs them, not necessarily top-to-bottom, which creates both flexibility and a common source of confusion for new users.

- Markdown cells — Contain formatted text using Markdown syntax. These cells render headings, bullet lists, bold/italic text, hyperlinks, LaTeX equations, and embedded images. Markdown cells transform a notebook from a script into a narrative document.

A third cell type, the raw cell, passes its content through without execution or rendering. Raw cells are useful for content intended for external conversion tools but are rarely used in day-to-day WFM analysis.

The Kernel

Behind every notebook is a kernel—a computational engine that executes the code in code cells. For Python notebooks, the kernel is an instance of the Python interpreter that maintains state: variables defined in one cell persist and are accessible in subsequent cells. The kernel runs independently of the notebook interface, meaning computation continues even if the browser tab is closed (in server-based deployments).

Key kernel behaviors WFM practitioners should understand:

- State persistence — Variables, dataframes, and model objects persist across cells within a session. Loading a 500 MB interval dataset in cell 3 means that data is available in cell 47 without reloading.

- Restart clears state — Restarting the kernel erases all variables. This is both a debugging tool (clearing corrupted state) and a validation step (ensuring the notebook runs correctly from scratch).

- Execution order matters — Running cells out of order can produce inconsistent state. A disciplined practice is to periodically restart the kernel and run all cells sequentially to verify reproducibility.

Combining Code, Charts, and Narrative

The defining feature of notebooks is the interleaving of computation and explanation. A WFM forecast accuracy review might flow as:

- Markdown cell explaining the review period and methodology

- Code cell loading interval-level forecast and actual data from a CSV export

- Code cell calculating MAPE, WAPE, and bias metrics using Pandas

- Markdown cell interpreting the results and noting anomalies

- Code cell generating a time-series visualization of forecast vs. actual by interval

- Markdown cell with conclusions and recommended forecast model adjustments

This interleaving means the analytical document is the analysis—not a summary of analysis performed elsewhere. Anyone reading the notebook can verify every number by examining the code that produced it.

Why Notebooks for WFM

Spreadsheets remain the dominant analytical tool in most WFM operations. They are flexible, accessible, and familiar. They are also opaque (formulas hidden in cells), fragile (a misplaced sort breaks downstream references), and non-reproducible (two analysts running "the same" analysis in separate spreadsheets routinely produce different results). Notebooks address these structural weaknesses while preserving the interactive, exploratory nature that makes spreadsheets effective.

Reproducibility

Reproducibility is the single most valuable property notebooks bring to WFM analytics. When a forecast accuracy report is a notebook, the methodology is not described—it is encoded. Rerunning the notebook against new data produces an updated report using identical methodology. This eliminates the "methodology drift" that plagues spreadsheet-based WFM reporting, where small procedural differences accumulate over reporting cycles until two analysts reviewing the same period produce materially different conclusions.

A 2016 survey of more than 1,500 researchers published in Nature found that more than 70% had tried and failed to reproduce another scientist's experiments, and more than half had failed to reproduce their own, findings that parallel the analytical reproducibility challenges in operational settings.[2] Notebooks do not solve reproducibility automatically (dependency management and data versioning require additional discipline), but they largely address the methodology component of reproducibility.

Documentation

The narrative capability of Markdown cells transforms analytical work from undocumented computation into documented reasoning. In a well-structured notebook, every analytical decision—why this date range, why this metric, why this filter—is explained adjacent to the code that implements it. This is particularly valuable in WFM, where analytical context matters enormously: a forecast accuracy number means something different during a product launch week than during a normal week, and that context should travel with the analysis.

Collaboration

Notebooks create a shared language between technical and non-technical WFM team members. An analyst builds the notebook; a WFM manager reads the narrative and charts without needing to understand the code; a data engineer reviews the data loading patterns. Each stakeholder engages with the layer relevant to their role. This multi-audience capability maps directly to the collaboration challenges described in the Reporting and Analytics Framework, where the same analytical insight must serve operational, tactical, and strategic consumers.

Presentation to Stakeholders

Notebooks convert directly to HTML, PDF, and slide-deck formats via the nbconvert tool. A forecast accuracy review notebook can be exported as a static HTML report for email distribution or a PDF for executive review—preserving all charts and narrative without requiring stakeholders to install any software. This export capability eliminates the common WFM workflow of performing analysis in one tool and manually recreating results in PowerPoint.

Common WFM Notebook Workflows

The following workflows represent the most common applications of Jupyter notebooks in WFM operations. Each follows a pattern: load WFM data, apply domain-specific calculations, visualize results, and document conclusions.

Interval Analysis

Interval-level analysis is the foundation of WFM operations, and it is where notebooks provide the most immediate value over spreadsheets. A typical interval analysis notebook:

- Loads 15-minute or 30-minute interval data (call volume, handle time, staffing, service level) from a WFM platform export or database query

- Calculates derived metrics: occupancy, utilization, shrinkage percentage, offered-to-answered ratios

- Identifies anomalous intervals using statistical thresholds (z-scores, IQR-based outlier detection)

- Visualizes patterns across time-of-day, day-of-week, and week-over-week dimensions

- Documents findings with contextual annotations (known events, system outages, campaign launches)

The advantage over spreadsheet-based interval analysis is scale. A notebook can process months of interval data across dozens of queues in seconds, applying consistent methodology that would require hours of manual spreadsheet manipulation. Pandas DataFrames handle the columnar operations that cause spreadsheets to slow or crash at scale—a consideration explored in depth in Pandas and Data Manipulation for WFM.
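A minimal sketch of the derived-metric and anomaly-flagging steps, using only the Python standard library. The occupancy definition (handled workload divided by staffed time in the interval), the 30-minute default interval, and the sample volumes are assumptions for illustration; the z-score and IQR (Tukey fence) flags are the two threshold methods named in the list above.

```python
import statistics

def occupancy(calls_handled, aht_sec, agents_staffed, interval_sec=1800):
    """Share of staffed time spent handling contacts in one interval."""
    staffed_sec = agents_staffed * interval_sec
    return (calls_handled * aht_sec) / staffed_sec if staffed_sec else float("nan")

def zscore_flags(values, threshold=3.0):
    """Indices whose |z| exceeds threshold (sample standard deviation)."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    if sd == 0:
        return []
    return [i for i, v in enumerate(values) if abs(v - mean) / sd > threshold]

def iqr_flags(values, k=1.5):
    """Indices outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey fences)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [i for i, v in enumerate(values) if v < low or v > high]

# Hypothetical half-hour call volumes for one queue; the last interval spikes.
volumes = [100, 102, 98, 101, 99, 103, 97, 100, 250]
print(occupancy(60, 240, 10))       # 0.8
print(zscore_flags(volumes, 2.5))   # [8]
print(iqr_flags(volumes))           # [8]
```

Note that zscore_flags(volumes) with the default threshold of 3.0 returns an empty list here: with the sample standard deviation, no point in a set of n values can sit more than (n - 1)/√n standard deviations from the mean (about 2.67 for nine intervals). That masking effect is one reason IQR fences are often preferred for short windows.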

Forecast Accuracy Review

Forecast accuracy review is perhaps the highest-value recurring notebook workflow. The standard approach:

- Load forecast data (by interval, by queue) alongside actual contact volume and handle time

- Calculate accuracy metrics: MAPE (Mean Absolute Percentage Error), WAPE (Weighted Absolute Percentage Error), bias (directional error), and tracking signal

- Segment accuracy by time dimension (time-of-day, day-of-week), queue, and forecast horizon

- Identify systematic patterns: consistent under-forecasting on Mondays, accuracy that degrades beyond a 3-week horizon, specific queues where the forecast model underperforms

- Compare accuracy across forecasting methods if multiple approaches are in use
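The metrics listed above can be sketched as a single function over paired actual and forecast series. Sign conventions for bias and tracking signal vary between teams and WFM platforms, so the ones used here (error = actual - forecast, positive bias = over-forecast) are assumptions, and the sample numbers are hypothetical.

```python
def accuracy_metrics(actual, forecast):
    """MAPE, WAPE, bias and tracking signal for paired interval series.

    Assumed conventions: error = actual - forecast; bias > 0 means
    over-forecasting; MAPE skips intervals with zero actual volume.
    """
    if len(actual) != len(forecast) or not actual:
        raise ValueError("need equal-length, non-empty series")
    errors = [a - f for a, f in zip(actual, forecast)]
    abs_errors = [abs(e) for e in errors]

    pct = [abs(e) / a for e, a in zip(errors, actual) if a != 0]
    mape = 100 * sum(pct) / len(pct) if pct else float("nan")
    wape = 100 * sum(abs_errors) / sum(actual)
    bias = 100 * sum(f - a for a, f in zip(actual, forecast)) / sum(actual)
    # Tracking signal: cumulative error over mean absolute deviation.
    # Positive values mean actuals ran above forecast (under-forecasting).
    mad = sum(abs_errors) / len(abs_errors)
    tracking_signal = sum(errors) / mad if mad else 0.0
    return {"MAPE": mape, "WAPE": wape, "bias": bias,
            "tracking_signal": tracking_signal}

# Hypothetical three intervals of actual vs forecast volume.
m = accuracy_metrics(actual=[100, 200, 50], forecast=[110, 180, 50])
print({k: round(v, 2) for k, v in m.items()})
# {'MAPE': 6.67, 'WAPE': 8.57, 'bias': -2.86, 'tracking_signal': 1.0}
```

In a notebook, the same function would typically be applied per queue, day of week, or forecast horizon (for example after a pandas groupby) to produce the segmented views described above.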

Because the notebook is reusable, each review cycle requires only updating the date range parameters. The methodology, visualizations, and narrative structure persist from cycle to cycle, ensuring consistency and dramatically reducing preparation time.

Schedule Coverage Analysis

Schedule coverage analysis evaluates how well generated schedules align with forecasted requirements:

- Load schedule data (agent assignments by interval) and staffing requirements (from the forecasting/Erlang pipeline)

- Calculate coverage ratios: scheduled staff divided by required staff, by interval

- Identify chronic over-staffing and under-staffing patterns by time-of-day and day-of-week

- Model the service level impact of coverage gaps using Erlang-C calculations

- Quantify the cost impact of over-staffing intervals (idle labor cost) and under-staffing intervals (service level degradation, potential abandon cost)

This workflow connects directly to Capacity Planning Methods, where schedule coverage analysis informs longer-horizon staffing decisions.
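The coverage-ratio and idle-cost steps can be sketched in a few lines. The 0.95 and 1.10 thresholds, the half-hour interval, and the hourly labor cost are hypothetical values chosen for illustration; the service level cost of under-staffed intervals needs a queueing model such as Erlang C and is not computed here.

```python
def coverage_report(scheduled, required, hourly_cost, interval_hours=0.5,
                    under=0.95, over=1.10):
    """Per-interval coverage ratio with hypothetical under/over thresholds."""
    rows = []
    for i, (s, r) in enumerate(zip(scheduled, required)):
        ratio = s / r if r else float("inf")
        status = "under" if ratio < under else "over" if ratio > over else "ok"
        # Idle labor cost counts only staff above the requirement.
        idle_cost = max(s - r, 0) * interval_hours * hourly_cost
        rows.append({"interval": i, "ratio": round(ratio, 2),
                     "status": status, "idle_cost": idle_cost})
    return rows

# Hypothetical scheduled vs required agents for three half-hour intervals.
report = coverage_report(scheduled=[18, 25, 30], required=[20, 25, 26],
                         hourly_cost=22.0)
for row in report:
    print(row)
```

Aggregating these rows by time-of-day and day-of-week is what surfaces the chronic over- and under-staffing patterns described above.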

Ad-Hoc Capacity Scenarios

Scenario modeling is where notebooks most clearly outperform spreadsheets. A capacity scenario notebook:

- Defines baseline parameters: current volume forecast, handle time assumptions, service level targets, shrinkage rates

- Models multiple scenarios by varying one or more parameters: 10% volume increase, 15-second handle time reduction from a new IVR, seasonal ramp patterns

- Calculates staffing requirements for each scenario using Erlang-C or simulation-based methods

- Visualizes the delta between scenarios—incremental FTE needed, cost impact, service level sensitivity

- Documents assumptions explicitly so stakeholders can evaluate which scenario best matches their expectations

Parameterized notebooks (discussed in the Best Practices section) make this workflow particularly powerful: changing a single parameter set regenerates the entire scenario analysis.
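As a rough sketch of the Erlang C step, the code below finds the smallest agent count that meets an 80/20 service level target for a baseline and two what-if scenarios of the kind listed above. It uses the standard Erlang C formula, computed through the Erlang B recursion for numerical stability. The volumes, handle times, 30-minute interval, and 30% shrinkage are hypothetical, and Erlang C assumes callers never abandon, which real staffing models often correct for.

```python
import math

def erlang_c(agents, traffic):
    """Probability an arriving contact waits (Erlang C), via the Erlang B recursion."""
    if agents <= traffic:
        return 1.0  # unstable queue: every contact eventually waits
    b = 1.0
    for k in range(1, agents + 1):
        b = traffic * b / (k + traffic * b)
    return agents * b / (agents - traffic * (1 - b))

def service_level(agents, calls, aht_sec, target_sec, interval_sec=1800):
    """Fraction of contacts answered within target_sec."""
    traffic = calls * aht_sec / interval_sec  # offered load in Erlangs
    if agents <= traffic:
        return 0.0
    p_wait = erlang_c(agents, traffic)
    return 1 - p_wait * math.exp(-(agents - traffic) * target_sec / aht_sec)

def required_agents(calls, aht_sec, target_sec=20, target_sl=0.80, interval_sec=1800):
    """Smallest agent count whose service level meets the target."""
    traffic = calls * aht_sec / interval_sec
    n = math.floor(traffic) + 1
    while service_level(n, calls, aht_sec, target_sec, interval_sec) < target_sl:
        n += 1
    return n

# Hypothetical half-hour baseline and two what-if scenarios.
scenarios = {
    "baseline": {"calls": 300, "aht_sec": 300},
    "volume +10%": {"calls": 330, "aht_sec": 300},
    "AHT -15s (new IVR)": {"calls": 300, "aht_sec": 285},
}
shrinkage = 0.30  # assumed
for name, p in scenarios.items():
    agents = required_agents(p["calls"], p["aht_sec"])
    scheduled = agents / (1 - shrinkage)
    print(f"{name:20s} agents={agents:3d}  incl. shrinkage={scheduled:.1f}")
```

Keeping the scenarios in one dictionary means adding a scenario is a one-line change, and every scenario is computed with identical logic.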

Notebook Architecture

A well-structured WFM notebook follows a consistent architecture that mirrors the analytical workflow. This structure serves both the original analyst (who will return to the notebook weeks later) and any colleague who inherits it.

Setup

The first section of any notebook handles environment configuration:

- Import statements for required libraries (Pandas, NumPy, Matplotlib, database connectors)

- Configuration parameters: date ranges, queue filters, service level targets, file paths

- Helper function definitions that will be reused throughout the analysis

- Display settings: chart dimensions, decimal formatting, color palettes

Concentrating all imports and configuration at the top ensures that dependencies are visible immediately and that parameter changes propagate throughout the notebook.

Data Load

The second section acquires and validates data:

- Data ingestion from source (CSV files, database queries, API calls)

- Initial data quality checks: row counts, null value assessment, date range verification, duplicate detection

- Data type conversions: parsing timestamps, converting categorical variables, handling timezone normalization

- Documentation of any data quality issues discovered and how they were handled

This section should produce a clearly defined set of DataFrames with documented schemas that all subsequent sections consume.
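A minimal sketch of the data quality checks listed above, applied to interval records held as a list of dictionaries. The field names (queue, interval_start, offered) and the 30-minute grid are hypothetical; in practice the same checks would usually run on a pandas DataFrame right after loading.

```python
from datetime import datetime

def validate_intervals(rows, interval_minutes=30):
    """Row count, null, duplicate, alignment and date-range checks."""
    report = {"row_count": len(rows), "nulls": 0, "duplicates": [],
              "misaligned": [], "date_range": None}
    seen = set()
    starts = []
    for row in rows:
        if any(row.get(col) in (None, "") for col in ("queue", "interval_start", "offered")):
            report["nulls"] += 1
            continue
        ts = datetime.fromisoformat(row["interval_start"])
        key = (row["queue"], ts)
        if key in seen:
            report["duplicates"].append(key)
        seen.add(key)
        # Interval starts should fall on the grid (e.g. :00 and :30).
        if ts.minute % interval_minutes or ts.second:
            report["misaligned"].append(key)
        starts.append(ts)
    if starts:
        report["date_range"] = (min(starts), max(starts))
    return report

rows = [
    {"queue": "billing", "interval_start": "2026-05-04T08:00", "offered": 120},
    {"queue": "billing", "interval_start": "2026-05-04T08:30", "offered": 131},
    {"queue": "billing", "interval_start": "2026-05-04T08:30", "offered": 131},
    {"queue": "billing", "interval_start": "2026-05-04T08:45", "offered": 40},
    {"queue": "billing", "interval_start": "2026-05-04T09:00", "offered": None},
]
report = validate_intervals(rows)
print(report)
```

Printing the report in the notebook, next to a Markdown note on how each issue was handled, is what documents the data quality decisions for the next reader.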

Analysis

The core analytical section applies domain-specific calculations to the prepared data. Each analytical step occupies its own cell or cell group, with Markdown cells explaining the methodology and any assumptions. Complex calculations include intermediate validation steps—sanity checks that catch errors before they propagate into downstream results.

Visualization

Charts and tables render inline, immediately following the code that generates them. Effective WFM notebooks use visualization strategically:

- Time-series plots for trend identification

- Heatmaps for day-of-week × time-of-day patterns

- Distribution plots for variability analysis (handle time distributions, volume variability)

- Comparison charts for scenario analysis and benchmark evaluation

The visualization techniques applicable to WFM notebook workflows are covered comprehensively in Data Visualization for WFM.

Conclusions

The final section synthesizes findings into actionable recommendations. This section is pure Markdown—no code—and is written for the stakeholder audience, not the analyst audience. It references specific charts and tables from earlier sections and translates analytical findings into operational language.

This five-section architecture is not arbitrary. It maps to the analytical pipeline described in the Reporting and Analytics Framework and ensures that notebooks function as both analytical tools and communication documents.

Sharing and Collaboration

Raw .ipynb files are JSON documents that require Jupyter to view interactively. For WFM teams that need to share analysis with stakeholders who will not install Jupyter, several distribution mechanisms exist.

nbviewer

Jupyter nbviewer is a free web service that renders any publicly accessible notebook (e.g., hosted on GitHub) as a static web page. For internal use, organizations can deploy a private nbviewer instance that renders notebooks stored in internal repositories. The rendering is read-only—stakeholders see the narrative, code, and output but cannot modify or re-execute cells.

JupyterHub

JupyterHub is a multi-user server that gives each team member their own Jupyter environment while centralizing administration, authentication, and resource management. For WFM teams larger than 2-3 analysts, JupyterHub provides:

- Centralized library and dependency management (every analyst uses the same package versions)

- Shared data directories (WFM platform exports accessible to all notebooks)

- Administrative controls over compute resources (preventing a runaway notebook from consuming all server memory)

- Integration with organizational authentication systems (LDAP, OAuth)

JupyterHub deployments can run on-premises or in cloud environments, with The Littlest JupyterHub (TLJH) providing a lightweight option for teams of up to 100 users.[3]

Converting to PDF and HTML

The nbconvert tool transforms notebooks into multiple output formats:

- HTML — Self-contained web pages that preserve all formatting and interactivity. Suitable for email distribution or intranet publishing.

- PDF — Publication-quality documents via LaTeX rendering. Suitable for executive reports and formal deliverables.

- Slides — Reveal.js-based presentations where each cell or cell group becomes a slide. Useful for presenting analytical findings in meeting settings.

- Markdown — Plain text with formatting, suitable for wiki integration or documentation systems.

The conversion process can be automated: a scheduled job runs the notebook with current data and exports the result as an HTML report, achieving the reporting automation pattern without building a separate reporting pipeline.
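A minimal sketch of such a scheduled job in Python, assuming Jupyter and nbconvert are installed on the machine that runs it. It calls the jupyter nbconvert command line with --execute, which reruns every cell against current data before exporting and exits with an error if a cell fails. The notebook name and the reports folder are hypothetical; a cron entry or Windows Task Scheduler job would call export_report.

```python
import shlex
import subprocess
from datetime import date
from pathlib import Path

def export_command(notebook, fmt="html", run_date=None, output_dir="reports"):
    """Build a 'jupyter nbconvert' call that re-executes and exports a notebook."""
    run_date = run_date or date.today()
    stem = Path(notebook).stem
    return [
        "jupyter", "nbconvert",
        "--to", fmt,
        "--execute",                     # run all cells top to bottom first
        "--output-dir", output_dir,
        "--output", f"{run_date:%Y-%m-%d}_{stem}",  # nbconvert adds the extension
        str(notebook),
    ]

def export_report(notebook, fmt="html"):
    """Run the export; raises CalledProcessError if any cell fails."""
    subprocess.run(export_command(notebook, fmt), check=True)

cmd = export_command("forecast_accuracy_template.ipynb", run_date=date(2026, 5, 13))
print(shlex.join(cmd))
```

Because a failing cell makes the job fail loudly, a broken data feed produces an error rather than a silently stale report.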

JupyterLab vs Classic Notebook vs Google Colab

Three primary interfaces exist for working with Jupyter notebooks, each with different trade-offs relevant to WFM teams.

JupyterLab

JupyterLab is the next-generation interface from Project Jupyter, providing a full integrated development environment (IDE) experience. It supports multiple notebooks open simultaneously, a file browser, terminal access, and a modular extension system. For WFM analysts who work with multiple data files and notebooks in a single session (common when cross-referencing forecast data, schedule data, and adherence data), JupyterLab's multi-panel layout is significantly more productive than the classic interface. Jupyter Notebook 7, released in 2023, rebuilt the single-document Notebook interface on JupyterLab's components, so the two interfaces now share the same underlying building blocks.[4]

Classic Notebook

Classic Notebook is the original Jupyter Notebook interface: a single-document view focused on one notebook at a time. It is simpler to learn and lighter on resources, but it lacks the productivity features of JupyterLab. It remains adequate for single-notebook workflows and for environments with constrained compute resources.

Google Colab

Google Colaboratory (Colab) is a free, cloud-hosted Jupyter notebook environment that requires no local installation. Notebooks run on Google's infrastructure with access to free GPU/TPU resources for computationally intensive work. For WFM teams evaluating notebooks without IT infrastructure investment, Colab provides an immediate starting point.

Trade-offs for WFM use:

- Advantages — Zero setup, free compute resources, built-in Google Drive integration for data storage, easy sharing via Google Drive permissions

- Limitations — Data must be uploaded to Google's infrastructure (potential compliance concern for sensitive WFM data), session timeouts disconnect after idle periods (losing in-memory data), limited customization compared to self-hosted Jupyter, dependency on internet connectivity

For organizations with strict data governance requirements—common in financial services and healthcare contact centers—Colab's cloud-hosted model may be incompatible with data handling policies. Self-hosted JupyterLab or JupyterHub deployments keep data within organizational boundaries.

Integration with WFM Data Sources

Notebooks become valuable for WFM analysis only when they can efficiently access WFM data. Three integration patterns dominate.

WFM Platform Exports

The most common starting point is exporting data from the WFM platform (NICE, Verint, Calabrio, Aspect, Genesys) as CSV or Excel files and loading those files into notebook DataFrames using Pandas. This pattern requires no IT infrastructure changes and works immediately. Its limitation is the manual step: someone must perform the export before each analysis cycle.

Automation improves this pattern incrementally. Many WFM platforms support scheduled report exports to network drives or SFTP locations. A notebook can be configured to read from those locations automatically, reducing the manual step to ensuring the scheduled export is running.
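A minimal sketch of the "read from the export location" step: pick the newest file matching a naming pattern in the shared folder. The folder, the interval_export_*.csv pattern, and the choice to pick by modification time (rather than a date embedded in the filename) are assumptions to adapt to whatever the scheduled export actually writes. The demo uses a temporary folder so it runs anywhere.

```python
import os
import tempfile
from pathlib import Path

def latest_export(folder, pattern="interval_export_*.csv"):
    """Return the most recently modified file matching pattern, or None."""
    candidates = sorted(Path(folder).glob(pattern), key=lambda p: p.stat().st_mtime)
    return candidates[-1] if candidates else None

# Demo: a throwaway folder standing in for the network drive or SFTP drop.
with tempfile.TemporaryDirectory() as tmp:
    for name, mtime in [("interval_export_0512.csv", 1_000),
                        ("interval_export_0513.csv", 2_000),
                        ("notes.txt", 3_000)]:
        path = Path(tmp, name)
        path.write_text("queue,interval_start,offered\n")
        os.utime(path, (mtime, mtime))  # set access and modification times
    newest = latest_export(tmp)
    print(newest.name)  # interval_export_0513.csv
```

The returned path can then be passed straight to pandas.read_csv in the notebook's data load section.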

Database Connections

For organizations with WFM data warehouses or data lakes, notebooks connect directly to databases using Python database connectors:

- SQL databases (SQL Server, PostgreSQL, MySQL) — via SQLAlchemy or database-specific drivers (pyodbc, psycopg2)

- Cloud data warehouses (Snowflake, BigQuery, Redshift) — via vendor-provided Python SDKs

- Data lakes (S3, Azure Data Lake) — via cloud storage SDKs with Pandas or PySpark for processing

Direct database connections eliminate the export-then-load workflow, enabling notebooks to query current data on every execution. This pattern aligns with the data fabric architecture described in the AI Scaffolding Framework, where analytical consumers access a unified data layer rather than individual source systems.
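A minimal sketch of the query-on-every-run pattern. An in-memory SQLite database from the standard library stands in for the warehouse, and the interval_stats table and its columns are hypothetical. The point is the bound date-range parameters instead of SQL built by string concatenation; with a SQLAlchemy engine, the same kind of query can be handed to pandas.read_sql with its params argument, though placeholder syntax depends on the driver.

```python
import sqlite3

QUERY = """
    SELECT queue, interval_start, offered, handled
    FROM interval_stats
    WHERE interval_start >= ? AND interval_start < ?
    ORDER BY queue, interval_start
"""

def load_intervals(conn, start, end):
    """Pull one date range as a list of dicts using bound parameters."""
    cur = conn.execute(QUERY, (start, end))
    cols = [d[0] for d in cur.description]
    return [dict(zip(cols, row)) for row in cur.fetchall()]

# In-memory SQLite stands in for the warehouse; table and data are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE interval_stats "
             "(queue TEXT, interval_start TEXT, offered INT, handled INT)")
conn.executemany("INSERT INTO interval_stats VALUES (?, ?, ?, ?)", [
    ("billing", "2026-05-11T08:00", 120, 115),
    ("billing", "2026-05-12T08:00", 131, 128),
    ("sales", "2026-05-18T08:00", 90, 90),
])
rows = load_intervals(conn, "2026-05-11", "2026-05-18")
print(len(rows))  # 2
```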

API Integration

Modern WFM and contact center platforms increasingly expose REST APIs for data access. Python's requests library and platform-specific SDKs enable notebooks to pull data programmatically:

- Real-time queue statistics from ACD APIs

- Historical contact records from CRM APIs

- Schedule and adherence data from WFM platform APIs

- Quality scores from QM platform APIs

API integration enables the most dynamic notebook workflows—pulling live data, performing analysis, and pushing results back to operational systems—but requires API access credentials and typically involves IT collaboration to provision access.
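Most of the code in an API-backed notebook is pagination and authentication. The sketch below follows page numbers until an empty page comes back, using only the standard library. The bearer-token header, the page and pageSize parameters, the entities response key, and the example URL are all hypothetical, since vendor APIs differ; the fetch function is injectable so the logic can be exercised offline, as the demo does.

```python
import json
import urllib.parse
import urllib.request

def fetch_json(url, token):
    """GET one page; the bearer-token scheme is an assumption."""
    req = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)

def fetch_all(base_url, params, token, fetch=fetch_json):
    """Collect records page by page until the API returns an empty page."""
    records, page = [], 1
    while True:
        query = urllib.parse.urlencode({**params, "page": page, "pageSize": 100})
        body = fetch(f"{base_url}?{query}", token)
        batch = body.get("entities", [])
        if not batch:
            return records
        records.extend(batch)
        page += 1

# Offline demo: a fake fetcher serving two pages, then an empty one.
pages = {1: [{"id": 1}, {"id": 2}], 2: [{"id": 3}]}

def fake_fetch(url, token):
    query = urllib.parse.parse_qs(urllib.parse.urlsplit(url).query)
    return {"entities": pages.get(int(query["page"][0]), [])}

records = fetch_all("https://wfm.example.com/api/adherence",
                    {"date": "2026-05-13"}, "TOKEN", fetch=fake_fetch)
print(len(records))  # 3
```

Keeping credentials out of the notebook (for example, reading the token from an environment variable) matters here, because notebooks are routinely shared and committed.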

Best Practices

Notebooks offer flexibility that, without discipline, produces analytical chaos. The following practices distinguish notebooks that remain useful from those that become disposable.

Version Control

Jupyter notebooks are JSON files that diff poorly in standard version control tools (Git). Several approaches address this:

- nbstripout — A Git filter that strips output cells before committing, reducing diff noise and file size. Analysts see clean diffs of code and Markdown changes.

- Jupytext — Pairs each notebook with a plain-text representation (.py or .md file) that diffs cleanly. The notebook and text file stay synchronized automatically.

- Review discipline — Regardless of tooling, notebooks should be committed in a "clean" state: kernel restarted, all cells run sequentially, outputs reflecting the current code.
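To show what output stripping does to the file, here is a simplified sketch of the idea behind nbstripout, using only the json module: code cell outputs and execution counts are cleared and everything else is left alone. It is an illustration rather than a replacement, since the real tool also handles selected metadata and installs itself as a Git filter. The sample notebook is made up.

```python
import json

def strip_outputs(nb_json: str) -> str:
    """Clear outputs and execution counts from every code cell."""
    nb = json.loads(nb_json)
    for cell in nb.get("cells", []):
        if cell.get("cell_type") == "code":
            cell["outputs"] = []
            cell["execution_count"] = None
    return json.dumps(nb, indent=1, ensure_ascii=False) + "\n"

raw = json.dumps({"nbformat": 4, "nbformat_minor": 4, "metadata": {}, "cells": [
    {"cell_type": "code", "metadata": {}, "execution_count": 7,
     "source": ["df.describe()"],
     "outputs": [{"output_type": "stream", "name": "stdout", "text": ["..."]}]},
    {"cell_type": "markdown", "metadata": {}, "source": ["## Notes"]},
]})
clean = json.loads(strip_outputs(raw))
print(clean["cells"][0]["outputs"], clean["cells"][0]["execution_count"])  # [] None
```

After stripping, a diff shows only changes to code and Markdown, which is what reviewers of a recurring WFM analysis usually care about.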

Version control is especially important for recurring WFM analyses (weekly forecast accuracy, monthly capacity reviews) where tracking methodology changes over time has direct operational value.

Naming Conventions

Notebook filenames should encode enough context to be useful without opening the file:

- Date prefix for point-in-time analyses: 2026-05-13_forecast_accuracy_Q2.ipynb

- Descriptive names for template notebooks: interval_analysis_template.ipynb

- Version suffixes when iterating: capacity_scenario_v3.ipynb

Avoid generic names (analysis.ipynb, Untitled.ipynb, Copy of Copy of forecast.ipynb) that proliferate in unmanaged notebook environments.

Parameterized Notebooks

Parameterized notebooks separate configuration from logic. Tools like Papermill allow notebooks to accept parameters at execution time—a single forecast accuracy notebook can be run for any queue, any date range, and any metric threshold by changing parameter values rather than editing code cells.[5]

This pattern enables:

- Batch execution — Run the same analysis across 50 queues overnight, producing 50 output notebooks

- Scheduled reporting — A cron job or orchestration tool runs parameterized notebooks on a schedule, replacing manual report generation

- Scenario modeling — Define parameter sets for different scenarios, execute all, and compare results

Parameterized notebooks bridge the gap between ad-hoc analysis and automated reporting, connecting to the automation patterns described in Reporting Automation and Self Service Analytics.
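A minimal sketch of the batch-execution pattern with Papermill. In the template notebook, one cell tagged "parameters" holds default values, and Papermill injects a cell after it that overrides them with the values passed to execute_notebook. The template name, parameter names, queues, and output folder are hypothetical. The papermill import sits inside run_all so the run list can be built and inspected without Papermill installed.

```python
from pathlib import Path

# Template's "parameters" cell (hypothetical defaults):
#   queue = "billing"; start_date = "2026-05-04"; end_date = "2026-05-10"

def build_runs(template, queues, start_date, end_date, out_dir="runs"):
    """One (output_path, parameters) pair per queue."""
    stem = Path(template).stem
    return [
        (str(Path(out_dir) / f"{stem}_{q}_{start_date}.ipynb"),
         {"queue": q, "start_date": start_date, "end_date": end_date})
        for q in queues
    ]

def run_all(template, runs):
    """Execute the template once per parameter set."""
    import papermill as pm  # imported here so build_runs works without it
    for output_path, params in runs:
        Path(output_path).parent.mkdir(parents=True, exist_ok=True)
        pm.execute_notebook(template, output_path, parameters=params)

runs = build_runs("forecast_accuracy_template.ipynb",
                  ["billing", "sales", "support"], "2026-05-04", "2026-05-10")
for path, params in runs:
    print(path, params["queue"])
```

Each executed output notebook keeps its own injected parameters, so every report records exactly which queue and date range it was run for.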

Cell Discipline

Effective notebooks follow cell-level practices:

- Each code cell does one thing and is independently understandable

- Markdown cells precede complex code cells, explaining what follows and why

- Long utility functions are defined in external .py modules and imported, keeping notebook cells focused on analytical logic

- Output cells are kept clean—suppress intermediate outputs that add noise without insight

Environment Management

Python dependency conflicts are a common source of notebook failures. Best practices:

- Use virtual environments (venv, conda) to isolate notebook dependencies from system Python

- Maintain a requirements.txt or environment.yml that documents exact package versions

- Include environment verification in the notebook's setup section (checking library versions against expected values)
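The environment verification step can be sketched with the standard library's importlib.metadata. The pinned versions below are example values that would normally mirror the team's requirements.txt, and the check uses exact string matches, matching == pins. Problems are reported as warnings rather than errors so the notebook still opens and explains itself.

```python
import warnings
from importlib.metadata import PackageNotFoundError, version

# Example pins; in practice these mirror requirements.txt.
EXPECTED = {"pandas": "2.2.2", "matplotlib": "3.9.0"}

def check_environment(expected):
    """Return {package: problem} for each mismatch; empty means all good."""
    problems = {}
    for pkg, want in expected.items():
        try:
            have = version(pkg)
        except PackageNotFoundError:
            problems[pkg] = "not installed"
            continue
        if have != want:
            problems[pkg] = f"expected {want}, found {have}"
    return problems

for pkg, problem in check_environment(EXPECTED).items():
    warnings.warn(f"{pkg}: {problem}")
```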

Limitations

Notebooks excel at interactive analysis, documentation, and exploration. They are not appropriate for all analytical workloads.

Not for Production Pipelines

Notebooks are poor candidates for production data pipelines. Their stateful execution model, implicit cell ordering dependencies, and lack of robust error handling make them fragile in unattended execution. Analyses that need to run reliably on a schedule without human oversight should be refactored into Python scripts or integrated into orchestration frameworks (Airflow, Prefect, Dagster). The Model Evaluation and Validation process, for instance, requires the kind of deterministic, testable execution that scripts provide more reliably than notebooks.

Kleppmann's observation in Designing Data-Intensive Applications applies directly: interactive tools and batch processing systems serve fundamentally different reliability requirements, and using one where the other is needed creates systemic fragility.[6]

Hidden State Bugs

The ability to run cells out of order creates a class of bugs unique to notebooks: the analysis produces correct results in the analyst's session (where cells were run in a particular order with particular intermediate modifications) but fails or produces different results when run top-to-bottom by a colleague. The mitigation is disciplined use of "Restart & Run All" before sharing or committing any notebook.
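A lightweight pre-commit check can catch notebooks that were not saved from a clean run. After Restart & Run All, non-empty code cells that ran are numbered 1, 2, 3, and so on in document order, so gaps, repeats, or backward steps in the saved execution counts suggest hidden state. The sketch below reads the .ipynb JSON directly; the make_notebook helper is a hypothetical demo fixture, and stripped notebooks (all counts empty) pass.

```python
import json

def _source(cell):
    src = cell.get("source", "")
    return "".join(src) if isinstance(src, list) else src

def execution_order_problems(nb_json: str):
    """List signs that saved outputs did not come from one top-to-bottom run."""
    nb = json.loads(nb_json)
    counts = [c.get("execution_count") for c in nb["cells"]
              if c["cell_type"] == "code" and _source(c).strip()]
    ran = [n for n in counts if n is not None]
    problems = []
    if ran and any(n is None for n in counts):
        problems.append("some code cells were never executed")
    if ran != list(range(1, len(ran) + 1)):
        problems.append(f"execution counts {ran} are not 1..{len(ran)} in order")
    return problems

def make_notebook(counts):
    """Demo fixture: one code cell per execution count."""
    return json.dumps({"cells": [
        {"cell_type": "code", "metadata": {}, "outputs": [],
         "source": f"x = {i}", "execution_count": n}
        for i, n in enumerate(counts)]})

print(execution_order_problems(make_notebook([1, 2, 3])))  # []
print(execution_order_problems(make_notebook([4, 2, 7])))
```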

Merge Conflicts

The JSON format of .ipynb files makes Git merge conflicts extremely difficult to resolve manually. Teams with multiple analysts editing the same notebook simultaneously need clear workflow conventions—either using Jupytext for text-based diffs or adopting a "one analyst per notebook at a time" discipline.

Scaling Limits

Notebooks execute in a single Python kernel, meaning computation is limited to the resources of a single machine. For WFM analyses involving very large datasets (multi-year, multi-site interval data at 15-minute granularity), notebooks may require integration with distributed computing frameworks (Dask, PySpark) or pre-aggregation strategies that reduce data volume before notebook-level analysis.
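One simple pre-aggregation strategy is to stream the raw interval file and keep only daily totals per queue in memory, so the notebook works with a far smaller table. The sketch uses csv.DictReader, which reads rows lazily; the column names are hypothetical, and pandas.read_csv with its chunksize argument offers a similar chunked approach.

```python
import csv
import io
from collections import defaultdict

def daily_totals(lines):
    """Stream interval rows; keep only per-(queue, day) totals in memory."""
    totals = defaultdict(lambda: {"offered": 0, "handle_sec": 0})
    for row in csv.DictReader(lines):
        key = (row["queue"], row["interval_start"][:10])  # YYYY-MM-DD prefix
        totals[key]["offered"] += int(row["offered"])
        totals[key]["handle_sec"] += int(row["handle_sec"])
    return dict(totals)

# Hypothetical 15-minute rows; a real file would be opened with newline="".
sample = io.StringIO(
    "queue,interval_start,offered,handle_sec\n"
    "billing,2026-05-11T08:00,120,30000\n"
    "billing,2026-05-11T08:15,110,27500\n"
    "billing,2026-05-12T08:00,95,24000\n"
)
agg = daily_totals(sample)
print(agg[("billing", "2026-05-11")])  # {'offered': 230, 'handle_sec': 57500}
```

Memory use then grows with the number of queue-days rather than the number of intervals, which is often enough to keep multi-year analyses on a single machine.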

Notebooks in the WFM Technology Landscape

Jupyter notebooks occupy a specific position in the WFM analytical stack. They sit between spreadsheets (fully interactive, no reproducibility) and production pipelines (fully automated, no interactivity). This positioning makes them the natural tool for:

- Exploratory analysis — Investigating a data question before deciding whether to formalize the analysis

- Methodology development — Prototyping a new forecasting approach before implementing it in the WFM platform

- Analytical communication — Sharing detailed analytical work with stakeholders who need to see methodology alongside results

- Training — Teaching WFM analysts new techniques with executable examples they can modify and experiment with

The AI Scaffolding Framework positions analytical tooling at Layer 3 (Analytical Engine), where notebooks serve as the development environment for capabilities that may later be promoted to production systems. A forecast model prototyped in a notebook, validated using Model Evaluation and Validation techniques, and proven effective over several manual cycles becomes a candidate for production deployment—at which point the notebook transitions from operational tool to documentation artifact.

For WFM teams beginning their Python journey (as outlined in Python for Workforce Management), notebooks provide the lowest-friction entry point: install Jupyter, open a notebook, and start exploring WFM data interactively. The immediate feedback loop—write code, see results, adjust, repeat—accelerates learning in a way that script-based development cannot match.

The connection to Capacity Planning Methods is direct: capacity scenario notebooks that model staffing under various demand assumptions are among the most immediately valuable applications, replacing spreadsheet-based models that obscure their assumptions and resist modification. Similarly, the analytical workflows that feed into Reporting and Analytics Framework deliverables often begin as notebook explorations before being formalized into recurring reports.

See Also

- Python for Workforce Management

- Pandas and Data Manipulation for WFM

- Data Visualization for WFM

- Forecasting Methods

- Reporting and Analytics Framework

- Reporting Automation and Self Service Analytics

- AI Scaffolding Framework

- Model Evaluation and Validation

- Capacity Planning Methods

References

- [1] Jupyter Notebook on GitHub. Project Jupyter. 2026-05-13.

- [2] Baker, Monya. "1,500 scientists lift the lid on reproducibility." Nature, vol. 533, 2016, pp. 452–454. doi:10.1038/533452a

- [3] JupyterHub Technical Overview. Project Jupyter. 2026-05-13.

- [4] JupyterLab Documentation. Project Jupyter. 2026-05-13.

- [5] Papermill: Parameterize, Execute, and Analyze Notebooks. nteract. 2026-05-13.

- [6] Kleppmann, Martin. Designing Data-Intensive Applications. O'Reilly Media, 2017. ISBN 978-1449373320.