Reporting Automation and Self Service Analytics

Reporting automation in workforce management (WFM) refers to the use of technical systems — scheduled scripts, orchestration pipelines, parameterized notebooks, and programmatic report generators — to produce and distribute recurring reports without requiring manual analyst effort for each cycle. Self-service analytics extends this concept to end users: operations managers, team leads, and site directors query their own data directly, without submitting requests to a centralized WFM or business intelligence (BI) team. Together, these capabilities address two persistent failure modes in WFM reporting: analyst time consumed by repetitive report production, and decision latency caused by the queue of requests waiting for analyst capacity. Both automation and self-service require a reliable data foundation; neither can substitute for it. The governing tradeoff is between accessibility (easy for non-technical users) and precision (correct metric definitions enforced consistently), a tension that has structured the evolution of workforce analytics tooling from the 1990s through the current era of cloud-native data platforms.[1]

Why Automate: The Cost of Manual Reporting

Recurring manual report production is one of the largest sinks of analyst time in WFM operations. A team that produces daily scorecards, weekly trend summaries, and monthly business review packages manually — pulling data from the WFM platform, refreshing pivot tables, formatting charts, and distributing via email — may spend 30–50% of analyst capacity on mechanical report production rather than on analysis and insight generation.

The costs of manual reporting extend beyond analyst time:

- Latency: Reports produced by a human are available only when that human completes them. Daily scorecards produced at 11 AM are less useful for morning operations decisions than the same report available at 7 AM.

- Human error: Manual copy-paste, formula reference errors, and incorrect date range selections introduce inaccuracies that undermine confidence in the reporting system. Error rates increase under time pressure.

- Inconsistency: When different analysts produce the "same" report on different days, unstated calculation differences accumulate. A monthly report produced by the analyst who is on vacation may calculate shrinkage differently than the one produced by their coverage.

- Fragility: Reports built on manual processes fail silently when source data is delayed, renamed, or reformatted. Automated pipelines with monitoring can surface these failures immediately.

Reporting automation addresses all four failure modes. The tradeoff is upfront engineering investment — building reliable pipelines requires more initial effort than building an Excel template — and ongoing maintenance as source systems change.[2]

Traditional Automation Approaches

Scheduled BI Tool Refreshes

BI platforms such as Power BI, Tableau, and Looker support scheduled data refresh and report distribution. A Power BI dataset configured to refresh from a SQL Server connection at 6 AM daily, with a report email subscription sent at 6:30 AM, is a form of automation available without custom code. For organizations already invested in Microsoft 365 licensing, Power BI scheduled refresh is often the lowest-friction first step toward automation.

Limitations: scheduled BI refreshes are limited to the data sources and transformation capabilities of the BI platform. Cross-system joins that require custom ETL logic may not be expressible as a direct BI query. Distribution is limited to the channels the BI platform supports (email, Teams in Microsoft's case). And if the underlying data source is a manually maintained Excel file, the "automation" is only partial.

SSRS and Paginated Report Subscriptions

SQL Server Reporting Services (SSRS) — Microsoft's legacy paginated reporting platform — supports subscription-based report delivery: a report renders on a schedule and is emailed to a distribution list as PDF or Excel. SSRS is widespread in organizations with SQL Server data infrastructure and remains the backbone of operational reporting in many contact center environments. Its primary limitations are limited visualization capability (designed for tabular output, not interactive dashboards) and the investment required to maintain a separate SSRS report server.

Email-Based Report Distribution

The most basic automation pattern: a script queries a database, formats output as a table or attachment, and sends it via email. This pattern predates modern BI tools and remains prevalent, particularly for stakeholders who do not use BI platforms. A Python script using pandas for data transformation, matplotlib or plotly for chart generation, and smtplib for email delivery can produce a formatted daily scorecard with ten to fifty lines of code.

Email-based distribution has a significant disadvantage: reports are static snapshots. Recipients cannot filter, drill down, or explore the underlying data. They are consumers, not analysts.

Modern and Programmatic Automation

Python Scripts on Cron

Python scripts scheduled via cron (on Linux/macOS) or Windows Task Scheduler execute report logic on a defined schedule without human intervention. A typical WFM automation script:

- Authenticates to the WFM platform or ACD API

- Queries prior-day or prior-week data

- Applies transformation logic (pandas DataFrames)

- Generates tables and charts

- Distributes via email, Slack API, or file drop to a shared location

This approach is flexible — any data source with an API or database connection is accessible — and produces reproducible results when the script is version-controlled in Git. The primary risk is orphaned scripts: automation built by individual analysts that becomes critical infrastructure but is not documented, not monitored, and fails silently when the analyst leaves the organization. Robust cron-based automation requires monitoring (email or Slack alerts on script failure), logging, and documentation equivalent to production software.

Jupyter Notebooks as Scheduled Production Reports

Jupyter notebooks that were originally built for exploratory analysis can be promoted to scheduled production reports using two tools:

- Papermill: Executes a Jupyter notebook with parameter injection (e.g., setting the report date range programmatically), producing an output notebook with all cells executed and outputs embedded. Multiple parameterized runs can be executed in sequence.

- nbconvert: Converts an executed notebook to HTML, PDF, or other formats for distribution. A pipeline combining Papermill execution and nbconvert rendering produces a formatted report from a notebook that can be emailed or hosted on an internal site.

This pattern has significant advantages over standalone Python scripts: the notebook format embeds narrative documentation alongside code and output, making the analytical logic transparent. Notebooks stored in Git provide full version history. Recipients of the rendered HTML report can see not just the output but the calculation steps that produced it, supporting auditability.

Limitations: notebooks as production reports require discipline around state management (cells should be written to run top-to-bottom without side effects) and can become unwieldy if report logic becomes complex. For very large or complex reporting pipelines, dedicated orchestration tools are more appropriate.

dbt for Data Transformation

dbt (data build tool) applies software engineering practices — version control, testing, documentation, modularity — to SQL-based data transformation. In a WFM context, dbt models define the canonical transformations that produce reporting tables: daily adherence by team, weekly service level by queue, monthly cost per contact by site. These models are stored in a Git repository, tested against known edge cases (e.g., confirm that total adherence never exceeds 100%, confirm that intervals with zero staffing are handled correctly), and documented with metric definitions.

Downstream consumers — BI dashboards, Python scripts, Jupyter notebooks — read from dbt-produced tables rather than implementing transformation logic independently. This enforces the single source of truth principle at the data layer. When a metric definition changes (e.g., how Shrinkage is calculated), the change is made once in the dbt model and propagates to all consumers on the next run.[3]

Airflow and Prefect for Pipeline Orchestration

For organizations with multiple interdependent reporting jobs — ingest from five source APIs, transform via ten dbt models, render four reports, distribute to three channels — a workflow orchestration tool manages execution order, retry logic, and failure alerting. Apache Airflow defines workflows as Python-based directed acyclic graphs (DAGs); Prefect offers a similar capability with a more modern API and managed execution environment.

WFM teams reaching the orchestration layer are typically operating at Level 4–5 maturity: they have multiple automated pipelines with real dependencies between them, and manual coordination of execution order is no longer viable. For smaller operations, cron-based scheduling of individual scripts is adequate and significantly simpler to operate.

Self-Service Analytics

The Self-Service Model

Self-service analytics gives operations leaders — managers, directors, and in some cases team leads — the ability to query workforce data themselves, without submitting requests to a WFM or BI analyst. The goal is to reduce decision latency (an operations manager can answer their own question in minutes rather than waiting days for an analyst to produce a custom report) and to free analyst capacity for higher-order analysis.

Self-service is a spectrum. At the simple end, a Power BI or Tableau dashboard with slicers and drill-down capability is self-service: a manager filters the existing report to their site and time period without analyst involvement. At the more sophisticated end, a business user writes SQL or uses a low-code query tool to construct a custom analysis against a governed data catalog.

Low-Code vs. Code-First Tradeoffs

The choice between low-code BI tools and code-first platforms reflects a fundamental tradeoff between accessibility and analytical power.

Low-code tools (Power BI, Tableau, Looker, Metabase, Mode Analytics) allow non-programmers to build reports by dragging fields, applying filters, and selecting visualization types. They lower the barrier to self-service but constrain what questions can be asked: analyses that require joins across multiple tables, statistical transformations, or iterative simulation are difficult or impossible in GUI-based tools.

Code-first tools (Python with pandas/matplotlib, SQL query interfaces, Jupyter notebooks) allow analysts to answer any question expressible in code but require programming literacy. They are not appropriate for direct use by non-technical operations managers.

Most mature WFM analytics environments use both: low-code tools for standardized self-service by operations audiences, and code-first tools for analyst-led deep dives. The key architectural requirement is that both layers draw from the same governed data source, preventing metric drift between the "manager's version" and the "analyst's version" of the same figure.

Data Literacy as a Prerequisite

Self-service analytics fails when users lack the data literacy to interpret results correctly. An operations manager who filters a service level dashboard to a single team without understanding that service level is a queue-level metric (not a team-level metric) may draw incorrect conclusions and make decisions that harm performance. A site director who interprets a rise in Occupancy as a positive sign (more productive agents) without understanding that occupancy above 85–90% is a driver of agent burnout and Adherence and Conformance failures will misuse the tool.

Data literacy investment — training users in how metrics are calculated, what drives them, and what their appropriate interpretations are — is a prerequisite for self-service deployment, not an optional follow-on. Documentation embedded in the BI tool (field descriptions, metric definitions, contextual notes on limitations) reduces the training burden but does not eliminate it. See WFM KPI Hierarchy and Reporting Cadence for the framework that determines which metrics are appropriate for which audiences.

Security and Governance

Access Control and PII Considerations

WFM data contains personally identifiable information (PII) at the agent level: names, employee IDs, performance records, attendance data, and compensation figures. Access to agent-level data must be role-controlled: team leads should access their own teams, site managers their own sites, and HR business partners the employees they support. Broad self-service access to an ungoverned data mart creates compliance risk under privacy regulations (GDPR, CCPA) and exposes sensitive employment records to unauthorized personnel.

Access control implementation in the reporting layer typically uses row-level security (RLS) in Power BI or Tableau, column-level access controls in the data warehouse, or separate data marts by audience segment. The governance model must specify: who is permitted to access what data, at what level of granularity, and for what business purpose.

Metric Governance

Self-service capability increases the risk of metric proliferation: if every user can create their own calculated fields, the organization accumulates dozens of slightly different versions of "handle time" or "adherence" with no mechanism to determine which is correct. Metric governance frameworks address this by distinguishing between:

- Certified metrics: defined in the governed semantic layer, tested, and documented. These are the official figures used in executive reporting and performance management.

- Exploratory metrics: analyst-defined, ad-hoc, not yet validated. Marked clearly as non-certified to prevent accidental promotion to decision-making use.

Tools such as Looker (LookML) and dbt (metrics layer) enforce this distinction programmatically. Without it, self-service analytics frequently produces the "spreadsheet proliferation" problem familiar from pre-BI environments, now replicated in BI tools.

Audit Trails

Automated report systems should maintain audit logs recording: when each report ran, what data was queried, what output was produced, and to whom it was distributed. Audit trails serve two purposes: debugging (when a report contains an error, the log helps identify the specific run and data state that produced it) and compliance (regulatory environments may require documentation that workforce metrics were produced from certified sources using approved calculation methods).

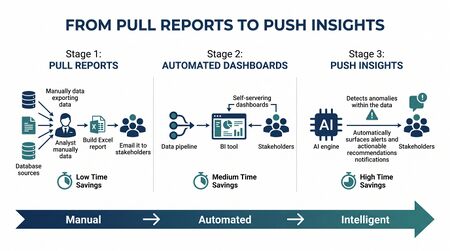

Maturity Model Considerations

At Level 3 (Defined), some report automation exists — scheduled BI refreshes and email distribution — but automation is partial and manually supplemented. Self-service is limited to filtering existing dashboards. Data literacy investment is informal.

At Level 4 (Advanced), core recurring reports are fully automated via Python scripts or notebook pipelines. Analysts are freed from mechanical report production and focus on analysis. Self-service extends to SQL query access for trained analysts. A governed metric layer (dbt or BI semantic layer) is in place. Access controls and PII governance are documented and enforced.

At Level 5 (Optimizing), the organization operates a comprehensive data platform: automated pipelines with orchestration and failure monitoring, a governed self-service environment accessible to operations managers, programmatic alerting for exception conditions, and embedded data literacy programs that enable non-technical stakeholders to use analytics safely. The WFM analytics team functions as an internal data product team. See WFM Labs Maturity Model.

Related Concepts

- Reporting and Analytics Framework

- WFM KPI Hierarchy and Reporting Cadence

- Performance Management

- Forecast Accuracy Metrics

- Adherence and Conformance

- WFM Labs Maturity Model

- Technology