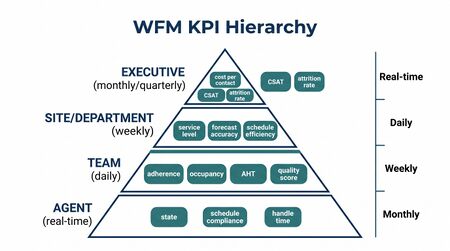

WFM KPI Hierarchy and Reporting Cadence

A WFM KPI Hierarchy is a structured taxonomy that assigns workforce management key performance indicators (KPIs) to specific organizational levels and reporting cadences, ensuring that each audience receives the metrics most relevant to the decisions they make. In contact center and workforce planning contexts, a single metric such as Occupancy may be meaningful for a team lead managing a queue in real time but misleading or irrelevant when presented to a chief financial officer reviewing quarterly labor efficiency. The hierarchy principle holds that metric selection, aggregation level, and reporting frequency should match the decision authority and planning horizon of the consumer.[1] Alignment between KPI hierarchy and reporting cadence — the rhythm at which reports are produced and reviewed — ensures that data reaches decision-makers at the moment it can most influence action, rather than retrospectively or prematurely. This page describes the standard hierarchy from agent-level metrics through executive summaries, the appropriate cadences for each layer, and the governance challenges that arise when multiple systems report the same metric differently.

The KPI Hierarchy: Levels and Appropriate Metrics

KPIs in WFM operate across five organizational levels, each characterized by distinct planning horizons, decision types, and metric needs. Displaying the wrong metric at the wrong level produces either information overload (operational detail obscuring strategic signal) or dangerous oversimplification (strategic averages hiding operational problems).

Agent Level

Agent-level metrics are the most granular and time-sensitive. They support self-management, coaching conversations, and real-time supervisor intervention. Key metrics at this level include:

- Adherence and Conformance — Is the agent logged in and in the correct state at the scheduled time?

- Average Handle Time — Is the agent's handle time within the expected range for the queue and interaction type?

- After-Call Work (ACW) — Is after-call wrap-up time within the expected range for the contact type?

- Contacts Handled — Productivity count for the interval or day

- Quality Score — Where quality monitoring is conducted (see Quality Management)

- First Contact Resolution — Where measurable at the agent level

Agent-level metrics are appropriate for team leads, coaches, and WFM real-time analysts. They are not appropriate as executive metrics — averages at the enterprise level mask agent-level variance and provide no actionable information to leaders who cannot intervene at the agent level.
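The masking effect described above can be illustrated with a small Python sketch. It assumes adherence is measured as minutes spent in the scheduled state divided by scheduled minutes, which is one common definition (platforms differ). The agent names, minute counts, and the 90% coaching threshold are hypothetical.

```python
def adherence(in_state_minutes: float, scheduled_minutes: float) -> float:
    """Share of scheduled minutes the agent spent in the scheduled state."""
    if scheduled_minutes <= 0:
        raise ValueError("scheduled_minutes must be positive")
    return in_state_minutes / scheduled_minutes


# Hypothetical agents: (minutes in scheduled state, scheduled minutes)
agents = {
    "A": (456, 480),
    "B": (470, 480),
    "C": (360, 480),
    "D": (475, 480),
}

rates = {name: adherence(*mins) for name, mins in agents.items()}

# Pooled rate, as an enterprise-level rollup would report it
pooled = adherence(
    sum(in_state for in_state, _ in agents.values()),
    sum(scheduled for _, scheduled in agents.values()),
)

# Agents a team lead would act on (hypothetical 90% coaching threshold)
flagged = [name for name, rate in rates.items() if rate < 0.90]

print(f"pooled adherence: {pooled:.1%}")  # pooled adherence: 91.7%
print("below threshold:", flagged)        # below threshold: ['C']
```

The pooled figure looks healthy while agent C sits at 75%. Only the agent-level view makes that gap actionable.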

Team Level

Team-level aggregates support first-line manager decisions about coaching priorities, schedule management, and intraday escalation. Metrics appropriate at this level include:

- Team average adherence rate

- Team average handle time versus queue target

- Team Occupancy (share of the team's staffed time spent handling contacts, including after-call work)

- Team service level contribution (for queue-specific teams)

- Team quality score average and distribution

- Absenteeism rate and unplanned Shrinkage

Team-level reporting cadence is typically real-time (supervisor wallboards) plus daily scorecards and weekly summaries.
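Team occupancy is usually computed from summed times rather than by averaging each agent's percentage, so that each agent is weighted by how long they were staffed. A minimal sketch, assuming occupancy = handle time / (handle time + available idle time); the agent labels and minute figures are hypothetical.

```python
# Hypothetical per-agent minutes for one day: (handle time incl. ACW, available idle time)
team = {
    "full_time_agent": (400, 20),
    "part_time_agent": (100, 100),
}


def occupancy(handle_min: float, idle_min: float) -> float:
    """Share of staffed (handle + idle) time spent handling contacts."""
    return handle_min / (handle_min + idle_min)


# Time-weighted team occupancy: sum the components first, then divide
total_handle = sum(h for h, _ in team.values())
total_idle = sum(i for _, i in team.values())
team_occupancy = occupancy(total_handle, total_idle)

# Unweighted mean of agent percentages, which over-weights the part-time agent
naive_mean = sum(occupancy(h, i) for h, i in team.values()) / len(team)

print(f"time-weighted: {team_occupancy:.1%}, naive mean: {naive_mean:.1%}")
```

With these figures the time-weighted value is about 80.6% while the naive mean is about 72.6%, so the aggregation rule should be part of the metric definition.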

Site Level

Site-level metrics aggregate across teams and queues to give site directors and operations managers a view of overall site performance and capacity. Key site-level metrics include:

- Service Level by queue and aggregate — the primary operational KPI for most contact center sites

- Site occupancy (handle-time workload divided by staffed agent time)

- Forecast accuracy at the site level (see Forecast Accuracy Metrics)

- Overall shrinkage versus budget

- Headcount versus plan (scheduled, available, absent)

- Cost per contact at the site level

- Net Promoter Score or Customer Satisfaction (CSAT) averages, where attributable to site

Site directors need both the "right now" view (intraday staffing) and the "this week / this month" trend view. Reporting cadence combines real-time dashboards, daily scorecards, weekly trend reports, and monthly business review packages.

Enterprise Level

Enterprise-level reporting aggregates across sites, channels, and lines of business for VP and Director audiences. Metrics at this level are necessarily higher-order:

- Enterprise service level attainment versus target (time-trended)

- Enterprise forecast accuracy trend

- Total labor cost and cost per contact trend

- Attrition rate by site and tenure cohort

- Capacity versus demand trend (are we adequately staffed for projected volume?)

- Schedule Quality Metrics — are schedules meeting coverage targets?

- Headcount efficiency (contacts per FTE, cost per resolved contact)

Enterprise-level metrics should be presented in trend form (current period versus prior period, versus plan, and versus year-ago) rather than as point-in-time snapshots. An enterprise service level of 76% in a given week is uninformative without context; a decline from 84% to 76% over four weeks, against a target of 80%, is a decision trigger.
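The trend framing above can be sketched in a few lines. This is an illustration, not a standard rule: it assumes a "decision trigger" means the metric fell in each of the last four weekly periods and now sits below target. The `TrendView` class, `is_decision_trigger` function, weekly values, and baselines are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class TrendView:
    """Current-period value with the comparison baselines an enterprise report shows."""
    current: float
    prior: float
    plan: float
    year_ago: float

    def variances(self) -> dict[str, float]:
        return {
            "vs_prior": self.current - self.prior,
            "vs_plan": self.current - self.plan,
            "vs_year_ago": self.current - self.year_ago,
        }


def is_decision_trigger(history: list[float], target: float, weeks: int = 4) -> bool:
    """True if the metric declined in each of the last `weeks` periods and is now below target."""
    recent = history[-(weeks + 1):]  # weeks + 1 points give `weeks` period-over-period changes
    if len(recent) < weeks + 1:
        return False
    declining = all(later < earlier for earlier, later in zip(recent, recent[1:]))
    return declining and recent[-1] < target


weekly_sl = [0.84, 0.82, 0.80, 0.78, 0.76]  # hypothetical weekly service level
view = TrendView(current=0.76, prior=0.78, plan=0.80, year_ago=0.81)
print({k: round(v, 2) for k, v in view.variances().items()})
print(is_decision_trigger(weekly_sl, target=0.80))  # True
print(is_decision_trigger([0.76], target=0.80))     # False: a single snapshot carries no trend
```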

Executive Level

C-suite and board-level workforce reporting is financial and strategic, not operational. The questions at this level are about investment, risk, and competitive position, not queue management. Appropriate executive metrics include:

- Total workforce cost as a percentage of revenue

- Labor efficiency trend (contacts or resolutions per dollar of labor cost)

- Workforce cost forecast versus budget (with variance explanation)

- Attrition cost and retention program ROI

- Technology investment payback (automation, AI-assisted contacts)

- Compliance and risk indicators (regulatory staffing requirements, overtime exposure)

Showing Occupancy or Adherence and Conformance percentages to a CEO is a framework failure. These are operational levers for WFM practitioners; they carry no decision-actionable meaning at the executive level and often invite uninformed micromanagement. The executive view of workforce performance is expressed through cost, output, risk, and trend — not through operational KPI tables.[2]
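Most of the executive metrics listed above reduce to simple ratios over financial and volume totals. A minimal sketch follows. The input names and figures are hypothetical, and which cost lines count as "workforce cost" is a finance decision the code does not make.

```python
from __future__ import annotations


def executive_summary(labor_cost: float, revenue: float, contacts: int,
                      budget_cost: float) -> dict[str, float]:
    """Cost, output, and budget-variance ratios for an executive workforce view."""
    return {
        "cost_pct_of_revenue": labor_cost / revenue,
        "contacts_per_1k_labor_cost": contacts / (labor_cost / 1_000),
        "budget_variance": labor_cost - budget_cost,
        "budget_variance_pct": (labor_cost - budget_cost) / budget_cost,
    }


# Hypothetical quarter
summary = executive_summary(labor_cost=4_200_000, revenue=60_000_000,
                            contacts=1_050_000, budget_cost=4_000_000)
for name, value in summary.items():
    print(f"{name}: {value:,.3f}")
```

In an executive package these values would be trended quarter over quarter, and the budget variance is the figure that needs a narrative explanation.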

Reporting Cadence: Aligning Data to Decision Cycles

Cadence — the rhythm at which reports are produced and reviewed — must align with the decision cycle of the audience. Producing a strategic report daily does not make it more strategic; it produces noise. Producing an intraday dashboard weekly makes it useless for the purpose it is designed to serve.

Real-Time (Continuous / Sub-Minute to 15-Minute Interval)

Real-time data feeds wallboards, supervisor desktop views, and automated alerting systems. The audience is intraday WFM analysts, supervisors, and team leads making immediate staffing decisions: moving agents between queues, approving or denying break requests, escalating to management when service level is at risk.

Key real-time metrics: current queue depth, current service level (rolling 15 or 30 minutes), agents available, agents in ACW, agents in non-productive states, estimated wait time.

Real-time reporting is not a venue for trend analysis. Data at this cadence is inherently noisy — a single spike in volume or a handful of agents taking simultaneous breaks can temporarily distort every metric. Decision-makers who rely on real-time data need training in distinguishing signal from noise and understanding that interval-level metrics are inputs to intraday adjustments, not performance evaluations.
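A rolling service level of the kind shown on wallboards can be maintained with a time-ordered queue of recent contacts. This sketch makes several assumptions: service level = contacts answered within the threshold / contacts offered, abandoned contacts count as offered but not met, and contacts are recorded in time order. The 30-minute window and 20-second threshold are illustrative defaults, not standards, and `RollingServiceLevel` is a hypothetical class.

```python
from __future__ import annotations

from collections import deque


class RollingServiceLevel:
    """Service level over a trailing time window (e.g. the last 30 minutes).

    Assumes SL = contacts answered within threshold / contacts offered, with
    abandoned contacts counted as offered but not met, and contacts recorded
    in non-decreasing time order.
    """

    def __init__(self, window_s: float = 1800.0, threshold_s: float = 20.0):
        self.window_s = window_s
        self.threshold_s = threshold_s
        self._events: deque[tuple[float, bool]] = deque()  # (time, met threshold?)

    def record(self, t_s: float, wait_s: float, answered: bool) -> None:
        """Record one offered contact at time t_s (seconds)."""
        met = answered and wait_s <= self.threshold_s
        self._events.append((t_s, met))

    def value(self, now_s: float) -> float | None:
        """Current rolling SL, or None if no contacts fall inside the window."""
        cutoff = now_s - self.window_s
        while self._events and self._events[0][0] <= cutoff:
            self._events.popleft()  # drop contacts older than the window
        if not self._events:
            return None
        return sum(met for _, met in self._events) / len(self._events)


sl = RollingServiceLevel(window_s=1800, threshold_s=20)
sl.record(t_s=0, wait_s=10, answered=True)
sl.record(t_s=120, wait_s=45, answered=True)
sl.record(t_s=240, wait_s=30, answered=False)  # abandoned
print(sl.value(now_s=300))
```

Because `value` evicts old contacts, it should be called with non-decreasing `now_s`. With only a handful of contacts in the window, each contact moves the result by tens of points, which is the noise the paragraph above warns about.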

Daily Scorecards

Daily reports close the loop on the prior day: did staffing meet demand, did service level attain target, what was adherence, what was actual versus forecast volume? Daily scorecards are produced automatically from finalized prior-day data and distributed to site managers and WFM analysts, typically by mid-morning.

Daily cadence aligns with the scheduling cycle: if a pattern of underperformance is visible in the daily scorecard, WFM can adjust the near-term schedule or staffing plan before cumulative impact materializes. Daily scorecards that require manual assembly each morning are a process efficiency failure — see Reporting Automation and Self-Service Analytics.
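A daily scorecard is mostly a fixed set of calculations over the finalized prior day, which is what makes it a good candidate for automation. In this minimal sketch, the `DayActuals` field names, the 80% service level target, and the figures are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class DayActuals:
    """Finalized prior-day totals (hypothetical field names)."""
    offered: int
    answered_in_threshold: int
    forecast_volume: int
    scheduled_minutes: float
    adherent_minutes: float


def prior_day(today: date) -> date:
    return today - timedelta(days=1)


def daily_scorecard(day: date, a: DayActuals, sl_target: float = 0.80) -> dict:
    """Standard prior-day KPIs; sl_target is an illustrative default."""
    sl = a.answered_in_threshold / a.offered
    return {
        "date": day.isoformat(),
        "service_level": sl,
        "sl_met": sl >= sl_target,
        "volume_variance_pct": (a.offered - a.forecast_volume) / a.forecast_volume,
        "adherence": a.adherent_minutes / a.scheduled_minutes,
    }


card = daily_scorecard(
    prior_day(date.today()),
    DayActuals(offered=1200, answered_in_threshold=930, forecast_volume=1100,
               scheduled_minutes=48_000, adherent_minutes=43_200),
)
print(card)
```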

Weekly Summaries and Trend Reviews

Weekly reporting serves the primary WFM planning cycle. Most contact centers build schedules one to four weeks in advance; weekly reports close the loop on the preceding week and inform the upcoming planning cycle. Audiences are operations managers, WFM managers, and site directors.

Weekly summaries should include trend lines (4–8 weeks of history) rather than the prior week in isolation. A single week's adherence figure is meaningful only in the context of trajectory. A week-over-week decline in forecast accuracy combined with a week-over-week increase in shrinkage is a diagnostically actionable pattern; each in isolation may be within acceptable variance.
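The combined pattern described above can be checked mechanically. This sketch assumes forecast accuracy and shrinkage arrive as weekly series in chronological order. The series values are hypothetical, and `weeks` sets how many consecutive week-over-week moves are required.

```python
from __future__ import annotations


def wow_changes(series: list[float]) -> list[float]:
    """Week-over-week differences for a chronological weekly series."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


def accuracy_down_shrinkage_up(forecast_accuracy: list[float],
                               shrinkage: list[float], weeks: int = 1) -> bool:
    """True if, in each of the last `weeks` week-over-week moves,
    forecast accuracy fell while shrinkage rose."""
    fa = wow_changes(forecast_accuracy)[-weeks:]
    sh = wow_changes(shrinkage)[-weeks:]
    if len(fa) < weeks or len(sh) < weeks:
        return False
    return all(d < 0 for d in fa) and all(d > 0 for d in sh)


# Hypothetical 8-week history, the trend window a weekly summary would chart
accuracy = [0.94, 0.95, 0.94, 0.95, 0.95, 0.94, 0.93, 0.92]
shrink = [0.31, 0.30, 0.31, 0.30, 0.30, 0.31, 0.32, 0.33]
print(accuracy_down_shrinkage_up(accuracy, shrink, weeks=2))
```

Each one-point move here is small enough to sit inside normal variance. The flag comes from the two series moving together.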

Monthly Business Reviews

Monthly business reviews (MBRs) combine performance data with narrative context for director and VP audiences. They address: How did the month perform against plan? What explains significant variances? What corrective actions are underway? What does next month's outlook look like?

MBRs require both data rigor and narrative skill. A table of KPIs without explanation of root causes leaves executive audiences to draw their own (often incorrect) conclusions. The WFM team's role in the MBR is to translate operational data into business-language explanations of what happened and why.

Quarterly Strategic Reviews

Quarterly reviews span workforce cost, capacity planning, attrition, and technology investment ROI. At this cadence, operational KPIs appear only as context for strategic conclusions — not as the primary subject matter. Quarterly reviews inform budget reallocation, headcount plan adjustments, and multi-quarter program investments.

The quarterly reporting cycle aligns with most organizations' financial planning cadence, making it the appropriate venue for WFM input to budget discussions and capacity investment decisions. See WFM Goals for the connection between reporting cadence and goal-setting cycles.

The Single Source of Truth Problem

A persistent challenge in WFM reporting is metric inconsistency across systems. The WFM platform may report service level at 78.3% for a given day; the ACD reporting console shows 79.1%; the BI dashboard shows 77.8%. Each number is technically "correct" under its own calculation assumptions — differences in how abandoned calls are treated, which queue groups are included, what timestamp anchors the interval, and whether half-intervals at day boundaries are included or excluded.

This is the "single source of truth" (SSOT) problem: when multiple systems report the same-named metric differently, stakeholders lose confidence in all of them and begin manually reconciling reports instead of acting on them.[3]
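The effect of abandoned-call treatment alone can be shown by scoring one set of contacts three ways. The three variants below are illustrative conventions, not the documented definitions of any particular ACD or WFM platform:

- Abandons count against service level.
- Abandons under a short cutoff are excluded.
- All abandons are excluded.

The contact data and the 5-second cutoff are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contact:
    wait_s: float    # seconds in queue before answer or abandon
    answered: bool


def _met(contacts, threshold_s):
    """Count contacts answered within the threshold."""
    return sum(1 for c in contacts if c.answered and c.wait_s <= threshold_s)


def sl_abandons_count(contacts, threshold_s=20):
    """Variant 1: every abandoned contact counts as offered and not met."""
    return _met(contacts, threshold_s) / len(contacts)


def sl_exclude_short_abandons(contacts, threshold_s=20, short_s=5):
    """Variant 2: abandons shorter than short_s seconds are removed from offered."""
    offered = [c for c in contacts if c.answered or c.wait_s >= short_s]
    return _met(offered, threshold_s) / len(offered)


def sl_exclude_abandons(contacts, threshold_s=20):
    """Variant 3: only answered contacts are in the denominator."""
    answered = [c for c in contacts if c.answered]
    return _met(answered, threshold_s) / len(answered)


# Hypothetical interval: 8 answered contacts, 2 abandons
contacts = [
    Contact(5, True), Contact(12, True), Contact(18, True), Contact(25, True),
    Contact(40, True), Contact(8, True), Contact(15, True), Contact(22, True),
    Contact(3, False),   # short abandon
    Contact(60, False),  # long abandon
]

for fn in (sl_abandons_count, sl_exclude_short_abandons, sl_exclude_abandons):
    print(f"{fn.__name__}: {fn(contacts):.1%}")
```

The same ten contacts yield 50.0%, 55.6%, and 62.5%. Each is "correct" under its own rule, which is why the rule has to be written down.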

Solving the SSOT problem requires:

- Authoritative metric definitions — documented calculation rules specifying exactly how each metric is computed, including edge cases (how are abandoned calls counted? what qualifies as a "handled" contact?)

- A designated authoritative source for each metric (e.g., ACD is authoritative for volume; WFM platform is authoritative for adherence)

- A governed transformation layer (typically SQL transformations in a data warehouse, often managed as dbt models) that enforces the canonical definition and feeds all downstream consumers

- Explicit documentation of known discrepancies when systems cannot be reconciled, explaining why the difference exists and which number to use for which purpose

Organizations using a dbt-based semantic layer or Looker's LookML approach encode metric definitions once, in version-controlled code, and prevent downstream consumers from independently reimplementing (and diverging from) the canonical calculation.
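The same idea can be sketched outside any particular tool. Each metric is defined once, together with its authoritative source, and every consumer calls the shared definition instead of reimplementing it. This is a loose Python analogue of a dbt model or LookML measure, not the API of either tool. The metric names, source labels, and formulas are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Mapping


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    authoritative_source: str  # system of record for the inputs
    description: str           # documented calculation rule, including edge cases
    compute: Callable[[Mapping[str, float]], float]


REGISTRY: dict[str, MetricDefinition] = {}


def register(defn: MetricDefinition) -> None:
    """Add a canonical definition; refuse silent redefinition."""
    if defn.name in REGISTRY:
        raise ValueError(f"metric {defn.name!r} is already defined")
    REGISTRY[defn.name] = defn


def compute(name: str, inputs: Mapping[str, float]) -> float:
    """The single entry point consumers (BI, notebooks, alerts) are meant to use."""
    return REGISTRY[name].compute(inputs)


register(MetricDefinition(
    name="service_level",
    authoritative_source="ACD",
    description="Answered within threshold / offered; abandoned contacts count as offered.",
    compute=lambda x: x["answered_within_threshold"] / x["offered"],
))
register(MetricDefinition(
    name="adherence",
    authoritative_source="WFM platform",
    description="Minutes in scheduled state / scheduled minutes.",
    compute=lambda x: x["adherent_minutes"] / x["scheduled_minutes"],
))

print(compute("service_level", {"answered_within_threshold": 800, "offered": 1000}))
```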

Modern Approaches to Cadence Automation

Automated Jupyter Notebooks on Schedule

Jupyter notebooks parameterized with Papermill and executed on a cron schedule (or via orchestration tools such as Apache Airflow or Prefect) can produce formatted daily, weekly, and monthly reports without analyst intervention. A notebook that queries the data warehouse, computes the standard KPI table, generates charts, renders to HTML via nbconvert, and emails the result to distribution lists replaces hours of manual analyst work per reporting cycle.

This approach has the additional advantage of version control: the notebook (and therefore the report logic) lives in a Git repository, changes are tracked, and prior report runs can be reproduced from the same code. This is a significant improvement over Excel-based reporting, where formula changes are hard to see and difficult to audit or roll back.
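The scheduling side of the notebook approach can be sketched as follows. The sketch assumes a notebook named `kpi_report.ipynb` (hypothetical) with a cell tagged `parameters` that declares `cadence`, `start_date`, and `end_date`. An orchestrator (cron, Airflow, or Prefect) would call `run_report` on schedule, and `papermill.execute_notebook` injects the reporting window. The Monday-to-Sunday week and the output file naming are also assumptions.

```python
from __future__ import annotations

from datetime import date, timedelta


def reporting_window(cadence: str, run_date: date) -> tuple[date, date]:
    """Inclusive (start, end) period a scheduled report run on run_date should cover."""
    if cadence == "daily":
        day = run_date - timedelta(days=1)
        return day, day
    if cadence == "weekly":
        # Prior Monday-to-Sunday week (assumption: weeks start on Monday)
        this_monday = run_date - timedelta(days=run_date.weekday())
        start = this_monday - timedelta(days=7)
        return start, start + timedelta(days=6)
    if cadence == "monthly":
        # Prior calendar month
        last_day = run_date.replace(day=1) - timedelta(days=1)
        return last_day.replace(day=1), last_day
    raise ValueError(f"unknown cadence: {cadence!r}")


def run_report(cadence: str, run_date: date,
               notebook: str = "kpi_report.ipynb") -> str:
    """Execute the (hypothetical) report notebook for one cadence; returns output path."""
    import papermill as pm  # imported here so reporting_window works without papermill

    start, end = reporting_window(cadence, run_date)
    output = f"{cadence}_report_{end.isoformat()}.ipynb"
    pm.execute_notebook(
        notebook,
        output,
        parameters={"cadence": cadence,
                    "start_date": start.isoformat(),
                    "end_date": end.isoformat()},
    )
    return output


print(reporting_window("weekly", date(2024, 3, 6)))
```

After execution, `jupyter nbconvert --to html` can render the output notebook for distribution. The email step is omitted because it depends on local mail infrastructure.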

Programmatic Alerting

Rather than waiting for a weekly or daily report to surface a problem, programmatic alerting systems monitor metrics continuously and trigger notifications when thresholds are breached. Examples:

- Service level falls below 70% for two consecutive 30-minute intervals → Slack message to on-call WFM analyst and operations manager

- Adherence rate for a team drops more than 10 points week-over-week → automated flag in weekly scorecard with root-cause query pre-populated

- Forecast error exceeds 15% for three consecutive days → alert to WFM manager and forecast analyst

Alerting systems shift the operating model from periodic review to exception-based management: stakeholders only need to actively review reports when something is outside normal bounds, freeing attention for the issues that most need human judgment.
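The first and third example rules share a shape: a threshold breached for N consecutive periods. The sketch below evaluates rules of that shape, assuming each metric arrives as a chronological list of interval or daily values. The rule names and recipient labels are hypothetical. Delivery (for example, to Slack) is left to whatever notification integration is in use.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AlertRule:
    name: str
    threshold: float
    consecutive: int            # number of most recent periods that must all breach
    below: bool                 # True: breach when value < threshold; False: value > threshold
    recipients: tuple[str, ...]

    def breached(self, values: list[float]) -> bool:
        if len(values) < self.consecutive:
            return False
        tail = values[-self.consecutive:]
        if self.below:
            return all(v < self.threshold for v in tail)
        return all(v > self.threshold for v in tail)


RULES = [
    AlertRule("sl_below_70_two_intervals", 0.70, 2, True,
              ("oncall-wfm-analyst", "operations-manager")),
    AlertRule("forecast_error_above_15_three_days", 0.15, 3, False,
              ("wfm-manager", "forecast-analyst")),
]


def evaluate(series: dict[str, list[float]]) -> list[tuple[str, tuple[str, ...]]]:
    """Return (rule name, recipients) for every rule whose series is in breach."""
    return [(rule.name, rule.recipients)
            for rule in RULES
            if rule.breached(series.get(rule.name, []))]


fired = evaluate({
    "sl_below_70_two_intervals": [0.82, 0.68, 0.65],           # 30-minute intervals
    "forecast_error_above_15_three_days": [0.18, 0.12, 0.16],  # daily absolute % error
})
print(fired)
```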

Self-Service Analytics Platforms

Self-service analytics platforms — tools such as Mode Analytics, Metabase, or Apache Superset — allow operations leaders to run ad-hoc queries against governed data without waiting for an analyst. When combined with a well-governed semantic layer (consistent metric definitions, access controls, documented data sources), self-service capability distributes analytical capacity without sacrificing consistency. See Reporting Automation and Self-Service Analytics for a detailed treatment.

Maturity Model Considerations

At Level 2 (Developing), KPI selection is informal: whatever metrics the WFM platform reports are what get used, regardless of audience. Executive reports often include the same operational tables sent to team leads. The single source of truth problem exists but is unacknowledged — different teams cite different numbers in the same meeting.

At Level 3 (Defined), a documented KPI dictionary exists, metric ownership is assigned, and reports are differentiated by audience. Cadences are established and reliable. The SSOT problem is acknowledged and partially resolved through a designated authoritative source for major metrics.

At Level 4 (Advanced), metric definitions are enforced at the data layer (warehouse/dbt), automated reports run on schedule without manual intervention, and programmatic alerting handles exception notification. Ad-hoc requests are handled in hours rather than days.

At Level 5 (Optimizing), the KPI hierarchy is embedded in a governed semantic layer consumed by BI tools, notebooks, and alerting systems simultaneously. Self-service analytics allow non-analyst stakeholders to explore data safely. The organization's reporting cadence is aligned to decision cycles rather than to the technical convenience of report producers. See WFM Labs Maturity Model.

Related Concepts

- Reporting and Analytics Framework

- Reporting Automation and Self-Service Analytics

- Service Level

- Occupancy

- Adherence and Conformance

- Average Handle Time

- Shrinkage

- Forecast Accuracy Metrics

- Schedule Quality Metrics

- Performance Management

- Workforce Cost Modeling

- WFM Goals

- WFM Labs Maturity Model