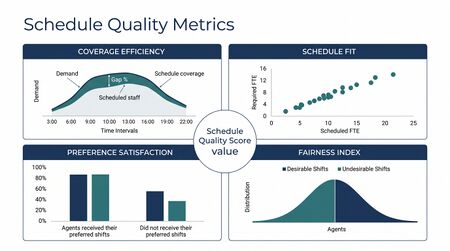

Schedule Quality Metrics

Schedule Quality Metrics are the KPIs that measure how good a published schedule actually is — as a planning artifact, before adherence is even possible. A schedule is the output of Schedule Generation; before any agent shows up, it can be evaluated against the requirement it was built for, the preferences it was supposed to honor, the cost envelope it was given, and the stability commitments the operation made to its workforce. These metrics are the practitioner's answer to the question, "is the schedule we just published actually good?"

This page is distinct from Adherence and Conformance: adherence asks how well agents executed the schedule once it was real; schedule quality asks whether the schedule was worth executing in the first place. A high-adherence operation running a low-quality schedule still misses service level — the variance was baked into the plan.

Most WFM teams report on adherence, occupancy, service level, and labor cost. They rarely report on the schedule itself. The schedule is treated as an input to operations, evaluated only by whether the day worked — a Level 2 default that hides a measurable source of waste. The Level 3 lift is to treat the schedule as a measurable artifact in its own right. Two schedules that produce the same day-of operational result can have very different quality profiles — different coverage variance, fairness, stability, and preference satisfaction.

What practitioners build

A schedule quality scorecard, published alongside every released schedule. Typical components:

- Coverage Adherence — % of intervals where staffing meets the requirement curve within tolerance (commonly ±5%)

- Coverage Variance — distribution of (scheduled FTE − required FTE) across intervals; reported as P10 / P50 / P90 to show under-staffing and over-staffing tails

- Plan-vs-Actual Delta — for the prior period, scheduled FTE compared to actual served FTE after Shrinkage; a structural gap signals a forecasting or shrinkage-modeling failure rather than a schedule failure

- Preference Satisfaction Score — % of agent preferences honored, weighted by stated intensity (a "must-have" preference counts more than a "nice-to-have")

- Fairness (Gini Coefficient on Shift Desirability) — concentration of desirable shifts (weekends off, daytime hours, preferred queues) across the workforce; lower is more equitable

- Schedule Stability — % of agent-shifts unchanged from the prior publish cycle; high volatility is a workforce-experience tax

- Commute / Weekend Equity — distribution of long-commute shifts and weekend rotations across the workforce

- Cost Efficiency — over-staffing cost as % of total scheduled labor cost (the labor that was scheduled in excess of requirement, valued at fully-loaded rate)

- Agility — median time from forecast change to schedule republish, measured in hours

The scorecard is the deliverable. Every released schedule produces a row in a long-format dataset; trend analysis at the WFM-team level becomes possible.
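As a minimal sketch of the first two scorecard components, assuming scheduled and required FTE arrive as equal-length per-interval lists, coverage adherence and the coverage variance percentiles could be computed roughly as follows. The function names, the dictionary keys, and the choice of the inclusive percentile method are illustrative assumptions, not part of any particular WFM product.

```python
from statistics import quantiles


def coverage_adherence(scheduled, required, tolerance=0.05):
    """Share of intervals where scheduled FTE is within ±tolerance of required FTE."""
    # Tolerance is relative to the requirement, so an interval with a zero
    # requirement only counts as a hit when nothing is scheduled.
    hits = sum(
        1 for s, r in zip(scheduled, required)
        if abs(s - r) <= tolerance * r
    )
    return hits / len(required)


def coverage_variance_percentiles(scheduled, required):
    """P10 / P50 / P90 of the per-interval gap (scheduled FTE - required FTE)."""
    gaps = [s - r for s, r in zip(scheduled, required)]
    # quantiles(n=10) returns the nine decile cut points.
    deciles = quantiles(gaps, n=10, method="inclusive")
    return {"p10": deciles[0], "p50": deciles[4], "p90": deciles[8]}


# Hypothetical four-interval example.
required = [10, 20, 20, 10]
scheduled = [10, 19.5, 22, 12]
print(coverage_adherence(scheduled, required))
print(coverage_variance_percentiles(scheduled, required))
```

A negative P10 marks the under-staffing tail and a positive P90 the over-staffing tail, which is the direction information the adherence rate alone does not carry.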

Metric architecture notes

- Coverage adherence vs variance. Adherence is binary; variance is magnitude and direction. A schedule that hits 95% of intervals but is structurally under-staffed on the misses is a different artifact than one structurally over-staffed on the misses. Report the distribution, not just the rate.

- Plan-vs-actual delta. After the day, the residual (scheduled FTE minus actually-served FTE) comes from one of three sources: forecast error, Shrinkage mis-modeling, or schedule infeasibility. Decomposing the delta by source shows which one dominates.

- Preference satisfaction. Use weighted satisfaction — Σ(preference satisfied × intensity) / Σ(intensity). Unweighted satisfaction lets a schedule look good by honoring trivial preferences while ignoring high-intensity ones. Self-bid systems produce different dynamics than manager-built; report variance across the workforce.

- Fairness Gini. Compute a desirability score per shift (preferred time, weekend off, queue preference), sum the desirability each agent earns per period, and take the Gini of those totals across the workforce. Disaggregate by tenure, location, and protected categories before publishing the headline.

- Stability. % of agent-shifts unchanged from prior publish, plus median magnitude of changed start times. Stability competes with optimality; treat the target as a published constraint, not a comfort feature.

- Cost efficiency. Over-staffing cost as % of total scheduled labor: the sum across intervals of max(scheduled FTE − required FTE, 0), valued at fully-loaded rate. Pair with the [[Multi-Objective Optimization in Contact Center|Pareto frontier]] view: the trade-off against fairness and stability must be visible.

- Agility. Median hours from a material forecast change (a shift of ±5% or more in interval volume) to schedule republish. 24-hour agility = Level 2 batch; 1-2 hours = Level 3; sub-hour = integrated Real-Time Schedule Adjustment.
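Three of the notes above reduce to short formulas. The sketch below is one possible reading, assuming preferences arrive as (satisfied, intensity) pairs, schedules as dictionaries keyed by (agent, date), and a fully-loaded rate per interval; all names and data shapes are hypothetical.

```python
def weighted_preference_satisfaction(preferences):
    """sum(satisfied * intensity) / sum(intensity) over (satisfied, intensity) pairs."""
    preferences = list(preferences)
    total_intensity = sum(intensity for _, intensity in preferences)
    if total_intensity == 0:
        return 0.0
    honored = sum(intensity for satisfied, intensity in preferences if satisfied)
    return honored / total_intensity


def schedule_stability(prior, current):
    """Share of prior agent-shifts that appear unchanged in the new publish.

    prior / current: dicts mapping (agent_id, date) to a shift label.
    """
    if not prior:
        return 1.0
    unchanged = sum(1 for key, shift in prior.items() if current.get(key) == shift)
    return unchanged / len(prior)


def overstaffing_cost_share(scheduled, required, loaded_rates):
    """Cost of FTE scheduled above requirement as a share of total scheduled cost."""
    excess_cost = sum(
        max(s - r, 0) * rate
        for s, r, rate in zip(scheduled, required, loaded_rates)
    )
    total_cost = sum(s * rate for s, rate in zip(scheduled, loaded_rates))
    return excess_cost / total_cost if total_cost else 0.0


# Hypothetical data: one must-have (intensity 3) missed, two nice-to-haves honored.
prefs = [(False, 3), (True, 1), (True, 1)]
print(weighted_preference_satisfaction(prefs))  # 2 of 5 intensity points honored
```

In the example, an unweighted count would report two of three preferences honored, while the weighted score reflects that the single high-intensity preference was missed.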

In the Variance Harvesting frame, schedule variance is not noise to be smoothed but signal to be diagnosed. Coverage variance distributions tell you where the forecast is consistently wrong, which intervals chronically under-staff, which day-of-week patterns the optimizer misses. The scorecard becomes the operational front-end on a variance program — every publish cycle generates new evidence about where the planning stack is fragile.
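One way to surface chronically under-staffed intervals is to pool coverage gaps across publish cycles and flag the slots whose mean gap stays negative. This is a sketch under stated assumptions: the row layout, the `min_cycles` and `threshold` parameters, and their default values are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def chronic_understaffing(gap_rows, min_cycles=3, threshold=-0.5):
    """Flag (day_of_week, interval) slots that are under-staffed on average.

    gap_rows: iterable of (publish_id, day_of_week, interval, gap_fte),
    where gap_fte = scheduled FTE - required FTE.
    Returns {(day_of_week, interval): mean gap} for slots observed in at
    least min_cycles rows whose mean gap is at or below threshold.
    """
    by_slot = defaultdict(list)
    for _publish_id, day, interval, gap in gap_rows:
        by_slot[(day, interval)].append(gap)
    return {
        slot: mean(gaps)
        for slot, gaps in by_slot.items()
        if len(gaps) >= min_cycles and mean(gaps) <= threshold
    }


# Hypothetical gaps from three publish cycles.
rows = [
    (1, "Mon", "09:00", -2.0), (2, "Mon", "09:00", -1.0), (3, "Mon", "09:00", -3.0),
    (1, "Mon", "09:30", 0.5), (2, "Mon", "09:30", -0.5), (3, "Mon", "09:30", 1.0),
    (1, "Tue", "09:00", -4.0),  # only one observation, so not yet "chronic"
]
print(chronic_understaffing(rows))
```

Requiring a minimum number of cycles keeps a single bad day from being read as a structural pattern.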

Practitioner playbook

- Define the metric set. Coverage adherence, coverage variance distribution, plan-vs-actual delta, preference satisfaction, fairness Gini, stability, cost efficiency, agility. Eight numbers, every publish.

- Establish baselines. Run the metric set against four to eight prior schedules to establish current-state distributions. The mean is the baseline; the variance is the noise floor.

- Set targets, not point goals. Targets are distributional. "Fairness Gini below 0.25" beats "fairness above 80%." Specify the metric, the target, and the tolerance.

- Publish the scorecard. Every released schedule. Share with operations, HR, and the workforce. The published scorecard is what makes schedule quality a managed artifact.

- Diagnose tails. When the variance tails widen, treat it as a variance signal — investigate the forecast, the shrinkage model, the preference architecture, the optimizer settings.

- Iterate the optimizer. Tune the optimization weights based on the scorecard signal. The optimizer is a knob, not an oracle.

- Tie to compensation. Senior WFM teams tie at least one schedule quality metric (commonly fairness or stability) to manager performance. Without that tie, the scorecard is a dashboard; with it, the scorecard is governance.
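The baseline and target steps can be sketched as follows. This assumes one metric's values from a handful of prior schedules; using the sample standard deviation as the noise floor is an assumption of this sketch, and the helper names are hypothetical.

```python
from statistics import mean, stdev


def baseline(history):
    """Baseline and noise floor for one metric across prior schedules."""
    # Sample standard deviation stands in for the noise floor here (an
    # assumption); it needs at least two prior schedules.
    return {"baseline": mean(history), "noise_floor": stdev(history)}


def meets_target(value, target, tolerance, lower_is_better=True):
    """Check a published value against a target with an explicit tolerance."""
    if lower_is_better:
        return value <= target + tolerance
    return value >= target - tolerance


def is_signal(value, stats):
    """True when a new value sits outside the baseline's noise floor."""
    return abs(value - stats["baseline"]) > stats["noise_floor"]


# Hypothetical fairness Gini values from four prior schedules.
stats = baseline([0.22, 0.26, 0.24, 0.28])
print(stats)
print(meets_target(0.26, target=0.25, tolerance=0.02))
print(is_signal(0.31, stats))
```

Keeping the tolerance explicit separates "missed the target" from "moved within normal publish-to-publish noise".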

Common failure modes

- Reporting only coverage adherence, not variance. Coverage adherence is binary; the variance is the diagnosis. A 95% adherence rate masks whether the misses are a thin tail or a structural gap.

- Conflating schedule quality with operational outcome. The day's service level is the joint result of schedule quality, adherence, and event-day variance. Attributing all three to "the schedule" produces wrong corrective action.

- Treating fairness as a single aggregate. A 0.20 Gini at the workforce level can hide a 0.45 Gini within a tenure cohort. Disaggregate before you publish.

- Optimizing one metric at the expense of others. A schedule that maximizes preference satisfaction at the cost of fairness is not better, only differently bad. The metric set must be reported jointly so the trade-off is visible.

- Ignoring stability as a quality dimension. A schedule that re-shuffles 40% of agent-shifts every week may have great coverage variance numbers and a workforce that quits. Stability is a quality metric, not a comfort feature.

- Forgetting plan-vs-actual. If the residual is large and chronic, the problem is upstream of the schedule. Schedule quality metrics that don't include the delta let upstream failures masquerade as scheduling failures.
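The aggregate-fairness failure mode is easy to reproduce. The sketch below uses the standard rank-weighted Gini formula and assumes per-agent desirability totals plus a cohort label for each agent; the function names and the sample data are hypothetical.

```python
from collections import defaultdict


def gini(values):
    """Gini coefficient of non-negative values; 0 means perfectly equal."""
    xs = sorted(values)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # Rank-weighted form: G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n
    ranked = sum(i * x for i, x in enumerate(xs, start=1))
    return 2 * ranked / (n * total) - (n + 1) / n


def gini_by_cohort(scores, cohorts):
    """Return (workforce-level Gini, {cohort label: Gini within that cohort}).

    scores: {agent_id: total shift desirability earned in the period}
    cohorts: {agent_id: cohort label, e.g. a tenure band}
    """
    grouped = defaultdict(list)
    for agent, score in scores.items():
        grouped[cohorts[agent]].append(score)
    by_cohort = {label: gini(vals) for label, vals in grouped.items()}
    return gini(scores.values()), by_cohort


# Hypothetical workforce: seniors share evenly, one junior holds most of the
# desirable shifts in that cohort.
scores = {"s1": 10, "s2": 10, "s3": 10, "s4": 10, "j1": 1, "j2": 1, "j3": 1, "j4": 17}
cohorts = {a: ("senior" if a.startswith("s") else "junior") for a in scores}
overall, by_cohort = gini_by_cohort(scores, cohorts)
print(overall, by_cohort)
```

In this example the junior cohort's Gini is higher than the workforce-level figure, so a headline number alone would understate the concentration inside that cohort.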

Maturity Model Position

In the WFM Labs Maturity Model™, schedule quality measurement is a Level 3 lift — making explicit what Level 2 organizations leave implicit.

- Level 1 — Initial (Emerging Operations) — schedule quality is not measured; "the schedule is the schedule"; failure shows up only in operational outcomes (missed SL, attrition).

- Level 2 — Foundational (Traditional WFM Excellence) — coverage adherence is reported (commonly as a single % across the day); fairness, preference, stability are managed informally if at all; cost efficiency is implicit in labor budgeting.

- Level 3 — Progressive (Breaking the Monolith) — published schedule quality scorecard with the full metric set; variance distributions reported and diagnosed; stability and fairness are managed targets; the scorecard is governance.

- Level 4 — Advanced (The Ecosystem Emerges) — quality metrics are integrated into the [[Multi-Objective Optimization in Contact Center|multi-objective optimization]] directly as constraints or weights; the optimizer is tuned against the scorecard; schedule quality enters Workforce Cost Modeling as an input.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — schedule quality metrics are pool-aware (Three-Pool Architecture) and continuously co-optimized with probabilistic forecasts and probabilistic schedules; the scorecard becomes an automated quality-of-plan signal for the orchestration layer.

The cluster's progression is from "the schedule is invisible" (L1-L2) to "the schedule is a measured artifact" (L3) to "the schedule's measurement drives the optimizer" (L4-L5).

References

- Koole, G. Call Center Optimization. ccmath/book.pdf MG Books, 2013. Chapter 7 covers shift scheduling; the staffing-cost trade-offs underlie the cost-efficiency and coverage metrics.

- Gans, N., Koole, G., & Mandelbaum, A. "Telephone call centers: tutorial, review, and research prospects." Manufacturing & Service Operations Management 5(2), 2003. Foundational treatment of staffing variance and its operational consequences.

- Aksin, Z., Armony, M., & Mehrotra, V. "The modern call center: A multi-disciplinary perspective on operations management research." Production and Operations Management 16(6), 2007. Coverage of preference satisfaction and fairness in workforce scheduling.

- Atkinson, A. B. "On the measurement of inequality." Journal of Economic Theory 2(3), 1970. Foundational treatment of the Gini-style inequality metric, applied here to shift desirability distribution.

Tools

- WFM Variance Analysis — decomposes plan-vs-actual variance into volume, AHT, and staffing components; the diagnostic engine behind the plan-vs-actual delta.

- Staffing Gap Optimizer — the OT-vs-temp Pareto frontier; useful for the cost-efficiency component.

- Minimal Interval Variance — the floor below which coverage variance is statistical noise rather than schedule quality signal.

- MAPE vs WAPE — the metric-architecture argument that applies equally to schedule quality (use volume-weighted, not interval-equal, aggregations).

See Also

- Adherence and Conformance — downstream operational metric; complements schedule quality

- Schedule Generation — the optimization that produces what these metrics measure

- Schedule Maintenance — quality must be maintained across edits

- Variance Harvesting — variance as signal frame

- Multi-Objective Optimization in Contact Center — the metrics live on the Pareto surface

- Multi-Skill Scheduling — multi-skill quality has skill-utilization fairness as an additional axis

- Probabilistic Scheduling — distributional version of these quality metrics

- Self-Scheduling and Flexible Workforce Models — preference and fairness dynamics differ

- Real-Time Schedule Adjustment — agility metric ties here

- Workforce Cost Modeling — cost efficiency feeds workforce cost models

- Forecast Accuracy Metrics — adjacent measurement discipline

- Shrinkage — embedded in the plan-vs-actual delta

- Shift Design — the catalog whose quality the metrics ultimately reflect

- Scheduling Methods — overview