Python for Workforce Management

Python is the dominant programming language among workforce management (WFM) teams moving beyond the built-in capabilities of commercial WFM platforms. Where Excel and platform-native tools reach their limits (custom forecasting models, multi-objective schedule optimization, automated reporting pipelines, simulation-driven capacity planning), Python provides a mature, well-supported ecosystem that fits the analytical work WFM practitioners perform daily.

The adoption pattern is consistent across the industry: WFM teams that develop even basic Python capability report significant gains in analytical throughput, forecast accuracy, and operational agility. The 2023 Stack Overflow Developer Survey reported that Python was the most-wanted language across all developer categories for the seventh consecutive year, with particular dominance in data science and analytics, the domains in which WFM practitioners operate.[1] McKinsey's research on analytics-driven organizations found that teams with programmatic data analysis capabilities (Python, R, or SQL beyond basic queries) make decisions 5x faster than those relying solely on spreadsheet-based workflows.[2]

This page serves as the hub for the WFM Python practitioner tooling section. It introduces why Python matters for WFM work, maps the ecosystem of libraries to specific WFM use cases, and provides the starting path for analysts making the transition from spreadsheet-only workflows. Each major library and technique links to a dedicated deep-dive page.

Why Python for WFM

WFM analysts face a recurring question: why learn a programming language when Excel works and commercial platforms handle most tasks? The answer is not that Excel or platforms are inadequate—they are essential. The answer is that a meaningful category of WFM analytical work either cannot be done in those tools or can only be done with fragile, unmaintainable workarounds.

The Limits of Excel

Excel remains the single most important analytical tool in workforce management. Every WFM analyst knows it, every stakeholder can open its output, and for many tasks—ad hoc calculations, quick data inspection, simple charts—nothing beats it. The problems begin at scale and complexity:

- Row limits: An Excel worksheet holds at most 1,048,576 rows. A mid-size contact center generating interval-level data across multiple queues and channels can exhaust this within weeks. WFM analysts routinely truncate historical data to fit, losing the depth that produces better forecasts.

- Reproducibility: An Excel workbook with multiple tabs, VLOOKUP chains, pivot tables, and conditional formatting is effectively a program without version control, documentation, or testing. When the analyst who built it leaves, the workbook becomes a black box. Python scripts are plain text: versionable, reviewable, testable.

- Automation: Refreshing a weekly report in Excel requires a human to open the file, update data sources, adjust filters, and export. A Python script performs the same sequence unattended, on schedule, with error handling. The Reporting and Analytics Framework describes how automated pipelines replace manual refresh cycles.

- Advanced analytics: Monte Carlo simulation, machine learning, optimization solvers, and time series decomposition either do not exist in native Excel or require add-ins that introduce their own maintenance burden. These capabilities are native to Python's ecosystem.

Why Not R?

R is a capable language for statistical analysis, and some WFM teams use it effectively. Python's advantage for WFM practitioners is breadth: Python handles not only statistics and machine learning but also automation, web scraping, API integration, database access, and application development within a single language. A WFM analyst who learns Python can build a forecasting model and the automated pipeline that delivers its output to stakeholders—without switching tools. The Python ecosystem also benefits from significantly larger community support, more tutorials, and broader industry adoption outside of pure statistics, which matters for practitioners learning on their own.[3]

Why Not Just Use the WFM Platform?

Commercial WFM platforms—NICE, Verint, Calabrio, Alvaria, Assembled, Playvox—are designed for operational execution: generating forecasts, building schedules, managing real-time adherence. They excel within their designed scope. Python fills the gaps outside that scope:

- Custom forecasting models that incorporate variables the platform does not support (marketing campaign calendars, competitor pricing changes, social media sentiment)

- Cross-platform analysis that combines data from ACD, WFM, CRM, HRIS, and quality systems into a unified analytical view

- What-if simulation that explores hundreds of scenarios rather than the handful a platform's scenario planner supports

- Automated data quality monitoring that catches upstream issues before they corrupt forecasts

- Custom optimization that balances objectives the platform's scheduler does not natively support

Python does not replace WFM platforms. It extends them. The relationship between Python and commercial WFM tools is analogous to the relationship between AI capabilities and human judgment: the programmatic tool handles volume, speed, and consistency while the platform (and the human) handles operational workflow and domain-specific process.

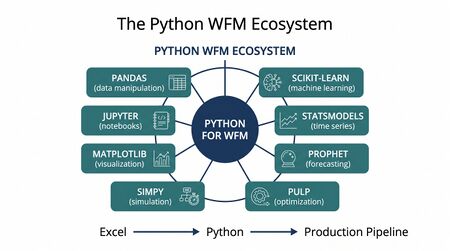

The WFM Python Ecosystem

Python's power for WFM lies not in the language itself but in its ecosystem of specialized libraries. Each library below addresses a specific WFM analytical need. Dedicated pages provide implementation detail; this section maps the landscape.

Pandas — Data Manipulation

Pandas is the foundational data manipulation library for WFM analytics. It provides the DataFrame—a tabular data structure similar to an Excel spreadsheet but capable of handling millions of rows with operations that execute in seconds rather than minutes. Every WFM Python workflow begins with Pandas: loading interval data from CSV exports, cleaning timestamps, pivoting across queues, merging forecast and actual volumes, calculating rolling averages, and reshaping data for downstream analysis. Pandas is to Python-based WFM analysis what the spreadsheet grid is to Excel—the surface on which all other work happens.
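The operations named above (merging forecast and actual volumes, pivoting across queues, rolling averages) can be sketched on a small synthetic dataset. The column names (interval_start, queue, calls, forecast_calls), the two queues, and the flat forecast of 40 are illustrative assumptions, not the layout of any particular platform export.

```python
import numpy as np
import pandas as pd

# Hypothetical 30-minute interval data for two queues over two days.
intervals = pd.date_range("2024-01-01", periods=96, freq="30min")
rng = np.random.default_rng(0)
actuals = pd.DataFrame({
    "interval_start": np.tile(intervals, 2),
    "queue": np.repeat(["Sales", "Support"], len(intervals)),
    "calls": rng.poisson(40, size=2 * len(intervals)),
})

# Assumed flat forecast, only so there is something to merge against.
forecast = actuals[["interval_start", "queue"]].copy()
forecast["forecast_calls"] = 40

# Merge forecast and actual volumes on the shared keys.
merged = actuals.merge(forecast, on=["interval_start", "queue"], how="left")

# Pivot so each queue becomes a column, then smooth with a
# 4-interval (2-hour) rolling mean.
by_queue = merged.pivot_table(index="interval_start", columns="queue",
                              values="calls", aggfunc="sum")
rolling = by_queue.rolling(window=4).mean()
print(rolling.tail())
```

The first three rows of the rolling result are empty because a 4-interval window needs four observations before it can produce a value.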

Jupyter Notebooks — Interactive Analysis

Jupyter Notebooks provide an interactive environment where WFM analysts write code, see results immediately, add narrative explanation, and share the entire workflow as a single document. For WFM teams, Jupyter occupies the space between ad hoc Excel analysis and production automation: an analyst can prototype a new forecast evaluation methodology in a notebook, share it with the team for review (code, output, and explanation together), and later extract the proven code into an automated pipeline. The notebook format also serves as living documentation—a WFM team's analytical methods are preserved in executable form rather than tribal knowledge.

Scikit-learn — Machine Learning

Scikit-learn is Python's most widely used machine learning library, providing consistent interfaces for regression, classification, clustering, and model evaluation. WFM applications include building custom volume forecasting models (Random Forest, Gradient Boosting), agent attrition prediction, contact reason classification, and anomaly detection for real-time volume spikes. Scikit-learn's strength is its uniform API: every model follows the same fit-predict-evaluate pattern, lowering the barrier for practitioners who are learning ML concepts alongside implementation. The library connects directly to the Model Evaluation and Validation practices that determine whether a model is production-ready.
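A minimal sketch of the fit-predict-evaluate pattern follows, using a Random Forest on synthetic daily volume. The features (day of week, a campaign flag), the volume formula, and the 160/40 time-ordered split are assumptions made for illustration; a real model would use the team's own history and features.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error

rng = np.random.default_rng(42)
n_days = 200
day_of_week = np.arange(n_days) % 7
campaign = rng.integers(0, 2, size=n_days)  # 1 = marketing campaign active

# Synthetic daily volume: weekday uplift, campaign uplift, random noise.
volume = (1000 + 80 * (day_of_week < 5) + 150 * campaign
          + rng.normal(0, 20, n_days))

X = np.column_stack([day_of_week, campaign])

# Time-ordered split: train on the first 160 days, evaluate on the last 40.
X_train, X_test = X[:160], X[160:]
y_train, y_test = volume[:160], volume[160:]

model = RandomForestRegressor(n_estimators=100, random_state=0)
model.fit(X_train, y_train)             # fit
predictions = model.predict(X_test)     # predict
mae = mean_absolute_error(y_test, predictions)  # evaluate
print(f"Holdout MAE: {mae:.1f} calls per day")
```

Swapping in another estimator (for example GradientBoostingRegressor from the same module) changes only the line that constructs the model, which is the practical benefit of the uniform API.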

Statsmodels — Time Series Analysis

Statsmodels provides classical statistical modeling capabilities that map directly to traditional WFM forecasting: ARIMA, exponential smoothing (Holt-Winters), seasonal decomposition, and statistical hypothesis testing. For WFM practitioners coming from platforms that use these methods internally, Statsmodels makes the underlying mathematics visible and customizable. Analysts can decompose a volume series into trend, seasonal, and residual components; fit ARIMA models with specific parameters; and run statistical tests on forecast residuals to assess model adequacy. This library bridges the gap between the Forecasting Methods WFM teams already use and the ability to customize, extend, and validate those methods programmatically.

Prophet — Automated Forecasting

Prophet, developed by Meta's Core Data Science team, is a forecasting library designed for business time series with strong seasonal patterns and holiday effects—a description that maps precisely to contact center volume data.[4] Prophet handles missing data gracefully, accommodates multiple seasonality layers (daily, weekly, annual), and allows analysts to specify known future events (holidays, marketing campaigns, product launches) as regressors. For WFM teams, Prophet offers a path to sophisticated forecasting with less statistical expertise required than manual ARIMA specification—though understanding its assumptions and limitations remains critical.

PuLP — Optimization

PuLP is a linear programming library that translates scheduling and staffing optimization problems into mathematical models solvable by industry-standard solvers. WFM applications include shift optimization (minimizing labor cost subject to service level constraints), break placement optimization, and capacity planning scenarios that balance headcount against service targets across multiple planning horizons. PuLP makes the optimization logic explicit and auditable—unlike a commercial scheduler's black-box optimizer, every constraint, objective, and trade-off is visible in the code. The approach connects to the broader Scheduling Methods that WFM teams employ.
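As a minimal sketch of how a staffing question becomes an explicit model, the example below chooses how many agents to place on each of three overlapping shifts so that every two-hour period is covered at minimum cost. The periods, requirements, shift definitions, and cost figures are all hypothetical.

```python
import pulp

# Hypothetical agents required per two-hour period.
required = {"p08": 10, "p10": 14, "p12": 12, "p14": 8}

# Hypothetical shifts and the periods each one covers.
shifts = {
    "early": ["p08", "p10"],
    "mid": ["p10", "p12"],
    "late": ["p12", "p14"],
}
cost_per_agent = {"early": 100, "mid": 100, "late": 100}

problem = pulp.LpProblem("shift_coverage", pulp.LpMinimize)

# Decision variables: whole number of agents assigned to each shift.
agents = pulp.LpVariable.dicts("agents", list(shifts), lowBound=0, cat="Integer")

# Objective: total labor cost.
problem += pulp.lpSum(cost_per_agent[s] * agents[s] for s in shifts)

# Constraints: every period must have at least the required coverage.
for period, need in required.items():
    problem += pulp.lpSum(agents[s] for s in shifts if period in shifts[s]) >= need

problem.solve(pulp.PULP_CBC_CMD(msg=False))

staffing = {s: round(agents[s].value()) for s in shifts}
print(pulp.LpStatus[problem.status], staffing, pulp.value(problem.objective))
```

Every requirement appears as a named line in the code, so a reviewer can see exactly which constraint forces a given staffing level.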

Simulation Libraries

Simulation tools in Python—including SimPy for discrete-event simulation and NumPy/SciPy for Monte Carlo methods—enable WFM teams to model operational uncertainty explicitly. Rather than planning to a single forecast number, simulation generates thousands of scenarios and reports the distribution of outcomes: "There is a 90% probability that we can meet an 80/20 service level with 47 agents, but only a 60% probability with 44 agents." This probabilistic approach to planning produces more resilient staffing decisions than deterministic single-point methods.
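The probabilistic statement quoted above can be approximated with a short Monte Carlo sketch. It assumes an Erlang C queueing model, a 30-minute interval forecast of 100 calls with normally distributed 10% error, a 300-second AHT, and an 80/20 target; these inputs are illustrative and will not reproduce the agent counts in the quoted example.

```python
import math
import numpy as np

def erlang_c_service_level(calls, aht_s, agents, target_s=20, interval_s=1800):
    """Fraction of calls answered within target_s under Erlang C assumptions."""
    traffic = calls * aht_s / interval_s  # offered load in erlangs
    if agents <= traffic:
        return 0.0  # queue is unstable; treat as a missed target
    # Erlang B via the standard recursion, then convert to Erlang C.
    b = 1.0
    for k in range(1, agents + 1):
        b = traffic * b / (k + traffic * b)
    p_wait = b / (1 - (traffic / agents) * (1 - b))
    return 1 - p_wait * math.exp(-(agents - traffic) * target_s / aht_s)

def prob_meeting_target(agents, n_sims=5000, seed=0):
    """Share of simulated volume scenarios in which service level >= 80%."""
    rng = np.random.default_rng(seed)
    # Assumed forecast: 100 calls per interval, 10% normal forecast error.
    calls = rng.normal(100, 10, n_sims).clip(min=0)
    levels = np.array([erlang_c_service_level(c, 300, agents) for c in calls])
    return float(np.mean(levels >= 0.80))

for n in (19, 21, 23):
    print(n, "agents:", prob_meeting_target(n))
```

Because each staffing level is evaluated against the same simulated volumes, differences between rows reflect staffing alone rather than sampling noise.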

Data Visualization

Data visualization libraries—Matplotlib, Seaborn, Plotly, and Altair—enable WFM teams to build custom visualizations that go beyond platform-native charting. Forecast vs. actual overlays with confidence intervals, interactive staffing heatmaps, schedule coverage gap visualizations, and executive dashboards can all be generated programmatically and refreshed automatically. Visualization is often the first Python capability that delivers visible value to stakeholders, because the output is immediately interpretable by non-technical audiences.
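A forecast vs. actual overlay can be sketched in a few lines of Matplotlib. The hourly values are synthetic, and the shaded band is an assumed plus or minus 15% of forecast, standing in for a real prediction interval from a fitted model.

```python
import tempfile
from pathlib import Path

import matplotlib
matplotlib.use("Agg")  # render without a display, e.g. in a scheduled job
import matplotlib.pyplot as plt
import numpy as np

hours = np.arange(8, 20)
forecast = np.array([40, 65, 90, 110, 120, 115, 105, 100, 95, 80, 60, 45])
rng = np.random.default_rng(3)
actual = forecast + rng.normal(0, 8, size=forecast.size)
band = 0.15 * forecast  # assumed band, not a fitted interval

fig, ax = plt.subplots(figsize=(8, 4))
ax.plot(hours, forecast, label="Forecast")
ax.plot(hours, actual, marker="o", label="Actual")
ax.fill_between(hours, forecast - band, forecast + band,
                alpha=0.2, label="Forecast ±15%")
ax.set_xlabel("Hour of day")
ax.set_ylabel("Calls")
ax.set_title("Forecast vs. actual volume")
ax.legend()

# Write the chart to a temporary folder; a real job would use a shared path.
output_path = Path(tempfile.gettempdir()) / "forecast_vs_actual.png"
fig.savefig(output_path, dpi=150)
```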

Common WFM Use Cases

Python adoption in WFM follows predictable patterns. Teams typically start with one high-pain use case, prove value, and expand. The use cases below are ordered by adoption frequency—the first is the most common entry point.

Forecast Enhancement

Forecast enhancement is the most common Python entry point for WFM teams. Commercial platforms produce baseline forecasts; Python extends them by incorporating external variables (marketing calendars, weather, competitor actions), applying ensemble methods that combine multiple models, generating prediction intervals rather than point forecasts, and automating forecast accuracy evaluation across horizons and granularities. Teams using scikit-learn or Prophet alongside their platform forecasts frequently report 10-20% accuracy improvements on specific volume streams, particularly those influenced by external factors the platform does not model.

The technical foundation for this work draws on the principles described in Forecasting Methods—the same decomposition and modeling concepts apply whether implemented in a platform or in Python. The difference is flexibility: Python allows the analyst to specify exactly which features, transformations, and evaluation criteria to use.
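Two of the techniques mentioned above, a simple ensemble and an empirical prediction interval, can be sketched with Pandas alone. The data is synthetic and deliberately constructed so that the two forecasts err in opposite directions; real forecasts will not always combine this favorably. WAPE (weighted absolute percentage error) is used here as one possible accuracy metric.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(7)
actual = 500 + 50 * np.sin(np.arange(28) * 2 * np.pi / 7) + rng.normal(0, 15, 28)

df = pd.DataFrame({
    "actual": actual,
    "platform": actual + rng.normal(20, 25, 28),   # assumed to run high
    "custom": actual + rng.normal(-20, 25, 28),    # assumed to run low
})

# Simple ensemble: equal-weight average of the two forecasts.
df["ensemble"] = df[["platform", "custom"]].mean(axis=1)

def wape(actual_values, forecast_values):
    """Sum of absolute errors divided by sum of actuals."""
    return (actual_values - forecast_values).abs().sum() / actual_values.abs().sum()

scores = {name: wape(df["actual"], df[name])
          for name in ["platform", "custom", "ensemble"]}
print(scores)

# Empirical 90% interval from the ensemble's historical residuals.
residuals = df["actual"] - df["ensemble"]
lower, upper = residuals.quantile([0.05, 0.95])
print(f"Add {lower:.1f} to {upper:.1f} calls to a point forecast for a 90% band")
```

An equal-weight average can never score worse than the weaker of its two inputs on this metric, which is one reason ensembles are a low-risk first experiment.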

Schedule Optimization

Schedule optimization is the second most common adoption path. Python-based optimization (via PuLP or similar solvers) enables WFM teams to model scheduling as a formal optimization problem with explicit objectives and constraints. Common implementations include shift-start optimization that minimizes overstaffing while respecting service level targets, break placement that accounts for meal period regulations alongside coverage needs, and multi-skill scheduling that balances agent utilization across queues.

The critical advantage over manual or platform-based scheduling is the ability to define and balance multiple objectives simultaneously—cost, service level, agent preference satisfaction, fairness—rather than optimizing for one while treating others as hard constraints.

Reporting Automation

WFM teams spend substantial time producing recurring reports: daily performance summaries, weekly forecast accuracy reviews, monthly capacity plans, and ad hoc analyses for leadership. Python automates the entire pipeline—data extraction, transformation, analysis, visualization, and distribution—reducing cycle time from hours to minutes and eliminating manual error. The Reporting and Analytics Framework describes the architectural patterns for this automation.

A typical implementation uses Pandas for data manipulation, Matplotlib or Plotly for visualization, and a scheduling library (or operating system scheduler) to trigger execution. Output formats include Excel workbooks (for stakeholders who require them), HTML dashboards, PDF reports, or direct updates to BI platforms.
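The extract-transform-distribute sequence can be sketched as two small functions. The column names, the sample rows, and the HTML output are assumptions for illustration; a scheduler such as cron would call this script on the reporting cadence.

```python
import tempfile
from pathlib import Path

import pandas as pd

def build_daily_summary(interval_df):
    """Aggregate interval rows to one row per date and queue."""
    return (
        interval_df
        .assign(date=interval_df["interval_start"].dt.date)
        .groupby(["date", "queue"], as_index=False)
        # Unweighted mean AHT for brevity; a call-weighted mean is more accurate.
        .agg(calls=("calls", "sum"), aht=("aht", "mean"))
    )

def write_html_report(summary, output_dir):
    """Write the summary table as a standalone HTML file and return its path."""
    path = Path(output_dir) / "daily_summary.html"
    path.write_text(summary.to_html(index=False), encoding="utf-8")
    return path

# Inline sample standing in for a platform export.
sample = pd.DataFrame({
    "interval_start": pd.to_datetime(
        ["2024-01-01 09:00", "2024-01-01 09:30", "2024-01-02 09:00"]),
    "queue": ["Sales", "Sales", "Sales"],
    "calls": [30, 42, 35],
    "aht": [310.0, 290.0, 300.0],
})

summary = build_daily_summary(sample)
report_path = write_html_report(summary, tempfile.mkdtemp())
print(summary)
print("Report written to", report_path)
```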

Data Quality Monitoring

Upstream data quality issues—missing intervals, duplicate records, timestamp misalignment, sudden changes in AHT or volume patterns—corrupt forecasts and schedules silently. Python-based monitoring scripts run on schedule, comparing incoming data against expected patterns and alerting analysts to anomalies before they propagate. This defensive use case is less visible than forecasting or optimization but often delivers the highest ROI per hour invested, because it prevents downstream failures rather than adding downstream capability.
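A minimal monitoring check for three of the issues listed above might look like the following. It assumes a single queue, 30-minute intervals, and a rule that flags AHT more than 50% away from the median; the column names and the threshold are hypothetical and would be tuned to the operation.

```python
import pandas as pd

def check_interval_data(df, freq="30min", aht_tolerance=0.5):
    """Return missing intervals, duplicate count, and AHT outliers."""
    issues = {}

    # Missing intervals: compare against a complete expected time grid.
    expected = pd.date_range(df["interval_start"].min(),
                             df["interval_start"].max(), freq=freq)
    present = pd.DatetimeIndex(df["interval_start"])
    issues["missing_intervals"] = expected.difference(present).tolist()

    # Duplicate records: the same interval reported more than once.
    issues["duplicate_rows"] = int(df.duplicated(subset=["interval_start"]).sum())

    # AHT shifts: values far from the median of the batch.
    deviation = (df["aht"] / df["aht"].median() - 1).abs()
    issues["aht_outliers"] = df.loc[deviation > aht_tolerance,
                                    "interval_start"].tolist()
    return issues

sample = pd.DataFrame({
    "interval_start": pd.to_datetime([
        "2024-01-01 09:00", "2024-01-01 09:30",
        "2024-01-01 10:30", "2024-01-01 10:30",  # 10:00 missing, 10:30 twice
        "2024-01-01 11:00",
    ]),
    "aht": [300, 310, 295, 295, 900],
})

issues = check_interval_data(sample)
print(issues)
```

In a scheduled job, a non-empty result would trigger an alert (email, chat message, or ticket) before the data reaches the forecasting step.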

What-If Analysis and Simulation

Simulation enables WFM teams to explore scenarios that platforms handle poorly or not at all: "What happens to service level if attrition increases 5% and we cannot backfill for 8 weeks?" "How does adding a chat channel at 20% of current voice volume affect required headcount across all skills?" "What is the probability of meeting annual service level targets given historical forecast error distributions?" These questions require Monte Carlo simulation or discrete-event modeling—capabilities native to Python's scientific computing stack and central to probabilistic planning approaches.
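The first question above (attrition rises and backfill is frozen for 8 weeks) can be sketched as a Monte Carlo headcount simulation. The starting headcount of 200, the weekly attrition rates of 1.0% and 1.5%, and the required headcount of 185 are all hypothetical inputs chosen for illustration.

```python
import numpy as np

def simulate_headcount(start_headcount, weekly_attrition, weeks,
                       n_sims=10_000, seed=0):
    """Simulate end-of-period headcount with no backfill."""
    rng = np.random.default_rng(seed)
    headcount = np.full(n_sims, start_headcount)
    for _ in range(weeks):
        # Each remaining agent leaves independently with the weekly probability.
        leavers = rng.binomial(headcount, weekly_attrition)
        headcount = headcount - leavers
    return headcount

baseline = simulate_headcount(200, 0.010, weeks=8)
stressed = simulate_headcount(200, 0.015, weeks=8)

required = 185  # hypothetical headcount needed to hold service level
p_baseline = float((baseline >= required).mean())
p_stressed = float((stressed >= required).mean())

print(f"Mean headcount after 8 weeks: {baseline.mean():.1f} vs {stressed.mean():.1f}")
print(f"P(headcount >= {required}): {p_baseline:.2f} vs {p_stressed:.2f}")
```

The output is a distribution rather than a single number, so the plan can state how likely a shortfall is instead of only whether the average case clears the bar.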

Getting Started

WFM practitioners new to Python face a practical question: where to begin, given limited time and an immediate need to deliver value in their current role. The path below is designed for working analysts, not aspiring software engineers.

Installation

The recommended approach for WFM analysts is Anaconda Distribution, which packages Python with the most common data science libraries (Pandas, NumPy, Scikit-learn, Matplotlib, Jupyter) in a single installer. Anaconda eliminates the most common frustration new users face: dependency management. Install Anaconda, and the core WFM analytical toolkit is immediately available.

For analysts working in environments with restricted software installation, Google Colab provides a browser-based Jupyter Notebook environment with all major libraries pre-installed, requiring no local installation at all.

Environments

Python virtual environments isolate project dependencies, preventing conflicts between projects that require different library versions. For WFM analysts, this means each project (forecasting model, reporting pipeline, scheduling optimizer) maintains its own library set. Anaconda manages this through its conda environment system:

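conda create -n wfm-forecast -c conda-forge python=3.11 pandas scikit-learn prophet jupyter

conda activate wfm-forecast

The first command creates an environment named wfm-forecast with the specified libraries; the -c conda-forge option points conda at the community channel where Prophet is published. The second command activates the environment and is run at the start of each work session.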

First Steps for WFM Analysts

The highest-value first project for most WFM analysts:

- Export interval data from the WFM platform or ACD (CSV or Excel format)

- Load it in a Jupyter Notebook using Pandas (after import pandas as pd): df = pd.read_csv('interval_data.csv')

- Explore the data: df.describe(), df.head(), df.info()

- Create a visualization: plot actual vs. forecast volume for the past week

- Calculate a metric: forecast accuracy by interval, day, or week

This sequence takes an experienced analyst from zero to a working notebook in under two hours, produces immediately useful output, and introduces the core tools (Jupyter, Pandas, Matplotlib) that every subsequent project builds on. Jupyter Notebooks for WFM Analysis provides a detailed walkthrough of this workflow.

From Excel to Python

The most effective way for WFM practitioners to learn Python is to map operations they already perform in Excel to their Python equivalents. The conceptual models are the same; only the syntax changes.

| Excel Operation | Python Equivalent | Notes |
|---|---|---|
| Open a workbook | df = pd.read_excel('file.xlsx') | Returns a DataFrame (Pandas' equivalent of a worksheet) |
| Filter rows | df[df['queue'] == 'Sales'] | Boolean indexing replaces AutoFilter |
| VLOOKUP / INDEX-MATCH | pd.merge(df1, df2, on='agent_id') | SQL-style joins; more reliable than VLOOKUP |
| Pivot table | df.pivot_table(values='calls', index='date', columns='queue', aggfunc='sum') | Same concept, any number of rows |
| Conditional formatting | Seaborn heatmaps or Pandas .style | Programmatic; reproducible across datasets |
| SUMIFS / COUNTIFS | df.groupby(['date', 'queue']).agg({'calls': 'sum', 'aht': 'mean'}) | GroupBy replaces multi-criteria aggregation |
| Charts | df.plot() or Matplotlib/Plotly | Static or interactive; auto-refreshed with data |
| Macros (VBA) | Python scripts | Version-controlled; portable; no workbook dependency |
| Solver add-in | PuLP or scipy.optimize | Industrial-strength solvers; explicit constraints |
| Power Query | Pandas ETL pipelines | Scriptable data transformation |

The critical mental shift: in Excel, data and analysis live together in one file. In Python, data is loaded into a script that processes it. The script is reusable across datasets; the analysis is separated from the data. This separation is what makes automation, version control, and collaboration possible.

Pandas and Data Manipulation for WFM provides comprehensive coverage of these operations with WFM-specific examples.
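Four of the equivalents from the Excel comparison (filter, lookup, pivot table, multi-criteria aggregation) can be tried on a small in-memory dataset. The data and the team lookup table are made up for illustration.

```python
import pandas as pd

calls = pd.DataFrame({
    "date": ["2024-01-01", "2024-01-01", "2024-01-02", "2024-01-02"],
    "queue": ["Sales", "Support", "Sales", "Support"],
    "agent_id": [1, 2, 1, 2],
    "calls": [30, 25, 35, 20],
    "aht": [300, 420, 280, 400],
})
agents = pd.DataFrame({"agent_id": [1, 2], "team": ["A", "B"]})

# Filter rows (AutoFilter).
sales_only = calls[calls["queue"] == "Sales"]

# Lookup (VLOOKUP / INDEX-MATCH): bring the team onto each row.
with_team = pd.merge(calls, agents, on="agent_id", how="left")

# Pivot table: dates down the side, queues across the top.
pivot = calls.pivot_table(values="calls", index="date",
                          columns="queue", aggfunc="sum")

# SUMIFS / AVERAGEIFS: aggregate by several criteria at once.
totals = calls.groupby(["date", "queue"]).agg({"calls": "sum", "aht": "mean"})

print(pivot)
print(totals)
```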

Python in the WFM Technology Stack

Python does not replace the WFM technology stack—it occupies a specific layer within it. Understanding where Python fits prevents both underuse and overreach.

The Integration Layer

In a mature WFM technology stack, Python operates as the analytical integration layer between data sources and operational systems:

- Upstream: Python reads from ACD data exports, WFM platform APIs, CRM databases, HRIS systems, and external data sources (weather APIs, marketing calendars). The data fabric concepts described in the AI Scaffolding Framework define the architectural patterns for this integration.

- Processing: Python performs analysis that no single platform provides—cross-system data quality checks, custom forecasting models, optimization across multiple planning dimensions, simulation.

- Downstream: Python writes results back to operational systems—updated forecasts loaded into the WFM platform, optimized schedules exported for implementation, automated reports distributed to stakeholders, alerts triggered to real-time management dashboards.

This positioning means Python complements rather than competes with WFM Analytics Platforms and commercial WFM tools. The platform handles operational execution (schedule generation, real-time management, agent self-service); Python handles analytical extension (custom models, automation, cross-system analysis).

API Integration

Most modern WFM and ACD platforms expose APIs that Python can interact with directly. This capability transforms Python from a batch-processing tool (working with exported files) to a real-time integration layer:

- Pulling real-time adherence data for custom dashboards

- Pushing forecast updates from custom models back into the WFM platform

- Extracting schedule data for optimization processing and importing results

- Triggering alerts based on custom-defined thresholds that the platform does not natively support

The technical patterns for this integration work are covered across the AI fundamentals and AI in WFM pages, which describe how programmatic capabilities extend platform-native intelligence.
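The shape of an API integration can be sketched without contacting a real service. Everything platform-specific here is hypothetical: the base URL, the /forecasts endpoint, the payload fields, the bearer-token scheme, and the response format are placeholders, and each vendor's API documentation defines the real ones.

```python
import json
import urllib.request

import pandas as pd

BASE_URL = "https://wfm.example.com/api/v1"  # hypothetical endpoint

def build_forecast_push(queue, forecast_rows, token):
    """Build (but do not send) a POST request carrying forecast intervals."""
    payload = json.dumps({"queue": queue, "intervals": forecast_rows}).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/forecasts",
        data=payload,
        method="POST",
        headers={"Authorization": f"Bearer {token}",
                 "Content-Type": "application/json"},
    )

def parse_adherence(response_body):
    """Turn an assumed JSON adherence response into a DataFrame."""
    records = json.loads(response_body)["agents"]
    return pd.DataFrame.from_records(records)

request = build_forecast_push(
    "Sales", [{"interval_start": "2024-01-01T09:00", "calls": 42}], token="REDACTED")
# urllib.request.urlopen(request) would send it; omitted because the
# endpoint above is fictional.

sample_body = ('{"agents": [{"agent_id": 1, "adherence": 0.93}, '
               '{"agent_id": 2, "adherence": 0.78}]}')
adherence = parse_adherence(sample_body)

# Custom threshold alert that the platform may not support natively.
below_threshold = adherence[adherence["adherence"] < 0.85]
print(below_threshold)
```

Keeping request construction and response parsing in separate functions lets both be tested without network access.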

Production Considerations

WFM teams moving from prototype notebooks to production pipelines encounter infrastructure questions that the AI Scaffolding Framework addresses systematically:

- Scheduling: Production scripts need a scheduler (cron, Apache Airflow, cloud-native schedulers) to trigger execution at defined intervals.

- Error handling: Production code requires logging, alerting, and graceful failure handling that prototype notebooks do not.

- Version control: Git repositories track changes to analytical code, enabling rollback, collaboration, and audit trails.

- Testing: Automated tests verify that data processing logic produces expected outputs, catching regressions before they affect production.

- Monitoring: Model performance monitoring ensures forecasting models maintain accuracy over time—critical for the Model Evaluation and Validation practices that separate reliable analytics from abandoned prototypes.
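The error handling and testing items above can be sketched with the standard logging module and a plain test function. The required column set and the pipeline's single aggregation step are assumptions standing in for a team's real processing logic.

```python
import logging

import pandas as pd

logging.basicConfig(level=logging.INFO,
                    format="%(asctime)s %(levelname)s %(message)s")
logger = logging.getLogger("wfm_pipeline")

REQUIRED_COLUMNS = {"interval_start", "queue", "calls"}  # assumed schema

def validate_input(df):
    """Fail fast with a clear message when the input is unusable."""
    missing = REQUIRED_COLUMNS - set(df.columns)
    if missing:
        raise ValueError(f"Missing columns: {sorted(missing)}")
    if df.empty:
        raise ValueError("Input contains no rows")
    return df

def run_pipeline(df):
    """Process one batch; log and return None instead of crashing on bad input."""
    try:
        validate_input(df)
        total = int(df["calls"].sum())
        logger.info("Processed %d rows, %d calls", len(df), total)
        return total
    except ValueError:
        logger.exception("Pipeline failed validation")
        return None

def test_validate_input_rejects_missing_columns():
    """Regression test: input without required columns must be rejected."""
    bad = pd.DataFrame({"queue": ["Sales"]})
    try:
        validate_input(bad)
    except ValueError:
        return True
    return False

good = pd.DataFrame({
    "interval_start": pd.to_datetime(["2024-01-01 09:00", "2024-01-01 09:30"]),
    "queue": ["Sales", "Sales"],
    "calls": [30, 42],
})
print(run_pipeline(good))
print(test_validate_input_rejects_missing_columns())
```

A test runner such as pytest would normally collect the test function and use assert statements; it returns a boolean here only so the sketch runs as a single script.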

Learning Path for WFM Practitioners

The learning path below is sequenced for working WFM professionals. Each stage builds on the previous one and produces usable output within the analyst's current role.

Stage 1: Foundations (Weeks 1-4)

- Python basics: Variables, data types, loops, functions, conditionals. Focus on syntax sufficient to write data processing scripts—not software engineering.

- Pandas: Loading, filtering, grouping, merging, pivoting. Map every operation to its Excel equivalent. See Pandas and Data Manipulation for WFM.

- Jupyter Notebooks: Set up the interactive environment. Build a first notebook that loads WFM data and produces a summary. See Jupyter Notebooks for WFM Analysis.

- Milestone: Reproduce a weekly report currently built in Excel. Compare effort and output.

Stage 2: Visualization and Exploration (Weeks 5-8)

- Matplotlib and Seaborn: Build standard WFM visualizations—forecast vs. actual plots, interval heatmaps, distribution histograms, service level trend charts. See Data Visualization for WFM.

- Exploratory data analysis: Apply descriptive statistics and visualization to real WFM datasets. Identify patterns the team has not previously surfaced.

- Milestone: Deliver an analysis to the WFM team that surfaces a previously unseen pattern in the data (volume correlation, AHT driver, adherence trend).

Stage 3: Forecasting and Time Series (Weeks 9-16)

- Statsmodels: Implement ARIMA, exponential smoothing, seasonal decomposition. Understand the math behind the platform's forecast engine. See Statsmodels and Time Series Analysis.

- Prophet: Build automated forecasts with holiday effects and external regressors. See Prophet and Automated Forecasting.

- Scikit-learn: Apply machine learning to volume forecasting. Feature engineering with WFM-specific variables. See Scikit-learn for WFM Forecasting.

- Milestone: Build a custom forecast model and compare its accuracy against the platform's native forecast over a 4-week holdout period.

Stage 4: Optimization and Simulation (Weeks 17-24)

- PuLP: Formulate scheduling as a linear programming problem. Implement shift optimization. See PuLP and Optimization for Scheduling.

- Simulation: Build Monte Carlo simulations for capacity planning scenarios. See Simulation Tools for WFM.

- Milestone: Deliver a capacity plan that quantifies risk using simulation rather than single-point estimates, applying probabilistic planning methods.

Stage 5: Integration and Automation (Ongoing)

- API integration: Connect Python workflows to WFM platform APIs for automated data exchange.

- Production pipelines: Move proven notebooks into scheduled, error-handled production scripts.

- Advanced ML: Deep learning for complex pattern recognition, reinforcement learning for dynamic optimization, NLP for interaction analysis.

- Milestone: Maintain at least one production Python pipeline that runs autonomously and delivers ongoing value.

This learning path aligns with the progression from deterministic to probabilistic analytical capabilities described across the wiki's forecasting, scheduling, and capacity planning pages.

See Also

- Artificial Intelligence Fundamentals — Core AI concepts underlying Python-based analytics

- Machine Learning Concepts — ML theory for WFM practitioners

- AI in Workforce Management — How AI capabilities integrate into WFM operations

- AI Scaffolding Framework — Infrastructure requirements for production AI/ML systems

- Forecasting Methods — Traditional and modern forecasting approaches

- Scheduling Methods — Schedule generation and optimization techniques

- Capacity Planning Methods — Long-range planning methodologies

- WFM Analytics Platforms — Commercial analytics tools that Python complements

- Reporting and Analytics Framework — Automated reporting architecture

- Deterministic Planning in WFM — Single-point planning approaches

- Probabilistic Planning in WFM — Distribution-based planning approaches

- Model Evaluation and Validation — Assessing whether models are production-ready

References

- [1] 2023 Developer Survey. Stack Overflow. June 2023.

- [2] The data-driven enterprise of 2025. McKinsey & Company. January 2022.

- [3] State of Data Science and Machine Learning 2022. Kaggle. December 2022.

- [4] Prophet: Forecasting at Scale. Meta Open Source. 2026-05-13.

External Resources

- Anaconda Distribution — Recommended Python distribution for data science

- Pandas Documentation — Official documentation for the Pandas library

- Project Jupyter — Interactive notebook environment

- Scikit-learn — Machine learning library documentation

- Prophet — Automated forecasting library

- PuLP — Linear programming optimization library[1]

- Real Python — Tutorials and guides for Python learners[2]

- [1] PuLP: A Linear Programming Toolkit for Python. COIN-OR Foundation. 2026-05-13.

- [2] Python Tutorials – Real Python. Real Python. 2026-05-13.