Artificial Intelligence Fundamentals

Artificial Intelligence Fundamentals provides workforce management practitioners with a grounded understanding of artificial intelligence concepts, terminology, and techniques relevant to operational planning and contact center management. As AI capabilities become embedded in workforce management platforms, CCaaS solutions, and intelligent automation tools, practitioners who understand what AI actually does — and does not do — make better decisions about technology adoption, vendor evaluation, and operational design.

This article is not a computer science textbook. It is a practitioner reference that frames AI concepts through the lens of workforce planning, real-time operations, scheduling, and capacity management. The goal is fluency, not expertise: enough understanding to evaluate vendor claims, participate in technology decisions, ask the right questions about model behavior, and recognize when AI is being applied appropriately versus being oversold. According to a 2025 McKinsey survey, 88 percent of organizations report regular AI use in at least one business function, yet most remain in experimenting or piloting stages — a gap that underscores the need for practitioners to develop informed judgment about AI capabilities.[1]

The sections below build from foundational definitions through a taxonomy of approaches, key learning paradigms, the training-to-inference pipeline, and common misconceptions. Each section connects to dedicated sub-pages for deeper treatment and links back to workforce management applications throughout.

What AI Is and Is Not

Artificial intelligence, in the broadest accepted definition, refers to the study and construction of agents that perceive their environment and take actions to achieve goals.[2] In practice, almost all AI deployed in workforce management and contact center operations falls under a narrower definition: software that uses mathematical models to identify patterns in data and make predictions or decisions without being explicitly programmed for each specific case.

What AI Is

AI in its current operational form is pattern recognition and statistical optimization at scale. When a forecasting engine predicts next Tuesday's call volume, it applies patterns learned from historical data to generate a probability distribution of likely outcomes. When a routing system assigns an interaction to a specific agent, it evaluates a set of features (agent skills, current queue depth, predicted handle time) against a trained model to select the option most likely to produce the desired outcome. When a speech analytics platform categorizes calls by topic, it applies acoustic and linguistic patterns learned from labeled training data to each new call.

Each of these examples shares the same underlying structure: data in, model applied, prediction or classification out. The sophistication lies in the mathematics, the volume of data processed, and the speed of execution — not in any form of understanding or reasoning in the human sense.

What AI Is Not

AI is not thinking. It is not conscious. It does not understand the contact center, the customer, or the business. It has no goals of its own, no judgment, and no common sense. These statements are not philosophical hedging — they are operationally important distinctions that protect practitioners from making poor decisions based on vendor marketing.

When a vendor describes their product as "AI-powered," the practitioner's first question should be: What specific model architecture processes what specific data to produce what specific output? If the answer is vague ("our AI understands your business"), the claim is marketing. If the answer is specific ("a gradient-boosted decision tree trained on 18 months of interval-level volume data produces a 15-minute-interval forecast with confidence intervals"), the claim is evaluable.

A useful heuristic: AI systems are narrow. They do one thing, or a small cluster of related things, with statistical reliability. A system that forecasts call volume well does not thereby understand why volume changed, what to do about a staffing shortage, or whether the business should launch a new product. AI applied to workforce management augments human decision-making; it does not replace the need for experienced practitioners who understand operations holistically.

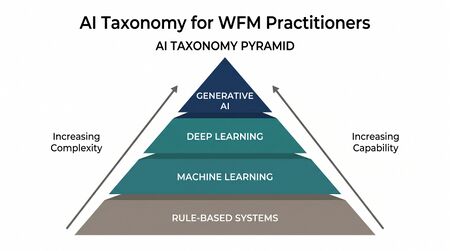

A Taxonomy of AI Approaches

Main article: Machine Learning Concepts

AI is not a single technology but a family of approaches that have evolved over decades. Understanding this taxonomy helps practitioners recognize what type of AI underlies a given vendor capability and what its inherent strengths and limitations are.

Rule-Based and Expert Systems

The earliest practical AI systems encoded human expertise as explicit if-then rules. In workforce management, rule-based systems remain pervasive and useful:

- Scheduling rules: If an agent has worked five consecutive days, assign two days off. If a shift exceeds eight hours, insert a mandatory break at the four-hour mark.

- Routing rules: If the caller selected the billing menu and speaks Spanish, route to the Spanish-speaking billing queue.

- Adherence rules: If an agent has been in after-call work for more than three minutes beyond the expected duration, flag for supervisor review.

Rule-based systems are deterministic — same inputs always produce the same outputs — and fully transparent. A practitioner can inspect every rule and predict every outcome. Their limitation is rigidity: they handle only situations the rule author anticipated, and they do not improve with experience. When the volume of rules grows into the hundreds or thousands, interactions between rules become difficult to predict, and maintenance becomes a significant operational burden.
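A minimal sketch of how two of the rules above might look in code. The `AgentState` class and its field names are hypothetical, chosen for illustration, and are not taken from any particular WFM platform:

```python
from dataclasses import dataclass


@dataclass
class AgentState:
    # All field names are hypothetical, for illustration only.
    consecutive_days_worked: int
    acw_seconds: float           # time spent in after-call work so far
    expected_acw_seconds: float  # expected after-call work duration


def needs_days_off(agent: AgentState) -> bool:
    """Scheduling rule: five consecutive days worked triggers days off."""
    return agent.consecutive_days_worked >= 5


def flag_for_review(agent: AgentState, grace_seconds: float = 180.0) -> bool:
    """Adherence rule: flag when ACW runs more than three minutes over."""
    return agent.acw_seconds > agent.expected_acw_seconds + grace_seconds


agent = AgentState(consecutive_days_worked=5, acw_seconds=400.0,
                   expected_acw_seconds=120.0)
print(needs_days_off(agent), flag_for_review(agent))  # True True
```

Every outcome can be traced to a single readable condition, which is the transparency property described above. Nothing in the code adapts to new data.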

Most WFM platforms still rely heavily on rule-based logic for schedule generation, adherence monitoring, and compliance enforcement. This is appropriate. Not every problem benefits from probabilistic AI, and deterministic rule execution provides the auditability that labor law and union agreements require.

Machine Learning

Machine learning (ML) represents the shift from explicitly programmed rules to systems that learn patterns from data. Tom Mitchell's widely cited definition captures the core idea: "A computer program is said to learn from experience E with respect to some task T and some performance measure P, if its performance on T, as measured by P, improves with experience E."[3]

In workforce management, machine learning appears in:

- Forecasting: Models trained on historical volume data learn seasonal patterns, day-of-week effects, trend components, and the impact of known events to predict future demand. Techniques range from exponential smoothing and ARIMA (which have statistical rather than ML origins but share the learning-from-data paradigm) to gradient-boosted trees and neural networks.

- Agent performance prediction: Models trained on agent metrics, tenure, training scores, and interaction outcomes predict which agents are at risk of attrition or which will achieve proficiency fastest. See Speed to Proficiency Curve.

- Anomaly detection: Models learn the normal range of operational metrics and flag deviations — unexpected volume spikes, sudden changes in handle time distributions, or unusual patterns in agent behavior.

The key distinction from rule-based systems: ML models are not explicitly told what patterns to find. They discover patterns in data, which makes them capable of identifying relationships too subtle or complex for human rule authors to specify — but also makes them opaque, potentially biased, and dependent on the quality and representativeness of their training data.

Deep Learning

Main article: Neural Networks and Deep Learning

Deep learning is a subset of machine learning that uses artificial neural networks with multiple layers (hence "deep") to learn hierarchical representations of data. Where traditional ML often requires human engineers to select and hand-craft relevant features, deep learning can learn useful representations directly from raw data.

In workforce management and contact center operations, deep learning powers:

- Speech Analytics: Automatic speech recognition (ASR) systems that convert spoken language to text, enabling quality monitoring, compliance checking, and topic classification at scale.

- Natural Language Processing: Systems that analyze the meaning of text in chat interactions, email, and social media messages for routing, categorization, and sentiment analysis.

- Complex forecasting: Recurrent neural networks and transformer architectures that capture long-range dependencies in time-series data — useful when volume patterns have complex, multi-layered seasonality.

Deep learning models require substantially more training data and computational resources than traditional ML. For many WFM applications — particularly forecasting with limited historical data — simpler ML techniques outperform deep learning. The practitioner skill is recognizing when the additional complexity of deep learning is justified by the nature of the problem and the available data.

Generative AI

Main article: Large Language Models and Generative AI

Generative AI refers to models that produce new content — text, images, code, or synthetic data — rather than classifying or predicting from existing data. Large language models (LLMs) such as GPT-4 and Claude are the most prominent examples, trained on massive text corpora to predict and generate human-like language.

In contact center and workforce management contexts, generative AI applications include:

- Conversational AI: Customer-facing chatbots and voice assistants that handle routine inquiries, reducing inbound volume to human agents.

- Agent assist: Real-time suggestions to agents during interactions — recommended responses, knowledge base lookups, next-best-action prompts.

- Content generation: Drafting knowledge base articles, training materials, quality evaluation summaries, and operational reports.

- Synthetic data: Generating realistic but artificial interaction data for testing and training purposes.

Generative AI introduces distinct risks for workforce management. Outputs can be plausible but factually incorrect (a phenomenon called hallucination). Models trained on broad internet data may not reflect an organization's specific policies, products, or operational context. And the probabilistic nature of generation means the same prompt can produce different outputs — a property that conflicts with the consistency requirements of compliance-sensitive operations.

Supervised, Unsupervised, and Reinforcement Learning

Main article: Machine Learning Concepts

The taxonomy of AI approaches describes what a system does. Learning paradigms describe how it learns. Three paradigms cover the vast majority of AI applications in workforce management.

Supervised Learning

In supervised learning, a model trains on labeled data — input-output pairs where the correct answer is known. The model learns to map inputs to outputs, then applies that mapping to new, unseen inputs.

WFM example: A forecasting model trains on historical data where the inputs are features (day of week, month, whether a marketing campaign was active, holiday proximity) and the output is the known actual volume for each interval. After training, the model predicts volume for future intervals where only the input features are known.

Supervised learning requires labeled data, which in WFM contexts is often abundant (historical actuals serve as natural labels) but sometimes costly to produce (manually labeling call recordings by topic for speech analytics training).
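As a deliberately simple illustration of learning from labeled pairs, the sketch below "trains" by averaging the known volume for each feature combination and then predicts by lookup. The history values are made up, and a production forecaster would use a far richer model. The point is only the input-to-label mapping:

```python
from collections import defaultdict
from statistics import mean


def fit_group_means(examples):
    """Learn the mean label for each distinct feature combination."""
    grouped = defaultdict(list)
    for features, volume in examples:
        grouped[features].append(volume)
    return {features: mean(volumes) for features, volumes in grouped.items()}


# Each example: ((day_of_week, campaign_active), actual volume).
# All values are invented for illustration.
history = [
    (("Mon", False), 500), (("Mon", False), 520),
    (("Mon", True), 610), (("Mon", True), 590),
    (("Tue", False), 450), (("Tue", False), 470),
]

model = fit_group_means(history)
print(model[("Mon", True)])  # 600
```

A feature combination that never appeared in the history, such as `("Tue", True)`, has no entry in `model`. This is a small-scale version of the representativeness problem that affects any supervised learner.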

Unsupervised Learning

In unsupervised learning, a model receives data without labels and discovers structure on its own — groupings, patterns, anomalies, or compressed representations.

WFM example: A clustering algorithm analyzes agent performance data across multiple dimensions (handle time, quality scores, adherence, customer satisfaction) and identifies natural groupings — perhaps revealing that agents fall into four distinct performance profiles that the organization had not previously recognized. These clusters might inform skill mix strategies or targeted coaching approaches.

Unsupervised learning is exploratory. It finds patterns, but a human must interpret whether those patterns are meaningful and actionable.
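The clustering idea can be sketched with a plain k-means loop. The agent data below is invented, the two starting centroids are picked by hand, and a real analysis would standardize the features first so that no single dimension dominates the distance calculation:

```python
def kmeans(points, centroids, iterations=10):
    """Plain k-means: assign points to the nearest centroid, then recompute."""
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            distances = [sum((a - b) ** 2 for a, b in zip(p, c))
                         for c in centroids]
            clusters[distances.index(min(distances))].append(p)
        # New centroid = mean of its members; keep the old one if empty.
        centroids = [
            tuple(sum(dim) / len(cluster) for dim in zip(*cluster))
            if cluster else c
            for cluster, c in zip(clusters, centroids)
        ]
    return centroids, clusters


# (average handle time in seconds, quality score); made-up values.
agents = [(300, 90), (310, 88), (290, 92), (480, 70), (500, 72), (470, 68)]
centroids, clusters = kmeans(agents, centroids=[agents[0], agents[3]])
print(centroids[0])  # (300.0, 90.0)
```

The algorithm returns two groups without being told what they mean. Deciding that one group is "fast, high quality" and the other "slow, lower quality", and whether that matters, remains a human interpretation step.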

Reinforcement Learning

In reinforcement learning (RL), an agent learns by taking actions in an environment and receiving rewards or penalties. Over time, it learns a policy, a mapping from observed situations to actions, that maximizes expected cumulative reward.

WFM example: A real-time routing system learns which agent-interaction assignments produce the best outcomes (shortest handle time, highest resolution rate, best customer satisfaction) by treating each routing decision as an action, the resulting metrics as reward signals, and the current queue state as the observed state of the environment. Over thousands of interactions, the system can discover routing strategies that outperform static rule-based approaches. See Next Generation Routing.

Reinforcement learning is powerful but data-hungry and can produce unexpected behaviors if the reward signal is poorly designed. A routing system optimized purely for handle time reduction might learn to route complex calls to agents who transfer them quickly — technically reducing handle time while degrading resolution rates.
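The explore-and-exploit loop at the heart of RL can be illustrated with an epsilon-greedy multi-armed bandit, which is a simplified, stateless special case rather than a full routing system. The team names and resolution rates are hypothetical, and the environment is simulated with a seeded random generator:

```python
import random


def run_bandit(true_resolution_rates, rounds=10_000, epsilon=0.1, seed=0):
    """Epsilon-greedy bandit: mostly exploit the best estimate, sometimes explore."""
    rng = random.Random(seed)
    arms = list(true_resolution_rates)
    counts = {arm: 0 for arm in arms}
    estimates = {arm: 0.0 for arm in arms}
    for _ in range(rounds):
        if rng.random() < epsilon:
            arm = rng.choice(arms)              # explore a random team
        else:
            arm = max(arms, key=estimates.get)  # exploit the current best
        # Simulated reward: 1 if the contact was resolved, else 0.
        reward = 1.0 if rng.random() < true_resolution_rates[arm] else 0.0
        counts[arm] += 1
        # Incremental update of the running mean reward for this team.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return estimates, counts


# Hypothetical resolution rates that the learner does not see directly.
rates = {"team_a": 0.5, "team_b": 0.7, "team_c": 0.9}
estimates, counts = run_bandit(rates)
best_team = max(estimates, key=estimates.get)
print(best_team)
```

The reward here is resolution. Swapping it for "negative handle time" would change what the learner optimizes, which is exactly how the poorly designed reward described above leads to unwanted behavior.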

Deterministic vs. Probabilistic Systems

Main article: Deterministic vs Probabilistic Models

This distinction is one of the most practically important concepts for workforce management practitioners, because WFM operations depend on both types of systems and the failure modes differ fundamentally.

Deterministic Systems

A deterministic system produces the same output every time it receives the same input. Traditional Erlang calculations, business-rule engines, schedule optimization solvers (given the same constraints and, for solvers that use randomized heuristics, the same random seed), and spreadsheet formulas are deterministic. The advantage is predictability and auditability: a practitioner can trace exactly why a system produced a given output and verify correctness.
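The Erlang C calculation is a convenient example of determinism: the same volume, handle time, and headcount always yield the same service level. The sketch below uses the standard Erlang C formula, computed through the Erlang B recursion to avoid large factorials. The function names and example inputs are illustrative:

```python
import math


def erlang_c(traffic_erlangs: float, agents: int) -> float:
    """Probability that an arriving contact has to wait (Erlang C)."""
    if agents <= traffic_erlangs:
        return 1.0  # unstable queue: every contact waits
    # Erlang B via the standard recursion, which avoids large factorials.
    blocking = 1.0
    for n in range(1, agents + 1):
        blocking = traffic_erlangs * blocking / (n + traffic_erlangs * blocking)
    return agents * blocking / (agents - traffic_erlangs * (1.0 - blocking))


def service_level(calls_per_hour, aht_seconds, agents, target_seconds):
    """Fraction of contacts answered within target_seconds."""
    traffic = calls_per_hour * aht_seconds / 3600.0  # offered load in Erlangs
    if agents <= traffic:
        return 0.0
    wait_probability = erlang_c(traffic, agents)
    return 1.0 - wait_probability * math.exp(
        -(agents - traffic) * target_seconds / aht_seconds
    )


# Illustrative inputs: 120 calls/hour, 300 s AHT, 14 agents, 20 s target.
first = service_level(120, 300, 14, 20)
second = service_level(120, 300, 14, 20)
print(first == second)  # True
```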

Probabilistic Systems

A probabilistic system incorporates randomness, uncertainty, or learned distributions. Forecasting models, Monte Carlo simulations, stochastic capacity plans, and machine learning models in general (including those that appear to give single-point answers) are probabilistic in this sense. A trained model may return the same number every time it sees the same input, but that number is an estimate summarizing an underlying distribution of possible values, typically its mean, median, or mode.

Why This Matters for Workforce Planning

The practical consequence: treating a probabilistic output as if it were deterministic produces fragile plans. When a forecast predicts 450 calls for the 10:00 AM interval, that number is the center of a distribution. Staffing to exactly 450 calls with no buffer ignores the variance inherent in the prediction. Competent workforce planners account for this uncertainty through techniques like confidence-interval-based staffing, variance harvesting, scenario modeling, and the risk score framework.
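One way to turn the 450-call point forecast into a planning number is to staff to an upper quantile of the forecast distribution. The sketch below assumes, purely for illustration, that forecast error is approximately normal with a standard deviation of 25 calls. Neither assumption comes from the example above, and both should be checked against real backtest errors:

```python
from statistics import NormalDist


def volume_at_confidence(mean_volume, std_dev, confidence):
    """Volume that actual demand stays at or below with the given probability."""
    return NormalDist(mu=mean_volume, sigma=std_dev).inv_cdf(confidence)


# Assumed inputs: mean forecast 450, standard deviation 25 (hypothetical).
planning_volume = volume_at_confidence(450, 25, 0.90)
print(round(planning_volume))  # 482
```

Staffing to roughly 482 calls instead of 450 covers demand in about nine intervals out of ten under these assumptions. The right confidence level is a business trade-off between service risk and cost.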

Conversely, treating deterministic outputs as uncertain when they are not creates unnecessary complexity. If a labor law states that agents must receive a 30-minute break after five consecutive hours of work, that constraint is deterministic — there is no distribution to consider, no confidence interval to weigh. The system either complies or it does not.

The AI Scaffolding Framework addresses this distinction at Layer 3 (Analytical Engine), where both deterministic and probabilistic mathematical capabilities are composed and their outputs appropriately interpreted.

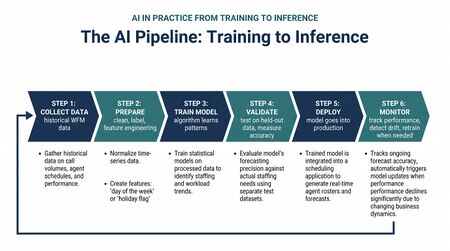

AI in Practice: From Training to Inference

Understanding the lifecycle of an AI system — from raw data to operational decisions — demystifies what happens when an organization "uses AI." The process follows a consistent pipeline regardless of the specific technique.

Step 1: Data Collection and Preparation

All machine learning begins with data. In workforce management, relevant data includes historical contact volumes, agent schedules and actual states, handle times, queue depths, customer satisfaction scores, agent skills and certifications, marketing calendars, known event schedules, and external factors like weather or economic indicators.

Data preparation (cleaning, transforming, and organizing raw data into a format suitable for model training) is widely reported by practitioners to consume the majority of effort in an AI project; figures of 60 to 80 percent are commonly quoted, though they are rules of thumb rather than precise measurements. Common preparation tasks include handling missing values (what happens when the ACD was offline for two hours and no volume data was recorded?), treating outliers (should a volume spike from a system outage be included in training data?), aligning time zones, and decomposing time series into trend, seasonal, and residual components.
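Two of these preparation tasks can be sketched briefly. The example fills a missing interval with the median of the observed values and flags outliers using the median absolute deviation (MAD). Both are reasonable defaults rather than the only options, and the volumes and threshold are made up:

```python
from statistics import median


def fill_missing(volumes):
    """Replace None (no data recorded) with the median of observed values."""
    observed = [v for v in volumes if v is not None]
    fallback = median(observed)
    return [fallback if v is None else v for v in volumes]


def flag_outliers(volumes, threshold=3.0):
    """Flag values more than `threshold` MADs away from the median."""
    med = median(volumes)
    mad = median(abs(v - med) for v in volumes)
    return [mad > 0 and abs(v - med) / mad > threshold for v in volumes]


# Same interval across six weeks; None = ACD offline, 1900 = outage spike.
raw = [425, 435, None, 428, 1900, 431]
filled = fill_missing(raw)
flags = flag_outliers(filled)
print(filled)  # [425, 435, 431, 428, 1900, 431]
print(flags)   # [False, False, False, False, True, False]
```

Flagging is separate from removal on purpose: whether the outage spike belongs in the training data is an operational judgment, not something the code should decide silently.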

The AI Scaffolding Framework's Layer 1 (Data Fabric) directly addresses this requirement. Organizations with mature data infrastructure spend less time on preparation and produce more reliable models.

Step 2: Feature Engineering

Features are the input variables that a model uses to make predictions. In a volume forecasting model, features might include:

- Day of week (categorical)

- Time of day / interval number (categorical or cyclical)

- Month or season (categorical)

- Days since last holiday (numeric)

- Whether a marketing campaign is active (binary)

- Previous week's actual volume at the same interval (numeric)

- Trend component from decomposition (numeric)

Feature engineering — selecting, creating, and transforming features — is where domain expertise directly improves AI performance. A workforce planner who knows that volume always spikes the Monday after a billing cycle can encode that knowledge as a feature, giving the model information it might otherwise need thousands of additional data points to discover on its own.
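A minimal sketch of building several of the listed features from a timestamp. The function name, the dictionary keys, and the choice of 15-minute intervals are assumptions for illustration. The sine and cosine pair is a common way to encode a cyclical variable so that the last interval of the day sits next to the first:

```python
import math
from datetime import date, datetime


def build_features(ts, campaign_active, last_holiday):
    """Turn one interval timestamp into model input features."""
    interval = ts.hour * 4 + ts.minute // 15   # 15-minute interval, 0..95
    angle = 2 * math.pi * interval / 96
    return {
        "day_of_week": ts.weekday(),           # 0 = Monday
        "interval": interval,
        "interval_sin": math.sin(angle),       # cyclical encoding
        "interval_cos": math.cos(angle),
        "month": ts.month,
        "days_since_holiday": (ts.date() - last_holiday).days,
        "campaign_active": int(campaign_active),
    }


features = build_features(
    datetime(2025, 1, 6, 10, 0),               # a Monday, 10:00 AM
    campaign_active=True,
    last_holiday=date(2025, 1, 1),
)
print(features["day_of_week"], features["interval"],
      features["days_since_holiday"])  # 0 40 5
```

Domain knowledge such as the post-billing-cycle Monday spike would enter as one more key in this dictionary, for example a binary flag computed from the billing calendar.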

Step 3: Model Training

During training, the model adjusts its internal parameters to minimize the difference between its predictions and the known actual outcomes in the training data. The specific mechanism varies by model type: linear regression fits coefficient weights, decision trees select splitting criteria, and neural networks adjust connection weights by gradient descent, using gradients computed through backpropagation.

Training is computationally intensive and occurs offline — not during real-time operations. A forecasting model might be retrained weekly or monthly as new actual data accumulates, while a speech analytics model might be retrained quarterly as new labeled interaction data becomes available.
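The phrase "adjusts its internal parameters to minimize the difference" can be made concrete with gradient descent on a one-feature linear model. This is a toy sketch: the data follows y = 2x + 1 exactly, and the learning rate and epoch count are arbitrary choices that happen to converge for this data:

```python
def train_linear(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step each parameter a little in the direction that reduces error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Toy training data generated from y = 2x + 1 (no noise).
xs = [0, 1, 2, 3, 4]
ys = [1, 3, 5, 7, 9]
w, b = train_linear(xs, ys)
print(round(w, 3), round(b, 3))  # 2.0 1.0
```

The loop is the training phase. Once `w` and `b` are fixed, producing a prediction is a single multiplication and addition, which is why inference is cheap compared with training.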

Step 4: Validation and Testing

Main article: Model Evaluation and Validation

Before deployment, a trained model is evaluated on data it has never seen during training. This step catches overfitting — the condition where a model has memorized the training data's specific patterns (including noise) rather than learning generalizable relationships. An overfit forecasting model might perfectly reproduce last year's volume pattern but fail to predict next month's volume because it learned the noise, not the signal.

Common validation techniques include holdout testing (reserving a portion of historical data for evaluation), cross-validation (systematically rotating which data is used for training versus testing), and backtesting (applying the model to historical periods and comparing predictions to known actuals). The forecast accuracy metrics used in workforce management — mean absolute percentage error (MAPE), weighted absolute percentage error (WAPE), and interval-level accuracy — serve as the performance measures for forecasting model validation.
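The two percentage-error metrics named above differ in how they weight intervals, which a small sketch makes visible. The actuals and forecasts are invented. MAPE is undefined when any actual value is zero, which is one reason WAPE is often preferred for low-volume intervals:

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error: every interval weighted equally."""
    return 100 * sum(abs(a - f) / a
                     for a, f in zip(actuals, forecasts)) / len(actuals)


def wape(actuals, forecasts):
    """Weighted absolute percentage error: total error over total volume."""
    return 100 * sum(abs(a - f)
                     for a, f in zip(actuals, forecasts)) / sum(actuals)


# Held-out intervals the model never saw during training (made-up values).
actuals = [100, 200, 50]
forecasts = [110, 180, 60]
print(round(mape(actuals, forecasts), 2))  # 13.33
print(round(wape(actuals, forecasts), 2))  # 11.43
```

The 10-call miss on the 50-call interval counts as a 20 percent error in MAPE but contributes only in proportion to its volume in WAPE, so the two metrics can rank the same model differently.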

Step 5: Deployment and Inference

Inference is the operational phase: the trained model receives new input data and produces predictions or decisions in real time or near-real time. When the WFM platform generates tomorrow's forecast, when the routing engine selects an agent for an incoming call, when the speech analytics system classifies a completed interaction — all are inference.

The practitioner concern at inference time is monitoring: Is the model still performing as expected? Has the underlying data distribution shifted (a phenomenon called data drift) in ways that degrade model accuracy? Seasonal changes, new products, policy changes, and external disruptions can all cause a model trained on historical data to underperform on current data. Continuous monitoring and periodic retraining are operational necessities, not optional enhancements.
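A simple monitoring check compares recent forecast error against the error level observed at validation time. This watches model performance as a proxy for drift rather than measuring the input distribution directly. The function name, the 1.5x tolerance, and the error values are assumptions for illustration:

```python
def drift_alert(baseline_errors, recent_errors, tolerance=1.5):
    """Alert when recent mean error exceeds tolerance x the baseline mean."""
    baseline = sum(baseline_errors) / len(baseline_errors)
    recent = sum(recent_errors) / len(recent_errors)
    return recent > tolerance * baseline


# Daily WAPE in percent: validation-period baseline vs. the latest days.
baseline_wape = [5, 6, 4, 5]
print(drift_alert(baseline_wape, [5, 6, 5, 4]))     # False
print(drift_alert(baseline_wape, [9, 11, 10, 12]))  # True
```

An alert does not say why accuracy degraded. It is a trigger for a practitioner to investigate whether a new product, policy change, or seasonal shift calls for retraining.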

Step 6: Action and Feedback

The value of AI is realized only when predictions translate into operational actions — staffing decisions, routing changes, schedule adjustments, coaching interventions — and when the outcomes of those actions feed back into the system as new data for future model improvement. This feedback loop is what distinguishes an AI-enhanced operation from a static analytics implementation.

In the AI Scaffolding Framework, this full pipeline spans all seven layers: data fabric (Layer 1) feeds preparation and feature engineering; the business rules engine (Layer 2) constrains what actions are permissible; the analytical engine (Layer 3) houses the models; the orchestration layer (Layer 4) coordinates the pipeline; the decisioning layer (Layer 5) translates predictions into actions; the governance layer (Layer 6) monitors and audits; and the feedback layer (Layer 7) closes the loop.

Key Concepts for Practitioners

The following terms appear frequently in AI discussions and vendor materials. Each is defined in practical, WFM-relevant terms.

Features

Features are the measurable properties or characteristics used as inputs to a model. In WFM forecasting, features include day of week, time of day, historical volume at the same interval, presence of holidays or events, and marketing activity indicators. Selecting the right features — and recognizing when important features are missing — is one of the most impactful activities in AI model development.

Labels

Labels are the known correct answers in supervised learning training data. For a volume forecasting model, labels are the actual observed volumes. For a call classification model, labels are the categories assigned to each call by human reviewers. Label quality directly constrains model quality: if human reviewers inconsistently categorize calls, no model trained on those labels will produce consistent classifications.

Training Data

Training data is the historical dataset used to teach a model its patterns. The representativeness of training data determines the range of situations a model can handle. A forecasting model trained only on data from January through September will not have learned holiday-season patterns and will underperform in December. A speech analytics model trained only on English-language interactions will fail on Spanish-language calls.

Overfitting

Overfitting occurs when a model learns the specific noise and quirks of its training data rather than the underlying generalizable patterns. An overfit model performs excellently on historical data but poorly on new data. In WFM, overfitting manifests as a forecast that closely matches the training period's actuals but produces large errors on future intervals. Techniques to prevent overfitting include using sufficient training data, applying regularization (mathematical constraints that penalize model complexity), and rigorous validation on held-out data.

Underfitting

Underfitting is the opposite problem: a model too simple to capture the real patterns in the data. A linear trend applied to volume data with strong day-of-week seasonality will underfit — it captures the overall direction but misses the weekly pattern. Underfitting typically indicates that a more complex model or better features are needed.

Bias

In AI, bias has two distinct meanings. Statistical bias refers to systematic errors in a model's predictions — consistently predicting too high or too low. Societal bias refers to a model producing systematically different outcomes for different demographic groups, often because the training data reflects historical patterns of unequal treatment. In workforce management, societal bias can appear in agent performance models (if historical evaluation data reflects biased supervisor ratings), hiring recommendation systems, and scheduling algorithms that systematically disadvantage certain groups. Both forms of bias require active monitoring and mitigation.

Explainability

Explainability (sometimes called interpretability) refers to the degree to which a human can understand why a model produced a particular output. A linear regression model is highly explainable — each input's contribution to the output is a visible coefficient. A deep neural network with millions of parameters is largely opaque. For workforce management, explainability matters when practitioners need to justify staffing decisions to leadership, explain forecast variances, or audit AI-driven scheduling for labor compliance.

Confidence and Uncertainty

Many AI models can produce not just a point prediction but a measure of confidence or uncertainty. A forecast of 450 calls with a 90 percent confidence interval of 410 to 490 is far more useful for staffing decisions than a bare prediction of 450. Practitioners should always ask whether a system can provide uncertainty estimates and should treat systems that provide only point predictions with appropriate skepticism. The concern is not that the prediction is wrong, but that the uncertainty is hidden rather than absent.
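When a system reports only point predictions, an approximate interval can still be built from its past errors. The sketch below uses nearest-rank percentiles of backtest errors (actual minus forecast). The error values are made up, and the approach assumes future errors will resemble past ones:

```python
import math


def percentile(sorted_values, pct):
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    rank = math.ceil(pct * len(sorted_values) / 100)
    return sorted_values[rank - 1]


def prediction_interval(point_forecast, past_errors, lower_pct=5, upper_pct=95):
    """Shift the point forecast by the chosen percentiles of past errors."""
    errors = sorted(past_errors)
    return (point_forecast + percentile(errors, lower_pct),
            point_forecast + percentile(errors, upper_pct))


# Twenty hypothetical backtest errors: -50, -45, ..., 45.
past_errors = list(range(-50, 50, 5))
low, high = prediction_interval(450, past_errors)
print(low, high)  # 400 490
```

An interval that is noticeably wider on one side, as here, is itself useful information: it suggests that errors in one direction have historically been larger than in the other.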

Common Misconceptions

AI adoption in workforce management is hampered by misconceptions that circulate through vendor marketing, executive briefings, and industry media. Correcting these misconceptions is a prerequisite for sound technology decisions.

"AI Thinks and Understands"

Current AI systems, including large language models, process statistical patterns. They do not think, understand, or possess awareness. A conversational AI system that produces a grammatically perfect, contextually appropriate response to a customer query has matched patterns from training data — it has not understood the customer's problem. This distinction matters operationally because "understanding" implies reliability across novel situations, while pattern matching implies reliability only within the distribution of training data.

"AI Will Replace Workforce Planners"

AI automates specific tasks within the workforce management function — generating baseline forecasts, detecting schedule adherence violations, classifying interactions. It does not replace the judgment, contextual awareness, and organizational knowledge that experienced practitioners bring to capacity planning, stakeholder management, exception handling, and strategic workforce decisions. A 2025 Gartner survey of customer service leaders found that 95 percent plan to retain human agents and strategically define AI's role rather than pursue wholesale replacement.[4]

"Garbage In, Garbage Out" Is Cliché but Underappreciated

The phrase is so familiar that practitioners nod and move on — then feed models data riddled with missing intervals, inconsistent ACD codes, timezone misalignments, and unrecorded outage periods. Data quality is not a background concern; it is the primary determinant of AI model performance. Organizations that invest in the data fabric layer before investing in models consistently outperform those that reverse the sequence.

"More Data Is Always Better"

More data helps only when the additional data is representative, clean, and relevant. Adding three years of historical volume data that predates a major operational restructuring may actively harm a forecasting model by teaching it patterns that no longer apply. Adding thousands of labeled interaction records that were inconsistently labeled introduces noise, not signal. Data curation — selecting what to include and exclude — is as important as data collection.

"AI Is Objective and Unbiased"

AI models learn from historical data, and historical data reflects historical practices — including any biases present in those practices. A scheduling algorithm trained on data where certain agent groups were systematically assigned less desirable shifts will learn to reproduce that pattern. An attrition prediction model trained on data where performance evaluations reflected supervisor bias will embed that bias in its predictions. AI does not remove human bias from decisions; it scales whatever biases exist in the training data. Active bias detection and mitigation are operational requirements.

"AI Works Out of the Box"

Vendor demonstrations use carefully prepared data, optimized configurations, and selected examples. Production deployment requires integration with existing data infrastructure, adaptation to organizational data formats and quality levels, configuration for specific operational contexts, validation against local performance standards, and ongoing monitoring and retraining. The gap between demo and production is where most AI projects stall. The AI Scaffolding Framework exists precisely to help organizations build the infrastructure that bridges this gap.

AI Across the WFM Lifecycle

Main article: AI in Workforce Management

AI applications touch every phase of the workforce management lifecycle, from long-range capacity planning to intraday real-time operations. The following provides a brief orientation; the dedicated article covers each application area in depth.

Forecasting

AI-driven forecasting applies machine learning techniques — from enhanced exponential smoothing and ARIMA models to ensemble methods and neural networks — to predict contact volume, handle time, and workload across channels and intervals. The primary improvement over traditional methods is the ability to automatically detect complex patterns, incorporate many input features simultaneously, and provide forecast uncertainty estimates. See Forecasting Methods and Hierarchical Forecasting.

Scheduling and Optimization

AI enhances schedule optimization by exploring larger solution spaces than traditional heuristic methods, incorporating more constraints simultaneously, and adapting to changing conditions. Reinforcement learning approaches can discover scheduling strategies that balance service level targets, agent preferences, labor compliance, and operational cost more effectively than static rule-based optimizers. See also Self-Scheduling and Flexible Workforce Models.

Real-Time Management

During the operating day, AI supports real-time operations through anomaly detection (identifying unexpected volume deviations as they occur), predictive alerting (forecasting service level breaches before they happen), and automated response actions (triggering reforecasts, activating contingency staffing plans, or adjusting routing weights). The daily ROC routine increasingly incorporates AI-generated insights as inputs to human decision-making.

Quality and Performance

Speech Analytics and natural language processing enable automated quality monitoring of 100 percent of interactions, a level of coverage that is impractical for human-only QA programs, which typically sample 1 to 3 percent of calls. AI-driven performance analytics identify patterns across agent populations, predict attrition risk, and recommend targeted coaching interventions. See Coaching and Agent Development.

Workforce Planning

At the strategic level, AI supports long-run workforce sizing and cost modeling by simulating multiple demand scenarios, modeling the impact of automation on staffing requirements, and optimizing hiring and training pipelines against probabilistic demand forecasts. These applications are among the highest-value but also the most sensitive to data quality and model assumptions. According to Gartner, by 2029, agentic AI is expected to autonomously resolve 80 percent of common customer service issues without human intervention — a projection that, if realized, would fundamentally alter the demand assumptions underlying workforce capacity plans.[5]

Building AI Literacy in WFM Teams

Organizations that successfully integrate AI into workforce management operations invest in practitioner literacy — not by turning planners into data scientists, but by building enough conceptual fluency to bridge the gap between technical teams and operational teams. ICMI research consistently identifies this gap as a barrier: workforce management practitioners who understand forecasting methodology, accuracy measurement, and operational constraints are better positioned to evaluate AI tools, challenge vendor claims, and collaborate with data science teams on model development and validation.[6]

Key literacy areas for WFM practitioners include:

- Data fluency: Understanding what data a model needs, recognizing data quality issues, and knowing how data preparation decisions affect model outputs.

- Model evaluation: Ability to interpret accuracy metrics, distinguish between training performance and production performance, and identify signs of overfitting or data drift.

- Vendor evaluation: Asking specific questions about model architecture, training data, validation methodology, explainability, and bias testing when evaluating AI-enhanced WFM tools.

- Ethical awareness: Recognizing when AI applications raise fairness, privacy, or transparency concerns and knowing what safeguards to require.

The AI Scaffolding Framework provides a maturity model that helps organizations assess their current AI readiness and identify the infrastructure gaps that must be addressed before AI investments can deliver reliable operational value.

See Also

- Machine Learning Concepts

- Neural Networks and Deep Learning

- Natural Language Processing

- Large Language Models and Generative AI

- Deterministic vs Probabilistic Models

- Model Evaluation and Validation

- AI in Workforce Management

- AI Scaffolding Framework

- Conversational AI

- Speech Analytics

- Intelligent Automation

- Forecasting Methods

- Forecast Accuracy Metrics

- Technology

References

- [1] The state of AI in 2025: Agents, innovation, and transformation. McKinsey & Company. 2025.

- [2] Russell, Stuart; Norvig, Peter. Artificial Intelligence: A Modern Approach. Pearson. 2020. ISBN 978-0-13-461099-3.

- [3] Mitchell, Tom. Machine Learning. McGraw-Hill. 1997. ISBN 978-0-07-042807-2.

- [4] Gartner Survey Finds Only 20% of Customer Service Leaders Report AI-Driven Headcount Reduction. Gartner. 2025-12-02.

- [5] Gartner Predicts Agentic AI Will Autonomously Resolve 80% of Common Customer Service Issues Without Human Intervention by 2029. Gartner. 2025-03-05.

- [6] The State of Workforce Management. ICMI and NICE. 2022.