Neural Networks and Deep Learning

Neural networks are a class of machine learning models inspired by the structure of biological nervous systems. They consist of interconnected layers of computational units ("neurons") that learn to recognize patterns in data by adjusting internal parameters during training. Deep learning refers to neural networks with multiple hidden layers—networks deep enough to learn hierarchical representations of data, from simple features to complex abstractions.[1]

Workforce management practitioners encounter neural networks whether they realize it or not. The forecasting engine in a modern WFM platform may use recurrent neural networks or transformer architectures to predict contact volumes. Speech Analytics systems use deep neural networks to convert audio to text. Chatbots and virtual agents rely on neural networks for intent recognition and response generation. Agent performance prediction models, sentiment analysis engines, and even some schedule optimization systems incorporate neural network components.

This article provides a conceptual foundation for WFM professionals who need to understand neural networks—not to build them, but to evaluate vendor claims, communicate with data science teams, interpret model outputs, and make informed decisions about when neural networks add genuine value versus when simpler methods like ARIMA or exponential smoothing are more appropriate. For the broader context of how machine learning fits into WFM operations, see Machine Learning Concepts. For the organizational infrastructure required to support neural network deployments, see the AI Scaffolding Framework.

From Biological Inspiration to Mathematical Model

The neural network concept originates from a 1943 paper by Warren McCulloch and Walter Pitts, who proposed a simplified mathematical model of how biological neurons process information.[2] The biological analogy is useful for intuition but should not be taken too literally—artificial neural networks are mathematical functions, not simulations of brains.

The Biological Metaphor

A biological neuron receives electrical signals from other neurons through connections called synapses. If the combined input exceeds a threshold, the neuron fires—it sends its own signal to connected neurons downstream. The strength of each synaptic connection determines how much influence one neuron has on another, and these connection strengths change over time as the organism learns.

An artificial neuron mirrors this process in simplified form:

- It receives numerical inputs (data values or outputs from other neurons).

- Each input is multiplied by a weight (analogous to synaptic strength).

- The weighted inputs are summed.

- The sum passes through an activation function that determines the neuron's output.

- That output becomes an input to neurons in the next layer.
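The steps above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the input values, the weights, the choice of a sigmoid activation, and the bias term (a constant offset that most networks add to the weighted sum, though the list above does not mention it) are all assumptions chosen for the example.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias):
    """Multiply each input by its weight, sum, add the bias, then activate."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(weighted_sum)


# Hypothetical inputs: Monday flag, scaled hour of day, holiday flag
inputs = [1.0, 0.42, 0.0]
weights = [0.8, -0.3, -1.5]  # illustrative values, not learned from data
bias = 0.1                   # assumed constant offset

activation = neuron_output(inputs, weights, bias)
print(round(activation, 3))
```

In a full network, `activation` would be passed on as one of the inputs to every neuron in the next layer.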

From Perceptron to Network

Frank Rosenblatt's perceptron (1958) was the first implementation of this idea as a learning machine.[1] A single perceptron can learn to classify inputs into two categories, but only if the categories are linearly separable, meaning that a single straight line (or, with more than two inputs, a flat plane) can divide one category from the other. This is a severe limitation. Most real-world problems, including nearly all WFM applications, involve non-linear relationships.
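The limitation can be seen in a small sketch. The code below uses the classic perceptron update rule on two toy problems: logical AND, which a straight line can separate, and XOR, which no straight line can. The function names, the toy datasets, and the training settings are assumptions made for illustration.

```python
def predict(weights, bias, point):
    """Output 1 if the weighted sum exceeds zero, otherwise 0."""
    total = sum(w * x for w, x in zip(weights, point)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=50, learning_rate=1.0):
    """Perceptron rule: weights change only when a prediction is wrong."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for point, target in samples:
            error = target - predict(weights, bias, point)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, point)]
            bias += learning_rate * error
    return weights, bias


def accuracy(samples, weights, bias):
    """Fraction of samples classified correctly."""
    hits = sum(predict(weights, bias, p) == t for p, t in samples)
    return hits / len(samples)


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_accuracy = accuracy(AND_DATA, *train_perceptron(AND_DATA))
xor_accuracy = accuracy(XOR_DATA, *train_perceptron(XOR_DATA))
print("AND:", and_accuracy, "XOR:", xor_accuracy)
```

The perceptron classifies every AND case correctly, but it can never get all four XOR cases right, however long it trains, because no single line separates the two XOR classes.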

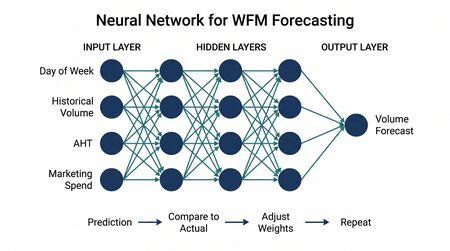

The solution is to connect many neurons in layers:

- An input layer receives the raw data—for example, day of week, hour, recent volume trend, holiday flag, and marketing event indicator for a volume forecasting model.

- One or more hidden layers process the data through successive transformations. Each layer extracts increasingly abstract patterns. An early layer might learn that Mondays and Tuesdays have similar volume shapes; a deeper layer might learn that "Monday after a holiday" is a distinct pattern requiring different treatment.

- An output layer produces the final prediction—a volume number, a classification label, a probability score.

When a network has many hidden layers (typically more than two or three), it qualifies as a deep neural network, and training it is called deep learning. The depth allows these networks to learn complex, hierarchical representations that shallow models cannot capture efficiently.

Why Depth Matters

Consider the task of converting a customer's spoken words into text (speech-to-text), which is foundational to Speech Analytics. A shallow model would need to learn the mapping from raw audio waveforms to words directly—an enormously complex relationship. A deep network can decompose this problem: early layers learn to recognize basic audio features (phonemes, pitch patterns), middle layers assemble these into syllables and words, and upper layers incorporate context to distinguish between "cancel" and "counsel." Each layer builds on the representations learned by the layer below it. This hierarchical learning is why deep networks dominate tasks involving speech, language, images, and complex sequential data.

How Neural Networks Learn

The training process for a neural network can be understood in three steps, repeated thousands or millions of times. No mathematical notation is required to grasp the concept.

Step 1: The Forward Pass (Make a Prediction)

Data enters the input layer and flows forward through the network. At each neuron in each layer, inputs are multiplied by weights, summed, and passed through an activation function. The result flows to the next layer until it reaches the output layer, which produces a prediction.

For a WFM example: the network receives input features for a specific half-hour interval (Monday, 10:00 AM, no holiday, normal marketing calendar, recent trend slightly upward) and produces a prediction of 342 contacts.

At the start of training, the weights are initialized randomly, so the first predictions are essentially random guesses.
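A forward pass through a small network might look like the sketch below. The layer sizes (five inputs, four hidden neurons, one output), the ReLU activation, the feature encoding, and the initialization range are assumptions chosen for illustration. Because the weights are random, the output is a meaningless guess, which is exactly the situation at the start of training.

```python
import random


def relu(z):
    """Pass positive values through unchanged; clip negatives to zero."""
    return max(0.0, z)


def dense_layer(inputs, weights, biases, activation):
    """Each neuron: weighted sum of all inputs plus a bias, then activation."""
    return [activation(sum(x * w for x, w in zip(inputs, row)) + b)
            for row, b in zip(weights, biases)]


def init_layer(n_inputs, n_neurons, rng):
    """Random starting weights and zero biases for one layer."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
               for _ in range(n_neurons)]
    return weights, [0.0] * n_neurons


rng = random.Random(0)  # fixed seed so the run is repeatable
hidden_w, hidden_b = init_layer(5, 4, rng)
output_w, output_b = init_layer(4, 1, rng)

# Hypothetical encoding: Monday flag, scaled hour (10:00), holiday flag,
# marketing-event flag, recent trend
features = [1.0, 0.42, 0.0, 0.0, 0.1]

hidden = dense_layer(features, hidden_w, hidden_b, relu)
prediction = dense_layer(hidden, output_w, output_b, lambda z: z)[0]
print(prediction)
```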

Step 2: Measure the Error (Loss Function)

The prediction is compared to the actual known outcome from historical data. A loss function (also called a cost function or objective function) quantifies how wrong the prediction was. For volume forecasting, this might be the squared difference between predicted and actual volume. For classification tasks (e.g., predicting whether a call is a complaint), it might measure how far the predicted probability was from the true label.

The network predicted 342 contacts; the actual value was 387. The loss function assigns a numerical penalty to this 45-contact error.
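As a worked example, the sketch below computes a squared-error loss for the forecast above and, for the classification case, a binary cross-entropy loss. Cross-entropy is assumed here as one common choice of classification loss; the text does not say which loss any particular system uses, and the probability values are invented for illustration.

```python
import math


def squared_error(predicted, actual):
    """Penalty grows with the square of the miss, so large errors dominate."""
    return (predicted - actual) ** 2


def binary_cross_entropy(predicted_probability, true_label):
    """Penalty for a predicted probability given a true label of 0 or 1."""
    p = min(max(predicted_probability, 1e-12), 1 - 1e-12)  # avoid log(0)
    return -(true_label * math.log(p) + (1 - true_label) * math.log(1 - p))


forecast_loss = squared_error(342, 387)  # a 45-contact miss, squared

# The model says "90% likely to be a complaint"
loss_if_not_complaint = binary_cross_entropy(0.9, 0)  # confident and wrong
loss_if_complaint = binary_cross_entropy(0.9, 1)      # confident and right

print(forecast_loss, loss_if_not_complaint, loss_if_complaint)
```

A confident wrong answer is penalized far more heavily than a confident right one, which is what pushes the network toward calibrated probabilities.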

Step 3: Adjust the Weights (Backpropagation)

This is where learning happens. An algorithm called backpropagation—short for "backward propagation of errors"—works backward through the network, calculating how much each weight contributed to the error.[1] Each weight is then adjusted slightly in the direction that would reduce the error. Weights that contributed heavily to the mistake are adjusted more; weights that were less responsible are adjusted less.
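For readers who want to see the mechanics, here is a minimal sketch of backpropagation on the smallest possible "deep" network: one input, one hidden neuron with a tanh activation, and one output. The network shape, the starting weights, the scaled input and target values, and the learning rate are all assumptions for illustration. Real frameworks compute the same chain of derivatives automatically across millions of weights.

```python
import math


def forward(w1, w2, x):
    """Forward pass: the hidden neuron uses tanh, the output is linear."""
    hidden = math.tanh(w1 * x)
    return hidden, w2 * hidden


def loss_fn(w1, w2, x, target):
    """Squared error between the prediction and the target."""
    _, prediction = forward(w1, w2, x)
    return (prediction - target) ** 2


def backprop(w1, w2, x, target):
    """Work backward from the loss to each weight using the chain rule."""
    hidden, prediction = forward(w1, w2, x)
    d_prediction = 2 * (prediction - target)    # how loss changes with output
    grad_w2 = d_prediction * hidden             # output weight's share
    d_hidden = d_prediction * w2                # error passed back to hidden
    grad_w1 = d_hidden * (1 - hidden ** 2) * x  # through tanh, to input weight
    return grad_w1, grad_w2


x, target = 0.5, 1.0  # hypothetical scaled input and target
w1, w2 = 0.8, -0.4    # arbitrary starting weights
learning_rate = 0.1

loss_before = loss_fn(w1, w2, x, target)
grad_w1, grad_w2 = backprop(w1, w2, x, target)
w1 -= learning_rate * grad_w1  # step against the gradient
w2 -= learning_rate * grad_w2
loss_after = loss_fn(w1, w2, x, target)

print(loss_before, loss_after)
```

After a single update the loss is lower than before. Training repeats this step many times over many examples.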

The adjustment magnitude is controlled by the learning rate, a critical hyperparameter. Too high, and the network overshoots, bouncing between bad solutions. Too low, and training takes impractically long or gets stuck. Modern training frameworks reduce this sensitivity through adaptive learning rate algorithms such as Adam, though some tuning is usually still required. The concept matters because learning rate issues are a common source of poor model performance that vendors may not surface to WFM users.
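The effect of the learning rate can be demonstrated on a deliberately simple problem: a single weight whose loss is lowest at a known value. The loss function, the optimum of 3.0, the step count, and the three learning rates are assumptions chosen to make the behavior visible.

```python
def gradient_descent(learning_rate, steps=50, start=0.0, optimum=3.0):
    """Minimize loss = (weight - optimum)^2 by repeated small adjustments."""
    weight = start
    for _ in range(steps):
        gradient = 2 * (weight - optimum)  # slope of the loss at this weight
        weight -= learning_rate * gradient
    return weight


too_low = gradient_descent(0.001)   # barely moves from the start in 50 steps
reasonable = gradient_descent(0.1)  # settles very close to 3.0
too_high = gradient_descent(1.1)    # overshoots further on every step

print(too_low, reasonable, too_high)
```

With the high rate, each step lands further from the optimum than the last, so the weight diverges instead of converging.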

This three-step cycle repeats across the entire training dataset, often for hundreds of passes (called epochs). Gradually, the weights converge toward values that produce accurate predictions on the training data. The goal, however, is not just accuracy on training data but generalization—the ability to make accurate predictions on new, unseen data. This distinction between training performance and real-world performance is critical for WFM practitioners evaluating model claims, as described in Model Evaluation and Validation.

Types of Neural Networks Relevant to WFM

Different network architectures are designed for different data types and problem structures. WFM practitioners are most likely to encounter four families.

Feedforward Neural Networks

The simplest architecture. Data flows in one direction—from input layer through hidden layers to output layer—with no loops or feedback connections. These are the "standard" neural networks described in the learning section above.

WFM applications:

- Average handle time estimation — Given call type, agent tenure, time of day, queue wait time, and customer segment, predict expected AHT for the interaction. A feedforward network can capture non-linear interactions between these variables that linear regression would miss (e.g., the interaction between agent tenure and call complexity may not be additive).

- Agent attrition risk scoring — Given schedule satisfaction, commute time, performance trend, tenure, and compensation band, estimate the probability that an agent will leave within 90 days. The network can learn complex risk factor interactions—such as the compounding effect of low schedule satisfaction and declining performance—without the analyst needing to specify those interactions manually.

- Shrinkage prediction — Predicting effective shrinkage for a given day based on historical patterns, scheduled activities, day of week, and seasonal factors.

Feedforward networks work well when each example can be treated independently of the others, so that the order of the data points does not matter. They are less suitable for sequential or time-dependent data, which is where recurrent architectures become necessary.

Recurrent Neural Networks and LSTMs

Contact center data is inherently sequential. Call volumes follow patterns across hours, days, weeks, and months. Agent performance evolves over tenure. Customer sentiment shifts across the course of an interaction. Standard feedforward networks treat each data point independently, discarding the sequential structure that carries critical information.

Recurrent neural networks (RNNs) solve this by adding connections that loop backward—the output of a neuron at one time step becomes an additional input at the next time step. This gives the network a form of memory: when processing Tuesday's data, it has information about Monday's data still influencing its internal state.
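The recurrence can be sketched with a single hidden unit. The weights below are hand-picked assumptions rather than learned values. The point is only that the hidden state carries information from earlier steps, so the same values in a different order produce a different final state.

```python
import math


def run_rnn(sequence, w_input=0.9, w_recurrent=0.5, bias=0.0):
    """Process a sequence one step at a time, carrying a hidden state."""
    hidden = 0.0
    for value in sequence:
        # The new state depends on the current input AND the previous state
        hidden = math.tanh(w_input * value + w_recurrent * hidden + bias)
    return hidden


spike_early = run_rnn([1.0, 0.0, 0.0])  # spike seen three steps ago
spike_late = run_rnn([0.0, 0.0, 1.0])   # spike seen just now

print(spike_early, spike_late)
```

The early spike still influences the final state, but more weakly than the recent one. A feedforward network given the same three values as an unordered set could not make that distinction.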

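However, basic RNNs struggle with long-term dependencies. When predicting next January's volume, the network needs to "remember" last January, but that information must survive many intermediate time steps. During training, the error signal used to adjust the weights shrinks each time it is propagated back through one of those steps, so the influence of distant events is barely learned. This is called the vanishing gradient problem.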
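A rough piece of arithmetic shows the scale of the problem. Assume, purely for illustration, that each time step multiplies the training signal by a constant factor of 0.9. Real networks have factors that vary from step to step, but the compounding effect is the same.

```python
def surviving_signal(per_step_factor, steps):
    """Fraction of the training signal left after passing through many steps."""
    return per_step_factor ** steps


for steps in (7, 30, 365):
    print(steps, surviving_signal(0.9, steps))
```

Under this assumption nearly half the signal survives a week of daily steps, but after a year of daily steps effectively nothing is left.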

Long Short-Term Memory (LSTM) networks, introduced by Hochreiter and Schmidhuber in 1997, address this with a gating mechanism that allows the network to selectively remember or forget information across long sequences.[3] An LSTM can learn that "January follows a specific pattern regardless of what happened in the intervening months" while simultaneously learning that "yesterday's volume influences today's volume."
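The gating idea can be illustrated with a scalar LSTM cell (one input, one hidden unit) that follows the standard LSTM update equations. The parameter values below are hand-set assumptions, chosen so that the cell writes to memory only when it sees a strong input and otherwise holds on to what it has. In a trained network these values would be learned.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, p):
    """One time step of a scalar LSTM cell."""
    forget_gate = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])
    input_gate = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])
    output_gate = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])
    candidate = math.tanh(p["wc"] * x + p["uc"] * h_prev + p["bc"])
    cell = forget_gate * c_prev + input_gate * candidate  # long-term memory
    hidden = output_gate * math.tanh(cell)                # exposed output
    return hidden, cell


# Hand-set parameters (assumptions, not learned values)
params = {
    "wf": 0.0, "uf": 0.0, "bf": 8.0,    # forget gate stays near 1: keep memory
    "wi": 16.0, "ui": 0.0, "bi": -8.0,  # input gate opens only when x is large
    "wo": 0.0, "uo": 0.0, "bo": 0.0,    # output gate fixed at 0.5
    "wc": 4.0, "uc": 0.0, "bc": 0.0,    # candidate value driven by the input
}

# One strong signal, followed by 364 quiet steps
sequence = [1.0] + [0.0] * 364
hidden, cell = 0.0, 0.0
for value in sequence:
    hidden, cell = lstm_step(value, hidden, cell, params)

print(cell)
```

After 364 quiet steps the cell state still holds most of the original signal, because the forget gate stays close to 1 and the input gate stays close to 0.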

WFM applications:

- Multi-step volume forecasting — Predicting volume for the next 7, 14, or 30 days, where each day's prediction accounts for the sequence of preceding days. LSTMs have shown strong performance on contact center volume forecasting, particularly for centers with complex multi-seasonal patterns (daily, weekly, monthly, annual) that are difficult to capture with traditional time series methods.[4]

- Sequential pattern detection — Identifying that an agent's adherence has been declining over consecutive weeks (a trend), as opposed to a single bad day (noise). The recurrent structure naturally distinguishes between transient deviations and sustained trends.

- Interaction-level analysis — Processing the sequence of utterances in a customer interaction for real-time sentiment tracking, escalation prediction, or next-best-action recommendation.

A variant called GRU (Gated Recurrent Unit) simplifies the LSTM architecture while retaining much of its effectiveness. Many modern WFM platforms use GRU or LSTM layers within their forecasting engines without surfacing this detail to the user.

Main article: Probabilistic Forecasting

Convolutional Neural Networks

Convolutional neural networks (CNNs) were originally designed for image recognition. They use specialized layers that scan input data with small filters, detecting local patterns (edges, textures, shapes in images) and combining them into larger features. CNNs revolutionized computer vision and are the reason modern systems can recognize faces, read handwriting, and classify images.[1]
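The filter-scanning idea is easiest to see in one dimension. The sketch below slides a three-value filter along a short series of interval volumes. The filter values and the data are assumptions for illustration. In a real CNN the filter values are learned, and many filters run in parallel.

```python
def conv1d(signal, kernel):
    """Slide the kernel along the signal, taking a weighted sum at each stop."""
    width = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(width))
            for i in range(len(signal) - width + 1)]


volumes = [100, 102, 101, 180, 103, 99, 100]  # hypothetical interval volumes
spike_filter = [-1, 2, -1]                    # responds to a local peak

response = conv1d(volumes, spike_filter)
peak_position = response.index(max(response)) + 1  # +1 centers the window

print(response, peak_position)
```

The response is largest where the window is centered on the 180-contact spike, so the filter has located a local pattern without knowing in advance where it would occur.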

CNNs are less directly relevant to core WFM operations than recurrent networks, but they appear in adjacent applications:

- Document processing — Extracting information from scanned forms, handwritten notes, or photographed ID documents in back-office operations.

- Screen recording analysis — Some desktop analytics tools use CNNs to classify agent screen activity (which application is in focus, whether the agent is on a relevant screen during a call).

- Time series as images — A less intuitive application: some researchers have shown that converting time series data into image-like representations (spectrograms, recurrence plots) and applying CNNs can match or exceed RNN performance for certain forecasting tasks.[4]

For most WFM practitioners, CNNs are relevant primarily as a component within larger systems (e.g., the vision component of a multimodal AI system) rather than something they would deploy directly for workforce planning.

Transformer Architectures

The transformer, introduced in the 2017 paper "Attention Is All You Need" by Vaswani et al., has become the dominant architecture for language-related AI tasks and is increasingly applied to time series forecasting.[5]

The key innovation is the attention mechanism, which allows the network to learn which parts of the input are most relevant to each part of the output—without processing the input sequentially. Unlike RNNs, which must process data one step at a time (Monday before Tuesday before Wednesday), transformers can attend to any part of the input simultaneously. This makes them faster to train on modern hardware and better at capturing long-range dependencies.
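A minimal sketch of scaled dot-product attention, the core operation described in the Vaswani et al. paper, is shown below for a single query. The two-dimensional vectors and the volume values are invented for illustration. Real models use learned vectors with hundreds of dimensions and many attention heads.

```python
import math


def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Weight each value by how well its key matches the query."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    output = [sum(w * v[j] for w, v in zip(weights, values))
              for j in range(len(values[0]))]
    return weights, output


# Hypothetical encodings of three past days and the volume seen on each
keys = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
values = [[300.0], [120.0], [250.0]]
query = [1.0, 0.0]  # "which past days look like the day being forecast?"

weights, output = attention(query, keys, values)
print(weights, output)
```

All three past days are compared with the query at once rather than in sequence, and the day whose key best matches the query receives the largest weight.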

WFM relevance:

- Large language models — GPT, Claude, and other large language models are built on transformer architectures. Every WFM application that involves an LLM—agent assist tools, automated quality summaries, intelligent knowledge bases, conversational IVR—relies on transformers.

- Natural language processing — NLP tasks in WFM (call categorization, intent detection, entity extraction from transcripts) increasingly use transformer-based models rather than older RNN approaches.

- Time series transformers — Research and commercial implementations of transformer architectures for forecasting are active areas. Google's Temporal Fusion Transformer and similar architectures can model multiple time series simultaneously with attention to relevant external variables, which aligns well with multi-queue, multi-channel contact center forecasting.

For a detailed treatment of transformer-based language models and their WFM applications, see Large Language Models and Generative AI.

Neural Networks in WFM Applications

Neural networks appear across the WFM technology stack. Understanding where they operate helps practitioners ask better questions of vendors and set appropriate expectations for model performance.

Advanced Forecasting Engines

Traditional WFM forecasting relies on exponential smoothing, ARIMA, and regression—methods that are well understood, explainable, and effective for many contact center forecasting scenarios. Modern platforms increasingly offer neural network-based forecasting as an alternative or complement.

Neural network forecasting adds value in specific scenarios:

- Multi-variate, multi-seasonal patterns — When volume is driven by many external factors (marketing campaigns, product launches, economic indicators, weather) and exhibits multiple overlapping seasonal patterns (intraday, day-of-week, monthly billing cycle, annual), neural networks can capture interactions between these factors that additive decomposition methods cannot.

- Cross-series learning — A neural network can be trained across multiple queues or channels simultaneously, learning shared patterns (e.g., a product issue that affects calls and chats proportionally) that per-series models would miss.

- Regime change detection — Neural networks with attention mechanisms can learn to detect when historical patterns have broken and adjust predictions accordingly, rather than requiring manual intervention to exclude or down-weight irrelevant history.

However, for single-queue, stable-pattern forecasting with clean historical data, ARIMA and exponential smoothing frequently match or outperform neural networks while being simpler to maintain and explain. The M5 competition, part of the Makridakis series of large-scale forecasting competitions, illustrates the point: its top-ranked entries relied mainly on gradient-boosted trees rather than pure neural networks, and ensembles combining several approaches often performed best.[6]

Speech-to-Text and Voice Analytics

Virtually every modern speech analytics system uses deep neural networks at its core. The speech-to-text pipeline typically involves:

- An acoustic model (usually a deep CNN or transformer) that converts audio waveforms into phoneme probabilities.

- A language model (usually a transformer) that assembles phonemes into words and sentences, using context to resolve ambiguities.

- A post-processing layer that handles domain-specific vocabulary (product names, account number formats, company terminology).

For WFM practitioners, the practical implication is that speech analytics accuracy depends heavily on model training data. Systems trained primarily on general English may struggle with industry-specific terminology, regional accents, or noisy call center audio. Understanding that neural networks are driving this process helps practitioners diagnose accuracy issues and set realistic expectations.

Sentiment Analysis and Emotion Detection

Sentiment analysis systems analyze text (from transcripts or chat logs) or audio (tone, pace, pitch) to assess customer or agent emotional state. Modern implementations use transformer-based models that can detect sentiment shifts within a conversation—identifying the point at which a customer moves from neutral to frustrated, for example.

WFM applications include:

- Real-time alerts when customer sentiment drops below a threshold, triggering supervisor attention.

- Aggregate sentiment scoring by queue, time period, or agent for quality management.

- Correlation analysis between schedule-related factors (overtime, off-preference shifts) and agent sentiment trends.

Chatbot and IVR Natural Language Understanding

Conversational AI systems—chatbots, virtual agents, and intelligent IVR—use neural networks for intent classification (what does the customer want?) and entity extraction (what account number, what product, what date?). These are typically transformer-based models fine-tuned on domain-specific training data.

The WFM implication is capacity planning. As AI-powered self-service handles a growing share of contacts, WFM teams must forecast not just total demand but the split between AI-handled and agent-handled contacts. The accuracy of the neural network driving the chatbot directly affects this split—and therefore affects staffing requirements.
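The sensitivity is easy to quantify with simple workload arithmetic. The sketch below uses "containment rate" to mean the share of contacts fully resolved by self-service. That term, the contact volume, the handle time, and the two rates are assumptions for illustration. The calculation gives raw workload hours only, not a staffing requirement.

```python
def agent_workload_hours(total_contacts, containment_rate, aht_seconds):
    """Workload left for agents after self-service resolves its share."""
    agent_contacts = total_contacts * (1 - containment_rate)
    return agent_contacts * aht_seconds / 3600


planned = agent_workload_hours(10_000, 0.30, 360)  # plan assumed 30% contained
actual = agent_workload_hours(10_000, 0.25, 360)   # bot only managed 25%

print(planned, actual, actual - planned)
```

In this example, a five-point shortfall in containment adds roughly 50 hours of agent workload to the same total demand.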

Agent Performance Prediction

Neural networks can model agent performance trajectories, predicting metrics like quality scores, AHT convergence, and attrition risk based on training history, coaching interactions, schedule patterns, and performance trends. These models inform capacity planning (expected productive hours accounting for the learning curve of new hires) and talent management (early identification of agents who may need additional support or who are flight risks).

When Neural Networks Are Overkill

Neural networks are powerful, but power comes with costs: data requirements, computational expense, training complexity, and reduced explainability. For many WFM problems, simpler methods are not just adequate—they are preferable.

Small Datasets

A contact center with 18 months of daily volume history has roughly 550 data points. A neural network designed for volume forecasting may have thousands or tens of thousands of parameters. Training a model with more parameters than data points is a recipe for overfitting—the model memorizes the training data rather than learning generalizable patterns. Simple exponential smoothing or ARIMA, with far fewer parameters, will typically produce better forecasts on small datasets.
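The mismatch can be checked with a quick count. The sketch below counts the weights and biases in a small fully connected network and compares the total with the number of daily observations. The layer sizes (10 inputs, hidden layers of 32 and 16, one output) are an assumption chosen to represent a modest forecasting network.

```python
def count_parameters(layer_sizes):
    """Weights plus one bias per neuron for each fully connected layer."""
    return sum((n_in + 1) * n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


daily_points = 18 * 365 // 12                      # about 18 months of days
small_network = count_parameters([10, 32, 16, 1])  # hypothetical architecture

print(daily_points, small_network)
```

Even this small network has 897 parameters to fit from 547 observations.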

Rule of thumb: if a WFM team has fewer than two years of clean, consistent historical data for a queue, traditional statistical methods should be the default choice. Neural networks should be considered only when there is a clear reason to believe the additional complexity will be rewarded—for example, if the volume pattern involves complex multi-variate dependencies that simpler methods cannot capture even with manual intervention.

Simple, Stable Patterns

Many contact center volume patterns are surprisingly regular. A queue with a clear intraday shape, consistent day-of-week pattern, and predictable seasonal cycle can be forecast effectively with Holt-Winters or seasonal ARIMA. A neural network may achieve slightly better accuracy on average, but the gain often does not justify the additional complexity, computational cost, and reduced transparency.

Explainability Requirements

When a forecast drives a staffing decision that affects people—overtime mandates, shift changes, headcount reductions—stakeholders reasonably ask "why does the model predict this?" With ARIMA, the answer is traceable: "The model detected a 12% year-over-year trend and a strong Monday effect." With a deep neural network, the answer is often "the model's internal weights, distributed across thousands of neurons, collectively produce this output." This opacity has real operational consequences, discussed in the next section.

Vendor Evaluation Implications

When a WFM vendor claims their platform uses "AI-powered forecasting" or "deep learning," practitioners should ask:

- What specific architecture is used? (If the answer is vague, skepticism is warranted.)

- How does the neural network forecast compare to a simple baseline (e.g., seasonal naive, Holt-Winters) on representative data?

- What is the minimum data requirement for the neural network to outperform simpler methods?

- Can the system fall back to statistical methods when neural network forecasts degrade?
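The baseline comparison in the second question can be run by a practitioner without any specialist tooling. The sketch below builds a seasonal naive forecast (repeat the same weekday from last week) and compares its mean absolute error with that of a vendor model. All volumes, including the `model_forecast` values, are invented for illustration.

```python
def seasonal_naive(history, season_length, horizon):
    """Forecast each period as the value from one season earlier."""
    start = len(history) - season_length
    return [history[start + (h % season_length)] for h in range(horizon)]


def mean_absolute_error(forecast, actual):
    return sum(abs(f - a) for f, a in zip(forecast, actual)) / len(actual)


# Two weeks of hypothetical daily volumes, Monday through Sunday
history = [500, 480, 470, 460, 450, 200, 150,
           510, 490, 475, 465, 455, 210, 160]
actual_next_week = [520, 495, 480, 470, 460, 205, 155]
model_forecast = [515, 500, 470, 468, 450, 215, 150]  # hypothetical vendor output

baseline = seasonal_naive(history, season_length=7, horizon=7)
baseline_mae = mean_absolute_error(baseline, actual_next_week)
model_mae = mean_absolute_error(model_forecast, actual_next_week)

print(baseline_mae, model_mae)
```

In this invented example the simple baseline has the lower error, which is the kind of result that should prompt further questions before a more complex model is adopted.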

The most sophisticated platforms use ensemble approaches—combining neural network and statistical forecasts, weighted by recent performance—rather than relying exclusively on either approach.

The Black Box Problem

Neural networks are often described as "black boxes"—systems where the relationship between inputs and outputs is not human-interpretable. This characterization is largely accurate for deep networks. A network with millions of parameters distributed across dozens of layers does not produce explanations; it produces outputs.

Why This Matters in WFM

Workforce management decisions directly affect people. Schedules determine when agents work, what shifts they get, and how their work-life balance is structured. Performance models influence coaching priorities, promotion decisions, and sometimes termination. Forecast models drive staffing levels that determine overtime, voluntary time off, and headcount plans.

When these decisions are influenced by neural network outputs that cannot be explained, several problems arise:

- Trust and adoption — Analysts and supervisors who cannot understand why a model recommends a particular action are less likely to trust and act on it. This leads to either wholesale rejection of the model or, worse, selective acceptance (using the model when it agrees with intuition, ignoring it when it doesn't—which defeats the purpose).

- Error diagnosis — When a neural network forecast is wrong, identifying the cause is difficult. Was it a data quality issue? A pattern the model hasn't seen? A genuine regime change? With ARIMA, a forecast analyst can decompose the error into trend, seasonal, and residual components. With a deep network, error diagnosis often requires specialized data science expertise.

- Regulatory and ethical concerns — In jurisdictions with algorithmic transparency requirements (e.g., the EU AI Act's provisions on high-risk AI systems), using opaque neural networks for decisions that affect worker scheduling or performance evaluation may create compliance obligations.[7]

- Fairness auditing — If a neural network-based attrition model systematically assigns higher risk scores to agents in certain demographic groups, detecting and correcting this bias is significantly harder than with a transparent model where each feature's contribution is visible.

Mitigation Approaches

The field of explainable AI (XAI) has developed techniques to partially address the black box problem:

- Feature importance — Methods like SHAP (SHapley Additive exPlanations) can estimate how much each input feature contributed to a specific prediction, even for complex neural networks.

- Attention visualization — For transformer-based models, attention weights can reveal which parts of the input the model focused on (though interpreting attention weights requires caution—they do not always correspond to causal importance).

- Surrogate models — A simpler, interpretable model (like a decision tree) can be trained to approximate the neural network's behavior, providing a rough explanation of its logic.
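To give a feel for how feature importance can be estimated from the outside, the sketch below uses permutation importance, a simpler technique than SHAP and not a substitute for it: scramble one input column and measure how much the model's error grows. The `black_box` function is a stand-in with a known formula, so the result can be checked. The feature names and data are assumptions, and the scrambling is done by rotating the column by one row so the example is repeatable.

```python
def black_box(row):
    """Stand-in for an opaque model; a real network would not expose this."""
    is_monday, trend, noise = row
    return 300 + 80 * is_monday + 2 * trend  # the noise feature is ignored


def mean_abs_error(rows, targets):
    return sum(abs(black_box(r) - t) for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(rows, targets, column):
    """Error increase when one column's values are moved to the wrong rows."""
    baseline = mean_abs_error(rows, targets)
    column_values = [r[column] for r in rows]
    rotated = column_values[1:] + column_values[:1]
    scrambled = [r[:column] + (v,) + r[column + 1:]
                 for r, v in zip(rows, rotated)]
    return mean_abs_error(scrambled, targets) - baseline


rows = [(1, 5, 3), (0, 7, 8), (0, 2, 1), (1, 9, 4), (0, 4, 6)]
targets = [black_box(r) for r in rows]  # the model is exact on this toy data

names = ["is_monday", "trend", "noise"]
importances = {name: permutation_importance(rows, targets, i)
               for i, name in enumerate(names)}
print(importances)
```

Scrambling the Monday flag hurts the most, scrambling the trend hurts less, and scrambling the ignored feature changes nothing, which matches the formula hidden inside the stand-in model.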

These techniques improve transparency but do not fully resolve the problem. WFM practitioners should treat neural network outputs as one input to a decision process—not as the decision itself—particularly for high-stakes decisions that affect individuals.

Training Data Requirements

Neural networks are data-hungry. The number of parameters in a network strongly influences how much training data is needed to learn reliably without overfitting: the more parameters, the more examples are generally required. This creates a practical challenge for contact centers, which generate large volumes of data in absolute terms but may have limited data for specific modeling tasks.

What "Lots of Data" Means for Contact Centers

The data requirement depends on the task:

- Volume forecasting — A single queue with two years of half-hourly data has approximately 35,000 data points. This is generally sufficient for moderate-sized neural networks (hundreds to low thousands of parameters). Centers with five or more years of clean history and multiple queues that share patterns are well positioned for neural network forecasting.

- Agent attrition prediction — A center with 500 agents and 20% annual attrition generates roughly 100 attrition events per year. Training a neural network to predict attrition with 100-200 positive examples is challenging; traditional logistic regression or gradient-boosted trees will typically perform better unless the organization has thousands of agents across multiple sites.

- Speech analytics — Speech-to-text models require thousands of hours of transcribed audio for training. This is why virtually all contact centers use pre-trained speech models (from Google, Amazon, Nuance, or similar providers) rather than training their own. The pre-trained model may be fine-tuned on center-specific vocabulary, but the base model requires data volumes no single center could provide.

- Sentiment analysis — General-purpose sentiment models work reasonably well out of the box for contact center transcripts. Fine-tuning for domain-specific language (e.g., "cancel" meaning account cancellation rather than general negativity) requires hundreds to thousands of labeled examples.

Data Quality vs. Data Quantity

For neural networks, data quality matters as much as data quantity. Common data quality issues in WFM contexts:

- Label inconsistency — If call categorization (the labels used for training) varies by agent or site, the model learns inconsistency rather than patterns.

- Temporal discontinuities — System changes (new ACD, revised queue structure, changed call coding) create breaks in historical data that can confuse neural networks trained across the discontinuity.

- Survivorship bias — Attrition models trained only on agents who remain in the dataset (because agents who left early have incomplete records) learn a biased representation of the population.

- Distribution shift — The data the model was trained on may not represent current or future conditions. A model trained on pre-pandemic data will mispredict in a hybrid-work environment. This connects directly to the concerns addressed in Deterministic vs Probabilistic Models regarding the assumptions underlying any model.

The AI Scaffolding Framework addresses these challenges at Layer 1 (Data Fabric)—without reliable, consistent, well-governed data infrastructure, neural network deployments will underperform regardless of model sophistication.

Practical Considerations

Pre-Trained vs. Custom Models

Most WFM neural network applications use pre-trained models—networks trained on massive general-purpose datasets and then adapted for specific tasks. This approach, called transfer learning, is practical because:

- Training large neural networks from scratch requires computational resources (GPU clusters, weeks of training time) that most WFM organizations cannot justify.

- Pre-trained models have already learned general patterns (language structure, audio features, time series dynamics) that transfer well to WFM-specific tasks.

- Fine-tuning a pre-trained model on domain-specific data requires far less data and computation than training from scratch.

The practical implication: when a WFM vendor says their system uses "AI" or "deep learning," the neural network components are almost certainly built on pre-trained foundations (open-source models like BERT for NLP tasks, Whisper for speech-to-text, or proprietary equivalents) with domain-specific fine-tuning. This is not a weakness—it is the standard, efficient approach. The vendor's value-add lies in the fine-tuning, the integration with WFM workflows, and the operational infrastructure around the model, not in having trained a neural network from scratch.

Evaluating "AI-Powered" Vendor Claims

The term "AI-powered" in WFM vendor marketing can mean anything from a genuine deep learning forecasting engine to a set of hard-coded business rules with a chatbot interface. Practitioners can cut through the ambiguity with specific questions:

- What training data was the model trained on? — Relevant, recent, representative data from contact center environments is necessary. A language model trained on internet text will behave differently than one fine-tuned on contact center transcripts.

- How is the model evaluated? — Ask for performance metrics on held-out test data, not training data. Ask how the test data was selected (random split, time-based split, cross-validation). Time-based splits are essential for forecasting models—forecast evaluation using randomly shuffled data gives artificially inflated accuracy.

- What happens when the model is wrong? — Does the system provide confidence intervals? Can analysts override model outputs? Is there a fallback to simpler methods when neural network performance degrades?

- How often is the model retrained? — Neural network models for dynamic environments (contact centers experience constant change) need regular retraining. A model trained once and deployed indefinitely will degrade as the operational environment shifts.

- What is the explainability story? — For high-stakes applications (performance management, staffing decisions), what tools does the vendor provide for understanding model outputs?
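The difference between a time-based split and a random split, raised in the evaluation question above, can be shown in a few lines. The record layout (day number, volume), the 20% test fraction, and the function names are assumptions for illustration.

```python
import random


def time_based_split(records, test_fraction=0.2):
    """Train on the earliest records, test on the most recent ones."""
    ordered = sorted(records, key=lambda r: r[0])
    test_size = round(len(ordered) * test_fraction)
    cutoff = len(ordered) - test_size
    return ordered[:cutoff], ordered[cutoff:]


def random_split(records, test_fraction=0.2, seed=0):
    """Shuffle first, so test days end up scattered among training days."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    test_size = round(len(shuffled) * test_fraction)
    cutoff = len(shuffled) - test_size
    return shuffled[:cutoff], shuffled[cutoff:]


records = [(day, 300 + day) for day in range(100)]  # (day number, volume)

train, test = time_based_split(records)
leaky_train, leaky_test = random_split(records)

print(max(d for d, _ in train), min(d for d, _ in test))
print(max(d for d, _ in leaky_train), min(d for d, _ in leaky_test))
```

With the time-based split, every test day comes after every training day, as it would in production. With the random split, the model is trained on days that come after some of the days it is tested on, which lets it interpolate rather than genuinely forecast.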

The Ensemble Principle

The most robust WFM implementations do not rely on any single model type. Instead, they combine multiple approaches:

- Statistical methods (ARIMA, exponential smoothing) for stable, well-understood patterns.

- Neural networks (LSTMs, transformers) for complex, multivariate, non-linear patterns.

- Ensemble methods that weight the outputs of multiple models based on recent performance.

This approach captures the strengths of each method while mitigating individual weaknesses. It also provides a natural performance benchmark—if the neural network consistently fails to outperform the statistical baseline, it should be removed from the ensemble rather than maintained for the sake of technological sophistication.
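
One simple way to weight models by recent performance is inverse-error weighting, sketched below in Python. This is an illustrative assumption about how such an ensemble could work, not the method of any particular WFM platform; the model names, the forecast values, and the use of mean absolute error are all hypothetical.

```python
from typing import Dict, Sequence


def mean_absolute_error(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Average absolute difference between actuals and forecasts."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("actual and predicted must be non-empty and the same length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def inverse_error_weights(
    recent_actuals: Sequence[float],
    recent_forecasts: Dict[str, Sequence[float]],
    eps: float = 1e-9,
) -> Dict[str, float]:
    """Weight each model by 1 / (recent MAE), normalized so the weights sum to 1."""
    inverse = {
        name: 1.0 / (mean_absolute_error(recent_actuals, preds) + eps)
        for name, preds in recent_forecasts.items()
    }
    total = sum(inverse.values())
    return {name: value / total for name, value in inverse.items()}


def combine(next_forecasts: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of each model's forecast for the next interval."""
    return sum(weights[name] * next_forecasts[name] for name in weights)


# Hypothetical recent actuals and each model's forecasts for the same intervals.
recent_actuals = [1000, 1100, 900, 1050]
recent_forecasts = {
    "exponential_smoothing": [980, 1120, 920, 1030],  # off by 20 each interval
    "lstm": [1040, 1060, 940, 1010],                  # off by 40 each interval
}

weights = inverse_error_weights(recent_actuals, recent_forecasts)
blended = combine({"exponential_smoothing": 1000.0, "lstm": 1060.0}, weights)
print(weights)
print(round(blended, 1))
```

In this toy data the statistical model has half the recent error of the neural network, so it receives roughly twice the weight. A model whose weight stays persistently low over many evaluation windows is a candidate for removal from the ensemble.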

The Human-in-the-Loop Imperative

Neural networks in WFM should augment human judgment, not replace it. The most effective deployments position neural network outputs as recommendations that analysts can accept, modify, or reject based on contextual knowledge that the model does not have. An analyst who knows that a major client just announced a product recall—information not yet in any dataset—should override the neural network's volume forecast, and the system should make that override easy and trackable.

This principle connects to the broader framework described in AI in Workforce Management: AI systems in WFM succeed when they are designed as collaborative tools within a governed decision process, not as autonomous decision-makers.
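
As a rough illustration of what an "easy and trackable" override could look like in data terms, the Python sketch below records each analyst override alongside the model's original forecast. The class names, fields, and example values are hypothetical and are not drawn from any specific WFM product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class ForecastOverride:
    """Immutable audit record of one analyst override."""
    interval: str
    model_forecast: float
    analyst_forecast: float
    analyst_id: str
    reason: str
    recorded_at: datetime


@dataclass
class ForecastReview:
    """A model forecast for one interval plus the history of any overrides."""
    interval: str
    model_forecast: float
    audit_log: List[ForecastOverride] = field(default_factory=list)

    def override(self, analyst_forecast: float, analyst_id: str, reason: str) -> None:
        """Record an override; a non-empty reason is required so it stays auditable."""
        if not reason.strip():
            raise ValueError("an override must include a reason")
        self.audit_log.append(
            ForecastOverride(
                interval=self.interval,
                model_forecast=self.model_forecast,
                analyst_forecast=analyst_forecast,
                analyst_id=analyst_id,
                reason=reason,
                recorded_at=datetime.now(timezone.utc),
            )
        )

    @property
    def final_forecast(self) -> float:
        """The latest analyst value if one exists, otherwise the model's forecast."""
        if self.audit_log:
            return self.audit_log[-1].analyst_forecast
        return self.model_forecast


# Hypothetical usage: the analyst knows about a product recall the model has not seen.
review = ForecastReview(interval="next-monday", model_forecast=1200.0)
review.override(1500.0, analyst_id="analyst_01", reason="Client announced a product recall")
print(review.final_forecast, len(review.audit_log))
```

Keeping the model's original value next to the analyst's value makes it possible to measure later whether overrides improved or worsened accuracy, which feeds back into the governed decision process.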

Relationship to Other AI Concepts

Neural networks are one branch of the broader machine learning family, which itself is a subset of artificial intelligence. Understanding where neural networks sit in this hierarchy helps practitioners navigate vendor terminology and evaluate technology choices.

- Machine learning encompasses neural networks but also includes decision trees, random forests, gradient-boosted machines, support vector machines, and statistical methods. Many of these non-neural methods are better suited to specific WFM tasks, particularly when data is limited or explainability is important.

- Deep learning is the subset of neural network methods using deep (multi-layer) architectures. Nearly all modern neural network applications in WFM qualify as deep learning.

- Natural Language Processing (NLP) is an application domain—not a model type—that heavily uses neural networks (particularly transformers) as its underlying technology.

- Large language models (LLMs) are very large transformer-based neural networks trained on massive text corpora. They represent the current frontier of neural network capability for language tasks and are increasingly integrated into WFM platforms for summarization, knowledge retrieval, and agent assistance.

See Also

- Artificial Intelligence Fundamentals — Overview of AI concepts and terminology

- Machine Learning Concepts — Broader ML context including supervised, unsupervised, and reinforcement learning

- AI in Workforce Management — How AI technologies are applied across WFM operations

- AI Scaffolding Framework — Infrastructure requirements for successful AI deployment

- Machine Learning for Volume Forecasting — Practical ML applications in demand forecasting

- Probabilistic Forecasting — Uncertainty quantification in forecasting

- Forecasting Methods — Comprehensive overview of forecasting approaches

- ARIMA Models — Traditional time series methods that complement or compete with neural networks

- Exponential Smoothing — Statistical smoothing methods for forecasting

- Speech Analytics — Speech-to-text and voice analytics powered by deep learning

- Conversational AI — Chatbots and virtual agents built on neural networks

- Sentiment Analysis in Customer Service — Neural network applications in customer experience

- Natural Language Processing — NLP techniques and their WFM applications

- Large Language Models and Generative AI — Transformer-based language models

- Model Evaluation and Validation — Assessing model performance and reliability

- Deterministic vs Probabilistic Models — Conceptual framework for model types

References

- [1] Goodfellow, Ian; Bengio, Yoshua; Courville, Aaron. Deep Learning. MIT Press, 2016. ISBN 978-0262035613. https://www.deeplearningbook.org/

- [2] McCulloch, Warren S.; Pitts, Walter. "A Logical Calculus of the Ideas Immanent in Nervous Activity." Bulletin of Mathematical Biophysics, vol. 5, no. 4, 1943, pp. 115–133.

- [3] Hochreiter, Sepp; Schmidhuber, Jürgen. "Long Short-Term Memory." Neural Computation, vol. 9, no. 8, 1997, pp. 1735–1780.

- [4] Borovykh, Anastasia; Bohte, Sander; Oosterlee, Cornelis W. "Conditional Time Series Forecasting with Convolutional Neural Networks." arXiv preprint arXiv:1703.04691, 2017.

- [5] Vaswani, Ashish; Shazeer, Noam; Parmar, Niki; et al. "Attention Is All You Need." Advances in Neural Information Processing Systems 30 (NeurIPS 2017), 2017.

- [6] Makridakis, Spyros; Spiliotis, Evangelos; Assimakopoulos, Vassilios. "The M5 Accuracy Competition: Results, Findings, and Conclusions." International Journal of Forecasting, vol. 38, no. 4, 2022, pp. 1346–1364.

- [7] European Parliament and Council of the European Union. "Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act)." Official Journal of the European Union, 2024.