Machine Learning for Volume Forecasting

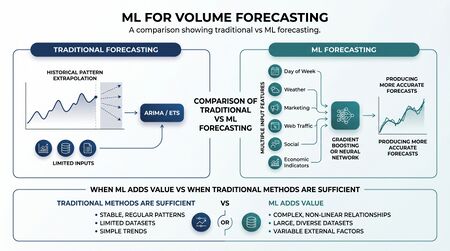

Machine learning (ML) methods for contact volume forecasting are a family of data-driven approaches, including gradient boosting, recurrent neural networks, and additive regression models, that learn predictive patterns from historical data without requiring the analyst to specify a statistical model structure explicitly. Adoption in large-scale forecasting grew after competitive benchmarks, most notably the M4 and M5 forecasting competitions, provided evidence about when ML methods outperform classical statistical approaches and when they do not. In workforce planning, ML-based volume forecasting is most valuable when contact volumes are influenced by a rich set of external predictors, when interaction effects between variables are complex, or when the number of time series to be forecast simultaneously is large enough that manual model-building is impractical. ML methods are most closely associated with WFM Labs Maturity Model Levels 4 and 5, although pilot use can begin at Level 3 and the boundary between statistical and ML methods is increasingly blurred by hybrid approaches.

Gradient Boosting Methods

Overview

Gradient boosting constructs an ensemble of decision trees, each tree learning from the residuals of the previous ensemble, iteratively reducing forecast error. XGBoost (Extreme Gradient Boosting), introduced by Chen and Guestrin (2016), and its successors LightGBM and CatBoost became dominant algorithms in tabular data forecasting by combining strong predictive performance with computational efficiency.[1]
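The residual-fitting idea can be illustrated with a deliberately small sketch. It assumes squared-error loss and one-split trees ("stumps") and uses only the Python standard library; the function names and the toy hourly data are hypothetical. Production libraries such as XGBoost, LightGBM, and CatBoost add regularization, deeper trees, and many optimizations that are not shown here.

```python
# Minimal gradient boosting for squared error using one-split "stumps".
# Illustrative only; all names and data below are hypothetical.

def fit_stump(x, residuals):
    """Find the threshold on x that best splits the residuals into two means."""
    best = None
    for threshold in sorted(set(x))[:-1]:  # last value would leave the right side empty
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = sum((r - left_mean) ** 2 for r in left)
        sse += sum((r - right_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]  # (threshold, left_mean, right_mean)


def predict_stump(stump, xi):
    threshold, left_mean, right_mean = stump
    return left_mean if xi <= threshold else right_mean


def fit_boosting(x, y, n_rounds=50, learning_rate=0.1):
    """Fit stumps sequentially, each one to the current ensemble's residuals."""
    base = sum(y) / len(y)  # start from the mean
    preds = [base] * len(y)
    stumps = []
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, preds)]
        stump = fit_stump(x, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * predict_stump(stump, xi)
                 for p, xi in zip(preds, x)]
    return base, learning_rate, stumps


def predict_boosting(model, xi):
    base, learning_rate, stumps = model
    return base + learning_rate * sum(predict_stump(s, xi) for s in stumps)


def training_mse(model, x, y):
    return sum((predict_boosting(model, xi) - yi) ** 2
               for xi, yi in zip(x, y)) / len(y)


# Toy data: hour of day versus offered calls (made-up numbers).
hours = [8, 9, 10, 11, 12, 13, 14, 15, 16, 17]
calls = [40, 75, 110, 120, 95, 90, 105, 100, 70, 45]

model_few = fit_boosting(hours, calls, n_rounds=5)
model_many = fit_boosting(hours, calls, n_rounds=100)
```

Comparing `training_mse(model_few, hours, calls)` with `training_mse(model_many, hours, calls)` shows the training error falling as rounds are added. Training error alone says nothing about forecast accuracy, which has to be checked on held-out data.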

Application to Contact Volume Forecasting

In contact center volume forecasting, gradient boosting is applied by converting the time-series forecasting problem into a supervised regression problem. Lagged volume values, calendar features (day of week, hour of day, week of year, holiday indicators), and external predictors (marketing send counts, website traffic, CRM pipeline activity) are constructed as features. The model learns the relationship between these features and future volume, producing a point forecast.
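A minimal sketch of this supervised framing for daily data is shown below. The function name, the chosen lags, and the sample volumes are assumptions for illustration, and the holiday date is hypothetical; external predictors would be added as further keys in the same feature dictionary.

```python
from datetime import date, timedelta


def make_supervised_rows(start, volumes, lags=(1, 7), holidays=frozenset()):
    """Turn a daily volume series into (features, target) rows.

    Every feature uses only values observed before the target day, so the
    same construction can be repeated at forecast time.
    """
    rows = []
    max_lag = max(lags)
    for i in range(max_lag, len(volumes)):
        day = start + timedelta(days=i)
        features = {f"lag_{k}": volumes[i - k] for k in lags}
        features["day_of_week"] = day.weekday()       # Monday = 0
        features["week_of_year"] = day.isocalendar()[1]
        features["is_holiday"] = int(day in holidays)
        rows.append((features, volumes[i]))
    return rows


start = date(2024, 1, 1)  # a Monday
volumes = [500, 520, 510, 495, 480, 210, 180, 530, 515, 505]  # made-up data
rows = make_supervised_rows(
    start, volumes, holidays={date(2024, 1, 8)}  # hypothetical holiday
)
```

The first seven days produce no rows because the 7-day lag is not yet available. Each remaining row is an ordinary tabular training example that a gradient boosting regressor can consume.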

Key advantages in this context:

- Naturally incorporates non-linear interactions between calendar effects and external drivers.

- Handles missing values without imputation.

- Scales efficiently to thousands of simultaneous time series (skill queues, channel-level forecasts).

- Supports Hierarchical Forecasting applications where forecasts must be produced at multiple aggregation levels.

Key limitations:

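- Does not extrapolate outside the range of training data: tree predictions are averages of observed training targets, so a sustained trend beyond historical levels is under-forecast unless the target is detrended or differenced, and structural breaks (new channels, major product changes) require retraining.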

- Feature engineering quality — particularly the construction of lag features and calendar encodings — has substantial influence on model performance.

- Produces point forecasts by default; Probabilistic Forecasting requires additional techniques (quantile regression, conformal prediction).
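One of the techniques named above, conformal prediction, can be sketched in its simplest "split" form: absolute errors on a held-out calibration set determine a fixed interval width around each point forecast. The function names and calibration numbers are hypothetical. The usual coverage guarantee assumes exchangeable data, which time series generally violate, so the resulting coverage should be treated as approximate.

```python
import math


def conformal_half_width(calibration_actuals, calibration_forecasts, alpha=0.1):
    """Half-width of a split-conformal interval targeting 1 - alpha coverage."""
    scores = sorted(
        abs(a - f) for a, f in zip(calibration_actuals, calibration_forecasts)
    )
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))  # 1-based rank of the score to use
    if rank > n:
        raise ValueError("calibration set too small for this alpha")
    return scores[rank - 1]


def prediction_interval(point_forecast, half_width):
    """Symmetric interval, floored at zero because volumes cannot be negative."""
    return max(0, point_forecast - half_width), point_forecast + half_width


# Made-up calibration data: model forecasts and what actually arrived.
cal_forecasts = [100, 110, 95, 120, 130, 90, 105, 115, 100]
cal_actuals = [105, 98, 98, 112, 150, 97, 95, 130, 96]

half_width = conformal_half_width(cal_actuals, cal_forecasts, alpha=0.2)
interval = prediction_interval(100, half_width)
```

A single global width is the crudest option. Intervals that widen at busy hours or long horizons would need the scores to be normalized or computed per segment, which this sketch does not attempt.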

Recurrent Neural Networks and LSTMs

Long Short-Term Memory networks (LSTMs) are a class of recurrent neural network designed to learn long-range temporal dependencies in sequential data. Unlike gradient boosting, LSTMs operate directly on the sequence structure of time series, learning temporal patterns through gated memory cells that can retain information across many time steps.
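For reference, one common formulation of the gated memory cell is given below. The notation is an assumption, not taken from any specific implementation: $x_t$ is the input at step $t$, $h_t$ the hidden state, $c_t$ the cell state, $\sigma$ the logistic sigmoid, and $\odot$ element-wise multiplication.

```latex
\begin{aligned}
f_t &= \sigma(W_f x_t + U_f h_{t-1} + b_f) && \text{(forget gate)} \\
i_t &= \sigma(W_i x_t + U_i h_{t-1} + b_i) && \text{(input gate)} \\
o_t &= \sigma(W_o x_t + U_o h_{t-1} + b_o) && \text{(output gate)} \\
\tilde{c}_t &= \tanh(W_c x_t + U_c h_{t-1} + b_c) && \text{(candidate cell state)} \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
```

When $f_t$ stays close to 1, the cell state $c_t$ carries information forward with little decay, which is how the network can retain a pattern across many time steps.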

In contact volume forecasting, LSTMs are most applicable when:

- Long-range seasonal dependencies (e.g., annual cycles with complex intraday patterns) are important and difficult to encode as tabular features.

- Multiple related time series can be jointly modeled, allowing the network to learn shared patterns across skill queues or geographies.

- The forecasting horizon is medium-term (days to weeks) rather than short-interval (next 15 minutes).

LSTMs require substantially more training data than gradient boosting or classical statistical methods, and are sensitive to hyperparameter tuning. The M4 competition findings (Makridakis et al., 2018) demonstrated that pure ML methods — including LSTMs — did not consistently outperform statistical methods on the full competition dataset, though hybrid approaches (statistical + ML) performed strongly.[2]

Prophet

Prophet, developed by Taylor and Letham at Meta (then Facebook) and released as an open-source library in 2017, is an additive regression model designed for business time series with strong seasonal patterns and irregular holidays.[3]

Prophet decomposes a time series into trend, seasonality (weekly and annual, with daily seasonality available for sub-daily data), and holiday effects. The trend is fitted as a piecewise linear or saturating logistic curve, and each seasonal component as a Fourier series. Primary advantages for contact center forecasting:

- Automatic change point detection — identifies structural changes in trend without manual specification.

- Holiday and event modeling — named holidays and one-time events can be registered directly, preventing these observations from distorting seasonality estimation.

- Analyst-friendly interface — parameters are interpretable and adjustable by domain experts without deep statistical training, aligning with the role of Judgmental Forecasting overlays in forecast governance.

Prophet's limitations include reduced performance on highly irregular time series, limited ability to incorporate complex external predictors compared to gradient boosting, and a tendency to underperform specialized statistical models on short time series with strong autocorrelation structure.
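The Fourier-series representation of seasonality mentioned above can be sketched without the library itself. The code below is not Prophet's implementation. It only shows how sine and cosine terms of a given period and order combine into a smooth seasonal curve, and the function names, order, and coefficients are hypothetical.

```python
import math


def fourier_terms(t, period, order):
    """Return sin/cos pairs for harmonics 1..order at time t (same unit as period)."""
    terms = []
    for n in range(1, order + 1):
        angle = 2.0 * math.pi * n * t / period
        terms.extend([math.sin(angle), math.cos(angle)])
    return terms


def seasonal_component(t, period, coefficients):
    """Weighted sum of Fourier terms; expects two coefficients per harmonic."""
    order = len(coefficients) // 2
    return sum(c * x for c, x in zip(coefficients, fourier_terms(t, period, order)))


# Hypothetical fitted coefficients for a weekly pattern of order 3.
weekly_coefficients = [0.5, -0.2, 0.1, 0.3, 0.05, -0.1]
weekly_effect = [seasonal_component(day, 7, weekly_coefficients) for day in range(14)]
```

A higher order lets the curve follow sharper within-period changes at the cost of a greater risk of overfitting. In a fitted model the coefficients are estimated from data rather than set by hand.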

M4 and M5 Competition Findings

The M4 competition (2018, 100,000 time series across multiple domains and frequencies) produced several findings directly relevant to contact volume forecasting practice:

- No single ML method dominated. Pure ML methods (neural networks, gradient boosting) did not systematically outperform classical statistical methods across the full dataset.

- Hybrid methods won. The top-performing entries combined statistical base forecasts with ML-based error correction or ensemble weighting, achieving accuracy gains over either method alone.

- Forecast Combination outperforms selection. Simple averages of multiple model forecasts consistently outperformed single best-model selection, consistent with the broader forecasting literature.

The M5 competition (2020, Walmart retail sales data) extended these findings to a hierarchical forecasting context and confirmed the strong performance of gradient boosting (specifically LightGBM) when external predictors and hierarchical structure are present.
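The forecast combination finding is simple to apply in practice. The sketch below averages forecasts from several models for the same intervals, with optional weights. The model names and numbers are made up, and the function is an illustration rather than a method prescribed by the competition organizers.

```python
def combine_forecasts(forecasts_by_model, weights=None):
    """Combine per-model forecasts covering the same intervals.

    forecasts_by_model maps a model name to a list of forecasts. With no
    weights, every model counts equally (the simple average).
    """
    names = list(forecasts_by_model)
    horizon = len(forecasts_by_model[names[0]])
    if any(len(forecasts_by_model[n]) != horizon for n in names):
        raise ValueError("all models must forecast the same intervals")
    if weights is None:
        weights = {n: 1.0 for n in names}
    total = sum(weights[n] for n in names)
    return [
        sum(weights[n] * forecasts_by_model[n][i] for n in names) / total
        for i in range(horizon)
    ]


# Made-up daily forecasts from three models.
forecasts = {
    "ets": [510.0, 495.0, 480.0],
    "gradient_boosting": [530.0, 505.0, 470.0],
    "seasonal_naive": [520.0, 500.0, 490.0],
}
combined = combine_forecasts(forecasts)
```

Equal weights are a reasonable default. Weights estimated from past accuracy can help, but they add estimation noise of their own, which is one reason the simple average is hard to beat.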

When ML Beats Classical Methods — and When It Does Not

| Condition | Favors ML | Favors Classical (ARIMA/ETS) |
|---|---|---|
| Number of time series | Many (>100) | Few (<20) |
| External predictors available | Yes (marketing, web traffic, CRM) | No or few |
| Historical data length | Long (2+ years, high frequency) | Short or sparse |
| Structural breaks present | With retraining | Intervention modeling |
| Interpretability required | Lower | Higher |
| Irregular holidays/events | Prophet, gradient boosting | Manual adjustment |
| Intermittent demand | Specialized methods preferred | Croston's method |

Implementation Considerations

Feature Engineering

The quality of features engineered from raw time series and external data typically determines ML forecast quality more than algorithm selection. Standard feature sets for contact volume forecasting include:

- Lagged volume values (t-1, t-7, t-14, t-28 for daily data)

- Rolling means and standard deviations over multiple windows

- Calendar indicators: day of week, hour of day, month, week of year

- Holiday and event flags

- Promotion/campaign flags from marketing systems

- Prior-period service level (captures feedback loops between volume and deflection)
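Rolling statistics are a common place for target leakage, because a window that includes the value being predicted makes the feature unrealistically informative. The sketch below computes rolling mean and standard deviation from strictly earlier observations only. The function name and the sample data are hypothetical.

```python
from statistics import mean, stdev


def rolling_features(volumes, window):
    """Rolling mean and standard deviation of the `window` values before each point.

    The value at position i is excluded from its own window. `window` must be
    at least 2 so that a sample standard deviation is defined.
    """
    features = []
    for i in range(len(volumes)):
        history = volumes[max(0, i - window):i]
        if len(history) < window:
            features.append(None)  # not enough history yet
        else:
            features.append({"mean": mean(history), "std": stdev(history)})
    return features


daily_volumes = [500, 520, 510, 495, 480, 210, 180, 530]  # made-up data
features = rolling_features(daily_volumes, window=3)
```

For multi-step forecasts the same care applies to the forecast horizon: a feature built from yesterday's volume is not available when forecasting two weeks ahead, so lags and windows need to be offset by at least the lead time.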

Retraining Cadence

ML models for contact volume must be retrained on a defined cadence to incorporate recent data and adapt to structural changes. Monthly or quarterly full retraining with weekly incremental updates is a common pattern.

Evaluation and Governance

ML forecasts should be evaluated on MAPE, WAPE, and Forecast Bias using a time-ordered holdout set, so that the model is scored only on periods later than those it was trained on, and compared to classical baselines (seasonal naive, ETS) before deployment. Production ML forecasting systems require monitoring for data drift, meaning changes in the statistical properties of input features that signal model degradation.
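A minimal sketch of such a comparison follows. The metric definitions are common ones, but conventions vary: here bias is positive for over-forecasting and expressed as a percentage of total actual volume, which is an assumption. The data and the candidate model's forecasts are made up.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error; intervals with zero actuals are skipped."""
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    return 100.0 * sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def wape(actuals, forecasts):
    """Weighted absolute percentage error: total absolute error over total actual."""
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return 100.0 * total_error / sum(abs(a) for a in actuals)


def forecast_bias(actuals, forecasts):
    """Net over-forecast (+) or under-forecast (-) as a percent of total actual."""
    return 100.0 * sum(f - a for a, f in zip(actuals, forecasts)) / sum(actuals)


def seasonal_naive(history, horizon, season_length=7):
    """Repeat the last full season of history across the forecast horizon."""
    last_season = history[-season_length:]
    return [last_season[h % season_length] for h in range(horizon)]


# Time-ordered split: two weeks of training data, the following week held out.
train = [500, 520, 510, 495, 480, 210, 180, 505, 525, 515, 500, 485, 215, 185]
holdout = [510, 530, 520, 490, 480, 220, 190]

baseline = seasonal_naive(train, horizon=len(holdout))
candidate = [512, 528, 515, 492, 478, 225, 188]  # hypothetical ML forecast

baseline_wape = wape(holdout, baseline)
candidate_wape = wape(holdout, candidate)
```

A candidate model that cannot beat the seasonal naive WAPE on several holdout windows is hard to justify deploying, whatever its complexity.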

Maturity Model Considerations

At Level 3 (Integrated), organizations may deploy Prophet or simple gradient boosting models for specific high-volume queues, typically as pilot implementations alongside existing statistical models.

At Level 4 (Optimized), ML-based forecasting is systematically deployed across the queue portfolio, with formal model comparison and governance. Hybrid approaches combining statistical and ML methods are used where evidence supports performance improvement.

At Level 5 (Adaptive), ML forecasting systems self-retrain on new data, detect their own performance degradation, and flag anomalies for human review. Forecasts cover human and AI agent capacity simultaneously as part of a unified workforce demand model.

Related Concepts

- Forecasting Methods

- ARIMA Models

- Exponential Smoothing

- Probabilistic Forecasting

- Forecast Combination

- Hierarchical Forecasting

- Reforecast and Rolling Forecast Methodology

- MAPE, WAPE, and Forecast Bias

- Time Series Decomposition

- Judgmental Forecasting

References

1. Chen, T. & Guestrin, C. (2016). XGBoost: A scalable tree boosting system. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (pp. 785–794).

2. Makridakis, S., Spiliotis, E. & Assimakopoulos, V. (2018). The M4 competition: Results, findings, conclusion and way forward. International Journal of Forecasting, 34(4), 802–808.

3. Taylor, S.J. & Letham, B. (2018). Forecasting at scale. The American Statistician, 72(1), 37–45.