Judgmental Forecasting

Judgmental Forecasting is the disciplined use of subject-matter-expert (SME) judgment alongside statistical forecasting methods. WFM teams already do this informally — adjusting a forecast when they know about an upcoming marketing campaign, a product launch, or a one-time event. Doing it well requires structure, documentation, and explicit separation of the model from the override.

The case for judgmental adjustment: statistical methods learn from history. Events that haven't happened before — or haven't happened in a way the data captures — are exactly the ones where SME knowledge adds value. The case for restraint: human judgment is biased. Studies of judgmental forecasting consistently find that unstructured adjustments often degrade rather than improve accuracy.

This page documents the methods, the biases, and the operational discipline that lets judgmental forecasting earn its place alongside statistical methods.

When judgment beats statistics

Three patterns where SME judgment reliably outperforms a statistical model alone:

- Knowledge of upcoming events not in historical data. A new product launches next month; marketing spend spikes; a competitor exits the market. The model has no history of these events; the SME does.

- Structural change recognized in real time. Customer demographics shifted; a regulation took effect; the business model evolved. The historical data still reflects the old structure; the SME knows the structure has changed.

- One-time anomalies worth correcting. The history feeding the forecast is contaminated by an outage, a data-quality incident, or a holiday effect that the model didn't handle correctly. The SME knows to clean it.

In all three patterns, SME knowledge is information the statistical model literally cannot have.

When statistics beats judgment

Three patterns where unstructured judgmental adjustment degrades accuracy:

- Routine forecasting. For typical week-over-week volume forecasts, a well-tuned ETS model outperforms most analyst overrides. The analyst's "I think next week will be lower because Tuesday felt slow" is anecdote; the model has the seasonal pattern explicitly.

- Optimism / pessimism bias. Forecasters under pressure to please stakeholders systematically adjust upward (when growth is the goal) or downward (when cost is the concern). The bias is unconscious and persistent.

- Anchoring on recent observations. "Volume was high last week, so I'm raising next week's forecast." The recent observation is already incorporated by an exponential smoothing model — adjusting again double-counts it.

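The empirical evidence is striking: across many studies, analyst judgmental adjustments to statistical forecasts are about as likely to make accuracy worse as to make it better. Fildes et al. (2009), for example, found that small adjustments often damaged accuracy while larger ones tended to improve it, and that upward adjustments were much less likely to help than downward ones.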

The operational discipline: judgmental adjustment is justified only when the SME has information the model literally cannot have. "I have a feeling" is not justification.
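To make the double-counting point about anchoring concrete, here is a minimal sketch using simple exponential smoothing, the simplest member of the exponential smoothing family. The volumes, the smoothing weight `ALPHA`, and the 5% analyst bump are all hypothetical values chosen for illustration, not recommendations.

```python
def ses_forecast(history, alpha):
    """One-step-ahead forecast from simple exponential smoothing."""
    level = history[0]  # initialise the level at the first observation
    for y in history[1:]:
        level = alpha * y + (1 - alpha) * level
    return level

ALPHA = 0.3  # assumed smoothing weight, for illustration only

flat_weeks = [1000, 1000, 1000, 1000]   # hypothetical weekly volumes
with_high_week = flat_weeks + [1200]    # last week ran high

before = ses_forecast(flat_weeks, ALPHA)
after = ses_forecast(with_high_week, ALPHA)
model_reaction = after - before          # the model has already moved up

# An analyst who adds 5% "because last week was high" stacks a second
# reaction on top of the one the model already made.
analyst_bump = 0.05 * after
double_counted = after + analyst_bump

print(f"model reaction: {model_reaction:.0f}, analyst bump: {analyst_bump:.0f}")
```

With these numbers the model has already raised its forecast by about 60 contacts in response to the high week; the analyst's bump adds roughly 53 more for the same piece of information.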

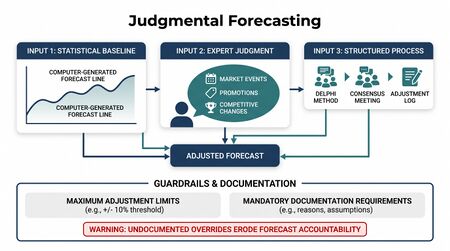

Methods of judgmental forecasting

Pure judgmental forecasting

The forecast is generated entirely by SME estimation, with no statistical model. Used when no historical data exists (new business, new market, new product) or when the data is so contaminated that a statistical baseline is meaningless.

Best practice: multiple SMEs forecast independently; reconcile via discussion or formal aggregation. Avoid groupthink and anchoring effects.

Judgmental adjustment to statistical forecasts

The most common pattern in WFM. A statistical method produces a baseline forecast; the SME adjusts it based on knowledge not in the model. Adjustments are documented and tracked.

Process discipline:

- Generate the unadjusted statistical forecast first

- Document the SME adjustment as a separate quantity (size, direction, reason)

- Store both the unadjusted forecast and the adjusted forecast for later evaluation

- After the actual is observed, evaluate which was more accurate

Over time, the data on past adjustments tells you which kinds of overrides actually help and which kinds make things worse.
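The process steps above amount to storing the baseline and the override as separate fields. The following is one possible record layout, sketched under the assumption that adjustments are additive; the class name `AdjustmentRecord` and all field names are hypothetical, not part of any particular WFM platform.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class AdjustmentRecord:
    period: date
    baseline: float                 # unadjusted statistical forecast, stored first
    adjustment: float               # signed SME override, kept as its own quantity
    reason: str                     # why the SME believes the model is missing something
    adjusted_by: str
    actual: Optional[float] = None  # filled in once the period has been observed

    @property
    def adjusted(self) -> float:
        """The forecast that was actually published."""
        return self.baseline + self.adjustment

    def winner(self) -> Optional[str]:
        """Which forecast was closer to the actual, once it is known."""
        if self.actual is None:
            return None
        base_err = abs(self.actual - self.baseline)
        adj_err = abs(self.actual - self.adjusted)
        if adj_err < base_err:
            return "adjusted"
        if adj_err > base_err:
            return "baseline"
        return "tie"

# Hypothetical example: a +150 override for a campaign launch.
record = AdjustmentRecord(
    period=date(2025, 3, 3),
    baseline=1000.0,
    adjustment=150.0,
    reason="marketing campaign launch",
    adjusted_by="analyst_a",
)
record.actual = 1120.0
print(record.adjusted, record.winner())
```

Because the baseline and the adjustment are never merged into a single number, the later evaluation step needs no reconstruction work.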

Delphi method

Multiple SMEs forecast independently in writing; their forecasts are aggregated and shared anonymously; SMEs revise based on the aggregate; the process iterates until consensus or near-consensus.

When to use: major strategic forecasts (annual capacity, multi-year planning) where multiple expert perspectives matter and where in-room dynamics would distort discussion.

Strengths: avoids groupthink; reduces anchoring on the loudest voice; produces explicit reasoning.

Weaknesses: time-consuming; only works when the experts are genuinely diverse in perspective and well-informed.
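The aggregation step of a Delphi round can be sketched as follows. This is an illustrative assumption about how a facilitator might summarize a round, using the median and interquartile range as the anonymous feedback and a hypothetical stopping rule (`tolerance`) for near-consensus; real Delphi exercises also circulate the written reasoning, which is not modeled here.

```python
from statistics import median, quantiles

def summarize_round(estimates):
    """Anonymous feedback for one round: median and interquartile range."""
    q1, _, q3 = quantiles(estimates, n=4)
    return {"median": median(estimates), "iqr": q3 - q1}

def near_consensus(estimates, tolerance=0.10):
    """Treat the panel as converged when the IQR is within
    `tolerance` (an assumed 10%) of the median."""
    summary = summarize_round(estimates)
    return summary["iqr"] <= tolerance * summary["median"]

# Hypothetical annual-capacity estimates (FTE) from five SMEs.
round_1 = [900, 1100, 1300, 1000, 1500]
round_2 = [1050, 1100, 1150, 1100, 1200]  # revised after seeing round 1's summary

for label, estimates in (("round 1", round_1), ("round 2", round_2)):
    print(label, summarize_round(estimates), near_consensus(estimates))
```

Sharing the median rather than the mean keeps a single extreme estimate from dominating the feedback the panel sees.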

Scenario forecasting

Multiple plausible futures are forecast separately; the operating plan is built around the scenarios rather than around a single point estimate. Common scenarios in WFM: "marketing campaign hits target," "marketing campaign misses by 30%," "competitor exits market," "new product delays by one quarter."

When to use: when the future depends on one or two large uncertain events and committing to a single scenario would produce a fragile plan.

Connection to other methods: scenario forecasts can be combined with Probabilistic Forecasting by assigning probabilities to each scenario and producing a probability-weighted forecast distribution.
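As a rough sketch of the probability-weighting idea, the snippet below turns a set of scenarios into a mean and a spread. The scenario probabilities and volumes are invented for illustration, and `weighted_forecast` is a hypothetical helper name.

```python
# (name, probability, forecast volume); all values are hypothetical
scenarios = [
    ("campaign hits target", 0.5, 12000),
    ("campaign misses by 30%", 0.3, 10500),
    ("new product delays by one quarter", 0.2, 9000),
]

def weighted_forecast(scenarios):
    """Probability-weighted mean and standard deviation across scenarios."""
    total_p = sum(p for _, p, _ in scenarios)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    mean = sum(p * v for _, p, v in scenarios)
    variance = sum(p * (v - mean) ** 2 for _, p, v in scenarios)
    return mean, variance ** 0.5

mean, spread = weighted_forecast(scenarios)
print(f"weighted mean: {mean:.0f}, spread: {spread:.0f}")
```

The weighted mean is a summary, not a plan: no single scenario here actually produces that volume, so the individual scenarios and their probabilities should still be carried into capacity decisions.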

Common biases

Judgmental forecasting suffers from documented cognitive biases:

- Anchoring — overweighting the first number seen (the statistical baseline, the prior forecast, last year's actual)

- Optimism / pessimism — adjusting forecasts toward the outcome stakeholders want

- Confirmation bias — interpreting ambiguous information to confirm existing forecasts

- Recency bias — overweighting the most recent observation

- Availability bias — overweighting easily-remembered events (the last big spike) versus less-memorable patterns (gradual drift)

- Overconfidence — prediction intervals (PIs) that are too narrow and miss the actual more often than their stated coverage implies

These are real, persistent, and largely unconscious. Process discipline (documenting adjustments, tracking outcomes, separating the model from the override) is the only reliable defense.

Operational discipline for WFM teams

A practical workflow for WFM teams using judgmental adjustment:

- Run the statistical baseline first. Generate and save the baseline before reviewing it or deciding on any adjustment, so the stored version is untouched by the override. Generate, save, then look.

- Document every adjustment. Size, direction, reason, expected effect.

- Constrain by history. Adjustments larger than (say) 20% of the baseline forecast require additional sign-off or scrutiny. Set the threshold from the tracked record: how often overrides of that size have actually beaten the statistical forecast.

- Track adjustment accuracy separately. Every quarter, evaluate: across all adjustments made, did the adjusted forecasts beat the unadjusted ones on average? On specific event types?

- Build adjustment templates for recurring events. If marketing campaigns reliably produce a 15% volume lift two days after launch, that becomes a template (or, better, a regression variable) rather than an ad-hoc judgment each time.

- Demote consistently bad adjusters. If an SME's adjustments consistently make accuracy worse, their adjustments need re-examination: possibly retraining, possibly removal of override authority.

The goal: a WFM organization that knows which kinds of overrides help and applies them by process, not by hunch.
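The "constrain by history" step in the workflow above can be enforced with a simple gate. This sketch assumes the 20% figure from the example in the text and a hypothetical function name `needs_signoff`; the right threshold for a given team is an empirical question.

```python
def needs_signoff(baseline, adjustment, threshold=0.20):
    """Flag overrides whose size exceeds `threshold` as a share of the baseline."""
    if baseline <= 0:
        # No meaningful baseline to compare against, so escalate by default.
        return True
    return abs(adjustment) / baseline > threshold

# Hypothetical overrides against a baseline of 1,000 contacts.
print(needs_signoff(1000, 150))    # 15% upward override
print(needs_signoff(1000, -250))   # 25% downward override
```

The check uses the absolute size of the override, so large downward adjustments are escalated the same way as large upward ones.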

Common WFM pitfalls

- Adjustments that are really pressure responses. "Finance wants a lower number, so I'll adjust the forecast down." This is not judgmental forecasting; it is forecasting under duress. Document and surface the conflict.

- Black-box overrides. Forecasts that arrive without documentation of which parts came from the model and which from the analyst. Impossible to evaluate; impossible to improve.

- Adjustment fatigue. Analysts adjust everything because adjusting feels active. The model's forecast is treated as a starting point rather than a default. Discipline: most periods don't warrant adjustment.

- Forgetting to remove the adjustment. A one-time event adjustment for last quarter's product launch carries forward into this quarter's forecast because nobody removed it. Adjustments should be event-specific and time-bounded.

- Over-relying on judgment when data is poor. Judgmental adjustments are sometimes used to compensate for bad data quality. Fix the data; don't paper over with judgment.
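One way to guard against the forgotten-adjustment pitfall above is to give every override an explicit end date, so it expires unless someone renews it. The sketch below assumes additive adjustments; `BoundedAdjustment` and `apply_adjustments` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class BoundedAdjustment:
    reason: str
    amount: float   # signed volume added to the baseline
    start: date     # first period the adjustment applies to
    end: date       # last period it applies to, inclusive

def apply_adjustments(period, baseline, adjustments):
    """Return the adjusted forecast and the adjustments active in `period`."""
    active = [a for a in adjustments if a.start <= period <= a.end]
    return baseline + sum(a.amount for a in active), active

# Hypothetical launch adjustment that covers the first quarter only.
launch = BoundedAdjustment("product launch", 200.0, date(2025, 1, 6), date(2025, 3, 31))

in_window, _ = apply_adjustments(date(2025, 2, 10), 1000.0, [launch])
after_window, still_active = apply_adjustments(date(2025, 4, 7), 1000.0, [launch])
print(in_window, after_window, still_active)
```

Returning the list of active adjustments alongside the number also keeps the published forecast traceable to the overrides that shaped it.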

Connection to forecast accuracy

Tracking judgmental adjustment quality is itself a forecasting accuracy exercise. The metric is straightforward: for each adjustment, compare the adjusted forecast accuracy to the unadjusted forecast accuracy. Aggregate across adjustments to see whether the adjuster systematically helps or hurts.

This is operationalized via paired evaluation:

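- For every period with an adjustment, compute the absolute error (or the scaled error, if using MASE) of both the unadjusted and adjusted forecasts; averaged over periods, these give each forecast's MAE (or MASE)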

- Compute the difference per period

- Aggregate the differences across all adjustments to get the analyst's net contribution

Over enough periods, the data is unambiguous. Either the adjustments help on average (the analyst is genuinely contributing information) or they don't (the adjustments are noise or bias).
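The paired evaluation described above can be sketched in a few lines. This version uses absolute error per period and assumes each record is an `(actual, unadjusted, adjusted)` tuple; the function name `paired_evaluation` and the sample numbers are hypothetical.

```python
from statistics import mean

def paired_evaluation(records):
    """records: (actual, unadjusted, adjusted) tuples, adjusted periods only."""
    diffs = []
    for actual, unadjusted, adjusted in records:
        base_err = abs(actual - unadjusted)
        adj_err = abs(actual - adjusted)
        diffs.append(base_err - adj_err)  # positive means the adjustment helped
    return {
        "mean_improvement": mean(diffs),
        "share_helped": sum(d > 0 for d in diffs) / len(diffs),
        "n": len(diffs),
    }

# Hypothetical history for one analyst.
history = [
    (1120, 1000, 1150),  # adjustment helped
    (980, 1000, 1050),   # adjustment hurt
    (1300, 1200, 1260),  # adjustment helped
    (1010, 1000, 950),   # adjustment hurt
]
result = paired_evaluation(history)
print(result)
```

Four periods are far too few to judge an analyst; the sketch only shows the mechanics. Reporting both the mean improvement and the share of periods helped distinguishes an analyst who helps a little most of the time from one whose record rests on a single large win.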

Connection to WFM operating model

Judgmental forecasting sits at the intersection of WFM Roles (the forecaster who produces the baseline) and the broader business functions (marketing, finance, product) that have information the model doesn't. The interpersonal-relationship pillar of the Future WFM Operating Standard frames this directly: WFM teams that operate as deeply-connected nodes in the organizational graph have better access to the information that justifies adjustments.

The Resource Optimization Center (ROC) is also where judgmental forecasting becomes operational — real-time analysts in the ROC apply judgmental adjustments to intraday forecasts based on observed conditions (a system outage, a sustained sentiment shift, a competitor event) that the model cannot know about in real time.

Maturity Model Position

In the WFM Labs Maturity Model™, judgmental forecasting is present at every level — but it looks different at every level. The maturity signal is process discipline, not the presence or absence of judgment.

- Level 1 — Initial (Emerging Operations) — judgmental forecasting is the forecasting; no statistical baseline exists; adjustments are unstructured and undocumented.

- Level 2 — Foundational (Traditional WFM Excellence) — a statistical baseline exists, but analysts adjust it freely, often under stakeholder pressure; adjustments are not separated from the model output, not documented, and not evaluated against the unadjusted forecast.

- Level 3 — Progressive (Breaking the Monolith) — explicit "model-then-override" discipline: the unadjusted forecast is generated and stored before adjustment; every adjustment is documented with size, direction, and reason; adjuster accuracy is tracked over time; recurring events graduate from judgment to regression dummies.

- Level 4 — Advanced (The Ecosystem Emerges) — Delphi and scenario forecasting layered onto statistical methods for major strategic forecasts; scenarios are probability-weighted into the forecast distribution; consistently bad adjusters are demoted from override authority.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — judgment is reserved for genuinely novel events; the analytical platform absorbs recurring patterns automatically; the WFM organization operates as a deeply-connected node receiving SME information through structured channels.

Judgmental forecasting is the canonical case of "same activity, different maturity" — the unstructured override is Level 2; the documented, tracked, evaluated override is Level 3+.

References

- Hyndman, R. J., & Athanasopoulos, G. "Judgmental forecasts." Forecasting: Principles and Practice (Python edition). otexts.com/fpppy.

- Lawrence, M., Goodwin, P., O'Connor, M., & Önkal, D. "Judgmental forecasting: A review of progress over the last 25 years." International Journal of Forecasting 22(3), 2006.

- Fildes, R., Goodwin, P., Lawrence, M., & Nikolopoulos, K. "Effective forecasting and judgmental adjustments: An empirical evaluation and strategies for improvement in supply-chain planning." International Journal of Forecasting 25(1), 2009.

See Also

- Forecasting Methods — overview; judgmental forecasting layers on top of statistical methods

- Forecast Accuracy Metrics — for tracking adjustment quality over time

- Forecast Combination — alternative way to incorporate multiple perspectives without unstructured judgment

- Regression for Forecasting — preferred for known recurring events; a structured alternative to repeated judgmental overrides

- WFM Roles — the forecaster role and adjustment authority

- Resource Optimization Center (ROC) — where intraday judgmental adjustments operate in production

- Future WFM Operating Standard — broader operating frame; interpersonal relationships pillar relevant here