Forecast Combination

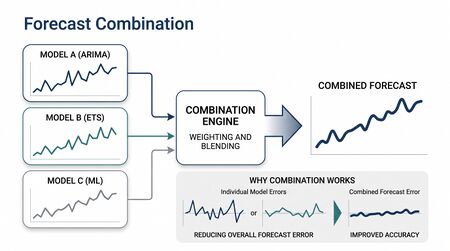

Forecast Combination is the practice of combining forecasts from multiple methods into a single forecast that is typically more accurate than any of its components. The empirical finding, robust across decades of forecasting research and competitions, is that a simple equally weighted average of several methods routinely matches or beats the best individual method on out-of-sample accuracy.

For workforce management (WFM) organizations running multiple forecasting methods (most do, even if implicitly), combination is a methodology-agnostic accuracy improvement. It works with whatever methods are already deployed, requires no new infrastructure beyond the ability to run multiple forecasts and average them, and usually produces measurable gains.

This page documents the combination methods, the empirical evidence, and the practitioner workflow.

The empirical finding

In a line of evidence running back to the 1969 Bates & Granger paper, the average of multiple forecasts tends to beat the individual methods on out-of-sample accuracy. The pattern repeats across the M-competitions (1982 through M5 in 2020) with remarkable consistency: simple averages are hard for sophisticated individual methods to beat.

The intuition: different methods have different errors. ETS may consistently over-predict during ramp-ups; ARIMA may consistently miss seasonal turning points. Averaging cancels independent errors, leaving the agreed-upon signal.

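The math: if two unbiased methods have forecast error variances $\sigma_1^2$ and $\sigma_2^2$ with correlation $\rho$, the equally-weighted average has variance:

$$\operatorname{Var}\left(\tfrac{1}{2}(e_1 + e_2)\right) = \tfrac{1}{4}\left(\sigma_1^2 + \sigma_2^2 + 2\rho\,\sigma_1\sigma_2\right)$$

When errors are independent ($\rho = 0$), this is half the average of the individual variances, a substantial reduction. As correlation increases, the gain shrinks; perfectly correlated methods with equal variances produce no benefit from combination.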
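As a quick numeric check, the sketch below evaluates the standard variance of a 50/50 average of two correlated errors, (σ1² + σ2² + 2ρσ1σ2) / 4, at a few correlations. The function name and the example standard deviations are illustrative assumptions, not part of any library.

```python
def equal_weight_variance(sigma1: float, sigma2: float, rho: float) -> float:
    """Error variance of the 50/50 average of two unbiased forecasts.

    Var = (sigma1^2 + sigma2^2 + 2 * rho * sigma1 * sigma2) / 4
    """
    if not -1.0 <= rho <= 1.0:
        raise ValueError("rho must lie in [-1, 1]")
    return (sigma1**2 + sigma2**2 + 2.0 * rho * sigma1 * sigma2) / 4.0


if __name__ == "__main__":
    # Two hypothetical methods, each with an error standard deviation of 10
    # (individual error variance 100).
    for rho in (0.0, 0.5, 0.9, 1.0):
        var = equal_weight_variance(10.0, 10.0, rho)
        print(f"rho={rho:.1f}  combined variance={var:.1f}")
```

With equal individual variances of 100, the combined variance rises from 50 at ρ = 0 to 75 at ρ = 0.5 and reaches 100 at ρ = 1, which is why diversity among the methods matters more than their number.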

Practical implication: combine diverse methods. ETS + ARIMA + naive baseline tend to make different mistakes on different series. Combining three variants of ETS produces less benefit because they share assumptions.

Combination methods

Simple average

The unweighted average of individual forecasts. Surprisingly hard to beat. The "boring" baseline that becomes the production method when sophisticated weighting schemes fail to outperform it.

When to use: as the default combination method when method-specific accuracy is approximately comparable.

Weighted average

When some methods are reliably more accurate than others, weighted averaging can improve on simple averaging. Common weight schemes:

- Inverse error variance weighting — using historical out-of-sample variance. The most accurate method gets the most weight.

- Performance-weighted — based on a chosen accuracy metric.

- Shrunk weights — start with the data-derived weights, shrink toward equal weighting; reduces overfitting.

The pitfall: estimated weights are noisy. A method that "won" on the held-out validation set may have been lucky. Weights estimated on too little data overfit and underperform simple averaging.

The Bayesian shrinkage interpretation: if you have weak evidence that one method is better than another, weight them equally; if you have strong evidence, weight by performance. The weight vector should reflect the strength of the evidence.
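One way to make the shrinkage idea concrete is sketched below: weights proportional to inverse out-of-sample mean squared error, blended toward equal weights. The function name, the `shrinkage` parameter, and the use of MSE as a stand-in for error variance (the two coincide for unbiased methods) are assumptions made for illustration.

```python
def inverse_mse_weights(
    errors_by_method: dict[str, list[float]], shrinkage: float = 0.5
) -> dict[str, float]:
    """Inverse-MSE weights shrunk toward equal weighting.

    errors_by_method: out-of-sample errors (actual - forecast) per method.
    shrinkage: 0.0 keeps the data-derived weights, 1.0 gives equal weights.
    """
    if not 0.0 <= shrinkage <= 1.0:
        raise ValueError("shrinkage must lie in [0, 1]")
    # Mean squared error per method (assumes no method has exactly zero error).
    mse = {
        name: sum(e * e for e in errs) / len(errs)
        for name, errs in errors_by_method.items()
    }
    inverse = {name: 1.0 / value for name, value in mse.items()}
    total = sum(inverse.values())
    equal = 1.0 / len(inverse)
    # Convex blend of normalized inverse-MSE weights and equal weights.
    return {
        name: (1.0 - shrinkage) * (value / total) + shrinkage * equal
        for name, value in inverse.items()
    }


if __name__ == "__main__":
    # Hypothetical validation errors for two methods.
    errors = {"ets": [1.0, -1.0, 1.0, -1.0], "arima": [2.0, -2.0, 2.0, -2.0]}
    print(inverse_mse_weights(errors, shrinkage=0.0))
    print(inverse_mse_weights(errors, shrinkage=0.5))
```

In this made-up example the raw weights are 0.8 and 0.2; shrinking halfway pulls them to 0.65 and 0.35. With weak evidence, a `shrinkage` close to 1.0 recovers the simple average.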

Optimal combination by least squares

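Given $T$ historical periods of forecasts $f_{k,t}$ from $K$ methods plus actuals $y_t$, solve for the weights $w_1, \dots, w_K$ that minimize the in-sample combined forecast error:

$$\hat{w} = \arg\min_{w} \sum_{t=1}^{T} \left( y_t - \sum_{k=1}^{K} w_k f_{k,t} \right)^2$$

This is the Granger-Ramanathan combination. In practice, it overfits unless $T$ is large relative to $K$; cross-validation or shrinkage to equal weights typically helps.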
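A minimal sketch of the least-squares weights follows, assuming the simplest variant: no intercept and no constraint that the weights sum to one. It solves the normal equations with a small hand-written elimination routine so that it runs on the standard library alone; all function names are hypothetical.

```python
def solve_linear_system(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Work on an augmented copy so the inputs are not mutated.
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("forecasts are collinear; weights are not identified")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][c] * x[c] for c in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def least_squares_weights(
    forecasts: list[list[float]], actuals: list[float]
) -> list[float]:
    """Weights minimizing the sum of squared combined-forecast errors.

    forecasts: one list per method, each of the same length as actuals.
    """
    k = len(forecasts)
    # Normal equations: (F'F) w = F'y
    ftf = [
        [sum(fi * fj for fi, fj in zip(forecasts[i], forecasts[j])) for j in range(k)]
        for i in range(k)
    ]
    fty = [sum(f * y for f, y in zip(forecasts[i], actuals)) for i in range(k)]
    return solve_linear_system(ftf, fty)


if __name__ == "__main__":
    # Made-up history in which actuals are exactly 0.7 * f1 + 0.3 * f2.
    f1 = [100.0, 120.0, 90.0, 110.0, 130.0]
    f2 = [95.0, 125.0, 100.0, 105.0, 120.0]
    y = [0.7 * a + 0.3 * b for a, b in zip(f1, f2)]
    print(least_squares_weights([f1, f2], y))
```

Because the toy actuals are an exact blend, the solver recovers weights close to 0.7 and 0.3. On real data with few periods the estimated weights are far noisier, which is the overfitting risk that motivates shrinkage.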

Stacking / ensemble methods

When combining many methods (10+), modern machine learning frameworks treat the combination as a meta-learning problem. Cross-validated forecasts from each base method are stacked into a feature matrix; a meta-learner (regression, gradient boosting, neural network) learns the optimal combination, possibly conditional on series characteristics.

Ensembling many models, whether by stacking or by simple averaging, is common among top entries in recent M-competitions such as M5. For a typical WFM operation with 2-5 forecast methods, the simple average is sufficient; stacking is overkill.

M-competition empirical evidence

The M-competitions (Spyros Makridakis, every few years since 1982) provide the standard evidence base on forecast accuracy. The M4 (2018) and M5 (2020) competitions both confirmed:

- Simple averaging beats most individual methods

- Hybrid methods that combine ETS + ARIMA + statistical learning win the top ranks

- Ensembling can produce accuracy improvements on the order of 10-20% over individual methods, though the size of the gain varies with the data and with the diversity of the methods combined

This is some of the most reliable evidence in operational forecasting. Combination is not an esoteric technique; among forecasting researchers and competition entrants it is standard best practice.

Practitioner workflow

For a WFM organization adopting combination:

- Choose 2-4 diverse methods. Typical set: seasonal naive (baseline), ETS, ARIMA. Adding regression when explanatory variables exist produces a fourth.

- Run all methods on the same training data and same forecast horizon.

- Average the forecasts — start with the simple average. Use this as the production forecast for a baseline period.

- Evaluate against seasonal naive and against each individual method using Forecast Accuracy Metrics. The combination should clearly beat seasonal naive and match or beat the best individual method.

- Optionally upgrade to weighted — only if you have enough validation data to estimate weights reliably and the weighted version actually outperforms simple averaging on held-out data.

- Re-evaluate periodically. As series characteristics change, the relative ranking of methods may change too. Don't lock in weights forever.
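The averaging and evaluation steps above can be sketched as follows. This assumes the forecasts have already been produced by the individual methods and are aligned with a holdout window of actuals; the method names, the numbers, and the choice of MAE as the metric are illustrative assumptions.

```python
def mae(actuals: list[float], forecasts: list[float]) -> float:
    """Mean absolute error over aligned periods."""
    return sum(abs(a - f) for a, f in zip(actuals, forecasts)) / len(actuals)


def evaluate_combination(
    forecasts: dict[str, list[float]],
    actuals: list[float],
    baseline: str = "seasonal_naive",
) -> dict:
    """Score each method and their simple average on a holdout window."""
    horizon = len(actuals)
    if any(len(f) != horizon for f in forecasts.values()):
        raise ValueError("all forecasts must cover the same horizon as the actuals")
    # Simple average across methods, period by period.
    combined = [sum(vals) / len(vals) for vals in zip(*forecasts.values())]
    scores = {name: mae(actuals, f) for name, f in forecasts.items()}
    best_individual = min(forecasts, key=lambda name: scores[name])
    scores["simple_average"] = mae(actuals, combined)
    return {
        "scores": scores,
        "best_individual": best_individual,
        "beats_baseline": scores["simple_average"] < scores[baseline],
        "beats_best_individual": scores["simple_average"] < scores[best_individual],
    }


if __name__ == "__main__":
    # Made-up holdout data for illustration only.
    actuals = [100.0, 110.0, 105.0, 120.0]
    forecasts = {
        "seasonal_naive": [90.0, 100.0, 100.0, 110.0],
        "ets": [105.0, 115.0, 110.0, 125.0],
        "arima": [96.0, 108.0, 101.0, 118.0],
    }
    report = evaluate_combination(forecasts, actuals)
    for name, score in report["scores"].items():
        print(f"{name:15s} MAE={score:.2f}")
    print("beats baseline:", report["beats_baseline"])
    print("beats best individual:", report["beats_best_individual"])
```

The example data were chosen so that the methods err in different directions, which is the situation in which averaging helps most. On real series the two boolean flags are what decide whether the combination goes to production.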

Common WFM pitfalls

- Combining only similar methods. Three variants of Holt-Winters average to nearly the same forecast as one Holt-Winters; the combination doesn't help.

- Including methods that are clearly broken. If one method consistently underperforms seasonal naive, including it in the combination dilutes the result. Exclude broken methods from combination; investigate their failure separately.

- Over-weighting recently good methods. A method that was best last quarter may not be best next quarter. Use rolling-window weights or shrink toward equal.

- Combining at different horizons without thought. A method that wins at short horizons may lose at long ones. Horizon-specific weights make sense when accuracy ranking varies meaningfully by horizon.

- Confusing combination with averaging different scenarios. Combining "high marketing spend" and "low marketing spend" forecasts is a different operation (scenario reconciliation, weighted by scenario probability). Method combination assumes the underlying scenario is the same.
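The "clearly broken" pitfall above can be guarded against with a simple screen before averaging. The sketch below drops any method that loses to seasonal naive in most evaluation windows; the function name, the `max_loss_rate` threshold, and the per-window error layout are assumptions chosen for illustration, not an established rule.

```python
def screen_methods(
    errors_by_window: dict[str, list[float]],
    baseline: str = "seasonal_naive",
    max_loss_rate: float = 0.75,
) -> list[str]:
    """Keep methods that do not consistently lose to the baseline.

    errors_by_window: one error score (e.g. MAE) per evaluation window,
    per method, with windows aligned across methods.
    A method is dropped when it is worse than the baseline in at least
    max_loss_rate of the windows.
    """
    base = errors_by_window[baseline]
    kept = []
    for name, errs in errors_by_window.items():
        losses = sum(1 for err, ref in zip(errs, base) if err > ref)
        if losses / len(base) < max_loss_rate:
            kept.append(name)
    return kept


if __name__ == "__main__":
    # Hypothetical MAE per rolling evaluation window.
    history = {
        "seasonal_naive": [8.0, 9.0, 7.0, 10.0],
        "ets": [5.0, 6.0, 8.0, 7.0],        # loses once out of four windows
        "regression": [12.0, 15.0, 9.0, 14.0],  # loses in every window
    }
    print(screen_methods(history))
```

Here `regression` is excluded from the combination set while `ets` survives despite one bad window. The excluded method still deserves a separate investigation rather than silent removal.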

Connection to existing forecasting infrastructure

Combination is implementable in any WFM software stack that supports running multiple forecasting methods on the same data. Most enterprise WFM platforms support this; a few support automatic combination as a built-in feature.

For platforms without built-in combination, the workflow is:

- Run each method via the platform's forecasting interface (or via APIs)

- Export the forecasts to a common format

- Compute the average (or weighted combination) externally — Excel works for small operations; analytical notebooks for larger

- Submit the combined forecast back to the platform as the production forecast

This is a low-investment, high-return enhancement. Few practitioners do it; most who start see a benefit.
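For the external-computation step, a notebook-style sketch might look like the following. It assumes a hypothetical export layout, one row per interval and one column per method; the column names and the CSV shape are placeholders, since real platform exports differ.

```python
import csv
import io


def combine_exported_forecasts(csv_text: str, method_columns: list[str]) -> str:
    """Average the method columns row by row and return a new CSV string.

    Assumes an 'interval' column identifying each forecast period
    (a hypothetical layout, not any platform's actual export format).
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["interval", "combined"])
    for row in reader:
        values = [float(row[col]) for col in method_columns]
        writer.writerow([row["interval"], round(sum(values) / len(values), 2)])
    return out.getvalue()


if __name__ == "__main__":
    exported = (
        "interval,seasonal_naive,ets,arima\n"
        "2024-01-01 09:00,100,110,105\n"
        "2024-01-01 09:30,120,126,123\n"
    )
    print(combine_exported_forecasts(exported, ["seasonal_naive", "ets", "arima"]))
```

The returned text can be written to a file and loaded back through whatever import path the platform provides. Reading from and writing to strings keeps the sketch independent of any particular file location.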

Maturity Model Position

In the WFM Labs Maturity Model™, forecast combination is a next-generation practice that is operationally trivial yet rare below Level 3. The empirical evidence, from Bates & Granger forward, is overwhelming, but combination is not yet the modal practice in WFM.

- Level 1 — Initial (Emerging Operations) — typically only one (often judgmental) forecast exists; nothing to combine.

- Level 2 — Foundational (Traditional WFM Excellence) — a single statistical method (typically ETS) is the production forecast; multiple methods may exist as scratch but are not combined.

- Level 3 — Progressive (Breaking the Monolith) — two-to-four diverse methods (naive, ETS, ARIMA, regression) are run on the same data and the simple average is the production forecast; combination is benchmarked against the best individual method.

- Level 4 — Advanced (The Ecosystem Emerges) — performance- or inverse-variance-weighted combinations, validated by rolling-origin out-of-sample evaluation; combination is applied per-horizon when accuracy ranking varies meaningfully by horizon.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — stacking / meta-learning combinations across many base methods are managed by the analytical platform, with weights conditioned on series characteristics (M5-style ensembling at scale).

Combination is the canonical L2-to-L3 lift: it requires no new infrastructure beyond running multiple forecasts and averaging, yet can produce accuracy gains on the order of 10-20%. Its absence at Level 2 is more about practice habit than technical capability.
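The rolling-origin out-of-sample evaluation mentioned at Level 4 can be sketched as below, using an expanding training window. The two base forecasters are deliberately trivial stand-ins for ETS or ARIMA, and every function name here is hypothetical.

```python
from typing import Callable

Forecaster = Callable[[list[float], int], list[float]]


def seasonal_naive(history: list[float], horizon: int, season: int = 7) -> list[float]:
    """Repeat the values observed in the most recent full season."""
    start = len(history) - season
    return [history[start + (h % season)] for h in range(horizon)]


def last_value(history: list[float], horizon: int) -> list[float]:
    """Flat forecast at the most recent observation."""
    return [history[-1]] * horizon


def rolling_origin_mae(
    series: list[float],
    methods: dict[str, Forecaster],
    first_origin: int,
    horizon: int,
) -> dict[str, float]:
    """MAE per method and for their simple average across expanding origins."""
    origins = range(first_origin, len(series) - horizon + 1)
    if len(origins) == 0:
        raise ValueError("series too short for the requested origin and horizon")
    abs_errors: dict[str, list[float]] = {name: [] for name in methods}
    abs_errors["simple_average"] = []
    for origin in origins:
        history = series[:origin]
        actuals = series[origin:origin + horizon]
        forecasts = {name: fn(history, horizon) for name, fn in methods.items()}
        average = [sum(vals) / len(vals) for vals in zip(*forecasts.values())]
        forecasts["simple_average"] = average
        for name, fc in forecasts.items():
            abs_errors[name].extend(abs(a - f) for a, f in zip(actuals, fc))
    return {name: sum(errs) / len(errs) for name, errs in abs_errors.items()}


if __name__ == "__main__":
    # Toy series with a perfectly repeating weekly pattern.
    series = [10.0, 20.0, 30.0, 40.0, 50.0, 60.0, 70.0] * 4
    methods = {"seasonal_naive": seasonal_naive, "last_value": last_value}
    print(rolling_origin_mae(series, methods, first_origin=14, horizon=7))
```

On this toy series seasonal naive is exact, so the simple average scores worse than the best individual method. That is precisely the kind of outcome the benchmark step exists to catch before a combination is promoted.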

References

- Bates, J. M., & Granger, C. W. J. "The combination of forecasts." Operational Research Quarterly 20(4), 1969. The foundational paper.

- Granger, C. W. J., & Ramanathan, R. "Improved methods of combining forecasts." Journal of Forecasting 3(2), 1984.

- Makridakis, S., Spiliotis, E., & Assimakopoulos, V. "The M4 competition: 100,000 time series and 61 forecasting methods." International Journal of Forecasting 36(1), 2020.

- Makridakis, S., Spiliotis, E., & Assimakopoulos, V. "The M5 competition: background, organization, and implementation." International Journal of Forecasting 38(4), 2022.

- Hyndman, R. J., & Athanasopoulos, G. "Combinations of forecasts." Forecasting: Principles and Practice (Python edition). otexts.com/fpppy.

See Also

- Forecasting Methods — overview; combination operates over the methods catalogued there

- Naive and Seasonal Naive Forecasting — almost always one of the methods in the combination set

- Exponential Smoothing — typically a strong combination component

- ARIMA Models — typically a strong combination component

- Regression for Forecasting — adds combination value when explanatory variables exist

- Forecast Accuracy Metrics — how to evaluate whether combination is helping

- Probabilistic Forecasting — combination of probabilistic forecasts requires combining the distributions, not just point estimates