Naive and Seasonal Naive Forecasting

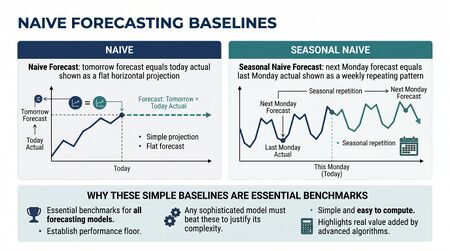

Naive and Seasonal Naive Forecasting are the simplest forecasting methods. They produce forecasts using the most recent observation (naive) or the most recent observation from the same season (seasonal naive). They are not sophisticated. They are essential.

In WFM, naive methods serve two primary purposes: as quick benchmarks against which more sophisticated methods must justify their complexity, and as the actual production method for series too short, too irregular, or too low-volume to support fitting more complex models[1].

Why naive methods matter

The most common forecasting failure in WFM is adopting a sophisticated method that performs worse than the trivial baseline. A WFM team running Holt-Winters on weekly volume that cannot beat seasonal naive on the same horizon is using more complexity for less accuracy. The naive forecast is the benchmark that disciplines method selection — and "we cannot beat naive" is information about the data, the configuration, or the method family choice.

Hyndman's principle: every forecasting analysis starts with naive baselines computed on the same horizon and same metric.

The methods

Mean forecast

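The forecast for any future period is the mean of the historical values:

$$\hat{y}_{T+h|T} = \bar{y} = \frac{1}{T}\sum_{t=1}^{T} y_t$$

where $y_1, \dots, y_T$ are the observed values and $h$ is the number of periods ahead.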

Best for: stationary series with no trend or seasonality. Rare in WFM volume data; sometimes appropriate for steady-state metrics like long-run schedule adherence percentages.
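A minimal Python sketch of the mean forecast, assuming the history is a plain list of numbers. The function name `mean_forecast` and the adherence figures are illustrative only and do not come from any WFM platform.

```python
from statistics import fmean


def mean_forecast(history, horizon):
    """Forecast every future period as the mean of all observed values."""
    if not history:
        raise ValueError("history must contain at least one observation")
    level = fmean(history)
    # The same value is repeated for every step of the horizon.
    return [level] * horizon


# Illustrative weekly schedule adherence percentages (made-up numbers).
adherence = [91.0, 93.0, 92.0, 94.0]
print(mean_forecast(adherence, 3))  # [92.5, 92.5, 92.5]
```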

Naive

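The forecast for any future period is the last observed value:

$$\hat{y}_{T+h|T} = y_T$$

where $y_T$ is the most recent of the $T$ observations and $h$ is the number of periods ahead.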

Best for: random-walk-like series where the best estimate of tomorrow is today. The naive forecast assumes that change is unpredictable, which is often closer to the truth than sophisticated methods admit.
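As a sketch, the naive forecast is a one-liner in Python. The name `naive_forecast` and the sample contact counts are assumptions made for illustration.

```python
def naive_forecast(history, horizon):
    """Repeat the last observed value for every future period."""
    if not history:
        raise ValueError("history must contain at least one observation")
    return [history[-1]] * horizon


# Illustrative daily contact counts (made-up numbers).
contacts = [42, 38, 45, 41]
print(naive_forecast(contacts, 3))  # [41, 41, 41]
```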

Seasonal naive

The forecast for period $T+h$ is the value observed at the same point in the most recent complete seasonal cycle:

$$\hat{y}_{T+h|T} = y_{T+h-m(k+1)}$$

where $m$ is the seasonal period (e.g., $m = 7$ for daily data with weekly seasonality, $m = 12$ for monthly data with annual seasonality, $m = 48$ for half-hour intraday data with daily seasonality), and $k$ is the integer part of $(h-1)/m$.

In plain language: the forecast for next Tuesday at 9am is what happened last Tuesday at 9am.

Best for: any WFM volume series with strong seasonality. The seasonal naive forecast on weekly call volume is a remarkably hard baseline to beat.
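The indexing is the only subtle part of seasonal naive, so a short Python sketch may help. It assumes the history is a list ordered oldest to newest and holds at least one full cycle; the function name and the daily volumes are illustrative.

```python
def seasonal_naive_forecast(history, horizon, m):
    """Repeat the last full seasonal cycle of length m across the horizon."""
    if len(history) < m:
        raise ValueError("history must cover at least one full seasonal cycle")
    T = len(history)
    forecasts = []
    for h in range(1, horizon + 1):
        k = (h - 1) // m  # number of complete cycles already forecast
        # 1-based position T + h - m(k + 1), shifted by one for 0-based lists.
        forecasts.append(history[T + h - m * (k + 1) - 1])
    return forecasts


# Two weeks of illustrative daily volume, Monday through Sunday.
daily_volume = [520, 480, 470, 460, 450, 210, 180,
                530, 490, 465, 455, 445, 220, 190]
print(seasonal_naive_forecast(daily_volume, 9, m=7))
# [530, 490, 465, 455, 445, 220, 190, 530, 490]
```

Forecasts beyond one cycle simply wrap around, which is why the ninth value equals the second.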

Drift method

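A small extension of naive that draws a line through the first and last observations and extrapolates it:

$$\hat{y}_{T+h|T} = y_T + h\,\frac{y_T - y_1}{T-1}$$

where $y_1$ and $y_T$ are the first and last of the $T$ observations and $h$ is the number of periods ahead.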

Best for: series with consistent linear trend. The drift method is essentially "naive plus a trend term."
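A hedged Python sketch of the drift method follows. The slope is the average change per period between the first and last observations; the function name and the weekly volumes are illustrative.

```python
def drift_forecast(history, horizon):
    """Extend the line through the first and last observations."""
    if len(history) < 2:
        raise ValueError("drift needs at least two observations")
    # Average change per period over the whole history.
    slope = (history[-1] - history[0]) / (len(history) - 1)
    return [history[-1] + h * slope for h in range(1, horizon + 1)]


# Illustrative weekly volumes with a steady upward trend (made-up numbers).
weekly_volume = [100, 104, 103, 109, 112]
print(drift_forecast(weekly_volume, 3))  # [115.0, 118.0, 121.0]
```

Only the endpoints matter here, so a single unusual first or last observation changes the whole forecast.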

When to use naive methods in production

Naive methods are not just benchmarks. There are WFM contexts where they are the right production method:

- Very short history — new sites, new skills, new product launches. Twelve weeks of history is not enough to fit ARIMA reliably; seasonal naive against the available weeks may be the best available answer.

- Intermittent demand — low-volume skills with frequent zero-volume intervals. Sophisticated methods often fail catastrophically on intermittent series; seasonal naive is a defensible default.

- Step-function changes — when business operations change discontinuously (a new product launches, a vendor migration completes), the historical relationship to predict from has changed. Seasonal naive on the post-change period — even if short — often outperforms a sophisticated method fit on the contaminated full series.

- High-frequency intraday — half-hour interval forecasts for the next day. Seasonal naive on yesterday's same-half-hour pattern is competitive with much more sophisticated methods.
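For the step-function case, one possible sketch is to discard everything before the change and run seasonal naive on what remains. The function name, the `change_index` parameter, and the volumes are hypothetical; in practice the change point comes from operational knowledge, not from the code.

```python
def seasonal_naive_after_change(history, horizon, m, change_index):
    """Seasonal naive fit only on observations at or after change_index."""
    post_change = history[change_index:]
    if len(post_change) < m:
        raise ValueError("need at least one full seasonal cycle after the change")
    start = len(post_change) - m  # first element of the last full cycle
    # Position within the cycle wraps around every m steps.
    return [post_change[start + (h - 1) % m] for h in range(1, horizon + 1)]


# Two weeks before a hypothetical product launch, one week after it.
history = [100] * 14 + [200, 210, 205, 195, 190, 90, 80]
print(seasonal_naive_after_change(history, 3, m=7, change_index=14))
# [200, 210, 205]
```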

Common WFM pitfalls

- Wrong seasonal period. Daily call volume has weekly seasonality ($m = 7$), not 365-day annual seasonality. Choosing the wrong $m$ produces nonsense forecasts.

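- Multiple seasonal periods. Half-hour interval data has both intraday seasonality (48 intervals per day) and weekly seasonality (7×48 = 336 intervals per week). Seasonal naive uses a single period: $m = 48$ repeats yesterday and ignores day-of-week effects, while $m = 336$ repeats the same interval from last week but rests on one observation per interval. When neither is adequate, methods that model several seasonal periods at once are genuinely needed.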

- Treating seasonal naive as inadequate without testing. Many WFM teams reach for sophisticated methods on the assumption that "of course we can do better than naive." Sometimes you can. Sometimes you cannot. Compute both and compare.
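The effect of a wrong seasonal period is easy to see on synthetic data. The sketch below builds a perfectly repeating weekly pattern (made-up numbers), holds out the last week, and compares holdout MAE for $m = 5$ and $m = 7$; the helper names are illustrative.

```python
def seasonal_naive(train, horizon, m):
    """Repeat the last m training values across the horizon."""
    if len(train) < m:
        raise ValueError("training data must cover one full cycle")
    start = len(train) - m
    return [train[start + (h % m)] for h in range(horizon)]


def mae(actual, forecast):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


weekly_pattern = [500, 480, 470, 460, 450, 200, 180]  # Monday through Sunday
series = weekly_pattern * 4                            # four identical weeks
train, holdout = series[:-7], series[-7:]

mae_by_period = {m: mae(holdout, seasonal_naive(train, 7, m)) for m in (5, 7)}
print(mae_by_period)  # m=7 reproduces the holdout exactly; m=5 does not
```

With $m = 5$ the forecast puts weekend volumes on weekdays and vice versa, which is the kind of nonsense the first pitfall describes.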

Accuracy and benchmarking

To use naive methods as benchmarks:

- Hold out the most recent periods of historical data

- Generate the seasonal naive forecast for those periods

- Generate the candidate sophisticated forecast for the same periods

- Compute MAE, RMSE, and MASE for both

- If the candidate method has lower error across the metrics, adopt it. If not, the candidate has not justified its complexity.

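The MASE metric is particularly suited here because it is defined as the candidate method's MAE on the holdout divided by the in-sample MAE of seasonal naive. A MASE below 1 means the candidate's average error is smaller than that of seasonal naive on the training data; a MASE above 1 means it is larger.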
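The benchmarking steps above can be sketched in plain Python. The data and the `candidate` forecast are made up for illustration; in practice the candidate would come from whatever method is under evaluation. MASE is scaled here by the in-sample seasonal naive MAE, that is, the mean of $|y_t - y_{t-m}|$ over the training data.

```python
from math import sqrt


def seasonal_naive(train, horizon, m):
    """Repeat the last m training values across the horizon."""
    start = len(train) - m
    return [train[start + (h % m)] for h in range(horizon)]


def mae(actual, forecast):
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def rmse(actual, forecast):
    return sqrt(sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual))


def mase(actual, forecast, train, m):
    """Holdout MAE divided by the in-sample seasonal naive MAE."""
    scale = sum(abs(train[t] - train[t - m]) for t in range(m, len(train)))
    scale /= len(train) - m
    if scale == 0:
        raise ValueError("in-sample seasonal naive error is zero; MASE undefined")
    return mae(actual, forecast) / scale


# Three training weeks and one holdout week of daily volume (made-up numbers).
train = [500, 480, 470, 460, 450, 200, 180,
         510, 490, 480, 470, 460, 210, 190,
         520, 500, 490, 480, 470, 220, 200]
holdout = [530, 510, 500, 490, 480, 230, 210]

baseline = seasonal_naive(train, len(holdout), m=7)
candidate = [528, 512, 497, 493, 480, 226, 214]  # hypothetical model output

for name, forecast in (("seasonal naive", baseline), ("candidate", candidate)):
    print(name,
          round(mae(holdout, forecast), 3),
          round(rmse(holdout, forecast), 3),
          round(mase(holdout, forecast, train, 7), 3))
```

In this toy data every week is exactly 10 contacts higher than the previous one, so seasonal naive scores a MASE of 1 and the candidate scores well below it.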

Implementation in WFM software

Most WFM software platforms ship multiple forecasting methods, and most include a seasonal naive option (sometimes called "same day last week," "last year same week," or similar). Use it. The seasonal naive forecast computed by the platform should match what you would compute by hand on the same data; if it does not, the platform has data alignment or configuration issues to fix before any method comparison is meaningful.
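One way to run that hand check is sketched below. `platform_forecast` stands in for values exported from the platform; here it is a hypothetical export that is shifted by one day, the kind of alignment problem the comparison is meant to expose.

```python
def seasonal_naive(history, horizon, m):
    """Repeat the last m observed values across the horizon."""
    start = len(history) - m
    return [history[start + (h % m)] for h in range(horizon)]


def mismatches(platform_forecast, hand_forecast, tolerance=1e-6):
    """Return (index, platform value, hand value) wherever the two disagree."""
    return [(i, p, e)
            for i, (p, e) in enumerate(zip(platform_forecast, hand_forecast))
            if abs(p - e) > tolerance]


# Two weeks of daily volume (made-up numbers).
history = [500, 480, 470, 460, 450, 200, 180,
           510, 490, 480, 470, 460, 210, 190]
hand_forecast = seasonal_naive(history, 7, m=7)

# Hypothetical platform export that is off by one day.
platform_forecast = history[6:13]

print(len(mismatches(platform_forecast, hand_forecast)))  # 7
```

An empty list means the platform and the hand calculation agree; a full list of mismatches with a recognizable shift points at date alignment before anything else.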

Connection to other forecasting methods

Naive methods sit at the bottom of the complexity stack. Each more sophisticated method should be evaluated on whether the additional complexity is justified by reduced error:

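- Exponential Smoothing — ETS adds level, trend, and seasonal smoothing on top of the naive concept. Seasonal naive is roughly equivalent to ETS(A,N,A) with the level parameter α near 0 and the seasonal parameter γ near 1, so the level never moves and each seasonal term is overwritten by the latest observation for that season.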

- ARIMA Models — ARIMA explicitly models autocorrelation that naive ignores. Use when ETS residuals show autocorrelation.
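The link between seasonal naive and ETS(A,N,A) can be checked numerically. The sketch below hand-codes the additive error-correction recursions (level and seasonal states updated by α and γ times the one-step error) and sets α = 0 and γ = 1. The initialisation (level as the mean of the first cycle) is an assumption of this sketch, not a library default, and the data are made up.

```python
def ets_ana_forecast(history, horizon, m, alpha, gamma):
    """ETS(A,N,A) in error-correction form with a simple assumed initialisation."""
    level = sum(history[:m]) / m
    seasonal = [y - level for y in history[:m]]  # one state per position in the cycle
    for t in range(m, len(history)):
        i = t % m
        error = history[t] - (level + seasonal[i])  # one-step-ahead error
        level += alpha * error
        seasonal[i] += gamma * error
    T = len(history)
    return [level + seasonal[(T + h - 1) % m] for h in range(1, horizon + 1)]


def seasonal_naive(history, horizon, m):
    start = len(history) - m
    return [history[start + (h % m)] for h in range(horizon)]


history = [500, 480, 470, 460, 450, 200, 180,
           510, 490, 480, 470, 460, 210, 190,
           520, 500, 490, 480, 470, 220, 200]

ets = ets_ana_forecast(history, 7, m=7, alpha=0.0, gamma=1.0)
naive = seasonal_naive(history, 7, m=7)
print(all(abs(a - b) < 1e-9 for a, b in zip(ets, naive)))  # True
```

The comparison uses a small tolerance because the level is added back in floating point.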

Maturity Model Position

In the WFM Labs Maturity Model™, the role of naive forecasting in an organization is itself a maturity tell. Whether the organization computes naive baselines at all — and what it does with them — separates Level 2 from Level 3.

- Level 1 — Initial (Emerging Operations) — naive forecasting may be the only method in use ("same as last week"), serving as the production forecast rather than as a benchmark.

- Level 2 — Foundational (Traditional WFM Excellence) — sophisticated methods are used in production (typically Holt-Winters), but naive baselines are not computed; nobody knows whether the sophisticated method actually beats naive.

- Level 3 — Progressive (Breaking the Monolith) — Hyndman's benchmark imperative is enforced: every method must beat seasonal naive on the same data and same horizon before adoption; MASE is the natural metric; broken methods that lose to naive are diagnosed and fixed (not just escalated to vendor).

- Level 4 — Advanced (The Ecosystem Emerges) — naive forecasts participate in combinations (often as one of three to four diverse methods); seasonal naive is also the production method on series too short, too irregular, or too intermittent to support a sophisticated alternative.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — the analytical platform automatically falls back to seasonal naive on series where sophisticated methods fail diagnostic checks; baseline comparison is continuous, not one-off.

Naive baselines are simultaneously a legacy primitive (the Level 1 production method) and a Level 3 discipline (the benchmark every method must beat). The maturity tell is whether the organization uses them as a benchmark.
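The automatic fallback described for Level 5 could be sketched as a simple gate: a candidate forecast is used only if it beats seasonal naive on a holdout. The function name `choose_method`, the single-metric rule, and the data are assumptions made for illustration; a real platform would likely apply more diagnostic checks than this.

```python
def seasonal_naive(train, horizon, m):
    """Repeat the last m training values across the horizon."""
    start = len(train) - m
    return [train[start + (h % m)] for h in range(horizon)]


def mae(actual, forecast):
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def choose_method(train, holdout, candidate_forecast, m):
    """Return 'candidate' only if it beats seasonal naive on holdout MAE."""
    naive_mae = mae(holdout, seasonal_naive(train, len(holdout), m))
    candidate_mae = mae(holdout, candidate_forecast)
    return "candidate" if candidate_mae < naive_mae else "seasonal_naive"


train = [500, 480, 470, 460, 450, 200, 180,
         510, 490, 480, 470, 460, 210, 190,
         520, 500, 490, 480, 470, 220, 200]
holdout = [530, 510, 500, 490, 480, 230, 210]

good_candidate = [528, 512, 497, 493, 480, 226, 214]    # hypothetical model output
flat_candidate = [sum(train) / len(train)] * len(holdout)  # ignores seasonality

print(choose_method(train, holdout, good_candidate, m=7))  # candidate
print(choose_method(train, holdout, flat_candidate, m=7))  # seasonal_naive
```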

References

- Hyndman, R. J., & Athanasopoulos, G. "Some simple forecasting methods." Forecasting: Principles and Practice (Python edition). otexts.com/fpppy.

See Also

- Forecasting Methods — overview of all forecasting families

- Exponential Smoothing — when naive is not enough

- ARIMA Models — when ETS is not enough

- Demand calculation — calculator that consumes a forecast

- Power of One — interval-level sensitivity that depends on forecast quality

- [1] Hyndman, R. J., & Athanasopoulos, G. (2021). Forecasting: Principles and Practice (3rd ed.). OTexts.