Quality Management

Quality Management is the practitioner discipline of evaluating, scoring, and improving the quality of customer contacts. Where Performance Management looks at the cumulative agent profile across a measurement period, quality management focuses on the contact itself — the call, chat, email, or interaction that occurred — and asks whether it met the standards the organization has defined for a good outcome.

Quality management is one of the foundational pillars Brad Cleveland identifies in Call Center Management on Fast Forward (4th ed., ICMI Press, 2019). It sits alongside forecasting, scheduling, and performance management as a discipline every operation must execute, and it is the layer that turns abstract definitions of "good service" into observable, scored, coachable evidence.

What practitioners build

Quality management practitioners build the evaluation system that makes contact quality visible and improvable. The deliverables are:

- A scoring rubric — the explicit criteria a contact is judged against, typically organized into categories (greeting, discovery, resolution, soft skills, compliance) with weighted point values.

- A monitoring program — the operational rhythm of selecting contacts, evaluating them, delivering scores back to agents and supervisors, and aggregating to operational dashboards.

- A calibration discipline — the practice of ensuring evaluators score consistently across contacts and across each other, so the rubric produces reliable signal.

- A linkage to coaching — quality findings flow into Coaching and Agent Development, where they become development input rather than just a score.

The quality program is the audit layer for the contact. Without it, the operation has volume metrics, time metrics, and outcome metrics — but no evidence about what actually happened on the contact.
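The monitoring program's aggregation step rolls contact-level evaluations up to an agent-period view for supervisors and dashboards. A minimal sketch of that step follows. The record fields (`contact_id`, `agent_id`, `month`, `score`) and the 0-100 score scale are hypothetical choices for illustration, not a prescribed schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Evaluation:
    """One scored contact (hypothetical schema for illustration)."""
    contact_id: str
    agent_id: str
    month: str    # reporting period, e.g. "2024-03"
    score: float  # assumed 0-100 rubric score


def agent_month_summary(evals: list[Evaluation]) -> dict[tuple[str, str], dict[str, float]]:
    """Roll contact-level scores up to one row per (agent, month).

    The contact count is kept next to the mean so a dashboard can show
    how much evidence sits behind each agent's score.
    """
    buckets: dict[tuple[str, str], list[float]] = defaultdict(list)
    for e in evals:
        buckets[(e.agent_id, e.month)].append(e.score)
    return {
        key: {"contacts": len(scores), "mean_score": round(mean(scores), 1)}
        for key, scores in buckets.items()
    }


evals = [
    Evaluation("c1", "a1", "2024-03", 82.0),
    Evaluation("c2", "a1", "2024-03", 90.0),
    Evaluation("c3", "a2", "2024-03", 75.0),
]
for (agent, month), row in agent_month_summary(evals).items():
    print(agent, month, row)
```

Showing the contact count beside the mean keeps a one-contact score from looking as authoritative as a ten-contact score.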

Methodology / framework

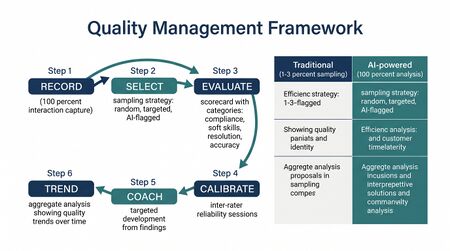

A standard quality management framework has four components:

Rubric design

The rubric defines what "good" looks like. Cleveland and ICMI guidance both emphasize that rubrics must be tied to customer-defined value rather than internal-process compliance. A rubric that scores adherence to a script but ignores whether the customer's problem was solved produces high-scoring contacts that customers hate. Common rubric categories:

- Customer experience — empathy, clarity, ownership, professional tone

- Resolution effectiveness — accuracy, completeness, First Contact Resolution

- Process compliance — required disclosures, security verification, system documentation

- Soft skills — active listening, de-escalation, voice control
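A weighted rubric over these four categories might be scored as in the sketch below. The category weights, the item names, and the met/not-met judgment per item are hypothetical; an operation would set them from its own quality model. Resolution is weighted most heavily here only to illustrate the principle that customer-defined value should outweigh process compliance.

```python
# Hypothetical category weights (must total 100) and observable items per category.
RUBRIC: dict[str, tuple[float, list[str]]] = {
    "customer_experience": (30.0, ["empathy", "clarity", "ownership", "tone"]),
    "resolution": (40.0, ["accurate", "complete", "resolved_first_contact"]),
    "process_compliance": (20.0, ["disclosures", "verification", "documentation"]),
    "soft_skills": (10.0, ["active_listening", "de_escalation"]),
}


def score_contact(observed: dict[str, bool]) -> float:
    """Return a 0-100 score: each category contributes its weight times the
    fraction of its items the evaluator marked as met. Items missing from
    `observed` count as not met."""
    total_weight = sum(weight for weight, _ in RUBRIC.values())
    if abs(total_weight - 100.0) > 1e-9:
        raise ValueError(f"category weights sum to {total_weight}, expected 100")
    score = 0.0
    for weight, items in RUBRIC.values():
        met = sum(1 for item in items if observed.get(item, False))
        score += weight * met / len(items)
    return round(score, 1)


# Every item met except first-contact resolution and de-escalation.
observed = {item: True for _, items in RUBRIC.values() for item in items}
observed["resolved_first_contact"] = False
observed["de_escalation"] = False
print(score_contact(observed))  # 81.7
```

Scoring each category as a fraction of its own items means adding or removing an item changes that category's internal balance without silently shifting weight between categories.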

Sampling design

Full-coverage QA, in which every contact is evaluated, is rarely feasible with human evaluators alone. Sampling-based QA selects a subset. Practitioner guidance (SQM Group, ICMI) typically recommends a minimum of 4-8 contacts per agent per month to produce statistically usable signal, though the right number depends on contact volume, score variance, and the decisions the score will drive. Speech analytics and AI-assisted QA change this calculation by making 100% coverage technically feasible, but they shift the practitioner discipline from sampling to model validation.
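The dependence on score variance can be made concrete by treating an agent's monthly score as the mean of randomly sampled contacts. Under that assumption, with a per-contact standard deviation σ, the standard error of the monthly mean is σ/√n, and the sample needed for a margin of error E at roughly 95% confidence is n ≈ (1.96σ/E)². The value σ = 10 points below is purely illustrative, and the finite-population correction is ignored.

```python
import math


def standard_error(sigma: float, n: int) -> float:
    """Standard error of an agent's mean QA score over n randomly sampled contacts."""
    return sigma / math.sqrt(n)


def contacts_needed(sigma: float, margin: float, z: float = 1.96) -> int:
    """Smallest n for which z * sigma / sqrt(n) <= margin (normal approximation)."""
    return math.ceil((z * sigma / margin) ** 2)


SIGMA = 10.0  # illustrative per-contact score standard deviation, in rubric points

for n in (1, 2, 4, 8, 16):
    half_width = 1.96 * standard_error(SIGMA, n)
    print(f"{n:2d} contacts/month: agent mean is +/- {half_width:.1f} points")

print("contacts for +/- 5 points:", contacts_needed(SIGMA, margin=5.0))
```

Under these assumptions, 4 contacts leave a margin of about ±9.8 points and about 16 are needed to reach ±5, which is why the right sample size depends on score variance and on how fine a distinction the score has to support.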

Calibration

Calibration sessions are recurring meetings where evaluators score the same contacts independently and then reconcile differences. Without calibration, the rubric's signal degrades: different evaluators score differently, and an agent's score depends as much on the evaluator as on the contact. SQM Group research (The First Contact Resolution Book, SQM Press) and ICMI guidance both treat calibration cadence as a core quality-program metric, typically weekly or every two weeks with all evaluators participating.
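One simple way to turn a calibration session into a trackable agreement metric is to compare each evaluator with the per-contact consensus. The sketch below assumes the median score is the consensus and uses a ±5-point tolerance; both choices and the session data are illustrative. Formal reliability statistics such as Cohen's kappa or the intraclass correlation coefficient are common alternatives.

```python
from statistics import mean, median

# Hypothetical calibration session: each evaluator independently scored the
# same three contacts (assumed 0-100 scale).
SESSION: dict[str, dict[str, float]] = {
    "eval_a": {"c1": 85, "c2": 70, "c3": 92},
    "eval_b": {"c1": 88, "c2": 72, "c3": 90},
    "eval_c": {"c1": 78, "c2": 60, "c3": 84},
}


def calibration_report(session: dict[str, dict[str, float]], tolerance: float = 5.0):
    """Compare each evaluator to the per-contact consensus (median score).

    Returns each evaluator's mean signed deviation ("bias", positive means
    they score high) and the share of their scores within +/- tolerance.
    """
    contacts = list(next(iter(session.values())).keys())
    consensus = {c: median(scores[c] for scores in session.values()) for c in contacts}
    report = {}
    for evaluator, scores in session.items():
        deviations = [scores[c] - consensus[c] for c in contacts]
        within = sum(abs(d) <= tolerance for d in deviations) / len(deviations)
        report[evaluator] = {"bias": round(mean(deviations), 1), "within_tol": round(within, 2)}
    return report


for evaluator, row in calibration_report(SESSION).items():
    print(evaluator, row)
```

In this invented session, `eval_c` scores consistently below consensus on every contact, which is the kind of pattern the reconciliation discussion should address.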

Feedback loop

A quality score that doesn't reach the agent in time to influence behavior is a recordkeeping exercise. Modern quality programs target same-week or even same-day feedback delivery, with the score followed by a coaching conversation rather than just a number on a dashboard.
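Feedback timeliness can be tracked as a business-day latency against the program's SLA. The sketch below measures from the contact date to the date of the coaching conversation and defaults to a 5-business-day SLA; the choice of start date, the function names, and the omission of holidays are all simplifying assumptions.

```python
from datetime import date, timedelta


def business_days_between(start: date, end: date) -> int:
    """Count weekdays (Mon-Fri) after `start` up to and including `end`.
    Holidays are ignored in this simplified sketch."""
    days = 0
    current = start
    while current < end:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            days += 1
    return days


def feedback_on_time(contact_date: date, coached_date: date, sla_business_days: int = 5) -> bool:
    """True if the coaching conversation happened within the feedback SLA."""
    return business_days_between(contact_date, coached_date) <= sla_business_days


# Contact on Friday 2024-03-01: coached the next Friday vs. the Monday after.
print(feedback_on_time(date(2024, 3, 1), date(2024, 3, 8)))
print(feedback_on_time(date(2024, 3, 1), date(2024, 3, 11)))
```

Reporting the share of evaluations delivered on time, rather than an average latency, keeps a few very late reviews from hiding behind many fast ones.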

Practitioner playbook

- Define the quality model. Decide what the program is measuring and why. If the operation hasn't defined what good looks like, the rubric will accidentally codify whatever the loudest stakeholder cares about.

- Build the rubric. Limit it. A 60-line rubric is unreliable; a 12-line rubric is operable. Each line should be observable in the contact and tied to a behavior the agent can change.

- Pilot with a small evaluator team. Score 50-100 contacts before rolling the rubric out. Discrepancies surface rubric ambiguities that must be fixed before scale.

- Establish calibration cadence. Weekly or every two weeks. Track inter-rater agreement as a program metric; if it drops, the rubric or the evaluators need attention.

- Wire feedback to coaching. QA scores flow to supervisors and agents within a defined SLA — typically 5 business days or faster. A score without a conversation is wasted work.

- Layer in analytics. Once manual QA is stable, add speech analytics or AI-assisted scoring to expand coverage. Use AI to flag high-risk contacts and patterns; keep human evaluators for nuance and calibration.
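The pilot step's goal, surfacing rubric items that evaluators read differently, can be sketched by measuring agreement per item rather than per contact. The data layout, item names, and 0.8 threshold below are hypothetical. Raw percent agreement does not correct for chance agreement and is used here only for simplicity.

```python
from itertools import combinations

# Hypothetical pilot data: per contact, each evaluator's met/not-met call per item.
PILOT: dict[str, dict[str, dict[str, bool]]] = {
    "c1": {"ev1": {"greeting": True, "ownership": True}, "ev2": {"greeting": True, "ownership": False}},
    "c2": {"ev1": {"greeting": True, "ownership": False}, "ev2": {"greeting": True, "ownership": True}},
    "c3": {"ev1": {"greeting": False, "ownership": True}, "ev2": {"greeting": False, "ownership": True}},
}


def item_agreement(pilot: dict[str, dict[str, dict[str, bool]]]) -> dict[str, float]:
    """Share of evaluator pairs, across all pilot contacts, that made the same
    call on each item. An item one evaluator left blank counts as disagreement."""
    agree: dict[str, int] = {}
    pairs: dict[str, int] = {}
    for ratings in pilot.values():
        for (_, a), (_, b) in combinations(ratings.items(), 2):
            for item in a:
                pairs[item] = pairs.get(item, 0) + 1
                agree[item] = agree.get(item, 0) + (a[item] == b.get(item))
    return {item: agree[item] / pairs[item] for item in pairs}


def ambiguous_items(pilot: dict[str, dict[str, dict[str, bool]]], threshold: float = 0.8) -> list[str]:
    """Items whose pairwise agreement falls below threshold: candidates for rewording."""
    return sorted(item for item, rate in item_agreement(pilot).items() if rate < threshold)


print(item_agreement(PILOT))
print(ambiguous_items(PILOT))
```

Low agreement on an item usually points to wording that leaves room for interpretation, which is cheaper to fix during the pilot than after rollout.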

Common failure modes

- Rubric drift. The rubric accumulates items over years until it is unscorable. Symptom: evaluators are skipping criteria or fabricating scores.

- Compliance theater. The rubric is dominated by checkbox items (greeting word-for-word, recording the case number) and starves the customer-experience dimensions. High scores correlate poorly with customer outcomes.

- No calibration. Scores diverge by evaluator. Agents learn which evaluator they "want" and the program loses credibility.

- Score-as-discipline. Quality scores are treated as a punishment input rather than a development input. Agents learn to game the rubric and stop engaging with the underlying skill development.

- Disconnected from FCR and CX. Quality scores rise while First Contact Resolution, CSAT, and escalation rates don't move. The rubric is measuring the wrong thing.

- Samples too small. One or two contacts per agent per month produce a score that is mostly noise. Decisions made on that signal are arbitrary.

Maturity Model Position

In the WFM Labs Maturity Model™:

- Level 1 — Initial (Emerging Operations) organizations have ad-hoc quality monitoring — supervisors listen occasionally, no rubric, no aggregation. Quality is an opinion.

- Level 2 — Foundational (Traditional WFM Excellence) organizations run a structured QA program with a documented rubric, sampled monitoring, and regular calibration. Scores feed individual reviews. The discipline is typically owned by a QA team operating in parallel to operations.

- Level 3 — Progressive (Breaking the Monolith) organizations integrate QA findings with First Contact Resolution, CX, and Variance Harvesting — quality signal drives operational change in addition to individual coaching. Speech analytics expands sample coverage; the rubric is reviewed and revised quarterly.

- Level 4 — Advanced (The Ecosystem Emerges) organizations operate AI-assisted QA at near-100% coverage. The quality model is continuously validated against customer outcomes (CSAT, retention, complaint volume), and rubric weights are tuned by which behaviors actually predict outcomes. The QA team's role shifts from scoring to model governance and exception review.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) organizations treat quality as a closed-loop system where contact-level signal flows in real time to coaching, to product/process improvement, to forecasting (recurring contact themes signal upstream issues), and to the value routing decisions about which contacts deserve which kind of treatment. Quality is no longer a department; it is a layer of the operating system.
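The Level 4 practice of tuning rubric weights by which behaviors predict outcomes can start with a per-item comparison like the sketch below. It assumes each evaluated contact has a linked CSAT response on a 1-5 scale; the item names and data are hypothetical. A raw difference in means is only a first screen, since it does not control for contact type or for the other items, and a regression model would be the usual next step.

```python
from statistics import mean

# Hypothetical evaluated contacts: rubric item results plus the customer's CSAT (1-5).
CONTACTS: list[tuple[dict[str, bool], int]] = [
    ({"ownership": True, "script_greeting": True}, 5),
    ({"ownership": True, "script_greeting": False}, 5),
    ({"ownership": False, "script_greeting": True}, 2),
    ({"ownership": False, "script_greeting": False}, 3),
    ({"ownership": True, "script_greeting": True}, 4),
    ({"ownership": False, "script_greeting": True}, 2),
]


def item_csat_lift(contacts: list[tuple[dict[str, bool], int]]) -> dict[str, float]:
    """Mean CSAT when an item was met minus mean CSAT when it was not.
    Items met (or missed) on every contact are skipped: there is nothing to compare."""
    items = contacts[0][0].keys()
    lift = {}
    for item in items:
        met = [csat for results, csat in contacts if results[item]]
        missed = [csat for results, csat in contacts if not results[item]]
        if met and missed:
            lift[item] = round(mean(met) - mean(missed), 2)
    return lift


print(item_csat_lift(CONTACTS))
```

In this invented sample, ownership carries a large positive lift while the scripted greeting carries a negative one, the pattern that would argue for shifting weight from the checkbox item toward the customer-experience behavior.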

References

- Cleveland, B. Call Center Management on Fast Forward (4th ed.). ICMI Press, 2019. Primary practitioner treatment of quality monitoring, calibration, and the link to performance management.

- ICMI body of work on quality monitoring and calibration (icmi.com), including the ICMI Quality Monitoring training curriculum.

- SQM Group. The First Contact Resolution Book and SQM's body of FCR/QA research (sqmgroup.com). Quality scoring as a predictor of FCR and CSAT.

- Lee Resources research on quality monitoring sample sizes and inter-rater reliability.

- Hubbard, D. How to Measure Anything. Wiley. The measurement-design discipline that QA program design is a special case of.

See Also

- Interaction Analytics — Automated, full-coverage analysis powering modern QM

- Performance Management — the cumulative agent profile that QA scores feed into

- Coaching and Agent Development — the lever that converts QA findings into agent improvement

- First Contact Resolution — the most important customer-facing outcome QA should be predicting

- Knowledge Management — knowledge gaps surface as quality issues; the QA program is one of the best signals for KB improvement

- Customer Experience Management — the strategic outcome QA quality scores should correlate with

- Adherence and Conformance — separate dimension of agent performance; QA evaluates the contact, adherence evaluates the schedule

- Variance Harvesting — quality findings are one of the highest-value variance signals

- The Escalation Tax — repeat contacts driven by quality failures are a primary source of escalation cost

- Real-Time Operations — modern speech analytics push quality signal into the real-time layer