Performance Management

Performance Management is the operating discipline of setting expectations for agents and teams, measuring against those expectations, providing feedback, developing capability, and recalibrating targets as the operation evolves. Where Quality Management evaluates the individual contact, performance management evaluates the cumulative agent — the rolled-up profile of behavior, results, and growth across a measurement period.

Brad Cleveland frames performance management in Call Center Management on Fast Forward (4th ed., ICMI Press, 2019) as the connective tissue of the operation: the cycle through which strategy, metrics, and individual behavior are kept in alignment. Without it, an operation accumulates measurement systems that don't drive change.

What practitioners build

Performance management practitioners build the system that turns operational measurement into agent-level action. The deliverables are:

- A performance model — the explicit set of dimensions an agent is evaluated against (typically adherence, quality, FCR, AHT or productivity, CSAT, and behavioral/development dimensions).

- A goal-setting framework — how targets are set, who sets them, and how they connect to operational and business outcomes.

- A feedback cadence — the operational rhythm of one-on-ones, scorecard reviews, mid-cycle checks, and formal reviews.

- A development pathway — the structure that moves an agent from "meeting expectations" to "growing capability," tied to Coaching and Agent Development.

- A recalibration discipline — the practice of revisiting targets when the operation, the work, or the customer changes.

The performance model is what makes "high performer" and "low performer" mean something specific in the operation. Without a defined model, those labels are opinions, and the resulting decisions (assignments, promotions, separations) are arbitrary.
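One way to make the model explicit is to write it down as data and validate it. The sketch below is illustrative only: the `Dimension` class, the `validate_model` helper, and the example weights are assumptions made for this example, not values prescribed by Cleveland or ICMI.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dimension:
    """One dimension of a hypothetical performance model."""
    name: str
    weight: float                  # share of the composite score, between 0 and 1
    higher_is_better: bool = True  # False for metrics such as AHT


def validate_model(dimensions):
    """Raise ValueError if names repeat or weights are not positive and summing to 1."""
    names = [d.name for d in dimensions]
    if len(set(names)) != len(names):
        raise ValueError("dimension names must be unique")
    if any(d.weight <= 0 for d in dimensions):
        raise ValueError("every dimension needs a positive weight")
    total = sum(d.weight for d in dimensions)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"weights must sum to 1.0, got {total:.3f}")


# Example weights are assumptions chosen for illustration.
MODEL = [
    Dimension("adherence", 0.15),
    Dimension("quality", 0.25),
    Dimension("fcr", 0.25),
    Dimension("aht", 0.15, higher_is_better=False),
    Dimension("csat", 0.20),
]

validate_model(MODEL)  # passes silently for a well-formed model
```

Keeping the model in one validated structure means a label such as "high performer" can be traced back to named dimensions and stated weights.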

Methodology / framework

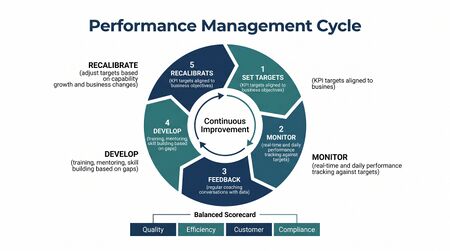

The performance management cycle, as Cleveland and ICMI describe it, has five phases that repeat continuously:

1. Target

Targets are set at multiple levels — operational targets (the queue's Service Level), team targets (the supervisor's roll-up), and individual targets (the agent's scorecard). Modern practice uses adaptations of SMART (specific, measurable, achievable, relevant, time-bound) or OKRs scoped to the contact center context. The target should be derived from operational reality — what the queue actually needs — rather than imposed top-down.

2. Monitor

Measurement against targets happens in real time (dashboards), daily (the agent's scorecard view), and at review cadence (weekly, monthly, quarterly). The performance management system is the aggregation layer above the raw measurements; it normalizes, weights, and surfaces the rolled-up profile.
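A minimal sketch of the "normalize and weight" step, assuming each dimension is scaled to a 0..1 range between a chosen worst and best value and then combined as a weighted average. The function names, bounds, weights, and agent figures are hypothetical; a real system may normalize differently (for example, against percentiles or targets).

```python
def normalize(value: float, worst: float, best: float) -> float:
    """Map a raw metric onto 0..1 (worst -> 0, best -> 1), clamped to that range.

    Lower-is-better metrics such as AHT are handled by passing worst > best.
    """
    if worst == best:
        raise ValueError("worst and best must differ")
    score = (value - worst) / (best - worst)
    return max(0.0, min(1.0, score))


def composite_score(raw: dict, bounds: dict, weights: dict) -> float:
    """Weighted average of the normalized dimension scores, on a 0..1 scale."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(
        weights[name] * normalize(raw[name], *bounds[name]) for name in weights
    )
    return weighted / total_weight


# Hypothetical (worst, best) bounds and weights, for illustration only.
BOUNDS = {
    "quality": (60, 100),   # QA score, percent
    "fcr": (0.5, 0.9),      # first contact resolution rate
    "aht": (600, 300),      # seconds; lower is better, so worst > best
    "csat": (3.0, 5.0),     # 1-5 survey scale
}
WEIGHTS = {"quality": 0.3, "fcr": 0.3, "aht": 0.2, "csat": 0.2}

agent = {"quality": 90, "fcr": 0.8, "aht": 450, "csat": 4.5}
score = composite_score(agent, BOUNDS, WEIGHTS)
print(f"composite score: {score:.2f}")
```

Clamping keeps one extreme value from dominating the rolled-up profile, at the cost of hiding how far outside the bounds an agent is; whether that tradeoff is acceptable is a design choice.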

3. Feedback

Feedback delivery is the most failure-prone step. Cleveland is explicit: feedback that arrives weeks after the behavior is mostly useless. Performance management programs that work compress the feedback loop — scorecard updates within days, coaching conversations within the week, formal reviews on a regular cadence.

4. Develop

The performance model identifies gaps; Coaching and Agent Development closes them. The handoff is critical: a performance review that identifies "low FCR" without a development plan to improve it is just a complaint.

5. Recalibrate

Targets must move when the operation moves. New product launches, channel shifts, contact-mix changes, and seasonal patterns all change what is achievable and what is needed. Performance models that don't recalibrate become disconnected from operational reality and lose credibility.
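A recalibration review can start from a simple attainment check: what share of agents currently meets each target? The sketch below flags targets that almost nobody or almost everybody meets. The 10% and 90% thresholds, the function names, and the sample data are assumptions for illustration; a flag is a prompt for review, not a verdict.

```python
def attainment_rate(values, target, higher_is_better=True):
    """Share of agents whose metric meets the target."""
    if not values:
        raise ValueError("need at least one agent value")
    if higher_is_better:
        met = sum(1 for v in values if v >= target)
    else:
        met = sum(1 for v in values if v <= target)
    return met / len(values)


def recalibration_flag(values, target, higher_is_better=True, low=0.10, high=0.90):
    """Classify a target by how many agents meet it (thresholds are assumptions)."""
    rate = attainment_rate(values, target, higher_is_better)
    if rate < low:
        return "possibly unreachable"
    if rate > high:
        return "possibly too easy"
    return "ok"


# Hypothetical team data: FCR rates and AHT in seconds for ten agents.
fcr = [0.62, 0.65, 0.68, 0.70, 0.71, 0.73, 0.74, 0.76, 0.78, 0.80]
aht = [380, 400, 410, 420, 430, 440, 450, 455, 460, 470]

print(recalibration_flag(fcr, target=0.85))                          # no agent meets it
print(recalibration_flag(fcr, target=0.72))                          # half the team meets it
print(recalibration_flag(aht, target=480, higher_is_better=False))   # every agent meets it
```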

Practitioner playbook

- Choose the model. Decide which dimensions matter and how they weight together. The model should be visible to agents — they should be able to recite what they are being measured on without looking it up.

- Set baselines, not arbitrary targets. Before setting a target, measure the current distribution. Set the target relative to that distribution (e.g., 75th percentile) rather than picking a round number.

- Define cadence. Operational scorecard updates daily; one-on-ones weekly or bi-weekly; formal reviews quarterly or semi-annually. Document who owns each touchpoint.

- Build supervisor capacity. The performance management cycle runs through supervisors. If supervisors are overloaded (too many direct reports, too much administrative load), the cycle breaks regardless of how well it is designed.

- Connect to compensation carefully. Tying compensation to performance scores creates strong gaming incentives. Cleveland and ICMI both recommend linking compensation to outcome metrics (CSAT, retention, FCR) rather than process metrics (AHT, adherence) where possible. Recognition programs are often more effective than direct compensation linkage.

- Run the recalibration. Quarterly: are the targets still right? Have any of them become unreachable or too easy? Are new dimensions emerging that should be in the model?
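The "set baselines, not arbitrary targets" step in the playbook above can be sketched with the standard library. This example assumes the target is the 75th percentile of current performance and uses `statistics.quantiles` with the inclusive method; the `baseline_target` helper and the sample values are hypothetical.

```python
import statistics


def baseline_target(values, percentile=75, higher_is_better=True):
    """Return the metric value at the given performance percentile.

    For lower-is-better metrics (such as AHT), the 75th percentile of
    performance corresponds to the 25th percentile of the raw values.
    """
    if len(values) < 2:
        raise ValueError("need at least two observations")
    if not 1 <= percentile <= 99:
        raise ValueError("percentile must be between 1 and 99")
    # n=100 yields 99 cut points; cuts[k - 1] is the k-th percentile.
    cuts = statistics.quantiles(values, n=100, method="inclusive")
    k = percentile if higher_is_better else 100 - percentile
    return cuts[k - 1]


# Hypothetical current distributions for a small team.
fcr_rates = [0.60, 0.64, 0.68, 0.72, 0.76]
aht_seconds = [300, 340, 380, 420, 460]

fcr_target = baseline_target(fcr_rates)                           # higher is better
aht_target = baseline_target(aht_seconds, higher_is_better=False)
print(f"FCR target: {fcr_target:.2f}, AHT target: {aht_target:.0f}s")
```

With very small teams the percentile is sensitive to individual agents, so a baseline taken over a longer window or a larger population is likely to be more stable.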

Common failure modes

- Too many metrics. A 14-dimension scorecard is a scorecard the agent can't act on. The performance model should have 4 to 7 dimensions, weighted explicitly.

- Conflicting metrics. AHT and FCR pull in opposite directions; quality and productivity often do as well. If the model doesn't acknowledge tradeoffs, agents resolve them privately and unpredictably.

- Target by fiat. Targets set without reference to the baseline distribution or operational reality lose credibility. When a target is genuinely unreachable, an agent who appears to exceed it is more likely gaming the metric than performing well.

- Feedback delay. Feedback that arrives only in monthly or quarterly reviews comes too late to influence behavior on the contacts that produced the score.

- Compliance lens. The performance program is treated as a documentation exercise for separations rather than a development engine. Supervisors learn to prepare performance improvement plans rather than develop performers.

- No recalibration. The targets set at launch are still in place three years later, even though the work has changed.

- Decoupled from quality and FCR. Performance scores rise while quality, FCR, and customer outcomes are flat or declining. The model is measuring the wrong things.
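The last failure mode above, scores decoupled from outcomes, can be checked with a simple correlation between agents' performance scores and an outcome metric. The sketch below computes a Pearson coefficient by hand; the `looks_decoupled` helper, the 0.2 threshold, and the sample data are assumptions for illustration. Correlation on small samples is noisy and does not establish causation, so a low value is a reason to investigate the model rather than proof that it is wrong.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


def looks_decoupled(scores, outcomes, min_r=0.2):
    """True when scores and outcomes correlate below an assumed threshold."""
    return pearson(scores, outcomes) < min_r


# Hypothetical per-agent composite scores and customer-outcome rates.
scores = [0.55, 0.60, 0.70, 0.80, 0.90]
fcr_aligned = [0.62, 0.66, 0.70, 0.75, 0.81]   # rises with the score
fcr_inverted = [0.80, 0.76, 0.72, 0.66, 0.60]  # falls as the score rises

print(looks_decoupled(scores, fcr_aligned))    # scores track the outcome
print(looks_decoupled(scores, fcr_inverted))   # scores move against the outcome
```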

Maturity Model Position

Performance management exists at every maturity level — every operation has some way of evaluating agents — but what the discipline looks like changes dramatically by level.

In the WFM Labs Maturity Model™:

- Level 1 — Initial (Emerging Operations) organizations evaluate agents informally — supervisor opinion, occasional formal reviews driven by HR cycles, no consistent scorecard. Performance is a story, not data.

- Level 2 — Foundational (Traditional WFM Excellence) organizations run a structured performance management program with a defined scorecard, regular reviews, and documented goals. The scorecard typically emphasizes productivity and adherence; quality and FCR are present but weighted more lightly. The discipline is supervisor-owned and HR-supported.

- Level 3 — Progressive (Breaking the Monolith) organizations rebalance the scorecard toward customer-outcome dimensions — FCR, CSAT, escalation avoidance — and away from pure productivity. Feedback cadence compresses. The performance system explicitly connects to coaching rather than just documenting a score. Variance Harvesting is integrated as a development moment rather than treated as an adherence violation.

- Level 4 — Advanced (The Ecosystem Emerges) organizations operate performance management as an analytic system — the model is continuously validated against business outcomes, weights are tuned, and predictive signals (early indicators of struggle, of growth, of attrition risk) are surfaced before they show up in the scorecard. Cross-functional metrics (value-routing outcomes, customer-lifetime impact) enter the model.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) organizations treat agent performance as a node in an enterprise outcomes graph: an agent's behavior is evaluated by its measured contribution to customer outcomes (retention, expansion, satisfaction) at the customer level. Compensation, development, and assignment are driven by causal contribution rather than process metrics. The performance "system" is a continuous closed loop integrating quality, FCR, CX, escalation cost, and business outcomes.

References

- Cleveland, B. Call Center Management on Fast Forward (4th ed.). ICMI Press, 2019. Primary practitioner treatment of the performance management cycle, scorecards, and the link from measurement to action.

- ICMI body of work on contact center performance management (icmi.com), including ICMI's certified contact center supervisor curriculum.

- Doerr, J. Measure What Matters. Portfolio, 2018. Source on OKR adaptation for operational contexts.

- Bersin/Deloitte research on continuous performance management.

- Hubbard, D. How to Measure Anything. Wiley. Foundational treatment of measurement design that performance scorecards inherit from.

See Also

- Quality Management — contact-level evaluation that feeds the performance scorecard

- Coaching and Agent Development — the development engine downstream of performance findings

- Adherence and Conformance — one performance dimension; signal-not-violation in modern practice

- Schedule Quality Metrics — operational quality lens, complement to agent-quality lens

- First Contact Resolution — primary outcome dimension belonging in any modern scorecard

- Customer Experience Management — strategic outcome the performance model should correlate with

- Speed to proficiency curve — performance trajectory during ramp; the scorecard must distinguish ramping agents from tenured ones

- Variance Harvesting — performance moments that the variance practice converts into development

- Value-Based Planning Model — strategic frame within which performance management operates

- WFM Roles — supervisor and team-leader roles that own the performance management cycle

- Frontline Leader WFM Literacy

- Sentiment Analysis and CX Signal Integration