Value Routing Model

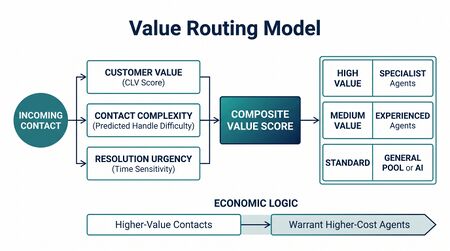

The Value Routing Model is the methodology that operationalizes the Value dimension of the Three-Pool Architecture routing decision. The Value-Based Planning Model specifies that interactions route to pools based on Value Score (1-10) and AI Capability (%); the Value Routing Model decomposes the Value Score into a composite of four evidence-based sub-dimensions, each scored separately for human and AI handling, with the differential driving the routing recommendation.

The model is a Level 4 — Advanced (The Ecosystem Emerges) framework on the WFM Labs Maturity Model™. Calibration relies on standard CX measurement infrastructure (CLV models, post-interaction CES surveys, conversion tracking, churn analytics) — Level 3 prerequisites for most operations.

The composite formula

The Value Score for each interaction type is the weighted sum of four dimensions:

Value = w_1 · CLV Impact + w_2 · Customer Effort Score + w_3 · Revenue Opportunity + w_4 · Churn Risk

Each dimension is scored 0-10 separately for human and AI handling. The differential between human and AI composite scores drives the routing recommendation: when human composite materially exceeds AI composite for an interaction type, route to a human pool; when AI composite is competitive, route to AI.

The four weights w_1 through w_4 sum to 1 and are set by organizational priority. There is no universal weighting; an operation that competes on retention should weight Churn Risk and CLV Impact more heavily, while an operation that competes on commercial expansion should weight Revenue Opportunity more heavily.
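The composite and the human-AI differential can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function names (`normalize_weights`, `composite`, `differential`), the dictionary keys, and all scores and weights below are hypothetical values chosen for the example.

```python
DIMENSIONS = ("clv_impact", "customer_effort", "revenue_opportunity", "churn_risk")


def normalize_weights(raw):
    """Scale raw priority weights so that w_1..w_4 sum to 1."""
    total = sum(raw[d] for d in DIMENSIONS)
    if total <= 0:
        raise ValueError("weights must have a positive sum")
    return {d: raw[d] / total for d in DIMENSIONS}


def composite(scores, weights):
    """Weighted sum of the four 0-10 dimension scores."""
    for d in DIMENSIONS:
        if not 0 <= scores[d] <= 10:
            raise ValueError(f"{d} score must be between 0 and 10")
    return sum(weights[d] * scores[d] for d in DIMENSIONS)


def differential(human_scores, ai_scores, weights):
    """Human composite minus AI composite; positive values favor human handling."""
    return composite(human_scores, weights) - composite(ai_scores, weights)


# Hypothetical retention-focused weighting and scores for one interaction type.
weights = normalize_weights(
    {"clv_impact": 3, "customer_effort": 1, "revenue_opportunity": 2, "churn_risk": 4}
)
human = {"clv_impact": 9, "customer_effort": 6, "revenue_opportunity": 7, "churn_risk": 9}
ai = {"clv_impact": 5, "customer_effort": 8, "revenue_opportunity": 3, "churn_risk": 4}

print(round(composite(human, weights), 2), round(composite(ai, weights), 2))
print(round(differential(human, ai, weights), 2))
```

What counts as a "material" differential is left to the operation; the sketch only computes the number.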

The four dimensions

CLV Impact

How much does this interaction change the customer's lifetime value? McKinsey's CLV literature treats CLV as the organizing metric for customer investment decisions; Forrester's interaction-optimization research recommends individual-level CLV calculation rather than segment-level averages.

How to measure:

- Segment customers by value tier. The familiar Pareto pattern holds — the top 20% of accounts often drive 80% of revenue.

- Track interaction type → subsequent behavior (churn, upgrade, cross-sell, NPS change) over a 90-180 day window.

- Attribute the delta in CLV to interaction type and channel.

Scoring: 0 = no CLV impact (e.g., password reset, address change). 10 = direct CLV determinant (e.g., retention save, contract renewal).

For Pool AA candidates, CLV Impact should be low (≤4); for Pool Spec candidates, CLV Impact should be high (≥7) under human handling, AI handling, or both.
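The attribution step in the measurement list above can be sketched as a simple grouping. The record layout (`clv_before`, `clv_after`, `interaction_type`, `channel`) is an assumption made for illustration, and the result is an observational average, not a causal estimate: customers are not randomly assigned to channels in historical data.

```python
from collections import defaultdict
from statistics import mean


def clv_delta_by_type_and_channel(records):
    """Mean CLV change per (interaction_type, channel) over the tracking window."""
    deltas = defaultdict(list)
    for r in records:
        key = (r["interaction_type"], r["channel"])
        deltas[key].append(r["clv_after"] - r["clv_before"])
    return {key: mean(values) for key, values in deltas.items()}


# Hypothetical records: CLV estimated at contact time and again 180 days later.
records = [
    {"interaction_type": "retention_save", "channel": "human",
     "clv_before": 1200.0, "clv_after": 1500.0},
    {"interaction_type": "retention_save", "channel": "human",
     "clv_before": 900.0, "clv_after": 1000.0},
    {"interaction_type": "retention_save", "channel": "ai",
     "clv_before": 1100.0, "clv_after": 900.0},
    {"interaction_type": "password_reset", "channel": "ai",
     "clv_before": 800.0, "clv_after": 800.0},
]

clv_deltas = clv_delta_by_type_and_channel(records)
for key, delta in sorted(clv_deltas.items()):
    print(key, delta)
```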

Customer Effort Score (CES)

Research published in Harvard Business Review, drawing on 75,000 interactions, found that reducing customer effort is more predictive of loyalty than increasing satisfaction. The headline figures: 94% of low-effort customers say they will repurchase, and 88% say they will increase spend.[1]

Important: AI scores higher than human on CES for many routine call types. With no hold time, instant resolution, and 24/7 availability, AI delivers lower effort than human handling for password resets, balance checks, and FAQ-style inquiries. CES is one of the few dimensions in which AI handling routinely beats human handling on the customer side.

How to measure:

- Post-interaction CES survey per call type, per channel (human vs AI).

- Calculate revenue impact of the CES differential using the 94% / 88% loyalty multipliers.

Scoring: 0 = high effort, customer frustrated. 10 = effortless, instant, no friction.
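Survey responses need to be mapped onto the 0-10 scoring scale. A minimal sketch, assuming a 1-7 agreement scale on which higher answers mean lower effort (for example, agreement with "the company made it easy to handle my issue"). The scale bounds, the function name `ces_to_score`, and the sample responses are assumptions; invert the scale first if your survey runs the other way.

```python
from statistics import mean


def ces_to_score(responses, scale_min=1, scale_max=7):
    """Linearly rescale the mean survey response onto the 0-10 dimension scale."""
    if not responses:
        raise ValueError("need at least one survey response")
    average = mean(responses)
    return 10 * (average - scale_min) / (scale_max - scale_min)


# Hypothetical post-interaction responses for one call type, split by channel.
human_responses = [5, 6, 4, 5]
ai_responses = [7, 6, 7, 6]

human_ces = ces_to_score(human_responses)
ai_ces = ces_to_score(ai_responses)
print(round(human_ces, 2), round(ai_ces, 2), round(ai_ces - human_ces, 2))
```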

Revenue Opportunity

Cross-sell, upsell, and direct revenue generation potential. Sales operations have routed on value for decades — highest-value lead to the closer first. Service operations face the same question: which interactions have hidden revenue potential that human handling can unlock but AI cannot?

How to measure:

- Track conversion rates per interaction type when handled by human vs AI.

- Compute expected revenue per interaction: P(conversion) × average deal value.

- Use conjoint analysis (Harvard Business School Online) to measure customer willingness-to-accept offers by channel.

Scoring: 0 = zero revenue potential (address change, status query). 10 = direct revenue driver (upgrade inquiry, plan-change request, cancellation save with offer).

This dimension is particularly susceptible to under-counting in legacy operations because service-channel revenue is rarely attributed to service handling. CRM-side attribution work is a Level 3 prerequisite for honest scoring here.
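The expected-revenue formula from the measurement list, P(conversion) × average deal value, is easy to compute per channel once conversions are attributed. The sketch below uses hypothetical counts and a hypothetical deal value; `expected_revenue` is an illustrative name.

```python
def expected_revenue(conversions, interactions, average_deal_value):
    """Expected revenue per interaction: P(conversion) x average deal value."""
    if interactions <= 0:
        raise ValueError("interactions must be positive")
    return (conversions / interactions) * average_deal_value


# Hypothetical upgrade-inquiry data for the same period, split by channel.
human_revenue = expected_revenue(conversions=120, interactions=1000, average_deal_value=250.0)
ai_revenue = expected_revenue(conversions=40, interactions=1000, average_deal_value=250.0)

# Revenue per interaction that human handling adds over AI handling.
human_uplift = human_revenue - ai_revenue
print(round(human_revenue, 2), round(ai_revenue, 2), round(human_uplift, 2))
```

How the dollar uplift maps onto the 0-10 score is a calibration choice the source does not prescribe.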

Churn Risk

Risk of customer loss if the interaction is mishandled or routed to the wrong channel. Research consistently shows 65% of customers prefer human interaction when strong emotions are involved.[2] The Escalation Tax is the cost-side companion: a $0.20 marginal AI interaction has a $2.06 expected cost when the full cascade is modeled — and that cost is dominated by retention impact, not handling cost.

How to measure:

- Track churn rates within 90 days of interaction, segmented by interaction type and channel.

- Compute expected churn cost: P(churn | channel) × CLV lost.

- For interaction types without historical data, use Hubbard's Applied Information Economics (AIE) calibrated estimation.[3]

Scoring: 0 = no churn risk (routine inquiry). 10 = high churn risk (cancellation, complaint, regulatory escalation, billing dispute).

This dimension is the strongest single argument against high-containment defaults: an interaction with Churn Risk ≥ 7 belongs in Pool Spec regardless of AI Capability, because the expected cost of mis-routing dominates handling cost.
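The two measurement steps above, a 90-day churn rate per interaction type and channel followed by P(churn | channel) × CLV lost, can be sketched as follows. The record fields (`days_to_churn`, with `None` for customers who have not churned) and the figures are assumptions for illustration.

```python
from collections import defaultdict


def churn_rates(records, window_days=90):
    """Share of interactions followed by churn within the window, per (type, channel)."""
    counts = defaultdict(lambda: [0, 0])  # key -> [churned, total]
    for r in records:
        key = (r["interaction_type"], r["channel"])
        counts[key][1] += 1
        days = r["days_to_churn"]  # None means the customer has not churned
        if days is not None and days <= window_days:
            counts[key][0] += 1
    return {key: churned / total for key, (churned, total) in counts.items()}


def expected_churn_cost(p_churn, clv_lost):
    """Expected churn cost per interaction: P(churn | channel) x CLV lost."""
    return p_churn * clv_lost


# Hypothetical billing-dispute interactions.
records = [
    {"interaction_type": "billing_dispute", "channel": "human", "days_to_churn": None},
    {"interaction_type": "billing_dispute", "channel": "human", "days_to_churn": None},
    {"interaction_type": "billing_dispute", "channel": "human", "days_to_churn": 30},
    {"interaction_type": "billing_dispute", "channel": "human", "days_to_churn": None},
    {"interaction_type": "billing_dispute", "channel": "ai", "days_to_churn": 10},
    {"interaction_type": "billing_dispute", "channel": "ai", "days_to_churn": 120},
    {"interaction_type": "billing_dispute", "channel": "ai", "days_to_churn": 45},
    {"interaction_type": "billing_dispute", "channel": "ai", "days_to_churn": None},
]

rates = churn_rates(records)
for key, rate in sorted(rates.items()):
    print(key, rate, expected_churn_cost(rate, clv_lost=1000.0))
```

Comparing the per-channel expected churn cost against the per-channel handling cost makes the mis-routing argument concrete for a given interaction type.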

Calibration guide

Five-step layered approach, ordered by data accessibility and rigor:

1. Start with CLV tiers. High-value accounts already have CLV models in most enterprises. Map interaction types to CLV impact using existing data.

2. Measure CES per channel. This is the cheapest data to collect. A post-interaction CES survey gives effort scores by interaction type and channel within weeks.

3. Apply Hubbard AIE for gaps. For interaction types lacking empirical data, use expert panels with decomposition and calibration training. Hubbard's protocol produces defensible estimates for intangibles.

4. A/B test the marginal interaction types. Randomly route borderline types across channels. Track resolution, repeat contact, CSAT, and churn within 90 days. This is empirical ground truth for routing decisions, not survey data.

5. Run conjoint for customer preference. Choice-based conjoint surveys reveal the dollar value customers implicitly place on human handling per interaction type. The most rigorous method for the preference dimension; warranted only for high-stakes routing decisions.

The five steps are independent of one another, so operations should adopt as many as their measurement infrastructure supports, working through them in the order listed. Step 1 alone produces a defensible composite. Steps 1+2 produce a robust composite. Steps 1-5 produce the Level 5 calibration band.
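For the A/B testing step, one common way to judge whether a churn difference between channels is more than noise is a two-proportion z-test. The source does not prescribe a specific test, so treat this as one reasonable option; the counts are hypothetical and `two_proportion_z_test` is an illustrative name.

```python
import math


def two_proportion_z_test(events_a, n_a, events_b, n_b):
    """Two-sided z-test for a difference between two proportions (pooled variance)."""
    p_a = events_a / n_a
    p_b = events_b / n_b
    pooled = (events_a + events_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical randomized split of a borderline interaction type:
# 90-day churn of 30 in 1,000 for human handling vs 60 in 1,000 for AI handling.
z, p_value = two_proportion_z_test(30, 1000, 60, 1000)
print(round(z, 2), round(p_value, 4))
```

The normal approximation behind this test is only appropriate when each arm has a reasonable number of churn events; for rare events or small samples an exact test is a safer choice.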

Adjacent measurement frameworks

KPMG's Customer Experience Excellence Barometer uses a six-pillar CX model with Shapley Value regression weighting to estimate per-pillar contribution to overall CX outcomes.[4] Operations with mature CX measurement infrastructure can use Shapley regression to derive empirical weights for the four Value Routing dimensions rather than expert-set weights — a Level 5 calibration step.
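A generic Shapley decomposition of regression R² can be sketched as below. This is not KPMG's implementation, whose details are not described here. It assumes you have already fitted a regression for every subset of the four dimensions and can supply the R² for each subset; the R² values in the example are hypothetical, with an overlap between CLV Impact and Churn Risk to mimic correlated predictors.

```python
from itertools import combinations
from math import factorial


def shapley_shares(players, value):
    """Shapley value of each player, where value(frozenset) is e.g. a subset R^2."""
    n = len(players)
    shares = {}
    for p in players:
        others = [q for q in players if q != p]
        total = 0.0
        for k in range(n):
            # Probability weight of a coalition of size k forming before p joins.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = frozenset(subset)
                total += weight * (value(s | {p}) - value(s))
        shares[p] = total
    return shares


DIMENSIONS = ("clv_impact", "customer_effort", "revenue_opportunity", "churn_risk")
SOLO_R2 = {"clv_impact": 0.10, "customer_effort": 0.05,
           "revenue_opportunity": 0.08, "churn_risk": 0.17}  # hypothetical


def subset_r2(subset):
    """Hypothetical R^2 table: additive, minus shared variance of two correlated inputs."""
    r2 = sum(SOLO_R2[d] for d in subset)
    if "clv_impact" in subset and "churn_risk" in subset:
        r2 -= 0.04
    return r2


shares = shapley_shares(DIMENSIONS, subset_r2)
total_share = sum(shares.values())
weights = {d: shares[d] / total_share for d in DIMENSIONS}
print({d: round(w, 3) for d, w in weights.items()})
```

The shares always sum to the full-model R² minus the empty-model R², which is what makes them usable as normalized weights.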

Practitioner playbook

- Catalogue your interaction types from QA samples and contact-driver analysis. Aim for 40-100 distinct types in mid-sized operations.

- Score each type on the four dimensions, separately for human and AI handling. Use historical data first; Hubbard AIE for gaps; conjoint for high-stakes types.

- Set the four weights based on organizational priority. Default if no priority is set: equal weights (0.25 each). Document the weighting rationale so that it can be audited.

- Compute composite Value Scores: one human score and one AI score per interaction type.

- Apply the Three-Pool Architecture routing heuristic. AI Capability > 80% AND Value Score ≤ 4 → Pool AA. AI Capability < 30% OR Value Score ≥ 8 → Pool Spec. Else Pool Collab.

- Sweep the routing thresholds. Re-compute total expected cost, CX, and EX at threshold variations of ±5pp. The interior optimum is rarely exactly at the default 80/30/4/8 thresholds.

- Recalibrate quarterly. Customer behavior, AI capability, and product change all shift the scores. Annual is too slow.
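The routing heuristic and a simple threshold sweep from the playbook above can be sketched as follows. Two assumptions are worth flagging: the heuristic takes a single Value Score, and the text does not say whether the human or the AI composite feeds it; and the sweep here only recounts pool assignments, whereas the playbook calls for re-computing expected cost, CX, and EX at each setting. Parameter names are illustrative.

```python
from collections import Counter


def route(ai_capability, value_score,
          aa_cap=0.80, aa_value=4.0, spec_cap=0.30, spec_value=8.0):
    """Three-pool heuristic with the default 80/30/4/8 thresholds."""
    if ai_capability > aa_cap and value_score <= aa_value:
        return "Pool AA"
    if ai_capability < spec_cap or value_score >= spec_value:
        return "Pool Spec"
    return "Pool Collab"


def sweep_capability_thresholds(interactions, step=0.05):
    """Recount pool assignments with both capability thresholds shifted by +/- step."""
    results = {}
    for delta in (-step, 0.0, step):
        pools = Counter(
            route(cap, value, aa_cap=0.80 + delta, spec_cap=0.30 + delta)
            for cap, value in interactions
        )
        results[round(delta, 2)] = dict(pools)
    return results


# Hypothetical (AI Capability, Value Score) pairs for three interaction types.
interactions = [(0.83, 3.0), (0.50, 5.0), (0.32, 6.0)]
sweep = sweep_capability_thresholds(interactions)
for delta, pools in sweep.items():
    print(delta, pools)
```

Types that change pools within the sweep are the boundary cases worth validating empirically before the thresholds are fixed.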

Interactive tool — Value Routing Model

A live calculator at valuerouting.wfmlabs.com implements the composite scoring, four-dimension weighting, and net-benefit-versus-containment visualization in this article. Use it to:

- Score interaction types across the four dimensions (CLV Impact, Customer Effort, Revenue Opportunity, Churn Risk) for both human and AI handling, then see the routing recommendation update in real time.

- Adjust the four weights interactively. Weights auto-normalize to 100%; the calculator shows how the routing decisions and total expected value shift as priorities change.

- Read the Marginal Value Analysis — a per-interaction-type bar chart showing net benefit (cost saved minus value destroyed) of routing each type to AI, sorted from best AI candidate to worst. The green-to-red crossover identifies the inflection point.

- Inspect the AI containment U-curve (live demonstration of the Interior Optimum). The orange dashed line marks the cost-versus-value optimum. The drop-off after the peak is the visual evidence that 100% AI containment is never the cost-minimizing operating point.

Default seeded interaction types include Password Reset / Account Unlock, Balance & Status Inquiry, Address & Profile Changes, General Product Questions, Service Upgrade / Cross-sell, Retention / Cancellation Save, and Technical Support (Complex). Add your own to mirror your operation's taxonomy.

The tool is the working numerical companion to this article and to the Interior Optimum (containment rate) page. Source paper: Lango (2026), Value-Based Models for Customer Operations.
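The Marginal Value Analysis described above reduces to a sort on net benefit (cost saved minus value destroyed). The sketch below is an offline approximation, not the calculator's actual code; the interaction type names come from the seeded defaults, while the dollar figures are hypothetical.

```python
def marginal_value_analysis(types):
    """Rank interaction types by net benefit of AI routing; return ranking and crossover."""
    ranked = sorted(
        (
            {"name": t["name"], "net_benefit": t["cost_saved"] - t["value_destroyed"]}
            for t in types
        ),
        key=lambda row: row["net_benefit"],
        reverse=True,
    )
    # Index of the first type whose net benefit is negative (len(ranked) if none).
    crossover = next(
        (i for i, row in enumerate(ranked) if row["net_benefit"] < 0), len(ranked)
    )
    return ranked, crossover


# Hypothetical per-interaction figures in dollars.
types = [
    {"name": "Retention / Cancellation Save", "cost_saved": 6.0, "value_destroyed": 40.0},
    {"name": "Password Reset / Account Unlock", "cost_saved": 4.0, "value_destroyed": 0.5},
    {"name": "Service Upgrade / Cross-sell", "cost_saved": 5.0, "value_destroyed": 12.0},
    {"name": "Balance & Status Inquiry", "cost_saved": 3.0, "value_destroyed": 0.5},
]

ranked, crossover = marginal_value_analysis(types)
for row in ranked:
    print(f'{row["name"]}: {row["net_benefit"]:+.2f}')
print("first net-negative position:", crossover)
```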

For practitioners calibrating the four scoring dimensions — particularly Churn Risk, where most operations have not historically tracked the input as a discrete variable — the Measure Anything tool (measure.wfmlabs.com) supports Hubbard Applied Information Economics decomposition. Build a calibrated range for the unmeasured dimension, run the routing model against the range, and decide whether the value-of-information justifies investment in formal measurement before committing to a routing recommendation.
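Running the routing model against a calibrated range can be sketched with a small Monte Carlo simulation. Following Hubbard's convention, a 90% confidence interval is converted to a normal distribution with standard deviation equal to the interval width divided by 3.29. Everything else here is an assumption for illustration: the function names, the clipping to the 0-10 scale, and the example scores. This is not the Measure Anything tool's implementation.

```python
import random
from collections import Counter

CI90_WIDTH_IN_SD = 3.29  # a 90% interval spans about 3.29 standard deviations


def sample_score(rng, lower, upper):
    """Draw a 0-10 score from a normal distribution fitted to a 90% calibrated range."""
    mean = (lower + upper) / 2
    sd = (upper - lower) / CI90_WIDTH_IN_SD
    return min(10.0, max(0.0, rng.gauss(mean, sd)))


def route(ai_capability, value_score):
    """Three-pool heuristic with the default 80/30/4/8 thresholds."""
    if ai_capability > 0.80 and value_score <= 4:
        return "Pool AA"
    if ai_capability < 0.30 or value_score >= 8:
        return "Pool Spec"
    return "Pool Collab"


def routing_stability(known_scores, weights, churn_range, ai_capability,
                      draws=10_000, seed=0):
    """Share of simulated draws landing in each pool when Churn Risk is uncertain."""
    rng = random.Random(seed)
    known_part = sum(weights[d] * known_scores[d] for d in known_scores)
    counts = Counter()
    for _ in range(draws):
        churn = sample_score(rng, *churn_range)
        value = known_part + weights["churn_risk"] * churn
        counts[route(ai_capability, value)] += 1
    return {pool: count / draws for pool, count in counts.items()}


# Hypothetical interaction type with three measured dimensions and an estimated fourth.
weights = {"clv_impact": 0.25, "customer_effort": 0.25,
           "revenue_opportunity": 0.25, "churn_risk": 0.25}
known_scores = {"clv_impact": 8, "customer_effort": 7, "revenue_opportunity": 8}
stability = routing_stability(known_scores, weights, churn_range=(6, 10), ai_capability=0.6)
print(stability)
```

If nearly all draws land in one pool, further measurement of Churn Risk is unlikely to change the routing decision; a split result suggests the measurement may be worth paying for.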

Limitations

- Weight setting is a judgment call. Equal weights are defensible defaults but rarely optimal. Shapley regression is the rigorous answer; expert weighting is the pragmatic one.

- CES is reactive. Post-interaction surveys measure delivered effort, not predicted effort. Pre-deployment scoring relies on data from analogous interaction types or channels.

- Conjoint requires investment. The most rigorous customer-preference data is also the most expensive to collect. Reserve for Pool Spec / high-CLV tier interaction types.

- Differentials can be unstable. The human − AI score difference can flip as AI capability improves; routing decisions need re-validation as capability evolves.

- The model assumes independent dimensions. In practice CLV Impact and Churn Risk are correlated. Weighting and interpretation should account for this.
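A quick way to see how far the independence assumption is violated in a given catalogue is to correlate the dimension scores across interaction types. The sketch below implements the Pearson coefficient directly; the score lists are hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length score lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length with at least two values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical CLV Impact and Churn Risk scores for six interaction types.
clv_impact = [1, 2, 3, 6, 8, 9]
churn_risk = [0, 2, 2, 7, 9, 10]
r = pearson(clv_impact, churn_risk)
print(round(r, 3))
```

A coefficient close to 1 means the two dimensions largely measure the same thing for that catalogue, so their combined weight effectively counts one signal twice.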

Maturity Model Position

- Level 1 — Initial (Emerging Operations) — No interaction taxonomy. Routing is FIFO or skill-based on a thin skill model. Composite scoring is unreachable.

- Level 2 — Foundational (Traditional WFM Excellence) — Routing is skill-based. Value is implicit in skill assignment but not measured.

- Level 3 — Progressive (Breaking the Monolith) — CLV models, CES surveys, and conversion attribution exist. Composite scoring is approachable, but the four-dimension framework is typically not yet adopted; operations score one or two dimensions ad hoc.

- Level 4 — Advanced (The Ecosystem Emerges) — Full four-dimension composite with expert-weighted dimensions. Routing decisions are explicitly grounded in human-AI differentials. Quarterly recalibration cadence in place.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — Shapley-regression-derived weights. Conjoint-validated preferences for high-stakes types. A/B-tested boundary cases. Closed-loop integration with the multi-objective governance layer.

The Level 3 → Level 4 transition is the move from ad-hoc dimension scoring to the explicit four-dimension composite with documented weights.

See Also

- Value-Based Planning Model — the framework the routing model serves

- Three-Pool Architecture — the routing target the Value Score drives

- The Escalation Tax — the cost-side companion to Churn Risk

- Service Demand Rebound Model — explains rebound generation, which feeds CLV Impact and Revenue Opportunity scoring

- Cognitive Portfolio Model (N*) — Pool Collab's staffing math, applied to interaction types whose Value composite lands them in Pool Collab

- Interior Optimum (containment rate) — operating point determined in part by the Value Score distribution

- Multi-Objective Optimization in Contact Center — surface against which routing thresholds are swept

- Probabilistic Forecasting — distributional scoring for the four dimensions

References

[1] Harvard Business Review research program on customer effort and loyalty (Dixon, Freeman & Toman; multiple HBR articles 2010-2024). The 94%/88% figures are the canonical multipliers from the program's longitudinal CES data.

[2] Harvard Business Review (multiple articles, 2023-2024): role of human empathy in retention-critical service interactions. The 65% figure is the canonical industry benchmark from the HBR program.

[3] Hubbard, D. (2014). How to Measure Anything: Finding the Value of "Intangibles" in Business. Wiley. AIE provides calibrated-estimation protocols for variables without historical data.

[4] KPMG Customer Experience Excellence Barometer (annual). Six pillars (Personalization, Integrity, Expectations, Resolution, Time and Effort, Empathy) with Shapley-value-weighted contribution estimates.

Source literature for the dimensions:

- McKinsey: Customer Lifetime Value — The Customer Compass (CLV as organizing metric for customer investment decisions).

- McKinsey: Next Best Experience — How AI Can Power Every Interaction (AI-powered interaction routing and value optimization).

- Forrester: Optimize Customer Interactions With CLV Analysis (individual-level CLV for interaction optimization).

- Forrester: How To Calculate Customer Lifetime Value (four industry-standard CLV calculation models).

- Harvard Business Review: Keeping Customer Service Human-Centered in an AI World (when human interaction creates irreplaceable value).

- Harvard Business School: When AI Chatbots Help People Act More Human (AI augmentation improving human agent performance by 20%).

- Harvard Business School Online: What Is Conjoint Analysis (measuring customer willingness-to-pay and channel preference).

- Hubbard, D. (2014): How to Measure Anything / Applied Information Economics (calibrated estimation for measuring intangibles).

- KPMG: Customer Experience Excellence Barometer (six-pillar CX model with Shapley Value regression weighting).

- Lango, T. (2026): Value-Based Models for Customer Operations (full mathematical framework for multi-pool value-based workforce planning).