Service Demand Rebound Model

The Service Demand Rebound Model (SDRM) is a quantitative framework for predicting the gap between projected and realized headcount savings when contact-center work is automated. It adapts the Jevons paradox from energy economics to service operations and decomposes total rebound into three measurable components plus a complexity premium on the work that remains.

SDRM is the diagnostic that explains why a 40% deflection projection routinely yields a 12-20% net FTE reduction. On the WFM Labs Maturity Model™ it sits at Level 4, Advanced (The Ecosystem Emerges), because quantifying it requires probabilistic forecasting, per-interaction-type costing, and post-deployment contact-driver analysis, all of which are Level 3 prerequisites.

The model is documented in Lango (2026), Value-Based Models for Customer Operations.[1]

The Jevons paradox in customer service

In 1865 William Stanley Jevons observed that efficiency improvements in coal use increased coal consumption rather than reducing it. Cheaper energy raised the marginal economic value of energy-using activity, which expanded that activity. The pattern has since been confirmed across transportation,[2] energy retrofits,[3] and household appliances.[4]

Customer service has been living the Jevons paradox for thirty years. IVR did not eliminate calls; it routed them. Self-service web portals did not eliminate digital contacts; they multiplied them. Mobile apps did not flatten support volume; they introduced new failure modes (Zelle disputes, mobile-deposit holds, plan-change confusion) that did not exist before. Each cheapening of contact handling expanded the contact volume.

SDRM names what was happening and quantifies it.

The SDRM equation

Net FTE Impact is the gross deflection benefit reduced by demand rebound and complexity premium:

Net FTE Impact = Gross Deflection − Demand Rebound − Complexity Premium

Where:

- Gross Deflection is the headcount equivalent of contacts removed by automation (P_deflect × volume × AHT_human / hours_per_FTE, with AHT_human and hours_per_FTE expressed in the same time unit).

- Demand Rebound is the headcount equivalent of new contacts induced by automation, decomposed below.

- Complexity Premium is the AHT increase on the human-handled remainder, expressed as a headcount delta.

In typical deployments the rebound and premium together absorb 30-70% of the gross deflection. The white paper's calibrated parameter set centers around 50%.
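The equation can be sketched in a few lines of Python. This is a minimal illustration, not the white paper's implementation: the function names are hypothetical, and the inputs in the usage lines (1M contacts, 6-minute AHT, 125,000 productive minutes per FTE, a 35% rebound share and a 15% premium share) are made-up values chosen only to show the mechanics.

```python
def gross_deflection_fte(p_deflect, annual_volume, aht_minutes,
                         productive_minutes_per_fte):
    """Headcount equivalent of deflected contacts.

    AHT and productive time per FTE must use the same time unit
    (minutes here).
    """
    deflected_minutes = p_deflect * annual_volume * aht_minutes
    return deflected_minutes / productive_minutes_per_fte


def net_fte_impact(gross_fte, rebound_fte, premium_fte):
    """Net FTE Impact = Gross Deflection - Demand Rebound - Complexity Premium."""
    return gross_fte - rebound_fte - premium_fte


def absorbed_share(gross_fte, rebound_fte, premium_fte):
    """Fraction of the gross deflection absorbed by rebound plus premium."""
    return (rebound_fte + premium_fte) / gross_fte


# Illustrative inputs only (assumptions, not calibrated parameters).
gross = gross_deflection_fte(0.40, 1_000_000, 6.0, 125_000)
rebound = 0.35 * gross   # assumed rebound, as a share of gross deflection
premium = 0.15 * gross   # assumed complexity premium, same basis
net = net_fte_impact(gross, rebound, premium)
print(f"gross={gross:.1f} FTE, net={net:.1f} FTE, "
      f"absorbed={absorbed_share(gross, rebound, premium):.0%}")
```

Keeping the three terms as separate arguments mirrors the equation and makes it easy to substitute measured values for each term as they become available.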

Three rebound components

Total rebound R = R_d + R_i + R_s, decomposed by the empirical taxonomy from Greening, Greene & Difiglio (2000) and Sorrell (2009):

| Component | What it is | Typical range |
|---|---|---|
| R_d: Direct rebound | Increased contact volume in the same channel because the channel is now cheaper / faster to use. | 15-35% |
| R_i: Indirect rebound | Increased contact volume in adjacent channels triggered by the automated channel (e.g., chatbot escalations to call). | 10-20% |
| R_s: Systemic rebound | Increased total contact volume because automation enables new product features, new self-service paths, or new failure modes. | 5-15% |

Total rebound typically falls between 30% and 70%, with R_d the largest contributor in most deployments and R_s the slowest to materialize.
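As a quick check, the component ranges add up to the stated total. The sketch below uses hypothetical names and reads the ranges as percentages of gross deflection, which is how the worked sizing example applies R. Summing the endpoints assumes all three components sit at the same end of their range at once, so the result is an outer bound rather than a forecast.

```python
# Typical ranges from the table, in percent (low, high).
REBOUND_RANGES_PCT = {
    "R_d": (15, 35),  # direct rebound
    "R_i": (10, 20),  # indirect rebound
    "R_s": (5, 15),   # systemic rebound
}


def total_rebound_range(ranges):
    """Sum the low ends and the high ends of each component range."""
    low = sum(lo for lo, _ in ranges.values())
    high = sum(hi for _, hi in ranges.values())
    return low, high


def total_rebound(r_d, r_i, r_s):
    """Total rebound R = R_d + R_i + R_s for one set of point estimates."""
    return r_d + r_i + r_s


low, high = total_rebound_range(REBOUND_RANGES_PCT)
print(f"Total rebound range: {low}-{high}%")
```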

Five mechanisms of induced demand

The three rebound components are produced by five mechanisms. Practitioners need to recognize each because they require different mitigations.

- Latent demand unlock. Customers who would not have contacted the operation now do, because the cost of contact (effort, hold time, friction) has dropped below their personal threshold. Most prevalent in account-balance, FAQ, and information-lookup categories.

- Information-triggered anxiety. AI-surfaced information (account alerts, fraud warnings, status updates) triggers verification contacts. Banking and insurance see this acutely.

- Channel multiplication. The same customer issue is now contacted via two or three channels (chat, voice, email) in sequence as the customer seeks resolution. Each channel counts as a contact.

- Expectation escalation. Faster resolution on routine items raises customer expectations on complex items, which increases re-contact rates and escalations on the remainder.

- Complexity concentration. The contacts that remain after deflection are systematically harder than the average pre-deflection contact, because the easy ones were the deflection targets. This is the source of the Complexity Premium.

Emergent inquiry types

Before deployment, contacts follow an established mix of inquiry types. After deployment that mix shifts, and four categories of contact appear that did not previously exist:

- Digital platform support. Support for the support channels themselves: "the chatbot won't let me past this step", "the password reset email never arrived", "I can't get the AI to understand me".

- Cross-channel confusion. "I started in chat, then was sent an email, then got a text — what's actually happening with my issue?"

- Regulatory and privacy contacts. GDPR / CCPA / similar regimes generate contacts (data-access requests, deletion requests, opt-out disputes) that simply did not exist before the regulations.

- Product-of-technology failures. New product capabilities create new contact types: Zelle fraud disputes, mobile-deposit holds, plan-change billing confusion, two-factor-authentication lockouts.

These categories should be planned for explicitly. They are not edge cases; in mature digital operations they routinely account for 15-25% of total volume.

The Complexity Premium

Practitioner-facing rule of thumb from the white paper: AHT on the human-handled remainder rises 5-8% per 10 percentage points of automation penetration.

Mechanism: when the easiest 30% of contacts are deflected, the remaining 70% has a higher mean handle time because the easy contacts no longer pull the average down. At 60% deflection, the remaining 40% is harder still. The premium compounds.

Implication: the FTE math must use post-deflection AHT, not the pre-deflection average. Many vendor business cases use the pre-deflection AHT and over-credit the human cost saving.
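The mix-shift mechanism can be illustrated with a toy calculation. The handle times below are invented for the illustration (they average 8 minutes) and the function name is hypothetical. The sketch also assumes deflection removes exactly the shortest contacts, which is the idealized case; the resulting uplift is not calibrated to the 5-8% rule of thumb.

```python
def remaining_mean_aht(handle_times_min, deflected_share):
    """Mean AHT of the contacts left after the easiest share is deflected.

    Assumes deflection removes the shortest contacts first (idealized).
    """
    ordered = sorted(handle_times_min)
    n_deflected = round(deflected_share * len(ordered))
    remaining = ordered[n_deflected:]
    if not remaining:
        raise ValueError("no contacts left for human handling")
    return sum(remaining) / len(remaining)


# Toy handle times in minutes (hypothetical data, mean = 8.0).
TOY_HANDLE_TIMES = [2, 3, 4, 5, 6, 8, 10, 12, 14, 16]

for share in (0.0, 0.3, 0.6):
    aht = remaining_mean_aht(TOY_HANDLE_TIMES, share)
    print(f"{share:.0%} deflected -> remaining mean AHT {aht:.2f} min")
```

In this toy set the remaining mean rises from 8.00 to about 10.14 minutes at 30% deflection and to 13.00 minutes at 60%, which is why staffing math built on the pre-deflection average over-credits the saving.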

Why rebound strengthens over time

Short-run elasticities are smaller than long-run elasticities, a finding that is robust across the rebound literature.[5] The same pattern shows up in service operations:

- Months 0-3: R_d only, modest. Customers don't yet know the new automation exists.

- Months 3-12: R_d builds toward its plateau. R_i appears as escalation paths develop.

- Year 1-2: R_s materializes. New product capabilities tied to the automation generate new contact types. The full rebound is visible.

Implication for sizing: a deflection business case measured at 6 months will systematically underestimate rebound. A 24-month measurement window is the minimum honest one.

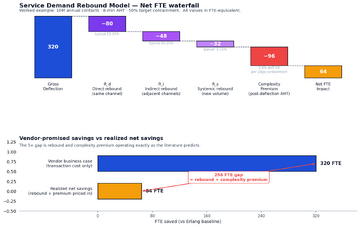

Worked sizing example

Hypothetical: 10M annual contacts, 8-minute AHT, 50% target containment via a new AI deflection layer.

- Gross Deflection: 5M contacts × 8 min = 40M handled-minutes saved ≈ 320 FTE (at 125,000 productive minutes / FTE / year)

- Demand Rebound at R = 50%: 2.5M new contacts × 8 min ≈ 160 FTE of rebound work

- Complexity Premium: AHT on remaining 5M rises from 8 to ~10.4 min (8 × 1.30, applying ~6% per 10 pp across the five 10-pp increments of 50% containment) → 2.4 min × 5M = 12M added handled-minutes ≈ 96 FTE

- Net FTE Impact: 320 − 160 − 96 ≈ 64 FTE.

The vendor business case said 320. The realized number is 64. That is not a vendor failure — it is rebound and premium operating exactly as the literature predicts.
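The arithmetic above can be reproduced step by step. The sketch below uses only the inputs stated in the example; the function and variable names are hypothetical, and the premium is applied linearly (6% per 10 pp times five increments), as the example does, rather than compounded.

```python
def worked_example():
    """Recompute the hypothetical sizing example from its stated inputs."""
    volume = 10_000_000          # annual contacts
    aht_min = 8.0                # pre-deflection AHT, minutes
    containment = 0.50           # target containment
    minutes_per_fte = 125_000    # productive minutes per FTE per year
    rebound = 0.50               # R, as a share of deflected contacts
    premium_per_10pp = 0.06      # AHT uplift per 10 pp of containment

    deflected = volume * containment
    gross_fte = deflected * aht_min / minutes_per_fte

    rebound_contacts = rebound * deflected
    rebound_fte = rebound_contacts * aht_min / minutes_per_fte

    increments = containment * 100 / 10        # five 10-pp increments
    aht_uplift = premium_per_10pp * increments  # linear: 0.30
    remaining = volume - deflected
    premium_fte = remaining * aht_min * aht_uplift / minutes_per_fte

    return {
        "gross_fte": gross_fte,
        "rebound_fte": rebound_fte,
        "premium_fte": premium_fte,
        "net_fte": gross_fte - rebound_fte - premium_fte,
    }


for name, value in worked_example().items():
    print(f"{name}: {value:.0f}")
```

Run as written, this prints 320, 160, 96 and 64 FTE for the four terms, matching the figures in the example.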

Practitioner playbook

- Apply the rebound discount to all deflection projections. Use 30-50% as a planning estimate when no direct measurement exists; tighten the band as post-deployment data accumulates.

- Measure the components separately. R_d (same-channel), R_i (cross-channel), and R_s (new-volume) require different measurement instruments. Put all three in place before the automation goes live so there is a clean baseline.

- Plan for the four emergent categories. Add capacity for digital platform support, cross-channel confusion, regulatory/privacy, and product-of-technology failures explicitly.

- Use post-deflection AHT in the staffing math. Apply the Complexity Premium (5-8% per 10pp containment) to the human-handled remainder.

- Set the measurement window at 24 months. Anything shorter understates rebound systematically.

- Treat rebound as an input to the Interior Optimum (containment rate) calculation. Containment is a U-curve, not a maximization target, and SDRM supplies one of the rising-cost terms on that curve.
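The first and fourth playbook items can be combined into a simple planning band. The sketch below is one possible reading, with hypothetical function names: it pairs the low end of the 30-50% rebound planning estimate with the low end of the 5-8% premium rule (optimistic case) and the two high ends (pessimistic case), applies the premium linearly per 10 pp of containment, and borrows the 125,000 productive minutes per FTE from the worked sizing example as a default.

```python
def net_fte(volume, aht_min, containment, rebound, premium_per_10pp,
            minutes_per_fte=125_000):
    """Net FTE impact for one combination of rebound and premium rates."""
    deflected = volume * containment
    gross = deflected * aht_min / minutes_per_fte
    rebound_fte = rebound * gross
    # Linear premium: rate per 10 pp times the number of 10-pp increments.
    uplift = premium_per_10pp * (containment * 100 / 10)
    premium_fte = (volume - deflected) * aht_min * uplift / minutes_per_fte
    return gross - rebound_fte - premium_fte


def planning_band(volume, aht_min, containment,
                  rebound_band=(0.30, 0.50), premium_band=(0.05, 0.08)):
    """Return (pessimistic, optimistic) net FTE for the planning ranges."""
    optimistic = net_fte(volume, aht_min, containment,
                         rebound_band[0], premium_band[0])
    pessimistic = net_fte(volume, aht_min, containment,
                          rebound_band[1], premium_band[1])
    return pessimistic, optimistic


# Inputs from the worked sizing example: 10M contacts, 8 min AHT, 50% containment.
low, high = planning_band(10_000_000, 8.0, 0.50)
print(f"Planning band: {low:.0f} to {high:.0f} net FTE")
```

With the worked-example inputs the band runs from roughly 32 to 144 net FTE against a gross projection of 320, which shows how wide the planning range stays until post-deployment measurement tightens it.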

Maturity Model Position

SDRM is operable at Level 4 — Advanced (The Ecosystem Emerges), with the underlying instrumentation as a Level 3 prerequisite.

- Level 1 — Initial (Emerging Operations) — Rebound is invisible. Operations report deflection without any post-deflection analysis.

- Level 2 — Foundational (Traditional WFM Excellence) — Rebound is anecdotally observed ("volume came back") but is not modeled or budgeted. Vendor business cases are taken at face value.

- Level 3 — Progressive (Breaking the Monolith) — The instrumentation exists (Variance Harvesting, probabilistic forecasting, contact-driver analysis) to measure rebound, but the model is typically still volume-only. Practitioners can see R_d but not R_i or R_s cleanly.

- Level 4 — Advanced (The Ecosystem Emerges) — SDRM is operating. All three rebound components are measured. Complexity Premium is in the staffing math. Emergent inquiry types are planned for. Deflection projections are routinely discounted by realistic rebound expectations.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — Rebound elasticities are calibrated against in-house longitudinal data. The model feeds the closed-loop governance layer; rebound drift triggers automatic recalibration of containment thresholds.

The Level 3 → Level 4 jump for this concept is the move from "we know rebound exists" to "we have a number for it that we put in the staffing model".

See Also

- Value-Based Planning Model — the framework SDRM sits inside

- Three-Pool Architecture — Pool AA's cost model includes the rebound term

- The Escalation Tax — the cost-side analog (rebound is a volume effect; escalation tax is a per-interaction effect)

- Interior Optimum (containment rate) — the U-curve where rebound is one of the rising-cost terms

- Variance Harvesting — Level 3 prerequisite for measuring rebound components

- Probabilistic Forecasting — provides the distributional outputs SDRM needs

- Intelligent Automation — the realism counterpart; automation's promise vs. its measured net effect

References

- [1] Lango, T. (2026). Value-Based Models for Customer Operations — From Traditional Queuing to Bottom-Up Value Planning. WFM Labs white paper.

- [2] Duranton, G. & Turner, M. (2011). The Fundamental Law of Road Congestion: Evidence from US Cities. American Economic Review.

- [3] Sorrell, S. (2009). Jevons' Paradox revisited: The evidence for backfire from improved energy efficiency. Energy Policy.

- [4] Greening, L., Greene, D. & Difiglio, C. (2000). Energy efficiency and consumption — the rebound effect — a survey. Energy Policy.

- [5] Goodwin, P., Dargay, J. & Hanly, M. (2004). Elasticities of road traffic and fuel consumption with respect to price and income: a review. Transport Reviews.