Cognitive Portfolio Model (N*)

The Cognitive Portfolio Model (CPM, with the optimal portfolio size denoted N*) is the staffing equation for Pool Collab in the Value-Based Planning Model. It addresses a work pattern with no precedent in classical contact-center planning: a single human monitoring N concurrent AI-handled interactions, intervening when the AI requests help, and bounded by cognitive capacity rather than queue throughput.

CPM is a Level 4 — Advanced (The Ecosystem Emerges) framework on the WFM Labs Maturity Model™, with empirical calibration reaching into Level 5 — Pioneering (Enterprise-Wide Intelligence). Closed-form Erlang C and its descendants do not apply: the constraint is cognitive utilization, not queue waiting time. The model is the central staffing mechanism for Agentic AI Workforce Planning and feeds directly into Capacity Planning Methods for AI-augmented operations.

The model is documented in Lango (2026), Value-Based Models for Customer Operations.[1]

The portfolio work pattern

In Pool Collab the AI handles the bulk of the interaction. The human is on standby — monitoring multiple AI-handled conversations, intervening only when the AI escalates, when confidence drops below threshold, when policy boundaries are reached, or when CX signals call for it. This is the defining pattern of Human AI Blended Staffing Models: human and machine share the same interaction, but the human's role is supervisory, not serial.

The structural questions:

- How many concurrent AI conversations can one human safely monitor?

- What changes that number?

- How does that number shift as AI systems mature?

Pool Collab cannot be staffed by Erlang because the human is not a server pulling from a queue. The human is a portfolio manager allocating attention across multiple in-flight engagements — analogous to a financial portfolio manager rebalancing positions, not a teller processing transactions.

This pattern appears wherever Human AI Supervision and Escalation Frameworks are deployed. The supervision model determines when interventions fire; CPM determines how many conversations one supervisor can carry.

Why traditional queuing fails here

Three reasons:

- The constraint is cognitive, not throughput. A human at 100% time utilization in a portfolio is unsafe; sustainable utilization is bounded below 1.0 by cognitive limits, not by waiting-time service levels. Traditional Erlang C optimizes for queue wait time; CPM optimizes for cognitive overload probability.

- The work units overlap in time. Erlang C assumes serial service. Portfolio work is concurrent — interventions interleave, and the human pays a switching cost between them.

- Monitoring is itself work. Even when not intervening, the human consumes cognitive capacity tracking N parallel conversations. This load grows with N.

The cognitive science literature has measured all three for decades.[2][3][4][5] CPM imports those measurements into a staffing equation.

Relationship to Erlang models

The classical staffing pipeline in contact centers runs: forecast volume → compute offered load (Erlang) → add shrinkage → publish requirements. Erlang C models a multi-server queue (M/M/c): each agent handles one interaction at a time, and the math solves for how many agents keep queue wait time below a target.

CPM replaces this pipeline for Pool Collab work:

| Dimension | Erlang C | CPM (N*) |
|---|---|---|
| Unit of work | One interaction per agent | N interactions per human |
| Constraint | Queue wait time (e.g., 80/20 SLA) | Cognitive utilization ≤ ρ_max |
| Server model | Serial: agent finishes, pulls next | Concurrent: human monitors all N simultaneously |
| Work arrival | Poisson arrivals to queue | Interventions arrive within existing portfolio |
| Cost function | Over-staffing cost vs. SLA violation | Cognitive overload probability vs. under-monitoring risk |
| Output | Number of agents needed | N* (portfolio size per human), then humans = volume / N* |
| Applicable pool | Pool Spec, Pool Self | Pool Collab |

The two models are complementary. In the Three-Pool Architecture, Pool Spec and Pool Self use Erlang variants; Pool Collab uses CPM. The total staffing requirement is the sum across all three pools.

The N* equation

Core formulation

The model solves for the optimal portfolio size N* such that expected cognitive utilization equals the sustainable maximum:

N* = ρ_max / ( λ_int · ( E[S_int] + γ(N) ) + m(N) )

Each parameter:

| Parameter | Meaning | Typical range | Source |
|---|---|---|---|
| ρ_max | Sustainable cognitive utilization. Below 1.0 because sustained higher utilization produces error, fatigue, and burnout. | 0.75 – 0.85 | Sweller (1988); operational safety literature[5] |
| λ_int | Intervention rate per AI conversation, per unit time. Function of AI capability and conversation type. | 0.02 – 0.20 interventions / minute / conversation | Derived from AI containment rate |
| E[S_int] | Expected duration of an intervention when one occurs. Includes context recovery time. | 1 – 5 minutes | Mark et al. (2008)[4]; operational measurement |
| γ(N) = γ_0 + γ_1 · ln(N) | Logarithmic switching cost. The human pays a context-switch penalty that grows with portfolio size, but only logarithmically. | γ_0 ≈ 0.2 min, γ_1 ≈ 0.5 min | Monsell (2003)[2] |
| m(N) = m_0 · N^α | Monitoring overhead: passive cognitive load from tracking N conversations. Power-law in N. | m_0 ≈ 0.01/min, α ≈ 0.5 – 0.7 | Salvucci & Taatgen (2008)[3] |

Why this form

The equation expresses a constraint: the total cognitive load per unit time must not exceed the sustainable ceiling. The numerator (ρ_max) is the ceiling. The denominator is the per-conversation cognitive load rate, which has two components:

- Active load: λ_int · (E[S_int] + γ(N)) — time spent intervening, including the switching cost to context-switch into the conversation.

- Passive load: m(N), the monitoring overhead. m(N) is defined as the total monitoring load for the whole portfolio, so its per-conversation share would be m(N) / N; the equation as stated adds m(N) itself to the denominator.

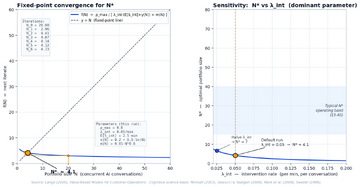

The form is non-trivial: γ(N) and m(N) both depend on N, so the equation is solved as a fixed point. Convergence typically takes 5–10 iterations. The resulting N* ranges from low single digits to several dozen depending on parameter setting.

Solving as a fixed point

Iteration:

- Start with N_0 = 20 (or any reasonable seed).

- Compute γ(N_0) and m(N_0).

- Compute N_1 = ρ_max / ( λ_int · (E[S_int] + γ(N_0)) + m(N_0) ).

- If |N_1 − N_0| < ε, stop; otherwise N_0 ← N_1 and repeat.

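Convergence is typically oscillatory rather than monotonic: the right-hand side decreases as N grows, so successive iterates overshoot and undershoot N* with shrinking amplitude. In edge cases (very high λ_int, very low ρ_max) N* can fall below 2, in which case Pool Collab is structurally inappropriate and the work belongs in Pool Spec.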
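The iteration steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `solve_n_star`, its argument names, the tolerance, and the iteration cap are all assumptions made for the example, and the update rule is the N* equation exactly as stated in this section.

```python
import math

def solve_n_star(rho_max, lam_int, s_int, gamma0, gamma1, m0, alpha,
                 seed=20.0, eps=1e-6, max_iter=200):
    """Fixed-point iteration on N = rho_max / (lam_int*(s_int + gamma(N)) + m(N)).

    Returns (n_star, iterations_used). All names are illustrative.
    """
    n = seed
    for i in range(1, max_iter + 1):
        gamma = gamma0 + gamma1 * math.log(n)   # switching cost gamma(N), minutes
        m = m0 * n ** alpha                     # monitoring overhead m(N)
        denom = lam_int * (s_int + gamma) + m
        if denom <= 0:
            # Can only happen if an iterate falls far below 1, where ln(N) < 0.
            raise ValueError("non-positive load; parameters outside model range")
        n_next = rho_max / denom
        if abs(n_next - n) < eps:
            return n_next, i
        n = n_next
    raise RuntimeError("fixed-point iteration did not converge")

# Parameter values from the billing worked example in this article.
n_star, iterations = solve_n_star(rho_max=0.80, lam_int=0.05, s_int=2.0,
                                  gamma0=0.2, gamma1=0.5, m0=0.01, alpha=0.6)
print(f"N* = {n_star:.2f} after {iterations} iterations")
```

Printing `n` inside the loop shows how the iterates approach the fixed point for a given parameter set.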

Worked example: 100 AI billing agents

Consider a billing support operation deploying 100 AI agents handling billing inquiries. The organization needs to determine how many human supervisors are required.

Step 1: Estimate parameters

| Parameter | Value | Rationale |
|---|---|---|
| ρ_max | 0.80 | Mid-range; experienced supervisors, good tooling |
| λ_int | 0.05 / min | AI handles 95% of billing queries autonomously; escalates ~3 per hour per conversation (1 every 20 min) |
| E[S_int] | 2.0 min | Billing interventions are moderate complexity: verify account, approve exception, explain policy |
| γ_0 | 0.2 min | Baseline switching cost |
| γ_1 | 0.5 min | Logarithmic growth factor |
| m_0 | 0.01 / min | Monitoring overhead coefficient |
| α | 0.6 | Monitoring scaling exponent |

Step 2: Fixed-point iteration

| Iteration | N_i | γ(N_i) | m(N_i) | Denominator | N_{i+1} |
|---|---|---|---|---|---|
| 0 | 20.00 | 1.70 | 0.060 | 0.245 | 3.26 |
| 1 | 3.26 | 0.79 | 0.020 | 0.160 | 5.00 |
| 2 | 5.00 | 1.01 | 0.026 | 0.177 | 4.53 |
| 3 | 4.53 | 0.96 | 0.025 | 0.173 | 4.64 |
| 4 | 4.64 | 0.97 | 0.025 | 0.173 | 4.61 |
| 5 | 4.61 | 0.96 | 0.025 | 0.173 | 4.62 |

N* ≈ 4.6, rounded to N* = 4 or 5 conversations per human.

Step 3: Compute headcount

With 100 concurrent AI conversations and N* = 5:

- Human supervisors needed = 100 / 5 = 20 humans

- At 0.85 schedule efficiency (breaks, shrinkage): 20 / 0.85 ≈ 24 FTE
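The headcount arithmetic can be written out as a small helper. The function name and the choice to round up at both stages (whole supervisors, then whole FTE) are assumptions of this sketch; the second call shows the more conservative rounding of N* down to 4.

```python
import math

def supervisors_and_fte(concurrent_conversations, n_star, schedule_efficiency):
    """Round up twice: whole supervisors first, then whole FTE."""
    supervisors = math.ceil(concurrent_conversations / n_star)
    fte = math.ceil(supervisors / schedule_efficiency)
    return supervisors, fte

print(supervisors_and_fte(100, 5, 0.85))  # (20, 24)
print(supervisors_and_fte(100, 4, 0.85))  # (25, 30)
```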

Step 4: Interpret

This result — 24 humans for 100 AI agents — may surprise practitioners expecting AI to eliminate staffing needs. The intervention rate of 0.05/min (once every 20 minutes per conversation) is modest, but the cumulative load across 5 concurrent conversations means each human faces an intervention roughly every 4 minutes. Combined with 2-minute handling time, switching costs, and monitoring overhead, cognitive capacity is consumed.

The ratio (approximately 1 human per 4–5 AI agents) is typical for early-maturity AI in complex domains like billing. As AI improves, λ_int drops and N* rises dramatically (see sensitivity analysis below).

What if AI matures?

If the billing AI improves and λ_int drops from 0.05 to 0.02/min:

Running the same iteration with λ_int = 0.02 yields N* ≈ 8. Now 100 AI agents need 100/8 ≈ 13 humans (about 16 FTE after shrinkage). That is roughly a one-third reduction in human staffing from a 60% reduction in intervention rate. With these parameters the gain is less than proportional, because the switching cost γ(N) and the monitoring overhead m(N) both grow as the portfolio grows.

Mathematical foundations

Decomposing cognitive load

The total cognitive load rate C(N) for a human supervising N conversations is:

C(N) = N · λ_int · ( E[S_int] + γ(N) ) + m(N)

This decomposes into:

- Intervention load: N · λ_int · E[S_int] — the raw time spent handling escalations

- Switching load: N · λ_int · γ(N) — the penalty for context-switching between conversations

- Monitoring load: m(N) — the passive cost of tracking all N conversations

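The constraint is C(N) ≤ ρ_max. Setting C(N) = ρ_max and dividing through by N gives N = ρ_max / ( λ_int · (E[S_int] + γ(N)) + m(N)/N ). The N* equation as stated places m(N), not m(N)/N, in the denominator; it therefore weights monitoring more heavily and returns a smaller, more conservative N* than the exact rearrangement whenever N > 1.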
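The three load components can be evaluated directly for a candidate portfolio size. The sketch below uses the billing worked-example parameters at N = 5; the function name and the dictionary layout are illustrative choices, not part of the model.

```python
import math

def cognitive_load(n, lam_int, s_int, gamma0, gamma1, m0, alpha):
    """Return the components of C(N) = N*lam*(E[S] + gamma(N)) + m(N)."""
    gamma = gamma0 + gamma1 * math.log(n)    # switching cost per intervention
    intervention = n * lam_int * s_int       # raw handling time
    switching = n * lam_int * gamma          # context-switch penalty
    monitoring = m0 * n ** alpha             # passive tracking load
    return {
        "intervention": intervention,
        "switching": switching,
        "monitoring": monitoring,
        "total": intervention + switching + monitoring,
    }

load = cognitive_load(5, lam_int=0.05, s_int=2.0,
                      gamma0=0.2, gamma1=0.5, m0=0.01, alpha=0.6)
for name, value in load.items():
    print(f"{name:12s} {value:.3f}")
print("within ceiling:", load["total"] <= 0.80)
```

At these values intervention handling is the largest component, switching comes second, and monitoring is a small remainder.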

Existence and uniqueness

Define f(N) = ρ_max / ( λ_int · (E[S_int] + γ(N)) + m(N) ), the right-hand side of the N* equation. The fixed point N* = f(N*) exists and is unique under mild conditions:

- f is continuous and strictly decreasing in the relevant domain (N ≥ 1), because γ(N) and m(N) both increase with N

- f(1) > 1 for reasonable parameters (a human can always monitor at least one conversation)

- f(N) → 0 as N → ∞ (cognitive load eventually exceeds ρ_max)

The intermediate value theorem applied to N − f(N) gives existence; uniqueness follows because N − f(N) is strictly increasing and so crosses zero only once. The iteration converges locally when |f'(N*)| < 1, the contraction condition behind Banach's fixed-point theorem, which holds for all practical parameter ranges.
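The contraction condition can be made explicit. The derivation below takes f(N) to be the right-hand side of the N* equation (with m(N) in the denominator) and assumes all parameters are positive and N ≥ 1; D(N) is shorthand introduced here for the denominator, and the last line uses ρ_max = N* · D(N*) at the fixed point.

```latex
\begin{aligned}
D(N) &= \lambda_{\mathrm{int}}\bigl(E[S_{\mathrm{int}}] + \gamma_0 + \gamma_1 \ln N\bigr) + m_0 N^{\alpha},
\qquad f(N) = \frac{\rho_{\max}}{D(N)} \\[4pt]
D'(N) &= \frac{\lambda_{\mathrm{int}}\,\gamma_1}{N} + \alpha\, m_0 N^{\alpha-1} > 0
\quad\Longrightarrow\quad
f'(N) = -\frac{\rho_{\max}\, D'(N)}{D(N)^2} < 0 \\[4pt]
\bigl|f'(N^*)\bigr| &= \frac{N^*\, D'(N^*)}{D(N^*)}
= \frac{\lambda_{\mathrm{int}}\,\gamma_1 + \alpha\, m(N^*)}
       {\lambda_{\mathrm{int}}\bigl(E[S_{\mathrm{int}}] + \gamma(N^*)\bigr) + m(N^*)}
\end{aligned}
```

A sufficient condition for the ratio to be below 1 is α < 1 together with γ_1 < E[S_int] + γ(N*). With the billing worked-example parameters it evaluates to about 0.23, so each iteration shrinks the error by roughly a factor of four.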

Boundary conditions

- N* < 2: Pool Collab is infeasible. The work is too intervention-heavy for portfolio supervision. Route to Pool Spec (dedicated human handling).

- N* > 100: The AI is nearly autonomous. Supervision becomes spot-checking rather than active monitoring. Consider moving the work to Pool Self with periodic audit.

- λ_int → 0: N* → ρ_max / m(N*). Portfolio size is bounded purely by monitoring capacity — the "watchman" limit.

- m_0 → 0: N* → ρ_max / (λ_int · (E[S_int] + γ(N*))). Portfolio size is bounded purely by intervention handling — the "firefighter" limit.

How N* changes with AI maturity

The single most important driver of N* over time is AI maturity. As AI systems improve, three things happen simultaneously:

- λ_int decreases — the AI handles more cases autonomously, reducing escalation frequency

- E[S_int] may decrease — better AI pre-processing means interventions are simpler

- The mix of work in Pool Collab shifts — as easy work migrates to Pool Self, remaining Pool Collab work may have higher per-conversation λ_int

This creates a nuanced trajectory:

| AI Maturity Stage | Typical λ_int | Typical N* | Human:AI Ratio | Operational Profile |
|---|---|---|---|---|
| Early deployment (containment 60–70%) | 0.10 – 0.20/min | 2 – 5 | 1:2 to 1:5 | Heavy supervision; humans intervene frequently; resembles assisted handling |
| Stabilizing (containment 80–85%) | 0.05 – 0.10/min | 5 – 12 | 1:5 to 1:12 | Moderate supervision; interventions episodic; clear portfolio management pattern |
| Mature (containment 90–95%) | 0.02 – 0.05/min | 12 – 30 | 1:12 to 1:30 | Light supervision; interventions are exceptions; monitoring dominates cognitive load |
| Highly autonomous (containment 97%+) | < 0.02/min | 30 – 60+ | 1:30+ | Spot-check supervision; consider migration to Pool Self with audit |

This trajectory directly informs Workforce Planning with AI Agents: organizations can forecast future N* based on their AI improvement roadmap and plan staffing transitions accordingly.

Sensitivity analysis

Single-parameter sensitivity

Three parameters dominate:

- λ_int (intervention rate) is the single most sensitive parameter. Halving λ_int, through better AI capability, better escalation triggers, or better tooling, raises N* substantially, though by less than a factor of two: γ(N) and m(N) grow with N and absorb part of the gain. This is the lever for AI-capability investment. A 10% reduction in λ_int yields less than the 11% increase in N* that a purely inverse relationship would give, and the shortfall widens as switching and monitoring take a larger share of the load.

- ρ_max (sustainable utilization) is the most operationally controllable. Workplace design, break cadence, and cognitive-load monitoring all affect it. Raising ρ_max from 0.75 to 0.85 increases N* by ~13%.

- E[S_int] (intervention duration) interacts with λ_int. Long interventions on rare events have small effect; short interventions on frequent events have large effect.

The two N-dependent terms (γ(N), m(N)) are growth-bounding. Without them, N* would reduce to ρ_max / (λ_int · E[S_int]) and grow without bound as λ_int → 0; with them, N* stays finite even for a nearly autonomous AI.

What happens when AI error rate changes

AI error rate maps directly to λ_int. If AI containment rate is C (fraction of interactions handled without human help), then:

λ_int ≈ (1 − C) / E[T_conv]

where E[T_conv] is the expected conversation duration. With E[T_conv] = 10 minutes, for example:

| Containment Rate | λ_int (per min) | N* | Humans per 100 AI Agents |
|---|---|---|---|
| 70% | 0.030 | 8 | 13 |
| 80% | 0.020 | 14 | 8 |
| 85% | 0.015 | 20 | 5 |
| 90% | 0.010 | 30 | 4 |
| 95% | 0.005 | 48 | 3 |
| 98% | 0.002 | 62 | 2 |

The non-linear relationship is critical for business cases: improving containment from 70% to 85% cuts required humans by 62%. Improving from 90% to 98% cuts them by only 50%. The marginal staffing return from AI improvement diminishes at high containment — but the absolute staffing levels are already low.
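The containment-to-λ_int mapping and the headcount column of the table can be reproduced with a short script. Two things here are assumptions of the sketch: the 10-minute mean conversation duration (inferred from the table, since 0.30 / 0.030 = 10) and the rounding of humans up to whole people. The N* values are copied from the table, not recomputed.

```python
import math

def lambda_from_containment(containment, mean_conv_minutes):
    """lambda_int ≈ (1 - C) / E[T_conv], in interventions per minute."""
    if not 0.0 <= containment <= 1.0:
        raise ValueError("containment must be a fraction between 0 and 1")
    if mean_conv_minutes <= 0:
        raise ValueError("mean conversation duration must be positive")
    return (1.0 - containment) / mean_conv_minutes

MEAN_CONV_MINUTES = 10.0  # assumption implied by the table rows
TABLE_N_STAR = {0.70: 8, 0.80: 14, 0.85: 20, 0.90: 30, 0.95: 48, 0.98: 62}

rows = []
for containment, n_star in TABLE_N_STAR.items():
    lam = lambda_from_containment(containment, MEAN_CONV_MINUTES)
    humans = math.ceil(100 / n_star)   # whole humans per 100 AI agents
    rows.append((containment, round(lam, 3), n_star, humans))
    print(f"{containment:.0%}  lambda_int={lam:.3f}/min  N*={n_star}  humans={humans}")
```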

Multi-parameter sensitivity

In practice, parameters co-vary. An AI system that improves containment (lower λ_int) often simultaneously:

- Reduces intervention complexity (lower E[S_int])

- Provides better context to the human (lower γ_0)

- Improves monitoring dashboards (lower m_0)

These compound effects mean real-world N* improvements from AI maturity often exceed what single-parameter sensitivity predicts by 20–40%.[6]

Implementation in capacity planning

The CPM capacity planning pipeline

CPM slots into the broader Capacity Planning Methods framework as follows:

- Forecast AI conversation volume — how many concurrent AI-handled conversations at each interval?

- Segment by work type — different conversation types have different λ_int and E[S_int]. Solve N* per segment.

- Compute N* per segment — run the fixed-point iteration for each.

- Derive human requirements — humans_needed(t) = Σ_segments [ volume_segment(t) / N*_segment ]

- Apply shrinkage and scheduling — standard WFM pipeline from here: shrinkage, schedule efficiency, overtime rules.

- Combine with Pool Spec and Pool Self — total headcount = Pool Spec (Erlang) + Pool Collab (CPM) + Pool Self (minimal oversight).
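The segment-level requirement in the pipeline above can be sketched as follows. The segment names, volumes, and N* values are hypothetical, chosen only to show the summation and the shrinkage step for a single interval.

```python
import math

def humans_needed(volume_by_segment, n_star_by_segment):
    """Sum of volume / N* across segments for one interval (before shrinkage)."""
    return sum(volume / n_star_by_segment[segment]
               for segment, volume in volume_by_segment.items())

# Hypothetical interval: concurrent AI conversations per segment.
volume = {"billing": 100, "order_status": 60}
# Hypothetical per-segment portfolio sizes from separate fixed-point solves.
n_star = {"billing": 5, "order_status": 12}

raw = humans_needed(volume, n_star)     # 100/5 + 60/12
scheduled = math.ceil(raw / 0.85)       # apply 0.85 schedule efficiency
print(raw, scheduled)                   # 25.0 30
```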

Integration with the Value-Based Planning Model

In the Value-Based Planning Model, CPM output feeds the cost layer. The model computes:

- Cost per interaction in Pool Collab = (human_cost / N*) + AI_cost

- Value per interaction = resolution value + CX value + data value

The optimal routing between pools (via Value Routing Model) considers the staffing cost implied by N*. When N* is low (early AI maturity), Pool Collab is expensive relative to Pool Spec, and the value threshold for routing work to Pool Collab is higher. As N* rises, Pool Collab becomes cost-advantageous for an expanding range of work types.
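A small numeric illustration of the cost expression: the currency amounts and N* values below are hypothetical, and `human_cost` is read here as the supervisor cost attributable to the duration of one interaction, which is an interpretation rather than something the source defines.

```python
def collab_cost_per_interaction(human_cost, n_star, ai_cost):
    """Cost per interaction in Pool Collab = human_cost / N* + AI_cost."""
    if n_star <= 0:
        raise ValueError("N* must be positive")
    return human_cost / n_star + ai_cost

early = collab_cost_per_interaction(human_cost=6.00, n_star=5, ai_cost=0.40)
mature = collab_cost_per_interaction(human_cost=6.00, n_star=15, ai_cost=0.40)
print(f"{early:.2f} {mature:.2f}")  # 1.60 0.80
```

Tripling N* halves the per-interaction cost in this example because the AI cost component does not shrink with N*.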

Interval-level planning

Unlike Erlang C (which computes requirements per interval), CPM typically uses a longer planning horizon because:

- N* changes slowly (driven by AI capability, not traffic patterns)

- Human supervisors maintain portfolios across intervals

- The constraint is cognitive capacity, which doesn't reset every 15 minutes

Practitioners should recalculate N* monthly or when AI capability changes materially, then apply the resulting ratio to interval-level volume forecasts.

Cognitive science foundations

CPM rests on six established findings from cognitive psychology and human factors research:

- Task switching cost is logarithmic. Monsell (2003) reviews two decades of evidence that switching cost grows with the number of contexts maintained, but sub-linearly.[2] Hence γ_1 · ln(N).

- Monitoring is concurrent cognition. Salvucci & Taatgen's threaded-cognition theory predicts that passive monitoring of N streams scales as a power of N below 1, hence m_0 · N^α with α < 1.[3]

- Interruption recovery has a measurable cost. Mark et al. (2008) measured ~25 minutes of recovery time after a complex interruption. CPM uses the shorter within-task recovery component as part of E[S_int].[4]

- Cognitive load is bounded by working memory. Sweller's (1988) cognitive load theory frames the upper bound that ρ_max < 1.0 expresses operationally.[5]

- Multiple resource theory constrains parallel monitoring. Wickens (2008) demonstrated that cognitive resources are not unitary — visual, auditory, and cognitive channels compete independently, explaining why monitoring load scales as N^α with α < 1 rather than linearly.[6]

- Supervisory control degrades predictably with span. Parasuraman et al. (2000) found that human performance in supervisory control tasks declines in a predictable, modelable pattern as the number of automated systems under supervision increases.[7]

The equation is a minimal but principled fusion of these findings.

Comparison to traditional supervisor ratios

Traditional contact centers use rules-of-thumb for supervisor ratios: 1 supervisor per 12–20 agents, based on managerial span-of-control literature. CPM differs fundamentally:

| Dimension | Traditional Ratio | CPM |
|---|---|---|
| Basis | Managerial experience, HR norms | Cognitive load mathematics |
| Variability | Fixed rule (e.g., 1:15) | Dynamic: varies by AI maturity, work type, tooling |
| Supervision type | Administrative: scheduling, coaching, QA | Operational: real-time intervention in live interactions |
| Time scale | Shift-level | Minute-level (interventions within conversations) |
| Sensitivity | Not parameterized | Explicit sensitivity to λ_int, ρ_max, E[S_int] |
| Improvement path | Hire better supervisors | Improve AI (reduce λ_int), improve tooling (reduce γ, m) |

The key insight: traditional ratios assume the supervisor does management work. CPM addresses a supervisor doing operational cognitive work — active monitoring and intervention in real time. The two can co-exist: a team lead may manage 15 humans (traditional ratio), each of whom supervises N* = 20 AI conversations (CPM ratio).

Calibration challenge (open research)

Five parameters, none yet directly calibrated for contact-center contexts. Cross-domain analogs exist:

- Air traffic control — controllers monitor N flights; N typically 8–15 per controller. Different work, but analogous portfolio structure. The FAA's workload models use similar cognitive-load decompositions.[8]

- ICU intensivists — physicians monitor N patients via instrumentation; N typically 8–12. Higher stakes per intervention.

- Algorithmic-trading risk monitoring — analysts watch N positions; N can be 20–50.

The white paper recommends three interim approaches until contact-center data accumulates:

- Expert-estimated parameters with ± 30% sensitivity ranges. Run the model across the range and present staffing as a band, not a point.

- Per-deployment recalibration. Measure λ_int and E[S_int] in production and update monthly.

- Conservatism on ρ_max. Start at 0.70 (below the literature's 0.75–0.85 band) and only raise it once empirical evidence supports it.

Practitioner playbook

- Estimate the five parameters. Use historical data where possible; expert calibration (see above) where not.

- Solve the fixed point. Iterate until convergence; record N*.

- Run sensitivity. Vary each parameter ±30% and recompute. Report N* as a band.

- Convert N* to headcount. Pool Collab FTE = volume / (N* × schedule_efficiency). Apply shrinkage per standard WFM practice.

- Combine with other pools. Total staffing = Pool Spec (Erlang) + Pool Collab (CPM) + Pool Self (audit staff). See Three-Pool Architecture.

- Monitor in production. λ_int and E[S_int] are observable. Recalibrate monthly. If λ_int rises persistently, AI capability has degraded — investigate.

- Treat ρ_max as a workplace-design lever. Cognitive-load monitoring, break cadence, and tooling affect it directly.

- Plan the N* trajectory. Use Workforce Planning with AI Agents to forecast how N* will evolve as AI matures. Staff the transition, not just today's state.
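The "solve, then report a band" steps of the playbook can be sketched as a one-at-a-time sweep. This is an illustration under stated assumptions: the base values are the billing worked-example parameters, the dictionary keys and function names are invented for the example, and ρ_max is capped at 1.0 when the +30% variation would exceed it (the cap is a choice of this sketch, not of the source).

```python
import math

BASE = {"rho_max": 0.80, "lam_int": 0.05, "s_int": 2.0,
        "gamma0": 0.2, "gamma1": 0.5, "m0": 0.01, "alpha": 0.6}

def solve_n_star(p, seed=20.0, eps=1e-6, max_iter=500):
    """Fixed-point iteration on the N* equation as stated in this article."""
    n = seed
    for _ in range(max_iter):
        gamma = p["gamma0"] + p["gamma1"] * math.log(n)
        denom = p["lam_int"] * (p["s_int"] + gamma) + p["m0"] * n ** p["alpha"]
        if denom <= 0:
            raise ValueError("non-positive load; parameters outside model range")
        n_next = p["rho_max"] / denom
        if abs(n_next - n) < eps:
            return n_next
        n = n_next
    raise RuntimeError("fixed-point iteration did not converge")

def sensitivity_band(base, spread=0.30):
    """Vary each parameter by ±spread, one at a time, and record the N* range."""
    band = {}
    for name, value in base.items():
        results = []
        for factor in (1 - spread, 1 + spread):
            varied = dict(base)
            varied[name] = value * factor
            if name == "rho_max":
                varied[name] = min(varied[name], 1.0)  # utilization cannot exceed 1
            results.append(solve_n_star(varied))
        band[name] = (min(results), max(results))
    return band

n_base = solve_n_star(BASE)
band = sensitivity_band(BASE)
print(f"base N* = {n_base:.2f}")
for name, (lo, hi) in band.items():
    print(f"{name:8s} N* from {lo:5.2f} to {hi:5.2f}")
```

Reporting the widest of these ranges, rather than the base value alone, gives the staffing band the playbook asks for.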

Implications for AI in workforce management

CPM has several implications for AI in Workforce Management practice:

- Staffing is not binary. The question is not "humans or AI" but "how many humans per how many AI agents?" N* answers this precisely.

- AI improvement has diminishing staffing returns. The containment-to-N* curve flattens at high containment. The business case for AI improvement shifts from staffing reduction to quality improvement beyond ~90% containment.

- Cognitive load is the new occupancy. Just as traditional WFM tracks agent occupancy, AI-augmented WFM must track supervisor cognitive utilization. ρ is the metric that prevents burnout and errors in Pool Collab.

- The portfolio structure creates new scheduling challenges. Supervisors cannot be scheduled in Erlang intervals. They need sustained attention periods, which changes break patterns, shift design, and real-time adherence rules.

Limitations

The model is a planning tool, not a precision instrument:

- Parameters are not yet validated in contact-center contexts. Cross-domain analogs inform the ranges but do not pin them.

- The model assumes homogeneous within-pool work. Heterogeneous portfolios (some easy, some hard conversations) require segmentation; solve N* separately per segment.

- Interactions between humans are not modeled. Team-level coordination, hand-offs to peers, and supervisory escalation paths sit outside the equation.

- No queueing penalty is modeled. If interventions arrive faster than the human can service them, conversations wait. The basic equation does not capture this; the white paper extends it for high-λ_int regimes.

- Assumes stationary parameters within a planning period. In practice, λ_int may vary by time of day (complex calls cluster at certain hours) or by conversation stage. Segment-level modeling mitigates this.

These are honest limitations. The model is published with them, not despite them.

Maturity Model position

CPM is a Level 4 — Advanced (The Ecosystem Emerges) framework with calibration reaching into Level 5 — Pioneering (Enterprise-Wide Intelligence).

- Level 1 — Initial (Emerging Operations) — CPM is unreachable. There is no Pool Collab.

- Level 2 — Foundational (Traditional WFM Excellence) — CPM is unreachable. AI deployments, where present, are deflection layers, not portfolio work.

- Level 3 — Progressive (Breaking the Monolith) — CPM is approachable. Multi-skill staffing exists; cognitive-load awareness exists; but the portfolio work pattern that CPM addresses typically does not yet exist at scale. Organizations at this level should study Agentic AI Workforce Planning as preparation.

- Level 4 — Advanced (The Ecosystem Emerges) — CPM is operational with expert-estimated parameters and sensitivity analysis. N* is a band, not a point. Pool Collab is staffed against it.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — CPM is empirically calibrated against in-house portfolio-work data. Closed-loop governance recalibrates λ_int, ρ_max, and γ_1 in response to drift signals.

The honest framing: at Level 4, CPM gives practitioners a defensible band. At Level 5, the band tightens to a point. Most operations adopting Pool Collab today should expect to live at Level 4 calibration for several quarters before Level 5 confidence is earned.

See also

- Value-Based Planning Model — the framework CPM operates inside

- Three-Pool Architecture — Pool Collab is where CPM applies

- Agentic AI Workforce Planning — staffing strategy for AI-augmented operations

- Human AI Blended Staffing Models — the operational patterns CPM quantifies

- Human AI Supervision and Escalation Frameworks — determines when interventions fire

- AI Containment Rate and Its Workforce Implications — containment rate drives λ_int

- Erlang C — the classical model CPM replaces for Pool Collab

- Capacity Planning Methods — where CPM fits in the broader planning pipeline

- AI in Workforce Management — industry context for AI-augmented staffing

- Workforce Planning with AI Agents — forecasting the N* trajectory over time

- The Escalation Tax — informs the cascade probabilities that affect intervention duration

- Service Demand Rebound Model — rebound increases λ_int over time

- Value Routing Model — drives interaction-type assignment to Pool Collab

- Multi-Objective Optimization in Contact Center — where CPM's output band is swept against CX/EX/cost surface

- Variance Harvesting — Level 3 prerequisite for measuring λ_int variance

References

- [1] Lango, T. (2026). Value-Based Models for Customer Operations: From Traditional Queuing to Bottom-Up Value Planning. WFM Labs white paper.

- [2] Monsell, S. (2003). Task switching. Trends in Cognitive Sciences, 7(3), 134-140.

- [3] Salvucci, D. & Taatgen, N. (2008). Threaded cognition: An integrated theory of concurrent multitasking. Psychological Review, 115(1), 101-130.

- [4] Mark, G., Gudith, D. & Klocke, U. (2008). The cost of interrupted work: more speed and stress. CHI 2008.

- [5] Sweller, J. (1988). Cognitive load during problem solving: Effects on learning. Cognitive Science, 12(2), 257-285.

- [6] Wickens, C. D. (2008). Multiple resources and mental workload. Human Factors, 50(3), 449-455.

- [7] Parasuraman, R., Sheridan, T. B. & Wickens, C. D. (2000). A model for types and levels of human interaction with automation. IEEE Transactions on Systems, Man, and Cybernetics, 30(3), 286-297.

- [8] Manning, C. A., Mills, S. H., Fox, C. M. et al. (2002). Investigating the validity of performance and objective workload evaluation research (POWER). FAA Civil Aeromedical Institute.