Human AI Supervision and Escalation Frameworks

Human-AI supervision and escalation frameworks are the operational structures, staffing models, and governance mechanisms that determine how human workers monitor, correct, and intervene in the performance of autonomous AI agents deployed in contact center and knowledge-worker environments. As AI agents capable of handling end-to-end customer contacts move from experimental deployments to production operations, the question of how many humans are needed to supervise a given number of AI agents, and under what conditions human intervention is triggered, has become a distinct workforce planning problem. The Three-Pool Architecture describes a structural model for blended human-AI operations in which a supervision layer forms one of the three principal workforce pools. This article addresses the supervision ratio problem, the taxonomy of escalation mechanisms, the emerging role of the AI supervisor, and the cognitive and operational risks (including automation complacency and situation awareness degradation) that human oversight programs must actively counteract. Human-AI supervision frameworks sit at the intersection of human factors research, operational workforce management, and emerging AI governance regulation, and are likely to become a formal discipline as AI agent deployment scales.

The Supervision Ratio Problem

Defining the Supervision Ratio

The supervision ratio in human-AI blended operations is the number of human overseers required per N AI agents operating concurrently. Unlike the supervisor-to-agent ratio in traditional contact centers — which is primarily a management span-of-control question driven by coaching, quality, and escalation volume — the AI supervision ratio is a functional capacity question: how much supervisory attention does a given AI agent portfolio demand per unit of time, and how much can a single human supervisor provide?

The ratio is a function of several interacting variables:

- Contact complexity: AI agents handling simple, well-defined contact types (balance inquiries, appointment confirmation, status checks) require less oversight than agents handling complaint resolution, sales, or emotionally sensitive interactions. Complex contacts generate more edge cases and more escalation candidates per unit time.

- AI maturity and calibration: newly deployed AI agents have higher error rates and more unpredictable failure modes than mature, calibrated systems. Supervision should be denser (more human overseers per AI agent) during initial deployment windows and relaxed as the system demonstrates reliable performance against defined quality thresholds.

- Regulatory requirements: in regulated industries (healthcare, financial services, insurance), certain contact types may require human review of every AI-handled interaction regardless of AI confidence scores.

- Cognitive load ceiling: humans monitoring multiple AI agents simultaneously experience cognitive load that limits effective oversight. Research in applied cognitive psychology suggests that effective monitoring of autonomous systems degrades when the number of monitored systems exceeds an individual's working memory capacity.[1] For AI agent monitoring, where each "channel" is a live conversation or dashboard panel, the effective ceiling may be considerably lower than the theoretical working memory limit.

Empirical supervision ratios reported in early large-scale AI contact center deployments vary widely, from 1:20 (one human supervisor per 20 AI agents) in high-maturity deployments handling simple contact types, to 1:5 or fewer AI agents per supervisor in complex, regulated, or recently deployed environments. No universal standard exists; organizations must derive their own ratio from empirical observation of supervisor workload during initial deployments.
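One way to turn observed workload into a ratio is sketched below. It is a minimal illustration rather than a standard method: the function name, the 45 usable minutes per supervisor hour, and the cap of 20 agents are assumptions chosen for the example, and the attention figure would come from time studies during an initial deployment.

```python
import math


def agents_per_supervisor(attention_min_per_agent_hour: float,
                          usable_min_per_supervisor_hour: float = 45.0,
                          cognitive_ceiling: int = 20) -> int:
    """Estimate how many AI agents one supervisor can cover.

    attention_min_per_agent_hour: observed supervisor minutes consumed by
        one AI agent per operating hour (escalations, reviews, alerts).
    usable_min_per_supervisor_hour: assumed productive minutes per hour.
    cognitive_ceiling: assumed upper bound from monitoring load, applied
        even when the workload arithmetic would allow more agents.
    """
    if attention_min_per_agent_hour <= 0:
        return cognitive_ceiling
    by_workload = math.floor(
        usable_min_per_supervisor_hour / attention_min_per_agent_hour
    )
    # Never report fewer than one agent per supervisor.
    return max(1, min(by_workload, cognitive_ceiling))


mature_simple = agents_per_supervisor(2.25)   # low attention demand
new_regulated = agents_per_supervisor(9.0)    # high attention demand
```

Under these assumed inputs the mature, simple portfolio lands at 20 agents per supervisor and the new, regulated one at 5, which matches the two ends of the range quoted above.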

Staffing the Supervision Layer

Forecasting the staffing requirement for the supervision layer applies the same logical structure as Capacity Planning Methods for any workforce pool, with supervision demand estimated as:

- Supervision Events per Hour = (AI Contact Volume per Hour × Escalation Rate) + (Quality Review Sample Rate) + (Monitoring Alert Rate), where the quality review and monitoring alert terms are expressed as events per hour

Each component requires separate estimation: escalation rate (derived from AI Containment Rate and Its Workforce Implications — one minus containment rate plus partial-resolution escalations), quality review sample rate (determined by compliance requirements and QA methodology), and monitoring alert rate (frequency with which AI performance dashboards generate alerts requiring human assessment).

The average handling time for each supervision event type must be estimated to convert event rates into FTE requirements: an escalation resolution may require 5–15 minutes, a QA sample review 3–8 minutes, and a monitoring alert investigation 1–5 minutes depending on diagnostic complexity.
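The conversion from event rates to FTE can be sketched as follows. This is an illustrative calculation under stated assumptions: the handle times are single values picked from inside the ranges above, the 75% occupancy target is a hypothetical planning parameter, and the function and argument names are invented for the example.

```python
def supervision_fte(ai_contacts_per_hour: float,
                    escalation_rate: float,
                    qa_reviews_per_hour: float,
                    alerts_per_hour: float,
                    escalation_min: float = 10.0,
                    qa_review_min: float = 5.0,
                    alert_min: float = 3.0,
                    occupancy: float = 0.75) -> float:
    """Supervisor FTE needed for one hour of supervision demand.

    Workload is the sum over event types of (events per hour x handle
    minutes). Dividing by the productive minutes one supervisor supplies
    per hour (60 x occupancy, an assumed target) gives FTE.
    """
    escalations_per_hour = ai_contacts_per_hour * escalation_rate
    workload_minutes = (
        escalations_per_hour * escalation_min
        + qa_reviews_per_hour * qa_review_min
        + alerts_per_hour * alert_min
    )
    return workload_minutes / (60.0 * occupancy)


# 600 AI contacts/hour, 10% escalated, 12 QA reviews and 10 alerts per hour.
fte = supervision_fte(600, 0.10, 12, 10)
```

With these inputs the workload is 690 supervisor-minutes per hour, or roughly 15.3 FTE at the assumed occupancy. Running the same function per interval gives the intraday profile rather than a single daily figure.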

Intraday supervision demand is not flat. It correlates with AI agent contact volume (higher during peak periods), with AI confidence score patterns (which may show diurnal variation as contact mix changes), and with AI platform performance (degradation events can spike monitoring demands suddenly). Intraday Management of the supervision layer therefore requires real-time monitoring and staffing adjustment capability.

Escalation Taxonomy

Escalation from AI to human handling is not a single event type but a family of distinct mechanisms with different triggers, handling requirements, and workforce implications.

Confidence-Based Escalation

AI conversational agents assign internal confidence scores to their classifications of contact intent and the adequacy of their proposed responses. Confidence-based escalation routes a contact to a human agent when the AI's confidence score falls below a defined threshold. This is the most common escalation mechanism in production deployments.

The threshold setting involves a tradeoff between containment rate and quality: because contacts escalate when confidence falls below the threshold, raising the threshold increases escalation rate (and human staffing cost) while reducing the probability of AI mishandling; lowering it increases containment rate while accepting more AI errors. Threshold optimization should be empirically informed by the cost of AI errors in the specific contact type, not set to a universal default.
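A cost-based threshold search can be sketched as below, assuming a labeled history of AI-handled contacts is available. The cost figures, the candidate grid, and all names are hypothetical; a contact is treated as escalated when its confidence is strictly below the threshold.

```python
def expected_cost(threshold, scored_contacts, error_cost, escalation_cost):
    """Total cost of a threshold over historical contacts.

    scored_contacts: iterable of (confidence, ai_was_correct) pairs.
    Contacts below the threshold incur the escalation cost; contained
    contacts that the AI got wrong incur the error cost.
    """
    cost = 0.0
    for confidence, ai_was_correct in scored_contacts:
        if confidence < threshold:
            cost += escalation_cost
        elif not ai_was_correct:
            cost += error_cost
    return cost


def best_threshold(scored_contacts, error_cost, escalation_cost,
                   candidates=None):
    """Return the candidate threshold with the lowest total cost."""
    if candidates is None:
        candidates = [i / 20 for i in range(21)]  # 0.00, 0.05, ..., 1.00
    return min(
        candidates,
        key=lambda t: expected_cost(
            t, scored_contacts, error_cost, escalation_cost
        ),
    )


history = [(0.95, True), (0.90, True), (0.80, True), (0.70, False),
           (0.60, True), (0.50, False), (0.40, False)]

costly_errors = best_threshold(history, error_cost=25, escalation_cost=4)
cheap_errors = best_threshold(history, error_cost=2, escalation_cost=4)
```

When an AI error costs far more than an escalation, the search settles on a high threshold (0.75 for this toy history); when errors are cheap it falls to 0.0, meaning nothing is escalated on confidence alone. The same contact type can therefore justify very different thresholds depending on error cost.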

Confidence-based escalation has a structural weakness: AI agents can be confidently wrong. A poorly calibrated model may express high confidence in incorrect responses and fail to escalate precisely when human intervention would be most valuable. Calibration quality — the correspondence between a model's confidence scores and its actual accuracy — should be a monitored metric in AI quality assurance programs.
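Calibration quality can be tracked with a metric such as expected calibration error (ECE), which compares average confidence to observed accuracy within confidence bins. ECE is one common choice rather than a method the source prescribes, and the bin count and function name below are assumptions.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between confidence and accuracy per bin.

    confidences: model confidence scores in [0, 1].
    correct: booleans, True where the AI response was actually correct.
    A well-calibrated model scores near 0; a confidently wrong model
    scores high.
    """
    total = len(confidences)
    if total == 0:
        return 0.0
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # 1.0 goes in last bin
        bins[index].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece


# Four responses at 0.9 confidence, only half of them correct.
overconfident = expected_calibration_error(
    [0.9, 0.9, 0.9, 0.9], [True, True, False, False]
)
```

A rising ECE on sampled, human-graded interactions is a signal that confidence-based escalation is becoming less trustworthy even if containment rate looks stable.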

Topic-Based Escalation

Certain contact types are defined as human-mandatory regardless of AI confidence score. Common candidates include legal threats or regulatory complaints, mental health crisis indicators or expressions of self-harm, requests for exceptions requiring policy judgment, high-value customer segments where relationship continuity requires human engagement, and regulatory-sensitive information disclosures in governed industries. Topic-based escalation provides predictable routing for known high-risk contact categories and is more robust to AI calibration failures than pure confidence-based routing. Its limitation is completeness: only contact types anticipated and explicitly listed are protected.

Sentiment-Based Escalation

Sentiment analysis layers applied to real-time conversation transcripts can detect customer distress signals — expressed frustration, emotional intensity, repetitive complaint patterns — and trigger escalation when sentiment scores fall below defined thresholds. Sentiment-based escalation is designed to catch cases where the customer is dissatisfied with AI handling but has not explicitly requested a human agent.

The reliability of sentiment-based escalation is mixed in practice. Sentiment models trained on generic text corpora may misclassify culturally specific communication styles or formal complaint language. Calibrating the model against human rater assessments of distress in the specific operational context, rather than relying on a generic pretrained sentiment model, substantially improves reliability.

Customer-Requested Escalation

The baseline escalation mechanism is explicit customer request. Effective AI design ensures this path is always available, clearly signaled, and immediately responsive. Blocking, obscuring, or delaying customer-requested escalation — sometimes described as "AI containment friction" — damages customer trust and may have regulatory implications in some jurisdictions. High rates of customer-requested escalation indicate that AI handling is not meeting customer expectations and may signal quality issues requiring investigation.

Timeout-Based Escalation

When an AI agent fails to reach resolution within a defined conversation length or time limit, timeout-based escalation routes the contact to a human agent. This mechanism catches situations where the AI has engaged in prolonged back-and-forth without converging on resolution — often indicative of a contact type at the boundary of AI capability. Timeout thresholds should be set by contact type based on empirical distributions of AI resolution times; applying a single universal timeout ignores natural variation in contact complexity.
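The five mechanisms can be combined into a single routing check, sketched below. The precedence order, the topic labels, the numeric thresholds, and the field names are all assumptions made for illustration; a production system would set them per contact type.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical labels for human-mandatory topics.
HUMAN_MANDATORY_TOPICS = {"legal_threat", "self_harm", "policy_exception"}


@dataclass
class ContactState:
    topic: str
    confidence: float          # AI confidence in [0, 1]
    sentiment: float           # assumed scale: -1 (distress) to 1
    turns: int                 # conversation turns so far
    customer_asked_for_human: bool = False


def escalation_reason(state: ContactState,
                      confidence_floor: float = 0.70,
                      sentiment_floor: float = -0.5,
                      max_turns: int = 12) -> Optional[str]:
    """Return the first escalation trigger that fires, or None."""
    if state.customer_asked_for_human:
        return "customer_requested"   # always honored first
    if state.topic in HUMAN_MANDATORY_TOPICS:
        return "topic"                # independent of confidence
    if state.confidence < confidence_floor:
        return "confidence"
    if state.sentiment < sentiment_floor:
        return "sentiment"
    if state.turns > max_turns:
        return "timeout"
    return None


reason = escalation_reason(
    ContactState(topic="self_harm", confidence=0.97, sentiment=0.1, turns=3)
)
```

Here the contact escalates on topic despite a 0.97 confidence score, which is the protection against confidently wrong handling that topic-based rules provide. Logging the returned reason also gives the per-mechanism escalation counts needed for staffing.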

The AI Supervisor Role

A New WFM Role

The proliferation of AI agent deployments has created a workforce role that did not exist in pre-AI contact center operations: the AI supervisor. Distinct from both the traditional contact center supervisor and the IT administrator, the AI supervisor is a frontline operational role responsible for: real-time monitoring of AI agent conversation quality and performance dashboards; handling escalated contacts passed from AI agents to human resolution; investigating performance anomalies and AI confidence score degradations; providing feedback to AI development teams on systematic failure patterns; and maintaining situational awareness of AI portfolio status during high-demand periods.

The skills required differ from those of traditional agent supervisors. AI supervisors must be capable of reading conversation transcripts analytically — identifying where AI reasoning went wrong, not just whether the outcome was correct — and of operating monitoring dashboards with multiple concurrent data streams. They require sufficient understanding of how the AI system works to distinguish between AI model failures, integration failures, and genuinely novel contact types the model was not designed to handle.

Cognitive Load and Burnout Risk

The cognitive load profile of AI supervision creates distinct burnout risk compared to traditional supervision. Traditional supervisors experience load peaks during queue crises, complex escalations, and coaching sessions. AI supervisors experience continuous, lower-amplitude cognitive load from monitoring multiple AI conversations simultaneously, punctuated by sudden high-intensity escalation events requiring rapid context acquisition.

The monitoring task has characteristics associated with vigilance decrements: it requires sustained attention to multiple data streams for extended periods in the absence of frequent high-signal events, which degrades human monitoring reliability over time. Vigilance research consistently shows that human detection rates for rare critical events decline significantly after 30–45 minutes of sustained monitoring, even when subjects are motivated and adequately trained.[2] Shift design for AI supervisors should account for this vigilance decrement through structured break cadences and rotation strategies that prevent prolonged unbroken monitoring periods.

The burnout risk specific to AI supervision includes the emotional labor of handling escalated contacts that have already failed AI resolution — a selection-enriched pool of frustrated, complex, or distressed customers — combined with the cognitive effort of sustained monitoring and the novel stress of working within a human-machine system that the supervisor cannot fully control or predict. Burnout and Schedule Induced Attrition frameworks developed for traditional agents require adaptation to capture the distinctive stressors of this role, and Agent Experience and Wellbeing monitoring should explicitly include AI supervisor populations.

Automation Complacency and Over-Trust

Parasuraman and Riley (1997) distinguished four modes of human-automation interaction: use, misuse (over-reliance on automation), disuse (neglect or underuse of automation despite its validity), and abuse (automating functions without due regard for the consequences for human performance). They treat complacency, the failure to monitor automation adequately because it is "usually right", as a contributor to misuse.[3] Automation complacency is the failure mode most relevant to AI supervision: as AI agents demonstrate reliable performance over time, human supervisors progressively reduce monitoring intensity, trusting the system to perform correctly. This reduction is rational when performance is reliable, but it creates a monitoring gap precisely at moments when performance degrades unexpectedly.

Bansal et al. (2019) found in human-AI teaming experiments that human supervisors calibrate their intervention rates to recent AI performance, reducing oversight after periods of high AI accuracy.[4] This adaptive behavior creates systemic fragility: a shift in distribution (new product type, regulatory change, adversarial contact pattern) that degrades AI performance may not be detected for extended periods if supervisors have reduced monitoring intensity based on prior reliable performance.

Countermeasures to automation complacency include: regular complacency tests (deliberately injecting known-failure contact types into AI queues to verify supervisors detect and escalate them); monitoring intensity metrics (tracking the rate at which supervisors actively review AI conversations and flagging declining rates); and randomized mandatory review (requiring supervisors to review a random sample of AI conversations on each shift, regardless of escalation rates, maintaining active engagement with AI output).
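Two of these countermeasures lend themselves to simple tooling, sketched here under assumed parameters. The sample size, the three-shift comparison window, and the 25% drop trigger are hypothetical values, as are the function names.

```python
import random


def mandatory_review_sample(conversation_ids, sample_size, seed=None):
    """Pick a random set of AI conversations a supervisor must review.

    Selection ignores escalation status, so reviews also cover
    conversations the AI considered successful.
    """
    rng = random.Random(seed)
    ids = list(conversation_ids)
    return rng.sample(ids, min(sample_size, len(ids)))


def review_rate_declining(reviews_per_shift, window=3, drop_fraction=0.25):
    """Flag a supervisor whose active review count is falling.

    Compares the mean of the last `window` shifts with the mean of the
    `window` shifts before that, and flags a drop above `drop_fraction`.
    """
    if len(reviews_per_shift) < 2 * window:
        return False  # not enough history to compare
    recent = sum(reviews_per_shift[-window:]) / window
    baseline = sum(reviews_per_shift[-2 * window:-window]) / window
    return baseline > 0 and recent < baseline * (1 - drop_fraction)


todays_reviews = mandatory_review_sample(range(1000), sample_size=5, seed=7)
flagged = review_rate_declining([40, 42, 38, 30, 26, 24])
```

In the example the supervisor's review count falls from a mean of 40 to about 27 per shift, so the flag is raised. A flag of this kind is a prompt for a conversation about workload and trust, not evidence of poor performance by itself.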

Situation Awareness Degradation

Endsley's (1995, 2017) model of situation awareness (SA) describes three levels: perception of current state (SA Level 1), comprehension of situation meaning (SA Level 2), and projection of future states (SA Level 3).[5] As AI agent portfolios grow, each level degrades for human supervisors:

- SA Level 1 (perception): with 5 AI agents, a supervisor can track each conversation in near-real-time; with 50, tracking individual conversations is impossible and supervisors must rely on aggregated dashboards, losing visibility into individual agent behavior.

- SA Level 2 (comprehension): understanding which portions of the AI portfolio are at risk requires interpreting aggregated signals — confidence score distributions, escalation rate trends, sentiment alerts — rather than directly observing agent behavior.

- SA Level 3 (projection): anticipating future AI performance failures before they occur requires pattern recognition across historical performance data and current operating conditions — a task that places heavy demands on expert knowledge and is poorly supported by most current monitoring dashboards.

Endsley (2017) notes that the human factors challenge of supervisory control over autonomous systems is distinct from — and in some respects harder than — direct control, because direct control provides continuous sensorimotor feedback that automation supervision does not.[6] Dashboard design for AI agent monitoring must be deliberately structured to support all three SA levels.

Real-Time Monitoring Infrastructure

| Display Element | Information Supported | SA Level |
|---|---|---|
| Conversation-level confidence score feed | Per-agent current confidence in active conversations | Level 1 |
| Escalation queue depth and aging | Backlog of escalated contacts awaiting human resolution | Level 1 |
| Containment rate trend (15-minute rolling) | AI performance trend; early degradation detection | Level 2 |
| Confidence score distribution histogram | Shift in overall AI uncertainty; earlier warning than aggregate containment rate | Level 2 |
| Sentiment distribution across active conversations | Portfolio-level customer distress signal | Level 2 |
| Predicted escalation volume (next 30 minutes) | Forward staffing adequacy signal | Level 3 |
| Historical anomaly comparison | "Is current behavior normal for this time of day/contact mix?" | Level 3 |

Failure detection latency — the time between AI performance degradation onset and human detection — is a key monitoring system quality metric. Latency above 10–15 minutes in high-volume AI deployments allows substantial volumes of degraded interactions to reach customers before intervention.
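A rolling containment-rate check of the kind listed in the table can be sketched as follows. The class and function names, the use of timestamps in seconds, and the 10-point tolerance below baseline are assumptions for illustration; the 15-minute window follows the table.

```python
from collections import deque


class RollingContainment:
    """Containment rate over a sliding time window (default 15 minutes)."""

    def __init__(self, window_seconds: int = 900):
        self.window_seconds = window_seconds
        self.events = deque()  # (timestamp_seconds, was_contained)

    def record(self, timestamp: float, was_contained: bool) -> None:
        """Add a completed contact and drop those outside the window."""
        self.events.append((timestamp, was_contained))
        cutoff = timestamp - self.window_seconds
        while self.events and self.events[0][0] <= cutoff:
            self.events.popleft()

    def rate(self):
        """Fraction of windowed contacts contained, or None if empty."""
        if not self.events:
            return None
        contained = sum(1 for _, ok in self.events if ok)
        return contained / len(self.events)


def is_degraded(rate, baseline: float, tolerance: float = 0.10) -> bool:
    """True when the rolling rate sits more than `tolerance` below baseline."""
    return rate is not None and rate < baseline - tolerance


monitor = RollingContainment()
for t in range(0, 600, 60):        # ten contained contacts
    monitor.record(t, True)
for t in range(600, 1200, 60):     # then ten escalated contacts
    monitor.record(t, False)
alert = is_degraded(monitor.rate(), baseline=0.80)
```

The alert fires once enough failed contacts enter the window to pull the rate below the tolerance band. A shorter window lowers detection latency at the cost of more false alarms on low-volume queues.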

Quality Assurance for AI Agents

Traditional contact center QA frameworks sample completed human interactions, evaluate them against rubrics, and feed results back through coaching cycles. AI agent QA requires structural adaptation:

- Sampling strategy: random sampling is insufficient when AI failure modes are rare events concentrated in specific contact types. Stratified sampling — with oversampling of escalated contacts, low-confidence interactions, and novel contact types — is necessary to detect systematic failure patterns with reasonable sample sizes.

- Evaluation rubrics: AI-specific rubrics must assess factual accuracy, policy adherence, and appropriate escalation judgment — not just interpersonal dimensions designed for human agent evaluation.

- Calibration: QA evaluators must be calibrated against a reference standard. Automated QA tools that use language models to evaluate AI interactions require careful prompt design and regular validation against human assessments.

- Feedback loops: QA findings for AI agents must flow to AI development and training teams, not just operations supervisors. This requires a defined channel between operations QA and AI engineering — a governance structure absent in most traditional contact center organizations.
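The stratified sampling idea above can be sketched as below. The stratum labels, the per-stratum rates, and the rule of taking at least one item from every stratum are hypothetical choices for the example.

```python
import random
from collections import defaultdict


def stratified_qa_sample(interactions, rates, default_rate=0.02, seed=None):
    """Sample interactions for QA with a different rate per stratum.

    interactions: list of dicts with at least a 'stratum' key.
    rates: stratum name -> sampling fraction; strata not listed use
        default_rate. Every non-empty stratum contributes at least one
        item so that rare categories are never skipped.
    """
    rng = random.Random(seed)
    by_stratum = defaultdict(list)
    for item in interactions:
        by_stratum[item["stratum"]].append(item)

    sample = []
    for stratum, items in by_stratum.items():
        rate = rates.get(stratum, default_rate)
        count = min(len(items), max(1, round(rate * len(items))))
        sample.extend(rng.sample(items, count))
    return sample


interactions = (
    [{"id": i, "stratum": "routine"} for i in range(100)]
    + [{"id": 100 + i, "stratum": "escalated"} for i in range(20)]
    + [{"id": 120 + i, "stratum": "low_confidence"} for i in range(10)]
)
qa_sample = stratified_qa_sample(
    interactions, rates={"escalated": 0.5, "low_confidence": 0.5}, seed=1
)
```

This draws 2 routine, 10 escalated, and 5 low-confidence interactions. Because the strata are sampled at different rates, any portfolio-wide quality estimate built from the sample needs to reweight results by stratum size.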

Governance: Who Owns AI Quality?

The organizational question of who owns AI agent quality is unresolved in most early-deploying organizations. Four ownership models are observed in practice: WFM ownership (the WFM function extends its monitoring and staffing mandate to include AI agent capacity and quality — consistent with the Three-Pool Architecture model); IT/Technology ownership (AI agent performance treated as a platform SLA); Product ownership (the business product team that specifies AI agent behavior owns quality outcomes); and Dedicated AI Operations (a new function with explicit responsibility for AI agent performance management).

Shneiderman (2022) argues that human-centered AI requires clear accountability structures in which a specific human function is accountable for AI system behavior toward the humans it affects — customers and workers alike.[7] In contact center contexts, this accountability argument points toward WFM and Operations ownership, given their existing accountability for service level and quality outcomes. Raisch and Krakowski (2021) observe that AI governance in organizations frequently fails not due to inadequate technology but due to unclear human accountability structures — the "accountability gap" that arises when automated systems produce outcomes that no individual owns.[8]

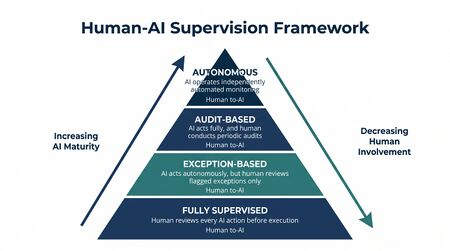

Maturity Model Considerations

| Maturity Level | Human-AI Supervision Posture |
|---|---|
| L1–L2 | AI agents (if deployed) treated as IVR-equivalent; no formal supervision layer; escalations handled ad hoc; no monitoring infrastructure |
| L3 | Basic escalation framework defined; human agents handle AI escalations; rudimentary AI performance monitoring; QA applied informally |
| L4 | Dedicated AI supervisor role defined and staffed; escalation taxonomy documented; real-time monitoring dashboards operational; QA sampling strategy formalized; supervision staffing model integrated with capacity planning |
| L5 | Supervision ratio empirically calibrated and dynamically adjusted; automation complacency countermeasures active; situation-awareness-structured dashboards (all three SA levels); AI QA feedback loop integrated with AI development cycle; governance structure for AI quality accountability formally established; compliance with EU AI Act human oversight requirements documented |

Related Concepts

- Three-Pool Architecture

- Agentic AI Workforce Planning

- Human AI Blended Staffing Models

- AI Containment Rate and Its Workforce Implications

- Real-Time Operations

- Intraday Management

- Agent Experience and Wellbeing

- Burnout and Schedule Induced Attrition

- Adherence and Conformance

- Capacity Planning Methods

- Schedule Generation

- Interval Level Staffing Requirements

- Service Level

- Performance Management

- Reporting and Analytics Framework

- WFM Roles

- WFM Labs Maturity Model

- Workforce Management Governance and Change Management

References

- [1] Parasuraman, R., & Riley, V. (1997). Humans and Automation: Use, Misuse, Disuse, Abuse. Human Factors, 39(2), 230–253.

- [2] Parasuraman, R., & Riley, V. (1997). Humans and Automation: Use, Misuse, Disuse, Abuse. Human Factors, 39(2), 230–253.

- [3] Parasuraman, R., & Riley, V. (1997). Humans and Automation: Use, Misuse, Disuse, Abuse. Human Factors, 39(2), 230–253.

- [4] Bansal, G., Nushi, B., Kamar, E., Lasecki, W. S., Weld, D. S., & Horvitz, E. (2019). Beyond Accuracy: The Role of Mental Models in Human-AI Team Performance. Proceedings of the 7th AAAI Conference on Human Computation and Crowdsourcing (HCOMP).

- [5] Endsley, M. R. (2017). From Here to Autonomy: Lessons Learned from Human-Automation Research. Human Factors, 59(1), 5–27.

- [6] Endsley, M. R. (2017). From Here to Autonomy: Lessons Learned from Human-Automation Research. Human Factors, 59(1), 5–27.

- [7] Shneiderman, B. (2022). Human-Centered AI. Oxford University Press.

- [8] Raisch, S., & Krakowski, S. (2021). Artificial Intelligence and Management: The Automation-Augmentation Paradox. Academy of Management Review, 46(1), 192–210.
