Workforce Planning with AI Agents

Workforce Planning with AI Agents refers to the discipline of adapting the traditional workforce planning cycle—forecasting, capacity planning, scheduling, and real-time management—to operate effectively when the labor pool includes both human agents and autonomous AI agents. Unlike theoretical treatments of blended staffing (see Agentic AI Workforce Planning) or technical routing architectures (see AI Agent Orchestration for WFM), this article focuses on the practical planning processes that WFM practitioners must execute on annual, monthly, weekly, and daily cycles when AI agents handle a meaningful share of customer interactions.

The introduction of AI agents into the workforce represents the most significant operational change to contact center planning since the shift from single-channel voice to [[Omnichannel Workforce Management|omnichannel operations]]. Traditional WFM processes assume a workforce composed entirely of humans—workers who have shifts, take breaks, call in sick, require training, and operate within contractual and regulatory constraints. AI agents violate nearly every one of these assumptions. They do not have shifts. They do not take breaks. They do not experience fatigue. They can scale from ten concurrent interactions to ten thousand in minutes. But they also fail differently than humans: when a human agent has a bad day, one customer at a time is affected; when an AI platform degrades, every interaction in flight is simultaneously impacted.

The practical consequence is that every stage of the planning cycle must be rethought—not replaced, but extended to accommodate a labor pool with fundamentally different capacity characteristics, cost structures, and failure modes. The remainder of this article walks through each stage of the planning cycle as it changes when AI agents join the workforce, with emphasis on actionable methods rather than theoretical frameworks.

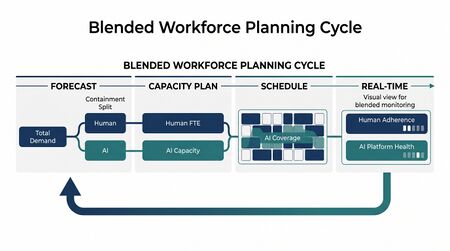

The Blended Planning Cycle

Main article: WFM Processes

The traditional workforce planning cycle operates on nested time horizons: annual capacity planning sets headcount budgets, monthly planning adjusts to emerging trends, weekly scheduling assigns agents to intervals, and daily real-time management closes the gap between plan and actual. This fundamental structure does not change when AI agents enter the workforce, but the content and cadence of each stage shifts substantially.

Annual Cycle Changes

In a traditional environment, the annual planning cycle centers on headcount budgeting: how many agents to hire, at what ramp schedule, against what attrition assumptions. When AI agents are part of the workforce, the annual cycle must additionally address AI licensing volumes, platform vendor contracts, and containment rate trajectory assumptions. The annual plan now produces two parallel outputs: a human headcount plan and an AI capacity plan, with explicit assumptions about how volume splits between the two pools over the planning horizon.

Monthly and Weekly Cycle Changes

Monthly planning traditionally focuses on schedule bid processes, vacation planning, and training allocation. In a blended environment, the monthly cycle adds containment rate monitoring and AI-versus-human volume split adjustments. If actual containment rates diverge from plan—either higher (requiring fewer humans than budgeted) or lower (requiring more)—the monthly review is where capacity rebalancing decisions are made. Weekly scheduling changes are discussed in detail in the scheduling section below.

Daily and Intraday Cycle Changes

The most dramatic changes occur at the real-time level. Traditional intraday management monitors human adherence, manages queues, and authorizes overtime or voluntary time off. Blended real-time management must additionally monitor AI platform health, track live containment rates, and maintain the ability to absorb sudden demand shifts if AI systems degrade. This is explored in the real-time management section.

| Time Horizon | Traditional Activities | Added Blended Activities |
|---|---|---|
| Annual | Headcount budgeting, attrition modeling, hiring plans | AI licensing, containment trajectory planning, vendor SLA negotiation |
| Monthly | Schedule bids, vacation management, training allocation | Containment monitoring, volume-split adjustment, AI capacity reviews |
| Weekly | Schedule generation, overtime/VTO decisions | Shift design around AI coverage, escalation staffing calibration |
| Daily/Intraday | Adherence monitoring, queue management | AI health monitoring, live containment tracking, degradation response |

Forecasting for a Blended Workforce

Main article: AI Containment Rate and Its Workforce Implications

In a traditional contact center, the forecasting problem is straightforward in structure: predict total contact volume by interval, apply an average handle time (AHT) assumption, and feed the result into a staffing model such as Erlang C. In a blended workforce, the forecasting problem splits into three interdependent components.

Component 1: Total Demand Forecasting

Total inbound demand—the number of customer interactions that will arrive regardless of who or what handles them—remains the starting point. Standard forecasting methods (time-series decomposition, regression, machine learning approaches) apply here without modification. The critical principle is that total demand forecasting should be independent of the resolution method. Customers do not change their behavior because an AI agent is answering; they contact the organization based on product issues, billing questions, service events, and seasonal patterns. Forecasting total demand separately from containment avoids the circular reasoning trap of forecasting "AI volume" and "human volume" as independent streams when they are in fact a single demand stream split by a routing decision.

Component 2: Containment Rate Forecasting

The containment rate—the percentage of interactions that AI agents resolve without human intervention—is the pivotal planning variable in a blended workforce. It determines the volume split between the two labor pools and therefore drives both AI capacity requirements and human staffing needs. Containment rates are influenced by multiple factors:

- Contact type mix — simple password resets may have containment rates above 90%, while complex billing disputes may be below 10%. Changes in the mix of contact types directly affect the aggregate containment rate.

- AI capability maturation — as AI models improve and knowledge bases expand, containment rates for specific contact types tend to increase over time, though not linearly and not without periodic regressions.

- Seasonality and novelty — during product launches, outages, or promotional events, customers present novel queries that AI agents may not be trained on, temporarily suppressing containment rates.

- Organizational policy — business rules about when to escalate (e.g., any interaction involving a retention offer) create a ceiling on containment regardless of AI capability.

Best practice is to forecast containment rates at the contact-type level rather than as a single aggregate number, then compose the aggregate forecast from the weighted mix. This approach allows planners to model scenarios such as "what happens to human staffing if we deploy AI to handle a new contact type that currently represents 15% of volume?"
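The composition step can be sketched as a volume-weighted average of per-type containment forecasts. The contact types, mix shares, and per-type rates below are hypothetical, and the 40% containment for the newly automated type is an assumption chosen only to show how the scenario question is answered.

```python
from typing import Dict


def aggregate_containment(mix: Dict[str, float], containment: Dict[str, float]) -> float:
    """Volume-weighted aggregate containment from per-contact-type forecasts.

    mix maps contact type -> share of total volume; shares are normalized,
    so they need not sum exactly to 1. Types missing from `containment`
    are treated as fully human-handled (0% containment).
    """
    total_share = sum(mix.values())
    weighted = sum(share * containment.get(ctype, 0.0) for ctype, share in mix.items())
    return weighted / total_share


# Hypothetical contact-type mix and per-type containment forecasts.
mix = {"password_reset": 0.25, "order_status": 0.30, "billing_dispute": 0.30, "new_type": 0.15}
containment = {"password_reset": 0.90, "order_status": 0.70, "billing_dispute": 0.10}

baseline = aggregate_containment(mix, containment)
# Scenario: extend AI to the new contact type at an assumed 40% containment.
scenario = aggregate_containment(mix, {**containment, "new_type": 0.40})

print(f"aggregate containment: {baseline:.1%} -> {scenario:.1%}")
print(f"human share of volume: {1 - baseline:.1%} -> {1 - scenario:.1%}")
```

With these illustrative inputs, aggregate containment moves from 46.5% to 52.5%, so the human share of volume falls from 53.5% to 47.5%. Keeping the per-type rates explicit makes it visible which contact type drives the change.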

Component 3: Human Demand Derivation

Human demand is derived, not independently forecast:

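- Human Demand = Direct-to-Human Volume + Escalation Volume

- Escalation Volume = AI-Routed Volume × (1 − AI Containment Rate), with containment measured against interactions routed to AI

When containment is expressed against total demand instead, human demand reduces to Total Demand × (1 − Containment Rate); escalations are already inside that term and should not be added a second time.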

Escalation volume represents interactions that begin with AI but are transferred to a human agent mid-interaction. These interactions often have different AHT characteristics than interactions that route directly to humans, because the customer has already spent time with the AI agent (potentially increasing frustration and handle time) but may also have had preliminary information gathered (potentially decreasing handle time). Organizations should track escalation AHT separately from direct-to-human AHT for accurate staffing calculations.
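A minimal sketch of the derivation for one interval, keeping escalation AHT separate from direct-to-human AHT. It assumes a hypothetical routing input (the share of demand offered to AI first) and measures containment against AI-routed interactions, so each interaction is counted exactly once: contained, escalated, or direct-to-human. All figures are illustrative.

```python
from dataclasses import dataclass


@dataclass
class IntervalInputs:
    total_demand: float      # forecast interactions in the interval, all resolution methods
    ai_routed_share: float   # fraction of demand offered to AI first (hypothetical routing input)
    ai_containment: float    # fraction of AI-routed interactions resolved without a human
    direct_aht_s: float      # AHT of direct-to-human interactions, in seconds
    escalation_aht_s: float  # AHT of escalated interactions, in seconds


def human_workload(x: IntervalInputs) -> dict:
    """Split demand into direct-to-human and escalated contacts, each counted once."""
    ai_routed = x.total_demand * x.ai_routed_share
    direct = x.total_demand - ai_routed
    escalations = ai_routed * (1.0 - x.ai_containment)
    workload_hours = (direct * x.direct_aht_s + escalations * x.escalation_aht_s) / 3600.0
    return {
        "direct": direct,
        "escalations": escalations,
        "human_contacts": direct + escalations,
        "workload_hours": workload_hours,
    }


# 1,000 contacts; 80% offered to AI; 60% of those contained; escalations run longer.
result = human_workload(IntervalInputs(1000, 0.80, 0.60, 360, 480))
print(result)
```

Here 200 direct and 320 escalated contacts give 520 human contacts and about 62.7 workload hours. The 520 equals 1,000 × (1 − 0.48), where 0.48 is the same containment expressed against total demand.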

Forecasting Cadence

Because containment rates can shift rapidly—particularly when AI models are updated or when novel contact types emerge—blended forecasts require more frequent revision than traditional forecasts. Weekly containment rate reviews are a minimum; organizations with rapidly evolving AI capabilities may need daily reviews of containment trends with intraday monitoring of live containment for real-time adjustments.

Capacity Planning with AI Agents

Main article: Workforce Cost Modeling

Annual capacity planning in a blended environment requires planning two fundamentally different types of capacity with fundamentally different lead times, cost structures, and scaling characteristics.

Human Capacity Planning

Human capacity planning retains its traditional structure—headcount budgets, hiring pipelines, attrition assumptions, training ramp timelines—but the inputs change. The demand signal driving human capacity is no longer total volume; it is the residual volume after AI containment. This makes human capacity planning dependent on containment rate assumptions, which introduces a new source of uncertainty into what is already an uncertain process.

The practical implication is that human capacity plans need to be built against multiple containment scenarios rather than a single forecast. A responsible annual plan includes at minimum three scenarios:

- Conservative containment — AI handles less volume than projected (e.g., due to deployment delays, lower-than-expected accuracy, or policy changes that restrict AI scope). Human demand is higher than baseline.

- Baseline containment — AI performance meets projections. Human demand aligns with the central forecast.

- Aggressive containment — AI handles more volume than projected (e.g., due to faster-than-expected capability gains). Human demand is lower than baseline, and the organization faces potential overstaffing.

Each scenario produces a different headcount plan, and the gap between scenarios represents the planning uncertainty that blended operations introduce. Managing this uncertainty is arguably the central challenge of blended capacity planning.[1]

AI Capacity Planning

AI capacity is fundamentally different from human capacity in several respects:

- Lead time — hiring and training a human agent takes weeks to months. Provisioning additional AI capacity can take hours to days, assuming the platform supports elastic scaling. This asymmetry is strategically significant: organizations can under-provision AI capacity with less risk than they can under-hire humans, because the recovery time from an AI capacity shortfall is dramatically shorter.

- Cost structure — human agents incur ongoing labor costs (salary, benefits, facilities) that are relatively fixed within a planning period. AI agents typically incur per-interaction or per-minute costs that scale directly with usage. This makes AI capacity more variable in cost but also more predictable per unit.

- Scaling granularity — human staffing comes in integer units (you cannot hire half an agent), and each agent has a finite concurrency of one interaction at a time (or a small number in chat/messaging). AI capacity is effectively continuous and can handle many concurrent interactions up to platform limits.

- Vendor dependency — AI capacity is constrained by platform vendor SLAs, rate limits, and pricing tiers. Capacity planning must account for contractual limits and negotiate headroom for demand surges.

Lead Time Arbitrage

The dramatic difference in lead times between human and AI capacity creates a strategic planning opportunity. Because AI capacity can be provisioned quickly, organizations can maintain leaner human capacity plans with the explicit assumption that demand fluctuations will be absorbed by AI scaling. This is the operational equivalent of a financial options strategy: AI capacity acts as a flexible hedge against demand uncertainty, while human capacity represents the fixed base.

However, this strategy carries risk. If AI platform availability degrades or if containment rates drop unexpectedly, the organization faces a human capacity shortfall that cannot be remedied quickly. The lead time arbitrage works only when AI reliability is high and sustained. Organizations should stress-test their capacity plans against an "AI failure" scenario in which containment drops to zero for an extended period and calculate whether remaining human capacity can maintain acceptable service levels.[2]
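The "AI failure" stress test can be sketched with Erlang C, the staffing model mentioned in the forecasting section, under its usual assumptions of Poisson arrivals and exponential handle times. The interval volume, AHT, and 80/20 service target are hypothetical. The sketch staffs one peak half-hour for 50% containment, then asks what happens to that staff when containment falls to zero.

```python
import math


def erlang_c(agents: int, traffic: float) -> float:
    """Probability that an arriving contact has to wait (Erlang C); traffic in Erlangs."""
    if agents <= traffic:
        return 1.0  # queue is unstable: every contact waits
    # Erlang B via the standard recurrence, then converted to Erlang C.
    b = 1.0
    for k in range(1, agents + 1):
        b = traffic * b / (k + traffic * b)
    return agents * b / (agents - traffic * (1.0 - b))


def service_level(agents: int, contacts: float, aht_s: float,
                  interval_s: float, target_s: float) -> float:
    """Share of contacts answered within target_s seconds."""
    traffic = contacts * aht_s / interval_s
    if agents <= traffic:
        return 0.0
    p_wait = erlang_c(agents, traffic)
    return 1.0 - p_wait * math.exp(-(agents - traffic) * target_s / aht_s)


def agents_needed(contacts, aht_s, interval_s, target_s, goal):
    """Smallest agent count meeting the service level goal."""
    n = max(1, math.ceil(contacts * aht_s / interval_s))
    while service_level(n, contacts, aht_s, interval_s, target_s) < goal:
        n += 1
    return n


# Hypothetical peak half-hour: 1,200 contacts, 360 s AHT, 80% answered within 20 s.
TOTAL, AHT, INTERVAL, TARGET, GOAL = 1200, 360, 1800, 20, 0.80
staffed = agents_needed(TOTAL * (1 - 0.50), AHT, INTERVAL, TARGET, GOAL)  # plan at 50% containment
outage_sl = service_level(staffed, TOTAL, AHT, INTERVAL, TARGET)          # containment drops to zero
outage_need = agents_needed(TOTAL, AHT, INTERVAL, TARGET, GOAL)
print(staffed, f"{outage_sl:.0%}", outage_need)
```

Because the offered load during the outage (240 Erlangs) exceeds the planned staff, the queue is unstable and the modeled service level is zero; the gap between `staffed` and `outage_need` is the shortfall the stress test is meant to expose.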

Scenario Planning Framework

Effective blended capacity planning uses scenario matrices that cross containment trajectories with demand growth assumptions:

| Containment Scenario | Low Demand Growth | Baseline Growth | High Demand Growth |
|---|---|---|---|
| Conservative Containment (30%) | Moderate FTE | High FTE | Very High FTE |
| Baseline Containment (50%) | Low FTE | Moderate FTE | High FTE |
| Aggressive Containment (70%) | Very Low FTE | Low FTE | Moderate FTE |

The specific percentages are illustrative; actual containment rates vary dramatically by industry, contact type mix, and AI maturity. The value of the framework is in making the planning assumptions explicit and quantifying the range of outcomes.
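One way to quantify the matrix is a workload-based FTE calculation for each cell. This is a simplification that ignores queueing effects and occupancy targets; the base volume, growth rates, AHT, and productive hours per FTE are all hypothetical, while the containment levels mirror the illustrative table.

```python
def fte_required(annual_volume: float, containment: float,
                 aht_s: float, productive_hours_per_fte: float) -> float:
    """Workload-based FTE for the human pool (no queueing or occupancy adjustment)."""
    human_hours = annual_volume * (1.0 - containment) * aht_s / 3600.0
    return human_hours / productive_hours_per_fte


BASE_VOLUME = 2_000_000          # hypothetical annual interactions
AHT_S = 420                      # hypothetical human AHT in seconds
PRODUCTIVE_HOURS = 1260          # hypothetical productive hours per FTE per year
growth = {"Low": 0.00, "Baseline": 0.05, "High": 0.10}
containment = {"Conservative": 0.30, "Baseline": 0.50, "Aggressive": 0.70}

matrix = {
    (c_name, g_name): fte_required(BASE_VOLUME * (1 + g), c, AHT_S, PRODUCTIVE_HOURS)
    for c_name, c in containment.items()
    for g_name, g in growth.items()
}

print(f"{'':<14}" + "".join(f"{g:>10}" for g in growth))
for c_name in containment:
    print(f"{c_name:<14}" + "".join(f"{matrix[(c_name, g)]:>10.1f}" for g in growth))
```

Even with these simple inputs the spread is wide: roughly 56 FTE in the aggressive/low corner versus about 143 FTE in the conservative/high corner.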

Scheduling Humans Around AI

Main article: Scheduling Methods

When AI agents handle a significant share of volume, the human scheduling problem changes in character. Traditional scheduling optimizes human coverage to match a demand curve that represents total volume. Blended scheduling optimizes human coverage to match a residual demand curve—the volume left after AI containment—plus escalation handling capacity.

Shift Design Changes

In a traditional contact center, shift design is driven by the shape of the intraday volume curve. Peak hours require maximum staffing; off-peak hours allow shorter shifts or part-time coverage. When AI handles a disproportionate share of simple, high-volume interactions, the residual human demand curve may have a different shape than the total volume curve.

Specifically, if AI agents handle routine inquiries that dominate peak periods (e.g., balance checks, order status, password resets), the peak-to-trough ratio of human demand may be lower than the peak-to-trough ratio of total demand. This potentially simplifies shift design by reducing the need for extreme part-time shifts or split shifts that exist solely to cover narrow peaks. Conversely, if AI containment rates vary by time of day—for example, lower containment during evening hours when different contact types arrive—the human demand curve may have peaks in unexpected places.
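Both effects can be checked by applying per-interval containment to the total demand curve. The hourly volumes and containment rates below are hypothetical, with containment assumed to be high around midday and low in the evening.

```python
# Hypothetical profile for five sample hours of the day.
hours = ["09:00", "11:00", "13:00", "16:00", "19:00"]
total = [200, 600, 900, 700, 400]               # total contacts per hour
containment = [0.50, 0.70, 0.75, 0.70, 0.30]    # forecast containment per hour

# Residual human demand = total demand x (1 - containment), interval by interval.
residual = [v * (1.0 - c) for v, c in zip(total, containment)]


def peak_to_trough(curve):
    return max(curve) / min(curve)


print(f"total peak-to-trough:    {peak_to_trough(total):.1f}")
print(f"residual peak-to-trough: {peak_to_trough(residual):.1f}")
print("residual peak at", hours[residual.index(max(residual))])
```

In this example the residual curve is flatter (2.8 versus 4.5) but now peaks at 19:00, because low evening containment leaves more work for humans than the total volume curve suggests.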

Scheduling for Escalation Coverage

A new scheduling dimension in blended operations is escalation coverage. Even when AI agents are handling most volume, human agents must be available to receive escalated interactions. Escalation patterns have their own arrival characteristics:

- Escalation lag — there is a delay between the start of an AI interaction and the point at which it escalates, creating a lagged demand pattern for human agents.

- Escalation clustering — if an AI model encounters a topic it handles poorly, escalations may cluster in time, creating micro-bursts of human demand.

- Escalation complexity — escalated interactions tend to be more complex than average, requiring more skilled agents and longer handle times.

Schedule planners must account for these patterns by ensuring adequate coverage of escalation-capable agents throughout all operating hours, not just peak volume hours. This often means maintaining a minimum staffing floor that is higher relative to baseline human demand than would be the case in a traditional operation.[3]
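Escalation lag can be modeled by spreading each interval's escalations over later intervals, a discrete convolution of AI start volumes with a lag distribution. The interval volumes, the 30% escalation rate, and the two-interval lag split are hypothetical.

```python
from typing import List


def escalation_arrivals(ai_starts: List[float], escalation_rate: float,
                        lag_weights: List[float]) -> List[float]:
    """Spread escalations over later intervals (a discrete convolution).

    lag_weights[k] is the share of escalations that reach a human k intervals
    after the AI interaction started; the weights should sum to 1.
    """
    arrivals = [0.0] * (len(ai_starts) + len(lag_weights) - 1)
    for i, starts in enumerate(ai_starts):
        for k, weight in enumerate(lag_weights):
            arrivals[i + k] += starts * escalation_rate * weight
    return arrivals


# Hypothetical 15-minute intervals: AI starts, 30% escalation, half escalate one interval later.
ai_starts = [100, 400, 400, 100]
print(escalation_arrivals(ai_starts, 0.30, [0.5, 0.5]))
```

Escalation demand here peaks in the third interval, and some of it arrives after AI starts have tailed off, which is one reason escalation coverage has to extend beyond the volume peak.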

Impact on Shrinkage Calculations

Shrinkage in workforce management refers to the percentage of paid time during which agents are unavailable to handle interactions, due to breaks, training, meetings, absenteeism, and other activities. In traditional operations, shrinkage is applied uniformly across the staffing requirement: if you need 100 agents on the phones and shrinkage is 30%, you schedule 143 agents (100 / 0.70).

In blended operations, shrinkage calculations apply only to the human portion of the workforce, but the interaction between AI availability and human shrinkage creates new considerations. If human agents are scheduled to provide escalation coverage and a disproportionate number are simultaneously on break or in training, the escalation queue may spike even though the AI agents are functioning normally. This suggests that shrinkage management for escalation-covering agents may need to be tighter than for volume-covering agents—a scheduling constraint that traditional systems may not model natively.
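A minimal sketch of both calculations: the traditional shrinkage gross-up applied to the human pool only, plus the tighter escalation constraint expressed as a cap on how many escalation-covering agents may be off-queue at once. The escalation pool size, its shrinkage, and the 20-agent floor are hypothetical.

```python
import math


def scheduled_headcount(required_available: float, shrinkage: float) -> int:
    """Agents to schedule so that `required_available` remain after shrinkage."""
    return math.ceil(required_available / (1.0 - shrinkage))


def max_concurrent_off_queue(scheduled: int, floor: int) -> int:
    """Most escalation-covering agents that can be off-queue at once without breaching the floor."""
    return max(0, scheduled - floor)


print(scheduled_headcount(100, 0.30))  # 143, the traditional example

# Hypothetical escalation pool: 20 agents must be available, 30% shrinkage.
esc_scheduled = scheduled_headcount(20, 0.30)                    # 29
esc_off_cap = max_concurrent_off_queue(esc_scheduled, floor=20)  # 9
print(esc_scheduled, esc_off_cap)
```

With 29 scheduled and an average of about 8.7 off-queue, a cap of 9 leaves almost no room to cluster breaks or training, which is the kind of constraint a traditional scheduler may not enforce.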

Real-Time Management in Blended Operations

Main article: Real-Time Schedule Adjustment

Real-time workforce management in a blended operation is arguably the area where the most significant process changes are required. Traditional real-time management focuses on schedule adherence, queue monitoring, and same-day staffing adjustments (overtime authorization, voluntary time off). Blended real-time management adds an entirely new monitoring dimension: AI platform health.

Monitoring AI Platform Health

AI agents operate on cloud-hosted platforms with their own operational characteristics: latency, throughput limits, error rates, and availability SLAs. The WFM real-time team must monitor these metrics alongside traditional human metrics because AI platform degradation instantly becomes a human staffing problem.

Key AI health metrics for real-time monitoring include:

- Response latency — increasing latency may indicate platform stress that precedes containment rate drops.

- Error rate — the percentage of AI interactions that terminate abnormally or produce incorrect responses, triggering escalations.

- Containment rate (live) — real-time containment compared to forecast. A sustained drop of even a few percentage points can translate to a significant increase in human demand.

- Queue depth on AI platform — if the AI platform queues interactions rather than handling them concurrently, growing queue depth signals capacity saturation.

- Escalation rate (live) — the percentage of AI interactions escalating to humans, tracked in real time against forecast.

The AI Degradation Scenario

The highest-risk real-time scenario in blended operations is sudden AI platform degradation or failure. Unlike human attrition, which removes capacity gradually, AI failures are binary and simultaneous: the platform either works or it does not. When it fails, every interaction that would have been contained by AI is instantly redirected to the human queue.

Consider an operation where AI handles 50% of volume. If the AI platform goes down during peak hours, human demand instantaneously doubles. If the human workforce was staffed to handle only the 50% residual demand (plus a buffer), the result is immediate service level collapse. The speed of this transition—from normal operations to crisis—is measured in seconds, not hours.
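The doubling generalizes: if total demand is unchanged during the event, human demand scales with (1 − containment), so the jump depends on how much containment the plan assumed. The sketch below covers both a complete outage and a partial slip; the containment levels are illustrative.

```python
def human_demand_multiplier(planned_containment: float, live_containment: float = 0.0) -> float:
    """Factor by which human demand changes when containment moves from plan to live.

    Assumes total demand is unchanged; live_containment=0.0 models a complete AI outage.
    """
    return (1.0 - live_containment) / (1.0 - planned_containment)


for planned in (0.30, 0.50, 0.70):
    print(f"planned containment {planned:.0%}: outage multiplies human demand by "
          f"{human_demand_multiplier(planned):.2f}")

# A partial degradation: containment slips from 50% to 40%.
print(f"{human_demand_multiplier(0.50, 0.40):.2f}")
```

At 70% planned containment an outage more than triples human demand (3.33×), so the exposure grows as AI takes on more volume.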

Effective blended real-time management requires:

- Degradation runbooks — predefined response protocols for various levels of AI degradation (latency increase, partial failure, complete outage), including authority for immediate overtime callbacks, queue messaging changes, and IVR deflection strategies.

- Buffer staffing — maintaining human staffing above the minimum required for the residual demand curve, specifically to absorb AI degradation events. The size of this buffer is a risk management decision informed by AI platform reliability history and the organization's service level tolerance.

- Automatic failover alerts — systems that detect AI degradation and immediately notify the real-time team, before the human queue impact becomes visible. By the time human queue wait times spike, the degradation has been underway for minutes and the recovery window is narrower.
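A failover alert can be sketched as a rule that maps live AI health readings to the runbook levels listed above, evaluated before human queue metrics move. The field names and every threshold below are hypothetical; real values would come from platform reliability history and vendor SLAs.

```python
from dataclasses import dataclass


@dataclass
class AiHealth:
    available: bool             # platform health check passing
    latency_ms: float           # current response latency
    error_rate: float           # share of AI interactions ending abnormally
    live_containment: float
    forecast_containment: float


# Hypothetical thresholds; real values belong in the runbook, set from platform history.
LATENCY_WARN_MS = 2000.0
ERROR_RATE_PARTIAL = 0.05
CONTAINMENT_GAP_PARTIAL = 0.05  # live containment 5+ percentage points below forecast


def degradation_level(h: AiHealth) -> str:
    """Map live AI health readings to a runbook level, most severe first."""
    if not h.available:
        return "complete_outage"
    containment_gap = h.forecast_containment - h.live_containment
    if h.error_rate >= ERROR_RATE_PARTIAL or containment_gap >= CONTAINMENT_GAP_PARTIAL:
        return "partial_failure"
    if h.latency_ms >= LATENCY_WARN_MS:
        return "latency_increase"
    return "normal"


print(degradation_level(AiHealth(True, 2500.0, 0.01, 0.49, 0.50)))  # latency_increase
```

Checking the most severe condition first means a platform that is both slow and failing is routed to the stronger runbook response.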

Intraday Reforecasting with Live Containment

Traditional intraday reforecasting updates the volume forecast based on actual arrivals. Blended intraday reforecasting must also update the containment forecast based on actual containment rates. If the morning shows containment running 5 percentage points below forecast, the afternoon human staffing requirement must be recalculated and adjustments made proactively.

This creates a two-variable reforecasting problem: both total volume and containment rate may deviate from plan simultaneously. The combination of higher-than-expected volume and lower-than-expected containment produces a multiplicative effect on human demand that can be severe. Real-time teams should pre-calculate the human staffing impact of combined deviations using a matrix of volume variance × containment variance scenarios.
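The pre-calculated matrix can be sketched directly from the relationship human demand ∝ volume × (1 − containment). The 50% planned containment and the variance steps are illustrative; containment variance is expressed in percentage points.

```python
def human_demand_change(volume_var: float, planned_containment: float,
                        containment_var_pts: float) -> float:
    """Relative change in human demand versus plan.

    volume_var: e.g. +0.10 for volume 10% above forecast.
    containment_var_pts: e.g. -0.05 for containment 5 percentage points below forecast.
    """
    live = planned_containment + containment_var_pts
    return (1.0 + volume_var) * (1.0 - live) / (1.0 - planned_containment) - 1.0


PLANNED = 0.50
for dv in (-0.10, 0.0, 0.10):
    row = [f"{human_demand_change(dv, PLANNED, dc):+.1%}" for dc in (-0.10, -0.05, 0.0)]
    print(f"volume {dv:+.0%} | containment -10/-5/0 pts: {row}")
```

At 50% planned containment, volume 10% over forecast combined with containment 5 points under forecast raises human demand by 21%, compared with 10% for either deviation alone.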

The Shrinkage and Occupancy Question

Main article: Occupancy

Two of the most fundamental metrics in traditional workforce management—shrinkage and occupancy—require careful reexamination in blended operations because AI agents exhibit radically different characteristics on both dimensions.

AI Shrinkage: Effectively Zero

Human shrinkage accounts for all the time an agent is paid but not available to work: breaks, lunches, training, team meetings, coaching, absenteeism, tardiness, and system downtime. In a typical contact center, shrinkage ranges from 25% to 35% of paid time. AI agents experience none of these categories. An AI agent is available 24/7 with the exception of planned maintenance windows and unplanned outages.

The practical planning implication is significant. If a human agent with 30% shrinkage delivers 5.6 productive hours in an 8-hour shift, an AI agent delivers the equivalent of 24 productive hours per day (or whatever its contracted uptime SLA guarantees). When calculating the effective capacity of a blended workforce, applying a single shrinkage rate to the combined pool would dramatically understate true capacity.
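The arithmetic can be made explicit. The 99.9% uptime figure is a hypothetical SLA used only for illustration.

```python
def productive_hours(paid_hours: float, shrinkage: float) -> float:
    """Hours actually available for interactions after shrinkage."""
    return paid_hours * (1.0 - shrinkage)


human_shift = productive_hours(8, 0.30)     # 5.6 hours, the example in the text
ai_day = 24 * 0.999                         # hypothetical 99.9% uptime SLA: ~23.98 hours
ai_day_wrong = productive_hours(24, 0.30)   # applying human shrinkage to AI: 16.8 hours

print(f"{human_shift:.1f} {ai_day:.2f} {ai_day_wrong:.1f}")
```

Applying the human rate to the AI pool understates its daily availability by about 7 hours (16.8 versus roughly 24).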

AI Occupancy: Near 100% Is Normal

Occupancy measures the percentage of available time that an agent spends handling interactions. For human agents, sustained occupancy above 85-90% leads to burnout, increased errors, and higher attrition—a well-documented phenomenon in contact center operations. AI agents face no such constraint. An AI agent can operate at 100% occupancy indefinitely without degradation, subject only to platform throughput limits.

This creates an asymmetry that challenges traditional blended metrics. A blended occupancy of 92% might represent AI agents at 99% and human agents at 78%, a healthy state, or AI agents at 95% and human agents at 91%, a state where humans are approaching burnout; which one applies depends on how available time is split between the two pools. The blended average conceals the operational reality.
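A minimal sketch of how the same blended figure can hide different pool states, assuming the blended number is formed by weighting each pool by its available hours. The hours for both operations are hypothetical and were chosen to reproduce the percentages in the paragraph.

```python
from typing import List, Tuple


def blended_occupancy(pools: List[Tuple[float, float]]) -> float:
    """Overall occupancy from (available_hours, handling_hours) per pool."""
    available = sum(a for a, _ in pools)
    handling = sum(h for _, h in pools)
    return handling / available


def pool_occupancies(pools: List[Tuple[float, float]]) -> List[float]:
    return [h / a for a, h in pools]


# Two hypothetical operations: (AI pool, human pool), hours per day.
healthy = [(200.0, 198.0), (100.0, 78.0)]    # AI 99%, humans 78%
strained = [(100.0, 95.0), (300.0, 273.0)]   # AI 95%, humans 91%

for name, pools in (("healthy", healthy), ("strained", strained)):
    print(name, f"{blended_occupancy(pools):.0%}", [f"{o:.0%}" for o in pool_occupancies(pools)])
```

Both operations report 92% blended occupancy; only the per-pool values show that the second one is pushing its human agents into the burnout range.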

Do Blended Metrics Make Sense?

The fundamental question is whether traditional metrics computed across a blended human-AI workforce are meaningful or misleading. The argument for blended metrics is simplicity: a single set of numbers for the entire operation. The argument against is that blending metrics across populations with fundamentally different characteristics produces numbers that describe neither population accurately.

The emerging best practice is to maintain parallel metric tracks: one for the human workforce (using traditional definitions of shrinkage, occupancy, adherence, and utilization) and one for the AI workforce (using platform-appropriate metrics like availability, throughput, containment rate, and error rate). Blended metrics may be useful for executive reporting and cost analysis but should not be used for operational planning decisions, where the distinct characteristics of each workforce type must be visible.[4]

KPIs for Blended Workforce Planning

The introduction of AI agents into the workforce necessitates an expanded performance measurement framework. Traditional WFM KPIs remain relevant for the human workforce but are insufficient to describe the performance of a blended operation. The following metrics form a recommended measurement framework for blended workforce planning.

Containment-Related Metrics

- Containment rate — the percentage of total interactions resolved by AI without human intervention. This is the single most important planning variable in blended operations, as it determines the volume split between labor pools.

- Escalation rate — the percentage of AI-initiated interactions that transfer to a human agent. When containment is measured against AI-routed interactions rather than total interactions, escalation rate equals (1 − containment rate) for those interactions; it is still worth tracking separately because it directly drives human queue volume.

- Escalation handle time — the average handle time of interactions that escalate from AI to human agents. This metric is critical for staffing calculations because escalated interactions frequently have different AHT profiles than direct-to-human interactions.
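These three metrics can be computed from interaction-level records. The sketch below assumes a hypothetical record layout with a resolution path label and a human handle time, and reports containment against both denominators, since the two definitions give different numbers from the same data.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Interaction:
    path: str                                 # hypothetical labels: "ai_only", "escalated", "human_only"
    human_handle_s: Optional[float] = None    # human handle time, when a human was involved


def containment_metrics(records: List[Interaction]) -> dict:
    ai_only = sum(1 for r in records if r.path == "ai_only")
    escalated = [r for r in records if r.path == "escalated"]
    ai_routed = ai_only + len(escalated)
    return {
        # Denominator: every interaction, however it was routed.
        "containment_of_total": ai_only / len(records),
        # Denominator: only interactions that started with AI.
        "containment_of_ai_routed": ai_only / ai_routed if ai_routed else None,
        "escalation_rate": len(escalated) / ai_routed if ai_routed else None,
        "escalation_aht_s": (sum(r.human_handle_s for r in escalated) / len(escalated)
                             if escalated else None),
    }


records = ([Interaction("ai_only")] * 6
           + [Interaction("escalated", 480), Interaction("escalated", 600)]
           + [Interaction("human_only", 300), Interaction("human_only", 360)])
print(containment_metrics(records))
```

The same ten records give 60% containment against total interactions and 75% against AI-routed ones; only the second pairs with the 25% escalation rate as one minus containment.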

Quality and Experience Metrics

- AI CSAT vs. human CSAT — customer satisfaction scores segmented by resolution channel. Tracking these separately reveals whether AI-resolved interactions meet quality standards and whether escalated interactions suffer satisfaction penalties. Research from Gartner indicates that organizations are under significant pressure to ensure AI implementations improve, not merely maintain, customer satisfaction.[5]

- First-contact resolution by channel — the percentage of interactions resolved in a single contact, segmented by AI-only, AI-then-human (escalation), and human-only. This metric captures the end-to-end effectiveness of the blended model.

Cost Metrics

- Cost per resolution (CPR) by channel — the fully loaded cost of resolving an interaction through each channel (AI-only, escalation, human-only). This metric is essential for ROI analysis and for making informed decisions about where to expand or contract AI coverage.

- Blended cost per resolution — the weighted average CPR across all channels, useful for trending total operational efficiency over time.
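Both cost metrics follow from resolutions and fully loaded cost per channel. The monthly figures below are hypothetical. Computing blended CPR as total cost over total resolutions is the same as weighting each channel's CPR by its share of resolutions.

```python
# Hypothetical monthly figures: (resolutions, fully loaded cost) per channel.
channels = {
    "ai_only": (60_000, 90_000.0),
    "escalation": (15_000, 150_000.0),
    "human_only": (25_000, 200_000.0),
}

cpr = {name: cost / resolutions for name, (resolutions, cost) in channels.items()}

# Blended CPR: total cost over total resolutions (resolution-weighted average of channel CPRs).
total_cost = sum(cost for _, cost in channels.values())
total_resolutions = sum(resolutions for resolutions, _ in channels.values())
blended_cpr = total_cost / total_resolutions

print(cpr, round(blended_cpr, 2))
```

The blended figure (4.40 here) moves with the channel mix as well as with channel costs, so it is easiest to interpret alongside the per-channel values.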

Service Level Metrics

- Blended service level — the percentage of all interactions (AI and human) resolved within the target threshold. This is the metric customers experience and the one that most directly reflects operational performance.

- Human-queue service level — service level computed only for interactions that reach the human queue (direct-to-human plus escalations). This metric isolates human workforce performance and is the appropriate input for human staffing calculations.

- AI resolution time — the elapsed time from AI interaction start to resolution (for contained interactions) or to escalation (for escalated interactions). Monitoring this metric detects AI performance degradation before it manifests as human queue impact.
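The difference between blended and human-queue service level shows up clearly on a small sample. The records below are hypothetical; for escalated interactions the wait is assumed to be the time spent in the human queue after escalation, and a 20-second threshold is assumed.

```python
from typing import List, Tuple


def service_level(waits_s: List[float], threshold_s: float) -> float:
    """Share of interactions served within the threshold."""
    return sum(1 for w in waits_s if w <= threshold_s) / len(waits_s)


# Hypothetical records: (path, seconds waited before service by AI or by a human).
# For escalations, the wait is the time spent in the human queue after escalation.
records: List[Tuple[str, float]] = (
    [("ai_only", 0.0)] * 6
    + [("escalated", 45.0), ("escalated", 10.0), ("human_only", 15.0), ("human_only", 90.0)]
)

blended = service_level([w for _, w in records], threshold_s=20)
human_queue = service_level([w for path, w in records if path != "ai_only"], threshold_s=20)
print(f"blended {blended:.0%}, human queue {human_queue:.0%}")
```

An 80% blended service level here coexists with a 50% human-queue service level, which is why the human-queue figure is the one to feed into human staffing calculations.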

Workforce Efficiency Metrics

- Human utilization rate — the percentage of human agent productive time spent handling interactions, calculated against the human demand stream only.

- AI throughput — the number of concurrent interactions the AI platform is handling relative to its capacity limit, analogous to occupancy for humans but measured differently.

- Capacity headroom — the gap between current AI platform utilization and contractual or technical limits, expressed as a percentage. Low headroom signals the need for capacity expansion or demand routing adjustments.
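Capacity headroom reduces to a simple ratio against the concurrency limit. The 15% review threshold below is a hypothetical value, not a recommendation.

```python
def capacity_headroom(current_concurrency: int, limit: int) -> float:
    """Unused share of the contractual or technical concurrency limit."""
    return (limit - current_concurrency) / limit


HEADROOM_ALERT = 0.15  # hypothetical threshold for triggering an expansion or routing review


def needs_review(current_concurrency: int, limit: int) -> bool:
    return capacity_headroom(current_concurrency, limit) < HEADROOM_ALERT


print(f"{capacity_headroom(900, 1000):.0%}", needs_review(900, 1000))  # 10% True
```

Tracking headroom over time, rather than only at a single point, shows whether demand is trending toward the contractual limit before a surge arrives.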

Common Pitfalls

Organizations adopting blended human-AI workforces commonly encounter several planning failures that are predictable and preventable. Understanding these pitfalls allows WFM practitioners to design processes and controls that mitigate them.

Over-Relying on Containment Projections

The most common and most dangerous pitfall is building human capacity plans against aggressive containment assumptions without adequate contingency. AI vendors and internal project teams have strong incentives to project high containment rates to justify investment. WFM planners should treat containment projections the same way they treat volume forecasts: as uncertain estimates that require scenario planning, not as precise inputs.

Gartner's June 2025 prediction that 50% of organizations will abandon plans to reduce their customer service workforce due to AI is consistent with the widespread experience of containment rates falling short of initial projections.[2] The likely root cause is not that AI is ineffective; it is that containment rate projections are often made without adequate consideration of contact type complexity, policy constraints, and the long tail of edge cases that resist automation.

Not Planning for AI Failure Scenarios

Many organizations plan for AI underperformance (lower-than-expected containment) but fail to plan for AI failure (complete platform outage). These are different scenarios with different implications. Underperformance is a gradual capacity gap that can be addressed through intraday adjustments. Failure is an instantaneous demand spike that requires pre-positioned human capacity or pre-planned degradation protocols.

Every blended operations plan should include a documented answer to the question: "If our AI platform goes down for four hours during peak, what happens and what do we do?" If the answer is "service level collapses and we have no response plan," the plan is incomplete.

Treating AI and Human Planning as Separate Streams

A third common pitfall is organizational: assigning AI capacity planning to the technology team and human capacity planning to the WFM team, with insufficient coordination between the two. Because human demand is a direct function of AI containment, these planning streams are mathematically linked. Changes to AI deployment scope, model updates, or platform capacity directly affect human staffing requirements.

Best practice is to establish a unified blended workforce planning function—or at minimum, a regular coordination cadence between the technology and WFM teams—that ensures AI and human capacity plans are developed against shared assumptions and updated in tandem.[6]

Ignoring the Human Experience Dimension

When AI agents absorb simple, repetitive interactions, the remaining human workload concentrates on complex, emotionally demanding, and often escalated interactions. This changes the nature of human agent work and can accelerate burnout if not managed. WFM planners should monitor not just staffing adequacy but also the complexity composition of the human queue, and adjust scheduling (e.g., building in more off-phone time, capping consecutive escalation handling) to account for increased cognitive load. This aligns with the supervision and role-design considerations explored in Human AI Supervision and Escalation Frameworks.

Neglecting Transition Period Planning

The period between "no AI" and "stable blended operations" is the most operationally risky phase. Containment rates are volatile during initial AI deployment. The human workforce may not yet trust AI performance. Routing rules may be poorly calibrated. Planning for this transition period—which may last months—requires conservative human staffing, aggressive monitoring, and frequent plan revisions. Organizations that attempt to capture AI-driven headcount savings during the transition period often find themselves understaffed when containment rates inevitably dip below initial projections.

Relationship to Adjacent Disciplines

Workforce planning with AI agents does not exist in isolation. It connects to and depends upon several adjacent planning disciplines:

- Agentic AI Workforce Planning provides the theoretical foundations, including the Three-Pool Architecture and Cognitive Portfolio Model (N*), that inform how demand is partitioned across worker types.

- AI Agent Orchestration for WFM addresses the technical routing and orchestration layer that determines which interactions reach AI agents and how escalations are handled.

- Human AI Blended Staffing Models explores the organizational design and staffing model choices that shape the blended workforce structure.

- Automation Economics and ROI Decision Frameworks provides the financial analysis methods for evaluating the cost-effectiveness of AI agent deployment.

- AI Scaffolding Framework offers a maturity model for understanding where an organization sits on the spectrum from fully human to heavily automated operations.

- Deterministic vs Probabilistic Models informs the modeling choices WFM practitioners face when forecasting and planning for blended workforces, particularly the importance of scenario-based approaches over point estimates.

Understanding these connections helps WFM practitioners position blended workforce planning within the broader organizational and technical context rather than treating it as a standalone process change.

See Also

- Workforce Planning

- Workforce Planning Calendar and Annual Planning Cycle

- AI in Workforce Management

- Artificial Intelligence Fundamentals

- Forecasting Methods

- Capacity Planning Methods

- Scheduling Methods

- Real-Time Operations

- Erlang C

- Service Level

- Shrinkage

- Occupancy

- WFM Processes

- WFM Roles

References

[1] Deloitte, "Reinventing Workforce Planning for an AI-Powered, Uncertain World," Deloitte Insights, 2025. https://www.deloitte.com/us/en/insights/topics/talent/future-of-workforce-planning/reinventing-workforce-planning.html

[2] Gartner, "Gartner Predicts 50% of Organizations Will Abandon Plans to Reduce Customer Service Workforce Due to AI," press release, June 10, 2025. https://www.gartner.com/en/newsroom/press-releases/2025-06-10-gartner-predicts-50-percent-of-organizations-will-abandon-plans-to-reduce-customer-service-workforce-due-to-ai

[3] McKinsey & Company, "The Contact Center Crossroads: Finding the Right Mix of Humans and AI," 2025. https://www.mckinsey.com/capabilities/operations/our-insights/the-contact-center-crossroads-finding-the-right-mix-of-humans-and-ai

[4] Forrester Research, "Predictions 2026: AI Agents, Changing Business Models, and Workplace Culture Impact Enterprise Software," Forrester Blogs, 2025. https://www.forrester.com/blogs/predictions-2026-ai-agents-changing-business-models-and-workplace-culture-impact-enterprise-software/

[5] Gartner, "Customer Service and Support Leaders Must Prioritize Blending Human Strengths with AI Intelligence in 2026," press release, December 17, 2025. https://www.gartner.com/en/newsroom/press-releases/2025-12-17-customer-service-and-support-leaders-must-prioritize-blending-human-strengths-with-ai-intelligence-in-2026

[6] McKinsey & Company, "Building and Managing an Agentic AI Workforce," 2025. https://www.mckinsey.com/capabilities/people-and-organizational-performance/our-insights/the-future-of-work-is-agentic