AI Agent Orchestration for WFM

AI agent orchestration for WFM refers to the technical layer that routes work between AI agents and human agents in a contact center, manages fleets of AI models in production, handles failover when AI platforms degrade, and feeds operational data back into workforce management systems for forecasting, scheduling, and real-time management. As organizations deploy AI agents to handle increasing volumes of customer interactions—Gartner projects that agentic AI will autonomously resolve 80% of common customer service issues by 2029[1]—the orchestration layer becomes the critical infrastructure that determines whether blended human-AI operations succeed or fail.

This article covers the technical orchestration patterns that sit between AI agent platforms and the contact center routing infrastructure. It is distinct from Agentic AI Workforce Planning, which addresses the theoretical foundations and staffing mathematics for blended workforces, and from Workforce Planning with AI Agents, which covers the practical planning cycle. The orchestration layer described here is the runtime system that makes those plans executable.

The concept maps directly to Layer 5 (Workflow Orchestration) of the AI Scaffolding Framework, and represents a critical component of the broader WFM Ecosystem Architecture.

Orchestration Architecture

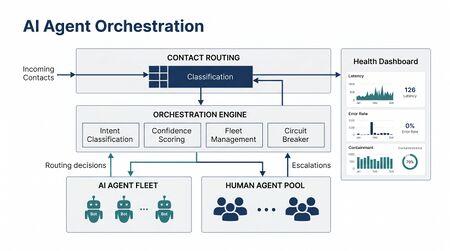

The orchestration layer occupies a specific position in the contact center technology stack: it sits between the Automatic Call Distributor (ACD) or CCaaS platform on one side and one or more AI agent platforms on the other. Its primary responsibility is to make routing decisions that the ACD cannot make on its own—decisions that require understanding AI agent capabilities, current AI system health, real-time confidence scoring, and the business rules governing when interactions should be handled by machines versus humans.

Position in the Technology Stack

In a traditional contact center, the routing path is straightforward: a customer interaction arrives at the ACD via IVR or digital channel, the ACD evaluates routing rules (often using skill-based routing), and the interaction is delivered to a qualified human agent. The orchestration layer introduces a decision point before or parallel to this traditional path.

The architectural position varies by implementation pattern:

- Pre-ACD orchestration — The orchestration layer intercepts interactions before they reach the ACD, determines whether AI can handle the interaction, and either routes to an AI agent or passes the interaction to the ACD for human routing. This pattern is common in CCaaS platforms with native AI capabilities.

- Parallel orchestration — The orchestration layer operates alongside the ACD, receiving a copy of the interaction metadata while the ACD simultaneously begins human agent selection. If the orchestrator assigns an AI agent before a human becomes available, the human reservation is released. This pattern reduces latency at the cost of some wasted routing computation.

- Post-classification orchestration — The IVR or a lightweight classifier determines intent first, then routes to either the AI orchestration layer or the traditional ACD based on intent category. This is the simplest pattern to retrofit into existing environments but creates a hard boundary between AI-eligible and human-only interactions.

Core Components

A production orchestration layer typically consists of five components:

Intent classifier. Determines what the customer needs, often using a lightweight model distinct from the AI agent that will ultimately handle the interaction. The classifier must operate with low latency (typically under 200 milliseconds) because it sits in the critical path of every interaction. It produces an intent label and a confidence score, both of which feed into routing decisions.

Routing engine. Evaluates the intent classification, current AI agent availability and health, business rules, and customer context to make the routing decision. The routing engine implements the patterns described in the routing patterns section below. It extends the concepts of Next Generation Routing to encompass AI agents as first-class routing targets.

Session manager. Manages the lifecycle of AI-handled interactions, including session creation, context passing between systems, mid-interaction handoff to human agents when needed, and session teardown. The session manager must maintain state across channel switches and escalation events.

Health monitor. Continuously evaluates the operational status of all AI agent endpoints, tracking latency, error rates, throughput, and quality metrics. The health monitor feeds the circuit breaker logic described in the failover and resilience section. It provides the data foundation for real-time operational decisions about AI fleet capacity.

Analytics pipeline. Captures interaction-level data from AI-handled sessions and transforms it into the metrics that WFM systems consume: volumes by channel and intent, handle times (including AI processing time), containment rates, escalation rates, and customer satisfaction signals. This pipeline feeds the integration patterns described in the WFM integration section.

Connection to Three-Pool Architecture

The orchestration layer is the runtime implementation of the Three-Pool Architecture, which conceptually separates the workforce into AI-contained, AI-assisted, and human-exclusive pools. The orchestrator determines, for each interaction, which pool handles it—and manages the transitions when an interaction must move between pools mid-conversation.

Routing Patterns

Routing in a blended human-AI environment is fundamentally more complex than traditional skill-based routing because AI agents introduce probabilistic capabilities rather than binary skills. A human agent either speaks Spanish or does not; an AI agent might resolve refund requests with 94% accuracy and billing disputes with only 78%. The routing engine must therefore reason about continuous capability scores rather than discrete skill assignments.

Intent-Based Routing

The simplest orchestration pattern routes interactions based on classified intent. Each intent category is mapped to either an AI agent or a human queue:

- Password resets → AI agent

- Billing inquiries → AI agent

- Complaints → Human agent

- Account closures → Human agent

This pattern is easy to implement and explain but creates rigid boundaries. It cannot adapt to situations where AI agent performance varies within an intent category (a straightforward billing inquiry versus a complex disputed charge) or where AI system capacity is temporarily constrained.

Confidence-Based Routing

A more sophisticated pattern uses the intent classifier's confidence score to make routing decisions. Rather than mapping intents to fixed destinations, the routing engine applies threshold logic:

- Confidence ≥ 0.92 → Route to AI agent

- 0.75 ≤ Confidence < 0.92 → Route to AI agent with human monitoring

- Confidence < 0.75 → Route to human agent

The thresholds are calibrated based on historical data correlating classifier confidence with AI resolution success. This approach allows the same intent to be handled by AI or humans depending on the complexity of the specific interaction. Organizations typically discover that the optimal thresholds vary by intent category, channel, and customer segment—leading to multi-dimensional threshold matrices rather than single cutoff values.
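
The threshold logic above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the default cutoffs reuse the example values from the list, while the per-intent override table, the intent names, and the function name `route_by_confidence` are hypothetical and stand in for the multi-dimensional threshold matrix a real deployment would calibrate from its own data.

```python
# Default cutoffs from the example above: (autonomous, monitored).
DEFAULT_THRESHOLDS = (0.92, 0.75)

# Hypothetical per-intent overrides illustrating a threshold matrix.
# Real values would come from calibration against resolution outcomes.
INTENT_THRESHOLDS = {
    "billing_dispute": (0.97, 0.85),
}


def route_by_confidence(intent: str, confidence: float) -> str:
    """Return 'ai', 'ai_monitored', or 'human' for a classified interaction."""
    autonomous, monitored = INTENT_THRESHOLDS.get(intent, DEFAULT_THRESHOLDS)
    if confidence >= autonomous:
        return "ai"
    if confidence >= monitored:
        return "ai_monitored"
    return "human"
```

Keeping the thresholds in data rather than in code lets operations teams retune them per intent, channel, or segment without redeploying the routing engine.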

Hybrid Routing with Human Fallback

The most common production pattern combines intent-based and confidence-based routing with automatic human fallback. The orchestrator routes to an AI agent with a standing instruction to escalate if the interaction exceeds defined parameters:

- AI agent detects customer frustration (sentiment analysis drops below threshold)

- AI agent reaches maximum turn count without resolution

- AI agent encounters a topic outside its training scope

- Customer explicitly requests a human agent

This pattern requires the session manager to execute warm transfers—passing the full conversation history, customer context, and AI agent's assessment of the situation to the receiving human agent. Poor handoff implementation is the most common failure mode in hybrid routing deployments. The frameworks governing these transitions are detailed in Human AI Supervision and Escalation Frameworks.
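
The four escalation triggers listed above could be evaluated on every turn with a check along these lines. The `SessionState` fields, the sentiment scale, and the two limits are assumptions made for illustration; a production agent would take them from its own sentiment model and business rules.

```python
from dataclasses import dataclass
from typing import Optional

SENTIMENT_FLOOR = -0.4  # hypothetical threshold on an assumed -1.0..1.0 scale
MAX_TURNS = 12          # hypothetical turn limit


@dataclass
class SessionState:
    sentiment: float       # latest sentiment score for the customer
    turn_count: int        # completed conversational turns
    in_scope: bool         # False when the topic is outside the agent's scope
    human_requested: bool  # True when the customer asked for a human


def escalation_reason(state: SessionState) -> Optional[str]:
    """Return the first trigger that fires, or None to stay with the AI agent."""
    if state.human_requested:
        return "customer_requested_human"
    if not state.in_scope:
        return "out_of_scope"
    if state.sentiment < SENTIMENT_FLOOR:
        return "low_sentiment"
    if state.turn_count >= MAX_TURNS:
        return "max_turns_reached"
    return None
```

Returning a reason code rather than a boolean gives the session manager something to include in the warm-transfer context and in escalation reporting.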

Skill-Based Routing Extended to AI Capabilities

The most advanced pattern extends traditional skill-based routing to treat AI capabilities as continuous-valued skills. In this model, AI agents are registered in the routing system alongside human agents, with skill profiles that include:

- Capability scores — Probability of successful resolution for each intent/topic combination, derived from historical performance data.

- Capacity limits — Maximum concurrent sessions the AI agent can handle while maintaining acceptable latency and quality.

- Channel proficiency — Performance characteristics across voice, chat, email, and social channels.

- Language coverage — Supported languages with per-language quality scores.

The routing engine then applies the same best-fit matching algorithms used for human agent routing, extended to handle continuous rather than binary skill values. This approach integrates most naturally with existing ACD infrastructure but requires the ACD to support continuous skill scores—a capability that is available in modern CCaaS platforms but absent from many legacy systems.
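
As a rough sketch of best-fit matching over continuous skill values, the routing engine can filter targets by capacity and a minimum capability score, then pick the highest scorer. The `RoutingTarget` structure, the 0.8 floor, and the target names are illustrative assumptions, and real ACDs apply richer tie-breaking and priority rules than this.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RoutingTarget:
    name: str
    capability: dict[str, float]  # intent -> estimated probability of resolution
    max_sessions: int             # capacity limit for concurrent sessions
    active_sessions: int = 0

    def has_capacity(self) -> bool:
        return self.active_sessions < self.max_sessions


def best_target(targets: list[RoutingTarget], intent: str,
                min_score: float = 0.8) -> Optional[RoutingTarget]:
    """Pick the available target with the highest capability score for the intent."""
    eligible = [
        t for t in targets
        if t.has_capacity() and t.capability.get(intent, 0.0) >= min_score
    ]
    # default=None signals that no target qualifies and a fallback is needed.
    return max(eligible, key=lambda t: t.capability[intent], default=None)
```

A human agent can be registered in the same structure with a score of 1.0 for skills they hold, which is how binary and continuous skills coexist in one matching pass.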

Fleet Management

Managing a fleet of AI agents in production shares structural similarities with managing a human workforce—both involve capacity planning, performance management, scheduling, and continuous improvement—but the mechanisms are fundamentally different. Fleet management is where the operational practices of WFM and the engineering practices of MLOps converge.

Model Versioning

Production AI agent deployments must manage multiple model versions simultaneously. A typical environment might run:

- The current production model serving 95% of traffic

- A canary model serving 5% of traffic for validation

- A shadow model receiving copies of production traffic for offline evaluation

- A rollback model held in reserve in case the production model degrades

Each version must be independently addressable by the orchestration layer, and the routing engine must know which version to invoke for each interaction. Version management becomes particularly complex when different model versions have different capability profiles—a newer model might handle refund requests better but regress on technical support.
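
One common way to make a traffic split such as 95/5 repeatable is to hash a stable interaction identifier into a bucket, so the same interaction always resolves to the same version. The sketch below assumes a string `interaction_id` and a hypothetical `TRAFFIC_SPLIT` table; shadow and rollback versions receive no live traffic share and are therefore left out.

```python
import hashlib

# Illustrative weights from the example above; shares should sum to 1.0.
TRAFFIC_SPLIT = [("production", 0.95), ("canary", 0.05)]


def pick_version(interaction_id: str, split=TRAFFIC_SPLIT) -> str:
    """Deterministically map an interaction to a model version."""
    digest = hashlib.sha256(interaction_id.encode("utf-8")).digest()
    # Convert the first 8 bytes of the hash to a float in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    cumulative = 0.0
    for version, weight in split:
        cumulative += weight
        if bucket < cumulative:
            return version
    return split[-1][0]  # guard against floating-point rounding
```

Hash-based assignment also keeps a multi-turn session on a single version, which matters when versions differ in capability profile.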

Research on production LLM deployment confirms that even with identical configurations, AI model outputs are not perfectly deterministic in practice, with documented accuracy variations of up to 15% across runs with identical inputs.[2] This non-determinism makes rigorous version validation essential before promoting models to full production traffic.

A/B Testing

A/B testing in AI agent fleets compares two or more model versions against specific business metrics under controlled conditions. Unlike canary deployment (which validates that a new model does not break things), A/B testing is designed to establish whether a new model is measurably better.

The WFM implications of A/B testing are significant. During testing periods, different model versions may produce different containment rates, different average handle times, and different escalation patterns. The WFM forecast must account for the blended performance of the test population, and the scheduling engine must accommodate the human agent capacity needed to handle the escalation volume from the lower-performing variant.
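
The blended effect on human demand is simple arithmetic: weight each variant's containment rate by its traffic share, then apply the result to the AI-routed volume. The function below is a sketch under the assumption that every uncontained interaction escalates to a human; the parameter names are illustrative.

```python
def expected_escalations(volume: float, share_b: float,
                         containment_a: float, containment_b: float) -> float:
    """Expected escalations when variant B receives share_b of AI-routed volume."""
    if not 0.0 <= share_b <= 1.0:
        raise ValueError("share_b must be between 0 and 1")
    blended = (1 - share_b) * containment_a + share_b * containment_b
    return volume * (1 - blended)
```

For example, 1,000 AI-routed interactions split evenly between variants containing 85% and 80% give a blended containment of 82.5%, or about 175 escalations, compared with about 150 if all traffic stayed on the better variant.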

Canary Deployments

Canary deployment routes a small fraction of production traffic (typically 1–5%) to a new model version while monitoring key metrics. If the canary model's performance meets or exceeds that of the production model, traffic is gradually increased; if metrics degrade, the canary is automatically rolled back.

The metrics evaluated during canary deployment of AI agents differ from traditional software canary patterns. In addition to standard infrastructure metrics (latency, error rates), AI canary evaluation must track:[3]

- Containment rate (percentage of interactions resolved without human escalation)

- Customer satisfaction delta between canary and production

- Average handle time comparison

- Escalation reason distribution

- Token consumption and cost per interaction
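
A promote-or-rollback gate over a subset of these metrics might look like the following. The tolerances, the CSAT scale, and the `VersionMetrics` structure are assumptions for illustration; a real gate would also require a minimum sample size and a statistical test before trusting the comparison.

```python
from dataclasses import dataclass


@dataclass
class VersionMetrics:
    containment_rate: float  # fraction of interactions resolved without escalation
    csat: float              # satisfaction score on an assumed 0-100 scale
    error_rate: float        # fraction of requests returning errors
    p95_latency_s: float     # 95th percentile response time in seconds


def canary_decision(prod: VersionMetrics, canary: VersionMetrics, *,
                    max_containment_drop: float = 0.02,
                    max_csat_drop: float = 2.0,
                    max_error_rate: float = 0.005,
                    max_latency_ratio: float = 1.2) -> str:
    """Return 'promote' only if every guardrail holds, otherwise 'rollback'."""
    checks = [
        canary.containment_rate >= prod.containment_rate - max_containment_drop,
        canary.csat >= prod.csat - max_csat_drop,
        canary.error_rate <= max_error_rate,
        canary.p95_latency_s <= prod.p95_latency_s * max_latency_ratio,
    ]
    return "promote" if all(checks) else "rollback"
```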

Rollback Patterns

When a production AI model degrades—whether due to a bad deployment, a shift in input distribution, or an upstream dependency failure—the orchestration layer must execute a rollback. Three rollback strategies are common:

- Instant rollback — Traffic immediately reverts to the previous model version. This requires the previous version to remain deployed and warm (loaded into memory and ready to serve requests).

- Graduated rollback — Traffic shifts back to the previous version incrementally (e.g., 10% per minute) to avoid overwhelming the previous version's infrastructure.

- Failover to human — When no AI model version is healthy, the orchestrator temporarily routes all interactions to human agents. This strategy requires the WFM real-time team to immediately adjust staffing, as described in Real-Time Schedule Adjustment.
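
For the graduated strategy, the traffic schedule can be precomputed so that both the orchestrator and the WFM real-time team know when the previous version will carry full load. This sketch assumes one step per minute, as in the example above, and uses whole percentages to avoid floating-point drift.

```python
def rollback_schedule(step_pct: int = 10) -> list[tuple[int, int]]:
    """Return (minute, percent of traffic on the previous version) pairs."""
    if step_pct <= 0:
        raise ValueError("step_pct must be positive")
    schedule = []
    previous_share = 0
    minute = 0
    while previous_share < 100:
        minute += 1
        previous_share = min(100, previous_share + step_pct)
        schedule.append((minute, previous_share))
    return schedule
```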

Failover and Resilience

AI platform outages and degradation are not hypothetical risks—they are operational certainties. LLM API providers experience latency spikes, rate limiting events, and full outages. The orchestration layer must handle these events without degrading the customer experience below acceptable thresholds.

Circuit Breaker Patterns

The circuit breaker pattern, adapted from distributed systems engineering, is the foundational resilience mechanism for AI agent orchestration. The circuit breaker monitors the health of each AI agent endpoint and operates in three states:[4]

- Closed (normal operation) — Requests flow to the AI agent. The circuit breaker counts failures and resets the counter after a configurable success window.

- Open (failure detected) — All requests are diverted away from the failing AI agent, either to an alternative AI model or to human agents. The circuit breaker enters a timeout period.

- Half-open (recovery probe) — After the timeout, a single probe request is sent to the AI agent. If the probe succeeds, the circuit closes; if it fails, the circuit reopens for another timeout period.

AI agent circuit breakers require extensions beyond the traditional pattern. Standard circuit breakers detect timeouts and HTTP 5xx errors, but AI agents can also fail while returning HTTP 200 responses: hallucinated answers, confident but incorrect resolutions, and reasoning loops that consume tokens without progress. Effective AI circuit breakers must therefore monitor semantic quality metrics, not just infrastructure health signals.

A recommended extension adds a Degraded state between Closed and Open, where the AI agent is functional but operating below quality thresholds. In the Degraded state, the orchestrator might route only simple interactions to the AI agent while sending complex interactions to humans.
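
The state machine described in this section, including the Degraded extension, can be sketched as a small class. It is deliberately simplified: any success resets the failure counter (rather than a configurable success window), and a single low-quality response is enough to enter Degraded, where a production breaker would use rolling averages. The class name, parameters, and the `quality` score in the range 0 to 1 are assumptions for illustration.

```python
import time
from enum import Enum


class State(Enum):
    CLOSED = "closed"
    DEGRADED = "degraded"
    OPEN = "open"
    HALF_OPEN = "half_open"


class AICircuitBreaker:
    def __init__(self, failure_threshold=5, quality_floor=0.8,
                 open_timeout_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.quality_floor = quality_floor
        self.open_timeout_s = open_timeout_s
        self.clock = clock  # injectable so the timeout can be tested
        self.state = State.CLOSED
        self.failures = 0
        self.opened_at = 0.0

    def allow(self, complex_interaction: bool = False) -> bool:
        """Decide whether this interaction may be sent to the AI agent."""
        if self.state is State.OPEN:
            if self.clock() - self.opened_at < self.open_timeout_s:
                return False
            self.state = State.HALF_OPEN  # timeout elapsed: allow one probe
            return True
        if self.state is State.HALF_OPEN:
            return False  # a probe is already in flight
        if self.state is State.DEGRADED:
            return not complex_interaction  # only simple work goes to AI
        return True

    def record(self, ok: bool, quality: float = 1.0) -> None:
        """Record a request outcome; quality is a semantic score in [0, 1]."""
        if not ok:
            self.failures += 1
            if self.state is State.HALF_OPEN or self.failures >= self.failure_threshold:
                self.state = State.OPEN
                self.opened_at = self.clock()
            return
        self.failures = 0
        self.state = State.DEGRADED if quality < self.quality_floor else State.CLOSED
```

Passing a semantic quality score into `record` is what lets the breaker react to failures that arrive with a 200 status, which an infrastructure-only breaker would miss.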

Graceful Degradation

Rather than treating AI capacity as binary (available or unavailable), graceful degradation strategies maintain partial AI service during platform stress:

- Capability shedding — The orchestrator narrows the set of intents routed to AI agents, retaining only those with the highest containment rates and lowest risk. Complex or high-value interactions are routed to humans preemptively.

- Quality threshold tightening — The confidence threshold for AI routing increases during degradation. Only interactions where the classifier is highly confident are sent to AI agents.

- Rate limiting — The orchestrator caps the number of concurrent AI sessions below the platform's stressed capacity to prevent quality degradation from overload.

Automatic Human Spillover

When AI capacity drops—whether from an outage, degradation, or planned maintenance—the resulting volume must spill to human agents. This creates a sudden demand spike that the WFM real-time team must manage.

The orchestration layer should signal the WFM platform proactively when AI capacity changes, enabling the real-time management team to take preemptive action. Specific signals include:

- Circuit breaker state changes (Closed → Degraded, Degraded → Open)

- AI throughput dropping below configured thresholds

- Estimated human volume impact (additional interactions per minute requiring human handling)

- Expected duration of the capacity reduction (if known from the AI platform's status page or historical patterns)

This data enables the real-time team to activate contingency plans: extending shifts, recalling off-phone agents, opening overtime, or activating business continuity resources. The speed of this feedback loop—from AI degradation detection to WFM action—is a key operational metric, with best-practice targets under 60 seconds.
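
A capacity-change signal carrying the fields listed above could be assembled as follows. The payload shape and field names are hypothetical, since WFM platforms differ in what they accept. The volume estimate assumes that only interactions the AI would have contained are new to human queues, because the uncontained share was already reaching humans.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class CapacitySignal:
    previous_state: str                     # e.g. "closed"
    new_state: str                          # e.g. "degraded" or "open"
    extra_human_per_min: float              # added interactions per minute
    expected_duration_min: Optional[float]  # None when unknown


def spillover_per_minute(ai_arrivals_per_min: float, containment_rate: float,
                         capacity_lost: float) -> float:
    """Estimate additional human interactions per minute from lost AI capacity."""
    return ai_arrivals_per_min * capacity_lost * containment_rate


def build_signal(previous_state: str, new_state: str, ai_arrivals_per_min: float,
                 containment_rate: float, capacity_lost: float,
                 expected_duration_min: Optional[float] = None) -> dict:
    """Build a plain dict ready to serialize for a webhook or event stream."""
    extra = spillover_per_minute(ai_arrivals_per_min, containment_rate, capacity_lost)
    return asdict(CapacitySignal(previous_state, new_state, extra, expected_duration_min))
```

With 120 AI-routed arrivals per minute, 85% containment, and half of AI capacity lost, the estimate is roughly 51 additional interactions per minute for human queues.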

Queue Management During AI Outages

During a full AI outage, the interaction volume that was being contained by AI agents floods into human queues. The orchestration layer should manage this transition to prevent queue collapse:

- Priority-based deflection — Low-priority interactions that AI was handling are deflected to self-service channels (FAQ, knowledge base) rather than added to human queues.

- Callback offers — Voice interactions that would have been handled by AI are offered callback slots rather than holding in queue.

- Temporary IVR messaging — The IVR system is updated to set expectations about extended wait times and offer alternative channels.

- Volume smoothing — Rather than routing the entire AI backlog to humans simultaneously, the orchestrator meters the spillover to match available human capacity, using queue buffers to smooth the transition.
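
Volume smoothing can be approximated by holding displaced interactions in a buffer and releasing only as many as the human queues can absorb on each cycle. The headroom rule below (idle agents plus a maximum tolerated queue depth, minus what is already queued) is an assumed policy chosen for illustration.

```python
from collections import deque


def release_to_humans(buffer: deque, idle_agents: int, queued: int,
                      max_queue_depth: int) -> list:
    """Pop only as many buffered interactions as human queues can absorb now."""
    headroom = max(0, idle_agents + max_queue_depth - queued)
    count = min(headroom, len(buffer))
    # Oldest interactions leave the buffer first (FIFO).
    return [buffer.popleft() for _ in range(count)]
```

Calling this on a short timer turns a single surge into a metered flow, and the buffer length itself becomes a useful real-time indicator for the WFM team.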

Real-Time Monitoring

Monitoring an AI agent fleet requires metrics that bridge infrastructure health, AI quality, and WFM operational outcomes. Traditional contact center monitoring tools track human agent adherence, occupancy, and service level; AI fleet monitoring must track analogous metrics for a fundamentally different type of workforce.

Health Metrics

| Metric | Description | Typical Threshold | WFM Impact |
|---|---|---|---|
| Latency (p50/p95/p99) | Response time at various percentiles | p95 < 2s (chat), p95 < 500ms (voice) | Affects customer experience scores; high latency may trigger escalation |
| Error rate | Percentage of requests returning errors | < 0.5% | Directly reduces containment rate; excess volume spills to humans |
| Containment rate | Percentage of AI-handled interactions resolved without human intervention | Varies by intent (typically 70–95%) | Primary input to WFM staffing models for human agent requirements |
| CSAT delta | Customer satisfaction difference between AI and human-handled interactions | Within 5 points of human baseline | Determines acceptable AI routing volume |
| Concurrent session utilization | Active sessions as percentage of maximum capacity | < 80% sustained | Indicates when AI fleet is approaching capacity limits |
| Escalation rate | Percentage of AI interactions requiring human takeover | < 30% overall | Feeds real-time human agent demand calculations |
| Cost per interaction | Token consumption and API costs per resolved interaction | Varies by provider and model | Input to cost optimization and model selection decisions |
| Resolution accuracy | Correctness of AI resolutions as measured by QA sampling | > 95% for production routing | Determines whether model should remain in production |

Containment Rate Trending

Containment rate is the single most important metric connecting AI orchestration to WFM. A declining containment rate directly increases human agent demand; a rising containment rate reduces it. The monitoring system must track containment rate trends at multiple granularities:

- Real-time (5-minute rolling window) — For circuit breaker and routing decisions

- Intraday (hourly) — For real-time WFM adjustments

- Daily — For short-term forecast updates

- Weekly/monthly — For planning cycle inputs

Sudden drops in containment rate often precede broader AI system failures and serve as an early warning signal. A containment rate that drops from 85% to 78% over 30 minutes may indicate model drift, an upstream data change, or the emergence of a new interaction pattern that the AI model was not trained to handle.
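
The real-time granularity can be computed with a time-bounded window over per-interaction outcomes. This sketch assumes each completed AI interaction is reported with a timestamp in seconds and a contained/escalated flag; the class name and the 300-second default are illustrative.

```python
from collections import deque
from typing import Optional


class RollingContainment:
    """Containment rate over a sliding time window (default 5 minutes)."""

    def __init__(self, window_s: float = 300.0):
        self.window_s = window_s
        self.events = deque()  # (timestamp_s, contained) in arrival order

    def record(self, ts: float, contained: bool) -> None:
        self.events.append((ts, contained))
        self._evict(ts)

    def _evict(self, now: float) -> None:
        # Drop outcomes that have aged out of the window.
        while self.events and self.events[0][0] <= now - self.window_s:
            self.events.popleft()

    def rate(self, now: float) -> Optional[float]:
        """Return the containment rate, or None if the window is empty."""
        self._evict(now)
        if not self.events:
            return None
        return sum(1 for _, contained in self.events if contained) / len(self.events)
```

Returning `None` for an empty window avoids reporting a misleading 0% or 100% during quiet periods, which could otherwise trip a circuit breaker on no evidence.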

Dashboard Architecture

Production monitoring dashboards for AI agent fleets should present three views:

- Infrastructure view — Latency, throughput, error rates, and resource utilization across all AI agent endpoints. This view is consumed by platform engineering and DevOps teams.

- Quality view — Containment rate, CSAT, resolution accuracy, and escalation reasons. This view is consumed by contact center operations and quality management teams.

- WFM view — Human volume impact, staffing variance from plan, service level effect, and capacity utilization. This view is consumed by WFM analysts and real-time managers.

Integration with WFM Platforms

The orchestration layer generates data that WFM platforms need for every stage of the planning cycle. The integration architecture must deliver this data at the right granularity, latency, and format for each WFM function. This builds on the broader patterns described in WFM Data Infrastructure and Integration Architecture.

Data Flows

Four primary data flows connect the orchestration layer to the WFM platform:

Volume decomposition. The orchestrator reports interaction volumes split by handling type (AI-contained, AI-assisted with human escalation, human-only). This decomposition is essential for forecasting because AI-contained volume and human-handled volume follow different patterns, have different handle times, and respond differently to external drivers. The WFM forecasting engine must treat these as separate volume streams with distinct models.

Handle time data. AI handle times differ structurally from human handle times. AI interactions are typically shorter and less variable, but escalated interactions (which start with AI and transfer to human) are longer than interactions that go directly to humans, due to the context-switching overhead. The WFM platform needs handle time distributions segmented by handling type to produce accurate staffing requirements.

Capacity signals. Real-time AI capacity status (available, degraded, unavailable) feeds the WFM real-time management function. When AI capacity drops, the WFM platform must recalculate required human staffing immediately. This integration must operate with sub-minute latency.

Quality metrics. Containment rate trends, CSAT scores, and resolution accuracy feed the WFM planning function's assumptions about the split between AI and human volume. A declining containment rate may trigger a reforecast that shifts volume from the AI pool to the human pool.
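
To show why the volume and handle time decomposition matters, human workload for an interval can be computed as the sum of volume times average handle time across human-handled streams, divided by the interval length. The figures in the usage note are invented for illustration, and converting workload into required headcount still needs a queueing model such as Erlang C or simulation, which is not shown here.

```python
def human_workload_erlangs(streams, interval_s: float = 1800.0) -> float:
    """Offered human workload in Erlangs for one interval.

    streams: iterable of (volume, aht_seconds) pairs, one per human-handled
    stream, for example direct-to-human and AI-escalated interactions.
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    return sum(volume * aht for volume, aht in streams) / interval_s
```

For a 30-minute interval with 200 direct interactions at 360 seconds and 60 escalated interactions at 480 seconds, the workload is 56 Erlangs. Treating all 260 interactions as direct would give 52 Erlangs and understate the requirement.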

API Integration Patterns

| Pattern | Latency | Use Case |
|---|---|---|
| Webhook / Event stream | Sub-second | Real-time capacity alerts, circuit breaker state changes, escalation events |
| Polling API | 1–5 minutes | Intraday volume updates, containment rate snapshots |
| Batch export | Hourly / Daily | Historical interaction data for forecast model training, handle time analysis |
| Bidirectional sync | Near-real-time | WFM schedule data informing orchestrator about human agent availability; orchestrator data informing WFM about AI capacity |

The bidirectional pattern is the most operationally valuable but least commonly implemented. When the WFM platform can inform the orchestrator that human agent availability is about to drop (due to a scheduled break wave or shift end), the orchestrator can proactively raise its escalation thresholds, keeping more interactions with AI agents rather than escalating them during periods of low human capacity.

Update Cadences

Different WFM functions consume orchestration data at different frequencies:

- Real-time management — Continuous (sub-minute updates on AI capacity, volume split, escalation rate)

- Intraday reforecasting — Every 15–30 minutes (containment rate trending, actual vs. planned AI volume)

- Short-term forecasting — Daily (previous day's volume decomposition, handle time distributions, containment rates by intent)

- Long-term planning — Weekly/monthly (containment rate trends, AI capability expansion roadmap, model upgrade impact estimates)

Supervision Models

The orchestration layer must implement different levels of AI autonomy depending on organizational risk tolerance, regulatory requirements, and AI model maturity. These supervision models determine how much human oversight is applied to AI agent decisions and map directly to the frameworks described in Human AI Supervision and Escalation Frameworks.

Fully Supervised

Every AI agent response is reviewed by a human before delivery to the customer. This model is appropriate during initial deployment, for high-risk interaction types (financial transactions, medical information, legal commitments), and in regulated industries where automated responses require human verification.

WFM implications: Requires staffing a supervision queue in addition to the standard agent queue. Each supervisor can typically monitor 3–8 concurrent AI sessions depending on complexity. The supervision function has its own staffing model with distinct skill requirements, handle time characteristics, and schedule patterns.

Semi-Autonomous

The AI agent operates independently for interactions within defined parameters but escalates or requests human approval for specific triggers:

- Transactions above a monetary threshold

- Customer sentiment falling below a defined level

- Interaction duration exceeding a maximum

- Topics flagged as requiring human judgment (exceptions, policy overrides, complaints)

This is the most common production model. WFM planning must account for both the AI-contained volume (which requires no human staffing) and the exception volume (which requires human agents with specialized skills in AI-escalated interactions, per the Three-Pool Architecture).

Fully Autonomous with Exception Handling

The AI agent handles interactions end-to-end without real-time human oversight. Human involvement is limited to post-interaction quality review, trend analysis, and model improvement. Exception handling is automated: the AI agent follows predefined protocols for edge cases rather than escalating to humans.

This model maximizes containment rate but requires high confidence in the AI model's quality and the organization's tolerance for occasional AI errors. Full autonomy is typically reserved for low-risk, high-volume interaction types where the cost of occasional errors is low relative to the cost of human handling.

Forrester predicts that by 2026, the top enterprise platforms will offer "digital employee management" capabilities, treating AI agents as managed workforce members with defined roles, permissions, and performance expectations.[5]

Choosing the Right Model

The supervision model is not a permanent choice—it should evolve as the AI system matures:

- Pilot phase (0–3 months) — Fully supervised. Establish baseline performance data and identify failure modes.

- Scaling phase (3–12 months) — Semi-autonomous. AI handles routine interactions independently; humans handle exceptions and edge cases.

- Maturity phase (12+ months) — Fully autonomous for proven interaction types. Semi-autonomous for newer capabilities. Fully supervised only for the initial deployment of new models or intent categories.

Each transition changes the WFM staffing model. The shift from fully supervised to semi-autonomous typically reduces human FTE requirements by 40–60% for the affected interaction types, while the shift from semi-autonomous to fully autonomous reduces requirements by an additional 20–30%.

Security and Compliance

AI agent orchestration introduces security and compliance requirements that go beyond traditional contact center infrastructure. The orchestration layer processes customer data, makes autonomous decisions, and generates records that may be subject to regulatory scrutiny.

Data Handling and PII

AI agents process personally identifiable information (PII) during customer interactions—names, account numbers, addresses, payment information. The orchestration layer must enforce data handling policies:

- PII redaction in logs — Interaction logs captured for analytics and model training must have PII removed or tokenized before storage. The redaction must occur at the orchestration layer, not downstream, to prevent PII from propagating into training datasets.

- Data residency — AI agent API calls may traverse geographic boundaries. The orchestration layer must route interactions to AI endpoints that comply with data residency requirements (GDPR, CCPA, industry-specific regulations).

- Encryption in transit and at rest — All data flowing between the orchestration layer, AI agent endpoints, and WFM systems must be encrypted. This is standard practice but requires explicit enforcement when integrating multiple vendor systems.

- Retention policies — Interaction transcripts, AI agent reasoning traces, and routing decisions must follow defined retention schedules that comply with both regulatory requirements and organizational data governance policies.
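
As a minimal illustration of redaction at the orchestration layer, the sketch below replaces e-mail addresses and card-like digit runs with placeholder tokens before a transcript is logged. The two regular expressions are simplistic assumptions and would miss names, addresses, and many account formats; production systems typically combine pattern matching with dedicated PII detection services.

```python
import re

# Illustrative patterns only; order matters because each pass rewrites the text.
PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    # 13 to 19 digits, optionally separated by single spaces or hyphens.
    ("CARD", re.compile(r"\b\d(?:[ -]?\d){12,18}\b")),
]


def redact(text: str) -> str:
    """Replace matched PII with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text
```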

Audit Trails

Regulatory and internal compliance frameworks require traceable records of AI-made decisions. The orchestration layer must log:

- Every routing decision (why an interaction was sent to AI vs. human)

- Every AI agent response with the model version that generated it

- Every escalation event with the trigger condition

- Every human override of an AI agent decision

- Circuit breaker state changes and their impact on routing

These audit trails must be tamper-evident and retained for the period required by applicable regulations. In financial services, this may be seven years or more. In healthcare, HIPAA requirements add additional constraints on how interaction records are stored and accessed.
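
One widely used way to make a log tamper-evident is to chain entries by hash, so that altering any earlier record invalidates every later one. The sketch below assumes JSON-serializable events and an in-memory list; the field names are illustrative, and a real deployment would also need durable, access-controlled storage and protection of the latest hash.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash preceding the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    # sort_keys makes the serialization, and therefore the hash, deterministic.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"event": event, "prev_hash": prev_hash,
                "hash": _entry_hash(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute the chain and report whether every link is intact."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```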

Regulatory Requirements

Several regulatory frameworks directly affect AI agent orchestration in customer service:

- AI Act (EU) — Customer service AI systems may be classified as limited-risk, requiring transparency obligations (customers must be informed they are interacting with AI).

- CCPA/CPRA (California) — Automated decision-making disclosures may be required when AI agents make decisions that affect consumers.

- TCPA (US) — Automated outbound communications, including those initiated by AI agents, must comply with consent requirements.

- Industry-specific regulations — Financial services (FINRA, OCC), healthcare (HIPAA), and other regulated industries impose additional requirements on automated customer interactions.

The orchestration layer is the natural enforcement point for these requirements because it controls the routing decision and has visibility into the full interaction lifecycle. Compliance logic embedded in the orchestrator ensures that regulatory requirements are met consistently across all AI agent deployments rather than implemented separately in each agent.

Security of the Orchestration Layer

The orchestration layer itself is a high-value target because it controls routing decisions and has access to customer data flows. Security requirements include:

- Authentication and authorization — All API calls between the orchestrator and AI agent endpoints must be authenticated. Role-based access controls must govern who can modify routing rules, thresholds, and circuit breaker configurations.

- Prompt injection defense — Customer inputs that flow through the orchestration layer to AI agents must be sanitized to prevent prompt injection attacks that could cause the AI agent to bypass its instructions or leak system prompts.

- Rate limiting and abuse detection — The orchestrator must detect and throttle unusual interaction patterns that may indicate automated attacks against the AI agent fleet.
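The rate limiting requirement can be sketched with a per-client sliding window, which is one of several standard throttling techniques (token buckets are another). This example is an assumption about how an orchestrator might do it, not a reference implementation. The class name, the `client_id` key, and the explicit `now` argument are all illustrative choices.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per client within any window_seconds span."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client_id -> timestamps of accepted requests

    def allow(self, client_id: str, now: float) -> bool:
        hits = self._hits[client_id]
        # Discard timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: throttle this request
        hits.append(now)
        return True
```

Passing `now` explicitly keeps the sketch deterministic and easy to test. A live deployment would more likely read a monotonic clock such as `time.monotonic()` and would need shared state if the orchestrator runs as more than one process.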

Forrester's AEGIS (Agentic AI Enterprise Guardrails for Information Security) framework provides a structured approach for CISOs to secure autonomous AI systems across critical domains, and represents an emerging standard for AI agent security governance.[6]

Implementation Considerations

Build vs. Buy

Organizations face a build-versus-buy decision for the orchestration layer. Modern CCaaS platforms increasingly offer native orchestration capabilities, while best-of-breed AI platforms provide their own orchestration tooling. The decision factors include:

- Existing platform capabilities — If the CCaaS platform already provides confidence-based routing, circuit breakers, and WFM integration APIs, building a custom orchestration layer adds complexity without proportional value.

- Multi-vendor AI strategy — Organizations using multiple AI providers (e.g., one for voice, one for chat, one for email) may need a custom orchestration layer that abstracts across providers—a pattern analogous to what Kubernetes did for container orchestration.

- Integration depth — Custom orchestration layers can achieve deeper integration with WFM platforms, enabling the bidirectional data flows described in the integration section.
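The multi-vendor case is essentially an adapter pattern: the orchestrator depends on one small interface, and each provider is wrapped to fit it. The sketch below shows that shape under stated assumptions. `AgentProvider`, `AgentReply`, and both adapters are hypothetical, and the adapters return canned replies where real ones would call a vendor API.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


@dataclass(frozen=True)
class AgentReply:
    text: str
    confidence: float  # provider-reported confidence, assumed normalized to 0..1


class AgentProvider(Protocol):
    """Interface the orchestrator relies on, independent of vendor."""
    def handle(self, message: str) -> AgentReply: ...


class VoiceVendorAdapter:
    """Stand-in for a wrapper around a voice AI vendor."""
    def handle(self, message: str) -> AgentReply:
        return AgentReply(text=f"[voice] {message}", confidence=0.9)


class ChatVendorAdapter:
    """Stand-in for a wrapper around a chat AI vendor."""
    def handle(self, message: str) -> AgentReply:
        return AgentReply(text=f"[chat] {message}", confidence=0.8)


class Orchestrator:
    def __init__(self, providers: Dict[str, AgentProvider]):
        self._providers = providers  # channel name -> adapter

    def dispatch(self, channel: str, message: str) -> AgentReply:
        provider = self._providers.get(channel)
        if provider is None:
            raise KeyError(f"no provider registered for channel {channel!r}")
        return provider.handle(message)
```

With this structure, replacing a vendor means writing a new adapter and changing one registry entry. Routing rules, thresholds, and WFM reporting can stay written against `AgentReply`.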

Multi-Agent Coordination

As organizations deploy specialized AI agents for different tasks—one for billing, one for technical support, one for sales—the orchestration layer must coordinate across agents. Academic research on multi-agent systems has explored several coordination paradigms, including centralized orchestration (where a single controller directs all agents), hierarchical orchestration (where supervisory agents manage groups of specialized agents), and decentralized collaboration (where agents negotiate directly).[7]

In contact center applications, centralized orchestration dominates because it provides the routing control and auditability that operations teams require. The orchestrator maintains the global view of all agent capabilities, current workloads, and health status needed to make optimal routing decisions.
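A centralized controller of this kind can be sketched as a selection function over a registry of agent states. The example assumes a deliberately simple policy: filter by health, skill, and spare capacity, then pick the least-utilized agent. The `AgentStatus` fields and the utilization rule are illustrative, and a production router would weigh more signals.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass
class AgentStatus:
    """Hypothetical registry record the orchestrator keeps for each AI agent."""
    name: str
    skills: FrozenSet[str]
    active_sessions: int
    max_sessions: int
    healthy: bool


def select_agent(agents: List[AgentStatus], skill: str) -> Optional[AgentStatus]:
    """Pick the healthy, qualified agent with the lowest utilization, if any."""
    candidates = [
        a for a in agents
        if a.healthy and skill in a.skills and a.active_sessions < a.max_sessions
    ]
    if not candidates:
        return None  # no AI agent available: caller falls back to the human queue
    return min(candidates, key=lambda a: a.active_sessions / a.max_sessions)
```

Because the decision is made in one place from one view of the fleet, each call can be logged with the full candidate list, which supports the auditability that operations teams ask for.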

The convergence of automation markets around AI-led orchestration has accelerated since 2025. Both Gartner and Forrester identify multi-agent orchestration platforms as critical infrastructure, with Forrester comparing the orchestration layer's importance to what Kubernetes provided for container management.[8]

Organizational Readiness

Deploying AI agent orchestration changes the operational model for contact center management. WFM teams must develop new competencies:

- AI capacity planning — Understanding AI model throughput limits, scaling characteristics, and cost structures.

- Blended workforce modeling — Forecasting and scheduling for a workforce that includes both human and AI agents, with dynamic allocation between pools.

- Real-time AI management — Monitoring AI fleet health alongside human workforce metrics and making coordinated decisions when either population is under stress.

- Cross-functional collaboration — WFM teams must work closely with AI engineering, platform operations, and data science teams to maintain and improve the orchestration layer.

These competencies represent an evolution of the WFM function, building on the practices described in AI in Workforce Management and the foundational concepts in Artificial Intelligence Fundamentals.

Robotic Process Automation is a related case. Many organizations have already deployed RPA for back-office task automation, and the orchestration patterns used for customer-facing AI agents can be extended to coordinate RPA bots alongside human and AI agent workflows, moving toward the unified Intelligent Automation vision.

See Also

- Agentic AI Workforce Planning

- Workforce Planning with AI Agents

- AI Scaffolding Framework

- Three-Pool Architecture

- AI Containment Rate and Its Workforce Implications

- Human AI Supervision and Escalation Frameworks

- Skill-Based Routing

- Next Generation Routing

- WFM Ecosystem Architecture

- WFM Data Infrastructure and Integration Architecture

- Conversational AI

- Speech Analytics

- Robotic Process Automation

- Intelligent Automation

- Real-Time Operations

- Contact Center as a Service

References

- Gartner Predicts Agentic AI Will Autonomously Resolve 80% of Common Customer Service Issues Without Human Intervention by 2029. Gartner. 2025-03-05.

- Releasing AI Features Without Breaking Production: Shadow Mode, Canary Deployments, and A/B Testing for LLMs. TianPan.co. 2026-04-09.

- Canary Deployment. Arize AI. 2026-05-13.

- Resilience Circuit Breakers for Agentic AI. Hannecke, Michael. Medium. 2026-05-13.

- Predictions 2026: AI Agents, Changing Business Models, And Workplace Culture Impact Enterprise Software. Forrester. 2026-05-13.

- Building a Security Strategy for Agentic AI with Forrester. Carahsoft. 2025.

- The Orchestration of Multi-Agent Systems: Architectures, Protocols, and Enterprise Adoption. arXiv preprint. 2025.

- AI Agent Adoption 2026: What the Analysts' Data Shows. Joget. 2026-05-13.