Automation Economics and ROI Decision Frameworks

Automation economics in workforce management refers to the systematic analysis of costs, benefits, and decision logic governing the adoption, operation, and decommissioning of automated systems in contact center and knowledge-worker environments. As automation technologies—from interactive voice response and robotic process automation to large-language-model-based [[Agentic AI Workforce Planning|agentic AI]]—have proliferated, the analytical frameworks for evaluating their economic merit have become central to workforce cost modeling and capacity planning.

The core challenge in automation economics is that vendor and analyst materials routinely present simplified ROI calculations that capture primary cost reductions while omitting secondary cost categories, overstating containment assumptions, and ignoring the new work categories that automation creates. A rigorous automation economics framework requires full cost accounting, honest containment modeling, sensitivity testing, and a lifecycle perspective that includes the decommissioning problem.

Task Decomposition for Automation Candidacy

Evaluating automation candidacy at the job or role level produces coarse and often misleading results. Frey and Osborne's foundational analysis of automation probability by occupation demonstrates that occupations are bundles of tasks with heterogeneous automation susceptibility—a finding that applies directly to WFM role analysis.[1]

Task decomposition methodology for WFM contexts proceeds through four stages. First, enumerate the task inventory for each role: what activities does the role perform, at what frequency, and with what decision authority? Second, score each task on automation feasibility dimensions: data availability (is the information required for this task structured and accessible?), routine regularity (does this task follow predictable rules or require judgment under uncertainty?), exception rate (what fraction of instances require human judgment to resolve?), and stakes (what is the cost of an automation error?). Third, estimate handle-time contribution of automatable tasks to generate a theoretical maximum labor-hour reduction. Fourth, apply a realization discount—the fraction of theoretical savings achievable given handoff costs, supervision requirements, and residual human touch—to produce an adjusted savings estimate.
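The four stages can be sketched in a few lines of Python. This is a minimal illustration, not a standard scoring method: the 0 to 1 scales, the unweighted mean used as a composite score, the 0.7 feasibility threshold, the 0.6 realization discount, and the sample tasks are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    weekly_hours: float        # handle-time contribution of the task
    data_availability: float   # 0..1, higher = structured, accessible data
    routine_regularity: float  # 0..1, higher = predictable rules
    exception_rate: float      # 0..1, share of instances needing human judgment
    stakes: float              # 0..1, higher = costlier automation errors


def feasibility(task: Task) -> float:
    """Hypothetical composite: unweighted mean, with exception rate and stakes inverted."""
    return (
        task.data_availability
        + task.routine_regularity
        + (1 - task.exception_rate)
        + (1 - task.stakes)
    ) / 4


def savings_estimate(tasks, threshold=0.7, realization_discount=0.6):
    """Return (theoretical_hours, adjusted_hours) for tasks at or above the threshold."""
    theoretical = sum(t.weekly_hours for t in tasks if feasibility(t) >= threshold)
    # The realization discount is the fraction of theoretical savings actually achievable.
    return theoretical, theoretical * realization_discount


# Illustrative task inventory for a single role (made-up scores and hours).
tasks = [
    Task("report extraction", 8, 0.9, 0.9, 0.1, 0.2),
    Task("adherence monitoring", 10, 0.8, 0.9, 0.2, 0.3),
    Task("escalation decisions", 6, 0.4, 0.3, 0.6, 0.8),
]
theoretical, adjusted = savings_estimate(tasks)
print(f"theoretical: {theoretical:.1f} h/week, adjusted: {adjusted:.1f} h/week")
```

In practice the dimension weights and the threshold would be calibrated per organization; the point of the sketch is that the realization discount is applied after the theoretical maximum is computed, not folded into the feasibility score.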

Tasks scoring high on automation feasibility in WFM contexts include: routine report extraction and formatting, schedule adherence monitoring against predefined thresholds, standard intraday adjustment notifications, basic IVR-eligible contact routing, and repetitive data reconciliation between systems. Tasks with low automation feasibility include: exception handling requiring contextual judgment, escalation decisions with ambiguous authorization, novel contact types without training data, and stakeholder communications requiring relationship knowledge. The WFM analyst role typically contains 40–60% of task volume in the automatable range, with significant variance by specialization and organizational context.

The True Cost Stack of Automation

Vendor licensing fees are typically the smallest component of total automation cost. A rigorous cost stack includes:

Development and integration costs. Building or configuring automation to work with specific WFM platforms, telephony systems, CRM data, and workforce data requires engineering effort that vendor implementation estimates routinely understate. Integration complexity scales with data model heterogeneity; organizations with fragmented data architectures face higher integration costs that persist as maintenance burden.

Testing and validation costs. Automated systems require more rigorous pre-deployment testing than manual processes, because failure modes are systematic rather than individual. A human analyst making an error affects one scheduling decision; an automated system with a logic error affects thousands. User acceptance testing, parallel operation periods, and failure mode analysis consume analyst and engineering time that must be accounted for.

Maintenance and monitoring costs. Automation models degrade over time as the operational environment changes. Forecasting models trained on pre-pandemic contact patterns require retraining as demand patterns shift. Routing logic built for a two-channel contact center requires redesign when a new channel is added. Maintenance costs are often treated as zero in initial ROI models and discovered as significant ongoing expenditures in years two and three.

Failure handling costs. Every automated system has failure modes, and failure handling must be designed, staffed, and operated. When an agentic AI session fails mid-contact, the escalated contact arrives at a human agent carrying unresolved context and elevated customer frustration—producing an escalated AHT that may be 30–50% higher than a standard contact. The staffing cost of handling automation failures is a real cost that belongs in the denominator.

Retraining and model refresh costs. Large language model-based systems require periodic fine-tuning as contact types, product sets, and policies evolve. This retraining requires curated training data (labor cost), engineering execution (compute and labor cost), and validation (analyst time). For organizations using [[WFM Technology Selection and Vendor Evaluation|third-party AI platforms]], model refresh may be bundled in vendor pricing or billed separately.

Supervision overhead costs. Automated systems operating in workforce management contexts require human oversight—not occasional review but systematic quality assurance. Brynjolfsson and McAfee's research on automation productivity emphasizes that supervision overhead is a structural feature of human-machine collaboration, not a transitional cost that disappears after implementation.[2] The supervisor role created by automation is a new permanent cost category.

Willcocks and Lacity's study of robotic process automation deployments found that total cost of ownership over a three-year period was typically 2.5–4× the initial licensing cost, with the ratio varying by integration complexity and organizational maintenance capability.[3]
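As a rough illustration of how the cost stack accumulates, the sketch below totals one-time and recurring categories over three years and compares the result with the licensing fee. All figures are invented for the example, licensing is assumed to be a one-time fee, and costs are undiscounted.

```python
def three_year_tco(one_time: dict, annual: dict, years: int = 3) -> float:
    """One-time costs plus recurring costs over the horizon (undiscounted)."""
    return sum(one_time.values()) + years * sum(annual.values())


# Illustrative figures only; licensing is assumed to be a one-time fee here.
one_time = {
    "licensing": 200_000,
    "development_integration": 120_000,
    "testing_validation": 40_000,
}
annual = {
    "maintenance_monitoring": 30_000,
    "failure_handling": 25_000,
    "model_refresh": 15_000,
    "supervision": 20_000,
}

tco = three_year_tco(one_time, annual)
ratio = tco / one_time["licensing"]
print(f"3-year TCO: {tco:,.0f} ({ratio:.2f}x licensing)")
```

With these made-up inputs the multiple falls inside the 2.5 to 4 range cited above, but the ratio is highly sensitive to how licensing is billed and to the recurring categories an organization chooses to track.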

Build vs Buy vs Hybrid Decision Frameworks

The build/buy/hybrid decision for workforce automation operates across three dimensions: capability specificity, organizational data advantage, and strategic control requirements.

Buy (platform AI): Appropriate when the automation use case is well-defined, vendor solutions are mature, and the organization has no proprietary data advantage. Standard IVR optimization, off-the-shelf chatbot deployment for FAQs, and scheduling automation using established optimization engines typically fall in this category. Buy decisions minimize upfront development cost but create vendor dependency and limit customization headroom.

Build (custom development): Appropriate when the automation use case requires proprietary data unavailable to vendors, when competitive differentiation depends on automation performance, or when existing vendor solutions are inadequate for the operational context. Organizations with large, clean, longitudinal contact and workforce datasets may have genuine data advantages that justify custom model development. Build decisions require sustained engineering capability and increase time to deployment.

Hybrid: The most common architecture in mature implementations uses vendor platforms for core automation infrastructure with custom development for proprietary optimization layers. A human-AI blended staffing model might use a vendor LLM platform for contact handling with custom routing logic, custom escalation triggers, and custom containment monitoring developed internally. This architecture preserves vendor-provided model maintenance while retaining control over decision logic.

The build/buy/hybrid decision should be revisited at each major capability inflection point, not fixed at initial implementation. Vendor platforms that were inadequate for agentic AI use cases in 2023 may be adequate by 2025; organizations that built custom solutions at high cost may face stranded investment when vendor solutions mature.

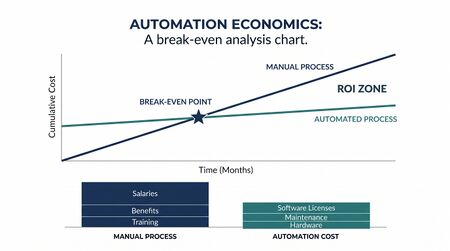

ROI Modeling: The Full Calculation

The canonical automation ROI formula—(labor savings from containment) / (automation cost)—omits enough cost and benefit categories to be systematically misleading. A complete ROI model requires:

Benefit side:

- Avoided headcount cost = (contained volume × agent handle time avoided per contact ÷ productive hours per agent FTE) × cost per FTE

- Quality improvement value = (reduction in error rate) × (cost per error, including rework and customer impact)

- Schedule flexibility value = reduction in overtime or on-call premium required to handle volume variance

Cost side:

- Total cost of ownership (development + integration + testing + year 1 maintenance)

- Ongoing annual cost (licensing + maintenance + supervision FTE + model refresh)

- Transition cost (training + parallel operation + productivity dip during adoption)

- Failure handling cost (escalated AHT premium × escalated volume × agent cost)
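The benefit and cost categories above can be combined into a small model. The sketch below is one possible arrangement under stated assumptions: avoided headcount cost is read as FTEs avoided (hours avoided divided by productive hours per FTE) multiplied by cost per FTE, year 1 maintenance is assumed to sit in the ongoing annual figure to avoid double counting, cash flows are undiscounted, and every number is illustrative. The class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Benefits:
    contained_volume: float           # contacts per year resolved without an agent
    hours_avoided_per_contact: float  # agent handle time avoided per contained contact
    productive_hours_per_fte: float   # annual productive hours per agent FTE
    cost_per_fte: float               # fully loaded annual cost per agent FTE
    quality_value: float = 0.0        # error-rate reduction x cost per error
    flexibility_value: float = 0.0    # avoided overtime or on-call premium

    def avoided_headcount_cost(self) -> float:
        fte_avoided = (self.contained_volume * self.hours_avoided_per_contact
                       / self.productive_hours_per_fte)
        return fte_avoided * self.cost_per_fte

    def annual_total(self) -> float:
        return self.avoided_headcount_cost() + self.quality_value + self.flexibility_value


@dataclass(frozen=True)
class Costs:
    upfront: float                       # development + integration + testing
    ongoing_annual: float                # licensing + maintenance + supervision + refresh
    transition: float                    # training + parallel operation + productivity dip
    escalated_volume: float              # contacts per year escalated from automation
    premium_hours_per_escalation: float  # extra handle time per escalated contact
    agent_cost_per_hour: float

    def failure_handling_annual(self) -> float:
        return (self.escalated_volume * self.premium_hours_per_escalation
                * self.agent_cost_per_hour)

    def total(self, years: int) -> float:
        recurring = self.ongoing_annual + self.failure_handling_annual()
        return self.upfront + self.transition + years * recurring


def roi(benefits: Benefits, costs: Costs, years: int = 3) -> float:
    """Net benefit over the horizon divided by total cost over the horizon."""
    total_cost = costs.total(years)
    return (years * benefits.annual_total() - total_cost) / total_cost


benefits = Benefits(300_000, 0.1, 1_500, 60_000, quality_value=50_000, flexibility_value=30_000)
costs = Costs(600_000, 400_000, 150_000, 100_000, 0.03, 40)
print(f"3-year ROI: {roi(benefits, costs):.1%}")
```

Keeping failure handling as its own recurring term makes it visible in the result instead of being netted silently against labor savings.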

The containment rate assumption is the single highest-leverage variable in the model. At an assumed 70% containment, a 10-percentage-point reduction to 60% containment does not reduce savings by 14%—it reduces the effective labor savings by a larger margin because the residual escalated contacts carry elevated complexity and handling time. The mathematics of containment-adjusted savings are non-linear at the margins where most operational systems operate.
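A short calculation makes the gap concrete. The model below is a deliberate simplification: it assumes every uncontained contact reaches an agent with a fixed AHT premium (30% here, the low end of the escalation premium mentioned earlier) and measures savings as a share of baseline agent hours. The function name and parameters are hypothetical.

```python
def savings_fraction(containment: float, escalation_premium: float) -> float:
    """Share of baseline agent hours saved when every uncontained contact
    reaches an agent with an AHT premium (a simplifying assumption)."""
    return 1 - (1 - containment) * (1 + escalation_premium)


# Naive view: savings scale with containment, so 70% -> 60% loses 10/70 of savings.
naive_loss = 1 - 0.60 / 0.70

# Premium-adjusted view with a 30% escalation premium.
adjusted_loss = 1 - savings_fraction(0.60, 0.30) / savings_fraction(0.70, 0.30)

print(f"naive loss in savings:    {naive_loss:.1%}")
print(f"adjusted loss in savings: {adjusted_loss:.1%}")
```

Even with a constant premium, the proportional loss in savings exceeds the naive figure, because the premium applies to a growing pool of escalated contacts. A premium that itself rises as containment falls would widen the gap further.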

Davenport and Ronanki's analysis of AI business cases across industries found that inflated containment assumptions were the most common cause of ROI shortfall, with realized containment averaging 60–75% of projected containment in the first year of deployment.[4]

Payback Period Analysis with Sensitivity Testing

Payback period—the time required for cumulative net benefits to equal total investment—is the standard summary metric for automation investment decisions. A complete payback analysis includes:

Base case: Expected containment rate, expected AHT reduction, expected full cost stack, expected adoption timeline.

Sensitivity analysis on containment: What is the payback period if containment is 10 percentage points below expectation? 20 points below? At what containment rate does the investment become NPV-negative over a three-year horizon?

Sensitivity analysis on AHT for escalated contacts: Escalated contacts from AI failures typically carry 20–50% higher AHT due to customer frustration and lost context. What is the payback period if this premium is at the high end of the range?

Sensitivity analysis on adoption timeline: If full deployment takes six months longer than planned, what is the payback period impact? Integration delays are common; sensitivity testing should include a delay scenario.
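The base case and the three sensitivity questions can be run through a single payback function. The sketch below assumes a simple undiscounted model in which every uncontained contact carries the escalation premium, benefits and run costs start only after the deployment delay, and all monetary inputs are invented for illustration.

```python
def savings_fraction(containment, escalation_premium):
    """Share of baseline agent cost saved (every escalation carries the premium)."""
    return 1 - (1 - containment) * (1 + escalation_premium)


def payback_months(investment, baseline_monthly_agent_cost, monthly_run_cost,
                   containment, escalation_premium, delay_months=0):
    """Undiscounted payback in months, or None if monthly net benefit is not positive."""
    monthly_net = (baseline_monthly_agent_cost
                   * savings_fraction(containment, escalation_premium)
                   - monthly_run_cost)
    if monthly_net <= 0:
        return None
    return delay_months + investment / monthly_net


# Illustrative base case; each scenario overrides one assumption.
BASE = dict(investment=900_000, baseline_monthly_agent_cost=200_000,
            monthly_run_cost=60_000, containment=0.70, escalation_premium=0.30)

scenarios = {
    "base case": {},
    "containment -10 pts": {"containment": 0.60},
    "containment -20 pts": {"containment": 0.50},
    "premium at high end": {"escalation_premium": 0.50},
    "six-month delay": {"delay_months": 6},
}

results = {name: payback_months(**{**BASE, **override})
           for name, override in scenarios.items()}

for name, months in results.items():
    label = "never" if months is None else f"{months:.1f} months"
    print(f"{name:22s} {label}")
```

Changing one variable at a time shows which assumption the payback period is most sensitive to; with these inputs, containment dominates the escalation premium and the delay scenario. A discounted (NPV) version would need a discount rate and monthly cash-flow timing, which are omitted here.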

Monte Carlo simulation: For investments above a materiality threshold, replacing point estimates with probability distributions for each key variable—and running 10,000 iterations to produce a distribution of payback periods—provides decision-makers with an honest view of outcome uncertainty rather than a single optimistic number.
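A minimal Monte Carlo sketch using only the Python standard library is shown below. The triangular distributions, their ranges, the cost inputs, and the simple undiscounted payback model are all assumptions made for illustration; a real analysis would fit distributions to the organization's own data.

```python
import math
import random


def simulate_payback(n=10_000, seed=42, investment=900_000,
                     baseline_monthly_agent_cost=200_000, monthly_run_cost=60_000):
    """Draw n payback periods in months; math.inf marks draws that never pay back."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    paybacks = []
    for _ in range(n):
        # triangular(low, high, mode); all ranges are illustrative assumptions.
        containment = rng.triangular(0.45, 0.80, 0.70)
        premium = rng.triangular(0.20, 0.50, 0.30)
        delay = rng.triangular(0, 9, 2)
        saved = 1 - (1 - containment) * (1 + premium)
        monthly_net = baseline_monthly_agent_cost * saved - monthly_run_cost
        paybacks.append(delay + investment / monthly_net if monthly_net > 0 else math.inf)
    return sorted(paybacks)


def percentile(sorted_values, q):
    """Simple rank-based percentile on an already sorted list."""
    return sorted_values[int(q * (len(sorted_values) - 1))]


paybacks = simulate_payback()
p50, p90 = percentile(paybacks, 0.5), percentile(paybacks, 0.9)
share_over_36 = sum(p > 36 for p in paybacks) / len(paybacks)
print(f"median {p50:.1f} months, P90 {p90:.1f} months, "
      f"{share_over_36:.1%} of draws exceed 36 months")
```

Reporting the median alongside an upper percentile and the share of draws that miss a fixed horizon gives reviewers the shape of the distribution instead of a single point estimate.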

The payback analysis should be organizationally independent of the business case's sponsors, because the team responsible for driving adoption has an incentive to present optimistic scenarios. An independent governance function reviewing automation economics provides a check on systematic optimism bias.

The Automation Paradox: New Work from Automation

The automation paradox refers to the empirical observation that automation creates new work categories even as it eliminates existing ones, often with different skill requirements and organizational placement. In WFM contexts, the new work categories include:

Automation quality assurance. Someone must review AI-generated schedule recommendations, containment event logs, and real-time adjustment decisions for accuracy and appropriateness. This role did not exist before automation; it is created by automation.

Exception handling specialization. Automated systems handle the routine; they escalate the complex. The human workload that remains after automation is systematically more complex, more ambiguous, and more cognitively demanding than the pre-automation average. Staffing models that assume post-automation contacts have the same AHT as pre-automation contacts are wrong by design.

Prompt and configuration engineering. LLM-based automation systems require ongoing prompt tuning, constraint configuration, and behavioral adjustment. This is skilled technical work that creates ongoing labor demand.

Supervisory and governance overhead. Governance frameworks for automated workforce decisions require human oversight roles that are new organizational creations.

McKinsey's 2023 analysis of automation adoption across service industries found that the net labor reduction in fully deployed automation programs was consistently smaller than projected—not because automation failed technically, but because new work categories absorbed a larger fraction of released capacity than anticipated.[5]

Portfolio Approach to Automation Investment

A portfolio framework for automation investment allocates resources across three categories distinguished by time horizon and uncertainty:

Quick wins (12–18 month payback): High-confidence, well-defined automation use cases where vendor solutions are mature and integration complexity is manageable. IVR containment improvement, automated adherence alerting, and standard reporting automation typically qualify. Quick wins generate cash flow and organizational confidence that fund and justify larger investments.

Strategic bets (24–36 month horizon, higher uncertainty): Use cases where the technology is capable but organizational readiness, integration complexity, or containment uncertainty creates material execution risk. Agentic AI for complex contact handling, AI-driven schedule optimization with preference learning, and predictive attrition-based staffing adjustments are current examples. Strategic bets require larger investment and tolerance for extended payback periods.

Capability builds (enabling infrastructure): Investments in data infrastructure, integration architecture, and analytical capability that do not themselves generate direct ROI but determine the ceiling on returns from quick wins and strategic bets. A unified data model connecting HRIS, WFM platform, and quality system data is a capability build; it does not contain contacts but it enables every downstream analytical application.

Portfolio balance requires governance that resists the pressure to defund capability builds when quick wins underperform. The organizations that generate the highest long-term automation returns are those that made data infrastructure investments early, even when those investments had no direct payback calculation.

The Sunset Problem

Decommissioning automation that underperforms its business case is organizationally difficult and analytically underspecified in most ROI frameworks. The sunset problem has three components.

Sunk cost bias. Organizations that have invested significantly in automation infrastructure resist recognizing underperformance as a decommissioning trigger. The relevant comparison is not historical cost but marginal benefit versus marginal cost going forward.

Decommissioning cost. Removing automation is not free. Systems that have been integrated into operational workflows, that agents and supervisors have been trained to work around, and that have generated technical debt in adjacent systems carry real decommissioning costs. These should be estimated at investment time and included in the full lifecycle cost.

Performance threshold definition. Decommissioning should be triggered by explicit, pre-defined performance thresholds, not by post-hoc judgment. An automation program should have documented minimum containment rates, maximum escalated AHT premiums, and maximum failure rates that, if breached for a defined period, automatically trigger a decommission review. Pre-defining these thresholds counters the organizational dynamics that otherwise allow underperforming automation to keep running indefinitely.
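Pre-defined triggers of this kind are straightforward to encode. The sketch below is a hypothetical illustration: the threshold values, the choice of monthly periods, and the rule that a review fires after a set number of consecutive breaching periods are assumptions, as are all class and function names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SunsetThresholds:
    min_containment: float
    max_escalated_aht_premium: float
    max_failure_rate: float
    breach_periods: int  # consecutive breaching periods that trigger a review


@dataclass(frozen=True)
class PeriodMetrics:
    containment: float
    escalated_aht_premium: float
    failure_rate: float


def breached(m: PeriodMetrics, t: SunsetThresholds) -> bool:
    """True if any single threshold is violated in this period."""
    return (m.containment < t.min_containment
            or m.escalated_aht_premium > t.max_escalated_aht_premium
            or m.failure_rate > t.max_failure_rate)


def review_triggered(history, t: SunsetThresholds) -> bool:
    """True once the thresholds have been breached for breach_periods periods in a row."""
    streak = 0
    for metrics in history:
        streak = streak + 1 if breached(metrics, t) else 0
        if streak >= t.breach_periods:
            return True
    return False


# Illustrative thresholds and four months of made-up metrics.
thresholds = SunsetThresholds(0.55, 0.40, 0.08, breach_periods=3)
history = [
    PeriodMetrics(0.62, 0.30, 0.05),  # healthy
    PeriodMetrics(0.54, 0.35, 0.06),  # containment breach
    PeriodMetrics(0.52, 0.42, 0.07),  # containment and premium breach
    PeriodMetrics(0.50, 0.38, 0.09),  # containment and failure-rate breach
]
print("decommission review triggered:", review_triggered(history, thresholds))
```

The function only flags that a review is due; the decision itself still rests on the forward-looking comparison of marginal benefit and marginal cost described above.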

The sunset decision connects directly to the WFM Assessment process, which should include periodic re-evaluation of deployed automation against original business cases.

Maturity Model Considerations

Within the WFM Labs Maturity Model, automation economics capability develops in stages. Organizations at lower maturity levels make automation decisions based on vendor-provided ROI calculators, which systematically favor acquisition. Mid-maturity organizations develop internal ROI modeling capability but typically focus on the benefit side, leaving cost stacks underspecified. High-maturity organizations run full lifecycle cost models, include sensitivity testing as a standard component of investment proposals, maintain automation performance dashboards that compare realized versus projected containment and AHT, and have defined decommissioning triggers in their automation governance framework.

The WFM Center of Excellence is the appropriate organizational home for automation economics methodology, providing consistency across business cases and independence from vendor relationships. CoEs that include an analytics or finance liaison function are better positioned to produce credible automation economics than those that operate with WFM-only analytical resources.

Related Concepts

- Workforce Cost Modeling

- Capacity Planning Methods

- AI Containment Rate and Its Workforce Implications

- Agentic AI Workforce Planning

- Human AI Blended Staffing Models

- Three-Pool Architecture

- WFM Technology Selection and Vendor Evaluation

- Workforce Management Governance and Change Management

- WFM Center of Excellence CoE Design

- WFM Assessment

- Workload Based vs Headcount Based Budgeting

- Reporting and Analytics Framework

- WFM Labs Maturity Model

References

1. Frey, C. B., & Osborne, M. A. (2017). The future of employment: How susceptible are jobs to computerisation? Technological Forecasting and Social Change, 114, 254–280.

2. Brynjolfsson, E., & McAfee, A. (2017). The business of artificial intelligence. Harvard Business Review, July–August 2017.

3. Willcocks, L. P., & Lacity, M. (2016). Service Automation: Robots and the Future of Work. Steve Brookes Publishing.

4. Davenport, T. H., & Ronanki, R. (2018). Artificial intelligence for the real world. Harvard Business Review, January–February 2018.

5. McKinsey & Company. (2023). The economic potential of generative AI: The next productivity frontier. McKinsey Global Institute.