WFM Technology Selection and Vendor Evaluation

WFM technology selection and vendor evaluation is the structured process by which an organization assesses, compares, and selects workforce management software platforms against defined operational requirements, integration constraints, and strategic objectives. The selection process spans requirements definition, market analysis, vendor solicitation (via request for proposal), scoring, negotiation, and implementation planning. Gartner's Magic Quadrant for Workforce Engagement Management provides an annual analyst assessment of the competitive WFM/WEM vendor landscape, evaluating vendors on completeness of vision and ability to execute.[1] The Forrester Wave for Workforce Optimization supplements this with detailed capability scoring across defined evaluation criteria.[2] The [[Society of Workforce Planning Professionals]] (SWPP) publishes practitioner guidance on technology selection that reflects operational priorities not always captured in analyst assessments.[3]

The Case for Structured Selection

WFM platform selection is a consequential, high-durability decision. Implementation cycles typically span 6–18 months; contract terms commonly extend 3–5 years; and the platform becomes deeply embedded in forecasting, scheduling, payroll integration, and real-time operations workflows. The cost of a poor selection (mismatched capabilities, integration failure, low adoption) persists across the full contract term and includes unrecovered implementation spend, productivity loss during transition, and the organizational disruption of a replacement cycle.

Structured selection processes reduce selection error by separating requirements from preferences, applying consistent evaluation criteria across vendors, and exposing gaps between vendor marketing claims and validated capabilities. The WFM Labs Maturity Model provides a useful frame: organizations should select platforms that serve current maturity needs while providing a credible path to target maturity. They should not select platforms built for two or three maturity levels above their current state, because the organization will lack the data infrastructure and process maturity to use the advanced features.

Requirements Definition

Functional Requirements

Functional requirements describe the capabilities the platform must support. Standard WFM functional requirement categories include:

- Forecasting — volume, AHT, multi-channel, multi-skill, method flexibility (statistical, ML), forecast accuracy tracking

- Scheduling — shift generation, rule compliance, preference bidding, multi-skill optimization, schedule publishing

- Schedule optimization — optimization algorithm sophistication, constraint handling, objective function configurability

- Intraday management — adherence monitoring, queue visibility, schedule adjustment tools

- Reporting and analytics — standard reports, custom reporting, self-service analytics

- Agent self-service — mobile apps, schedule viewing, time-off requests, shift swaps

- Administration — user management, role-based access, configuration flexibility, audit logging

Non-Functional Requirements

Non-functional requirements specify performance, reliability, and architectural constraints:

- Scalability — maximum agent population, maximum concurrent users, performance at peak scheduling cycles

- Availability — uptime SLA, maintenance window policy, disaster recovery RTO/RPO

- Security — data encryption standards, authentication requirements, compliance certifications (SOC 2, ISO 27001, regional data residency)

- Performance — schedule generation time for defined agent population and horizon; forecast calculation time
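An uptime SLA is easier to compare across vendor responses once the percentage is translated into the downtime it actually permits. The following is a minimal sketch. It assumes a 30-day month and a 365-day year and ignores excluded maintenance windows, although real contracts often define both differently.

```python
# Minimal sketch: translate an uptime SLA into the downtime it permits.
# Assumptions: a 30-day month and a 365-day year; no excluded maintenance windows.

MINUTES_PER_DAY = 24 * 60


def allowed_downtime_minutes(uptime_pct: float, period_days: float) -> float:
    """Return the maximum downtime, in minutes, an uptime SLA permits over a period."""
    if not 0 < uptime_pct <= 100:
        raise ValueError("uptime_pct must be in the range (0, 100]")
    return (1 - uptime_pct / 100) * period_days * MINUTES_PER_DAY


for sla in (99.5, 99.9, 99.95, 99.99):
    per_month = allowed_downtime_minutes(sla, 30)
    per_year_hours = allowed_downtime_minutes(sla, 365) / 60
    print(f"{sla}% uptime: {per_month:.1f} min/month, {per_year_hours:.2f} h/year")
```

At 99.9% uptime, for example, the allowance is about 43 minutes per month, or roughly 8.8 hours per year. That is worth checking against peak scheduling cycles and intraday operations.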

Integration Requirements

Integration requirements are often underweighted in selection processes and are a common cause of implementation failure. Key integration requirements include:

| Integration Type | Typical Systems | Requirement Specifics |
|---|---|---|
| ACD / telephony | Genesys, Avaya, Amazon Connect, Five9, Cisco | Real-time agent state feed (interval, latency); historical interval data; schedule pushback |
| HRIS / HR system | Workday, SAP HCM, ADP, Oracle HCM | Headcount sync, hire/term events, leave data, skills |
| Payroll / time & attendance | ADP, Kronos/UKG, Ceridian | Actual hours, exception types, adherence data export |
| CRM | Salesforce, ServiceNow, Microsoft Dynamics | Demand signals, contact type data, customer segment routing |
| Quality management | NICE, Verint, Calabrio | Evaluation scores, coaching data, interaction metadata |
| AI / conversational AI platform | Google CCAI, Amazon Lex, Nuance | Containment rate data, escalation feeds |

Integration requirements should specify: protocol (API, flat file, streaming), frequency, data format, authentication method, and the specific data fields required in each direction.
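One way to make sure none of these attributes is left implicit is to capture each integration as a structured record before the RFP is issued. The following is a minimal Python sketch, not a standard schema. The class names, the `missing_attributes` helper, and the example ACD field names are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Protocol(Enum):
    API = "api"
    FLAT_FILE = "flat_file"
    STREAMING = "streaming"


class Direction(Enum):
    INBOUND = "inbound"    # data flowing into the WFM platform
    OUTBOUND = "outbound"  # data flowing out of the WFM platform


@dataclass
class IntegrationRequirement:
    """Hypothetical record for one integration in an RFP requirements register."""
    source_system: str
    protocol: Protocol
    frequency: str      # e.g. "real-time", "15-minute interval", "daily batch"
    data_format: str    # e.g. "JSON", "CSV"
    auth_method: str    # e.g. "OAuth 2.0", "SFTP key"
    fields: dict[Direction, list[str]] = field(default_factory=dict)

    def missing_attributes(self) -> list[str]:
        """List specification attributes left blank, so gaps surface before the RFP goes out."""
        gaps = [name for name in ("source_system", "frequency", "data_format", "auth_method")
                if not getattr(self, name).strip()]
        if not any(self.fields.values()):
            gaps.append("fields")
        return gaps


acd_state_feed = IntegrationRequirement(
    source_system="ACD",
    protocol=Protocol.STREAMING,
    frequency="real-time",
    data_format="JSON",
    auth_method="",  # not yet agreed with the telephony team
    fields={Direction.INBOUND: ["agent_id", "state", "state_start_utc"]},
)
print(acd_state_feed.missing_attributes())  # ['auth_method']
```

Recording the fields per direction keeps outbound flows visible, such as schedule pushback to the ACD. Those flows are easy to omit when a requirement is written only from the WFM platform's point of view.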

Maturity-Adjusted Requirements

Requirements should reflect the organization's current maturity and target maturity, not a generic wish list. A Level 2 organization selecting a platform for the next three years needs robust foundational forecasting and scheduling; advanced ML forecasting and RL-based optimization are not priority requirements because the organization lacks the data infrastructure and analytical capacity to use them effectively. Selecting for maturity level is addressed in the WFM Labs Maturity Model and Capacity Planning Methods articles.

Market Analysis and Vendor Landscape

Analyst Framework

The primary analyst frameworks for WFM/WEM vendor assessment are:

- Gartner Magic Quadrant for Workforce Engagement Management — positions vendors on Ability to Execute and Completeness of Vision. Leaders quadrant vendors (typically NICE, Verint, Calabrio, Genesys, Alvaria, and UKG as of recent editions) have established track records and broad capability sets; Challengers have execution strength with narrower vision; Visionaries show innovation with less proven execution; Niche Players serve specific segments.

- Forrester Wave for Workforce Optimization — provides detailed capability scoring across defined criteria, often revealing differentiation within the Leaders tier that the MQ's two-axis view obscures.

Analyst reports are valuable as market maps and directional capability guides, but should not be used as primary selection criteria. Analyst assessments reflect vendor capability in general market terms; an organization's specific requirements — ACD integration, agent population size, regulatory environment — may not align with the dimensions on which analysts differentiate vendors.

Vendor Categories

The WFM vendor market spans several architectural categories:

- Integrated WEM suites — platforms that combine WFM, quality management, coaching, and analytics in a single product (NICE Workforce Management, Verint Workforce Management, Calabrio ONE). Reduce integration complexity; may include capabilities that overlap with existing point solutions.

- Standalone WFM platforms — dedicated workforce management without integrated quality or coaching (Assembled, Alvaria WFM, injixo). Often stronger at core WFM; require integration with separate quality and analytics tools.

- CCaaS-embedded WFM — WFM capability embedded within Contact Center as a Service platforms (Genesys Cloud WFM, Amazon Connect WFM, Five9 WFM). Tightest ACD integration; may be less capable than standalone WFM at advanced forecasting or scheduling optimization.

- Niche and emerging platforms — newer entrants with focused capabilities, often aimed at a specific industry or channel mix (e.g., UJET WFM for digital-first contact centers).

RFP Design and Scoring

Request for Proposal Structure

A structured RFP for WFM platform selection should include:

- Organizational context — contact center size, channels, ACD platform, current WFM solution, maturity level, strategic direction

- Functional requirements — capability-by-capability requirement statements, rated by priority (must-have, should-have, nice-to-have)

- Integration requirements — integration specifications for all required system connections

- Non-functional requirements — scalability, performance, availability, security

- Pricing request — per-seat, module, and implementation cost structure; year-over-year cost trajectory

- Implementation requirements — implementation methodology, typical timeline, training approach, support model

- Reference requirements — comparable customer references (similar ACD, similar size, similar complexity)
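Asking for year-over-year cost trajectory matters because vendors structure pricing differently. The following is a minimal sketch of a 3-year total cost of ownership comparison. Every figure in it is hypothetical. It assumes that implementation is a one-time up-front cost, that seat and module fees recur annually and escalate at a fixed compounding rate, and that no discounting is applied.

```python
# Minimal sketch of a 3-year total-cost-of-ownership comparison.
# Assumptions (hypothetical, not any vendor's pricing): implementation is paid once
# up front; seat and module fees recur annually and escalate at a fixed compounding
# rate; costs are not discounted.

from dataclasses import dataclass


@dataclass
class PricingQuote:
    seats: int
    per_seat_monthly: float
    module_annual: float
    implementation_one_time: float
    annual_escalation: float  # e.g. 0.05 for a 5% year-over-year increase

    def tco(self, years: int = 3) -> float:
        """Implementation cost plus escalating recurring fees over the term."""
        recurring_year_one = self.seats * self.per_seat_monthly * 12 + self.module_annual
        recurring_total = sum(
            recurring_year_one * (1 + self.annual_escalation) ** year
            for year in range(years)
        )
        return self.implementation_one_time + recurring_total


quotes = {
    "Vendor A": PricingQuote(500, 20.0, 30_000, 150_000, 0.05),
    "Vendor B": PricingQuote(500, 18.0, 60_000, 90_000, 0.07),
}

for name, quote in quotes.items():
    print(f"{name}: {quote.tco():,.0f}")
# Vendor A: 622,875
# Vendor B: 630,103
```

With these made-up figures, Vendor B's lower implementation fee is outweighed over three years by its higher module cost and steeper escalation. This is the kind of trajectory the pricing request is meant to expose.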

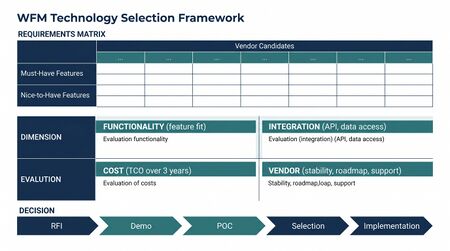

Scoring Framework

A structured scoring rubric weights requirements by priority and evaluates vendor responses against each requirement:

| Category | Suggested Weight | Rationale |
|---|---|---|
| Core WFM functionality (forecasting, scheduling, intraday) | 30% | Primary purpose of platform |
| Integration capability | 25% | Frequent failure point; high remediation cost |
| Ease of use and agent self-service | 15% | Adoption driver; operational efficiency |
| Analytics and reporting | 10% | Enabler of maturity advancement |
| Vendor stability and support | 10% | Contract duration risk |
| Total cost of ownership (3-year) | 10% | Budget constraint |

Weights should be adjusted based on organizational priorities — an organization with complex integration requirements may weight integration at 35%; an organization with high agent self-service adoption goals may weight UX more heavily.
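The rubric reduces to a weighted sum, and adjusting one weight means rescaling the others so the total stays at 100%. The following is a minimal sketch. It assumes each category is scored on a 1–5 scale. The category keys, vendor scores, and the proportional-rescaling rule are illustrative choices, not a prescribed method.

```python
# Minimal sketch of the weighted scoring rubric.
# Assumptions: each category is scored 1-5 by the evaluation team; vendor scores
# are hypothetical; when one weight changes, the others are rescaled proportionally.

DEFAULT_WEIGHTS = {
    "core_wfm": 0.30,
    "integration": 0.25,
    "ease_of_use": 0.15,
    "analytics": 0.10,
    "vendor_stability": 0.10,
    "tco": 0.10,
}


def adjust_weight(weights: dict[str, float], category: str, new_weight: float) -> dict[str, float]:
    """Set one category's weight and rescale the others so the total stays 1.0."""
    if category not in weights or not 0 <= new_weight < 1:
        raise ValueError("unknown category or weight outside [0, 1)")
    remaining = sum(w for c, w in weights.items() if c != category)
    scale = (1 - new_weight) / remaining
    return {c: (new_weight if c == category else w * scale) for c, w in weights.items()}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of category scores; every weighted category must be scored."""
    missing = weights.keys() - scores.keys()
    if missing:
        raise ValueError(f"unscored categories: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


vendor_x = {"core_wfm": 4.5, "integration": 3.0, "ease_of_use": 4.0,
            "analytics": 4.0, "vendor_stability": 4.5, "tco": 3.5}
vendor_y = {"core_wfm": 3.5, "integration": 4.5, "ease_of_use": 3.5,
            "analytics": 3.5, "vendor_stability": 4.0, "tco": 4.0}

integration_heavy = adjust_weight(DEFAULT_WEIGHTS, "integration", 0.35)
for label, weights in (("default", DEFAULT_WEIGHTS), ("integration 35%", integration_heavy)):
    x, y = weighted_score(vendor_x, weights), weighted_score(vendor_y, weights)
    print(f"{label}: X={x:.2f}, Y={y:.2f}")
```

With these hypothetical scores, Vendor X leads under the default weights (3.90 vs 3.85), but Vendor Y leads once integration is weighted at 35% (3.94 vs 3.78). Agreeing the weights before vendor responses are scored keeps the ranking from being tuned after the fact.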

Proof of Concept

For final-stage vendor evaluation, a structured proof of concept (POC) using the organization's own historical data is substantially more reliable than vendor-conducted demonstrations. A POC should:

- Use 6–12 months of the organization's actual volume and schedule history

- Test the specific integrations required (ACD agent state feed, HRIS sync)

- Measure forecast accuracy (comparing vendor forecast to held-out actuals)

- Evaluate schedule generation time for the full agent population

- Involve frontline WFM analysts and supervisors in usability assessment, not only technical evaluators
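The forecast-accuracy step works only if vendors never see the held-out period. The following is a minimal sketch of that holdout check. The weekly volumes and vendor forecasts are hypothetical, and a real POC would typically use interval-level data. MAPE and WAPE are illustrative metric choices; the selection process does not prescribe them.

```python
# Minimal sketch of a holdout forecast-accuracy check for a POC.
# Assumptions: volumes and vendor forecasts are hypothetical; vendors receive only
# the training portion and forecast the held-out weeks; MAPE and WAPE are
# illustrative metrics, not a prescribed standard.


def holdout_split(series: list[float], holdout: int) -> tuple[list[float], list[float]]:
    """Split a series into (training, held-out), reserving the last `holdout` points."""
    if not 0 < holdout < len(series):
        raise ValueError("holdout must leave at least one training point")
    return series[:-holdout], series[-holdout:]


def mape(actual: list[float], forecast: list[float]) -> float:
    """Mean absolute percentage error, skipping periods with zero actual volume."""
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must be the same length")
    errors = [abs(a - f) / a for a, f in zip(actual, forecast) if a != 0]
    if not errors:
        raise ValueError("no periods with non-zero actual volume")
    return sum(errors) / len(errors)


def wape(actual: list[float], forecast: list[float]) -> float:
    """Weighted absolute percentage error: total absolute error over total actual volume."""
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must be the same length")
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / sum(actual)


weekly_volume = [1200, 1180, 1250, 1300, 1270, 1320, 1290, 1350, 1400, 1380, 1420, 1450]
training, actuals = holdout_split(weekly_volume, holdout=4)  # vendors see `training` only

vendor_forecasts = {
    "Vendor A": [1390, 1400, 1410, 1430],
    "Vendor B": [1300, 1310, 1330, 1350],
}
for name, forecast in vendor_forecasts.items():
    print(f"{name}: MAPE {mape(actuals, forecast):.2%}, WAPE {wape(actuals, forecast):.2%}")
```

WAPE weights errors by volume, so misses in busy periods count for more. This often aligns better with staffing impact than MAPE does, which treats every period equally.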

Implementation and Transition Planning

Vendor selection ends when a vendor is chosen and contracted, but the transition from the incumbent platform introduces operational risk that requires explicit planning:

- Parallel run period — operating incumbent and new platform simultaneously to validate output consistency before cutover

- Historical data migration — importing historical volume and schedule data into the new platform for forecasting model initialization

- Integration validation — systematic testing of each integration against production system data before go-live

- Training program — structured training for WFM analysts, supervisors, and agents, differentiated by role

- Rollback plan — defined criteria and procedures for reverting to the incumbent platform if go-live failures exceed defined thresholds
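Parallel-run validation and rollback criteria are easiest to apply when they are written down as measurable thresholds in advance. The following is a minimal sketch. It compares per-interval staffing requirements from both platforms, which is one possible consistency measure, and checks a set of rollback criteria. The metric names, thresholds, and sample values are hypothetical.

```python
# Minimal sketch of a parallel-run comparison and rollback-criteria check.
# Assumptions: metric names, thresholds, and sample values are hypothetical;
# each organization defines its own rollback criteria during transition planning.

from dataclasses import dataclass


def staffing_deviation_pct(incumbent: list[float], new: list[float]) -> float:
    """Total absolute difference in required staff per interval, as a % of the incumbent total."""
    if len(incumbent) != len(new):
        raise ValueError("both platforms must cover the same intervals")
    return 100 * sum(abs(n - i) for i, n in zip(incumbent, new)) / sum(incumbent)


@dataclass
class GoLiveMetrics:
    failed_integration_runs_pct: float  # share of scheduled integration jobs that failed
    agent_state_latency_s: float        # delay of the real-time agent state feed
    staffing_deviation_pct: float       # new vs. incumbent requirement during the parallel run


ROLLBACK_THRESHOLDS = {
    "failed_integration_runs_pct": 2.0,
    "agent_state_latency_s": 30.0,
    "staffing_deviation_pct": 5.0,
}


def rollback_breaches(metrics: GoLiveMetrics, thresholds: dict[str, float]) -> list[str]:
    """Return the criteria whose observed value exceeds its threshold."""
    return [name for name, limit in thresholds.items() if getattr(metrics, name) > limit]


incumbent_req = [42, 48, 55, 60, 58, 50]  # required agents per interval, incumbent platform
new_req = [43, 47, 57, 61, 56, 51]        # same intervals, new platform

metrics = GoLiveMetrics(
    failed_integration_runs_pct=3.1,
    agent_state_latency_s=12.0,
    staffing_deviation_pct=staffing_deviation_pct(incumbent_req, new_req),
)
print(f"staffing deviation: {metrics.staffing_deviation_pct:.2f}%")  # 2.56%
print("rollback criteria breached:", rollback_breaches(metrics, ROLLBACK_THRESHOLDS))
```

The sketch only reports which agreed criteria were exceeded. Whether a breach triggers rollback or a targeted fix remains a go/no-go decision made against those criteria.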

Maturity Model Considerations

| Maturity Level | Selection Posture |
|---|---|
| L1–L2 | Selecting first formal WFM platform; prioritize core forecasting and scheduling functionality, ACD integration, and ease of adoption; avoid over-specifying advanced capabilities |
| L3 | Selecting to replace or upgrade foundational platform; prioritize ML forecasting, multi-skill scheduling, and intraday automation; integration capability is critical |
| L4 | Selecting to enable real-time optimization, simulation, and advanced analytics; evaluate AI/ML roadmap credibility and API breadth for ecosystem integration |
| L5 | Selecting for unified workforce capability; evaluate AI agent capacity planning, agentic workforce integration, and RL-based scheduling roadmap |

Related Concepts

- Workforce Management Software

- WFM Ecosystem Architecture

- WFM Data Infrastructure and Integration Architecture

- Contact Center as a Service

- Reporting and Analytics Framework

- Schedule Optimization

- Forecasting Methods

- WFM Labs Maturity Model

- WFM Processes

- WFM Roles

- Skill-Based Routing

- Next Generation Routing

See Also

- Build vs Buy and Vendor Governance — Build-or-buy decisions and ongoing vendor governance

References

- [1] Gartner. (2024). Magic Quadrant for Workforce Engagement Management. Gartner, Inc.

- [2] Forrester Research. (2024). The Forrester Wave: Workforce Optimization Platforms. Forrester Research, Inc.

- [3] SWPP. (2022). Technology Selection Guide for Workforce Management Professionals. Society of Workforce Planning Professionals.