Statsmodels and Time Series Analysis

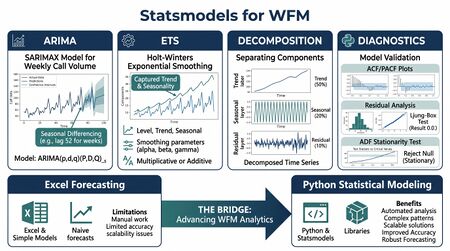

Statsmodels is an open-source Python library that provides classes and functions for the estimation of statistical models, hypothesis testing, and statistical data exploration.[1] For workforce management practitioners, statsmodels occupies a critical position in the analytical toolchain: it brings the same time series methods that underpin commercial WFM forecasting—ARIMA, exponential smoothing, decomposition, regression—into an environment where the analyst controls every parameter, inspects every diagnostic, and validates every assumption.

Most WFM analysts who have built forecasts in spreadsheets understand the core concepts: smoothing constants, seasonal indices, trend adjustments. What statsmodels provides is the ability to apply those same concepts at scale, with proper statistical rigor, and with none of the row-limit or transparency constraints that spreadsheets impose. At the same time, statsmodels remains firmly in the statistical tradition—these are not black-box machine learning models but interpretable, theory-grounded methods whose parameters have direct operational meaning.

This article walks through statsmodels' core time series capabilities as they apply to WFM forecasting workflows. It assumes familiarity with Pandas for data handling and a working understanding of forecasting concepts. The goal is not to replace a textbook but to bridge the gap between knowing what ARIMA is and knowing how to use it against real contact center data.

What Is Statsmodels

Statsmodels is Python's primary library for classical statistical modeling. Where scikit-learn focuses on predictive machine learning and SciPy provides low-level statistical functions, statsmodels occupies the space that SAS, SPSS, and R's core stats packages have held for decades: fitted models with summary tables, diagnostic tests, confidence intervals, and p-values.[1]

For WFM practitioners, the library matters because it implements exactly the methods that commercial WFM platforms use internally but rarely expose:

- Time series models — ARIMA, SARIMAX, exponential smoothing (Holt-Winters), unobserved components models, and vector autoregressions.

- Decomposition — Classical and STL decomposition for separating trend, seasonality, and residuals.

- Regression — Ordinary least squares (OLS), generalized linear models (GLMs), and mixed-effects models for causal forecasting.

- Statistical tests — Augmented Dickey-Fuller for stationarity, Ljung-Box for autocorrelation, Durbin-Watson for residual independence, and dozens more.

- Diagnostic tools — Residual plots, ACF/PACF functions, QQ plots, and influence diagnostics.

The practical advantage over Excel-based forecasting is not just scale—though processing 500 queues through a consistent ARIMA pipeline is trivially scriptable in Python while being nearly impossible in spreadsheets. The real advantage is transparency. When a commercial WFM tool produces a forecast, the analyst sees the output but not the model diagnostics. With statsmodels, every coefficient, every residual, every test statistic is accessible. This makes it possible to understand why a forecast behaves as it does, not just what it predicts.

Statsmodels integrates tightly with Pandas DataFrames and NumPy arrays, making it straightforward to move from data cleaning to model fitting to forecast generation within a single Python workflow.

ARIMA in Statsmodels

Main article: ARIMA Models

The SARIMAX class in statsmodels (statsmodels.tsa.statespace.sarimax.SARIMAX) is the primary interface for fitting autoregressive integrated moving average models, including seasonal variants and exogenous regressors.[1] The name stands for Seasonal ARIMA with eXogenous variables, and it subsumes plain ARIMA, SARIMA, and ARIMAX as special cases depending on which parameters are specified.

The ARIMA Framework for WFM Data

An ARIMA model captures three components of a time series:

- Autoregressive (AR) terms — today's call volume depends on yesterday's and the day before. The AR order p specifies how many lagged values enter the model.

- Differencing (I) terms — the model differences the series d times to achieve stationarity. For daily contact volume with a clear upward trend, first differencing (d = 1) typically suffices.

- Moving average (MA) terms — the model includes q lagged forecast errors, capturing short-run shocks that persist.

For seasonal data—and virtually all contact center data is seasonal—SARIMA adds a parallel set of seasonal AR, differencing, and MA terms at the seasonal period s. A daily call volume series with weekly seasonality uses s = 7. An interval-level series with daily seasonality might use s = 48 (for 30-minute intervals) or s = 96 (for 15-minute intervals), though these large seasonal periods can make estimation computationally intensive.

Parameter Selection

Choosing the right ARIMA order is one of the most important practical decisions. The classical Box-Jenkins methodology proceeds through identification, estimation, and diagnostic checking.[2]

Statsmodels supports this workflow through:

- ACF and PACF plots — the

plot_acf()andplot_pacf()functions reveal the autocorrelation structure. A PACF that cuts off after lag 2 suggests AR(2); an ACF that cuts off after lag 1 suggests MA(1). Significant spikes at seasonal lags (7, 14, 21 for daily data) indicate seasonal terms are needed. - Information criteria — after fitting candidate models, AIC (Akaike Information Criterion) and BIC (Bayesian Information Criterion) provide objective comparisons. Lower values indicate better trade-offs between fit and parsimony.

- Automated selection — the

pmdarimalibrary (a companion to statsmodels) provides anauto_arima()function that searches over order combinations using information criteria, analogous to R'sauto.arima(). This is useful for batch-fitting models across hundreds of queues.

In practice, WFM analysts often find that a SARIMA(1,1,1)(1,1,1,7) or SARIMA(2,1,1)(0,1,1,7) works well for daily contact volume, though the right specification always depends on the specific series. The Box-Jenkins discipline of fitting, diagnosing, and refining remains essential—automated selection is a starting point, not an endpoint.

Fitting and Forecasting

The workflow follows a consistent pattern: create the model object, fit it, generate forecasts. The .fit() method estimates parameters via maximum likelihood. The .forecast() method produces point predictions, and .get_forecast() returns a results object that includes prediction intervals—critical for probabilistic planning.

One practical consideration for WFM data: contact center volume series often contain outliers—system outages, snow days, one-time marketing blasts—that can distort ARIMA estimates. Preprocessing to handle these events (replacing outliers with interpolated values, or including them as exogenous dummy variables in the SARIMAX framework) materially improves forecast quality. The SARIMAX class's support for exogenous regressors makes the dummy-variable approach straightforward.

Exponential Smoothing

Main article: Exponential Smoothing

The ExponentialSmoothing class (statsmodels.tsa.holtwinters.ExponentialSmoothing) implements the Holt-Winters family of exponential smoothing methods, which remain among the most widely used forecasting techniques in operational WFM.[3]

The Holt-Winters Method

Exponential smoothing builds forecasts from three smoothed components:

- Level — the current baseline of the series, updated each period with smoothing parameter α.

- Trend — the current rate of increase or decrease, with smoothing parameter β. Trend can be additive (constant increment) or multiplicative (percentage growth), and optionally damped to prevent runaway extrapolation.

- Seasonality — the seasonal pattern, with smoothing parameter γ. Seasonality can also be additive (constant offset) or multiplicative (proportional scaling).

For contact center daily volume, multiplicative seasonality often provides a better fit than additive, because the Monday-through-Sunday pattern tends to scale with volume level—a queue handling 1,000 calls per day shows larger absolute day-of-week swings than one handling 200 calls per day.

Practical Application to Call Volume

A typical application fits Holt-Winters to daily call volume data with a seasonal period of 7 (weekly cycle). The key decisions are:

- Additive vs. multiplicative trend and seasonality — inspect the raw data. If the seasonal swings grow proportionally with the level, use multiplicative. If they stay roughly constant in absolute terms, use additive.

- Damped trend — for forecast horizons beyond a few weeks, damped trend almost always outperforms undamped, because contact center growth rarely sustains a linear trajectory indefinitely. Hyndman and Athanasopoulos recommend damped trend as the default unless there is strong evidence for sustained linear growth.[3]

- Initialization — statsmodels supports "estimated" (optimized via maximum likelihood), "heuristic" (following Hyndman's initialization method), and "known" (user-specified) initial values. Estimated initialization is generally preferred.

The ExponentialSmoothing class automatically optimizes the smoothing parameters (α, β, γ, and the damping parameter φ) using maximum likelihood estimation when .fit(optimized=True) is called. This removes the manual parameter-tuning step that spreadsheet implementations require, while producing statistically optimal values.

For sub-daily forecasting—interval-level call volume—the seasonal period becomes large (48 for half-hourly, 96 for 15-minute intervals), and the number of seasonal parameters grows accordingly. Statsmodels handles this, but estimation becomes slower. An alternative for high-frequency WFM data is to decompose the problem: use one model for daily volume and a separate intraday distribution pattern, combining the two to produce interval forecasts. This is, in fact, how most commercial WFM platforms approach interval forecasting internally.

Time Series Decomposition

Main article: Time Series Decomposition

Decomposition separates a time series into its constituent components—trend, seasonal, and residual—providing foundational insight into the structure of WFM data before any forecasting model is fitted.

Classical Decomposition

The seasonal_decompose() function in statsmodels (statsmodels.tsa.seasonal.seasonal_decompose) performs classical additive or multiplicative decomposition using moving averages.[1] For a daily contact volume series:

- Trend — a centered moving average (typically of length 7 for weekly seasonality) reveals the underlying direction of volume: growing, stable, or declining.

- Seasonal — the average deviation from trend for each day of the week (or each interval within the day, for sub-daily data). This is the recurring pattern.

- Residual — everything left after removing trend and seasonal components. Large residuals point to events the model cannot explain—outages, holidays, campaigns.

For WFM analysts, the decomposition plot is often the single most informative diagnostic. It immediately reveals whether a series has a meaningful trend (important for long-range capacity planning), how strong the seasonal pattern is relative to random variation (important for scheduling), and which specific dates or periods are anomalous (important for data cleaning).

STL Decomposition

Statsmodels also implements STL (Seasonal and Trend decomposition using Loess) through the STL class, which offers several advantages over classical decomposition:

- Robustness to outliers — STL uses locally weighted regression (loess), which can be made robust to extreme values. Classical decomposition propagates outliers directly into the seasonal and trend estimates.

- Flexible seasonality — STL allows the seasonal component to change over time, rather than assuming a fixed seasonal pattern. In contact centers, the day-of-week pattern does shift—Saturday might carry a larger share of volume during certain promotional periods.

- Control over smoothness — separate bandwidth parameters for trend and seasonal smoothing give the analyst control over how rapidly each component is allowed to change.

For WFM data with known outliers (holidays, system outages, marketing events), STL decomposition is generally preferable to classical decomposition.

Statistical Tests for WFM Data

Before fitting any time series model, the analyst needs to understand the statistical properties of the data. Statsmodels provides a comprehensive suite of tests that answer practical questions WFM analysts face daily.

Stationarity Testing

The Augmented Dickey-Fuller (ADF) test (statsmodels.tsa.stattools.adfuller) tests whether a time series has a unit root—informally, whether it wanders without reverting to a stable mean. Stationarity matters because ARIMA models require the (differenced) series to be stationary, and because a non-stationary series will produce forecasts that drift unpredictably.

Practical interpretation for WFM:

- ADF p-value < 0.05 — the series is likely stationary. If you are modeling daily call volume and the ADF test rejects the null after first differencing, ARIMA with d = 1 is appropriate.

- ADF p-value > 0.05 — the series likely has a unit root and needs differencing. If the test fails even after first differencing, consider second differencing (d = 2), though this is rare for contact center volume data.

The KPSS test (statsmodels.tsa.stattools.kpss) tests the opposite null hypothesis (stationarity), and using both ADF and KPSS together provides more diagnostic confidence. When ADF rejects (stationary) and KPSS does not reject (also stationary), the evidence for stationarity is strong.

Autocorrelation Testing

The Ljung-Box test (statsmodels.stats.diagnostic.acorr_ljungbox) tests whether the residuals of a fitted model exhibit significant autocorrelation—that is, whether the model has failed to capture temporal patterns in the data.

Practical interpretation for WFM:

- Ljung-Box p-values > 0.05 across multiple lags — residuals resemble white noise, meaning the model has adequately captured the time series structure. Good.

- Significant p-values at seasonal lags (7, 14, 21) — the model has missed seasonal structure. Consider adding or adjusting seasonal terms.

- Significant p-values at early lags (1, 2, 3) — the model has missed short-run dynamics. Consider increasing AR or MA order.

These tests are not academic exercises. A WFM forecast model whose residuals show strong autocorrelation is systematically leaving predictive information on the table—information that would reduce forecast error and improve staffing decisions.

Normality of Residuals

The Jarque-Bera test (reported automatically in statsmodels regression summaries) and Shapiro-Wilk test assess whether model residuals follow a normal distribution. Normality matters primarily for the validity of prediction intervals: if residuals are not approximately normal, the confidence bands around forecasts may be too narrow or too wide.

In WFM practice, residuals from daily volume models often exhibit mild non-normality due to holiday effects and operational disruptions. This is typically acceptable for point forecasting but should be noted when constructing prediction intervals.

Regression Analysis

Main article: Regression for Forecasting

Statsmodels' OLS class (statsmodels.regression.linear_model.OLS) provides full-featured ordinary least squares regression with the rich summary output—R-squared, F-statistics, coefficient standard errors, confidence intervals—that WFM analysts familiar with Excel's regression add-in will recognize, but with none of the variable-count or observation-count limitations.

Causal Forecasting in WFM

While ARIMA and exponential smoothing model the time series' own past behavior, regression enables causal forecasting: modeling contact volume as a function of external drivers. Common regression variables in WFM include:

- Day-of-week dummies — seven binary variables capturing the weekly pattern (drop one to avoid multicollinearity).

- Holiday indicators — binary flags for major holidays and the days immediately surrounding them. Holiday effects in contact centers often extend 1–2 days before and after the holiday itself.

- Marketing event flags — promotional campaigns, billing cycle dates, product launches that drive contact spikes.

- Weather variables — for industries where weather affects contact volume (utilities, insurance, travel).

- Lagged volume — yesterday's volume as a predictor of today's, capturing autocorrelation within the regression framework.

The OLS summary output provides coefficient estimates with confidence intervals, allowing the analyst to quantify the impact of each driver. If a major promotion historically adds 340 ± 45 calls per day (coefficient ± standard error), the planning team can budget staffing accordingly for the next campaign.

Combining Regression with Time Series

The SARIMAX class bridges regression and time series by accepting exogenous regressors alongside the ARIMA structure. This "regression with ARIMA errors" approach is often the most powerful single-model specification for WFM data, because it captures both the causal drivers (holidays, events) and the time series dynamics (autocorrelation, seasonality) in a unified framework. Hyndman and Athanasopoulos describe this as the "dynamic regression" approach and recommend it as a default strategy when external drivers are available.[3]

Prediction Intervals and Confidence Bands

Main article: Probabilistic Forecasting

Point forecasts—single numbers for each future period—are the standard output of most WFM tools. But a point forecast without a measure of uncertainty is incomplete and potentially dangerous. Statsmodels provides prediction intervals as a core output of its forecasting methods, directly supporting probabilistic planning approaches.

How Prediction Intervals Work

Every statsmodels time series model can produce prediction intervals through the .get_forecast() or .get_prediction() methods, which return a results object with .conf_int(alpha=0.05) for 95% intervals (or any other confidence level).

These intervals capture two sources of uncertainty:

- Parameter uncertainty — the model's coefficients are estimates, not known values, and this estimation error propagates into forecasts.

- Residual uncertainty — even with perfect parameters, future values will deviate from the forecast due to random shocks (the innovation variance).

For ARIMA models, prediction intervals widen as the forecast horizon extends, reflecting the compounding uncertainty of multi-step-ahead forecasting. A one-day-ahead forecast has a narrow interval; a 30-day-ahead forecast has a much wider one. This behavior is correct and informative—it quantifies exactly how much confidence degrades with horizon length.

WFM Application

Prediction intervals transform WFM planning conversations:

- Staffing decisions — instead of staffing to a single point forecast, the planning team can staff to the 80th or 90th percentile of the prediction interval, trading increased cost for increased reliability of service level achievement.

- Scenario planning — the lower bound of the prediction interval represents the optimistic scenario; the upper bound represents the pessimistic scenario. Capacity plans can be stress-tested against both.

- Forecast accountability — accuracy metrics like MAPE and WAPE evaluate point forecasts. Prediction intervals should also be evaluated using calibration: if the 95% interval covers the actual value less than 95% of the time, the model underestimates uncertainty.

The connection between statsmodels' prediction intervals and deterministic versus probabilistic planning is direct. A planning team that uses only point forecasts is doing deterministic planning by default. Adding prediction intervals—which statsmodels makes trivially easy—is the first step toward probabilistic planning, without requiring any change to the underlying model.

Diagnostic Plots

Diagnosing a fitted model is where statsmodels most clearly outperforms spreadsheet-based approaches. The library provides built-in plotting functions and diagnostic statistics that reveal whether a model is capturing the data's structure or systematically failing.

Residual Analysis

After fitting any time series model, the first diagnostic step is examining the residuals—the differences between fitted values and actual values. Good residuals should resemble white noise: no pattern, no trend, no remaining autocorrelation, and approximately constant variance.

Statsmodels' plot_diagnostics() method (available on state space model results, including SARIMAX) produces a four-panel diagnostic plot:

- Standardized residuals over time — reveals whether residual variance is stable or shows heteroskedasticity (variance changes over time). In WFM data, residual variance often increases with volume level, suggesting a multiplicative model or log transformation may be appropriate.

- Histogram with KDE — shows whether residuals are approximately normally distributed. Moderate departures from normality are common and usually acceptable; heavy tails may indicate outliers that warrant investigation.

- Normal QQ plot — plots residual quantiles against theoretical normal quantiles. Points should follow the diagonal. Systematic departures in the tails indicate non-normality.

- Correlogram (ACF of residuals) — shows remaining autocorrelation in the residuals. Significant spikes indicate the model has not fully captured the temporal structure.

ACF and PACF Plots

The autocorrelation function (ACF) and partial autocorrelation function (PACF) are the primary tools for understanding the temporal dependence structure of a time series and for diagnosing fitted models.[2]

Before fitting a model:

- ACF and PACF of the raw (or differenced) series guide ARIMA order selection. A slowly decaying ACF suggests non-stationarity and the need for differencing. Spikes at seasonal lags confirm seasonal structure.

After fitting a model:

- ACF and PACF of the residuals reveal what the model missed. The goal is residuals with no significant autocorrelation at any lag—indicating that the model has extracted all available temporal information.

For WFM data, the most common pattern in pre-model ACF/PACF plots is strong positive autocorrelation at lag 7 (and 14, 21, etc.) reflecting the weekly cycle, combined with moderate autocorrelation at lag 1 reflecting day-to-day momentum. A properly specified SARIMA model should eliminate both patterns from the residual ACF.

Information Criteria

When comparing multiple candidate models—different ARIMA orders, additive versus multiplicative seasonality, with and without exogenous regressors—information criteria provide an objective ranking. Statsmodels reports:

- AIC (Akaike Information Criterion) — balances goodness of fit against model complexity. Lower is better. Tends to favor slightly more complex models.

- BIC (Bayesian Information Criterion) — applies a stronger penalty for complexity than AIC. Lower is better. Tends to favor simpler models.

In WFM practice, AIC and BIC will sometimes disagree: AIC might prefer a SARIMA(2,1,1)(1,1,1,7) while BIC prefers the simpler SARIMA(1,1,0)(0,1,1,7). When they disagree, the more parsimonious model (BIC's preference) is often the safer choice for WFM forecasting, because overly complex models tend to overfit noise in the training data and produce less reliable forecasts at operational horizons.

Comparing Statsmodels to Commercial WFM Forecasting

Commercial WFM platforms—NICE, Verint, Alvaria, Calabrio, Genesys—include built-in forecasting engines that apply many of the same methods available in statsmodels: exponential smoothing, ARIMA, regression, and decomposition. Understanding when to use each approach, and how to use one to validate the other, is a practical skill for senior WFM analysts.

When Commercial Tools Suffice

For most day-to-day operational forecasting—next week's staffing, next month's capacity plan—commercial WFM platforms are the right tool. They integrate directly with ACD data, they automate the forecast-to-schedule pipeline, and they are maintained by vendor engineering teams. A WFM analyst does not need to rebuild this infrastructure in Python.

When Statsmodels Adds Value

Statsmodels becomes valuable in several specific scenarios:

- Validating platform forecasts — when the commercial tool produces a forecast that seems unreasonable, statsmodels provides an independent cross-check. If a SARIMA model fitted in Python produces a materially different forecast, it triggers investigation into the platform's configuration.

- Understanding method behavior — commercial tools often present forecasting as a "select method and click" workflow. Building the same model in statsmodels, with full access to residuals, diagnostics, and parameter estimates, deepens the analyst's understanding of why a method works or fails for specific data patterns.

- Handling edge cases — event-driven volume spikes, product migrations, channel shifts, and other structural changes can confuse commercial auto-forecasting algorithms. Manual model specification in statsmodels, with appropriate dummy variables and regime adjustments, often handles these cases more effectively.

- Multi-model ensembles — combining forecasts from multiple methods (a practice well supported by the forecasting literature[4]) is straightforward in Python but typically unsupported in commercial WFM tools.

- Custom accuracy analysis — statsmodels enables backtesting workflows where the analyst fits models on rolling training windows and evaluates accuracy on held-out periods, producing distributional accuracy metrics rather than single-number summaries.

- Long-range strategic planning — commercial WFM tools are optimized for short-to-medium horizons (days to weeks). Annual capacity plans and headcount budgets often require models with macroeconomic regressors, trend analysis, and scenario structures that are easier to implement in statsmodels.

Using Both Together

The strongest analytical workflow uses both commercial and open-source tools. The commercial platform handles the production forecasting pipeline—the daily/weekly operational cycle that feeds scheduling. Statsmodels serves as the analytical workbench: validating, diagnosing, prototyping new approaches, and handling the long-range and edge-case work that falls outside the platform's sweet spot.

This is not about replacing the WFM platform's forecasting engine. It is about augmenting it with the diagnostic depth that only a statistical programming environment can provide. When a WFM analyst can explain why the platform's triple exponential smoothing forecast underperformed last month—because the seasonal component was additive when the data pattern is multiplicative, or because the smoothing parameters had converged to values that over-weighted recent observations—that analyst has moved from using the tool to understanding the tool. Statsmodels is how that understanding is built.[3][2]

See Also

- Python for Workforce Management

- Forecasting Methods

- ARIMA Models

- Exponential Smoothing

- Time Series Decomposition

- Regression for Forecasting

- Probabilistic Forecasting

- Forecast Accuracy Metrics

- MAPE WAPE and Forecast Bias

- Deterministic Planning in WFM

- Probabilistic Planning in WFM

- Model Evaluation and Validation

- Pandas and Data Manipulation for WFM

References

- ↑ 1.0 1.1 1.2 1.3 Seabold, Skipper, and Josef Perktold. "Statsmodels: Econometric and Statistical Modeling with Python." Proceedings of the 9th Python in Science Conference, 2010. https://www.statsmodels.org/stable/index.html

- ↑ 2.0 2.1 2.2 Box, George E. P., Gwilym M. Jenkins, Gregory C. Reinsel, and Greta M. Ljung. Time Series Analysis: Forecasting and Control. 5th ed. Wiley, 2015. ISBN 978-1118675021.

- ↑ 3.0 3.1 3.2 3.3 Hyndman, Rob J., and George Athanasopoulos. Forecasting: Principles and Practice. 3rd ed. OTexts, 2021. https://otexts.com/fpp3/

- ↑ Clemen, Robert T. "Combining forecasts: A review and annotated bibliography." International Journal of Forecasting 5, no. 4 (1989): 559–583. doi:10.1016/0169-2070(89)90012-5.