Deterministic Planning in WFM

Deterministic Planning in WFM refers to the use of mathematical models that produce a single, exact output for any given set of inputs in workforce management. Unlike probabilistic approaches that generate distributions or ranges, deterministic models take fixed inputs — a forecast volume, an average handle time, a service level target — and return one answer: the number of agents needed, the optimal schedule, the expected workload per interval.

Most of what WFM practitioners do daily is deterministic planning, whether they recognize it or not. When a workforce planner plugs a volume forecast and AHT into a staffing calculator, the math is deterministic. When a scheduling engine solves for optimal shift assignments subject to labor rules, it runs a deterministic optimization. When a forecaster fits an ARIMA model and extracts a point forecast, the output is a single trajectory — deterministic by design.

This is not a limitation. Deterministic models form the mathematical backbone of WFM because they are fast, reproducible, auditable, and sufficient for the vast majority of operational decisions. Understanding where deterministic methods apply — and where they break down — is essential for any WFM practitioner who wants to move beyond button-clicking into genuine analytical fluency.

This article covers the specific deterministic techniques used across forecasting, staffing, and scheduling in WFM. For the conceptual distinction between deterministic and probabilistic paradigms, see Deterministic vs Probabilistic Models. For the probabilistic counterpart, see Probabilistic Planning in WFM.

Erlang Models

Main article: Erlang-A

The Canonical WFM Calculation

The Erlang C formula is the single most important deterministic model in contact center WFM. Developed by A.K. Erlang in the early twentieth century for telephone traffic engineering, it calculates the probability that an arriving call must wait in queue given three inputs: arrival rate, average service time, and number of servers (agents).[1]

The formula assumes:

- Calls arrive according to a Poisson process (random arrivals)

- Service times are exponentially distributed

- No caller abandons the queue (infinite patience)

- All agents are equally skilled and available

- The system operates in steady state

Given these assumptions, Erlang C produces an exact probability of wait, from which WFM tools derive the number of agents required to meet a service level target (e.g., 80% of calls answered within 20 seconds).
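For reference, the calculation described above is usually written in the following textbook form. The symbols are notation introduced here for illustration: A is the offered load in Erlangs (arrival rate times average handle time), N is the number of agents, P_W is the probability of waiting, and T is the answer-time threshold in the service level target.

```latex
P_W \;=\; \frac{\dfrac{A^{N}}{N!}\,\dfrac{N}{N-A}}
               {\displaystyle\sum_{k=0}^{N-1}\frac{A^{k}}{k!} \;+\; \dfrac{A^{N}}{N!}\,\dfrac{N}{N-A}},
\qquad N > A

\mathrm{SL}(T) \;=\; 1 - P_W \, e^{-(N-A)\,T/\mathrm{AHT}}
```

The staffing requirement is then the smallest N for which SL(T) reaches the target.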

Why Erlang C Is Deterministic

Erlang C is deterministic in the engineering sense: identical inputs always yield identical outputs. Feed it 200 calls per half-hour, 300 seconds AHT, and a target of 80/20 — it returns the same staffing requirement every time. There is no random seed, no simulation variance, no confidence interval. The model captures randomness in arrival patterns through the Poisson assumption, but the calculation itself is closed-form and exact.

This determinism is what makes Erlang C operationally useful. A planner can build an entire day's staffing plan interval by interval, and the results are perfectly reproducible. Any other planner with the same inputs will get the same answer. Auditors can verify the math. Managers can trace a staffing decision back to its inputs.
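The reproducibility described above can be seen in a minimal sketch of an Erlang C staffing calculator. The function names and argument order are illustrative assumptions, not taken from any WFM product; the math is the standard Erlang C formula under the assumptions listed earlier.

```python
import math


def erlang_c_wait_probability(load, agents):
    """Probability that an arriving call has to queue (Erlang C)."""
    if agents <= load:
        return 1.0  # unstable queue: every caller waits
    # Erlang B blocking probability via the standard recurrence,
    # which avoids computing large factorials directly.
    blocking = 1.0
    for n in range(1, agents + 1):
        blocking = load * blocking / (n + load * blocking)
    return agents * blocking / (agents - load * (1.0 - blocking))


def service_level(load, agents, aht_s, answer_within_s):
    """Fraction of calls answered within the threshold."""
    if agents <= load:
        return 0.0
    p_wait = erlang_c_wait_probability(load, agents)
    return 1.0 - p_wait * math.exp(-(agents - load) * answer_within_s / aht_s)


def required_agents(calls, interval_s, aht_s, target_sl, answer_within_s):
    """Smallest agent count that meets the service level target."""
    load = calls * aht_s / interval_s      # offered load in Erlangs
    agents = math.floor(load) + 1          # smallest stable staffing level
    while service_level(load, agents, aht_s, answer_within_s) < target_sl:
        agents += 1
    return agents


# Same inputs, same answer: 200 calls per half-hour, 300 s AHT, 80/20 target.
first = required_agents(200, 1800, 300, 0.80, 20)
second = required_agents(200, 1800, 300, 0.80, 20)
print(first, first == second)
```

There is no seed and no sampling anywhere in the calculation, which is why two planners running it with the same inputs cannot disagree.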

Erlang-A and Abandonment

Erlang-A extends Erlang C by adding a single parameter: caller patience, expressed as the rate at which a waiting caller hangs up. This remains a deterministic model (four inputs in, one answer out), but it relaxes the unrealistic "infinite patience" assumption. In environments with significant abandonment (anything above 3-5% of calls), Erlang-A produces materially different (and more accurate) staffing requirements than Erlang C, typically requiring fewer agents because some callers self-select out of the queue.

The practical difference matters. A center handling 500 calls per half-hour with a 4% abandonment rate might need 3-5 fewer agents per interval under Erlang-A versus Erlang C. Across a full week of scheduling, that compounds into meaningful labor cost differences.
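A minimal sketch of how an Erlang-A calculation can be carried out, assuming exponentially distributed patience (the usual Erlang-A assumption) and solving the queue's steady-state balance equations numerically. The function name, the truncation tolerance, and the 120-second mean patience in the usage lines are illustrative assumptions.

```python
def erlang_a_metrics(arrival_rate, service_rate, patience_rate, agents, tol=1e-12):
    """Return (P(wait), fraction abandoning) for an M/M/n+M queue.

    Rates are per second. patience_rate must be positive, which keeps the
    queue stable and guarantees that the sum below converges.
    """
    if agents < 1 or patience_rate <= 0:
        raise ValueError("need at least one agent and a positive patience rate")
    weight = 1.0        # unnormalised probability of state k (calls in system)
    total = 1.0         # normalising constant, starting with state 0
    wait_mass = 0.0     # states in which every agent is busy (k >= agents)
    queue_mass = 0.0    # sum over states of weight * queue length
    k = 0
    while True:
        k += 1
        busy = min(k, agents)
        queued = max(k - agents, 0)
        # Balance equation: p_k = p_{k-1} * lambda / (departure rate from k)
        weight *= arrival_rate / (busy * service_rate + queued * patience_rate)
        total += weight
        if k >= agents:
            wait_mass += weight
            queue_mass += weight * queued
            if weight < tol * total:
                break   # remaining tail is negligible
    p_wait = wait_mass / total
    p_abandon = patience_rate * queue_mass / (arrival_rate * total)
    return p_wait, p_abandon


# 120 calls per half-hour, 300 s AHT, 25 agents.
lam, mu = 120 / 1800, 1 / 300
nearly_erlang_c = erlang_a_metrics(lam, mu, 1e-9, 25)    # almost infinite patience
with_patience = erlang_a_metrics(lam, mu, 1 / 120, 25)   # mean patience 120 s
print(nearly_erlang_c, with_patience)
```

With the patience rate pushed towards zero the result approaches the Erlang C probability of waiting; with finite patience the same staffing level produces a lower probability of waiting, which is the mechanism behind the lower agent requirement.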

When Erlang Models Are the Right Tool

Erlang models are appropriate when:

- The planning horizon is short (intraday to weekly)

- The center operates a relatively homogeneous queue (single skill or primary skill routing)

- Volume and AHT inputs are reasonably stable within each interval

- The goal is to determine interval-level staffing requirements for scheduling

They become less appropriate for multi-skill environments where interactions between queues create nonlinear effects, or for long-range capacity planning where input uncertainty dominates the calculation. In those cases, simulation or probabilistic methods may be warranted — but for the day-to-day work of converting a forecast into a staffing plan, Erlang models remain the standard tool.

Linear and Integer Programming for Scheduling

Main article: Schedule Generation

How Schedule Optimization Actually Works

When a WFM platform "optimizes" a schedule, it is typically solving a linear program (LP) or mixed-integer program (MIP). These are deterministic optimization techniques from the field of operations research that find the best solution subject to constraints.[2]

The structure of a scheduling optimization problem has three components:

Objective function: What to minimize or maximize. In WFM, this is usually minimizing total labor cost, minimizing overstaffing (the gap between scheduled agents and required agents across intervals), or maximizing service level coverage. The objective function is a mathematical expression: for example, minimize the sum of the absolute gap between scheduled FTE and required FTE across all intervals, weighted by interval priority. (A signed difference would reward understaffing, so the gap is taken in absolute terms or penalized separately in each direction.)

Decision variables: What the solver controls. These are the assignments: which agent works which shift, which break slot each agent receives, which days off each agent gets. In integer programming, these are typically binary (0/1) variables: Agent A either works Shift 3 on Monday or does not.

Constraints: The rules the solution must satisfy. This is where labor law, business rules, and operational requirements live. Maximum hours per week, minimum rest between shifts, required break timing, skill coverage requirements, contractual obligations.

A Concrete Example

Consider a simplified break optimization problem. You have 50 agents working 8-hour shifts. Each needs a 30-minute lunch break between hours 3 and 6 of their shift. The staffing requirement varies by interval. The solver must assign each agent a lunch break start time such that the number of agents on the phones in each interval stays as close to the requirement as possible.

This is an integer program. The decision variables are the break start intervals for each agent. The constraint is that each break falls within the allowed window. The objective is to minimize the total deviation between scheduled coverage and required coverage across all intervals.

The solver evaluates this deterministically. Same inputs, same solution (with possible tie-breaking in degenerate cases, but the objective value is identical). There is no randomness in the optimization process. The solver uses algorithms like branch-and-bound or cutting planes to systematically search the solution space and prove optimality — or find solutions within a specified gap of optimal.[3]
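The break problem above can be illustrated at toy scale. The sketch below is not a branch-and-bound solver: it replaces the MIP solver with an exhaustive search over the same decision variables, which is only feasible because the instance is tiny (4 agents instead of 50). The requirement curve and all names are hypothetical.

```python
from itertools import product

INTERVALS = 16                         # one 8-hour shift in half-hour intervals
ALLOWED_BREAK_STARTS = range(6, 12)    # break begins between hour 3.0 and 5.5
AGENTS = 4
# Hypothetical staffing requirement per interval.
REQUIRED = [3, 3, 3, 4, 4, 4, 4, 3, 3, 3, 4, 4, 4, 3, 3, 3]


def total_deviation(break_starts):
    """Objective: sum of |agents on the phones - required| over all intervals."""
    on_break = [0] * INTERVALS
    for start in break_starts:
        on_break[start] += 1           # a 30-minute break fills one interval
    return sum(abs((AGENTS - on_break[i]) - REQUIRED[i]) for i in range(INTERVALS))


def solve():
    """Try every feasible assignment; keep the first one with the lowest cost."""
    best_plan, best_cost = None, None
    for plan in product(ALLOWED_BREAK_STARTS, repeat=AGENTS):
        cost = total_deviation(plan)
        if best_cost is None or cost < best_cost:
            best_plan, best_cost = plan, cost
    return best_plan, best_cost


plan, cost = solve()
print(plan, cost)
```

Several assignments tie on the objective value here. The fixed enumeration order acts as the tie-break, so repeated runs return the same plan as well as the same cost.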

Shift Design and Generation

Shift design and schedule generation follow the same paradigm. When a WFM system generates shifts to cover a demand curve, it solves an optimization problem: select from a set of possible shift patterns (start times, lengths, break configurations) the combination that covers the staffing requirement at minimum cost. Spine shift design takes this further by defining a core shift structure that covers the predictable base load, then layering variable shifts on top.

All of this is deterministic. The shift library is fixed. The demand curve is fixed (it came from the forecast, which is itself a deterministic output). The constraints are fixed. The solution is fixed. This is precisely why WFM platforms can generate schedules in seconds or minutes — they are solving well-structured deterministic problems, not running simulations.
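The shift selection problem described above is commonly written as a set-covering integer program. The notation is introduced here for illustration: x_j is the number of agents assigned to shift pattern j, c_j is the cost of that pattern, a_{tj} is 1 if pattern j is on the phones in interval t (0 otherwise), and r_t is the staffing requirement for interval t.

```latex
\min_{x} \; \sum_{j} c_j \, x_j
\quad \text{subject to} \quad
\sum_{j} a_{tj} \, x_j \;\ge\; r_t \quad \forall t,
\qquad x_j \in \mathbb{Z}_{\ge 0} \quad \forall j
```

Every term is a fixed number once the shift library and the demand curve are given, which is what makes the problem deterministic.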

Solvers and Computational Reality

Modern scheduling problems in large contact centers can involve hundreds of thousands of decision variables and constraints. A center with 2,000 agents, 48 half-hour intervals per day, 7 days per week, and 15 possible shift patterns already has 2,000 × 7 × 15 = 210,000 binary agent-day-shift variables before breaks are considered, and the number of possible combinations of those variables is astronomically larger.

Commercial solvers (Gurobi, CPLEX, the solvers embedded in NICE, Verint, Calabrio, and other WFM platforms) handle this through sophisticated algorithms that exploit problem structure. But there is a practical consideration: MIP problems are NP-hard in general, meaning solve times can grow exponentially with problem size. In practice, WFM platforms often solve to within 1-2% of optimal and declare victory — a pragmatic choice that trades theoretical perfection for operational speed. The result is still deterministic: same inputs, same solution every time.

For practitioners interested in building custom optimization, PuLP and Optimization for Scheduling covers practical implementation using open-source tools.

Time Series Forecasting as Deterministic Process

Main article: Forecasting Methods

Point Forecasts and Deterministic Output

Forecasting is where deterministic and probabilistic perspectives most commonly blur. A time series model like ARIMA or exponential smoothing estimates parameters from historical data and projects forward. The resulting point forecast — "we expect 1,247 calls next Tuesday between 10:00 and 10:30" — is a single number. One input history, one model, one output. Deterministic.

This is true even though the underlying data-generating process is stochastic. The model acknowledges randomness in its error term, but the forecast itself collapses that randomness into a single expected value. Every WFM analyst who has ever pulled a forecast from their platform and used it to build a staffing plan has engaged in deterministic planning — they used point estimates as if they were known quantities.

ARIMA

Main article: ARIMA Models

ARIMA (AutoRegressive Integrated Moving Average) models decompose a time series into autoregressive terms (past values predict future values), differencing terms (removing trends), and moving average terms (past forecast errors predict future values). Once the model parameters (p, d, q) are estimated and the coefficients are fit, the forecast is a deterministic function of the historical data.

An ARIMA(1,1,1) model applied to daily call volume might produce a forecast of 4,832 calls for next Wednesday. That number does not change if you re-run the model with the same data and parameters. It is deterministic. The model can also produce prediction intervals (e.g., a 95% interval of 4,200 to 5,464), but WFM practice almost universally ignores these intervals and uses only the point estimate for downstream planning.
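To make the "deterministic function of the historical data" concrete, an ARIMA(1,1,1) model without a constant term can be written as follows. The notation is introduced here: B is the backshift operator, φ and θ are the fitted autoregressive and moving-average coefficients, and ε̂_T is the last in-sample residual.

```latex
(1 - \phi B)(1 - B)\, y_t = (1 + \theta B)\, \varepsilon_t

\hat{y}_{T+1 \mid T} \;=\; y_T + \phi\,(y_T - y_{T-1}) + \theta\,\hat{\varepsilon}_T
```

Once φ and θ are fixed, the right-hand side of the forecast equation contains only observed values and computed residuals, so the same history always yields the same forecast.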

Exponential Smoothing

Main article: Exponential Smoothing

Exponential Smoothing methods — simple, double (Holt), and triple (Holt-Winters) — are workhorses of WFM forecasting. They assign exponentially decreasing weights to older observations, producing a smoothed estimate of level, trend, and seasonality. The forecast is again a single value: deterministic output from a deterministic calculation.

Holt-Winters is particularly common in WFM because it captures the day-of-week and time-of-day seasonality that dominates contact center volume patterns. Given a history of Monday 10:00-10:30 volumes, the model produces one forecast for next Monday 10:00-10:30. That forecast flows into the staffing calculation, which flows into the scheduling engine: a chain of deterministic transformations.
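A minimal sketch of additive Holt-Winters with fixed smoothing parameters, written in plain Python to show that the forecast is a deterministic function of the history. The initialisation heuristic, the parameter values, and the daily volumes are assumptions for illustration; production libraries estimate the parameters and initial states rather than taking them as given.

```python
def holt_winters_additive(history, season_length, alpha, beta, gamma, horizon):
    """Additive Holt-Winters with fixed smoothing parameters.

    Needs at least two full seasons of history; returns `horizon` forecasts.
    """
    m = season_length
    if len(history) < 2 * m:
        raise ValueError("need at least two full seasons of history")
    first, second = history[:m], history[m:2 * m]
    level = sum(first) / m                          # average of the first season
    trend = (sum(second) - sum(first)) / (m * m)    # per-period change between seasons
    seasonal = [value - level for value in first]   # initial seasonal offsets
    for t in range(m, len(history)):
        season = seasonal[t % m]
        previous_level = level
        level = alpha * (history[t] - season) + (1 - alpha) * (level + trend)
        trend = beta * (level - previous_level) + (1 - beta) * trend
        seasonal[t % m] = gamma * (history[t] - level) + (1 - gamma) * season
    n = len(history)
    return [level + h * trend + seasonal[(n + h - 1) % m]
            for h in range(1, horizon + 1)]


# Two weeks of hypothetical daily call volumes, Monday to Sunday.
daily_calls = [5200, 4900, 4800, 4700, 4600, 2100, 1500,
               5300, 5000, 4850, 4750, 4650, 2200, 1550]
next_week = holt_winters_additive(daily_calls, 7, 0.3, 0.1, 0.2, horizon=7)
print([round(v) for v in next_week])
```

Running the function twice on the same history returns the same seven numbers, which is the property the downstream staffing calculation relies on.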

Time Series Decomposition

Main article: Time Series Decomposition

Time Series Decomposition separates a time series into trend, seasonal, and residual components. The trend and seasonal components are deterministic functions that can be projected forward. The residual is treated as noise and typically discarded for planning purposes (or its variance is used for prediction intervals that WFM practitioners rarely examine).

Regression

Main article: Regression for Forecasting

Regression models in WFM use causal variables (marketing spend, day of week, holiday indicators, economic indicators) to predict volume. The fitted regression produces a single predicted value for each combination of input variables — deterministic by construction. If you know next week's marketing campaign will generate $50K in spend and it is a non-holiday week, the model returns one volume estimate.

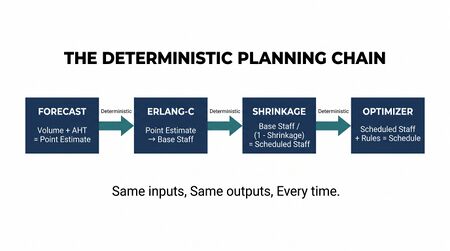

The Forecasting Chain Is Deterministic End to End

What matters operationally is recognizing that the entire forecasting-to-scheduling pipeline is typically deterministic:

- Historical data → forecast model → point forecast (deterministic)

- Point forecast + AHT → staffing requirements (deterministic via Erlang)

- Staffing requirements + labor rules → schedule (deterministic via optimization)

Each step takes fixed inputs and produces fixed outputs. No step in this chain quantifies uncertainty or propagates it forward. This is not necessarily wrong — it is efficient and usually adequate — but understanding it as a deterministic pipeline helps practitioners recognize when it might not be adequate.

Business Rules and Compliance

Main article: Labor Compliance Scheduling

The Most Deterministic Layer

Labor law and business rules represent the purest form of deterministic logic in WFM. There is no ambiguity, no estimation, no modeling uncertainty. Either a schedule complies with the rules or it does not.

Examples of deterministic compliance constraints:

- Maximum hours: An agent cannot be scheduled for more than 40 hours per week (or 48, or whatever the local law specifies). This is a hard constraint: a binary true/false evaluation.

- Minimum rest: An agent must have at least 11 hours between the end of one shift and the start of the next. Again, binary.

- Break timing: A 30-minute meal break must be provided no later than 5 hours after shift start. If the shift starts at 8:00, the break must begin by 13:00.

- Consecutive days: An agent cannot work more than 6 consecutive days without a day off.

- Overtime triggers: Any hours beyond 8 per day or 40 per week incur overtime pay at 1.5x.

These rules are encoded as constraints in the scheduling optimization (see Linear and Integer Programming for Scheduling) or as validation checks applied to completed schedules. They are entirely deterministic: no forecasting required, no statistical estimation, no judgment calls. The rules are what they are.
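The "validation check" form of these rules can be sketched as a function that takes one agent's week and returns the rules it breaks. The thresholds mirror the examples listed above; the data layout, the function name, and the simplification that weekly hours are measured as shift length (breaks included) are assumptions for illustration.

```python
from datetime import datetime, timedelta

MAX_WEEKLY_HOURS = 40
MIN_REST = timedelta(hours=11)
MAX_CONSECUTIVE_DAYS = 6
LATEST_BREAK_OFFSET = timedelta(hours=5)


def violations(shifts):
    """shifts: list of (start, end, meal_break_start), sorted by start time."""
    problems = []
    hours = sum((end - start).total_seconds() for start, end, _ in shifts) / 3600
    if hours > MAX_WEEKLY_HOURS:
        problems.append("maximum hours")
    rests = [nxt[0] - prev[1] for prev, nxt in zip(shifts, shifts[1:])]
    if any(rest < MIN_REST for rest in rests):
        problems.append("minimum rest")
    if any(brk - start > LATEST_BREAK_OFFSET for start, _, brk in shifts):
        problems.append("break timing")
    days = sorted({start.date() for start, _, _ in shifts})
    run = longest = 1 if days else 0
    for prev, cur in zip(days, days[1:]):
        run = run + 1 if (cur - prev).days == 1 else 1
        longest = max(longest, run)
    if longest > MAX_CONSECUTIVE_DAYS:
        problems.append("consecutive days")
    return problems


# Monday to Friday, 08:00-16:00, meal break at 12:00 (hypothetical week).
week = [(datetime(2024, 1, d, 8), datetime(2024, 1, d, 16), datetime(2024, 1, d, 12))
        for d in range(1, 6)]
print(violations(week))

# Move Tuesday's shift to 02:00-10:00 with the break six hours in.
changed = list(week)
changed[1] = (datetime(2024, 1, 2, 2), datetime(2024, 1, 2, 10), datetime(2024, 1, 2, 8))
print(violations(changed))
```

Each check is a plain comparison against a fixed threshold, so a schedule either passes or fails, with no estimation involved.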

Why This Matters

Compliance constraints often drive scheduling outcomes more than the staffing requirement itself. A center might have an optimal staffing plan that calls for 85 agents on Wednesday afternoon, but if labor rules prevent the scheduler from assigning enough agents to that window, the actual coverage will fall short. Understanding that compliance is a deterministic constraint layer helps practitioners diagnose these gaps — the issue is not forecast error or model uncertainty, but a hard rule that limits the solution space.

Labor Compliance Scheduling covers these constraints in depth. The key insight here is that this layer is always deterministic, regardless of what forecasting or staffing methodology sits above it.

Interval-Level Staffing Calculations

Main article: Demand calculation

The Core WFM Math

The calculation at the heart of WFM planning is the conversion of workload into staffing requirements at the interval level (typically 15-minute or 30-minute intervals). This calculation is entirely deterministic and follows a well-defined formula:

Workload (Erlangs) = (Volume × AHT) / Interval Duration

For a 30-minute interval expecting 120 calls with a 300-second AHT:

- Workload = (120 × 300) / 1800 = 20 Erlangs

This workload, combined with a service level target, feeds into the Erlang C formula to produce the base staff requirement. Then occupancy limits, shrinkage, and other adjustment factors are applied:

Scheduled Staff = Base Staff / (1 − Shrinkage)

If Erlang C says 25 base agents are needed (its answer for 20 Erlangs at an 80/20 target with a 300-second AHT) and shrinkage is 30%:

- Scheduled Staff = 25 / 0.70 ≈ 35.7, rounded up to 36

Every number in this chain is a fixed calculation. There are no random variables at the point of execution — the volume is a forecast (already collapsed to a point estimate), the AHT is a historical average (already collapsed to a single number), the service level target is a business decision, and shrinkage is an estimate applied as a constant.
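The two formulas above translate directly into code. This is a minimal sketch with illustrative function names; the base staff figure is taken as an input here because it comes from the Erlang step, and rounding up to a whole agent is an assumption about how fractional results are handled.

```python
import math


def workload_erlangs(volume, aht_s, interval_s):
    """Workload (Erlangs) = (Volume x AHT) / Interval Duration."""
    return volume * aht_s / interval_s


def scheduled_staff(base_staff, shrinkage):
    """Scheduled Staff = Base Staff / (1 - Shrinkage), rounded up."""
    if not 0 <= shrinkage < 1:
        raise ValueError("shrinkage must be in [0, 1)")
    # round() guards against float noise pushing an exact result over an integer
    return math.ceil(round(base_staff / (1 - shrinkage), 9))


print(workload_erlangs(120, 300, 1800))   # 20.0 Erlangs, as in the example above

# How 30% shrinkage inflates a few neighbouring base staff figures.
for base in (24, 25, 26):
    print(base, scheduled_staff(base, 0.30))
```

Because shrinkage is applied as a constant divisor, a one-agent change in base staff can move the scheduled figure by one or two agents depending on where the rounding falls.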

Interval-Level Granularity

WFM planning typically operates at 15-minute or 30-minute intervals because this is the granularity at which volume and AHT can meaningfully vary within a day. The staffing calculation runs independently for each interval, producing a demand curve — the "spine" that scheduling must cover.

A typical day has 48 half-hour intervals (for a 24-hour operation) or 32-36 intervals for an operation open 16 to 18 hours a day. The staffing calculation runs once per interval, each time with that interval's specific volume forecast and AHT assumption. For a 24-hour operation the result is 48 staffing numbers, forming a deterministic demand curve that the scheduling engine treats as given.

Where Determinism Helps and Hinders

The deterministic nature of interval-level calculations makes them extremely practical. A planner can build, review, and adjust a staffing plan in a spreadsheet. Every number is traceable. If the Tuesday 14:00 half-hour interval shows 42 agents needed, you can decompose that into: 180 calls forecast × 280 seconds AHT → 28 Erlangs → 33 base staff (at 80/20 SL) → 42 scheduled (at 20% shrinkage, since 33 / 0.80 = 41.25, rounded up).

However, this transparency comes at a cost: every input is treated as known with certainty. If the volume forecast is 180 but actual volume has a standard deviation of 30, the staffing plan does not account for the scenarios where volume is 150 or 210. The plan is optimized for one specific future that may not materialize. For intraday operations, this is usually fine — volume deviations can be managed through real-time adjustments. For longer planning horizons, this deterministic certainty becomes increasingly unreliable. See Probabilistic Planning in WFM for approaches that address this limitation.

Strengths of Deterministic Approaches

Reproducibility

Any WFM analyst, anywhere, using the same inputs and methods, will get the same answer. This is not a trivial benefit. In an environment where staffing decisions affect labor budgets of millions of dollars, the ability to reproduce and verify a calculation matters enormously. When a finance partner asks "why did you schedule 420 FTE next week?", a deterministic plan provides a complete, traceable answer.

Auditability

Deterministic models create audit trails by construction. Every output can be traced back through the calculation chain to specific inputs. This matters for labor law compliance, union negotiations, budget justification, and operational governance. Regulators and auditors understand deterministic calculations. Try explaining a Monte Carlo simulation's confidence interval to a labor board — then try explaining an Erlang C staffing calculation. The latter is far more defensible precisely because it is deterministic.

Computational Speed

Deterministic calculations are fast. An Erlang C calculation takes microseconds. A day's staffing plan (48 intervals) takes milliseconds. Even a complex scheduling optimization with thousands of agents typically solves in minutes. This speed enables iterative planning — "what if AHT increases by 10%?" is a question that can be answered instantly with deterministic models. Probabilistic approaches (simulation, scenario analysis) are orders of magnitude slower, which limits their practical use in daily planning workflows.

Explainability

WFM operates in organizations where non-technical stakeholders make resource decisions. Deterministic models are explainable to these stakeholders. "We need 25 agents on the phones because we expect 120 calls at 5 minutes each in the half hour, and at that load, 25 agents gives us 80/20 service level" is a sentence a VP of Operations can understand and challenge. This explainability builds trust and enables productive conversations about trade-offs.

Sufficient Accuracy for Most Decisions

For the majority of WFM decisions — next week's schedule, today's staffing adjustments, this month's hiring plan — deterministic models provide sufficient accuracy. The error introduced by using point estimates rather than distributions is small relative to other sources of uncertainty (forecast error, absenteeism variance, AHT fluctuations). The marginal improvement from probabilistic methods often does not justify the added complexity for routine operational planning.[4]

Limitations and When to Upgrade

When Point Estimates Fail

Deterministic planning breaks down when input uncertainty is large relative to the decision at hand. Specific scenarios where deterministic methods become inadequate:

High-variance environments: If call volume has a coefficient of variation above 0.25 within an interval, the point forecast is a poor summary of what might actually happen. A deterministic staffing plan built on the mean will understaff roughly half the time and overstaff the other half, with no quantification of the risk in either direction.

Long planning horizons: A 12-month capacity plan based on deterministic point forecasts ignores the compounding uncertainty over time. The forecast for next Tuesday is reasonably reliable. The forecast for a Tuesday six months from now is not. Deterministic planning treats both with the same confidence, which leads to false precision in long-range plans. Capacity Planning Methods benefits significantly from probabilistic approaches for this reason.

Scenario planning: When leadership asks "what happens if volume grows 20% faster than expected?", they are asking a probabilistic question. A deterministic model can answer "if volume is X, we need Y agents" — but it cannot quantify the probability of volume being X, nor the cost distribution across likely scenarios.

Risk quantification: "What is the probability we will miss service level on Monday?" is a question deterministic models cannot answer. They can say "if everything goes as planned, we will meet service level" — but that conditional is doing heavy lifting.
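The coefficient-of-variation threshold mentioned under high-variance environments can be checked with a few lines of standard-library Python. The history below is hypothetical (the same interval observed over five weeks), and the 0.25 cut-off is the figure quoted above rather than a universal constant.

```python
from statistics import mean, stdev


def coefficient_of_variation(volumes):
    """Sample standard deviation divided by the mean."""
    return stdev(volumes) / mean(volumes)


# Tuesday 14:00-14:30 volumes over five weeks (hypothetical).
history = [150, 210, 180, 165, 195]
cv = coefficient_of_variation(history)
print(round(cv, 3), "high variance" if cv > 0.25 else "point forecast is a fair summary")
```

A check like this is itself deterministic; it simply flags the intervals where the point estimate hides the most risk.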

The Upgrade Path

The transition from deterministic to probabilistic planning is not binary. Practitioners can selectively introduce probabilistic elements where they add value:

- Add prediction intervals to forecasts and use the upper bound for staffing (simple, effective, slightly more expensive in labor)

- Run scenario analysis for capacity plans: best case, expected case, worst case, with probabilities assigned to each

- Use simulation for complex multi-skill routing environments where analytical models like Erlang C cannot capture queue interactions

- Apply Monte Carlo methods to long-range workforce plans to quantify budget risk

Each of these upgrades adds complexity and computational cost. The right approach is to use deterministic methods as the default and upgrade selectively when the decision stakes, planning horizon, or input uncertainty warrants it. The AI Scaffolding Framework positions these analytical methods at Layer 3 (Analytical Engine), where the choice between deterministic and probabilistic depends on the specific problem context.[5]
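The first upgrade in the list above, staffing to the upper bound of a prediction interval, can be sketched as follows. It assumes forecast errors are approximately normal, which is an assumption of this example rather than a claim of the article; the function name and the 90% coverage level are also illustrative.

```python
from statistics import NormalDist


def upper_bound_volume(point_forecast, forecast_sd, coverage=0.90):
    """One-sided upper bound: volume not exceeded with probability `coverage`."""
    z = NormalDist().inv_cdf(coverage)
    return point_forecast + z * forecast_sd


# Forecast of 180 calls with a standard deviation of 30.
planning_volume = upper_bound_volume(180, 30, coverage=0.90)
print(round(planning_volume, 1))
```

The inflated volume then flows through the same deterministic staffing calculation as before, so the only change to the pipeline is which number enters it.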

Tools and Implementation

Spreadsheets

Microsoft Excel and Google Sheets remain the most widely used tools for deterministic WFM planning. Erlang calculators, staffing models, and simple forecasts are commonly built and maintained in spreadsheets. This is not a criticism — for small to medium operations, spreadsheets provide transparency, flexibility, and accessibility that purpose-built software cannot match. The deterministic nature of the calculations makes them well-suited to spreadsheet implementation: every cell is a formula, every formula is visible, every result is reproducible.

Limitations emerge with scale. A 5,000-agent, multi-site, multi-skill operation cannot practically be planned in a spreadsheet — the optimization problem is too large for manual or even Solver-based approaches.

WFM Platform Solvers

Enterprise WFM platforms (NICE IEX, Verint, Calabrio, Aspect, Genesys) embed commercial optimization solvers that handle the scale problem. These solvers implement the linear and integer programming methods described under Linear and Integer Programming for Scheduling, along with proprietary heuristics tuned for WFM-specific problem structures.

From the practitioner's perspective, these are black-box deterministic engines: input a demand curve and a set of rules, receive a schedule. The deterministic nature means that re-running the optimization with the same inputs produces the same schedule (within solver tolerance), which supports the reproducibility and auditability requirements discussed earlier.

Python Libraries

For practitioners building custom models or extending platform capabilities, Python offers mature libraries for deterministic WFM calculations:

- scipy.optimize: General-purpose optimization including linear programming. Suitable for custom staffing models and simple scheduling problems.

- PuLP: A Python library for formulating and solving linear and integer programs. PuLP provides a clean interface for defining objective functions and constraints, and can call multiple backend solvers (CBC, CPLEX, Gurobi). See PuLP and Optimization for Scheduling for WFM-specific applications.

- OR-Tools: Google's open-source optimization suite, including constraint programming solvers well-suited to employee scheduling problems with complex constraint sets.

- statsmodels: Statistical modeling including ARIMA, exponential smoothing, and other time series methods used for deterministic forecasting.[6]

These tools make it practical for WFM teams to build and validate deterministic models outside of commercial platforms, enabling custom analysis, experimentation, and capability development.

Where AI and ML Fit

Artificial intelligence and machine learning do not replace deterministic WFM planning — they extend it. ML-based forecasting (gradient boosting, neural networks) produces point estimates just as classical methods do. The output is still deterministic at the point of use: one forecast number per interval. The model is more complex, but the planning paradigm remains the same.

Where ML genuinely changes the paradigm is when it produces probability distributions rather than point estimates (probabilistic forecasting) or when it enables real-time re-optimization that classical batch methods cannot support. These represent genuine upgrades from deterministic to probabilistic or adaptive planning — but they supplement rather than replace the deterministic foundation.[7]

Relationship to the AI Scaffolding Framework

The AI Scaffolding Framework organizes WFM analytical capabilities into layers, with Layer 3 (Analytical Engine) encompassing the mathematical models discussed in this article. Deterministic models form the base of Layer 3 — the established, well-understood techniques that have driven WFM planning for decades.

Within this framework, deterministic planning is not "old" or "inferior" — it is foundational. Probabilistic methods, machine learning, and adaptive algorithms are built on top of deterministic foundations, not instead of them. An ML-based forecasting model still feeds into Erlang-based staffing calculations. A simulation-based capacity plan still validates against deterministic baselines. Understanding deterministic planning deeply is a prerequisite for using advanced methods effectively.

See Also

- Deterministic vs Probabilistic Models — Conceptual distinction between paradigms

- Probabilistic Planning in WFM — The probabilistic counterpart to this article

- Erlang C — The foundational deterministic staffing model

- Erlang-A — Erlang with caller abandonment

- Schedule Optimization — Mathematical optimization for scheduling

- Scheduling Methods — Overview of scheduling approaches

- Forecasting Methods — Forecasting methodology survey

- Interval Level Staffing Requirements — Interval-level demand calculation

- Capacity Planning Methods — Long-range workforce capacity planning

- AI Scaffolding Framework — Analytical framework positioning these methods

- PuLP and Optimization for Scheduling — Practical optimization implementation

References

- [1] Erlang, A.K. (1917). "Solution of some Problems in the Theory of Probabilities of Significance in Automatic Telephone Exchanges." Elektroteknikeren, vol. 13.

- [2] Hillier, F.S. and Lieberman, G.J. (2021). Introduction to Operations Research, 11th edition. McGraw-Hill. Chapters 3-12.

- [3] Wolsey, L.A. (2020). Integer Programming, 2nd edition. Wiley. Chapters 1-3.

- [4] Gans, N., Koole, G., and Mandelbaum, A. (2003). "Telephone Call Centers: Tutorial, Review, and Research Prospects." Manufacturing & Service Operations Management, 5(2), 79-141.

- [5] Cleveland, B. (2012). Call Center Management on Fast Forward, 3rd edition. ICMI Press. Chapters 5-8.

- [6] Seabold, S. and Perktold, J. (2010). "Statsmodels: Econometric and Statistical Modeling with Python." Proceedings of the 9th Python in Science Conference.

- [7] Taylor, J.W. (2008). "A Comparison of Univariate Time Series Methods for Forecasting Intraday Arrivals at a Call Center." Management Science, 54(2), 253-265.