PuLP and Optimization for Scheduling

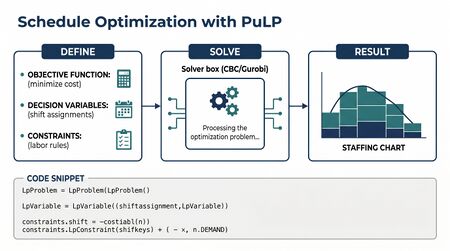

PuLP and Optimization for Scheduling is a practical guide to using mathematical optimization—specifically linear programming and mixed-integer programming—to solve schedule optimization problems in workforce management (WFM). While commercial WFM platforms include built-in solvers that handle the vast majority of scheduling scenarios, understanding how those solvers work—and being able to build custom formulations—gives practitioners a powerful diagnostic and prototyping capability. This page serves as the implementation companion to Deterministic Planning in WFM, grounding those theoretical concepts in working Python code using the PuLP library.

The ability to formulate a scheduling problem as a mathematical program is arguably the single most valuable technical skill a WFM analyst can develop beyond spreadsheet proficiency. It transforms scheduling from a black-box platform function into a transparent, auditable, and extensible analytical process. Even practitioners who never deploy a custom solver in production benefit from understanding what their platform's optimizer is actually doing when it generates a schedule.

What Is PuLP

PuLP is an open-source Python library for formulating and solving linear programming (LP) and mixed-integer programming (MIP) problems. Developed originally by Stuart Mitchell and maintained as part of the COIN-OR project, PuLP provides a high-level, readable syntax for defining optimization problems that would otherwise require specialized modeling languages like AMPL or GAMS.[1]

PuLP does not itself solve optimization problems. It is a modeling library: it translates a problem formulation into the standard matrix form that solvers consume, dispatches the problem to a solver, and returns the results. This separation of modeling from solving is important because it means the same PuLP formulation can be solved by different engines—from the bundled open-source CBC solver to commercial solvers like CPLEX and Gurobi—without changing a line of problem definition code.

Installation is trivial:

pip install pulp

The core workflow in PuLP follows four steps:

- Define the problem — create a problem object and specify whether it is a minimization or maximization.

- Create decision variables — define what the solver is allowed to choose (e.g., how many agents to assign to each shift).

- Add constraints — encode the rules that any feasible solution must satisfy (e.g., every interval must have at least n agents).

- Solve and extract results — invoke the solver and read back the optimal values of the decision variables.

This workflow maps directly to how schedule generation works inside commercial platforms. The platform's scheduler formulates the problem from configuration (shift rules, employee preferences, demand forecasts), solves it, and writes the result to the schedule. PuLP makes that same process explicit and programmable.

Mathematical Optimization Concepts for WFM

Before diving into code, a brief recap of the optimization concepts that underpin every WFM scheduling engine. These are covered more formally in operations research textbooks; what follows is the practitioner's translation.[2]

Decision Variables

Decision variables represent the choices the solver makes. In WFM scheduling, these typically include:

- Binary assignment variables — does agent i work shift j? (0 or 1)

- Integer staffing variables — how many agents are assigned to interval t?

- Continuous slack variables — how much understaffing exists at interval t?

The type of variable determines the problem class. If all variables are continuous and all constraints are linear, the problem is a linear program (LP), solvable in polynomial time. If some variables must be integers, the problem is a mixed-integer program (MIP), which is NP-hard in general but tractable for most WFM-scale problems with modern solvers.[3]

Objective Function

The objective function defines what "optimal" means. Common WFM objectives include:

- Minimize total labor cost — sum of (hours worked x pay rate) across all agents

- Minimize understaffing — sum of coverage gaps across all intervals

- Maximize schedule fairness — minimize the variance in shift quality across agents

- Minimize overstaffing — reduce idle agent time

Most real problems use a weighted combination: minimize cost subject to a penalty for understaffing. The weights encode business priorities—how much the organization values cost savings versus service level risk.

Constraints

Constraints encode the rules that any valid schedule must obey:

- Coverage constraints — each interval must have at least the required number of agents

- Availability constraints — agents can only work shifts within their availability windows

- Labor law constraints — maximum hours per week, minimum rest between shifts, consecutive day limits

- Skill constraints — only agents with skill s can cover demand for skill s

- Preference constraints — soft constraints on shift preferences, often modeled as terms in the objective

The art of schedule optimization lies in constraint formulation. A constraint that is too tight makes the problem infeasible (no valid schedule exists). A constraint that is too loose produces schedules that violate operational reality. Commercial platforms encode hundreds of constraint types; custom models start simple and add constraints as needed.

A Simple Staffing Example

Consider the most basic WFM optimization problem: given a demand curve across intervals, select the minimum-cost set of shifts to cover that demand. This is the core of what every schedule generation engine does.

Suppose a contact center operates from 08:00 to 20:00 in 30-minute intervals (24 intervals). The demand curve specifies the minimum number of agents required per interval, derived from interval-level staffing requirements. Three shift types are available: an 8-hour morning shift (08:00–16:00), an 8-hour mid shift (10:00–18:00), and an 8-hour evening shift (12:00–20:00).

import pulp

# Define intervals (08:00 to 20:00 in 30-min increments)

intervals = list(range(24))

interval_labels = [f"{8 + i // 2}:{(i % 2) * 30:02d}" for i in intervals]

# Demand: minimum agents required per interval

demand = [4, 5, 6, 8, 10, 12, 14, 15, 15, 14, 13, 12,

12, 13, 14, 14, 12, 10, 8, 6, 5, 4, 3, 3]

# Shift definitions: (name, cost, coverage vector)

shifts = {

"morning": {"cost": 200, "covers": [1]*16 + [0]*8},

"mid": {"cost": 210, "covers": [0]*4 + [1]*16 + [0]*4},

"evening": {"cost": 220, "covers": [0]*8 + [1]*16},

}

# Create problem

prob = pulp.LpProblem("MinCostStaffing", pulp.LpMinimize)

# Decision variables: how many of each shift to staff

shift_vars = {s: pulp.LpVariable(s, lowBound=0, cat="Integer") for s in shifts}

# Objective: minimize total cost

prob += pulp.lpSum(shifts[s]["cost"] * shift_vars[s] for s in shifts)

# Constraints: meet demand in every interval

for t in intervals:

prob += (

pulp.lpSum(shifts[s]["covers"][t] * shift_vars[s] for s in shifts) >= demand[t],

f"coverage_{interval_labels[t]}"

)

# Solve

prob.solve(pulp.PULP_CBC_CMD(msg=0))

# Results

print(f"Status: {pulp.LpStatus[prob.status]}")

print(f"Total cost: ${pulp.value(prob.objective):,.0f}")

for s in shifts:

print(f" {s}: {int(shift_vars[s].varValue)} agents")

This formulation contains the three essential elements: decision variables (how many of each shift), an objective function (minimize cost), and constraints (meet demand at every interval). The solver finds the combination of shift counts that satisfies all coverage requirements at the lowest total cost.

In practice, this is a dramatically simplified version of what platforms like NICE, Verint, or Calabrio solve. Real formulations include hundreds of shift patterns from a shift library, individual agent assignment rather than shift-count aggregation, and dozens of constraint families. But the structure is identical.

Break Optimization Example

Break optimization is one of the most common custom optimization problems in WFM. After shifts are assigned, lunch and rest breaks must be placed within each shift's allowable break windows while maintaining adequate coverage. This is the problem that most frequently drives WFM teams to explore custom solvers, because platform break optimizers sometimes produce suboptimal results for unusual shift configurations.

import pulp

# 12 intervals (e.g., 10:00-16:00 in 30-min blocks)

n_intervals = 12

n_agents = 8

# Staffing before breaks (all agents on-floor)

base_staff = [8] * n_intervals

demand = [6, 6, 7, 7, 8, 8, 7, 7, 6, 6, 5, 5]

# Break window: each agent's lunch can start in intervals 3-7

break_window = range(3, 8)

break_duration = 2 # 2 intervals = 1 hour lunch

prob = pulp.LpProblem("BreakOptimization", pulp.LpMinimize)

# Binary variable: agent i starts break at interval t

x = {}

for i in range(n_agents):

for t in break_window:

x[i, t] = pulp.LpVariable(f"break_{i}_{t}", cat="Binary")

# Understaffing slack variable per interval

under = {t: pulp.LpVariable(f"under_{t}", lowBound=0) for t in range(n_intervals)}

# Objective: minimize total understaffing

prob += pulp.lpSum(under[t] for t in range(n_intervals))

# Constraint: each agent takes exactly one break

for i in range(n_agents):

prob += pulp.lpSum(x[i, t] for t in break_window) == 1

# Constraint: coverage = base_staff minus agents on break >= demand - slack

for t in range(n_intervals):

agents_on_break = pulp.lpSum(

x[i, s]

for i in range(n_agents)

for s in break_window

if s <= t < s + break_duration

)

prob += base_staff[t] - agents_on_break + under[t] >= demand[t]

prob.solve(pulp.PULP_CBC_CMD(msg=0))

print(f"Status: {pulp.LpStatus[prob.status]}")

print(f"Total understaffing intervals: {pulp.value(prob.objective):.0f}")

for i in range(n_agents):

for t in break_window:

if x[i, t].varValue == 1:

print(f" Agent {i}: break at interval {t}")

The key modeling insight here is the use of binary decision variables. Each x[i, t] variable is 1 if agent i starts a break at interval t and 0 otherwise. The constraint that each agent takes exactly one break is expressed as the sum of that agent's binary variables equaling 1. The coverage constraint subtracts agents who are currently on break from the available staff, using the break duration to determine which start times affect which intervals.

This pattern—binary assignment variables with coverage tracking—is the backbone of virtually every WFM scheduling optimizer. Core-plus scheduling models use the same structure to assign flex shifts around a fixed spine.

Shift Selection Problem

The shift selection problem extends the simple staffing example to choose from a library of predefined shift patterns. This is closer to what real scheduling methods implement: rather than deciding how many agents work a generic "morning shift," the solver selects specific shift templates—each with defined start times, durations, and break placements—to cover a week's demand.

import pulp

# 48 intervals per day (15-min), simplified to one day

n_intervals = 48

# Demand curve (agents needed per 15-min interval, 06:00-18:00 mapped to 0-47)

demand = ([2]*4 + [4]*4 + [8]*4 + [12]*4 + [14]*4 + [15]*4 +

[14]*4 + [12]*4 + [10]*4 + [8]*4 + [5]*4 + [3]*4)

# Shift library: start_interval, duration_intervals, cost

shift_library = []

for start in range(0, 32, 2): # shifts can start every 30 min

for duration in [32, 34, 36]: # 8h, 8.5h, 9h shifts

if start + duration <= 48:

shift_library.append({

"id": f"shift_{start}_{duration}",

"start": start,

"duration": duration,

"cost": 15 * duration + (5 if start < 8 else 0), # early premium

"covers": [1 if start <= t < start + duration else 0

for t in range(n_intervals)]

})

prob = pulp.LpProblem("ShiftSelection", pulp.LpMinimize)

# Decision variable: number of agents assigned to each shift pattern

y = {s["id"]: pulp.LpVariable(s["id"], lowBound=0, cat="Integer")

for s in shift_library}

# Objective: minimize cost

prob += pulp.lpSum(s["cost"] * y[s["id"]] for s in shift_library)

# Coverage constraints

for t in range(n_intervals):

prob += (

pulp.lpSum(s["covers"][t] * y[s["id"]] for s in shift_library) >= demand[t],

f"cover_{t}"

)

prob.solve(pulp.PULP_CBC_CMD(msg=0))

selected = [(s["id"], int(y[s["id"]].varValue))

for s in shift_library if y[s["id"]].varValue > 0.5]

print(f"Status: {pulp.LpStatus[prob.status]}")

print(f"Total cost: {pulp.value(prob.objective):,.0f}")

for sid, count in sorted(selected):

print(f" {sid}: {count} agents")

The shift library approach is how most WFM platforms operate. Administrators define allowable shift patterns—specifying valid start times, durations, break windows, and activity sequences—and the optimizer selects the combination that best covers demand. The larger the shift library, the more flexibility the optimizer has, but also the more integer variables the solver must evaluate. A library of 200 shift patterns across 96 daily intervals creates a problem with 200 integer variables and 96 coverage constraints—well within the capability of CBC for a single day, though multi-week formulations with individual agent assignment scale to tens of thousands of variables.

Adding Labor Constraints

Real labor compliance scheduling requires constraints far beyond simple coverage. The following examples show how to encode common labor rules in PuLP. Each translates a human-readable rule into a mathematical constraint that the solver must respect.

Maximum Weekly Hours

# Agent i cannot work more than 40 hours (160 fifteen-minute intervals) per week

for i in agents:

prob += (

pulp.lpSum(

shift_duration[j] * x[i, j]

for j in shifts_available_to[i]

) <= 160,

f"max_hours_{i}"

)

Minimum Rest Between Shifts

# At least 11 hours (44 intervals) between end of one shift and start of next

for i in agents:

for d in range(n_days - 1):

for j1 in day_shifts[d]:

for j2 in day_shifts[d + 1]:

if shift_end[j1] + 44 > shift_start[j2]:

prob += x[i, j1] + x[i, j2] <= 1, f"rest_{i}_{j1}_{j2}"

Consecutive Day Limits

# No agent works more than 6 consecutive days

max_consecutive = 6

for i in agents:

for d in range(n_days - max_consecutive):

prob += (

pulp.lpSum(works_day[i, d + k] for k in range(max_consecutive + 1)) <= max_consecutive,

f"consec_{i}_{d}"

)

Multi-Skill Coverage

Multi-skill scheduling adds a dimension: demand is segmented by skill, and agents can only cover demand for skills they possess.

# Agent i assigned to skill s at interval t only if they have that skill

for i in agents:

for s in skills:

for t in intervals:

if s not in agent_skills[i]:

prob += assign[i, s, t] == 0, f"no_skill_{i}_{s}_{t}"

# Demand by skill must be met

for s in skills:

for t in intervals:

prob += (

pulp.lpSum(assign[i, s, t] for i in agents if s in agent_skills[i])

>= demand[s][t],

f"skill_cover_{s}_{t}"

)

These constraint patterns compose. A real formulation might include all of the above plus overtime rules, seniority-based shift bidding, minimum staffing floors, and union-negotiated schedule requirements. The power of the LP/MIP approach is that each rule adds constraints independently—the solver handles the interactions. This composability is why mathematical programming dominates WFM scheduling; heuristic approaches require re-engineering whenever a new rule is introduced.[4]

Solvers

PuLP ships with CBC (COIN-OR Branch and Cut), an open-source MIP solver that handles most WFM-scale problems adequately. For larger problems—multi-week schedules with hundreds of agents and complex constraint sets—commercial solvers offer significant performance advantages.

CBC (Default)

CBC is automatically installed with PuLP and requires no additional configuration. For problems with up to a few thousand integer variables (typical single-day or single-week scheduling for a team of 50–100 agents), CBC solves in seconds to minutes. Its performance degrades on problems with tens of thousands of integer variables or highly symmetric constraint structures.

# CBC is the default — no extra setup needed

prob.solve(pulp.PULP_CBC_CMD(msg=1, timeLimit=120))

CPLEX

IBM CPLEX is a commercial solver with a free academic license. It typically solves MIP problems 10–100x faster than CBC, which matters for large-scale scheduling runs. PuLP connects to CPLEX if it is installed on the system.

prob.solve(pulp.CPLEX_CMD(msg=1, timeLimit=300))

Gurobi

Gurobi is the other major commercial solver, generally considered the fastest for MIP problems. Like CPLEX, it offers free academic licenses. Gurobi's performance advantage over CBC is most pronounced on large, tightly constrained problems—exactly the profile of multi-site, multi-skill, multi-week scheduling.[5]

prob.solve(pulp.GUROBI_CMD(msg=1, timeLimit=300))

Solve Time Considerations

Solve time in MIP grows with the number of integer variables, the tightness of constraints, and the problem's symmetry structure. Practical guidelines for WFM problems:

- Single-day, aggregate shifts (the simple staffing example): seconds with any solver

- Single-day, individual agent assignment, 50 agents: seconds with CBC, sub-second with commercial

- Weekly schedule, 200 agents, complex constraints: minutes with CBC, seconds with commercial

- Multi-week, multi-site, 1000+ agents: may require commercial solver; CBC may not converge within reasonable time limits

When CBC runs too slowly, the first remedy is not switching solvers but simplifying the formulation. Reducing symmetry (e.g., ordering constraints on identical agents), relaxing non-critical constraints, or decomposing the problem by site or team often yields more improvement than a faster solver on the original formulation.

Google OR-Tools as Alternative

Google's OR-Tools is an open-source optimization suite that includes both a MIP solver (SCIP) and a constraint programming (CP) solver (CP-SAT). For certain classes of WFM problems, CP-SAT outperforms LP/MIP approaches.[6]

When CP-SAT Excels

Constraint programming is particularly well-suited for:

- Highly combinatorial problems with many discrete choices and few continuous variables — such as assigning specific agents to specific shifts with complex compatibility rules

- Problems dominated by logical constraints — "if agent works Monday morning, they cannot work Monday evening" is natural in CP, awkward in LP

- Scheduling with sequence-dependent rules — consecutive shift constraints, pattern-based rostering, and rotation requirements

- Feasibility problems — when the goal is finding any valid schedule rather than optimizing a cost function

When PuLP/LP Is Better

Linear programming remains the better choice when:

- The objective function is a clear, continuous cost metric (minimize labor cost, minimize deviation from demand)

- The problem has many continuous variables (staffing levels, overtime hours, partial shifts)

- The constraint structure is naturally linear and the problem benefits from LP relaxation bounds

- Integration with commercial solvers (CPLEX, Gurobi) is needed for performance

A CP-SAT Example

from ortools.sat.python import cp_model

model = cp_model.CpModel()

n_agents = 20

n_shifts = 3 # morning, mid, evening

n_days = 7

# Binary: agent i works shift s on day d

x = {}

for i in range(n_agents):

for d in range(n_days):

for s in range(n_shifts):

x[i, d, s] = model.new_bool_var(f"x_{i}_{d}_{s}")

# Each agent works at most one shift per day

for i in range(n_agents):

for d in range(n_days):

model.add(sum(x[i, d, s] for s in range(n_shifts)) <= 1)

# Each agent works at most 5 days per week

for i in range(n_agents):

model.add(sum(x[i, d, s] for d in range(n_days) for s in range(n_shifts)) <= 5)

# Coverage requirements per shift per day

demand = [[6, 8, 4]] * 7 # [morning, mid, evening] for each day

for d in range(n_days):

for s in range(n_shifts):

model.add(sum(x[i, d, s] for i in range(n_agents)) >= demand[d][s])

solver = cp_model.CpSolver()

solver.parameters.max_time_in_seconds = 30

status = solver.solve(model)

if status in (cp_model.OPTIMAL, cp_model.FEASIBLE):

for i in range(n_agents):

schedule = []

for d in range(n_days):

for s in range(n_shifts):

if solver.value(x[i, d, s]) == 1:

schedule.append(f"D{d}:S{s}")

if schedule:

print(f"Agent {i}: {', '.join(schedule)}")

Many practitioners use both tools: PuLP for cost-optimization problems where the LP relaxation provides good bounds, and CP-SAT for rostering and assignment problems where the constraint logic is the primary challenge. The two approaches are complementary, not competing.

Interpreting Results

Understanding solver output is as important as formulating the problem correctly. Three concepts matter most for WFM practitioners.

Optimal vs. Feasible

A solver returns one of several statuses:

- Optimal — the solver proved that no better solution exists. The returned solution is the global optimum.

- Feasible (or Integer Feasible) — the solver found a valid solution but could not prove it is optimal within the time limit. The solution satisfies all constraints but may not minimize (or maximize) the objective.

- Infeasible — no solution exists that satisfies all constraints simultaneously. This usually means the constraint set is too tight: the demand curve requires more agents than are available, or labor rules conflict with coverage requirements.

- Unbounded — the objective can be improved infinitely, which in WFM typically indicates a modeling error (e.g., a missing lower bound on a cost variable).

Infeasibility is the most common and most informative failure mode. When the solver reports infeasibility, the debugging process reveals which constraints are in tension—which is often more valuable than the schedule itself. Running an infeasibility analysis (identifying the irreducible infeasible subset, or IIS) tells the planner exactly which rules cannot be simultaneously satisfied, directly informing trade-off discussions with operations leadership.

The Optimality Gap

For MIP problems, the solver maintains two bounds during the search: the best feasible solution found so far (the incumbent) and a theoretical lower bound on the optimal value (derived from the LP relaxation). The gap is the percentage difference between these bounds:

gap = (incumbent - lower_bound) / incumbent x 100%

A gap of 0% means the solution is provably optimal. A gap of 1% means the solution is at most 1% worse than optimal. For most WFM applications, a gap of 1–2% is operationally insignificant—the uncertainty in the demand forecast dwarfs a 1% deviation in schedule cost. Setting a gap tolerance (e.g., fracGap=0.01 in CBC) often reduces solve time dramatically while sacrificing negligible solution quality.

# Accept solutions within 1% of optimal — dramatically reduces solve time

prob.solve(pulp.PULP_CBC_CMD(msg=1, fracGap=0.01))

Sensitivity and Shadow Prices

LP solvers produce dual values (shadow prices) for each constraint, indicating how much the objective would improve if that constraint were relaxed by one unit. In WFM terms: the shadow price on a coverage constraint tells you the marginal cost of requiring one additional agent in that interval. Intervals with high shadow prices are the expensive intervals to staff—they drive the total cost of the schedule. This information is directly actionable for capacity planning: if the shadow price at 10:00 AM is three times higher than at 2:00 PM, demand-shaping initiatives (callback scheduling, chat deflection) should target the morning peak.[2]

Shadow prices are only available for LP relaxations, not for integer solutions directly. To obtain them, solve the LP relaxation (set all integer variables to continuous), read the duals, then solve the full MIP. The LP shadow prices provide good directional guidance even though the integer solution may differ.

When to Build Custom vs. Use WFM Platform

Platform solvers—NICE, Verint, Calabrio, Alvaria, Genesys—handle approximately 90% of scheduling scenarios out of the box. They encode thousands of constraint types, handle multi-week horizons, integrate with real-time adherence, and manage the agent-facing experience (schedule publication, swap requests, preference bidding). Building a custom PuLP model to replace a platform scheduler is almost never the right answer.

Custom optimization is the right answer for:

- Validation and audit — building a simplified model of the platform's problem to verify that the platform's output is reasonable. If the platform says 150 agents and a simple LP says 120, the gap demands investigation.

- Edge-case scheduling — problems the platform does not natively support, such as novel shift structures, unusual labor rules for a specific jurisdiction, or hybrid human-multi-skill routing.

- Prototyping — testing whether a proposed shift design or schedule rule is feasible before configuring it in the platform. A 30-minute PuLP prototype can save days of platform configuration and testing.

- Scenario analysis — rapidly evaluating many what-if scenarios (different shift libraries, different demand curves, different constraint sets) to inform capacity planning decisions.

- Learning — understanding the mathematical structure of scheduling problems makes practitioners better users of platform optimizers. They write better shift rules, diagnose infeasibility faster, and communicate trade-offs more effectively.

The decision framework is straightforward: if the platform can do it, use the platform. If the platform cannot do it, or if you need to understand why the platform produces a particular result, build a custom model. PuLP's value is not as a platform replacement but as a thinking tool—a way to make the mathematics of scheduling explicit, inspectable, and modifiable.[7]

See Also

- Python for Workforce Management

- Deterministic Planning in WFM

- Schedule Optimization

- Schedule Generation

- Scheduling Methods

- Break Optimization

- Shift Design

- Labor Compliance Scheduling

- Multi-Skill Scheduling

- Interval Level Staffing Requirements

- Capacity Planning Methods

- Spine Shift Design and Core Plus Scheduling

References

- ↑ PuLP Documentation. COIN-OR. 2026-05-13.

- ↑ 2.0 2.1 Introduction to Operations Research. McGraw-Hill. 2021. ISBN 978-1-259-87205-0.

- ↑ Wolsey, Laurence A.. Integer Programming. Wiley. 2020. ISBN 978-1-119-60653-0.

- ↑ "Staff scheduling and rostering: A review of applications, methods and models". European Journal of Operational Research. 153(1): 3–27. 2004. doi:10.1016/S0377-2217(03)00095-X.

- ↑ Gurobi Optimization Benchmarks. Gurobi Optimization. 2026-05-13.

- ↑ Google OR-Tools Documentation. Google. 2026-05-13.

- ↑ Convex Optimization. Cambridge University Press. 2004. ISBN 978-0-521-83378-3.