Probabilistic Planning in WFM

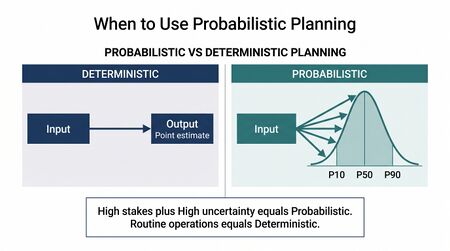

Probabilistic planning in workforce management (WFM) is the practice of building operational plans—forecasts, staffing models, schedules, and capacity strategies—that explicitly account for uncertainty by working with probability distributions, ranges, and confidence intervals rather than single point estimates. Where deterministic planning produces a single "best guess" output for each decision variable, probabilistic planning produces a distribution of possible outcomes along with their associated likelihoods, enabling planners to quantify risk, set confidence thresholds, and make decisions calibrated to the stakes involved.

Every contact center operates as a stochastic system. Calls arrive randomly. Handle times vary from interaction to interaction. Agents call in sick without warning. Customers abandon at unpredictable moments. The question is not whether uncertainty exists—it always does—but whether the planning methodology acknowledges it or sweeps it into an implicit assumption that the average will hold. Probabilistic planning makes the uncertainty explicit, measurable, and actionable.

This article covers the specific methods and applications of probabilistic thinking across the WFM workflow, from the distributional foundations that underpin contact center mathematics through simulation, forecasting, scheduling, and the emerging frontier of digital twins. For the conceptual distinction between deterministic and probabilistic modeling paradigms, see Deterministic vs Probabilistic Models. For the deterministic counterpart to this article, see Deterministic Planning in WFM.

Probability Distributions in WFM

The randomness in a contact center is not chaotic—it follows well-characterized probability distributions that have been studied for over a century in queueing theory and operations research. Understanding these distributions is the foundation of all probabilistic WFM work.

Arrival Distributions

Contact arrivals in most service environments are commonly modeled as a Poisson process, meaning that the number of arrivals in a given time interval follows a Poisson distribution and the time between successive arrivals follows an exponential distribution. The Poisson assumption underlies the Erlang C and Erlang-A formulas and is broadly supported by empirical studies of call center arrival data over short intervals.[1]

However, the Poisson assumption holds only within a single interval where the arrival rate is approximately constant. Across the day, arrival rates vary substantially, producing the familiar intraday pattern of peaks and troughs. Moreover, the arrival rate itself is uncertain: tomorrow's 10:00 AM interval might see 45 calls or 62 calls. This rate-level uncertainty makes observed interval counts overdispersed relative to a pure Poisson distribution (variance greater than the mean), which is why they are often modeled with a negative binomial distribution or another Poisson mixture. This "doubly stochastic" nature of arrivals (random arrivals at a random rate) is one of the primary motivations for moving beyond deterministic planning.
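As a rough illustration of the doubly stochastic idea, the sketch below compares counts drawn at a fixed rate with counts drawn at a rate that itself varies from day to day. The gamma distribution for the daily rate, its standard deviation of 6, and the helper names are assumptions chosen for illustration; a Poisson count with a gamma-distributed rate is one standard way to obtain a negative binomial.

```python
import random
import statistics

def poisson_draw(rng, lam):
    """Draw one Poisson(lam) count by counting exponential gaps that fit in a unit interval."""
    count, elapsed = 0, rng.expovariate(lam)
    while elapsed < 1.0:
        count += 1
        elapsed += rng.expovariate(lam)
    return count

def mixed_counts(rng, mean_rate, rate_sd, n_days):
    """For each day, draw that day's rate from a gamma distribution, then a Poisson count."""
    shape = (mean_rate / rate_sd) ** 2   # gamma shape implied by the mean and sd
    scale = rate_sd ** 2 / mean_rate     # gamma scale implied by the mean and sd
    return [poisson_draw(rng, rng.gammavariate(shape, scale)) for _ in range(n_days)]

rng = random.Random(42)
fixed = [poisson_draw(rng, 55.0) for _ in range(5000)]               # rate known exactly
mixed = mixed_counts(rng, mean_rate=55.0, rate_sd=6.0, n_days=5000)  # rate uncertain (assumed sd)

def dispersion(counts):
    """Variance-to-mean ratio: about 1 for Poisson, above 1 when overdispersed."""
    return statistics.variance(counts) / statistics.fmean(counts)

print(f"fixed rate:  variance/mean = {dispersion(fixed):.2f}")
print(f"random rate: variance/mean = {dispersion(mixed):.2f}")
```

Both series have roughly the same mean, but the second has a visibly larger variance-to-mean ratio, which is the signature that a single Poisson rate understates day-to-day variability.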

Service Time Distributions

Average handle time (AHT) is typically reported as a single number, but actual handle times vary widely across interactions. The distribution of individual service times is often right-skewed: most calls resolve relatively quickly, but a long tail of complex interactions pulls the average higher than the median. Common distributional models for service times include:

- Exponential distribution — assumed by Erlang C for mathematical tractability, though it poorly captures the heavy right tail observed in practice.

- Lognormal distribution — frequently provides a better empirical fit, capturing the right skew and heavy tail of real handle time data.

- Gamma distribution — another flexible two-parameter distribution used in simulation models for service times.

- Empirical distributions — simulation models can sample directly from historical handle time data without assuming any parametric form, preserving the exact shape of the distribution including any multimodality.

The choice of service time distribution matters most in simulation contexts (see Monte Carlo Simulation below), where the shape of the distribution—not just its mean—affects queueing behavior, agent utilization, and service level outcomes.
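To make the effect of shape concrete, the following sketch converts a mean and standard deviation into lognormal parameters by moment matching and then checks the skew by sampling. The figures (mean 360 seconds, standard deviation 120 seconds) and the function name are illustrative assumptions.

```python
import math
import random
import statistics

def lognormal_params(mean, sd):
    """Return (mu, sigma) of the underlying normal so the lognormal has the given mean and sd."""
    sigma_sq = math.log(1.0 + (sd / mean) ** 2)
    mu = math.log(mean) - sigma_sq / 2.0
    return mu, math.sqrt(sigma_sq)

mu, sigma = lognormal_params(mean=360.0, sd=120.0)
theoretical_median = math.exp(mu)   # the median of a lognormal is exp(mu)

rng = random.Random(7)
handle_times = [rng.lognormvariate(mu, sigma) for _ in range(20000)]
sample_mean = statistics.fmean(handle_times)
sample_median = statistics.median(handle_times)

print(f"mu={mu:.3f} sigma={sigma:.3f}")
print(f"theoretical median = {theoretical_median:.1f} s")
print(f"sample mean = {sample_mean:.1f} s, sample median = {sample_median:.1f} s")
```

The median falls below the mean, which matches the right-skew described above: a minority of long calls pulls the average up.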

Patience Distributions

Main article: Abandonment Rate Modeling and Patience Distributions

Customer patience—how long a caller is willing to wait before abandoning—follows its own distribution, which directly determines abandonment rates. The Erlang-A model extends Erlang C by incorporating an exponential patience distribution, but empirical research has shown that patience distributions are often better described by lognormal or Weibull distributions with a mass point at zero (callers who hang up immediately upon hearing a queue message).[2]

Probabilistic planning takes patience distributions seriously because the interaction between arrival rates, service times, and patience determines the feedback loop between staffing levels and achievable service level. A deterministic plan that assumes a fixed abandonment rate misses the dynamic: when queues grow, more callers abandon, which reduces the queue, which changes the service level. Only a distributional approach captures this correctly.
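One way to see why a fixed abandonment rate is a simplification is to compute the share of callers whose patience would be exhausted at different waits. The sketch below assumes a lognormal patience distribution with a mass point at zero; the 10% immediate-abandon share, the 90-second median, and the log-scale spread `sigma=1.0` are illustrative assumptions, not fitted values.

```python
import math
from statistics import NormalDist

def abandon_probability(wait_s, immediate_share=0.10, median_patience_s=90.0, sigma=1.0):
    """P(patience <= wait_s) under a zero-inflated lognormal patience model (assumed parameters)."""
    if wait_s <= 0:
        return immediate_share
    z = (math.log(wait_s) - math.log(median_patience_s)) / sigma
    return immediate_share + (1.0 - immediate_share) * NormalDist().cdf(z)

for wait in (0, 20, 60, 90, 180, 300):
    print(f"wait {wait:>3} s -> share of callers who would have abandoned: "
          f"{abandon_probability(wait):.0%}")
```

Because the share rises with the wait, the abandonment rate is an output of staffing decisions rather than a constant that can be fixed in advance.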

Monte Carlo Simulation

Main article: Discrete-Event vs. Monte Carlo Simulation Models

Monte Carlo simulation is the most widely applicable probabilistic planning technique in WFM. The method works by running thousands of simulated scenarios, each drawing random values from the relevant probability distributions, and then analyzing the resulting distribution of outcomes.

How It Works

A Monte Carlo simulation for contact center planning follows this general structure:

- Define input distributions. Specify probability distributions for each uncertain variable: arrival rate, handle time, patience, shrinkage, and any other relevant parameter.

- Generate random scenarios. For each simulation run (or "replication"), draw random values from each input distribution.

- Compute outcomes. For each scenario, calculate the operational metrics of interest: service level, average speed of answer, abandonment rate, agent occupancy, required staffing.

- Aggregate results. After thousands of replications, analyze the empirical distribution of each output metric.

The power of Monte Carlo simulation lies in the final aggregation step: instead of a single answer ("you need 42 agents"), the planner obtains a distribution ("you need between 38 and 48 agents, with a median of 42 and a 90th percentile of 46"). This distribution directly supports risk-calibrated decision-making.
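The four-part structure can be sketched in a few lines. This is a minimal illustration, not a production model: the input distributions, the beta parameters for shrinkage, the 85% target occupancy, and the workload-based requirement formula (which is not a queueing model) are all assumptions, so the resulting headcounts are not meant to match any figures quoted in this article.

```python
import math
import random
import statistics

def draw_scenario(rng):
    """Define and sample inputs: one random value per uncertain variable (assumed distributions)."""
    calls = max(0.0, rng.gauss(55.0, 8.0))              # calls per 30-minute interval
    aht_s = rng.lognormvariate(math.log(360.0), 0.10)   # interval-average AHT, median 360 s
    shrinkage = rng.betavariate(28.0, 72.0)             # mean 0.28
    return calls, aht_s, shrinkage

def required_agents(calls, aht_s, shrinkage, interval_s=1800.0, target_occupancy=0.85):
    """Compute the outcome: a simple workload-based headcount requirement."""
    workload_erlangs = calls * aht_s / interval_s
    on_phone = workload_erlangs / target_occupancy
    return math.ceil(on_phone / (1.0 - shrinkage))

def run(n_replications=10_000, seed=1):
    """Generate scenarios and collect the outcome of each replication."""
    rng = random.Random(seed)
    return [required_agents(*draw_scenario(rng)) for _ in range(n_replications)]

results = run()

# Aggregate: summarize the empirical distribution of the requirement.
deciles = statistics.quantiles(results, n=10)   # nine cut points: 10th, 20th, ..., 90th
p10, p50, p90 = deciles[0], deciles[4], deciles[8]
print(f"required agents: 10th pct={p10:.0f}, median={p50:.0f}, 90th pct={p90:.0f}")
```

Swapping `required_agents` for an Erlang calculation or a queue simulation changes the outcome step without altering the overall loop.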

A Practical WFM Example

Consider a planning team preparing staffing for a Monday morning peak interval. Historical data suggests:

- Arrival rate: Mean of 55 calls per half-hour, with a standard deviation of 8 (modeled as a normal or negative binomial distribution).

- Average handle time: Mean of 360 seconds, with individual call times following a lognormal distribution (mean 360, standard deviation 120).

- Patience: Lognormal with a median of 90 seconds and a 10% immediate-abandon rate.

- Shrinkage: Beta distribution with a mean of 28% and a range of 22%–35%.

The planner runs 10,000 Monte Carlo replications. For each replication, the simulation draws a random arrival rate, generates individual call arrivals and handle times from their distributions, simulates the queueing process, and records the resulting service level.

The output might show:

- Staffing to the mean forecast (42 agents): Achieves 80/20 service level in only 48% of simulated scenarios.

- Staffing to the 80th percentile (46 agents): Achieves 80/20 service level in 79% of scenarios.

- Staffing to the 90th percentile (48 agents): Achieves 80/20 service level in 89% of scenarios.

This information transforms the staffing conversation from "we need 42 agents" to "we need 42 agents to hit target on an average day, but 48 agents to hit target 9 days out of 10." The cost difference between 42 and 48 agents is the price of reliability, and that trade-off can now be made explicitly rather than discovered after the fact when service level misses accumulate.
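A compact version of this kind of experiment is sketched below using only the Python standard library. It is a simplified model under stated assumptions: `agents` means agents actually on the phones (shrinkage is ignored), each interval starts with an empty queue, callers are served first come, first served, service level is counted against all offered calls, and the patience spread of 1.0 on the log scale is assumed. Because of these simplifications the staffing levels it produces are not expected to reproduce the agent counts quoted above.

```python
import heapq
import math
import random

# Handle-time lognormal matched to a mean of 360 s and a standard deviation of 120 s.
SIGMA = math.sqrt(math.log(1.0 + (120.0 / 360.0) ** 2))
MU = math.log(360.0) - SIGMA ** 2 / 2.0

def simulate_interval(rng, agents, expected_calls, interval_s=1800.0):
    """Simulate one interval; return the share of offered calls answered within 20 s."""
    rate = expected_calls / interval_s
    free_at = [0.0] * agents               # min-heap of times at which each agent is next free
    offered = answered_fast = 0
    t = rng.expovariate(rate)
    while t < interval_s:
        offered += 1
        # 10% of callers have zero patience; the rest are lognormal with a 90 s median.
        if rng.random() < 0.10:
            patience = 0.0
        else:
            patience = rng.lognormvariate(math.log(90.0), 1.0)
        wait = max(0.0, free_at[0] - t)    # time until the earliest agent becomes free
        if wait <= patience:               # caller is still waiting when an agent frees up
            handle = rng.lognormvariate(MU, SIGMA)
            heapq.heapreplace(free_at, t + wait + handle)
            if wait <= 20.0:
                answered_fast += 1
        t += rng.expovariate(rate)
    return answered_fast / offered if offered else 1.0

def hit_probability(agents, replications=400, seed=3):
    """Share of replications in which the interval achieves at least 80% in 20 s."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(replications):
        expected_calls = max(1.0, rng.gauss(55.0, 8.0))   # rate-level uncertainty
        if simulate_interval(rng, agents, expected_calls) >= 0.80:
            hits += 1
    return hits / replications

for agents in (12, 14, 16, 18):
    print(f"{agents} agents on phones -> 80/20 met in {hit_probability(agents):.0%} of runs")
```

The output has the same form as the results listed above: a probability of meeting target for each candidate staffing level rather than a single required headcount.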

Tools for Monte Carlo simulation in WFM range from spreadsheet add-ins such as @RISK and Crystal Ball to dedicated simulation platforms like AnyLogic, Arena, and Simul8, as well as custom code using Python libraries (NumPy, SciPy, SimPy).[3]

Probabilistic Forecasting

Main article: Probabilistic Forecasting

Probabilistic forecasting extends the forecasting step of WFM beyond point predictions to produce full predictive distributions—or at minimum, prediction intervals—for each forecast period. Instead of forecasting "55 calls in the 10:00 AM interval," a probabilistic forecast might state "the 80% prediction interval for the 10:00 AM interval is [47, 64], with a median of 55."

Key Methods

Prediction intervals from time series models. Classical time series methods such as ARIMA and exponential smoothing produce prediction intervals as a natural byproduct of their statistical framework. These intervals widen as the forecast horizon extends, correctly reflecting increasing uncertainty about the future. Many WFM platforms suppress these intervals and display only the point forecast—a significant loss of information.

Quantile regression. Rather than modeling the conditional mean (as in ordinary regression), quantile regression models specific quantiles of the forecast distribution (e.g., the 10th, 50th, and 90th percentiles). This approach is particularly valuable when the forecast distribution is asymmetric—for example, when there is a long right tail of possible demand spikes.[4]
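Quantile regression is typically fitted by minimizing the pinball (quantile) loss. The sketch below shows the loss itself and, as a deliberately simplified stand-in for a regression, finds the constant prediction that minimizes it on a hypothetical history of interval volumes. The data and function names are assumptions for illustration only.

```python
def pinball_loss(actual, predicted, q):
    """Penalize under-prediction with weight q and over-prediction with weight (1 - q)."""
    diff = actual - predicted
    return q * diff if diff >= 0 else (q - 1.0) * diff

def best_constant_quantile(history, q):
    """Return the historical value that minimizes total pinball loss at level q."""
    candidates = sorted(set(history))
    return min(candidates, key=lambda c: sum(pinball_loss(y, c, q) for y in history))

# Hypothetical volumes for the same interval on eleven past Mondays.
history = [48, 50, 51, 53, 54, 55, 56, 58, 61, 66, 74]

p50 = best_constant_quantile(history, 0.5)
p90 = best_constant_quantile(history, 0.9)
print(f"loss-minimizing 50th percentile: {p50}")
print(f"loss-minimizing 90th percentile: {p90}")
```

A real quantile regression replaces the constant with a function of predictors (day of week, seasonality, campaigns) but optimizes the same asymmetric loss.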

Ensemble forecasting. Multiple forecasting models are run in parallel, and the spread of their predictions forms an empirical forecast distribution. If five models predict Monday 10:00 AM volume as [52, 54, 55, 57, 63], the ensemble mean is 56.2, but the range and distribution of the predictions also provide uncertainty information. Ensemble methods are increasingly accessible through machine learning frameworks and are a core component of Layer 3 AI implementations in WFM.
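The arithmetic for the five-model example can be checked directly. The summary statistics chosen here (median, range, sample standard deviation) are one reasonable way to describe ensemble spread, not the only one.

```python
import statistics

predictions = [52, 54, 55, 57, 63]   # the five model forecasts from the example

ensemble_mean = statistics.fmean(predictions)
ensemble_median = statistics.median(predictions)
ensemble_range = max(predictions) - min(predictions)
ensemble_sd = statistics.stdev(predictions)   # sample standard deviation across models

print(f"mean={ensemble_mean:.1f} median={ensemble_median} "
      f"range={ensemble_range} sd={ensemble_sd:.2f}")
```

The gap between the mean and the median reflects the single high prediction of 63, which is exactly the kind of asymmetry a point forecast hides.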

Bayesian forecasting. Bayesian approaches treat model parameters as uncertain and update their distributions as new data arrives. The posterior predictive distribution naturally incorporates both parameter uncertainty and observation noise, producing well-calibrated prediction intervals. This connects to the broader application of Bayesian methods in WFM.

Value for WFM Planning

Probabilistic forecasts feed directly into downstream planning decisions. A staffing plan built on the 50th percentile (median) forecast will understaff half the time by definition. A plan built on the 80th percentile forecast will understaff only 20% of the time. The choice of which percentile to staff against is an explicit risk-tolerance decision that becomes possible only when the forecast includes distributional information. See Staffing to Percentile vs Mean Forecast for an extended treatment.

Stochastic Scheduling

Main article: Probabilistic Scheduling

Stochastic scheduling extends scheduling optimization to account for uncertainty in both demand and supply. Traditional schedule optimization treats the staffing requirement for each interval as a fixed number and solves for the schedule that covers those requirements at minimum cost. Stochastic scheduling recognizes that both the requirements (driven by uncertain demand) and the actual coverage (affected by uncertain shrinkage, adherence, and absenteeism) are random variables.

Approaches

Robust scheduling. Finds schedules that perform acceptably across a range of demand scenarios rather than optimally for a single scenario. This may involve optimizing against the worst case within a defined uncertainty set, or minimizing expected cost subject to a constraint on worst-case service level.

Two-stage stochastic programming. The first stage makes scheduling decisions before uncertainty is resolved (e.g., which agents are assigned to which shifts). The second stage makes recourse decisions after uncertainty is revealed (e.g., voluntary overtime offers, early releases, skill-group reassignments). The objective is to minimize first-stage cost plus expected second-stage cost.[5]
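A very small instance of the two-stage idea can be solved by enumeration. In the sketch below the first-stage decision is the scheduled headcount; the second-stage recourse is overtime for any shortfall and early release for any surplus. The scenario probabilities, requirements, and cost parameters are hypothetical, and real formulations would be solved with a mathematical programming solver over full shift patterns.

```python
# (probability, agents required) for each demand scenario; values are hypothetical.
SCENARIOS = [(0.15, 34), (0.50, 40), (0.25, 46), (0.10, 58)]

BASE_COST = 1.0        # first-stage cost per scheduled agent
OVERTIME_COST = 1.6    # second-stage cost per agent of shortfall covered by overtime
RELEASE_CREDIT = 0.3   # second-stage saving per surplus agent released early

def expected_total_cost(scheduled):
    """First-stage cost plus the probability-weighted cost of recourse actions."""
    cost = BASE_COST * scheduled
    for probability, required in SCENARIOS:
        shortfall = max(0, required - scheduled)
        surplus = max(0, scheduled - required)
        cost += probability * (OVERTIME_COST * shortfall - RELEASE_CREDIT * surplus)
    return cost

best = min(range(30, 61), key=expected_total_cost)
expected_requirement = sum(p * r for p, r in SCENARIOS)

print(f"expected requirement: {expected_requirement:.1f} agents")
print(f"cost-minimizing schedule: {best} agents, "
      f"expected total cost {expected_total_cost(best):.2f}")
```

With these assumed costs the optimum sits below the expected requirement, because overtime recourse is cheap enough that it is not worth scheduling for the rarer high-demand scenarios.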

Chance-constrained scheduling. Requires that constraints (such as meeting the service level target) be satisfied with at least a specified probability rather than with certainty. For example, "the schedule must achieve 80/20 service level with at least 90% probability." This is computationally harder than deterministic scheduling, but it produces plans that are more realistic than single-scenario schedules and less conservative than worst-case robust ones.
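A sample-based approximation of a chance constraint can be sketched as follows: draw many joint scenarios of requirement and shrinkage, then find the smallest scheduled headcount that covers the requirement in at least the desired share of scenarios. The distributions and parameters are hypothetical, and the sketch treats a single interval rather than a full schedule.

```python
import random

def sample_scenarios(n, seed=11):
    """Draw (required on-phone agents, shrinkage) pairs from assumed distributions."""
    rng = random.Random(seed)
    scenarios = []
    for _ in range(n):
        required = max(1.0, rng.gauss(30.0, 3.0))   # hypothetical on-phone requirement
        shrinkage = rng.betavariate(28.0, 72.0)     # mean 0.28
        scenarios.append((required, shrinkage))
    return scenarios

def coverage_probability(scheduled, scenarios):
    """Share of scenarios in which available agents meet or exceed the requirement."""
    covered = sum(scheduled * (1.0 - shrinkage) >= required
                  for required, shrinkage in scenarios)
    return covered / len(scenarios)

def min_headcount(scenarios, confidence, upper=500):
    """Smallest scheduled headcount whose coverage probability reaches the confidence level."""
    for scheduled in range(1, upper + 1):
        if coverage_probability(scheduled, scenarios) >= confidence:
            return scheduled
    raise ValueError("no headcount up to `upper` satisfies the chance constraint")

scenarios = sample_scenarios(5000)
for confidence in (0.50, 0.80, 0.90, 0.95):
    print(f"coverage >= {confidence:.0%}: schedule {min_headcount(scenarios, confidence)} agents")
```

The confidence level plays the role of the probability in the chance constraint, so raising it from 50% to 90% shows directly how much extra headcount the added reliability costs.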

Simulation-optimization. Combines a simulation model with an optimization algorithm. The optimizer proposes candidate schedules; the simulation evaluates each schedule under stochastic conditions; the optimizer uses the simulation results to guide the search toward better schedules. This approach is computationally intensive but can handle arbitrary complexity in the stochastic model.

Practical Implications

Even without full stochastic scheduling optimization, WFM teams can incorporate probabilistic thinking into scheduling by:

- Building schedules against multiple demand scenarios (optimistic, expected, pessimistic) and selecting the schedule that balances cost and risk.

- Adding buffer staff in intervals with high forecast uncertainty rather than uniform buffers across the day.

- Using variance harvesting techniques to identify which intervals and days exhibit the most variability, and concentrating flexibility (part-time agents, voluntary overtime, cross-trained agents) in those periods.

Staffing to Percentiles

Main article: Staffing to Percentile vs Mean Forecast

The concept of staffing to a percentile rather than the mean forecast is one of the most immediately actionable applications of probabilistic planning. It bridges the gap between probabilistic forecasting and deterministic staffing calculations.

The Core Idea

If the forecast for an interval is described by a distribution (say, mean of 55 calls with a standard deviation of 8), then staffing to the mean forecast of 55 calls will result in understaffing approximately half the time—every day that actual volume exceeds 55. Staffing to the 80th percentile (approximately 62 calls in this example) means the plan will be adequate for 80% of realizations.
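Assuming the forecast distribution is normal with the stated mean of 55 and standard deviation of 8, the percentiles can be read off with Python's `statistics.NormalDist`. The normal shape is an assumption; a skewed forecast distribution would give different values.

```python
from statistics import NormalDist

forecast = NormalDist(mu=55.0, sigma=8.0)   # assumed normal forecast distribution

for level in (0.50, 0.80, 0.90, 0.95):
    print(f"{level:.0%} percentile: {forecast.inv_cdf(level):.1f} calls")

p80 = forecast.inv_cdf(0.80)
probability_above_mean = 1.0 - forecast.cdf(55.0)
print(f"P(volume > 55) = {probability_above_mean:.0%}")
```

The 80th percentile comes out just under 62 calls, consistent with the approximation used in the text.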

The choice of percentile is a business decision that depends on:

- Cost of failure. High-stakes intervals (revenue-generating campaigns, compliance-sensitive queues) warrant higher percentiles.

- Cost of overstaffing. If surplus agents can be productively redeployed (through multiskilling, back-office work, or training), the marginal cost of overstaffing is lower, favoring higher percentile targets.

- Service level agreements. Contractual SLAs with financial penalties push toward higher percentiles.

- Planning horizon. Longer horizons carry more uncertainty, potentially warranting higher percentiles to absorb forecast error.

Implementation

Implementing percentile-based staffing requires two capabilities that most WFM platforms support but few practitioners use to their full potential:

- A forecasting method that produces distributional output (prediction intervals or quantiles), not just a point forecast.

- A staffing calculation that can be applied to any point on the forecast distribution, not just the mean.

The staffing calculation itself can remain deterministic (e.g., Erlang C). What changes is the input to that calculation: instead of plugging in the mean forecast, the planner plugs in the desired percentile of the forecast distribution. This is a straightforward way to inject probabilistic thinking into an otherwise deterministic workflow.
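The sketch below feeds two different volume inputs, the mean (55 calls) and the approximate 80th percentile (62 calls), through the same deterministic Erlang C calculation. The 360-second AHT, 30-minute interval, and 80/20 target are assumptions, the result is on-phone agents before shrinkage, and the function names are illustrative.

```python
import math

def erlang_c_wait_probability(agents, load):
    """Probability that a call has to wait, for `agents` servers and `load` erlangs."""
    if agents <= load:
        return 1.0
    blocking = 1.0
    for n in range(1, agents + 1):          # Erlang B recursion
        blocking = load * blocking / (n + load * blocking)
    return agents * blocking / (agents - load * (1.0 - blocking))

def service_level(agents, load, aht_s, threshold_s):
    """Share of calls answered within threshold_s under Erlang C assumptions."""
    wait_probability = erlang_c_wait_probability(agents, load)
    return 1.0 - wait_probability * math.exp(-(agents - load) * threshold_s / aht_s)

def agents_required(calls, interval_s=1800.0, aht_s=360.0, target=0.80, threshold_s=20.0):
    """Smallest agent count that meets the service level target."""
    load = calls * aht_s / interval_s
    agents = max(1, math.ceil(load))
    while service_level(agents, load, aht_s, threshold_s) < target:
        agents += 1
    return agents

at_mean = agents_required(55.0)
at_p80 = agents_required(62.0)
print(f"staffing to the mean (55 calls): {at_mean} on-phone agents")
print(f"staffing to the 80th percentile (62 calls): {at_p80} on-phone agents")
```

Only the `calls` argument changes between the two runs, which is the point: the staffing engine stays deterministic while the input carries the risk decision.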

Scenario Planning and Sensitivity Analysis

Main article: Scenario Planning and Contingency Staffing

Scenario planning is the most accessible entry point to probabilistic thinking for WFM teams that are not yet ready for full distributional modeling. Rather than working with continuous probability distributions, scenario planning works with a discrete set of named scenarios, each representing a plausible future state of the world.

Scenario Construction

A well-constructed scenario set typically includes:

- Base case — the most likely outcome, corresponding roughly to the median forecast.

- High-demand scenario — a plausible increase in volume (e.g., 15–20% above base), representing a busy period, successful marketing campaign, or service disruption driving increased contacts.

- Low-demand scenario — a plausible decrease (e.g., 10–15% below base), representing seasonal softness or the effect of a successful self-service deflection initiative.

- Stress scenario — an extreme but not impossible outcome (e.g., +40–50%), representing a crisis event, system outage, or product recall.

Each scenario can be assigned a subjective probability (e.g., base case 50%, high demand 25%, low demand 15%, stress 10%), turning the discrete scenario set into a crude probability distribution over outcomes.
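Treating the scenario set as a discrete distribution makes simple expected-value calculations possible. The sketch uses the example probabilities above and, as an assumption, the midpoint of each stated range as the volume multiplier.

```python
# name: (subjective probability, volume multiplier relative to base)
# Multipliers are midpoints of the example ranges (an assumption for illustration).
SCENARIOS = {
    "base":   (0.50, 1.000),
    "high":   (0.25, 1.175),
    "low":    (0.15, 0.875),
    "stress": (0.10, 1.450),
}

total_probability = sum(p for p, _ in SCENARIOS.values())
expected_multiplier = sum(p * m for p, m in SCENARIOS.values())
probability_above_base = sum(p for p, m in SCENARIOS.values() if m > 1.0)

print(f"probabilities sum to {total_probability:.2f}")
print(f"expected volume = {expected_multiplier:.3f} x base")
print(f"P(volume above base) = {probability_above_base:.0%}")
```

Even though the base case is the single most likely scenario, the probability-weighted volume sits above base because the upside scenarios are larger and more likely than the downside one.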

Sensitivity Analysis

Sensitivity analysis complements scenario planning by systematically varying individual input parameters while holding others constant to understand which inputs most influence outcomes. In WFM, the most impactful sensitivity parameters are typically:

- Volume forecast accuracy — the single largest source of uncertainty in most contact centers.

- Shrinkage rate — particularly when shrinkage has a wide historical range.

- Average handle time — especially during product launches, system changes, or agent skill-mix shifts.

- Attrition rate — for capacity planning horizons, small changes in attrition compound rapidly.

Tornado charts (showing the impact of each parameter on the outcome) and spider plots (showing how the outcome changes as each parameter varies across its range) are standard visualization tools for sensitivity analysis. These are available in spreadsheet add-ins (@RISK, Crystal Ball) and in dedicated simulation platforms.
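The data behind a tornado chart comes from a one-at-a-time sweep: each input is moved to its low and high value while the others stay at baseline, and inputs are ranked by the resulting swing. The requirement formula, baseline values, and ranges below are hypothetical.

```python
def required_fte(volume, aht_s, shrinkage, occupancy=0.85, interval_s=1800.0):
    """Simple workload-based requirement used as the model under test (an assumption)."""
    return volume * aht_s / interval_s / occupancy / (1.0 - shrinkage)

BASELINE = {"volume": 55.0, "aht_s": 360.0, "shrinkage": 0.28}
RANGES = {                      # hypothetical low/high values for each input
    "volume": (47.0, 63.0),
    "aht_s": (330.0, 390.0),
    "shrinkage": (0.22, 0.35),
}

def tornado_rows(baseline, ranges):
    """Vary one input at a time and return rows sorted by output swing, largest first."""
    rows = []
    for name, (low, high) in ranges.items():
        low_out = required_fte(**{**baseline, name: low})
        high_out = required_fte(**{**baseline, name: high})
        rows.append((name, low_out, high_out, abs(high_out - low_out)))
    return sorted(rows, key=lambda row: row[3], reverse=True)

rows = tornado_rows(BASELINE, RANGES)
print(f"baseline requirement: {required_fte(**BASELINE):.2f} FTE")
for name, low_out, high_out, swing in rows:
    print(f"{name:<10} low={low_out:6.2f} high={high_out:6.2f} swing={swing:5.2f}")
```

Plotting each row as a horizontal bar from the low output to the high output, in this sorted order, gives the familiar tornado shape.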

Digital Twins and Continuous Simulation

Main article: Workforce Digital Twins and Continuous Planning

The frontier of probabilistic planning in WFM is the workforce digital twin: a continuously updated simulation model that mirrors the real contact center operation in near-real time.

Architecture

A workforce digital twin integrates:

- Live data feeds — real-time arrival rates, current staffing, agent states, queue depths, and handle times.

- A simulation engine — typically a discrete-event simulation model that can project forward from the current state.

- Probabilistic inputs — distributional assumptions for future arrivals, handle times, and agent availability, updated continuously based on the latest data.

- Decision support outputs — projected service levels, staffing recommendations, and risk assessments for the remainder of the day or planning period.

Unlike traditional planning, which runs periodically (weekly or daily), a digital twin runs continuously, re-simulating the future every few minutes as new data arrives. This transforms probabilistic planning from a periodic exercise into an ongoing process.

Applications

- Intraday management. The digital twin projects the probability distribution of end-of-day service level given current conditions, enabling proactive intervention (overtime offers, skill-group reassignment, queue prioritization changes) before problems materialize.

- What-if analysis. Planners can test interventions in the simulation before implementing them in reality: "If we release 5 agents early at 2:00 PM, what is the probability that we still meet service level for the rest of the day?"

- Anomaly detection. Deviations between the digital twin's predictions and actual outcomes signal that something has changed in the underlying system—a shift in arrival patterns, a new agent cohort with different handle times, or a technology issue affecting agent productivity.

Digital twins represent the convergence of probabilistic planning, real-time data, and artificial intelligence. They are an advanced application that requires significant data infrastructure, but the conceptual framework—continuously updated probabilistic simulation—is the logical endpoint of the methods described throughout this article.

Bayesian Methods in WFM

Bayesian statistics provides a formal framework for updating beliefs about uncertain quantities as new evidence arrives. In WFM, Bayesian methods are particularly valuable because operational environments are constantly generating new data that should inform planning assumptions.

The Bayesian Framework

The core Bayesian idea is simple: start with a prior distribution (what you believe before seeing new data), observe data, and compute a posterior distribution (your updated belief after incorporating the data). The update follows Bayes' theorem:

$$P(\theta \mid \text{data}) \propto P(\text{data} \mid \theta) \times P(\theta)$$

where θ represents the uncertain parameter (e.g., the true average arrival rate for Monday mornings), P(θ) is the prior distribution, P(data | θ) is the likelihood of the observed data given the parameter, and P(θ | data) is the posterior distribution.

Practical Applications

Updating arrival rate estimates. At the start of a shift, the planner's forecast represents a prior belief about today's volume. As actual calls arrive, the Bayesian framework provides a principled way to update the forecast in real time. If the first hour runs 15% above forecast, the posterior distribution for the remainder of the day shifts upward—but by how much depends on the historical correlation between early-day and late-day volumes, which is captured in the prior.
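A minimal version of this update uses a gamma prior on a day-level volume multiplier with a Poisson likelihood, which has a closed-form posterior. The model is a simplifying assumption (one multiplier scales every interval of the day), and the prior strength of 50, the forecasts, and the function name are hypothetical.

```python
import math

def update_day_multiplier(prior_shape, prior_rate, forecast_so_far, actual_so_far):
    """Gamma-Poisson conjugate update: actual ~ Poisson(multiplier * forecast)."""
    return prior_shape + actual_so_far, prior_rate + forecast_so_far

# Prior: multiplier has mean 1.0 and sd of about 0.14 (shape = rate = 50, an assumption).
prior_shape, prior_rate = 50.0, 50.0

# First hour: forecast 100 calls, observed 115 (15% above forecast).
shape, rate = update_day_multiplier(prior_shape, prior_rate,
                                    forecast_so_far=100.0, actual_so_far=115)

posterior_mean = shape / rate
posterior_sd = math.sqrt(shape) / rate
remaining_forecast = 400.0                      # hypothetical forecast for the rest of the day
updated_remaining = posterior_mean * remaining_forecast

print(f"posterior multiplier: mean={posterior_mean:.3f}, sd={posterior_sd:.3f}")
print(f"rest-of-day forecast: {remaining_forecast:.0f} -> {updated_remaining:.0f} calls")
```

The 15% overrun moves the rest-of-day forecast up by 10%, not 15%, because the prior still carries weight; a larger prior shape would damp the adjustment further, and a smaller one would follow the morning more closely.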

New product or channel forecasting. When launching a new product or communication channel with no historical data, the prior might be based on analogous products, industry benchmarks, or expert judgment. As initial data arrives, the posterior rapidly concentrates around the emerging empirical pattern. This is a more principled approach than the common practice of "waiting for enough data" before forecasting.

Shrinkage and attrition estimation. Shrinkage and attrition rates fluctuate seasonally, and a Bayesian approach can weight recent data more heavily while still incorporating longer-term patterns. This produces estimates that are more responsive to genuine changes without overreacting to noise—a persistent challenge with simple moving averages.

Agent performance modeling. A new agent's "true" handle time and quality metrics are uncertain after only a few weeks of data. A Bayesian model that combines a prior (based on the cohort average for agents at the same tenure) with the individual's observed data produces more stable and accurate estimates than raw individual metrics, particularly in the critical early weeks when sample sizes are small.
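The blending of cohort prior and individual data can be illustrated with a normal-normal update on an agent's mean handle time. Treating the sample mean as approximately normal is an assumption, as are the cohort figures and the call-level standard deviation used here.

```python
import math

def posterior_mean_aht(prior_mean, prior_sd, sample_mean, call_sd, n_calls):
    """Precision-weighted blend of the cohort prior and the agent's observed mean AHT."""
    prior_precision = 1.0 / prior_sd ** 2
    data_precision = n_calls / call_sd ** 2
    total_precision = prior_precision + data_precision
    mean = (prior_mean * prior_precision + sample_mean * data_precision) / total_precision
    return mean, math.sqrt(1.0 / total_precision)

# Hypothetical new agent: cohort mean 420 s (sd 40 s between agents),
# observed mean of 500 s with call-to-call sd of 200 s.
early_mean, early_sd = posterior_mean_aht(420.0, 40.0, 500.0, 200.0, n_calls=30)
later_mean, later_sd = posterior_mean_aht(420.0, 40.0, 500.0, 200.0, n_calls=300)

print(f"after 30 calls:  estimate {early_mean:.0f} s (sd {early_sd:.0f} s)")
print(f"after 300 calls: estimate {later_mean:.0f} s (sd {later_sd:.0f} s)")
```

With few calls the estimate is pulled toward the cohort average; as calls accumulate, the agent's own data dominates and the uncertainty narrows.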

Bayesian vs. Frequentist in Practice

WFM practitioners need not engage deeply with the philosophical debate between Bayesian and frequentist statistics. The practical advantage of Bayesian methods in WFM is their natural handling of sequential updating (new data refines existing beliefs rather than requiring analysis to restart from scratch) and their ability to incorporate domain knowledge through priors. The disadvantage is computational: Bayesian methods often require Markov chain Monte Carlo (MCMC) sampling, which is slower than closed-form frequentist calculations. However, modern probabilistic programming languages (Stan, PyMC) have made Bayesian computation accessible to practitioners with intermediate programming skills.

When Probabilistic Approaches Add Value vs. Add Complexity

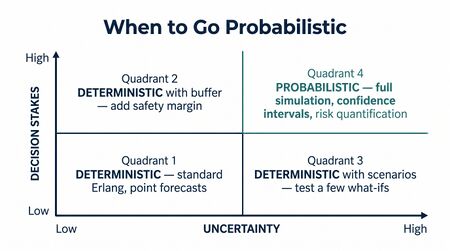

Not every WFM problem warrants a probabilistic approach. The decision framework below helps practitioners determine when distributional thinking justifies its additional complexity.

High-Value Applications (Probabilistic Recommended)

- High-stakes decisions with high uncertainty. Capacity planning for a new line of business, staffing for a product launch with unknown demand, or building a contingency plan for a crisis scenario. The cost of being wrong is high, and the uncertainty is wide enough that point estimates are dangerously misleading.

- Long planning horizons. Annual capacity plans, budget forecasts, and strategic headcount models. Uncertainty compounds over time, and deterministic long-range plans create a false sense of precision that can lead to poor investment decisions.

- Performance attribution. Determining whether a change in service level is due to a genuine operational issue or normal statistical variation. Variance harvesting requires distributional thinking to separate signal from noise.

- SLA risk management. When contractual penalties for service level misses create asymmetric cost functions (the cost of understaffing exceeds the cost of overstaffing by a large margin), probabilistic staffing to a high percentile is the appropriate response.

- Simulation-based capacity planning. Discrete-event simulation and Monte Carlo methods are inherently probabilistic and provide insights—such as the distribution of wait times, the probability of queue overflow, and the sensitivity of service level to staffing changes—that deterministic models cannot.[6]

Low-Value Applications (Deterministic Sufficient)

- Routine intraday staffing calculations. When forecast uncertainty is low (e.g., a mature, stable queue with years of history and low variance), the prediction interval is narrow enough that staffing to the mean is functionally equivalent to staffing to any reasonable percentile. The additional complexity of distributional staffing adds negligible value.

- Hard-constraint compliance checks. Labor law compliance (break rules, maximum consecutive days, overtime limits) is binary—pass or fail—and does not benefit from probabilistic treatment.

- Short-term scheduling in stable environments. When the planning horizon is short (same-day or next-day) and the environment is stable, the forecast is accurate enough that deterministic scheduling optimization produces near-optimal results.

- Low-stakes decisions. Decisions where the cost of being moderately wrong is small (e.g., allocating back-office staff between two low-priority queues) do not warrant the overhead of simulation.

The Decision Matrix

| | Low Uncertainty | High Uncertainty |
|---|---|---|
| High Stakes | Deterministic is acceptable but probabilistic adds insurance | Probabilistic strongly recommended |
| Low Stakes | Deterministic is sufficient | Probabilistic is optional; monitor for changes |

The goal is not to make everything probabilistic but to reserve distributional thinking for the decisions where uncertainty is large enough and stakes are high enough that the additional insight justifies the effort. As forecasting methods and tools improve, the cost of probabilistic approaches continues to decrease, shifting the threshold in favor of probabilistic planning for an expanding range of applications.

Tools and Implementation

Probabilistic planning does not require exotic technology. The methods described in this article can be implemented across a range of tools, from spreadsheets to dedicated simulation platforms.

Spreadsheet Add-Ins

- @RISK (Palisade/Lumivero) — adds Monte Carlo simulation to Microsoft Excel. Users define probability distributions for input cells, run simulations, and analyze output distributions. Widely used in operations research and financial modeling.

- Crystal Ball (Oracle) — similar functionality to @RISK with tight Excel integration. Includes optimization under uncertainty (OptQuest) and scenario analysis tools.

Both tools are accessible to WFM analysts who are proficient in Excel and provide a low-barrier entry point to Monte Carlo simulation without programming.

Programming Languages

Python is the dominant language for probabilistic WFM work, with a rich ecosystem of relevant libraries:

- NumPy (numpy.random) — random number generation from a wide range of probability distributions.

- SciPy (scipy.stats) — probability distribution fitting, statistical tests, and distributional functions.

- SimPy — a process-based discrete-event simulation library well-suited to modeling contact center queueing systems.

- PyMC — a probabilistic programming library for Bayesian modeling, including MCMC sampling and variational inference.

- Prophet / NeuralProphet — time series forecasting libraries that produce prediction intervals alongside point forecasts.

R offers comparable capabilities through packages such as forecast (Hyndman's forecasting toolkit, which produces prediction intervals by default), brms (Bayesian regression), and simmer (discrete-event simulation).[7]

Simulation Platforms

Main article: Simulation Software

Dedicated simulation platforms provide visual modeling environments for building and running complex stochastic models without extensive programming:

- AnyLogic — supports discrete-event, agent-based, and system dynamics simulation. Used for contact center capacity planning and what-if analysis.

- Arena (Rockwell Automation) — a long-established discrete-event simulation platform with built-in statistical analysis.

- Simul8 — focused on process simulation with an accessible drag-and-drop interface.

- SIMIO — object-oriented simulation with built-in optimization capabilities.

These platforms are most valuable for discrete-event simulation of complex contact center environments with multiple skill groups, priority routing, and callback queues, where the queueing dynamics exceed what Erlang-based formulas can capture.

WFM Platform Capabilities

Major WFM platforms (NICE, Verint, Calabrio, Genesys) are increasingly incorporating probabilistic features:

- Forecast confidence bands (upper and lower bounds around the point forecast).

- Scenario comparison tools (comparing staffing plans across multiple demand scenarios).

- Simulation-based capacity planning modules.

However, most WFM platforms still default to deterministic workflows, and probabilistic features are often buried in advanced configuration. Practitioners who want to work probabilistically may need to supplement their platform with external simulation or analytical tools.

Relationship to Deterministic Planning

Main article: Deterministic Planning in WFM

Probabilistic and deterministic planning are not competing approaches—they are complementary layers in a mature WFM practice. Deterministic methods provide the operational backbone: the daily staffing calculations, schedule optimization, and compliance checks that keep the contact center running. Probabilistic methods provide the risk layer: the uncertainty quantification, scenario analysis, and confidence calibration that improve decision quality.

A common and effective pattern is to use probabilistic methods upstream (distributional forecasting, simulation-based capacity planning) and deterministic methods downstream (Erlang-based staffing, LP-based scheduling). The probabilistic outputs feed the deterministic engines—for example, a probabilistic forecast produces a percentile-based volume input that is then consumed by a deterministic staffing formula. This hybrid approach captures most of the value of probabilistic planning without requiring a fully stochastic end-to-end workflow.

The Deterministic vs Probabilistic Models article explores the conceptual relationship between these paradigms, including the important distinction between a model's execution behavior and the meaning of its outputs. The AI Scaffolding Framework provides a maturity model for integrating probabilistic methods into WFM technology stacks.

See Also

- Deterministic vs Probabilistic Models

- Deterministic Planning in WFM

- Probabilistic Forecasting

- Probabilistic Scheduling

- Staffing to Percentile vs Mean Forecast

- Discrete-Event vs. Monte Carlo Simulation Models

- Discrete Event Simulation for Workforce Capacity Planning

- Simulation Software

- Scenario Planning and Contingency Staffing

- Variance Harvesting

- Erlang C

- Erlang-A

- Forecasting Methods

- Capacity Planning Methods

- Workforce Digital Twins and Continuous Planning

- AI Scaffolding Framework

- Machine Learning Concepts

- Artificial Intelligence Fundamentals

- Service Level

- Abandonment Rate Modeling and Patience Distributions

References

- [1] "Telephone Call Centers: Tutorial, Review, and Research Prospects". Manufacturing & Service Operations Management. 5(2): 79–141. 2003. doi:10.1287/msom.5.2.79.16071.

- [2] "Statistical Analysis of a Telephone Call Center: A Queueing-Science Perspective". Journal of the American Statistical Association. 100(469): 36–50. 2005. doi:10.1198/016214504000001808.

- [3] Law, Averill M. Simulation Modeling and Analysis. McGraw-Hill Education. 2015. ISBN 978-0-07-340132-4.

- [4] Forecasting: Principles and Practice. OTexts. 2021. ISBN 978-0-9875071-3-6.

- [5] Introduction to Stochastic Programming. Springer. 2011. ISBN 978-1-4614-0236-7.

- [6] "Call Center Simulation Modeling: Methods, Challenges, and Opportunities". Proceedings of the 2003 Winter Simulation Conference: 135–143. 2003. doi:10.1109/WSC.2003.1261415.

- [7] Automatic Time Series Forecasting: The forecast Package for R. 2008.