Workforce Digital Twins and Continuous Planning

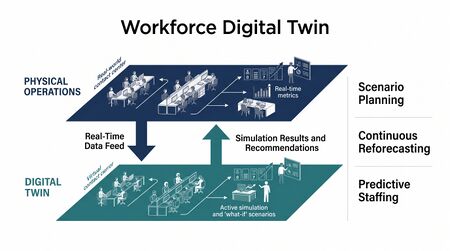

A workforce digital twin is a continuously updated virtual representation of an organization's workforce system (its people, skills, workload patterns, operational processes, and performance dynamics) that enables real-time analysis, scenario simulation, and optimization without intervening in the live operation. The concept extends digital twin theory from its origins in manufacturing and engineering into the domain of human capital and workforce management. Unlike dashboards, which report historical or current-state metrics, or simulation models, which are run periodically against static snapshots of data, a workforce digital twin maintains persistent synchronization with live operational systems and can evaluate the consequences of decisions before they are enacted. Continuous planning is the operational paradigm enabled by a mature workforce digital twin: forecasts are updated hourly rather than weekly, schedules keep adjusting after publication rather than being fixed once published, and capacity plans are treated as living documents rather than quarterly artifacts.

Conceptual Foundations

The digital twin concept was formally articulated by Grieves and Vickers (2017), who defined it as a set of virtual information constructs that fully describe a physical manufactured product — with the critical addition of a bidirectional data link maintaining synchronization between the physical object and its virtual counterpart.[1] Tao et al. (2019) extended the framework to industrial systems, proposing a five-dimension model: physical entity, virtual entity, services, data, and connections — with the data dimension serving as the synchronization substrate that keeps virtual and physical aligned.[2]

Applied to workforce management, the physical entity is the operation itself — agents, supervisors, queues, interaction volumes, schedules, and the processes that govern how work flows through the system. The virtual entity is the model: a parameterized representation of that operation capable of simulating its behavior under varying conditions. The data connections are the real-time feeds from ACD, WFM platform, HRIS, quality management, and other operational systems that keep the model calibrated against current reality.

It is equally important to specify what a workforce digital twin is not. It is not a dashboard: dashboards display data from the physical system but have no simulation capability. It is not a spreadsheet model or a planning scenario run in isolation, because these are static snapshots without persistent synchronization. It is not a data warehouse: warehouses store and serve data but do not model behavior. The distinguishing characteristic of a digital twin is the bidirectional live connection: the twin reflects the physical system, and decision-makers use the twin to evaluate actions before applying them to the physical system.

Architecture

A workforce digital twin architecture consists of four functional layers:

1. Data ingestion layer: Real-time and near-real-time feeds from operational systems. Core feeds include: ACD event streams (contact arrival, handle time, abandonment, queue state); WFM platform data (schedules, attendance, adherence status, exception activity); HRIS data (headcount, employment status, skill certifications, leave balances); and quality management data (evaluation scores, coaching records). Latency requirements vary by use case: real-time optimization requires sub-minute latency on ACD and adherence feeds, while strategic scenario planning can tolerate hourly HRIS synchronization. The WFM Data Infrastructure and Integration Architecture page covers the technical specification of these feeds in detail.

2. Simulation engine: The computational core of the twin. It takes the current system state as input and models behavior under specified conditions. For workforce management, this typically includes an Erlang or discrete-event simulation component for queue dynamics, a staffing optimization module (see Schedule Optimization), and a demand forecasting module (see Forecasting Methods). The engine must be able to run thousands of scenario iterations quickly enough for real-time decision support.

3. Optimization layer: Takes simulation outputs and identifies recommended actions. For intraday management, this might recommend VTO offers, mandatory overtime, or skill rebalancing based on a four-hour demand projection. For strategic planning, it might recommend headcount changes, training investments, or queue restructuring based on modeled business scenarios.

4. Visualization and decision layer: Presents twin outputs to decision-makers in actionable form. This layer must distinguish clearly between current-state reporting (from the physical system), modeled projections (from the twin), and recommended actions (from the optimization layer). Conflating these confuses decision-making and erodes trust in the twin.
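The Erlang component mentioned under the simulation engine can be illustrated with a minimal Erlang C sketch. It assumes Poisson arrivals, exponentially distributed handle times, a single pooled queue, and no abandonment; the function names and the example figures are illustrative and not taken from any particular WFM platform.

```python
import math


def erlang_c_wait_probability(agents: int, offered_load: float) -> float:
    """Probability that an arriving contact has to queue (Erlang C)."""
    if agents <= offered_load:
        return 1.0  # Unstable regime: the queue grows without bound.
    # Build Erlang B recursively (numerically stable), then convert to Erlang C.
    blocking = 1.0
    for n in range(1, agents + 1):
        blocking = offered_load * blocking / (n + offered_load * blocking)
    utilization = offered_load / agents
    return blocking / (1.0 - utilization * (1.0 - blocking))


def service_level(agents: int, arrivals_per_hour: float,
                  aht_seconds: float, target_seconds: float) -> float:
    """Fraction of contacts answered within target_seconds."""
    offered_load = arrivals_per_hour * aht_seconds / 3600.0  # in erlangs
    if agents <= offered_load:
        return 0.0
    p_wait = erlang_c_wait_probability(agents, offered_load)
    decay = math.exp(-(agents - offered_load) * target_seconds / aht_seconds)
    return 1.0 - p_wait * decay


def required_agents(arrivals_per_hour: float, aht_seconds: float,
                    target_seconds: float, sl_goal: float) -> int:
    """Smallest agent count whose modeled service level meets sl_goal."""
    if not 0.0 < sl_goal < 1.0:
        raise ValueError("sl_goal must be strictly between 0 and 1")
    offered_load = arrivals_per_hour * aht_seconds / 3600.0
    agents = math.floor(offered_load) + 1  # first stable staffing level
    while service_level(agents, arrivals_per_hour, aht_seconds, target_seconds) < sl_goal:
        agents += 1
    return agents


if __name__ == "__main__":
    # Hypothetical interval: 300 contacts/hour, 240 s AHT, 80% answered in 20 s.
    print(required_agents(300, 240, 20, 0.80))
```

A production engine would typically add abandonment, multi-skill routing, and shrinkage, which is where discrete-event simulation tends to replace the closed-form calculation.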

From Batch Planning to Continuous Planning

Traditional WFM planning operates on batch cycles: forecasts built weekly or bi-weekly, schedules published days in advance, capacity plans updated quarterly. This cadence made sense when data collection was manual and computational resources were limited. It is increasingly mismatched with operational reality.

In a batch planning paradigm, a forecast error discovered on Tuesday cannot be corrected until the following planning cycle. Intraday managers must compensate for structural errors through real-time adjustments that were never intended to absorb forecast variance of that magnitude. The weekly schedule is treated as authoritative even when actual arrival patterns diverge significantly from the forecast on which it was based.

Continuous planning, enabled by a workforce digital twin, changes this in three ways:

- Forecasts update on rolling short horizons. Rather than a weekly forecast produced once, a continuous planning system maintains a 48- or 96-hour rolling forecast that is refreshed every hour as actual arrival data accrues. This is the same principle as meteorological forecast systems, where models are continuously re-run against incoming observation data. Short-horizon accuracy generally improves under continuous updating because each run conditions on the most recent actuals rather than on data that was already days old when the schedule was built.

- Schedules adjust within the published framework. The published schedule establishes commitments, but the twin continuously evaluates whether actual conditions match the assumptions under which the schedule was built. When they diverge significantly, the optimization layer recommends intraday adjustments — proactive VTO or overtime offers, rebalancing of flexible capacity across skills or queues — before service level degrades.

- Capacity plans are living documents. Strategic headcount targets, hiring plans, and training investment plans are updated as actual business conditions evolve, rather than remaining fixed between quarterly planning exercises. The Workforce Planning Calendar and Annual Planning Cycle process is retained as a governance rhythm but is no longer the sole mechanism for capacity plan revision.
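The rolling-forecast idea in the first point above can be sketched with a simple ratio-based reforecast: the remaining intervals are scaled by how far actuals have run above or below forecast so far. This is one basic technique chosen for illustration, not a method prescribed by the source; the function name and the `damping` parameter are assumptions.

```python
def reforecast_remaining(forecast, actuals, damping=0.5):
    """Rescale the not-yet-observed part of a forecast using actuals so far.

    forecast: interval volumes for the whole horizon.
    actuals:  observed volumes for the first len(actuals) intervals.
    damping:  0 ignores the observed deviation, 1 applies it in full
              (hypothetical tuning knob).
    """
    observed = len(actuals)
    if observed > len(forecast):
        raise ValueError("more actuals than forecast intervals")
    remaining = list(forecast[observed:])
    forecast_so_far = sum(forecast[:observed])
    if observed == 0 or forecast_so_far == 0:
        return remaining  # nothing to learn from yet
    ratio = sum(actuals) / forecast_so_far
    adjustment = 1.0 + damping * (ratio - 1.0)
    return [volume * adjustment for volume in remaining]


if __name__ == "__main__":
    # Actuals are running 20% over forecast; half of that is carried forward.
    print(reforecast_remaining([100, 100, 100, 100], [120, 120]))
```

Re-running this every hour as new actuals arrive gives the rolling behavior described above; a real system would more likely use a time-series model that also updates the intraday shape.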

Calibration and Drift

The most significant operational risk in a workforce digital twin is model drift — the progressive divergence between the virtual model and the physical system it represents. Drift accumulates when the underlying assumptions of the model no longer match reality: staffing mix has changed, handle time distributions have shifted, arrival patterns have evolved, or process changes have altered how work flows through queues.

A drifting twin is worse than no twin, because it produces confident recommendations based on incorrect premises. Decision-makers who trust the twin will make systematically poor decisions.

Calibration is the process of regularly comparing twin predictions against actual outcomes and adjusting model parameters to reduce the gap. A minimum calibration protocol for a WFM digital twin includes: weekly comparison of twin-generated demand forecasts against actual arrivals; daily comparison of twin-generated adherence projections against actual adherence; and event-triggered recalibration after major operational changes (new product launches, significant headcount changes, process redesigns).
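The weekly forecast comparison in that protocol can be sketched as a weighted absolute percentage error (WAPE) check. The 10% threshold is a placeholder assumption; an appropriate value depends on queue size and interval length.

```python
def wape(predicted, actual):
    """Weighted absolute percentage error: sum(|p - a|) / sum(a)."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    total_actual = sum(actual)
    if total_actual == 0:
        raise ValueError("WAPE is undefined when actual volume is zero")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / total_actual


def needs_recalibration(predicted, actual, threshold=0.10):
    """Flag the twin for recalibration when error exceeds the threshold."""
    return wape(predicted, actual) > threshold


if __name__ == "__main__":
    twin_forecast = [100, 200, 150]
    actual_arrivals = [110, 190, 160]
    print(wape(twin_forecast, actual_arrivals))
    print(needs_recalibration(twin_forecast, actual_arrivals))
```

Tracking this value over successive weeks, rather than reacting to a single reading, helps separate genuine drift from ordinary forecast noise.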

Process mining provides a complementary approach to calibration. Van der Aalst (2016) developed process mining as a method for discovering actual operational processes from the event logs generated by information systems — producing a model of how processes actually execute rather than how they are documented to execute.[3] Applied to WFM, process mining on ACD event logs and WFM activity records can reveal how contacts actually flow through queues, how agents actually allocate time, and how actual adherence patterns differ from scheduled patterns. These empirically derived process models provide calibration inputs that operational observation alone cannot generate at scale.
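A basic building block of process discovery is the directly-follows relation: for each case, count how often one activity is immediately followed by another. The sketch below assumes a simplified event log of `(case_id, activity, timestamp)` tuples; the activity names are hypothetical, and real ACD logs would need mapping into this shape first.

```python
from collections import Counter, defaultdict


def directly_follows(events):
    """Count activity pairs (a, b) where b immediately follows a in a case."""
    by_case = defaultdict(list)
    for case_id, activity, timestamp in events:
        by_case[case_id].append((timestamp, activity))

    counts = Counter()
    for steps in by_case.values():
        steps.sort(key=lambda step: step[0])  # order each case by time
        for (_, current), (_, following) in zip(steps, steps[1:]):
            counts[(current, following)] += 1
    return counts


if __name__ == "__main__":
    log = [
        ("c1", "queued", 1), ("c1", "answered", 2), ("c1", "wrap_up", 3),
        ("c2", "queued", 1), ("c2", "abandoned", 5),
    ]
    for pair, count in directly_follows(log).items():
        print(pair, count)
```

Comparing these observed transition counts with the documented process is one way to surface the gaps between designed and actual flow described above.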

Fuller et al. (2020) proposed a digital twin calibration framework based on continuous Bayesian updating — treating model parameters as probability distributions rather than point estimates and narrowing those distributions as new observational data arrives.[4] This approach is theoretically sound for WFM applications and is beginning to appear in advanced WFM analytics platforms.
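The general idea of treating a parameter as a distribution that narrows with data can be shown with a conjugate Gamma-Poisson update of an interval arrival rate. This is a textbook illustration chosen for simplicity, not the specific framework from the cited paper; the class name and prior values are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GammaBelief:
    """Gamma(shape, rate) belief about a Poisson arrival rate per interval."""
    shape: float
    rate: float

    @property
    def mean(self) -> float:
        return self.shape / self.rate

    @property
    def variance(self) -> float:
        return self.shape / self.rate ** 2

    def update(self, counts) -> "GammaBelief":
        """Posterior after observing one arrival count per interval."""
        counts = list(counts)
        return GammaBelief(self.shape + sum(counts), self.rate + len(counts))


if __name__ == "__main__":
    prior = GammaBelief(shape=50.0, rate=1.0)      # weak belief: ~50 per interval
    posterior = prior.update([60, 62, 58, 64])     # four observed intervals
    print(prior.mean, prior.variance)
    print(posterior.mean, posterior.variance)
```

The posterior mean moves toward the observed counts while the variance shrinks, which is the narrowing behavior described above.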

Use Cases

Scenario planning. A planning manager asks: what is the service level impact if we lose 20 agents to unexpected attrition over the next 30 days? The twin models the headcount reduction against the demand forecast, runs the Erlang calculation across affected queues, and produces a projected service level trajectory with and without mitigation actions (accelerated hiring, temporary agency staff, skill rebalancing). This analysis, which might take days in a traditional model, runs in minutes in a mature twin.

Change impact analysis. Before deploying a chatbot to handle a new interaction type, planners can model the expected containment rate against the queue's volume and the resulting change in human workload. The twin tests the assumption that chatbot containment will reduce required staffing by X percent before the investment is made. This connects to Agentic AI Workforce Planning, which addresses the staffing model implications of AI-augmented operations.
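The workload arithmetic behind a containment assumption can be sketched as follows. It assumes that average handle time for the contacts still reaching agents is unchanged, which often does not hold because the contained contacts tend to be the simpler ones; all names and figures are illustrative.

```python
def human_offered_load(contacts_per_hour, aht_seconds, containment_rate):
    """Offered load (erlangs) reaching human agents after containment."""
    if not 0.0 <= containment_rate <= 1.0:
        raise ValueError("containment_rate must be between 0 and 1")
    human_contacts = contacts_per_hour * (1.0 - containment_rate)
    return human_contacts * aht_seconds / 3600.0


def containment_sensitivity(contacts_per_hour, aht_seconds, rates):
    """Map each candidate containment rate to the resulting human workload."""
    return {
        rate: human_offered_load(contacts_per_hour, aht_seconds, rate)
        for rate in rates
    }


if __name__ == "__main__":
    # Hypothetical queue: 1,000 contacts/hour at 360 s AHT.
    for rate, load in containment_sensitivity(1000, 360, [0.0, 0.1, 0.2, 0.3]).items():
        print(f"containment {rate:.0%}: {load:.1f} erlangs")
```

Because staffing requirements are not linear in offered load, the resulting workload would normally be fed back through the queueing model rather than converted to headcount by simple proportion.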

Real-time intraday optimization. During an active shift, the twin projects forward four hours based on current arrival rates, current staffing levels, and current adherence patterns. It identifies whether a VTO offer is safe to extend (projected overstaffing) or whether mandatory overtime should be triggered (projected understaffing), before the actual condition develops. This reduces the reactive management that characterizes Intraday Management at lower maturity levels.
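The decision step at the end of that projection can be sketched as a comparison of projected required staff against scheduled staff per interval. The tolerance band and action labels are assumptions for illustration; real policies would also account for skills, shift rules, and minimum notice.

```python
def recommend_actions(required, scheduled, tolerance=2):
    """Return (interval_index, staffing_gap, action) for each interval.

    staffing_gap is scheduled minus required agents; positive means
    projected overstaffing. tolerance is a hypothetical dead band that
    avoids reacting to small gaps.
    """
    if len(required) != len(scheduled):
        raise ValueError("required and scheduled must have the same length")
    recommendations = []
    for index, (need, have) in enumerate(zip(required, scheduled)):
        gap = have - need
        if gap > tolerance:
            action = "offer_vto"
        elif gap < -tolerance:
            action = "trigger_overtime"
        else:
            action = "hold"
        recommendations.append((index, gap, action))
    return recommendations


if __name__ == "__main__":
    projected_required = [40, 44, 52, 47]   # e.g. next four hourly intervals
    currently_scheduled = [45, 45, 46, 46]
    for row in recommend_actions(projected_required, currently_scheduled):
        print(row)
```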

Retrospective analysis. A workforce digital twin can be run in historical replay mode — feeding past event data through the model to reconstruct what the operation looked like at any prior point in time. This supports root cause analysis of past service failures and provides a baseline for evaluating whether process changes produced the expected impact.
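Replay can be sketched in its simplest form as folding an event stream up to a chosen moment to recover queue state. The event types and the `(timestamp, kind)` tuple layout are assumptions; an actual ACD feed would carry richer events and per-queue identifiers.

```python
# Effect of each (hypothetical) event type on the number of waiting contacts.
QUEUE_DELTAS = {"arrive": 1, "answer": -1, "abandon": -1}


def queue_depth_at(events, as_of):
    """Replay events in time order and return queue depth at time as_of."""
    depth = 0
    for timestamp, kind in sorted(events, key=lambda event: event[0]):
        if timestamp > as_of:
            break
        depth += QUEUE_DELTAS[kind]
    return depth


if __name__ == "__main__":
    history = [
        (0, "arrive"), (5, "arrive"), (8, "answer"),
        (12, "arrive"), (15, "abandon"),
    ]
    for moment in (6, 10, 12, 20):
        print(moment, queue_depth_at(history, moment))
```

The same fold, applied to agent-state and schedule events, would reconstruct staffing and adherence at that moment for root cause analysis.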

Data Infrastructure Requirements

Building a functioning workforce digital twin requires data infrastructure that most contact centers do not have in place by default. The minimum requirements include:

- ACD event-level data accessible in near real-time (sub-minute latency for intraday use cases, hourly for planning use cases).

- WFM platform data exports via API (schedule data, exception data, attendance data, adherence status).

- HRIS integration for headcount, employment type, skill certification, and leave balance data.

- A data integration layer capable of joining these streams into a unified operational view.

- A time-series store for model calibration history.

WFM Data Infrastructure and Integration Architecture and Reporting and Analytics Framework address these requirements in detail. Organizations pursuing digital twin capability at L4–L5 should treat data infrastructure investment as a prerequisite, not a parallel workstream.

Maturity Model Considerations

The WFM Labs Maturity Model positions workforce digital twin capability across L3–L5:

| Maturity Level | Twin Capability | Planning Paradigm |
|---|---|---|
| L1–L2 | No twin. Planning based on historical averages and manual adjustment. | Batch planning, long cycles. |
| L3 | Historical replay twin. Can reconstruct past operational states from event logs. Periodic scenario runs against static snapshots. | Batch planning with ad hoc scenario analysis. |
| L4 | Predictive twin. Live data feeds maintain synchronization. Rolling short-horizon forecast. Scenario planning available on demand. | Continuous forecasting; scheduled adjustment reviews. |
| L5 | Prescriptive twin. Real-time optimization recommendations. Automated calibration. Integrated with AI-assisted decision execution. Connected to Agentic AI Workforce Planning architecture. | Continuous planning across all horizons. |

Progression from L3 to L4 is primarily a data infrastructure challenge. Progression from L4 to L5 is primarily an organizational challenge — it requires decision-makers who trust the twin's recommendations sufficiently to act on them in real time, and governance processes that define the boundaries of automated versus human decision-making.

Related Concepts

- WFM Data Infrastructure and Integration Architecture

- Forecasting Methods

- Capacity Planning Methods

- Intraday Management

- Real-Time Operations

- Agentic AI Workforce Planning

- Schedule Optimization

- Reporting and Analytics Framework

- Reporting Automation and Self Service Analytics

- Workforce Planning Calendar and Annual Planning Cycle

- WFM Labs Maturity Model

- Performance Management

References

1. Grieves, M., & Vickers, J. (2017). Digital twin: Mitigating unpredictable, undesirable emergent behavior in complex systems. In F.-J. Kahlen, S. Flumerfelt, & A. Alves (Eds.), Transdisciplinary Perspectives on Complex Systems (pp. 85–113). Springer.

2. Tao, F., Zhang, H., Liu, A., & Nee, A. Y. C. (2019). Digital twin in industry: State-of-the-art. IEEE Transactions on Industrial Informatics, 15(4), 2405–2415.

3. van der Aalst, W. M. P. (2016). Process Mining: Data Science in Action (2nd ed.). Springer.

4. Fuller, A., Fan, Z., Day, C., & Barlow, C. (2020). Digital twin: Enabling technologies, challenges and open research. IEEE Access, 8, 108952–108971.