Probabilistic Scheduling

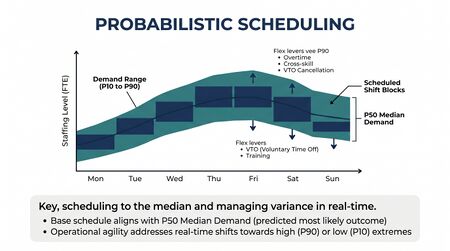

Probabilistic Scheduling treats the schedule as a distribution over plausible operating outcomes rather than as a single fixed plan. A traditional schedule is a point object — a roster that, given a point forecast, satisfies a coverage requirement. A probabilistic schedule is the same roster together with the probability that it meets a service-level constraint across the full distribution of demand and supply outcomes that could actually occur. The shift from point-estimate scheduling to probabilistic scheduling is the operational expression of the same risk-aware planning principle that drives Probabilistic Forecasting — and it is what makes the upper WFM Labs Maturity Model™ levels actually buildable.

This page documents what probabilistic scheduling is, the methods that produce it, how it differs by pool, and the failure modes that recur when teams attempt the transition.

From point estimates to distributional plans

Point-estimate scheduling treats a single forecast as truth. The forecast says 412 FTE on Tuesday at 10:00; the schedule places 412 (or 412 + a fixed buffer) on the floor; the day either works or it doesn't, and post-hoc analysis explains the gap. The plan is a fixed object; reality is variance the plan suffers.

Probabilistic scheduling inverts the framing. The forecast is a distribution (P50 = 412, P80 = 460, P95 = 510), and so is the staffing requirement that follows from it. The schedule is then evaluated as an instrument applied to that distribution: what is the probability that this roster delivers SL ≥ 80% across the demand outcomes that could be realized?[1] A schedule that "works at P50" but fails at P80 is a fragile schedule; the WFM Labs Risk Score™ is the operational name for that fragility.

The output is no longer only a roster; it is a roster, a service-level distribution, and the probability mass that lies above the SL target. Two rosters with the same labor cost can have very different probabilistic profiles. Choosing between them requires probabilistic scheduling.
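As a minimal sketch of that evaluation for a single interval, the snippet below draws demand from an assumed lognormal distribution and counts how often a fixed headcount clears the SL target under Erlang C. The call volumes, the 300-second AHT, the 20-second answer threshold, and the function names are illustrative assumptions, not values from this page.

```python
import math
import random


def erlang_c_service_level(calls_per_hour, aht_sec, agents, answer_within_sec):
    """Fraction of calls answered within the threshold under Erlang C."""
    load = calls_per_hour * aht_sec / 3600.0  # offered load in Erlangs
    if agents <= load:
        return 0.0  # unstable queue: count it as a miss
    blocking = 1.0  # Erlang B via the standard stable recursion
    for k in range(1, agents + 1):
        blocking = load * blocking / (k + load * blocking)
    p_wait = agents * blocking / (agents - load * (1.0 - blocking))
    return 1.0 - p_wait * math.exp(-(agents - load) * answer_within_sec / aht_sec)


def prob_meets_sl(agents, demand_samples, aht_sec=300.0,
                  answer_within_sec=20.0, sl_target=0.80):
    """Share of sampled demand outcomes in which the headcount meets the SL target."""
    hits = sum(
        erlang_c_service_level(d, aht_sec, agents, answer_within_sec) >= sl_target
        for d in demand_samples
    )
    return hits / len(demand_samples)


# Hypothetical demand distribution: median 1,000 calls/hour, roughly 10% spread.
rng = random.Random(42)
demand = [1000.0 * rng.lognormvariate(0.0, 0.10) for _ in range(2000)]

for headcount in (85, 90, 95, 100):
    print(headcount, round(prob_meets_sl(headcount, demand), 3))
```

The printed pairs are the probabilistic profile of each headcount: the same comparison, applied to full rosters, is what separates two plans with identical labor cost.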

Inputs and outputs

The probabilistic scheduling pipeline:

- Probabilistic forecast — distribution of demand by interval, from Probabilistic Forecasting

- Probabilistic capacity band — the FTE distribution that satisfies the demand distribution under the queueing model (Erlang-C, Erlang-A, simulation), expressed as P50 / P80 / P95 staffing

- Probabilistic constraint — the explicit policy: e.g. "schedule such that P(SL ≥ target) ≥ 0.95 across the demand distribution"

- Schedule — the roster that satisfies the constraint at minimum cost

- Validation — Monte Carlo evaluation of the realized roster against many sampled demand paths

The traditional pipeline collapses the first three steps into a single point number. The probabilistic pipeline keeps the distribution intact end to end. This is the non-negotiable upgrade: a probabilistic forecast feeding a deterministic optimizer produces deterministic outputs and discards the information the forecast carried.
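The capacity-band step can be sketched as follows. This is an illustration under stated assumptions: the square-root staffing rule stands in for the Erlang or simulation engine, and the demand distribution, AHT, and `beta` safety factor are hypothetical.

```python
import math
import random


def required_agents(calls_per_hour, aht_sec=300.0, beta=1.0):
    """Square-root staffing rule: offered load plus beta * sqrt(load)."""
    load = calls_per_hour * aht_sec / 3600.0
    return math.ceil(load + beta * math.sqrt(load))


def capacity_band(demand_samples, quantiles=(0.50, 0.80, 0.95)):
    """Map every demand sample to a headcount, then read off staffing quantiles."""
    staffing = sorted(required_agents(d) for d in demand_samples)
    band = {}
    for q in quantiles:
        idx = min(len(staffing) - 1, max(0, math.ceil(q * len(staffing)) - 1))
        band[f"P{round(q * 100)}"] = staffing[idx]
    return band


# Hypothetical interval: median 1,200 calls/hour with about 8% spread.
rng = random.Random(1)
demand = [1200.0 * rng.lognormvariate(0.0, 0.08) for _ in range(5000)]
band = capacity_band(demand)
print(band)
```

The point of the sketch is that the band is computed from the whole sample of demand outcomes, so nothing downstream has to fall back to a single number.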

Methods

Three methods are in active use, each with different cost / honesty / tractability trade-offs.

Quantile-target scheduling

The simplest upgrade. Pick a quantile (commonly P80 or P90) of the staffing requirement distribution and schedule against that point. The optimizer remains a standard set-covering or integer program; the only change is the right-hand side of the coverage constraint.

Quantile-target scheduling is honest about which point estimate is chosen and why: "we are accepting a 20% probability of understaffing in exchange for the labor cost savings of not staffing to P95." It does not, however, capture cross-interval dependence. A roster that meets P80 at every interval independently does not meet "SL ≥ target with probability 0.8 across the full day," because the per-interval probabilities are marginal: the chance that every interval holds at once is lower than 0.8, and how much lower depends on how errors correlate across intervals. For multi-interval coverage promises, the next method is required.
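The gap between per-interval and whole-day coverage can be illustrated with a small simulation. It assumes a hypothetical requirement model (a shared day-level shock plus interval noise, both lognormal) and reads the day-level promise as "every interval is covered", which is only one possible definition.

```python
import math
import random


def simulate_requirements(n_days, n_intervals, base=100.0,
                          day_sigma=0.05, interval_sigma=0.08, seed=7):
    """Sample staffing requirements; a shared day shock correlates the intervals."""
    rng = random.Random(seed)
    days = []
    for _ in range(n_days):
        day_shock = rng.gauss(0.0, day_sigma)
        days.append([
            base * math.exp(day_shock + rng.gauss(0.0, interval_sigma))
            for _ in range(n_intervals)
        ])
    return days


def empirical_quantile(values, q):
    ordered = sorted(values)
    return ordered[min(len(ordered) - 1, max(0, math.ceil(q * len(ordered)) - 1))]


def marginal_and_joint_coverage(days, q=0.80):
    """Staff each interval at its own quantile, then measure both coverage notions."""
    n_intervals = len(days[0])
    staff = [empirical_quantile([day[t] for day in days], q) for t in range(n_intervals)]
    per_interval = sum(
        day[t] <= staff[t] for day in days for t in range(n_intervals)
    ) / (len(days) * n_intervals)
    whole_day = sum(
        all(day[t] <= staff[t] for t in range(n_intervals)) for day in days
    ) / len(days)
    return per_interval, whole_day


days = simulate_requirements(n_days=2000, n_intervals=16)
per_interval, whole_day = marginal_and_joint_coverage(days)
print(round(per_interval, 3), round(whole_day, 3))
```

Per-interval coverage sits at the chosen quantile by construction, while the share of fully covered days is far lower under these assumptions.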

Chance-constrained scheduling

The probabilistic constraint goes inside the optimization. The objective is still to minimize labor cost; the constraint is now P(SL ≥ target) ≥ α across the demand distribution, where α is the operator's risk tolerance.[2] Solving requires either (a) a tractable closed-form transformation of the chance constraint into deterministic equivalents (works for Gaussian demand assumptions) or (b) sample-average approximation, where many demand scenarios are drawn and the constraint is enforced on the sample.

Chance-constrained scheduling is the right method when the operator can articulate the policy "we accept a 5% probability of an SL miss day" and when that articulation differs materially from "we accept a 20% probability of understaffing per interval." For Pool Spec workflows where SL misses cascade into customer harm, the joint probability is the meaningful object.
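Both solution routes can be sketched for the simplest case, a single coverage constraint on one interval. The example assumes a normally distributed staffing requirement with hypothetical mean 100 and standard deviation 10; a full roster model would embed the same constraint inside an integer program.

```python
import math
import random
from statistics import NormalDist


def gaussian_equivalent_staff(mean_req, sd_req, alpha):
    """Deterministic equivalent of P(staff >= requirement) >= alpha for a normal requirement."""
    z_alpha = NormalDist().inv_cdf(alpha)
    return math.ceil(mean_req + z_alpha * sd_req)


def saa_staff(scenarios, alpha):
    """Smallest headcount that covers at least a share alpha of the sampled scenarios."""
    ordered = sorted(scenarios)
    k = math.ceil(alpha * len(ordered))  # number of scenarios that must be covered
    return math.ceil(ordered[k - 1])


# Hypothetical requirement scenarios drawn from the same normal distribution.
rng = random.Random(5)
scenarios = [rng.gauss(100.0, 10.0) for _ in range(20000)]
print(gaussian_equivalent_staff(100.0, 10.0, 0.95), saa_staff(scenarios, 0.95))
```

When the Gaussian assumption holds, the two answers agree closely; the sample-average route keeps working when the scenarios come from a skewed or empirical distribution.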

Simulation-validated scheduling

Generate candidate schedules using any optimizer (cost-minimization, quantile-target, chance-constrained); evaluate each candidate by Monte Carlo simulation across the demand distribution; choose the candidate with the highest expected utility (typically, lowest cost subject to the empirical SL constraint).[3] This is the simulation approach: the optimizer proposes; the simulator disposes.

Simulation-validated scheduling is the most flexible — any candidate-generation method can be plugged in, and any utility function can be evaluated — and the most expensive. It is the natural method for Pool Spec, where DES-based evaluation is already part of the planning stack.
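The propose-and-dispose loop can be sketched in a few lines. Everything specific here is an assumption for illustration: the three candidate rosters, the lognormal requirement scenarios, and a simple coverage check standing in for a DES evaluation.

```python
import random


def simulate_required(n_scenarios, base_profile, sigma=0.10, seed=11):
    """Sample required headcount per interval: a day-level factor times interval noise."""
    rng = random.Random(seed)
    scenarios = []
    for _ in range(n_scenarios):
        day_factor = rng.lognormvariate(0.0, sigma)
        scenarios.append([
            b * day_factor * rng.lognormvariate(0.0, sigma / 2) for b in base_profile
        ])
    return scenarios


def pass_rate(roster, scenarios, min_covered_share=0.9):
    """Share of scenarios in which enough intervals are covered (stand-in for DES)."""
    passed = 0
    for required in scenarios:
        covered = sum(s >= r for s, r in zip(roster, required))
        if covered / len(roster) >= min_covered_share:
            passed += 1
    return passed / len(scenarios)


def choose_roster(candidates, scenarios, alpha=0.95):
    """Cheapest candidate (by total headcount) whose empirical pass rate reaches alpha."""
    feasible = [
        (sum(roster), name)
        for name, roster in candidates.items()
        if pass_rate(roster, scenarios) >= alpha
    ]
    return min(feasible)[1] if feasible else None


scenarios = simulate_required(3000, base_profile=[80.0, 100.0, 120.0, 100.0])
candidates = {
    "lean": [80, 100, 120, 100],
    "mid": [92, 115, 138, 115],
    "rich": [112, 140, 168, 140],
}
for name, roster in candidates.items():
    print(name, sum(roster), round(pass_rate(roster, scenarios), 3))
print("chosen:", choose_roster(candidates, scenarios))
```

The candidate generator and the evaluator are decoupled on purpose: either can be swapped without touching the selection rule.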

Pool-aware probabilistic scheduling

The Three-Pool Architecture forces different probabilistic semantics on different pools.

- Pool AA (autonomous AI). Capacity is software; the "schedule" is a cost-vs-throughput sweep across configuration choices (model size, concurrency, retry policy). The probabilistic object is a distribution of cost outcomes given a containment-rate distribution. Quantile-target methods dominate — operators schedule the configuration that delivers P80 cost outcomes inside budget.

- Pool Collab (human-AI collaboration). The Cognitive Portfolio Model (N*) reports its core staffing parameter as a band, not a point. N* is the fixed-point solution of an equation in five parameters, three of which are estimated with high uncertainty in contact-center contexts; the band reflects that. The probabilistic schedule for Pool Collab propagates the N* band into roster construction directly — staffing recommendations are expressed as ranges (e.g. "schedule between 14 and 22 collaborators with the working point at 18 conditional on intervention rate < 0.4") and the chance-constraint method is applied across the band, not against a single N* value.

- Pool Spec (specialist humans). Long handle times, high handle-time variance, and consequential SL targets push Pool Spec toward simulation-validated scheduling. DES models the queue dynamics and agent variability; chance constraints encode the SL policy; the optimizer searches across rosters under the simulated dynamics. This is the most expensive pool to schedule for and the most rewarding to do correctly — Pool Spec is where probabilistic scheduling pays for itself in retention and customer outcomes.

The cross-pool point: there is no single "probabilistic schedule" — there are pool-specific probabilistic schedules with different mathematical structure, and the planning stack must support all three.

Practitioner playbook

A workable implementation sequence:

- Establish probabilistic forecasting first. Without distributional forecasts, everything downstream collapses to a point.

- Translate forecast distributions into capacity bands. Run the WFM Labs Erlang-O™ or simulation engine across many sampled demand paths; record the resulting staffing distribution.

- Articulate the SL policy probabilistically. "P(daily SL ≥ 80%) ≥ 0.95" is a policy. "We staff to forecast" is not.

- Pick the method that fits the pool. Quantile-target for Pool AA cost sweeps; chance-constrained for Pool Collab; simulation-validated for Pool Spec.

- Run the WFM Labs Risk Score™ on candidate rosters. The Risk Score is the operational summary of the probabilistic profile — fragility is the metric leaders communicate with.

- Validate with held-out data. Probabilistic schedules need calibration just like probabilistic forecasts. Coverage diagnostics on realized SL distributions vs. predicted distributions are the basic check.

The data inputs: probabilistic forecast (P10/P50/P80/P90/P95), AHT distribution by skill, shrinkage distribution, attrition / unplanned-leave distribution, intraday demand correlation matrix. Tools: any modern stochastic optimization stack (for example, Pyomo with sample-average approximation, or Gurobi with a scenario-based formulation of the chance constraint), any DES engine for Pool Spec validation, the WFM Labs calculator suite for inputs.
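The calibration check in the playbook's validation step can be sketched as a reliability table: group days by the predicted probability of meeting SL and compare each group's mean prediction with the realized hit rate. The function name and the sample history are hypothetical.

```python
def reliability_table(predicted, realized, n_bins=5):
    """Rows of (mean predicted probability, realized hit rate, count) per bin."""
    bins = [[] for _ in range(n_bins)]
    for p, hit in zip(predicted, realized):
        idx = min(n_bins - 1, int(p * n_bins))  # p == 1.0 falls in the top bin
        bins[idx].append((p, hit))
    rows = []
    for members in bins:
        if members:
            mean_p = sum(p for p, _ in members) / len(members)
            hit_rate = sum(hit for _, hit in members) / len(members)
            rows.append((round(mean_p, 3), round(hit_rate, 3), len(members)))
    return rows


# Hypothetical history: predicted P(daily SL met) and whether the day met SL.
predicted = [0.9, 0.9, 0.9, 0.9]
realized = [True, True, True, False]
print(reliability_table(predicted, realized))
```

A well-calibrated schedule shows hit rates close to the mean predictions in every populated bin; a persistent gap in one direction indicates over- or under-confidence.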

Common failure modes

- Confusing P80 staffing with P80 schedule. A roster that meets the P80 staffing requirement at every interval does not produce P80 service-level reliability across the day. Errors compound; cross-interval correlation matters. Treat the schedule probability and the staffing probability as different objects.

- Treating bands as static. The probabilistic forecast updates intraday as actuals arrive; the probabilistic capacity band must update with it. A schedule that was P80-honest in the morning may be P50-honest by 11am if demand has shifted.

- Skipping simulation validation. Optimizers report what they were asked to report — they tell you the schedule meets the constraint as the optimizer encoded it. Whether the schedule actually meets the policy under realistic demand requires Monte Carlo evaluation.

- Ignoring cross-day uncertainty correlation. A "P95 weekly schedule" that treats each day independently underestimates compound risk. Bad demand days cluster (around events, holidays, marketing pushes); the probabilistic schedule must use the joint distribution, not the marginals.

- Reporting probabilistic outputs as points. "We need 18 collaborators in Pool Collab" hides the band that should accompany it. The probabilistic schedule is only useful if its probabilistic character survives the trip to the operational decision.
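The cross-day correlation failure mode can be made concrete with a small simulation. It assumes a hypothetical Gaussian-copula model in which each day has a 5% marginal miss probability and a shared weekly shock correlates the days; the correlation value 0.6 is illustrative only.

```python
import math
import random
from statistics import NormalDist


def prob_two_or_more_misses(rho, n_weeks=20000, days=5,
                            daily_miss_prob=0.05, seed=3):
    """Share of simulated weeks with at least two SL-miss days.

    Each day misses when a latent standard-normal score exceeds a threshold;
    rho is the correlation between any two days' scores.
    """
    rng = random.Random(seed)
    threshold = NormalDist().inv_cdf(1.0 - daily_miss_prob)
    bad_weeks = 0
    for _ in range(n_weeks):
        shared = rng.gauss(0.0, 1.0)  # week-level shock (event, holiday, campaign)
        misses = 0
        for _ in range(days):
            score = math.sqrt(rho) * shared + math.sqrt(1.0 - rho) * rng.gauss(0.0, 1.0)
            misses += score > threshold
        bad_weeks += misses >= 2
    return bad_weeks / n_weeks


independent = prob_two_or_more_misses(rho=0.0)
clustered = prob_two_or_more_misses(rho=0.6)
print(round(independent, 4), round(clustered, 4))
```

The marginal miss probability is the same in both runs; only the joint behavior changes, which is why weeks with several bad days are more frequent in the correlated case.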

Maturity Model Position

In the WFM Labs Maturity Model™, probabilistic scheduling is naturally a Level 4 concept: it is conceptually accessible from Level 3 but systematically operated only from Level 4 onward.

- Level 1 — Initial (Emerging Operations) — scheduling is point-estimate by default; uncertainty is ignored or absorbed by post-hoc explanation.

- Level 2 — Foundational (Traditional WFM Excellence) — schedules are deterministic; informal "buffer" practice (e.g. add 5% to the forecast) is the closest approximation to risk-aware staffing. The buffer is a point estimate of a probability adjustment, not the probability itself.

- Level 3 — Progressive (Breaking the Monolith) — probabilistic forecasts exist and inform conversations, but schedules typically still optimize against a single quantile (often the forecast point or a buffered point). Variance Harvesting depends on the probabilistic frame, but the schedule itself remains a fixed roster generated against a fixed coverage requirement.

- Level 4 — Advanced (The Ecosystem Emerges) — probabilistic schedules are produced and evaluated as distributions. Chance constraints, quantile targeting, and simulation-validated optimization are part of the planning stack. The WFM Labs Risk Score™ is the executive-facing summary of the probabilistic profile. Pool-specific methods are differentiated.

- Level 5 — Pioneering (Enterprise-Wide Intelligence) — probabilistic schedules update continuously against live data; cross-day correlation, cross-skill correlation, and cross-pool correlation are modeled jointly; schedule risk is part of the same probabilistic substrate that runs forecasting, capacity, and routing.

The mathematical content of probabilistic scheduling is accessible at Level 3. The discipline of running it consistently (articulating SL policy probabilistically, validating rosters by simulation, propagating bands into operational decisions) is a Level 4 operating posture.

References

- Koole, G. (2013). Call Center Optimization. MG Books. Chapters 6-7 cover staffing and scheduling under demand uncertainty.

- Charnes, A., & Cooper, W. W. (1959). "Chance-constrained programming." Management Science 6(1).

- Powell, W. B. (2019). Reinforcement Learning and Stochastic Optimization. Wiley. Stochastic resource allocation under uncertainty.

- Robbins, T. R., & Harrison, T. P. (2010). "A stochastic programming model for scheduling call centers with global service level agreements." European Journal of Operational Research 207(3). Sample-average approximation applied directly to call-center scheduling.

- Gans, N., Koole, G., & Mandelbaum, A. (2003). "Telephone call centers: tutorial, review, and research prospects." Manufacturing & Service Operations Management 5(2).

- Lango, T. (2026). Value-Based Models for Customer Operations. Establishes the L4 distributional planning frame.

- [1] Koole, G. (2013). Call Center Optimization. MG Books. Chapter 6 covers staffing under demand uncertainty; chapter 7 covers shift scheduling.

- [2] Charnes, A., & Cooper, W. W. (1959). "Chance-constrained programming." Management Science 6(1). The original formulation; widely extended for stochastic resource allocation.

- [3] Powell, W. B. (2019). Reinforcement Learning and Stochastic Optimization. Wiley. Chapters 6-9 develop the simulation-evaluation framework for stochastic resource problems.

See Also

- Probabilistic Forecasting — the distributional input that makes probabilistic scheduling possible

- Schedule Generation — the deterministic optimizer underneath; probabilistic scheduling wraps it

- Scheduling Methods — master reference for the scheduling cluster

- WFM Labs Risk Score™ — the executive-facing summary of probabilistic schedule fragility

- Cognitive Portfolio Model (N*) — N* is itself a band; Pool Collab schedules propagate it

- Three-Pool Architecture — pool-specific probabilistic semantics

- Variance Harvesting — the operational layer that depends on probabilistic schedules being honest

- Discrete-Event vs. Monte Carlo Simulation Models — the simulation engines used for validation

- Capacity Planning Methods — upstream method family; capacity bands feed schedule construction

- Multi-Objective Optimization in Contact Center — when probabilistic scheduling is one of several objectives

- WFM Labs Maturity Model™ — maturity context