MAPE, WAPE, and Forecast Bias

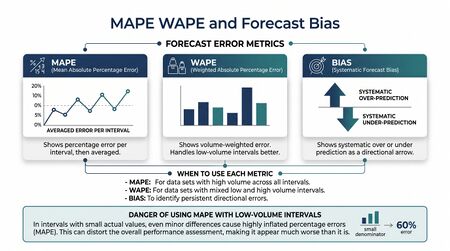

Forecast accuracy metrics quantify the difference between a forecast and the realized outcome, providing the empirical basis for evaluating, selecting, and improving forecasting models. Among the most widely used metrics in contact center and supply chain forecasting are the Mean Absolute Percentage Error (MAPE), the Weighted Absolute Percentage Error (WAPE), and measures of forecast bias. Each metric captures a different dimension of forecast quality and carries distinct mathematical properties, failure modes, and appropriate use cases. A thorough understanding of these metrics, including their limitations, is a prerequisite for meaningful model comparison and governance within any WFM Processes framework that includes a formal forecasting function.

Mean Absolute Percentage Error (MAPE)

Definition

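MAPE is defined as the mean, across all forecast periods, of the absolute percentage error for each period:

$$\mathrm{MAPE} = \frac{100\%}{n} \sum_{t=1}^{n} \left| \frac{A_t - F_t}{A_t} \right|$$

where $A_t$ is the actual value and $F_t$ is the forecast value for period $t$, and $n$ is the number of periods.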

MAPE expresses accuracy as a percentage, making it interpretable across time series of different scales and across organizations comparing forecasting performance.
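The definition above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `mape` and the sample values are assumptions made for the example, and the choice to raise an error on zero actuals is one possible policy among several.

```python
from typing import Sequence


def mape(actuals: Sequence[float], forecasts: Sequence[float]) -> float:
    """Mean absolute percentage error, returned in percent.

    Raises ValueError when any actual is zero, because the
    per-period ratio is undefined there.
    """
    if len(actuals) == 0 or len(actuals) != len(forecasts):
        raise ValueError("inputs must be non-empty and of equal length")
    if any(a == 0 for a in actuals):
        raise ValueError("MAPE is undefined when an actual value is zero")
    total = sum(abs(a - f) / abs(a) for a, f in zip(actuals, forecasts))
    return 100.0 * total / len(actuals)


# Hypothetical interval volumes, for illustration only.
actuals = [100.0, 200.0, 400.0]
forecasts = [110.0, 180.0, 400.0]

# Per-period errors are 10%, 10%, and 0%, so the mean is 20/3 percent.
example_mape = mape(actuals, forecasts)
print(f"MAPE = {example_mape:.2f}%")
```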

Failure Modes

Despite its ubiquity, MAPE has well-documented mathematical pathologies. Hyndman and Koehler (2006) provide a systematic treatment of these failure modes and argue that MAPE should be used cautiously or replaced in many practical forecasting contexts.[1]

Division by zero. When $A_t = 0$, MAPE is undefined. In contact center forecasting, zero-volume intervals are common: overnight periods, closed days, or low-traffic queues during off-peak segments. Practitioners must either exclude these periods from MAPE calculations (risking bias in aggregate accuracy reporting) or substitute an alternative metric.

Asymmetric penalty structure. For non-negative forecasts, under-forecasting is bounded at 100% error (a forecast of zero yields exactly 100%), while over-forecasting is unbounded (a forecast many times larger than the actual yields an error of several hundred percent or more). Because the largest possible penalties fall only on high forecasts, minimizing MAPE tends to favor forecasts that run low, potentially biasing model selection toward systematic under-forecasting.
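The bounds can be checked numerically for a single interval. The sketch below is illustrative only: the helper `ape` and the sample forecasts are assumptions, and the actual is fixed at 100 so the percentages are easy to read.

```python
def ape(actual: float, forecast: float) -> float:
    """Absolute percentage error for one interval, in percent."""
    return 100.0 * abs(actual - forecast) / abs(actual)


actual = 100.0

# Non-negative forecasts below the actual: the error never exceeds 100%.
under_errors = [ape(actual, f) for f in (75.0, 50.0, 0.0)]

# Forecasts above the actual: the error grows without limit.
over_errors = [ape(actual, f) for f in (125.0, 200.0, 1000.0)]

print("under-forecast errors:", under_errors)
print("over-forecast errors:", over_errors)
```

With these inputs the largest under-forecast error is 100%, reached at a forecast of zero, while the over-forecast errors keep growing as the forecast rises.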

Sensitivity to small denominators. When actual volumes are small, small absolute errors produce large percentage errors. A forecast of 12 contacts against an actual of 10 contacts generates a 20% absolute percentage error from a two-contact difference, while the same two-contact error against a 1,000-contact interval generates 0.2%. In heterogeneous contact center environments with a mix of high- and low-volume queues, a simple average of interval-level percentage errors can be dominated by low-volume intervals.
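The arithmetic in this example can be reproduced directly. The snippet below is a sketch using the two intervals described above; the variable names are assumptions, and the second interval's forecast of 1,002 is chosen to give the same two-contact error.

```python
# Two intervals with the same absolute error (2 contacts) but very
# different volumes, as in the example above.
intervals = [
    {"actual": 10.0, "forecast": 12.0},
    {"actual": 1000.0, "forecast": 1002.0},
]

# Interval-level absolute percentage errors, in percent.
apes = [
    100.0 * abs(r["actual"] - r["forecast"]) / r["actual"] for r in intervals
]

# Simple average of the two percentage errors.
simple_mean = sum(apes) / len(apes)

# Total absolute error as a share of total volume, for comparison.
total_error = sum(abs(r["actual"] - r["forecast"]) for r in intervals)
total_volume = sum(r["actual"] for r in intervals)
error_share = 100.0 * total_error / total_volume

print(f"interval errors: {apes}")
print(f"simple mean: {simple_mean:.2f}%  error share of volume: {error_share:.2f}%")
```

The simple mean is about 10.1%, driven almost entirely by the 10-contact interval, even though only 4 contacts out of 1,010 (roughly 0.4%) were misforecast.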

When MAPE Is Appropriate

MAPE remains useful when:

- Actual values are strictly positive and unlikely to approach zero.

- Scale-independent comparison across time series with different volume levels is required.

- Stakeholders are familiar with percentage error framing and results must be communicated broadly.

Weighted Absolute Percentage Error (WAPE)

Definition

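WAPE (also called the Mean Absolute Deviation to Mean Ratio, or MAD/Mean) addresses MAPE's sensitivity to small denominators by weighting errors by the magnitude of the actual values. With $A_t$ the actual and $F_t$ the forecast for period $t$:

$$\mathrm{WAPE} = \frac{\sum_{t=1}^{n} \left| A_t - F_t \right|}{\sum_{t=1}^{n} \left| A_t \right|} \times 100\%$$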

This formulation is equivalent to computing the total absolute error divided by the total actual volume, expressed as a percentage. Kolassa and Siemsen (2015) describe WAPE as a preferable default metric for demand forecasting contexts where volume heterogeneity is present.[2]
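The "total absolute error over total actual volume" reading translates into a short function. This is a minimal sketch under the assumption that inputs are plain sequences of numbers; the name `wape` and the sample data, which include one zero-volume interval, are illustrative.

```python
from typing import Sequence


def wape(actuals: Sequence[float], forecasts: Sequence[float]) -> float:
    """Weighted absolute percentage error, returned in percent."""
    if len(actuals) == 0 or len(actuals) != len(forecasts):
        raise ValueError("inputs must be non-empty and of equal length")
    total_actual = sum(abs(a) for a in actuals)
    if total_actual == 0:
        raise ValueError("WAPE is undefined when total actual volume is zero")
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return 100.0 * total_error / total_actual


# Hypothetical data: the first interval has zero actual volume, which
# would make interval-level MAPE undefined but is fine for WAPE.
actuals = [0.0, 10.0, 1000.0]
forecasts = [1.0, 12.0, 1002.0]

# Total absolute error is 1 + 2 + 2 = 5 against a total volume of 1010.
example_wape = wape(actuals, forecasts)
print(f"WAPE = {example_wape:.3f}%")
```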

Advantages Over MAPE

- Resistance to small-denominator distortion. High-volume periods contribute proportionally more to WAPE than low-volume periods, so the metric reflects accuracy where it operationally matters most.

- No undefined values. As long as total actual volume is nonzero over the evaluation window, WAPE is well-defined even if individual intervals have zero actuals.

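- Equivalent to volume-weighted MAPE. When all actuals are nonzero, WAPE equals the average of the interval-level absolute percentage errors weighted by actual volume. It can therefore be read as total absolute error as a share of total volume, which makes it intuitive for capacity planning purposes. It is not the percentage error of the aggregate forecast, because over- and under-forecasts do not cancel inside the absolute values.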
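The volume-weighting interpretation follows from a one-line rearrangement. The derivation below is a sketch that assumes every actual $A_t$ is nonzero, with $A_t$ the actual and $F_t$ the forecast for period $t$. The weights $w_t$ are notation introduced here for illustration.

$$\frac{\sum_{t} \left| A_t - F_t \right|}{\sum_{s} \left| A_s \right|} = \sum_{t} \frac{\left| A_t \right|}{\sum_{s} \left| A_s \right|} \cdot \frac{\left| A_t - F_t \right|}{\left| A_t \right|} = \sum_{t} w_t \left| \frac{A_t - F_t}{A_t} \right|, \qquad w_t = \frac{\left| A_t \right|}{\sum_{s} \left| A_s \right|}$$

The weights sum to one, so WAPE is a weighted mean of the same per-period percentage errors that MAPE averages with equal weights $1/n$. An interval with $A_t = 0$ has no well-defined percentage error, but its absolute error still enters the numerator on the left-hand side.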

Limitations

WAPE can mask poor performance on low-volume segments that are operationally important. If a specialized skill queue carries 2% of total volume but is critical for a regulated service, its poor forecast accuracy will be diluted to near-invisibility in an enterprise-level WAPE calculation. Segmented WAPE reporting — computed separately by channel, skill, or time stratum — mitigates this problem.
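A segmented calculation makes the dilution visible. The sketch below assumes interval records are available as dictionaries with hypothetical keys `queue`, `actual`, and `forecast`. The data are invented so that the specialized queue carries 2% of total volume, as in the example above.

```python
from collections import defaultdict


def segmented_wape(rows, key="queue"):
    """Return ({segment: WAPE in percent}, overall WAPE in percent)."""
    err = defaultdict(float)
    vol = defaultdict(float)
    for row in rows:
        err[row[key]] += abs(row["actual"] - row["forecast"])
        vol[row[key]] += abs(row["actual"])
    total_volume = sum(vol.values())
    if total_volume == 0:
        raise ValueError("total actual volume is zero")
    # Segments with zero volume are skipped because their WAPE is undefined.
    by_segment = {
        seg: 100.0 * err[seg] / vol[seg] for seg in vol if vol[seg] > 0
    }
    overall = 100.0 * sum(err.values()) / total_volume
    return by_segment, overall


# Invented data: the regulated queue is 2% of total volume.
rows = [
    {"queue": "general", "actual": 9800.0, "forecast": 9702.0},
    {"queue": "regulated", "actual": 200.0, "forecast": 120.0},
]

by_segment, overall = segmented_wape(rows)
print(by_segment)
print(f"overall WAPE = {overall:.2f}%")
```

In this example the regulated queue's WAPE is 40% while the enterprise-level figure is under 2%, which is why the segment-level view is worth reporting alongside the aggregate.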

Forecast Bias

Definition

Forecast bias refers to a systematic directional tendency in forecast errors — consistently over-forecasting or consistently under-forecasting. Unlike accuracy metrics (which measure magnitude of error), bias metrics measure the direction and persistence of error.

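The simplest bias metric is Mean Error (ME), where $A_t$ is the actual and $F_t$ is the forecast for period $t$:

$$\mathrm{ME} = \frac{1}{n} \sum_{t=1}^{n} \left( F_t - A_t \right)$$

A positive ME indicates systematic over-forecasting; a negative ME indicates systematic under-forecasting. Percentage bias (PBIAS) normalizes this by total actual volume:

$$\mathrm{PBIAS} = \frac{\sum_{t=1}^{n} \left( F_t - A_t \right)}{\sum_{t=1}^{n} A_t} \times 100\%$$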
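Both bias measures can be sketched as follows, using the sign convention stated above (forecast minus actual, so positive means over-forecasting). The function names and sample values are assumptions for illustration.

```python
def mean_error(actuals, forecasts):
    """Mean error (forecast minus actual); positive means over-forecasting."""
    if len(actuals) == 0 or len(actuals) != len(forecasts):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(f - a for a, f in zip(actuals, forecasts)) / len(actuals)


def pbias(actuals, forecasts):
    """Percentage bias: total signed error over total actual volume, in percent."""
    total_actual = sum(actuals)
    if total_actual == 0:
        raise ValueError("PBIAS is undefined when total actual volume is zero")
    return 100.0 * sum(f - a for a, f in zip(actuals, forecasts)) / total_actual


actuals = [100.0, 200.0, 300.0]

# Every forecast is high: signed errors are +10, +10, +30.
biased = [110.0, 210.0, 330.0]
me_biased = mean_error(actuals, biased)
pbias_biased = pbias(actuals, biased)

# Errors of +10 and -10 cancel: the forecast is inaccurate but unbiased.
offsetting = [110.0, 190.0, 300.0]
me_offsetting = mean_error(actuals, offsetting)

print(f"ME = {me_biased:.2f}, PBIAS = {pbias_biased:.2f}%")
print(f"ME with offsetting errors = {me_offsetting:.2f}")
```

The second case shows why bias and accuracy are tracked separately: the signed errors cancel to a mean of zero even though two of the three intervals were misforecast.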

Why Bias Matters More Than Accuracy Alone

A forecast that is randomly wrong by 20% in both directions may produce adequate operational outcomes if staffing models incorporate the uncertainty. A forecast that is systematically wrong by 10% in one direction (for example, always over-forecasting by 10%) creates compounding operational and financial consequences, which depend on the direction of the bias:

- Systematic over-staffing — excess idle time, wasted labor budget, agent disengagement.

- Systematic under-staffing — chronic service level failure, agent burnout, customer dissatisfaction.

- Planning credibility erosion — when operational managers learn that forecasts are reliably wrong in a predictable direction, they apply informal adjustments that undermine the formal forecasting process.

Hyndman and Koehler (2006) note that unbiasedness is a distinct desideratum from accuracy, and that optimizing for accuracy metrics alone does not guarantee bias elimination.

Sources of Forecast Bias

Bias in contact center forecasting arises from several sources:

- Model misspecification — trend or seasonality components not captured by the model produce systematic errors.

- Anchoring in judgmental adjustment — when analysts manually adjust statistical forecasts, cognitive anchoring to the prior period or to comfortable round numbers introduces systematic distortion. Fildes and Goodwin (2007) document that judgmental adjustments to statistical forecasts are on average harmful, particularly for large upward adjustments.[3]

- Incentive misalignment — operations managers measured on service level may systematically inflate forecasts to build a staffing buffer; finance departments may deflate forecasts to reduce headcount approvals.

- Data leakage — if forecast evaluation uses actuals retroactively corrected for known errors, forecast errors will appear smaller than they are in practice.

Detecting Bias

Standard practice includes:

- Tracking rolling ME or PBIAS over a 4–8 week window and flagging systematic sign persistence.

- Theil's U statistic — comparing model forecast accuracy to a naive benchmark to distinguish skill from bias.

- Forecast error autocorrelation — serial correlation in residuals indicates systematic structure not captured by the model.
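Two of these checks, rolling PBIAS with sign persistence and residual autocorrelation, can be sketched with the standard library alone. Everything here is illustrative: the function names, the four-period window, and the weekly volumes are assumptions, and the autocorrelation uses the common sample estimator at lag 1 only.

```python
def rolling_pbias(actuals, forecasts, window):
    """PBIAS (percent) over each trailing window of `window` periods."""
    out = []
    for end in range(window, len(actuals) + 1):
        a = sum(actuals[end - window:end])
        f = sum(forecasts[end - window:end])
        out.append(100.0 * (f - a) / a)
    return out


def longest_same_sign_run(errors):
    """Length of the longest run of consecutive errors with the same nonzero sign."""
    best = run = prev = 0
    for e in errors:
        sign = (e > 0) - (e < 0)
        if sign != 0 and sign == prev:
            run += 1
        else:
            run = 1 if sign != 0 else 0
        prev = sign
        best = max(best, run)
    return best


def lag1_autocorrelation(errors):
    """Sample lag-1 autocorrelation of the forecast errors."""
    n = len(errors)
    mean = sum(errors) / n
    denom = sum((e - mean) ** 2 for e in errors)
    if denom == 0:
        return 0.0  # constant errors: no variation to correlate
    num = sum((errors[i] - mean) * (errors[i - 1] - mean) for i in range(1, n))
    return num / denom


# Invented weekly volumes in which every forecast runs high.
actuals = [1000.0, 1100.0, 1050.0, 1200.0, 1150.0, 1300.0]
forecasts = [1040.0, 1130.0, 1100.0, 1230.0, 1210.0, 1340.0]
errors = [f - a for a, f in zip(actuals, forecasts)]

rolling = rolling_pbias(actuals, forecasts, window=4)
run_length = longest_same_sign_run(errors)
rho1 = lag1_autocorrelation(errors)

print([round(x, 2) for x in rolling], run_length, round(rho1, 3))
```

A rolling PBIAS that stays on one side of zero, together with a long same-sign run, is the kind of pattern the first check is meant to flag. The threshold for what counts as "long" is a governance choice, not something this sketch decides.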

Metric Selection Framework

| Condition | Preferred Metric |
|---|---|
| High-volume, strictly positive actuals, scale comparison needed | MAPE |
| Heterogeneous volumes across intervals or queues | WAPE |
| Zero or near-zero actuals present | WAPE or MAE (not MAPE) |
| Detecting systematic directional error | ME or PBIAS |
| Model comparison across multiple time series of different scales | MASE (Mean Absolute Scaled Error) |
| Penalizing large errors more than small errors | RMSE |
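The table names three metrics that are not defined elsewhere in this article: MAE, RMSE, and MASE. The sketch below uses their standard textbook forms. The MASE shown scales by the in-sample MAE of a naive (or seasonal naive) forecast on a training series. The function names, the `season` parameter, and the data are assumptions for illustration.

```python
import math


def mae(actuals, forecasts):
    """Mean absolute error, in the units of the series."""
    return sum(abs(a - f) for a, f in zip(actuals, forecasts)) / len(actuals)


def rmse(actuals, forecasts):
    """Root mean squared error; squaring weights large errors more heavily."""
    return math.sqrt(
        sum((a - f) ** 2 for a, f in zip(actuals, forecasts)) / len(actuals)
    )


def mase(actuals, forecasts, training, season=1):
    """MAE scaled by the in-sample MAE of a (seasonal) naive forecast."""
    naive_errors = [
        abs(training[i] - training[i - season])
        for i in range(season, len(training))
    ]
    if not naive_errors:
        raise ValueError("training series is too short for the chosen season")
    scale = sum(naive_errors) / len(naive_errors)
    if scale == 0:
        raise ValueError("naive forecast has zero in-sample error")
    return mae(actuals, forecasts) / scale


# Invented data: a short training history and two evaluated periods.
training = [100.0, 110.0, 105.0, 115.0]
actuals = [120.0, 118.0]
forecasts = [116.0, 121.0]

example_mae = mae(actuals, forecasts)
example_rmse = rmse(actuals, forecasts)
example_mase = mase(actuals, forecasts, training)

print(example_mae, round(example_rmse, 3), round(example_mase, 3))
```

A MASE below 1 means the forecast's average error is smaller than that of the naive benchmark on the training data, which is what makes it comparable across series of different scales.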

Maturity Model Considerations

At Level 1–2 (Reactive/Foundational), accuracy reporting is typically absent or ad hoc. Forecasts are compared to actuals informally; no systematic metric tracking exists.

At Level 3 (Integrated), MAPE or WAPE is tracked on a defined cadence against a standard benchmark. Bias detection may be informal.

At Level 4 (Optimized), multiple accuracy metrics are tracked simultaneously. Bias is monitored systematically with automated alerts when rolling PBIAS exceeds a threshold. Model selection decisions are formally tied to accuracy metric performance. Metric governance — defining which metric governs which decision — is documented.

Related Concepts

- Forecast Accuracy Metrics

- Forecasting Methods

- Reforecast and Rolling Forecast Methodology

- Judgmental Forecasting

- Naive and Seasonal Naive Forecasting

- Probabilistic Forecasting

- Machine Learning for Volume Forecasting

- WFM Labs Maturity Model

References

1. Hyndman, R.J. & Koehler, A.B. (2006). Another look at measures of forecast accuracy. International Journal of Forecasting, 22(4), 679–688.

2. Kolassa, S. & Siemsen, E. (2015). Demand Forecasting for Managers. Business Expert Press.

3. Fildes, R. & Goodwin, P. (2007). Against your better judgment? How organizations can improve their use of management judgment in forecasting. Interfaces, 37(6), 570–576.